The concept of “evolution” is usually associated with biology: a gradual transformation toward greater complexity and adaptation. The metaphor also fits the fast-moving field of drone technology. The question “what level does Venonat evolve” serves as a prompt to explore the stages and drivers of advancement in unmanned aerial vehicles (UAVs). In this context, “Venonat” isn’t a fictional creature but a symbol of foundational, early-stage drone technology, and its “evolution” stands for the progression through successive “levels” of technological sophistication, particularly in artificial intelligence, autonomy, and advanced sensing.

Defining “Evolution” in Drone Technology: Beyond the Basic Flight Paradigm

In the world of UAVs, evolution is not a biological process but a relentless pursuit of enhanced capabilities, efficiency, and intelligence. It represents the journey from rudimentary, human-piloted aircraft to highly sophisticated, autonomous systems capable of complex decision-making and intricate operations. This technological evolution is primarily driven by advancements in artificial intelligence (AI), machine learning (ML), sensor integration, and computational power.

Initially, drones were glorified remote-controlled aircraft, demanding constant human input for every maneuver. Their “evolution” began with the integration of basic flight stabilization systems, making them easier to control. The subsequent inclusion of GPS modules marked a significant leap, allowing for waypoint navigation and automated return-to-home functions. These early “levels” were foundational, much like the initial stages of any developing organism. The real transformation, however, has occurred with the shift from mere automation to true autonomy, where drones can perceive their environment, understand context, make decisions, and execute tasks with minimal to no human intervention. This shift is characterized by intelligent features such as ‘follow me’ modes, dynamic obstacle avoidance, and ultimately, fully autonomous mission planning and execution in complex, unstructured environments.
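
The waypoint navigation and return-to-home behavior described above can be illustrated with a minimal sketch. It assumes positions are (latitude, longitude) pairs in degrees and treats the Earth as a sphere; the function names `haversine_m` and `next_target` and the 2-metre tolerance are hypothetical choices for illustration, not taken from any particular flight controller.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres (spherical approximation)


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def next_target(position, waypoints, index, home, tolerance_m=2.0):
    """Pick the point to fly toward next.

    If the current waypoint is within tolerance_m, advance to the next one.
    Once every waypoint has been reached, the target becomes the home point
    (a simplified stand-in for return-to-home).
    """
    if index < len(waypoints) and haversine_m(*position, *waypoints[index]) <= tolerance_m:
        index += 1
    target = waypoints[index] if index < len(waypoints) else home
    return target, index
```

A real autopilot would also account for altitude, wind, and GPS error, so this only captures the "have I arrived, and where next?" logic.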

The Shift from Hardware Focus to Software Intelligence

Early drone development was predominantly hardware-centric, focusing on aerodynamics, motor efficiency, and battery life. While these remain critical, the cutting edge of drone evolution is now largely software-driven. Modern drones “evolve” not just through better components but through more intelligent algorithms. AI has become the brain of the drone, enabling it to interpret large volumes of sensor data, recognize objects, predict trajectories, and make real-time operational decisions. Examples include deep learning models for image recognition in agricultural applications, reinforcement learning for adaptive flight control in challenging weather, and neural networks for identifying anomalies during infrastructure inspections. Furthermore, edge computing allows drones to process complex data onboard, which reduces reliance on cloud connectivity and speeds up decisions in time-critical situations. The drone moves beyond mere data collection to intelligent data analysis at the source.

The “Levels” of Autonomous Intelligence: Mapping Drone Progression

To understand the “level” to which a drone has evolved, it helps to borrow a framework from self-driving vehicles, where SAE J3016 defines six levels of driving automation, numbered 0 through 5. The levels below apply the same idea to drones as an informal analogy rather than an official UAV standard, charting the progression from human-dependent operation to full independence.

- Level 0: Manual Control (Human-Piloted): This is the baseline, where the human pilot maintains full control over all flight functions. The drone is merely an extension of the pilot’s commands.

- Level 1: Assisted Flight (Basic Stabilization): Drones at this level feature electronic stability control, GPS-assisted hovering, and basic return-to-home functions. The pilot still actively flies, but with significant assistance.

- Level 2: Semi-Autonomous (Waypoint Navigation & Basic Avoidance): These drones can follow pre-programmed flight paths (waypoints) and possess rudimentary obstacle detection and avoidance capabilities. Features like ‘follow me’ modes also fall into this category, requiring minimal human input during specific tasks.

- Level 3: Advanced Semi-Autonomous (Dynamic Environmental Awareness): At this level, drones can execute complex missions with greater autonomy, dynamically adapting to changing conditions. They can perform advanced obstacle avoidance in real time, interpret sensor data to adjust flight paths, and even make limited decisions within defined operational parameters. Human oversight is still required, but direct control is needed far less often.

- Level 4: High Autonomy (Conditional Operation): Drones at Level 4 can operate entirely without human intervention under specific environmental conditions or within defined operational envelopes. They can handle various contingencies, re-plan routes, and execute complex tasks autonomously. A human might monitor or intervene only in rare, extreme circumstances.

- Level 5: Full Autonomy (Universal Operation): This represents the apex of drone evolution, where the UAV can operate entirely independently in any environment, under all conditions, and handle every conceivable scenario without human oversight. This level is still largely aspirational but is the ultimate goal of current research and development in drone AI.
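
The six levels above can be expressed as a small data structure. The following is a rough sketch only: the feature names in `LADDER` (such as `"contingency_replanning"`) and the rule that each level requires everything below it are simplifying assumptions made for illustration, not part of any published drone standard.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    MANUAL = 0                     # human pilot controls everything
    ASSISTED = 1                   # stabilization, GPS hover, return-to-home
    SEMI_AUTONOMOUS = 2            # waypoints, basic avoidance, follow-me
    ADVANCED_SEMI_AUTONOMOUS = 3   # dynamic obstacle avoidance, limited decisions
    HIGH_AUTONOMY = 4              # unattended within a defined envelope
    FULL_AUTONOMY = 5              # unattended anywhere (aspirational)


# Hypothetical capability required to climb to each level, lowest first.
LADDER = [
    (AutonomyLevel.ASSISTED, {"stabilization"}),
    (AutonomyLevel.SEMI_AUTONOMOUS, {"waypoint_navigation"}),
    (AutonomyLevel.ADVANCED_SEMI_AUTONOMOUS, {"dynamic_obstacle_avoidance"}),
    (AutonomyLevel.HIGH_AUTONOMY, {"contingency_replanning"}),
    (AutonomyLevel.FULL_AUTONOMY, {"unrestricted_operation"}),
]


def classify(features):
    """Return the highest level reached without skipping a rung of the ladder."""
    level = AutonomyLevel.MANUAL
    for candidate, required in LADDER:
        if not required <= set(features):
            break  # a missing capability caps the level here
        level = candidate
    return level


def requires_active_pilot(level):
    """At Levels 0 and 1 the pilot is still actively flying the aircraft."""
    return level <= AutonomyLevel.ASSISTED
```

Using `IntEnum` keeps the levels ordered, so comparisons such as `level >= AutonomyLevel.HIGH_AUTONOMY` read naturally.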

Perception, Cognition, and Action: The Pillars of Progression

Each incremental “level” of autonomy is built upon the foundational pillars of enhanced perception, sophisticated cognition, and precise action.

- Perception: A drone’s ability to “see” and understand its environment is crucial. This has “evolved” from simple optical cameras to integrated sensor suites including LiDAR (Light Detection and Ranging) for 3D mapping, radar for all-weather detection, thermal cameras for heat signatures, and multi-spectral sensors for agricultural analysis. Sensor fusion techniques combine data from these disparate sources into a single, more robust picture of the surrounding world, one that covers wavelengths and ranges that human vision cannot.

- Cognition: This refers to the drone’s ability to process perceived data, interpret it, plan actions, and make decisions. This “evolution” is driven by powerful onboard processors and advanced AI/ML algorithms that can identify objects, predict movements, assess risks, and formulate optimal flight strategies in real-time. This includes predictive analytics for equipment failure in industrial inspection or intelligent navigation through dynamic urban environments for package delivery.

- Action: Beyond just flying, “action” encompasses the precise control over flight mechanics and the intelligent operation of payloads. As drones evolve, their control systems become more adaptive, allowing them to maintain stability and execute intricate maneuvers in challenging conditions. The autonomous manipulation of payloads, such as robotic arms for inspection or precision spraying mechanisms in agriculture, also represents a significant leap in actionable intelligence.
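
To make the perception and cognition pillars concrete, here is a minimal sketch of one common fusion technique, inverse-variance weighting, followed by a very simple decision rule. It assumes each sensor reports a distance to the same obstacle together with a variance describing its uncertainty; the function names, the 5-metre safety distance, and the two-standard-deviation margin are illustrative assumptions.

```python
import math


def fuse(estimates):
    """Combine (value, variance) pairs by inverse-variance weighting.

    More certain sensors (smaller variance) get more weight, and the fused
    variance is smaller than that of any single input.
    """
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, 1.0 / total


def decide(distance_m, variance, safe_distance_m=5.0):
    """Brake if the pessimistic distance (mean minus two sigma) is too small."""
    pessimistic = distance_m - 2.0 * math.sqrt(variance)
    return "brake" if pessimistic < safe_distance_m else "continue"


# Example: a LiDAR reading (precise) and a radar reading (noisier).
lidar = (10.0, 1.0)   # metres, variance in m^2
radar = (14.0, 3.0)
distance, variance = fuse([lidar, radar])
command = decide(distance, variance)
```

Production systems typically use Kalman filters or learned fusion models rather than a one-shot weighted average, but the principle of trusting each sensor in proportion to its certainty is the same.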

From Foundational Platforms to Specialized Capabilities: The Venonat Metaphor

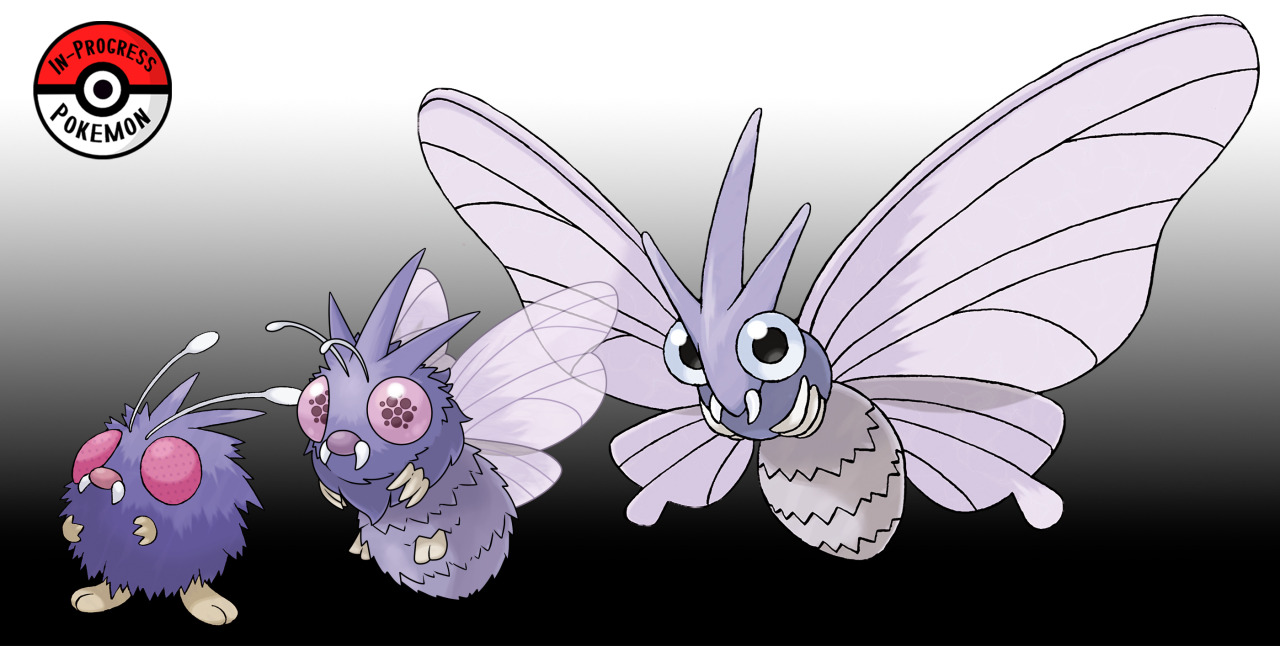

Applying our “Venonat” metaphor, an early-stage drone might be likened to a basic Bug-type Pokémon: it can fly and perhaps sense its surroundings in a rudimentary way, but it needs constant human guidance. Its “evolution” isn’t into a different creature, but into a highly specialized, intelligent, and autonomous system adapted to specific tasks. This transformation is driven by the demands of diverse industries, each requiring bespoke capabilities that push the boundaries of drone technology.

A generic “Venonat” platform, perhaps a consumer-grade quadcopter with a basic camera, serves as a starting point. As it “evolves,” it branches into specialized “forms” – like Pokémon evolving into different types with unique abilities. For instance, a basic platform can evolve into:

- Inspection Drones: Equipped with high-resolution optical and thermal cameras, LiDAR, and AI for defect detection on power lines, wind turbines, or bridges.

- Delivery Drones: Optimized for payload capacity, range, and navigation in complex urban or remote terrains, integrating sophisticated GPS and obstacle avoidance.

- Agricultural Drones: Featuring multi-spectral sensors to assess crop health, AI for precision spraying, and robust flight systems for wide-area coverage.

- Search and Rescue Drones: Employing thermal imaging, powerful zoom cameras, and swarm intelligence for coordinated search patterns in disaster zones.

- Mapping and Surveying Drones: Utilizing photogrammetry, LiDAR, and RTK/PPK (real-time kinematic / post-processed kinematic) GNSS positioning for highly accurate 3D model generation and topographic mapping.

This specialization is not merely an attachment of different sensors; it involves tailoring the entire drone ecosystem – flight characteristics, sensor suites, onboard processing, communication systems, and AI models – to achieve optimal performance for its intended niche.

Niche Adaptation and Performance Optimization

The “evolutionary pressure” in the drone market is fueled by diverse industrial needs, regulatory frameworks, and technological feasibility. Companies demand drones that can perform specific, complex tasks more efficiently, safely, and cost-effectively than traditional methods. This pushes manufacturers and software developers to innovate, leading to highly optimized “species” of drones. This is a continuous feedback loop: drones are deployed, data is collected, performance is analyzed, and algorithms are refined, leading to further “evolution.” For example, the need for autonomous inspection of confined spaces led to the “evolution” of collision-tolerant drones with advanced SLAM (Simultaneous Localization and Mapping) capabilities, far beyond what a basic platform could offer.

Enabling Future “Evolutions”: Key Drivers of Advanced Drone Development

The trajectory of drone evolution points towards increasingly autonomous, intelligent, and integrated systems. Several key drivers are accelerating this progression, pushing drones to even higher “levels” of capability:

- Continued Advancements in AI & Machine Learning: New breakthroughs in deep learning, reinforcement learning, and generative AI will unlock unprecedented levels of perception, decision-making, and adaptive behavior in drones. This includes improved anomaly detection, predictive maintenance capabilities, and the ability to learn from unexpected scenarios.

- Sensor Miniaturization & Fusion: The development of smaller, lighter, and more powerful sensors will allow drones to carry more diverse and capable payloads without compromising flight time or agility. Crucially, the ability to seamlessly fuse data from multiple sensor types (visual, thermal, LiDAR, radar, acoustic) will provide drones with a truly comprehensive understanding of their environment, enabling robust operation in challenging conditions.

- Battery Technology: Enhanced battery energy density and faster charging technologies are critical for increasing flight duration, extending operational range, and enabling heavier payloads, thus expanding the practical applications of drones significantly.

- Regulatory Frameworks: As global aviation authorities develop and standardize regulations for autonomous drone operations (e.g., beyond visual line of sight – BVLOS), the path for broader deployment and more complex, integrated drone services will open up. Clearer rules foster innovation and build public trust.

- Cloud Integration & Edge Computing: The synergy between powerful cloud-based processing for complex mission planning and data analysis, and robust edge computing for real-time onboard decision-making, will enable drones to operate with unprecedented levels of intelligence and responsiveness.

- Human-Drone Interaction (HDI): Future “evolutions” will also focus on more intuitive and collaborative interfaces, allowing humans and drones to work together seamlessly. This includes advancements in gesture control, natural language processing for command input, and swarm intelligence where multiple drones coordinate autonomously to achieve a shared objective.
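
The battery driver in the list above comes down to simple arithmetic: flight time is usable energy divided by average power draw. The sketch below works through that relationship. The 80% usable fraction and the example figures are assumptions for illustration, not measurements from any specific drone.

```python
def pack_energy_wh(capacity_mah, nominal_voltage):
    """Energy stored in a battery pack, in watt-hours."""
    return capacity_mah / 1000.0 * nominal_voltage


def endurance_min(energy_wh, avg_power_w, usable_fraction=0.8):
    """Estimated flight time in minutes.

    usable_fraction is an assumed safety margin: packs are usually not
    drained completely in flight.
    """
    if avg_power_w <= 0:
        raise ValueError("average power draw must be positive")
    return energy_wh * usable_fraction / avg_power_w * 60.0


# Example: an assumed 5000 mAh pack at 14.8 V nominal, drawing 240 W on average.
energy = pack_energy_wh(5000, 14.8)        # watt-hours
minutes = endurance_min(energy, 240.0)     # estimated minutes of flight
```

In this simple model, doubling the energy at the same power draw doubles the flight time. In practice a larger pack adds weight, which raises the power draw, so gains in energy density (more energy per kilogram) matter more than raw capacity.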

The Interplay of Hardware, Software, and Regulation

The evolution of drones depends not on a single technological breakthrough but on the combined advancement of hardware, software, and regulation. A powerful new sensor is useless without the software to interpret its data, and neither can be fully leveraged without a regulatory environment that permits its deployment. The ecosystem of drone manufacturers, software developers, sensor providers, and regulatory bodies must evolve in concert, each pushing the others to higher “levels” of innovation. This holistic approach ensures that “Venonat” continues its technological evolution, becoming an increasingly sophisticated and indispensable tool across a growing range of applications.