In the rapidly evolving landscape of unmanned aerial vehicle (UAV) technology, the transition from simple flight to sophisticated data collection has redefined how we perceive the world from above. When we discuss “the row and the column” in the context of drone mapping and remote sensing, we are not referring to a simple spreadsheet. Instead, we are describing the fundamental architecture of digital spatial data.

Every high-resolution map, thermal scan, or multispectral analysis produced by a drone is built upon a grid. Understanding the relationship between rows and columns is essential for anyone involved in photogrammetry, precision agriculture, or industrial inspection. This article explores how the row-and-column structure forms the backbone of digital imagery, spatial resolution, and the advanced algorithms that drive autonomous flight and remote sensing.

The Fundamentals of Raster Data in Drone Mapping

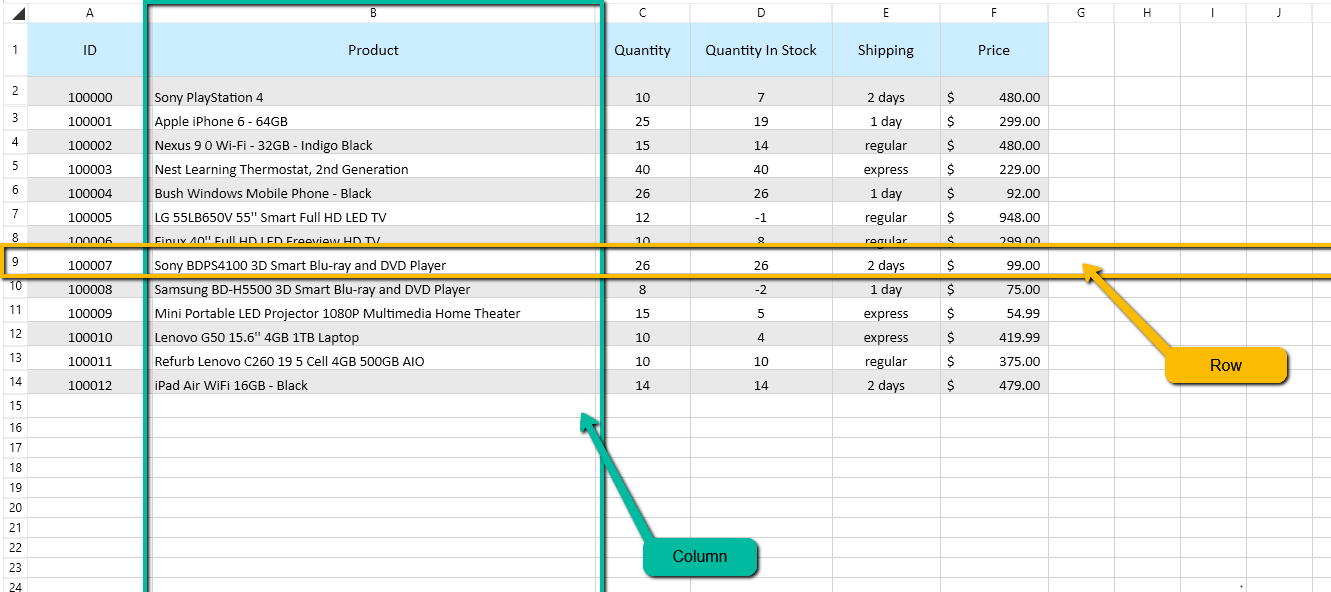

At the heart of every drone-captured image is a raster. A raster is a rectangular grid of pixels, organized neatly into rows and columns. While the drone pilot sees a beautiful cinematic landscape on their controller, the internal computer and the processing software see a complex matrix of numerical values.

Understanding the Matrix Structure

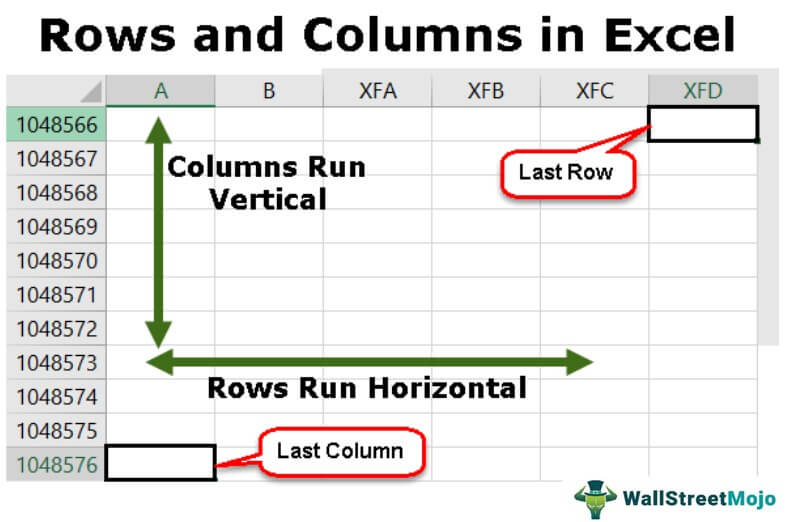

In drone mapping, every orthomosaic (a geometrically corrected map) is represented as a matrix. A “row” represents a horizontal line of data points, while a “column” represents a vertical line. The intersection of a specific row and column defines a single pixel.

In a standard digital sensor, these pixels capture light intensity. However, in the realm of remote sensing, these row-column intersections store much more. They can represent elevation data, thermal signatures, or even chemical compositions in the case of hyperspectral imaging. By organizing data into this rigid grid, software can perform mathematical operations across the entire dataset, allowing for the “stitching” of thousands of individual images into a single, cohesive map.
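
As a minimal sketch of this idea, a single-band raster can be modelled as a two-dimensional NumPy array. The array size and values below are made up for illustration; real mapping software reads them from an image file.

```python
import numpy as np

# A tiny 4-row x 6-column single-band raster with made-up intensity values.
raster = np.arange(24, dtype=np.uint8).reshape(4, 6)

rows, cols = raster.shape   # shape is (rows, columns), in that order
pixel = raster[2, 3]        # value at row 2, column 3 (zero-based indices)

# Because the data is a regular grid, one expression touches every cell.
brightened = raster.astype(np.uint16) + 10

print(rows, cols, pixel)    # 4 6 15
```

Note the index order: the row comes first and the column second, which is the reverse of the usual (x, y) convention.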

Pixel Resolution and Grid Geometry

The number of rows and columns in a drone’s data output determines its pixel resolution. A 20-megapixel sensor produces a grid with more rows and columns than a 12-megapixel sensor. For the same lens and flight altitude, a denser grid means each pixel covers a smaller patch of ground, so more detail is captured.
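
The link between megapixels and grid size is simple arithmetic: the pixel count is the number of rows multiplied by the number of columns. The sketch below uses image dimensions that are assumed here as typical examples of 20-megapixel and 12-megapixel outputs; actual dimensions vary by camera.

```python
def megapixels(rows: int, cols: int) -> float:
    """Total pixel count of a rows x cols grid, in millions of pixels."""
    return rows * cols / 1_000_000

# Illustrative dimensions only (assumed, not taken from a specific drone).
mp_20 = megapixels(rows=3648, cols=5472)   # 19.961856, marketed as "20 MP"
mp_12 = megapixels(rows=3000, cols=4000)   # 12.0

print(mp_20, mp_12)
```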

Geometry also plays a role. Mapping software generally assumes that each “cell” or pixel within the row-column structure covers a square, equally sized patch of ground. If the imagery is distorted during the flight, for example by gimbal instability or high winds, the rows and columns of overlapping images will not align, leading to errors in the final spatial model. This is why stabilization and precise GPS positioning are critical to maintaining the integrity of the data grid.

How Rows and Columns Define Spatial Accuracy

In professional drone applications, such as land surveying or construction monitoring, the “row and column” concept transcends simple image quality; it becomes a matter of geographic precision.

Ground Sampling Distance (GSD) and Grid Density

One of the most important metrics in drone mapping is Ground Sampling Distance (GSD). GSD is the physical distance between the centers of two consecutive pixels (or the width of one cell in the row-column grid) as measured on the ground.

For example, a GSD of 2 cm/pixel means that one “cell” in the row-column matrix represents a 2 cm x 2 cm area in the real world. By increasing the number of rows and columns within a specific geographic area, a drone provides higher “grid density,” allowing for more precise measurements. This is the difference between seeing a blur on a roof and seeing a hairline crack in the concrete.
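
GSD can be estimated from the camera geometry and the flight height. The sketch below uses the common approximation GSD = (sensor width × altitude) / (focal length × image width), which assumes a camera pointing straight down over flat ground. The camera values are hypothetical and chosen only to illustrate the arithmetic.

```python
def gsd_cm_per_pixel(sensor_width_mm: float, focal_length_mm: float,
                     altitude_m: float, image_width_px: int) -> float:
    """Approximate ground sampling distance in centimetres per pixel.

    Assumes a nadir (straight-down) camera and flat terrain.
    """
    gsd_m = (sensor_width_mm * altitude_m) / (focal_length_mm * image_width_px)
    return gsd_m * 100.0

# Hypothetical camera: 13.2 mm wide sensor, 8.8 mm lens, 5472 columns.
gsd = gsd_cm_per_pixel(13.2, 8.8, altitude_m=100.0, image_width_px=5472)

# Ground width covered by one image: one cell width times the column count.
footprint_width_m = gsd / 100.0 * 5472

print(round(gsd, 2), round(footprint_width_m, 1))   # 2.74 150.0
```

Halving the altitude halves the GSD, which is why low flights are used when fine detail matters.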

Georeferencing: Linking Rows and Columns to Earth Coordinates

A raw image exists in “pixel space,” where its location is only defined by its row and column index (e.g., Row 500, Column 1024). To make this data useful for mapping, it must be georeferenced.

Georeferencing is the process of assigning real-world coordinates (Latitude, Longitude, and Altitude) to the row-column structure of the image. When a drone uses RTK (Real-Time Kinematic) or PPK (Post-Processed Kinematic) technology, it records the position of the sensor at the moment of capture, typically to within a few centimeters. Processing software then “warps” the row-column grid into a map coordinate system, so that every cell in the matrix corresponds to a known location on the ground.
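
For a finished, north-up map the link between pixel space and map space is often just an origin plus a cell size. The sketch below assumes that simple case (no rotation, square cells, projected coordinates in metres). The function names, origin, and cell size are hypothetical.

```python
import math

def pixel_to_world(row, col, origin_x, origin_y, cell_size):
    """Map coordinates of the centre of cell (row, col) in a north-up raster.

    (origin_x, origin_y) is the outer corner of the top-left cell.
    Rows increase downward, so the y coordinate decreases as row grows.
    """
    x = origin_x + (col + 0.5) * cell_size
    y = origin_y - (row + 0.5) * cell_size
    return x, y

def world_to_pixel(x, y, origin_x, origin_y, cell_size):
    """Row and column of the cell that contains map point (x, y)."""
    col = math.floor((x - origin_x) / cell_size)
    row = math.floor((origin_y - y) / cell_size)
    return row, col

# Hypothetical map: 2 cm cells, top-left corner at (500000 m, 4200000 m).
x, y = pixel_to_world(500, 1024, 500000.0, 4200000.0, 0.02)
print(round(x, 2), round(y, 2))   # 500020.49 4199989.99
print(world_to_pixel(x, y, 500000.0, 4200000.0, 0.02))   # (500, 1024)
```

Rotated or skewed grids need a full six-parameter affine transform, which this sketch leaves out.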

The Role of Rows and Columns in Multispectral and Thermal Analysis

In remote sensing, drones often carry sensors that go beyond the visible light spectrum. This is where the row-and-column structure becomes three-dimensional.

The 3D Data Cube: Rows, Columns, and Spectral Bands

In standard photography, we deal with three layers of grids: Red, Green, and Blue (RGB). However, in multispectral remote sensing (common in precision agriculture), drones capture additional “bands,” such as Near-Infrared (NIR) or Red Edge.

Think of this as a “data cube.” The X-axis represents the columns, the Y-axis represents the rows, and the Z-axis represents the different spectral bands. Each pixel is no longer just a color; it is a vertical stack of data. By comparing the value of a specific row-column coordinate across different bands, scientists can calculate the health of a plant or the moisture content of the soil.
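
A data cube of this kind can be sketched as a three-dimensional NumPy array. The band list, array size, and random values below are assumptions for illustration. The axis order (rows, columns, bands) is also a choice made here; many geospatial libraries store the band axis first.

```python
import numpy as np

# Hypothetical five-band multispectral sensor.
band_names = ["blue", "green", "red", "red_edge", "nir"]

# Random reflectance values standing in for real data: 3 rows x 4 columns.
rng = np.random.default_rng(0)
cube = rng.random((3, 4, len(band_names)))   # axes: rows, columns, bands

# The "vertical stack" for one pixel: every band at row 1, column 2.
spectrum = cube[1, 2, :]

# One full layer of the cube: the NIR band as an ordinary 2D grid.
nir_layer = cube[:, :, band_names.index("nir")]

print(cube.shape, spectrum.shape, nir_layer.shape)   # (3, 4, 5) (5,) (3, 4)
```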

Processing Arrays for Vegetation Indices (NDVI)

The most famous application of this grid-based analysis is the Normalized Difference Vegetation Index (NDVI). To calculate NDVI, the software takes the numerical value of a pixel in the NIR band and compares it to the value of the same pixel in the Red band using a specific formula: NDVI = (NIR - Red) / (NIR + Red). The result ranges from -1 to +1, with higher values generally indicating denser, healthier vegetation.

This calculation is performed millions of times, once for every row-column cell in the dataset. The result is a new grid where the values represent plant vigor. Without the structured organization of rows and columns, this level of automated, large-scale environmental analysis would be far less practical.
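
The per-cell NDVI calculation can be sketched with NumPy, which applies the formula to every row-column cell at once. The reflectance values are made up, and writing 0 where NIR + Red is zero is a choice made for this example rather than a standard.

```python
import numpy as np

def ndvi(nir: np.ndarray, red: np.ndarray) -> np.ndarray:
    """Compute (NIR - Red) / (NIR + Red) for every cell of two aligned grids."""
    nir = nir.astype(np.float64)
    red = red.astype(np.float64)
    total = nir + red
    result = np.zeros_like(total)
    # Divide only where the denominator is non-zero; other cells stay 0.
    np.divide(nir - red, total, out=result, where=total != 0)
    return result

# Made-up 2 x 2 reflectance grids for the two bands.
nir_band = np.array([[0.8, 0.5], [0.3, 0.0]])
red_band = np.array([[0.2, 0.5], [0.1, 0.0]])

index = ndvi(nir_band, red_band)
print(index)   # approximately [[0.6, 0.0], [0.5, 0.0]]
```

Both inputs must have the same number of rows and columns, which is why band alignment matters before any index is computed.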

Data Processing Workflows: From Image Captures to Grid-Based Outputs

The journey from a drone’s flight path to a finished data product relies heavily on maintaining the consistency of the row-and-column format throughout the workflow.

Stitching and Orthorectification

When a drone flies a mapping mission, it takes hundreds of overlapping photos. Each photo has its own row-and-column grid. The “stitching” process involves finding common points (tie points) between these grids.

Through a process called orthorectification, the software removes the distortion caused by camera perspective and terrain relief. It recalculates the position of every pixel so that the view is “top-down” everywhere. This ensures that one step from a column to the next represents the same ground distance across the entire map, allowing for accurate measurement of distance and area.

Digital Elevation Models (DEM) as Row-Column Grids

Rows and columns are not limited to 2D images. Digital Elevation Models (DEM) and Digital Surface Models (DSM) use the same grid structure to represent height. In these files, the “value” assigned to a row-column intersection is not a color, but a height measurement (Z-value).

By analyzing these grids, engineers can calculate the volume of a stockpile, the slope of a hill, or the potential path of floodwaters. The mathematical simplicity of the grid allows computers to process massive amounts of terrain data quickly and efficiently.
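
As a rough sketch of these calculations, the example below estimates a stockpile volume and a slope grid from a tiny elevation array. The heights, the 0.5 m cell size, and the flat base level are invented for illustration. Real workflows usually fit the base surface from the surrounding terrain.

```python
import numpy as np

cell_size = 0.5   # metres per cell (assumed)
base_level = 10.0 # assumed flat ground height in metres

# Made-up surface model: a 2 m high pile on flat ground at 10 m.
dem = np.array([
    [10.0, 10.0, 10.0, 10.0],
    [10.0, 12.0, 12.0, 10.0],
    [10.0, 12.0, 12.0, 10.0],
    [10.0, 10.0, 10.0, 10.0],
])

# Volume: height above the base in each cell times the cell's ground area.
heights = np.clip(dem - base_level, 0.0, None)
volume_m3 = heights.sum() * cell_size ** 2

# Slope: rate of height change along rows and columns, as an angle.
dz_drow, dz_dcol = np.gradient(dem, cell_size)
slope_deg = np.degrees(np.arctan(np.hypot(dz_drow, dz_dcol)))

print(volume_m3)   # 2.0
```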

The Future of Autonomous Grid Analysis and AI

As we look toward the future of drone innovation, the row-and-column structure is becoming the primary language for Artificial Intelligence (AI) and Machine Learning (ML).

Computer Vision and Pattern Recognition

AI algorithms are designed to scan grids. When a drone is tasked with “detecting solar panel defects” or “counting cattle,” it uses computer vision to analyze the patterns of pixels within the rows and columns. Convolutional Neural Networks (CNNs) “slide” small windows across the grid, looking for specific arrangements of values that indicate a crack, a leak, or an object of interest.
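
The sliding-window operation at the core of a CNN layer can be sketched in a few lines. In this illustration the kernel is hand-written to respond to vertical lines, whereas a real network learns its kernel values during training. The function name and the test image are hypothetical.

```python
import numpy as np

def slide_window(image: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Slide the kernel over the image and sum the element-wise products.

    No padding is used, so the output is smaller than the input.
    """
    k_rows, k_cols = kernel.shape
    out_rows = image.shape[0] - k_rows + 1
    out_cols = image.shape[1] - k_cols + 1
    out = np.zeros((out_rows, out_cols))
    for r in range(out_rows):
        for c in range(out_cols):
            window = image[r:r + k_rows, c:c + k_cols]
            out[r, c] = np.sum(window * kernel)
    return out

# 5 x 5 test image with a bright vertical line in column 2.
image = np.zeros((5, 5))
image[:, 2] = 1.0

# Hand-picked 3 x 3 kernel that responds strongly to vertical lines.
kernel = np.array([[-1.0, 2.0, -1.0]] * 3)

response = slide_window(image, kernel)
print(response[1])   # [-3.  6. -3.]
```

The response peaks where the window is centred on the line, which is the kind of signal a detector would threshold to flag a crack or seam.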

Autonomous Navigation in Grid-Based Environments

Furthermore, autonomous drones use row-and-column logic for obstacle avoidance and path planning. By converting the 3D world into a “voxel grid” (the 3D equivalent of pixels), the drone can treat the environment as a series of occupied or empty cells. This allows for real-time navigation where the drone searches for an efficient, collision-free path through the empty cells of a complex environment, such as a forest or a construction site.
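
Path planning over a voxel grid can be illustrated with a breadth-first search, which finds the fewest moves between two empty cells. This is a simplified stand-in, not how any particular autopilot works; production planners typically use faster or smoother methods. The grid contents and function name are hypothetical.

```python
from collections import deque

def shortest_path_length(grid, start, goal):
    """Fewest single-cell moves from start to goal, or None if unreachable.

    grid[z][y][x] is True when the voxel is occupied.
    Cells are (z, y, x) tuples; moves are along one axis at a time.
    """
    dims = (len(grid), len(grid[0]), len(grid[0][0]))
    moves = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]
    queue = deque([(start, 0)])
    seen = {start}
    while queue:
        cell, steps = queue.popleft()
        if cell == goal:
            return steps
        for move in moves:
            nxt = tuple(c + d for c, d in zip(cell, move))
            inside = all(0 <= n < size for n, size in zip(nxt, dims))
            if inside and nxt not in seen and not grid[nxt[0]][nxt[1]][nxt[2]]:
                seen.add(nxt)
                queue.append((nxt, steps + 1))
    return None

# Two stacked 3 x 3 layers: the lower one has a partial wall, the upper is empty.
wall_layer = [[False, True, False],
              [False, True, False],
              [False, False, False]]
free_layer = [[False] * 3 for _ in range(3)]
grid = [wall_layer, free_layer]

steps = shortest_path_length(grid, start=(0, 0, 0), goal=(0, 0, 2))
print(steps)   # 4: climb one layer, cross over the wall, descend
```

Staying in the lower layer would take six moves around the wall, so the search prefers the four-move route over the top.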

Conclusion

While “the row and the column” may seem like basic concepts, they are the essential building blocks of the modern drone industry. In remote sensing, these grids are what transform a simple aerial photo into a dataset that can be measured, compared, and analyzed.

From the Ground Sampling Distance that ensures surveying accuracy to the multispectral data cubes that revolutionize agriculture, the grid is everywhere. As drone technology continues to advance, our ability to manipulate, analyze, and interpret these rows and columns will only become more sophisticated, leading to deeper insights and more autonomous solutions in the world of aerial data. Understanding this structure is not just a technical requirement—it is the key to unlocking the full potential of what a drone can truly achieve.