In the ever-evolving landscape of digital presence, understanding how search engines perceive and interact with our websites is paramount. For businesses, content creators, and website administrators alike, ensuring that pages can be found is a constant pursuit. Google’s URL Inspection Tool, part of Google Search Console, is a diagnostic utility that shows how Googlebot crawled, rendered, and indexed an individual URL on a property you have verified. Far from being a mere technical curiosity, it is one of the most direct ways to diagnose, understand, and ultimately improve how a page performs in Google Search.

The fundamental objective of the URL Inspection Tool is to bridge the gap between a website owner’s perception of their content and Google’s actual interpretation. It allows for a granular examination of how Googlebot fetches, renders, and indexes a specific URL, offering actionable data that can inform strategic decisions for SEO and website management. Whether troubleshooting indexing issues, verifying canonicalization, or understanding mobile usability, the tool empowers users to move beyond assumptions and engage with concrete, verifiable information.

Understanding Google’s Crawling and Indexing Process

The bedrock of a website’s presence on Google lies in its ability to be crawled and indexed. The URL Inspection Tool provides a direct window into these fundamental processes, revealing the nuances of how Googlebot navigates and interprets web content. This understanding is crucial for any website aiming for organic search visibility.

How Googlebot Accesses Your URLs

Googlebot, the web crawler used by Google, systematically navigates the internet by following links from known pages to discover new ones. By default, the URL Inspection Tool reports what Google recorded the last time it crawled the URL; its “Test Live URL” option goes further and has Googlebot fetch the page in real time. Either way, it shows whether robots.txt allowed Googlebot to crawl the page, whether the fetch succeeded, and details of the HTTP response it received. This makes it particularly useful for identifying crawlability issues such as server errors, overly long redirect chains, or content blocked by robots.txt.
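
A quick local check can catch robots.txt blocks before you inspect a URL. The sketch below uses Python’s standard-library `urllib.robotparser` against a hypothetical robots.txt string and example.com URLs. It is an approximation, not Googlebot’s own matcher: the standard-library parser does not implement Google’s `*` and `$` wildcard path matching, and within a group it applies the first matching rule rather than the most specific one, so treat its answer as a hint to confirm with the tool.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration; in practice you would
# fetch https://<your-site>/robots.txt yourself.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /private/

User-agent: *
Disallow: /tmp/
"""


def googlebot_allowed(robots_txt: str, url: str) -> bool:
    """Return True if these robots.txt rules let the Googlebot user agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    return parser.can_fetch("Googlebot", url)


if __name__ == "__main__":
    for url in ("https://example.com/blog/post", "https://example.com/private/report"):
        verdict = "allowed" if googlebot_allowed(ROBOTS_TXT, url) else "blocked"
        print(url, "->", verdict)
```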

The tool also distinguishes between the indexed version of a page, meaning the version Google currently holds, and a live test of the page as it exists now. For dynamic websites or those that change frequently, knowing when Googlebot last crawled a page and which version it has indexed is essential for confirming that users are being served current information. Comparing the two lets you take a proactive approach to content updates, for example by requesting indexing after a significant change.
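
To decide whether an indexed version is likely stale, you can compare the last crawl time against your own record of when the page last changed. A minimal sketch, assuming the crawl time is an RFC 3339 string (the timestamp format Google’s JSON APIs use, for example the URL Inspection API’s `lastCrawlTime` field; verify against the API reference) and that your CMS supplies a timezone-aware modification time. `parse_rfc3339` and `needs_recrawl` are hypothetical helper names.

```python
from datetime import datetime, timezone


def parse_rfc3339(ts: str) -> datetime:
    """Parse an RFC 3339 timestamp such as '2024-03-01T08:15:00Z'."""
    # datetime.fromisoformat only accepts a trailing 'Z' from Python 3.11,
    # so normalise it to an explicit UTC offset first.
    if ts.endswith("Z"):
        ts = ts[:-1] + "+00:00"
    return datetime.fromisoformat(ts)


def needs_recrawl(last_crawl: str, last_modified: datetime) -> bool:
    """True if the page changed after Googlebot's last recorded crawl.

    last_modified must be timezone-aware; comparing naive and aware
    datetimes raises TypeError.
    """
    return last_modified > parse_rfc3339(last_crawl)


if __name__ == "__main__":
    edited = datetime(2024, 3, 5, tzinfo=timezone.utc)  # hypothetical CMS timestamp
    print(needs_recrawl("2024-03-01T08:15:00Z", edited))
```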

The Role of Rendering in Search Visibility

Beyond simply accessing the raw HTML, Googlebot also renders the page, executing its JavaScript, to understand its content and structure; this matters most for pages that rely heavily on JavaScript to display content. A live test in the URL Inspection Tool shows how Google renders your page, revealing errors or discrepancies that might hinder Google’s understanding of it. This is particularly important now that single-page applications (SPAs) and dynamically generated content are common.

If your page’s content isn’t fully rendered, or if JavaScript errors stop it from loading, Google may not be able to index that content, which reduces visibility. The live test shows a screenshot of the rendered page and lists page resources that couldn’t be loaded along with JavaScript console messages. This helps developers and SEO specialists pinpoint rendering problems that could be affecting search rankings.
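
A rough way to spot content that depends on rendering is to check whether a key phrase appears in the visible text of the raw HTML or only in the rendered HTML. This sketch assumes you already have both versions as strings (for instance, the server response and a copy captured from a headless browser); the helper names and sample markup are hypothetical, and plain substring matching is deliberately simplistic.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collects text nodes, ignoring the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def needs_rendering(raw_html: str, rendered_html: str, phrase: str) -> bool:
    """True if phrase is visible only after rendering (e.g. injected by JavaScript)."""
    return phrase not in visible_text(raw_html) and phrase in visible_text(rendered_html)


# Hypothetical example: the sale banner is written into #app by a script.
RAW = ('<body><div id="app"></div><script>'
       'document.getElementById("app").textContent = "Spring sale";</script></body>')
RENDERED = '<body><div id="app">Spring sale</div></body>'
```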

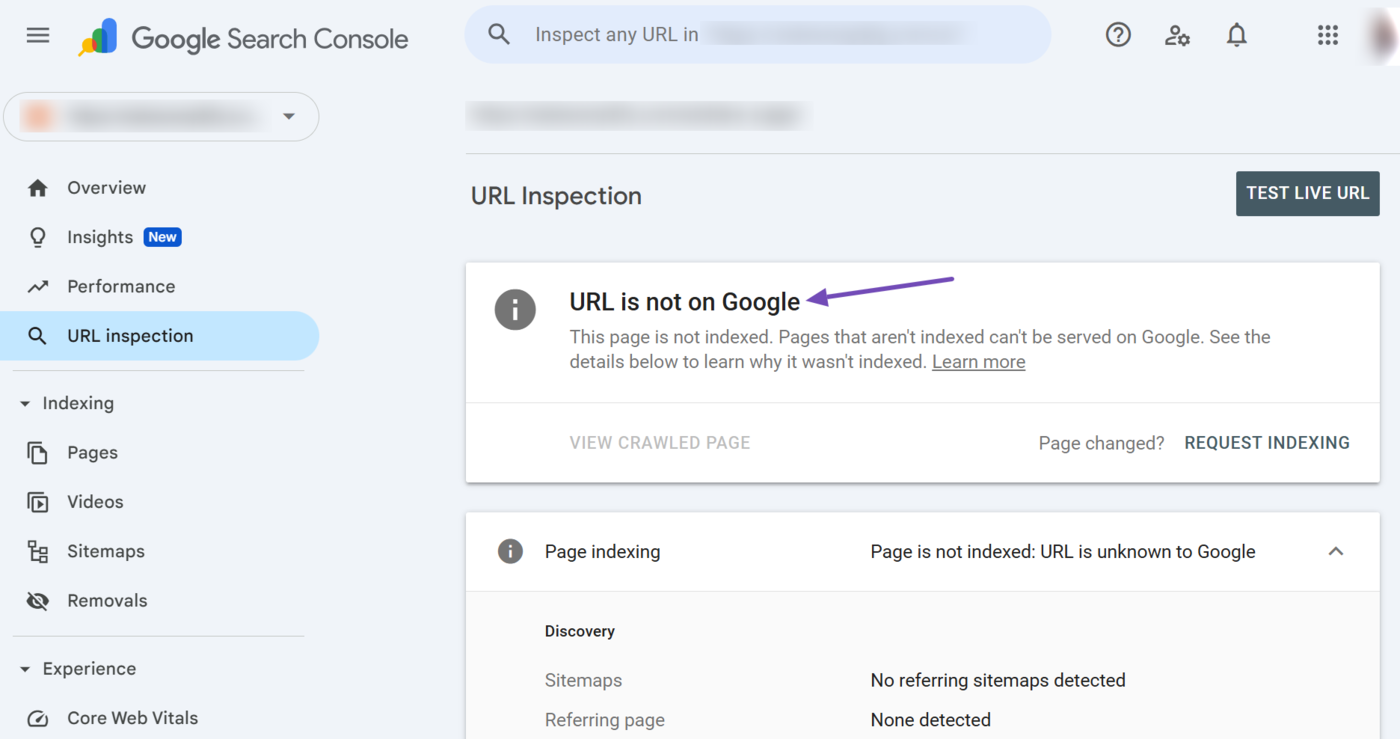

Indexing Status: The Gateway to Search Results

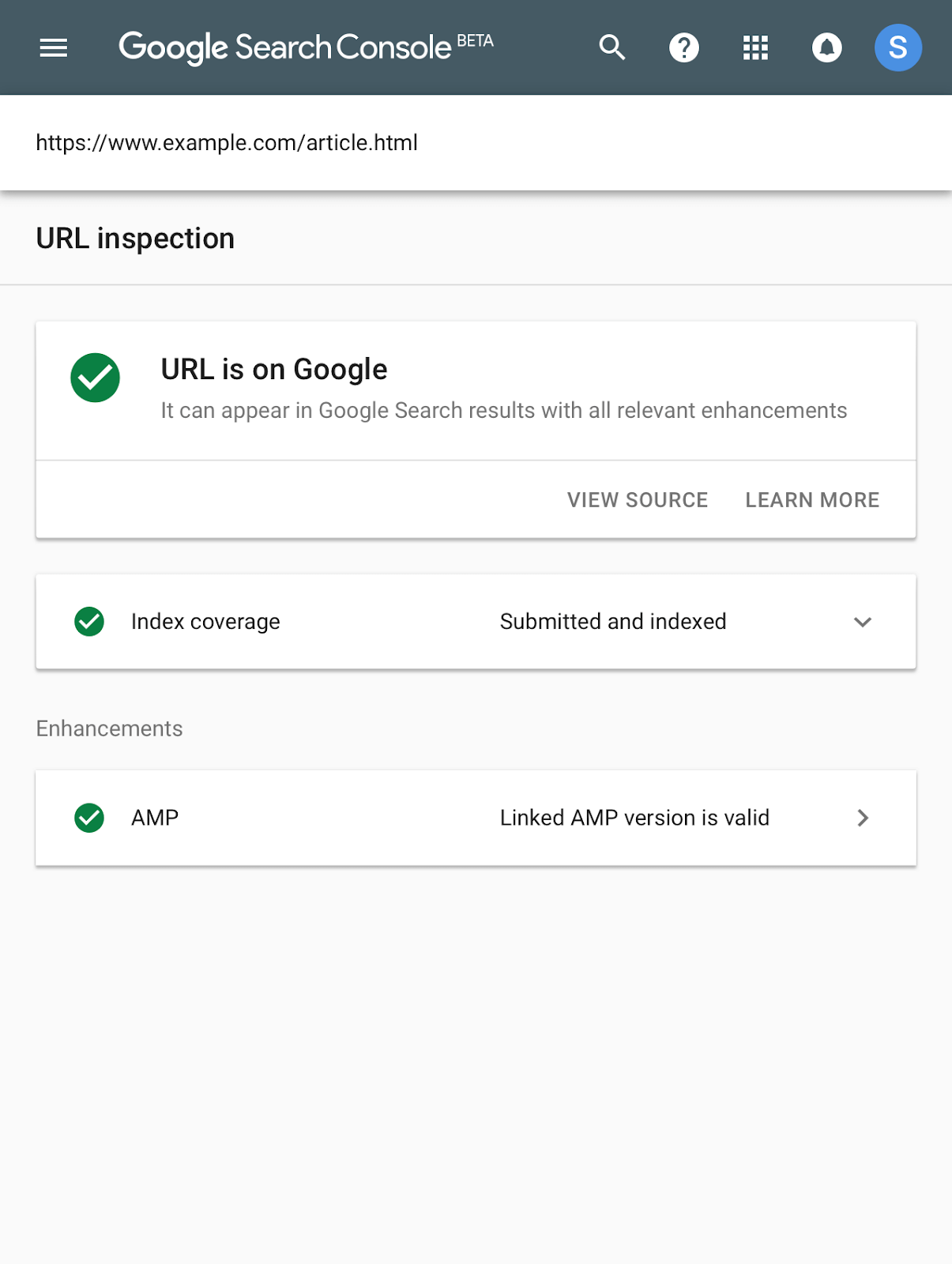

Ultimately, the goal of crawling and rendering is to get a page indexed by Google. The URL Inspection Tool provides definitive information about whether a URL is indexed and, if not, why. It can confirm if Google believes the page is already indexed, or if it has been excluded and the specific reason for that exclusion.
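
Index status can also be retrieved programmatically through the Search Console URL Inspection API. The sketch below only builds the JSON request body for the `urlInspection/index:inspect` method and summarizes a response; it omits OAuth and the HTTP call entirely. The field names reflect the API reference at the time of writing and should be confirmed before you rely on them, and the sample response is hypothetical. For a Domain property, `siteUrl` takes the `sc-domain:example.com` form.

```python
import json

# Endpoint for reference only; this sketch does not send any request.
INSPECT_ENDPOINT = "https://searchconsole.googleapis.com/v1/urlInspection/index:inspect"


def build_inspect_body(page_url: str, property_url: str, language: str = "en-US") -> str:
    """JSON body for an index:inspect POST request (authentication not included)."""
    return json.dumps({
        "inspectionUrl": page_url,
        "siteUrl": property_url,  # the Search Console property the URL belongs to
        "languageCode": language,
    })


def summarize(response: dict) -> dict:
    """Pull the headline index-status fields out of an inspection response."""
    status = response.get("inspectionResult", {}).get("indexStatusResult", {})
    return {
        "verdict": status.get("verdict", "VERDICT_UNSPECIFIED"),
        "coverage": status.get("coverageState"),
        "robots_txt": status.get("robotsTxtState"),
        "indexing": status.get("indexingState"),
        "fetch": status.get("pageFetchState"),
        "last_crawl": status.get("lastCrawlTime"),
    }


# Hypothetical response, trimmed to the fields used above.
SAMPLE = {
    "inspectionResult": {
        "indexStatusResult": {
            "verdict": "PASS",
            "coverageState": "Submitted and indexed",
            "robotsTxtState": "ALLOWED",
            "indexingState": "INDEXING_ALLOWED",
            "pageFetchState": "SUCCESSFUL",
            "lastCrawlTime": "2024-03-01T08:15:00Z",
        }
    }
}
```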

Common reasons for a page not being indexed include a noindex directive in a robots meta tag or X-Robots-Tag HTTP header, robots.txt rules preventing Googlebot from crawling the page, canonical tags pointing to a different URL, manual actions taken by Google against the site, or Google simply not deeming the page valuable enough to add to the index (reported as “Crawled - currently not indexed”). The tool’s explanation of each exclusion is crucial for diagnosing and rectifying these problems, ensuring that valuable content reaches its intended audience through search.
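
A noindex directive is a frequent exclusion cause, and it can live in either the HTML or the HTTP headers. This sketch checks both a `robots`/`googlebot` meta tag and an `X-Robots-Tag` header for `noindex` (or `none`, which implies it). The helper names are hypothetical, and the header handling is an illustrative simplification: it reads a single `X-Robots-Tag` value and strips a `googlebot:`-style prefix without checking which crawler the prefix names. Note that if robots.txt blocks crawling, Googlebot never sees a noindex directive on that page.

```python
from html.parser import HTMLParser


class _RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        a = dict(attrs)
        if (a.get("name") or "").lower() in ("robots", "googlebot"):
            self.directives += (a.get("content") or "").split(",")


def _is_noindex(directive: str) -> bool:
    # Header values may name a crawler first, e.g. "googlebot: noindex".
    rule = directive.split(":")[-1].strip().lower()
    return rule in ("noindex", "none")  # "none" means noindex, nofollow


def has_noindex(html: str, headers: dict) -> bool:
    """True if a robots meta tag or the X-Robots-Tag header carries noindex."""
    parser = _RobotsMetaParser()
    parser.feed(html)
    parser.close()
    header_value = ""
    for name, value in headers.items():
        if name.lower() == "x-robots-tag":  # header names are case-insensitive
            header_value = value
    directives = parser.directives + header_value.split(",")
    return any(_is_noindex(d) for d in directives)
```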

Diagnosing and Resolving Technical SEO Issues

The true power of the URL Inspection Tool lies in its ability to act as a diagnostic and troubleshooting instrument for a wide array of technical SEO challenges. By reporting specific statuses and error messages for each URL, it helps website owners uncover issues that might otherwise remain hidden while quietly hurting organic search performance.

Identifying Crawl Errors and Server Issues

When Googlebot attempts to access a URL, it expects to receive a successful response. The URL Inspection Tool can identify various crawl errors, such as:

- Soft 404s: Pages that return a 200 OK status code even though they tell users the page doesn’t exist, or that contain little or no meaningful content.

- Server Errors (5xx): These indicate problems with the website’s server, preventing Googlebot from accessing the page.

- Redirect Errors: Issues with redirect chains, incorrect redirect types, or redirects that lead to a loop.

By revealing these errors, the tool allows webmasters to work with their hosting providers or development teams to fix underlying server problems, optimize redirect configurations, and ensure that every page returns an appropriate status code. This proactive approach to crawl error resolution is fundamental for maintaining a healthy website that search engines can reliably explore.
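
Redirect chains and loops are easy to trace offline once you know each URL’s redirect target, for example from your server configuration or a site crawl export. This sketch walks a hypothetical URL-to-Location mapping and reports loops or chains that exceed a hop limit. The default of 10 hops reflects Google’s documentation stating that Googlebot follows up to 10 redirect hops; treat that figure as an assumption to verify, and keep real chains far shorter.

```python
from typing import Dict, Optional, Tuple


def trace_redirects(start: str, redirects: Dict[str, str],
                    max_hops: int = 10) -> Tuple[str, int, Optional[str]]:
    """Follow a URL -> Location map from start.

    Returns (last_url_reached, hops_taken, problem), where problem is
    None, "loop", or "too_many_hops".
    """
    seen = {start}
    url, hops = start, 0
    while url in redirects:
        if hops == max_hops:
            return url, hops, "too_many_hops"
        url = redirects[url]
        hops += 1
        if url in seen:  # revisiting a URL means the chain never terminates
            return url, hops, "loop"
        seen.add(url)
    return url, hops, None


if __name__ == "__main__":
    # Hypothetical redirect map for illustration.
    rules = {"/old": "/older-still", "/older-still": "/new", "/x": "/y", "/y": "/x"}
    print(trace_redirects("/old", rules))
    print(trace_redirects("/x", rules))
```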

Verifying Canonicalization and Duplicate Content

Duplicate content can be a significant hurdle for SEO, as it can dilute link equity and confuse search engines about which version of a page to rank. The URL Inspection Tool is instrumental in verifying canonical tags, a critical mechanism for signaling to Google which URL is the preferred version of a piece of content.

The tool displays both the user-declared canonical, the URL your page’s canonical tag points to, and the Google-selected canonical for the inspected page. Comparing the two confirms whether a self-referencing canonical tag has been implemented correctly or whether Google has chosen a different canonical based on other signals. Discrepancies here can mean that important pages are not indexed or ranked as intended. By ensuring correct canonicalization, website owners can consolidate ranking signals and improve the visibility of their primary content.
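
To check a page’s declared canonical yourself, you can extract the `rel="canonical"` link from its HTML and compare it with the page’s own URL. A minimal sketch with hypothetical helper names; it ignores canonicals sent in an HTTP `Link` header and compares URLs as exact strings, without normalizing case, trailing slashes, or query parameters.

```python
from html.parser import HTMLParser
from typing import Optional
from urllib.parse import urljoin


class _CanonicalParser(HTMLParser):
    """Records the href of the first <link rel="canonical"> element."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        rel_values = (a.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel_values and self.canonical is None:
            self.canonical = a.get("href")


def declared_canonical(html: str, page_url: str) -> Optional[str]:
    """Return the absolute URL of the first rel=canonical link, if any."""
    parser = _CanonicalParser()
    parser.feed(html)
    parser.close()
    if parser.canonical is None:
        return None
    return urljoin(page_url, parser.canonical)  # resolve relative hrefs


def is_self_referencing(html: str, page_url: str) -> bool:
    return declared_canonical(html, page_url) == page_url
```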

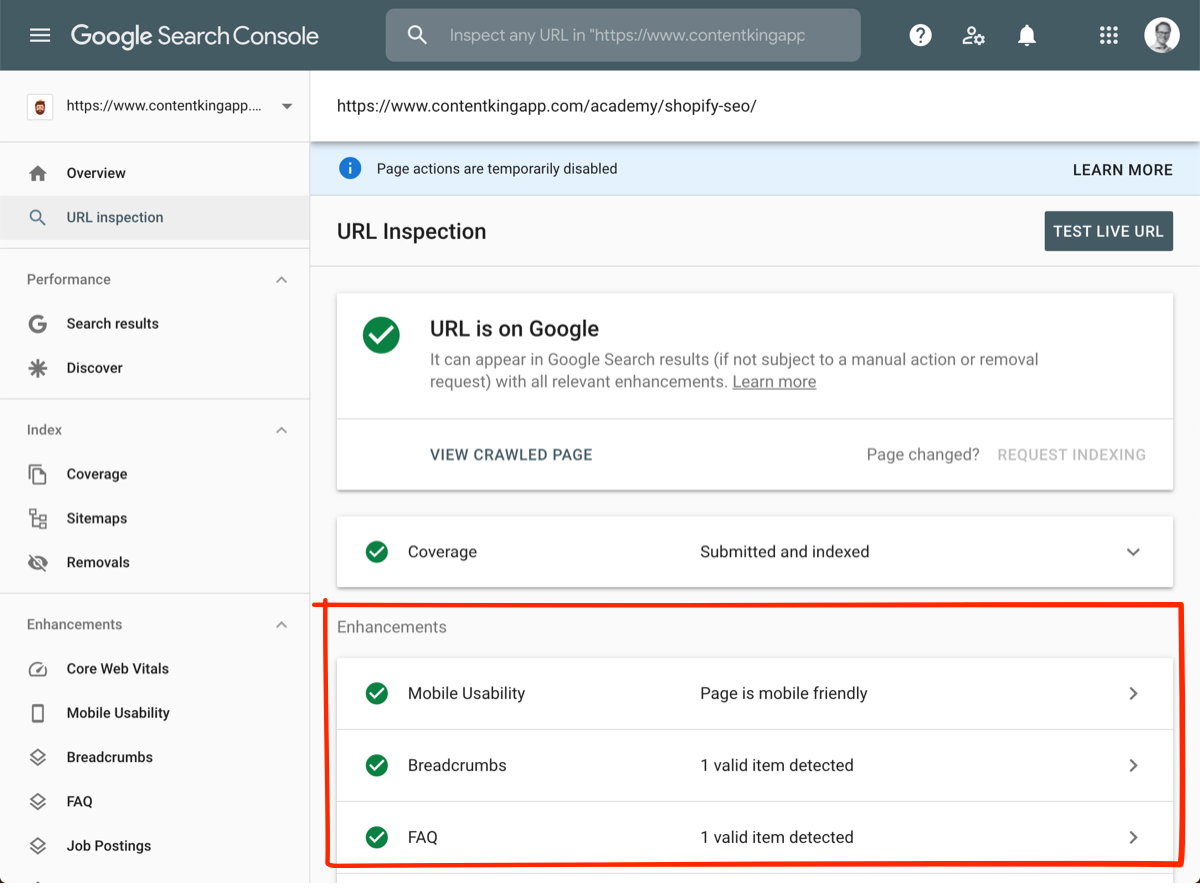

Mobile Usability and Page Experience

With the increasing dominance of mobile search, ensuring a seamless mobile experience is no longer optional; it’s a necessity. The URL Inspection Tool assesses how Google perceives the mobile usability of a page. It can identify issues that might hinder the user experience on mobile devices, such as:

- Content Wider Than Screen: When text and images don’t fit within the mobile viewport.

- Clickable Elements Too Close Together: Making it difficult for users to tap the intended links or buttons.

- Viewport Not Set: Without a properly configured viewport meta tag, the page may not display correctly on mobile devices.
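
A missing or misconfigured viewport is simple to check in the HTML. This sketch, built around a hypothetical `viewport_ok` helper, treats a page as configured when a viewport meta tag sets `width=device-width`, the value Google’s mobile guidance recommends (typically as `width=device-width, initial-scale=1`). It cannot detect the other two issues in the list, which depend on layout after rendering.

```python
from html.parser import HTMLParser


class _ViewportParser(HTMLParser):
    """Captures the content attribute of <meta name="viewport">."""

    def __init__(self):
        super().__init__()
        self.content = None

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "meta" and (a.get("name") or "").lower() == "viewport":
            self.content = a.get("content") or ""


def viewport_ok(html: str) -> bool:
    """True if a viewport meta tag sets width=device-width."""
    parser = _ViewportParser()
    parser.feed(html)
    parser.close()
    if parser.content is None:
        return False  # no viewport tag at all
    settings = {}
    for part in parser.content.split(","):
        key, _, value = part.partition("=")
        settings[key.strip().lower()] = value.strip().lower()
    return settings.get("width") == "device-width"
```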

Beyond mobile usability, Google’s page experience signals include Core Web Vitals. These are reported from real-user data for groups of similar URLs in Search Console’s Core Web Vitals report rather than in the URL Inspection result itself. Reviewing both gives a holistic view of how Google evaluates the user experience on a page and where to improve it, which can influence search rankings and user engagement.
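
Core Web Vitals are judged against published thresholds, evaluated at the 75th percentile of real-user page loads, for Largest Contentful Paint (LCP), Interaction to Next Paint (INP, which replaced First Input Delay in March 2024), and Cumulative Layout Shift (CLS). A minimal classifier using the thresholds as documented on web.dev at the time of writing; `rate` is a hypothetical helper name.

```python
# (good upper bound, needs-improvement upper bound) per metric.
# Values above the second bound are rated "poor".
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}


def rate(metric: str, value: float) -> str:
    """Classify a 75th-percentile value for one Core Web Vitals metric."""
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"
```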

Optimizing Content for Search Engine Understanding

Beyond the purely technical aspects, the URL Inspection Tool also offers valuable insights into how Google understands and interprets the content of a page. This allows for a more informed approach to content creation and optimization, ensuring that the intended message resonates with both users and search engines.

Examining Structured Data Implementation

Structured data, such as schema.org markup, helps search engines understand the context and meaning of your content. The URL Inspection Tool reports the structured data Google detected on a page for the rich result types it supports, highlighting any errors or warnings in that markup. Correctly implemented structured data can make a page eligible for rich results in search, such as star ratings, event details, or product information, which can improve click-through rates.

The tool indicates whether Google could parse the structured data, which rich result types it detected, and any errors found. This direct feedback loop is invaluable for developers and content managers refining their structured data, helping ensure their pages stay eligible for these search result enhancements.
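
Before inspecting a URL, you can sanity-check JSON-LD locally. This sketch extracts `<script type="application/ld+json">` blocks, reports JSON syntax errors, and lists the `@type` values it finds, including entities nested in an `@graph`. It does not check Google’s rich result requirements, which is what the tool reports; the helper names and sample markup are hypothetical.

```python
import json
from html.parser import HTMLParser


class _JsonLdParser(HTMLParser):
    """Collects the text of each <script type="application/ld+json"> element."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and (dict(attrs).get("type") or "").lower() == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data


def jsonld_types(html: str):
    """Return (list of @type values, list of JSON parse error messages)."""
    parser = _JsonLdParser()
    parser.feed(html)
    parser.close()
    types, errors = [], []
    for raw in parser.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            errors.append(str(exc))
            continue
        for item in data if isinstance(data, list) else [data]:
            if not isinstance(item, dict):
                continue
            # "@graph" wraps several entities in a single block.
            for node in item.get("@graph", [item]):
                t = node.get("@type") if isinstance(node, dict) else None
                if isinstance(t, list):
                    types.extend(t)
                elif t:
                    types.append(t)
    return types, errors
```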

Understanding Page Content and Indexing Parameters

While the tool doesn’t show your content exactly as a browser presents it to end-users, it does let you view the HTML Googlebot retrieved for the page. From that HTML you can check that title tags, meta descriptions, and headings are present in the version Google sees, so these crucial on-page SEO elements can be recognized.
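
Given the HTML Googlebot retrieved (for example, copied from the tool’s crawled-page view), a short parser can list the title, meta description, and top-level headings for review. A minimal sketch using Python’s `html.parser`; the helper names are hypothetical, and it only looks at `h1` to `h3`.

```python
from html.parser import HTMLParser


class _OnPageParser(HTMLParser):
    """Extracts <title>, the meta description and h1-h3 text from HTML."""

    HEADINGS = ("h1", "h2", "h3")

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = None
        self.headings = []
        self._current = None  # title or heading tag whose text is being collected

    def handle_starttag(self, tag, attrs):
        if tag == "title" or tag in self.HEADINGS:
            self._current = tag
            if tag in self.HEADINGS:
                self.headings.append((tag, ""))
        elif tag == "meta":
            a = dict(attrs)
            if (a.get("name") or "").lower() == "description":
                self.description = a.get("content")

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None

    def handle_data(self, data):
        # Text inside nested inline tags (e.g. <em>) still belongs to the heading.
        if self._current == "title":
            self.title += data
        elif self._current in self.HEADINGS:
            tag, text = self.headings[-1]
            self.headings[-1] = (tag, text + data)


def on_page_elements(html: str) -> dict:
    parser = _OnPageParser()
    parser.feed(html)
    parser.close()
    return {
        "title": parser.title.strip(),
        "description": parser.description,
        "headings": [(tag, text.strip()) for tag, text in parser.headings],
    }
```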

Furthermore, the tool can reveal if specific content elements might be causing indexing issues. For instance, if a critical piece of content is dynamically loaded and not rendered properly, it might not be recognized by Googlebot, even if it appears on the page in a browser. Understanding these nuances allows for targeted content optimization efforts, ensuring that the most important information is accessible and understandable to search engines.

In conclusion, the URL Inspection Tool is an indispensable component of any modern SEO strategy. Its ability to demystify Google’s crawling, rendering, and indexing processes, coupled with its power to diagnose and resolve technical issues, makes it a vital instrument for website owners. By leveraging the insights provided by this tool, individuals and organizations can proactively manage their online presence, ensure optimal discoverability, and ultimately drive more organic traffic to their websites. It transforms the often opaque world of search engine optimization into a more transparent and manageable domain, empowering users to take informed action for tangible results.