Foundational Data as ‘Stock’ in Advanced Drone Systems

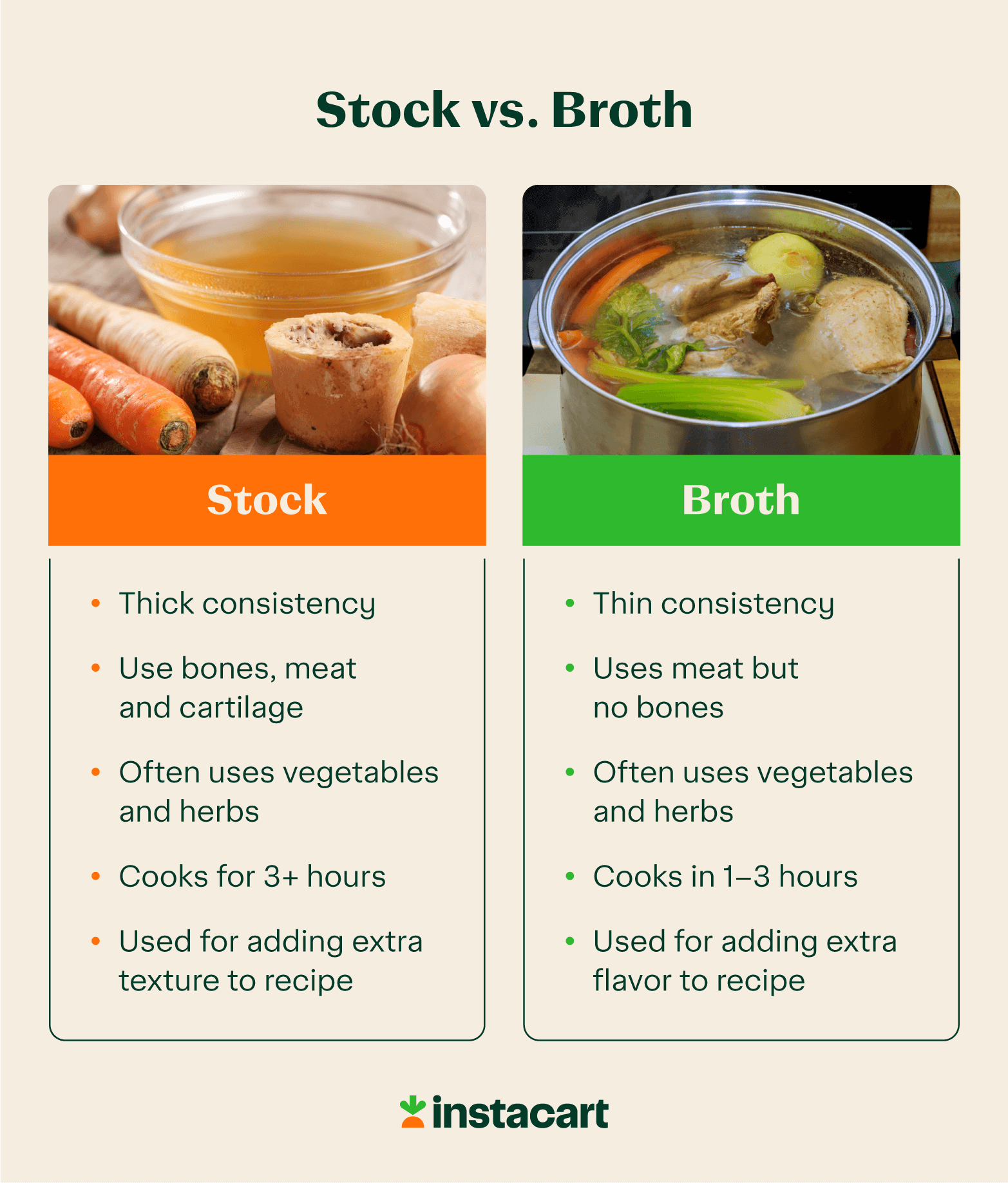

In the culinary world, soup stock is the fundamental, often unseasoned, liquid derived from simmering bones, vegetables, and aromatics. It’s rich in gelatin, body, and potential flavor but is rarely consumed on its own; it serves as a robust base for other dishes. Applying this analogy to advanced drone technology, ‘stock’ can be conceptualized as the raw, unprocessed, and foundational data streams emanating from various onboard sensors and external informational layers. This dense, unrefined influx of information forms the absolute essence from which all intelligent drone operations and sophisticated analytical processes ultimately derive.

This ‘stock’ within drone systems encompasses a wide array of initial data points. It includes the unfiltered outputs of Inertial Measurement Units (IMUs), which combine accelerometers, gyroscopes, and often magnetometers, providing raw insights into the drone’s motion and orientation. Basic GPS signals, before any refinement or correction, also fall into this category, offering initial, albeit potentially imprecise, positional data. Furthermore, vast streams of unprocessed telemetry (everything from motor RPMs and battery voltages to internal system temperatures and communication link statuses) contribute to this foundational data pool. For drones equipped with advanced perception capabilities, the raw point clouds generated by Lidar or Radar systems, representing uninterpreted 3D spatial information, are prime examples of ‘stock’. Even uncompressed video feeds, delivering raw pixel data from onboard cameras prior to any processing for object recognition or situational awareness, fit this description. Lastly, base mapping data, comprising topographical information, satellite imagery layers, and general geographical datasets, while essential, is considered ‘stock’ until it is analyzed and contextualized for specific mission parameters.

The significance of this ‘stock’ is profound. It represents the unfiltered truth about the drone’s immediate state and its surrounding environment. It provides the essential building blocks, the fundamental empirical observations that are critical for any subsequent intelligent processing. However, much like culinary stock, it lacks immediate utility for autonomous decision-making or direct human interpretation without substantial further processing. It contains immense potential information density but is low in direct intelligence or actionable insight. This foundational data is the ‘unseasoned’ raw material, awaiting the complex ‘cooking process’ that will transform it into something usable and intelligent for complex aerial operations.

Refined Intelligence as ‘Broth’ for Autonomous Operations

In contrast to stock, culinary broth is typically a more refined liquid, often seasoned, made with meat and vegetables (though usually with less emphasis on bones), lighter in body, and intended to be consumed as is, or as a base for lighter, more delicate soups. It is a ready-to-use, palatable output. Transposing this to drone technology, ‘broth’ represents the processed, enriched, and readily actionable intelligence derived from the raw ‘stock’ data. This is the vital information that autonomous systems, sophisticated AI algorithms, and human operators can directly consume and utilize for real-time decision-making, mission execution, and insightful analysis.

The transformation from ‘stock’ to ‘broth’ within a drone’s operational framework yields several key types of refined intelligence. Stabilized Flight Data is a prime example: filtered and fused sensor data (often produced by algorithms such as Kalman filters or complementary filters) provides accurate and reliable estimates of the drone’s attitude, heading, velocity, and position. This corrected data is crucial for stable flight control and precision maneuvers. Precise GPS Positioning, achieved through technologies like RTK (Real-Time Kinematic) or PPK (Post-Processed Kinematic), takes raw GPS signals and refines them to achieve centimeter-level accuracy, which is indispensable for high-precision mapping, surveying, and targeted operations. Optimized Telemetry goes beyond raw data, presenting key performance indicators, comprehensive system health diagnostics, and even predictive analytics derived from the vast streams of foundational telemetry, allowing operators to monitor drone status proactively.
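To make the idea of Stabilized Flight Data concrete, here is a minimal sketch of a single-axis complementary filter. It is an illustration only, not any particular flight controller's implementation: the function name, the simplified sign convention for the accelerometer tilt, and the blend factor `alpha=0.98` are all assumptions chosen for readability.

```python
import math


def complementary_filter(pitch_prev, gyro_rate, accel_x, accel_z, dt, alpha=0.98):
    """Blend a gyro-integrated pitch with an accelerometer tilt estimate.

    All angles are in radians. The axis and sign convention here is a
    simplified assumption; real airframes define their own.
    """
    # Short-term estimate: integrate the gyroscope's angular rate.
    pitch_gyro = pitch_prev + gyro_rate * dt
    # Long-term estimate: tilt inferred from the measured gravity direction.
    pitch_accel = math.atan2(accel_x, accel_z)
    # Trust the gyro for fast changes and the accelerometer to cancel drift.
    return alpha * pitch_gyro + (1.0 - alpha) * pitch_accel


# Example: a stationary drone tilted by 0.1 rad, filter starting from 0.
pitch = 0.0
for _ in range(500):
    pitch = complementary_filter(pitch, 0.0, math.sin(0.1), math.cos(0.1), dt=0.01)
print(round(pitch, 3))
```

With a zero gyro rate, the estimate settles on the accelerometer's tilt angle. A higher `alpha` would respond more slowly to the accelerometer but reject more of its vibration noise.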

Furthermore, within advanced sensing, Semantic Scene Understanding emerges as a powerful form of ‘broth’. This involves processing raw Lidar and camera data to not only identify objects and obstacles but also to classify them (e.g., distinguishing between a tree, a building, or a person) and understand their spatial relationship and potential implications for flight paths. Object Tracking Data, crucial for applications like AI follow modes or dynamic surveillance, provides continuously updated information on the location, velocity, and predicted trajectory of specific targets. Finally, Mission-Specific Maps represent a highly refined ‘broth’, where foundational mapping ‘stock’ is overlaid with critical flight paths, dynamically updated no-fly zones, identified points of interest, or 3D models specifically relevant to the ongoing task, providing immediate operational context.

The significance of this ‘broth’ cannot be overstated. It is the catalyst that enables genuine intelligent functionality in drones. It marks the critical distinction between a drone merely receiving raw sensor input and a drone actively understanding its environment. This refined intelligence allows the drone to perform complex actions: avoiding obstacles intelligently, maintaining a rock-steady hover in challenging conditions, executing intricate, pre-programmed or adaptive flight paths, and accurately tracking a moving target. It transforms disparate data into digestible, actionable information, directly applicable for real-time control, high-level mission objectives, and informed human intervention. This ‘broth’ is the culmination of complex algorithms working tirelessly on the raw ‘stock’ to produce clarity, coherence, and profound utility in the realm of aerial robotics.

The ‘Cooking Process’: Data Transformation and Fusion

The metaphorical “cooking process” in drone technology is where raw ‘stock’ is meticulously transformed into refined ‘broth’. Just as a chef simmers, filters, seasons, and sometimes reduces or clarifies stock to create broth, sophisticated computational processes undertake an intensive journey of refinement and enrichment for drone data. This transformation is the core of how drones transition from merely collecting data to intelligently interacting with their environment.

A primary component of this ‘cooking process’ is Sensor Fusion. This involves advanced algorithms, such as Extended Kalman Filters (EKF), Unscented Kalman Filters (UKF), or Particle Filters, that combine data from multiple disparate sensors. Each sensor has its strengths and weaknesses; for instance, GPS provides absolute position but is noisy, updates relatively slowly, and can drop out when satellite visibility is poor, while an IMU gives excellent short-term relative motion but accumulates drift over time. Sensor fusion synthesizes these inputs, overcoming individual sensor limitations to provide a more robust, accurate, and reliable estimate of the drone’s state (position, velocity, orientation). This is akin to a chef blending different foundational ingredients to create a richer, more complex, and balanced flavor profile than any single ingredient could offer.
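The predict-and-correct pattern behind these filters can be sketched with a scalar, linear Kalman filter. This is deliberately simpler than the EKF or UKF named above: it tracks one position coordinate, treats the IMU's dead-reckoned displacement as the prediction input, and treats a GPS fix as the correction. The function name and the noise variances are illustrative assumptions.

```python
def kalman_step(x, p, u, z, q, r):
    """One predict/update cycle of a scalar Kalman filter.

    x: current position estimate      p: variance of that estimate
    u: displacement over this step, from IMU dead reckoning (assumed input)
    z: GPS position measurement
    q: process noise variance         r: GPS measurement noise variance
    """
    # Predict: move the estimate by the IMU displacement; uncertainty grows.
    x_pred = x + u
    p_pred = p + q
    # Update: weight the GPS fix by how uncertain the prediction is.
    k = p_pred / (p_pred + r)          # Kalman gain, between 0 and 1
    x_new = x_pred + k * (z - x_pred)
    p_new = (1.0 - k) * p_pred         # uncertainty shrinks after the update
    return x_new, p_new


# Example: IMU says we moved 1 m, GPS says we are at 3 m.
x, p = kalman_step(x=0.0, p=1.0, u=1.0, z=3.0, q=0.1, r=1.0)
print(x, p)
```

The fused estimate lands between the IMU prediction and the GPS fix, and its variance is lower than the variance of the prediction alone, which is the sense in which fusion outperforms either sensor.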

Beyond fusion, Signal Processing plays a crucial role. This involves a suite of techniques: filtering to remove noise from raw signals, deconvolution to separate mixed signals, and various enhancement methods to extract meaningful patterns that might be obscured in the initial ‘stock’. Whether it’s cleaning up noisy lidar returns or enhancing low-light camera feeds, signal processing makes the underlying information clearer and more accessible for subsequent analysis.
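As a small illustration of noise filtering, the sketch below applies a first-order low-pass filter (an exponential moving average) to a list of samples. It stands in for the much broader family of techniques described above; the function name and the smoothing factor `alpha=0.2` are assumptions for the example.

```python
def low_pass(samples, alpha=0.2):
    """First-order low-pass filter (exponential moving average).

    alpha near 0 smooths heavily; alpha near 1 follows the raw signal.
    """
    if not samples:
        return []
    filtered = [samples[0]]  # seed the filter with the first sample
    for s in samples[1:]:
        # Move a fraction `alpha` of the way toward each new sample.
        filtered.append(alpha * s + (1.0 - alpha) * filtered[-1])
    return filtered


# Example: a single spike in an otherwise flat signal is attenuated.
raw = [0.0, 0.0, 10.0, 0.0, 0.0]
print(low_pass(raw))
```

The trade-off is lag: the same smoothing that suppresses the spike also delays the filter's response to a genuine change in the signal.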

Machine Learning and Artificial Intelligence (AI) algorithms serve as the ‘seasoning’ that adds intelligence and flavor to the data ‘broth’. Neural networks, deep learning models, and other AI techniques are employed to analyze vast volumes of ‘stock’ data—be it imagery, lidar point clouds, or acoustic signatures. These algorithms can identify objects with remarkable accuracy, classify terrain types, predict the movements of dynamic elements in the environment, or detect subtle anomalies that a human operator might miss. For example, an AI model can process raw video ‘stock’ to identify a specific type of crop or detect early signs of plant disease, converting mere pixels into agricultural intelligence.

Data Reduction and Compression are also vital steps in creating an efficient ‘broth’. Raw data can be massive, especially from high-resolution sensors. This stage involves minimizing redundant information while meticulously preserving critical details. This makes the ‘broth’ not only manageable for real-time processing but also efficient for transmission to ground stations, ensuring that vital intelligence is available without overwhelming communication bandwidths.
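One common way to thin a dense point cloud is voxel-grid downsampling, sketched below in plain Python. It is only one possible reduction strategy and is lossy by design: every point that falls in the same cubic cell is replaced by the cell's centroid. The function name and the voxel size used in the example are assumptions.

```python
import math
from collections import defaultdict


def voxel_downsample(points, voxel_size):
    """Replace all points sharing a cubic voxel with their centroid."""
    buckets = defaultdict(list)
    for x, y, z in points:
        # Integer voxel index along each axis.
        key = (
            math.floor(x / voxel_size),
            math.floor(y / voxel_size),
            math.floor(z / voxel_size),
        )
        buckets[key].append((x, y, z))

    reduced = []
    for pts in buckets.values():
        n = len(pts)
        reduced.append((
            sum(p[0] for p in pts) / n,
            sum(p[1] for p in pts) / n,
            sum(p[2] for p in pts) / n,
        ))
    return reduced


# Example: two nearby points merge; the distant one is kept.
cloud = [(0.1, 0.1, 0.1), (0.3, 0.3, 0.3), (1.5, 0.2, 0.2)]
print(voxel_downsample(cloud, voxel_size=1.0))
```

A smaller `voxel_size` preserves more detail at the cost of more points to process and transmit.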

Finally, Contextualization is where external knowledge is integrated with ‘stock’ data to provide richer meaning. This involves combining raw sensor inputs with mission parameters, historical data (e.g., previous flight paths, known environmental conditions), and pre-existing environmental models. For instance, knowing that a processed lidar point cloud represents a tree is one level of ‘broth’; knowing that it’s a specific type of tree in a conservation area with known wind patterns, and that this tree needs to be avoided during a specific flight trajectory, adds multiple layers of contextual intelligence. This transforms simple data into actionable understanding, enabling the drone to make informed decisions tailored to its specific operational context.
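A very small example of contextualization is tagging planned waypoints against a no-fly zone. The sketch below uses the standard ray-casting point-in-polygon test and assumes local planar coordinates in meters rather than latitude and longitude; the function names and the zone geometry are hypothetical.

```python
def inside_polygon(point, polygon):
    """Ray-casting test: is `point` inside `polygon` (list of (x, y) vertices)?"""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does this edge straddle the horizontal line through the point?
        if (y1 > y) != (y2 > y):
            # x coordinate where the edge crosses that line.
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def annotate_waypoints(waypoints, no_fly_zone):
    """Attach mission context (allowed or not) to raw waypoints."""
    return [
        {"waypoint": wp, "allowed": not inside_polygon(wp, no_fly_zone)}
        for wp in waypoints
    ]


# Example: a square zone and two candidate waypoints.
zone = [(0, 0), (10, 0), (10, 10), (0, 10)]
print(annotate_waypoints([(5, 5), (15, 5)], zone))
```

The raw coordinates are unchanged; what turns them into ‘broth’ is the added flag that a planner can act on directly.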

Impact on Drone Capabilities and Applications

The meticulous transformation from raw ‘stock’ to refined ‘broth’ has a profound and pervasive impact on virtually every aspect of a drone’s capabilities and its diverse applications across various industries. This process is not merely an enhancement; it is fundamental to unlocking the true potential of autonomous aerial systems.

Enhanced Autonomy is perhaps the most direct beneficiary. The quality, accuracy, and richness of the ‘broth’ directly correlate with the level of autonomy a drone can achieve. A superior ‘broth’ of refined data allows for the execution of far more complex autonomous flight paths, including navigating intricate environments, performing precise object manipulation, and reliably executing sophisticated obstacle avoidance maneuvers without constant human intervention. Without this refined intelligence, drones would be limited to basic, pre-programmed flights with minimal real-time environmental awareness.

For Precision Navigation and Mapping, the transition from raw GPS ‘stock’ to RTK/PPK ‘broth’ is revolutionary. In applications such as photogrammetry, large-scale surveying, and detailed infrastructure inspection, this refinement enables centimeter-level positional accuracy. This transforms raw aerial imagery or point clouds into precise, measurable 3D models and maps that are indispensable for construction planning, land management, and fault detection, elevating the utility of drone data from observational to quantitative.

In the realm of Advanced Remote Sensing, the extraction of ‘broth’ from specialized sensor ‘stock’ delivers unparalleled insights. For environmental monitoring, precision agriculture, or geological surveys, the ‘broth’ derived from multispectral, hyperspectral, or thermal imagery ‘stock’ can provide actionable intelligence such as crop health indices, water stress levels in vegetation, heat signatures indicating energy loss in buildings, or mineral compositions—information that raw imagery alone cannot convey. This allows for targeted interventions and informed resource management.
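One widely used crop health index is the Normalized Difference Vegetation Index, NDVI = (NIR - Red) / (NIR + Red), computed per pixel from near-infrared and red reflectance. The sketch below assumes the two bands arrive as equally sized nested lists of reflectance values; the function names and the sample values are illustrative.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index for one pixel."""
    total = nir + red
    if total == 0:
        return 0.0  # avoid division by zero on empty or masked pixels
    return (nir - red) / total


def ndvi_map(nir_band, red_band):
    """Apply NDVI pixel by pixel to two equally sized 2D bands."""
    return [
        [ndvi(n, r) for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir_band, red_band)
    ]


# Example: a 1x2 image with one vegetated and one bare pixel (made-up values).
nir_band = [[0.5, 0.2]]
red_band = [[0.1, 0.2]]
print(ndvi_map(nir_band, red_band))
```

Values near 1 generally indicate dense, healthy vegetation, while values near 0 or below suggest bare soil, water, or built surfaces. Any threshold for "healthy" depends on the crop and sensor and is not implied here.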

Intelligent Interaction, exemplified by features like AI Follow Mode and Object Tracking, relies entirely on the quality of this data transformation. The ability of a drone to identify, follow, and predict the movements of a subject (whether it’s a vehicle, a person, or wildlife) hinges on continuously processing raw visual and spatial ‘stock’ into a dynamically updated ‘broth’ of target location, velocity, and predicted path. This requires low-latency analysis and predictive capabilities to maintain lock and adjust flight parameters accordingly.
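The loop of estimating a target's location and velocity and then predicting its path can be sketched with an alpha-beta filter, a lightweight relative of the Kalman filter. This one-dimensional version is an assumption-laden illustration rather than how any specific follow mode works; the gains `alpha=0.5` and `beta=0.1` and the function names are chosen only for the example.

```python
def alpha_beta_step(pos, vel, measured_pos, dt, alpha=0.5, beta=0.1):
    """One update of a 1D alpha-beta tracker.

    pos, vel: current estimates; measured_pos: new detection of the target.
    """
    # Predict where the target should be, assuming constant velocity.
    pos_pred = pos + vel * dt
    # How far the new detection is from that prediction.
    residual = measured_pos - pos_pred
    # Correct position and velocity by a fraction of the residual.
    pos_new = pos_pred + alpha * residual
    vel_new = vel + (beta / dt) * residual
    return pos_new, vel_new


def predict_ahead(pos, vel, horizon):
    """Extrapolate the target position `horizon` seconds into the future."""
    return pos + vel * horizon


# Example: a target moving at a steady 2 m/s, observed every 0.1 s.
pos, vel, dt = 0.0, 0.0, 0.1
for k in range(1, 201):
    pos, vel = alpha_beta_step(pos, vel, measured_pos=2.0 * k * dt, dt=dt)
print(round(vel, 3), round(predict_ahead(pos, vel, 1.0), 3))
```

Even though the tracker starts with a velocity estimate of zero, it converges on the target's true speed, and the extrapolated position is what a follow controller would steer toward.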

Finally, the integrity of the data ‘broth’ is paramount for Safety and Reliability. A well-prepared ‘broth’ of comprehensive situational awareness allows drones to operate with significantly enhanced safety margins. By cross-checking redundant data streams and applying robust decision protocols, drones can effectively mitigate risks arising from unpredictable environmental conditions, sudden changes in mission parameters, or potential system anomalies. This leads to more robust flight operations and significantly reduces the likelihood of accidents or mission failures, protecting both the drone and its surroundings.

Future Directions: Crafting the Perfect ‘Broth’

The continuous evolution of drone technology is inextricably linked to the quest for crafting an ever-more perfect ‘broth’ from its foundational ‘stock’. As demands for autonomy, precision, and intelligence grow, so too does the sophistication of the data transformation processes.

A significant trend is the move towards Edge Computing and AI on Board. Traditionally, much of the heavy data processing to convert ‘stock’ into ‘broth’ happened on ground-based servers. However, the future is rapidly shifting towards performing more of this computation directly on the drone itself, at the ‘edge’ of the network. This involves integrating more powerful, compact AI chips and advanced processing units directly into the drone’s hardware. The benefit is immediate: reduced latency in decision-making, significantly lower bandwidth requirements for data transmission, and enhanced real-time responsiveness, crucial for truly autonomous and time-sensitive operations. This allows the drone to generate its own ‘broth’ in situ, leading to faster, more independent reactions.

Another critical area of development is Standardization and Interoperability. Just as culinary standards allow chefs to share recipes and techniques, developing common frameworks and protocols for data ‘stock’ acquisition and ‘broth’ generation will foster greater interoperability between diverse drone platforms, sensor payloads, and ground control systems. This standardization will streamline data exchange, facilitate joint operations, and accelerate innovation across the entire drone ecosystem, preventing proprietary silos from limiting potential.

Adaptive Learning Systems represent a leap in intelligence. Future drones will not rely on static algorithms for ‘broth’ generation. Instead, they will incorporate machine learning models that continuously refine their data processing techniques. By learning from new ‘stock’ data and accumulating operational experiences, these systems will autonomously improve their intelligence and decision-making capabilities over time. This adaptability will enable drones to perform more effectively in dynamic, novel, or previously unencountered environments, making them more resilient and versatile.

Finally, the frontier extends to Predictive Analytics. Moving beyond generating real-time ‘broth’, the next generation of drone intelligence aims to produce ‘predictive broth’. This involves systems that can anticipate future states, potential issues, and upcoming environmental changes based on current ‘stock’ data combined with historical patterns and advanced modeling. This proactive intelligence will significantly enhance safety by foreseeing potential hazards, optimize mission success by predicting optimal flight paths, and enable preemptive maintenance by forecasting component failures.
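A toy version of ‘predictive broth’ is forecasting when battery voltage will reach a cutoff by fitting a straight line to recent telemetry. Real discharge curves are nonlinear, so the linear trend here is a simplifying assumption, as are the function names, the sample voltages, and the 14.0 V threshold.

```python
def fit_line(ts, vs):
    """Ordinary least-squares fit of v = slope * t + intercept."""
    n = len(ts)
    mean_t = sum(ts) / n
    mean_v = sum(vs) / n
    sxx = sum((t - mean_t) ** 2 for t in ts)
    if sxx == 0:
        raise ValueError("need at least two distinct timestamps")
    sxy = sum((t - mean_t) * (v - mean_v) for t, v in zip(ts, vs))
    slope = sxy / sxx
    return slope, mean_v - slope * mean_t


def time_to_threshold(ts, vs, threshold):
    """Predict the time at which the fitted trend crosses `threshold`.

    Returns None when the trend is flat or rising (no crossing predicted).
    """
    slope, intercept = fit_line(ts, vs)
    if slope >= 0:
        return None
    return (threshold - intercept) / slope


# Example: voltage samples taken once a minute (made-up values).
times = [0.0, 60.0, 120.0, 180.0]      # seconds since takeoff
volts = [16.8, 16.5, 16.2, 15.9]       # pack voltage
print(time_to_threshold(times, volts, threshold=14.0))
```

A forecast like this lets a mission planner schedule a return before the cutoff is reached instead of reacting once it has been crossed.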

The metaphor of “soup stock and broth” thus serves as a powerful and intuitive analogy for understanding the fundamental distinction between raw, foundational data and refined, actionable intelligence within the realm of advanced drone technology. Just as a chef carefully transforms basic ingredients into a rich and flavorful broth, so too do sophisticated algorithms and computational systems transform mere sensor readings into the intelligent insights that power the next generation of autonomous aerial systems. The journey from ‘stock’ to ‘broth’ is central to unlocking the full potential of drones, moving them from simple flying machines to intelligent, context-aware platforms capable of executing complex tasks and making nuanced decisions with unprecedented autonomy.