Demystifying Big O: The Foundation of Efficient Algorithms in Drone Technology

In the rapidly evolving landscape of drone technology, where autonomous flight, real-time data processing, and AI-driven capabilities are paramount, understanding the underlying principles of algorithmic efficiency is not just an advantage—it’s a necessity. At the heart of this understanding lies “Big O” notation, a fundamental concept borrowed from computer science that provides a powerful language for describing the performance and scalability of algorithms. Far from being a mere academic exercise, Big O is the bedrock upon which robust, responsive, and resource-efficient drone systems are built.

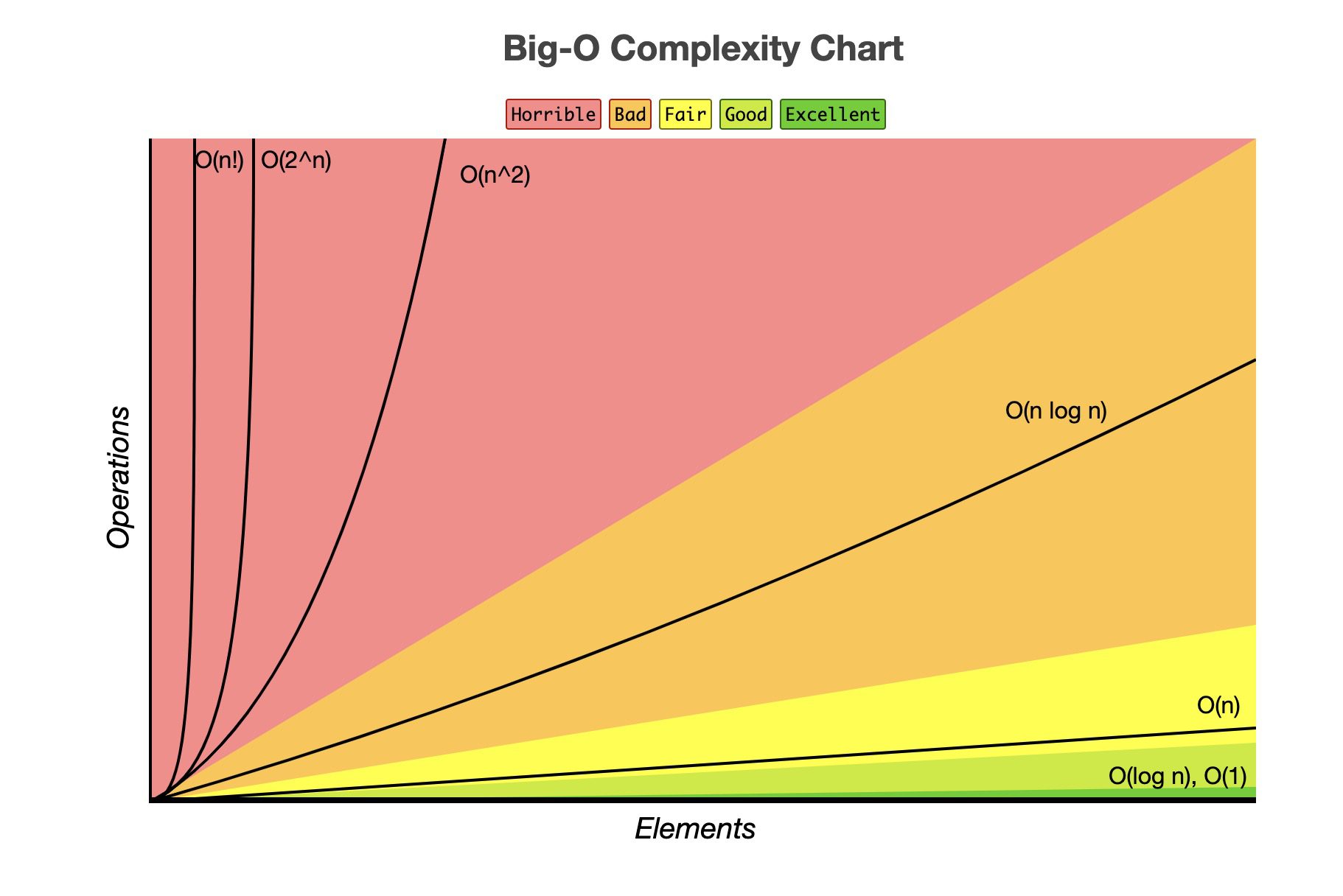

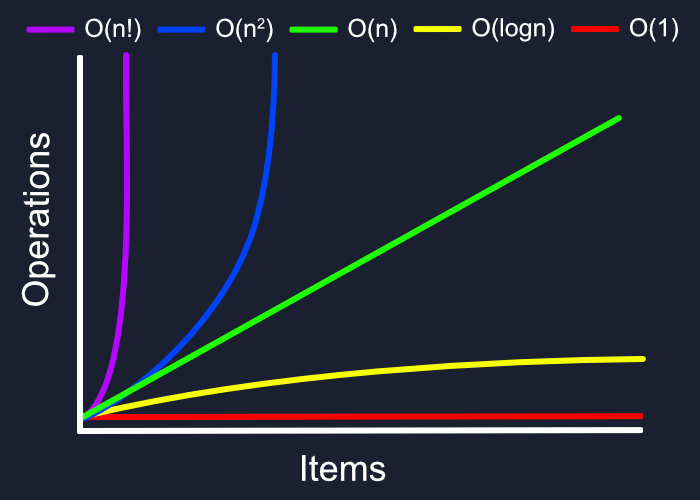

At its core, Big O notation quantifies how the running time or space requirements of an algorithm grow as the size of the input data increases. It’s not about measuring the exact execution time in seconds, which can vary wildly depending on hardware, programming language, and other transient factors. Instead, Big O offers an asymptotic analysis: it gives an upper bound on an algorithm’s growth rate, most often quoted for the worst-case input, and so provides a high-level classification of its efficiency. This abstract yet precise mathematical tool allows engineers and developers to predict how their systems will perform under increasing load, a critical consideration for drones handling ever-larger datasets from their onboard sensors, executing complex navigational tasks, or managing multiple AI processes simultaneously.

Consider a drone’s flight control system. If an algorithm responsible for processing sensor data and adjusting motor speeds has a high Big O complexity, it might struggle to keep up with real-time demands as the sensor input rate or environmental complexity increases. This could lead to latency, instability, or even system failure. Conversely, an algorithm with a lower Big O complexity will maintain predictable performance, ensuring the drone responds reliably and safely regardless of operational conditions.

Big O focuses on the dominant term in an algorithm’s complexity function, ignoring constant factors and lower-order terms. For instance, an algorithm that takes 5n + 10 operations for an input size n is classified as O(n) – “linear time.” The 5 and 10 become less significant as n grows very large, and the n term dominates the growth. This simplification is key to its utility, allowing for clear comparisons between fundamentally different approaches to solving a problem. From path planning and obstacle avoidance to advanced image recognition and remote sensing data analysis, every computational task on a drone benefits from an algorithm designed with Big O efficiency in mind.
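To make the dominant-term idea concrete, here is a minimal sketch that evaluates the 5n + 10 cost model from the paragraph above and shows the ratio of total operations to n settling toward the constant 5. The function names are illustrative, not from any library.

```python
def operation_count(n: int) -> int:
    """Cost model taken from the text: 5n + 10 operations for input size n."""
    return 5 * n + 10


def ratio_to_n(n: int) -> float:
    """Total operations divided by the dominant term n."""
    return operation_count(n) / n


if __name__ == "__main__":
    # As n grows, the ratio approaches the constant factor 5,
    # which is why the whole expression is classified as O(n).
    for n in (1, 10, 1_000, 1_000_000):
        print(f"n={n:>9}  ops={operation_count(n):>9}  ops/n={ratio_to_n(n):.5f}")
```

Because the ratio is bounded by a constant for large n, the constant 10 and the factor 5 do not change the classification.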

Why Big O is Indispensable for Drone Innovation

The stakes in drone technology are particularly high. Unlike traditional computing environments, drones operate under severe constraints: limited battery life, finite processing power, restricted memory, and often, the need for real-time decision-making. These constraints elevate the importance of algorithmic efficiency from a desirable trait to an absolute necessity.

- Battery Life: Every wasted CPU cycle translates to expended energy. Efficient algorithms reduce computational load, directly extending flight duration and operational windows.

- Real-time Performance: Autonomous flight, collision avoidance, and FPV racing demand instantaneous responses. Lagging algorithms can lead to crashes or critical failures.

- Onboard Processing: Reducing reliance on ground stations requires more intelligence to be processed directly on the drone. This necessitates highly optimized algorithms to fit within the embedded hardware’s capabilities.

- Scalability: As drones collect more data (higher resolution cameras, more sensors) or operate in more complex environments (swarms, dense urban areas), the algorithms must scale without disproportionately increasing resource consumption.

Understanding Big O notation empowers developers to make informed design choices, prioritize optimization efforts, and select the most appropriate data structures and algorithms that can meet the stringent demands of modern drone applications. It’s the language of efficiency, and mastering it is crucial for pushing the boundaries of what drones can achieve.

Big O in Action: Optimizing Drone Intelligence and Autonomy

The theoretical understanding of Big O truly comes alive when applied to the practical challenges of developing intelligent and autonomous drone systems. From navigating complex environments to interpreting vast streams of sensor data, every major component of a drone’s advanced capabilities relies on algorithms whose efficiency is best understood through Big O notation.

Path Planning and Navigation

Autonomous drones must efficiently calculate optimal flight paths, avoid obstacles, and reach their destinations. Algorithms like A* (A-star) or Dijkstra’s are commonly employed for graph traversal and shortest path finding. The complexity of these algorithms often depends on the number of nodes (V) and edges (E) in the graph representing the environment.
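As a rough illustration of shortest-path search on a waypoint graph, the sketch below implements Dijkstra’s algorithm with Python’s standard `heapq` binary heap. With a binary heap and lazy deletion of stale entries the running time is O((V + E) log V). The `waypoints` graph and its edge weights are made-up example data, not taken from any real flight planner.

```python
import heapq


def dijkstra(graph, source):
    """Shortest distances from source to every reachable node.

    graph maps each node to a list of (neighbor, weight) pairs;
    weights must be non-negative.
    """
    dist = {source: 0.0}
    heap = [(0.0, source)]
    while heap:
        d, node = heapq.heappop(heap)
        if d > dist.get(node, float("inf")):
            continue  # stale heap entry: a shorter route was already found
        for neighbor, weight in graph.get(node, []):
            candidate = d + weight
            if candidate < dist.get(neighbor, float("inf")):
                dist[neighbor] = candidate
                heapq.heappush(heap, (candidate, neighbor))
    return dist


# Hypothetical waypoint graph; weights could stand for distance or energy cost.
waypoints = {
    "A": [("B", 4.0), ("C", 1.0)],
    "B": [("D", 1.0)],
    "C": [("B", 2.0), ("D", 5.0)],
    "D": [],
}

if __name__ == "__main__":
    print(dijkstra(waypoints, "A"))
```

A* follows the same structure but orders the heap by distance so far plus a heuristic estimate of the remaining distance, which is what lets it skip parts of the graph.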

- A* and Dijkstra’s Search: For a graph with V vertices and E edges, Dijkstra’s algorithm runs in O(E + V log V) when a Fibonacci heap is used for the priority queue. A* adds a heuristic that steers the search toward the goal. With a poor heuristic on a large or implicitly defined search space, its worst-case cost can be exponential, O(b^d), where ‘b’ is the branching factor and ‘d’ is the depth of the optimal path. With a good heuristic and an efficient priority queue it typically expands far fewer nodes, and on an explicit grid map its cost stays polynomial in the map size. For real-time autonomous flight, reducing this complexity is paramount. Sub-optimal but faster algorithms might be chosen if absolute optimality is not critical, balancing performance with safety.

- Obstacle Avoidance: Real-time obstacle avoidance systems process data from lidar, radar, or vision sensors. Algorithms for spatial partitioning (e.g., k-d trees, octrees) can help quickly identify potential collisions. Building these structures might be O(N log N) for N obstacles, and querying them is often O(log N) or O(sqrt(N)), significantly faster than O(N) for a linear scan. The ability to identify threats quickly with minimal computational overhead is directly linked to the Big O complexity of these detection and prediction algorithms.
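The spatial-partitioning idea can be sketched with a small 2D k-d tree. This is a simplified illustration, not a production structure: it re-sorts at every level, so the build is O(N log^2 N) rather than the O(N log N) achievable with median selection, and the obstacle coordinates are invented. Nearest-neighbor queries on a balanced tree take O(log N) on average, compared with O(N) for the linear scan included for comparison.

```python
import math


def build_kdtree(points, depth=0):
    """Build a 2D k-d tree as nested tuples: (point, left_subtree, right_subtree)."""
    if not points:
        return None
    axis = depth % 2  # alternate between x (0) and y (1)
    ordered = sorted(points, key=lambda p: p[axis])
    mid = len(ordered) // 2
    return (
        ordered[mid],
        build_kdtree(ordered[:mid], depth + 1),
        build_kdtree(ordered[mid + 1:], depth + 1),
    )


def nearest(node, target, depth=0, best=None):
    """Return (distance, point) for the stored point closest to target."""
    if node is None:
        return best
    point, left, right = node
    d = math.dist(point, target)
    if best is None or d < best[0]:
        best = (d, point)
    axis = depth % 2
    diff = target[axis] - point[axis]
    near, far = (left, right) if diff < 0 else (right, left)
    best = nearest(near, target, depth + 1, best)
    # Only search the far side if the splitting plane is closer than the best hit.
    if abs(diff) < best[0]:
        best = nearest(far, target, depth + 1, best)
    return best


def nearest_linear(points, target):
    """O(N) baseline: check every obstacle."""
    return min((math.dist(p, target), p) for p in points)


# Hypothetical obstacle positions in metres.
obstacles = [(2.0, 3.0), (5.0, 4.0), (9.0, 6.0), (4.0, 7.0), (8.0, 1.0), (7.0, 2.0)]
tree = build_kdtree(obstacles)

if __name__ == "__main__":
    print(nearest(tree, (9.0, 2.0)))
```

The pruning test in `nearest` is what saves work: whole subtrees are skipped whenever they cannot contain a closer obstacle.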

AI Follow Mode and Object Recognition

AI-powered features like “follow me” mode, target tracking, or object classification are core to many modern drones. These capabilities rely on complex computer vision and machine learning algorithms.

- Object Detection: Algorithms like YOLO (You Only Look Once) or SSD (Single Shot MultiBox Detector) are known for their speed. While the training phase is computationally intensive (its cost grows roughly with the number of training samples times the number of epochs), the inference phase, the actual detection of objects in a live video stream, needs to be extremely fast. The inference time for a single image might be largely constant with respect to the number of objects, but the overall processing throughput per second is limited by the cost of the network’s forward pass, which grows with image resolution. Classification that takes O(1) time on a pre-processed, fixed-size image is ideal, but preparing that image and running it through a neural network can be more complex, making efficient network architectures critical.

- Tracking Algorithms: Once an object is detected, tracking algorithms (e.g., Kalman filters, particle filters) are used to predict its future position. The complexity of these often scales linearly with the number of tracked objects, O(N), but the underlying computations for each object can vary. For real-time tracking, these algorithms must be highly optimized, ensuring that the drone can keep a subject in frame without lag, even if the subject moves erratically.
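The linear scaling in the number of tracked objects can be sketched with one small filter per object. The example below uses a scalar Kalman filter with a random-walk motion model, which is a deliberate simplification; the noise variances are assumed values chosen only for illustration. Real trackers usually carry a multi-dimensional state, and the per-object cost then also grows with the state dimension.

```python
from dataclasses import dataclass


@dataclass
class ScalarKalman:
    """1D Kalman filter with a random-walk motion model (illustrative only)."""
    x: float          # current state estimate
    p: float = 1.0    # variance of the estimate
    q: float = 0.01   # process noise variance (assumed value)
    r: float = 0.25   # measurement noise variance (assumed value)

    def step(self, z: float) -> float:
        # Predict: the state is assumed unchanged, but uncertainty grows.
        self.p += self.q
        # Update: blend prediction and measurement using the Kalman gain.
        gain = self.p / (self.p + self.r)
        self.x += gain * (z - self.x)
        self.p *= 1.0 - gain
        return self.x


def update_tracks(tracks, measurements):
    """Run one constant-time filter step per measured object: O(N) overall."""
    return {track_id: tracks[track_id].step(z) for track_id, z in measurements.items()}


if __name__ == "__main__":
    tracks = {1: ScalarKalman(x=0.0), 2: ScalarKalman(x=5.0)}
    for _ in range(20):
        estimates = update_tracks(tracks, {1: 10.0, 2: 5.0})
    print(estimates)
```

Each call to `step` does a fixed amount of arithmetic, so the total cost per frame is proportional to the number of tracks.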

From Sensor Data to Insight: Scalability in Remote Sensing

Drones are becoming indispensable tools for remote sensing, collecting vast amounts of data for applications ranging from precision agriculture to environmental monitoring and infrastructure inspection. The ability to efficiently process and extract meaningful insights from this data is where Big O notation truly highlights the importance of scalable algorithms.

Photogrammetry and 3D Modeling

Generating accurate 3D models and orthomosaics from drone imagery involves several computationally intensive steps: feature detection, matching, bundle adjustment, and dense reconstruction.

- Feature Matching: Identifying common points across multiple images is often a dominant factor. Brute-force matching between N images, each with F features, can approach O(N^2 * F^2) in the worst case, quickly becoming intractable. Optimized algorithms using spatial hashing or approximate nearest neighbor searches can reduce this significantly, perhaps to O(N log N * F) or better, by limiting comparisons to relevant image pairs and features.

- Bundle Adjustment: This optimization problem simultaneously refines camera positions, orientations, and 3D point coordinates. It often involves solving large sparse systems of equations. For M images and P 3D points, the number of observations alone can grow as O(M*P), and solving the resulting system densely is far more expensive, roughly cubic in the number of unknowns. Sparse solvers and iterative methods keep this manageable, which is crucial when processing thousands of drone images.

- Dense Reconstruction: Creating dense point clouds or meshes can involve algorithms with complexities that scale quadratically or cubically with the number of input pixels or voxels. Employing techniques that leverage GPU acceleration and parallel processing is common, but the underlying algorithmic efficiency (Big O) still dictates how these tasks scale with increasing image resolution and flight area.
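To give a feel for the feature-matching numbers, the sketch below simply counts descriptor comparisons. It assumes brute-force comparison of every feature pair within each image pair, and contrasts matching all image pairs with matching each image against at most k candidate neighbours (for example, images taken nearby, which is an assumption about how candidates would be chosen). The function names and the sample figures are hypothetical.

```python
def brute_force_comparisons(num_images: int, features_per_image: int) -> int:
    """All unordered image pairs, every feature against every feature."""
    image_pairs = num_images * (num_images - 1) // 2
    return image_pairs * features_per_image ** 2


def neighbor_limited_comparisons(
    num_images: int, features_per_image: int, neighbors_per_image: int
) -> int:
    """Upper bound when each image is matched against at most k neighbours."""
    all_pairs = num_images * (num_images - 1) // 2
    image_pairs = min(num_images * neighbors_per_image, all_pairs)
    return image_pairs * features_per_image ** 2


if __name__ == "__main__":
    n, f, k = 1000, 100, 10  # made-up survey size
    print("all pairs:      ", brute_force_comparisons(n, f))
    print("k neighbours:   ", neighbor_limited_comparisons(n, f, k))
```

Limiting the image pairs turns the N^2 factor into N * k; approximate nearest neighbor search would additionally reduce the F^2 factor inside each pair.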

Data Analysis and Classification

Remote sensing often involves analyzing multispectral or hyperspectral data to classify land cover, detect anomalies, or monitor crop health.

- Image Segmentation/Classification: Machine learning algorithms (e.g., Random Forests, Support Vector Machines, Neural Networks) are used. The training phase can be very resource-intensive. For inference on large imagery, the algorithm must process each pixel or region efficiently. Pixel-wise classification can be O(N) for N pixels if each classification is constant time, but feature extraction for each pixel can add to the complexity. Advanced deep learning models for semantic segmentation can have complex Big O profiles that depend on network architecture and input size.

- Time-Series Analysis: Monitoring changes over time involves processing sequences of drone data. Algorithms for change detection or trend analysis must handle potentially very large historical datasets. An algorithm that takes O(N^2) to compare N time points would be impractical for long-term monitoring, favoring O(N log N) or O(N) approaches.
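The time-series point can be illustrated with a toy change-detection task: finding the largest rise between any earlier and any later observation. The quadratic version compares every pair of time points, while the linear version keeps a running minimum and gives the same answer in a single pass. The sample values are invented vegetation-index readings, used only as example data.

```python
def largest_rise_quadratic(values):
    """Compare every earlier point with every later point: O(N^2)."""
    best = 0.0
    for i in range(len(values)):
        for j in range(i + 1, len(values)):
            best = max(best, values[j] - values[i])
    return best


def largest_rise_linear(values):
    """Track the lowest value seen so far: O(N) time, O(1) extra space."""
    best = 0.0
    lowest = float("inf")
    for v in values:
        lowest = min(lowest, v)
        best = max(best, v - lowest)
    return best


# Hypothetical per-flight index readings for one field plot.
readings = [0.42, 0.40, 0.45, 0.38, 0.51, 0.49]

if __name__ == "__main__":
    print(largest_rise_quadratic(readings), largest_rise_linear(readings))
```

For a few dozen flights the difference is invisible, but for per-pixel histories over thousands of acquisitions the quadratic version becomes the bottleneck.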

The sheer volume of data generated by modern drone sensors demands algorithms that scale efficiently. Neglecting Big O considerations in remote sensing processing pipelines can lead to bottlenecks, prolonged processing times, and increased cloud computing costs, ultimately hindering the utility and widespread adoption of drone-based solutions.

Navigating Computational Constraints: Why Big O Matters for On-Board Processing

The dream of fully autonomous, intelligent drones hinges on their ability to perform complex computations directly on board, minimizing reliance on ground control or cloud infrastructure. However, embedded systems on drones come with significant computational constraints—limited CPU power, restricted memory, and finite battery life. This environment makes Big O notation not just a theoretical concept, but a guiding principle for engineering practical, deployable drone solutions.

Every algorithm executed on a drone consumes processing cycles and memory. An algorithm with a poor Big O complexity, even if it performs adequately for small inputs, will quickly overwhelm the drone’s limited resources as the operational complexity or data volume increases.

Battery Life and Energy Efficiency

The primary constraint for drone endurance is battery life. Every computation, every memory access, and every data transfer consumes energy. An algorithm that scales quadratically (O(N^2)) or exponentially (O(2^N)) with input size N will drain the battery significantly faster than a linear (O(N)) or logarithmic (O(log N)) algorithm for the same task.
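A quick way to see why the growth class matters is to tabulate abstract operation counts for a few input sizes. The sketch below does only that; treating operation count as a proxy for energy use is a simplifying assumption, since real consumption also depends on memory traffic and hardware.

```python
import math


def growth_table(sizes):
    """Operation counts for common complexity classes at each input size n."""
    rows = []
    for n in sizes:
        rows.append(
            {
                "n": n,
                "log n": math.log2(n),
                "n log n": n * math.log2(n),
                "n^2": n ** 2,
                "2^n": 2 ** n,
            }
        )
    return rows


if __name__ == "__main__":
    for row in growth_table([8, 16, 32, 64]):
        print(row)
```

Going from n = 16 to n = 64 multiplies the quadratic column by 16, while the exponential column grows from about 65 thousand to roughly 1.8 * 10^19.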

- Real-time Processing: Tasks like sensor fusion (combining data from GPS, IMU, vision sensors), attitude estimation, and motor control must execute within strict time limits. If a control loop takes too long due to an inefficient algorithm, the drone’s stability and responsiveness are compromised. A control algorithm with O(N^2) complexity, where N is the number of sensors or data points, might introduce unacceptable latency, whereas an O(N log N) or O(N) alternative would ensure timely execution.

- Edge AI: Running AI models directly on the drone (edge computing) is critical for applications requiring immediate decision-making, such as collision avoidance, dynamic object tracking, or search and rescue. The Big O complexity of the inference phase of these neural networks dictates how quickly they can process new sensor data. Developers often choose smaller, more efficient neural network architectures (e.g., MobileNet variants) designed with a lower Big O complexity in mind, even if it means a slight trade-off in accuracy compared to larger, more complex models that would be too slow or power-hungry for onboard execution.
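One reason MobileNet-style networks are cheaper is that they replace standard convolutions with depthwise separable ones. The sketch below counts multiply-accumulate operations for a single layer under simplifying assumptions (stride 1, output the same spatial size as the input, no bias); the layer dimensions in the example are illustrative, not taken from a specific model.

```python
def standard_conv_macs(height, width, c_in, c_out, kernel):
    """Multiply-accumulates for a standard kernel x kernel convolution."""
    return height * width * c_in * c_out * kernel * kernel


def depthwise_separable_macs(height, width, c_in, c_out, kernel):
    """Depthwise kernel x kernel convolution followed by a 1x1 pointwise convolution."""
    depthwise = height * width * c_in * kernel * kernel
    pointwise = height * width * c_in * c_out
    return depthwise + pointwise


if __name__ == "__main__":
    h = w = 56
    c_in = c_out = 128
    k = 3
    std = standard_conv_macs(h, w, c_in, c_out, k)
    sep = depthwise_separable_macs(h, w, c_in, c_out, k)
    print(f"standard: {std}, separable: {sep}, ratio: {sep / std:.3f}")
```

Both counts scale with height * width, so doubling the image resolution in each dimension quadruples the work; the separable form reduces the per-pixel cost by a factor of 1/c_out + 1/kernel^2.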

Memory Footprint

Beyond execution time, Big O also describes space complexity—how much memory an algorithm requires as input size grows. Drones have limited RAM and persistent storage.

- Data Structures: Choosing the right data structure can drastically reduce memory overhead. For instance, storing a map of an environment using a sparse data structure might offer O(K) space complexity for K occupied cells, compared to O(M*N) for a dense grid of M rows and N columns, where most cells are empty.

- Algorithm Design: Some algorithms require storing intermediate results that grow with input size. An algorithm with O(N) space complexity is generally preferred over O(N^2) for large N, especially when dealing with high-resolution images or extensive environmental maps. Minimizing memory usage is critical to prevent out-of-memory errors and ensure smooth operation on resource-constrained platforms.
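The sparse-versus-dense trade-off for maps can be sketched as follows. The `SparseOccupancyGrid` class is a hypothetical minimal structure that stores only occupied cells in a set, so its storage grows with the number of occupied cells K rather than with the full M * N grid; the map size and obstacle positions are made up.

```python
class SparseOccupancyGrid:
    """Stores only occupied cells: O(K) space for K occupied cells."""

    def __init__(self):
        self._occupied = set()

    def mark(self, row: int, col: int) -> None:
        self._occupied.add((row, col))

    def is_occupied(self, row: int, col: int) -> bool:
        # Average O(1) lookup in a hash set.
        return (row, col) in self._occupied

    def stored_cells(self) -> int:
        return len(self._occupied)


def dense_cell_count(rows: int, cols: int) -> int:
    """A dense grid stores every cell, occupied or not: O(M * N) space."""
    return rows * cols


if __name__ == "__main__":
    grid = SparseOccupancyGrid()
    for cell in [(10, 12), (10, 13), (250, 400)]:
        grid.mark(*cell)
    print("sparse cells stored:", grid.stored_cells())
    print("dense cells stored: ", dense_cell_count(1000, 1000))
```

The sparse form pays a per-entry overhead, so it wins only when the map is mostly empty, which is the situation the bullet above describes.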

Ultimately, Big O notation is a vital tool for drone engineers to make informed decisions about algorithm selection and optimization. It enables the creation of systems that are not only functional but also efficient, ensuring drones can operate reliably and effectively within their physical and computational limitations, driving further innovation in the autonomous aerial domain.