The realm of drone technology, encompassing everything from sophisticated mapping platforms to agile racing machines, relies heavily on precise and reliable data. Whether it’s the accuracy of GPS coordinates, the effectiveness of obstacle avoidance sensors, or the stability of a gimbal camera, understanding how to measure and ensure the quality of these systems is paramount. This is where the concept of “test validity” becomes critically important. In essence, test validity refers to the degree to which a test accurately measures what it is intended to measure. For drone enthusiasts, developers, and professionals alike, grasping this concept is fundamental to developing, deploying, and ultimately trusting the technology that drives our aerial endeavors.

This article will delve into the multifaceted nature of test validity within the context of drone technology. We will explore its core principles, examine different types of validity as they apply to drone systems, and discuss the implications of valid testing for various aspects of the drone ecosystem, from hardware development to software algorithms and operational deployment.

The Foundational Pillars of Test Validity in Drone Technology

At its heart, test validity is about accuracy and relevance. When we design a test for a drone component or system, we want to be sure that the results we obtain truly reflect the performance or capability we are trying to assess. Misinterpreting test results due to invalidity can lead to flawed product development, unreliable operational data, and potentially unsafe flight outcomes. Therefore, establishing a clear understanding of what we are testing and how we are testing it is the crucial first step.

Defining the Measurement Objective

Before any testing begins, a clear and unambiguous definition of the measurement objective is essential. For a drone, this could be anything from determining the maximum flight endurance under specific load conditions to evaluating the accuracy of a visual simultaneous localization and mapping (vSLAM) algorithm in a complex indoor environment. Without a well-defined objective, it becomes impossible to design a test that can accurately measure it.

For instance, if the objective is to test the “stability” of a gimbal camera, we need to be precise about what “stability” means in this context. Is it the ability to counteract vibrations from the drone’s motors? Is it the smooth compensation for external wind disturbances? Or is it the precision of the camera’s pointing accuracy? Each of these requires different testing methodologies and metrics to assess validity.

Aligning Tests with Real-World Performance

A key aspect of validity is the extent to which test results correlate with real-world performance. A laboratory test run under tightly controlled conditions might not accurately predict how a drone’s navigation system will perform in the unpredictable conditions of actual flight. Therefore, valid testing often involves a combination of controlled experiments and field trials.

Consider the testing of obstacle avoidance systems. A test might involve a series of static obstacles in a clear area. While this can assess basic detection and response, it might not capture the nuances of avoiding dynamic objects, navigating cluttered environments, or reacting to unexpected movements. A more valid test would incorporate a wider range of scenarios, including moving obstacles, varied lighting conditions, and complex geometries, to better reflect real-world flight challenges.

The Role of Metrics and Evaluation Criteria

The metrics and evaluation criteria used in a test are direct determinants of its validity. If the chosen metrics are inappropriate or insufficient, the test will fail to capture the intended phenomenon. For example, simply measuring the time it takes for a drone to reach a certain altitude does not provide a comprehensive understanding of its climb performance; acceleration, rate of climb under load, and responsiveness to control inputs are also crucial.

Furthermore, the interpretation of these metrics must be grounded in a clear understanding of what constitutes success or failure. This often involves setting objective thresholds based on industry standards, regulatory requirements, or performance benchmarks. Without clearly defined and relevant evaluation criteria, even accurate measurements can lead to misleading conclusions about the system under test.
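As a rough illustration of pairing several metrics with explicit pass/fail criteria, the sketch below evaluates a climb test against three thresholds. The metric names, the `ClimbResult` type, and every threshold value are assumptions made up for this example; real limits would come from a specification, standard, or benchmark.

```python
from dataclasses import dataclass


@dataclass
class ClimbResult:
    time_to_altitude_s: float      # seconds to reach the target altitude
    climb_rate_loaded_mps: float   # sustained climb rate with payload, in m/s
    response_latency_ms: float     # delay between control input and response


# Hypothetical thresholds: ("max", x) means the value must not exceed x,
# ("min", x) means the value must be at least x.
THRESHOLDS = {
    "time_to_altitude_s": ("max", 12.0),
    "climb_rate_loaded_mps": ("min", 3.0),
    "response_latency_ms": ("max", 150.0),
}


def evaluate(result: ClimbResult) -> dict[str, bool]:
    """Return a pass/fail verdict for each metric."""
    verdicts = {}
    for name, (kind, limit) in THRESHOLDS.items():
        value = getattr(result, name)
        verdicts[name] = value <= limit if kind == "max" else value >= limit
    return verdicts


# Illustrative measurement, not real flight data.
result = ClimbResult(
    time_to_altitude_s=10.5,
    climb_rate_loaded_mps=2.4,
    response_latency_ms=120.0,
)
verdicts = evaluate(result)
overall_pass = all(verdicts.values())
```

In this made-up case the drone reaches altitude quickly enough but misses the loaded climb-rate limit, so a test that looked only at time to altitude would have passed it.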

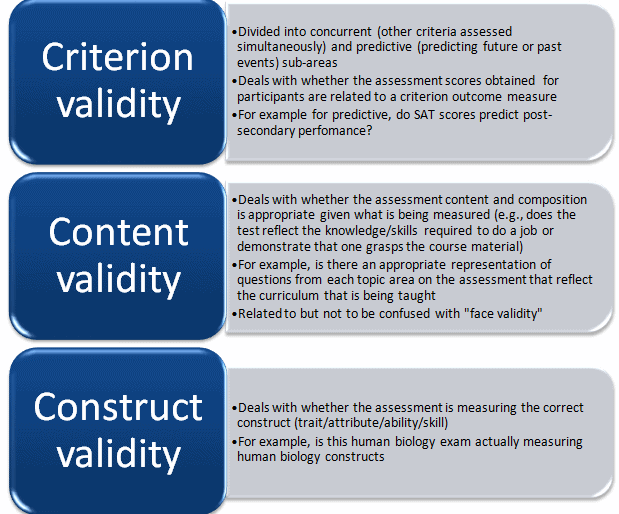

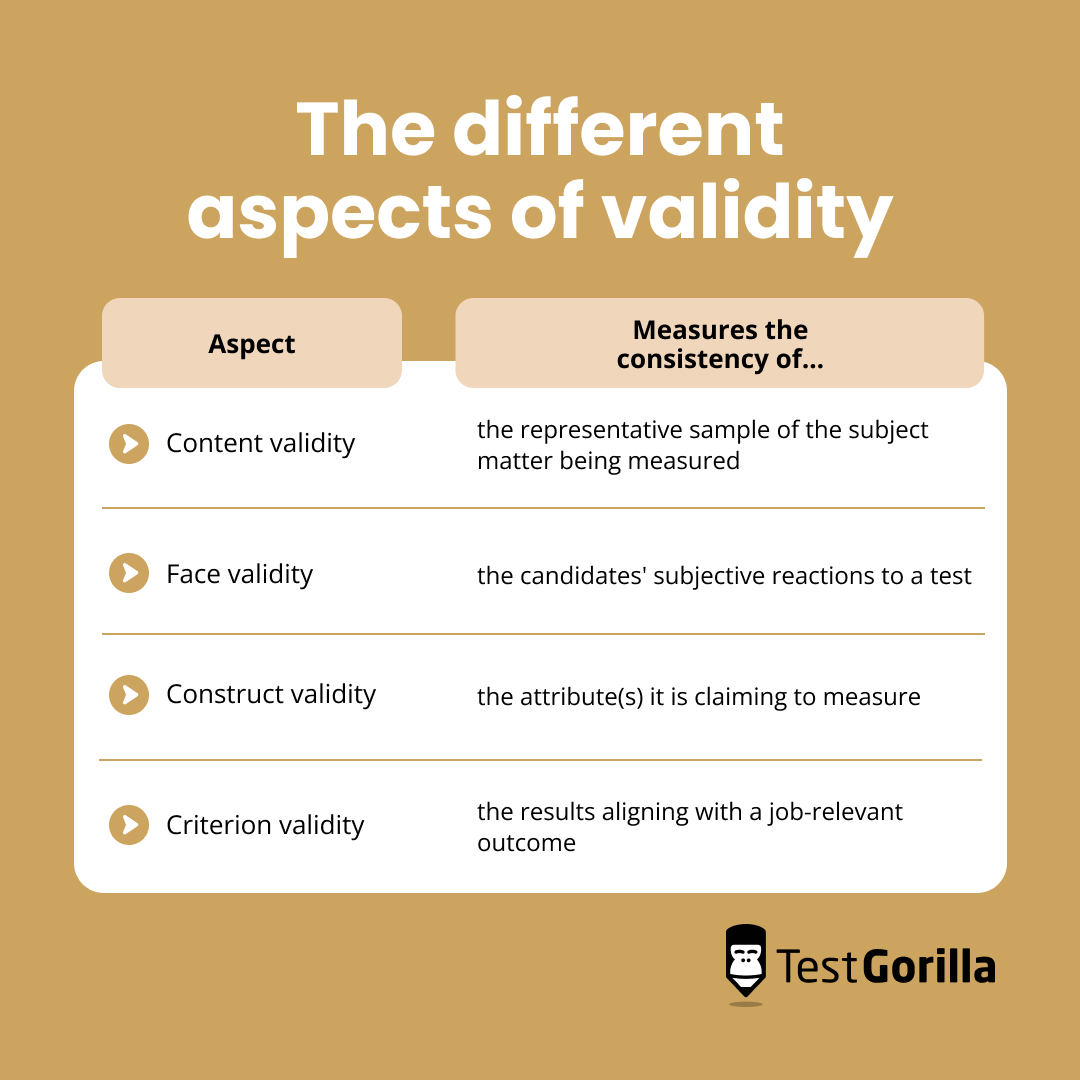

Types of Validity in Drone System Testing

Just as there are different aspects to drone technology that require testing, there are different types of validity that help us ensure our tests are truly measuring what they aim to. Understanding these distinctions allows for a more rigorous and insightful approach to evaluating drone systems.

Construct Validity: Measuring Abstract Concepts

Construct validity is concerned with how well a test measures an underlying theoretical concept or “construct.” In drone technology, many desirable qualities are abstract constructs. For example, “autonomy” is a construct. A test designed to assess a drone’s autonomous flight capabilities must demonstrate that it is indeed measuring the underlying concept of autonomy, rather than just a collection of specific programmed behaviors.

To establish construct validity for autonomous flight, a test might involve scenarios where the drone must adapt to unforeseen circumstances, such as unexpected changes in the environment or the failure of a sensor. If the drone handles these challenges in a way that reflects genuine decision-making rather than a scripted response, the test provides stronger evidence that it is measuring autonomy itself.

Content Validity: Representing the Domain

Content validity refers to the extent to which a test adequately samples the domain it is intended to cover. For a drone’s mapping camera system, content validity would mean ensuring that the test covers all critical aspects of its performance, from image resolution and color accuracy to distortion and low-light performance. A test that only focuses on resolution, for instance, would have poor content validity for the overall imaging capability.

When testing the reliability of a navigation system, content validity would involve assessing its performance across various GPS signal strengths, in urban canyons, under adverse weather conditions, and during periods of potential interference. A test that only considers optimal GPS reception would lack content validity for real-world navigational challenges.
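One simple way to make content validity checkable is to list the conditions the test campaign is supposed to cover and compare that list with what was actually flown. The sketch below does this for the navigation example; the condition labels and test names are hypothetical.

```python
# Conditions the navigation test campaign is meant to sample (assumed labels).
REQUIRED_CONDITIONS = {
    "strong_gps_signal",
    "weak_gps_signal",
    "urban_canyon",
    "adverse_weather",
    "rf_interference",
}


def coverage_report(executed_tests: dict[str, set[str]]) -> tuple[float, set[str]]:
    """Return the fraction of required conditions covered and the missing ones."""
    covered = set().union(*executed_tests.values()) & REQUIRED_CONDITIONS
    missing = REQUIRED_CONDITIONS - covered
    return len(covered) / len(REQUIRED_CONDITIONS), missing


# Hypothetical record of which conditions each executed test exercised.
executed = {
    "open_field_flight": {"strong_gps_signal"},
    "downtown_flight": {"urban_canyon", "weak_gps_signal"},
}
ratio, missing = coverage_report(executed)
```

Here the campaign covers three of the five conditions, and the report names adverse weather and interference as the gaps. A coverage figure like this says nothing about how well each condition was tested, only whether it was sampled at all.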

Criterion-Related Validity: Predicting Performance

Criterion-related validity assesses how well a test predicts or correlates with an external criterion, which is often a measure of performance in a real-world situation. There are two main types:

Predictive Validity

Predictive validity is the degree to which a test predicts future performance. For instance, a new type of propeller designed for increased efficiency might undergo a series of bench tests. If these bench tests accurately predict the actual increase in flight time observed during real-world flight tests, then the bench tests possess good predictive validity.

In software development for autonomous drones, predictive validity is crucial. If simulations of a new flight control algorithm accurately predict its performance and stability in actual flight tests, then the simulation environment and its testing procedures have strong predictive validity.
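Predictive validity can be quantified by comparing what the earlier test predicted with what was later observed. The sketch below computes a Pearson correlation and a mean absolute error between bench-predicted and field-measured flight times. The numbers are invented for illustration, and these two statistics are only one reasonable choice.

```python
import math


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length samples."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)


def mean_abs_error(predicted: list[float], observed: list[float]) -> float:
    """Average absolute gap between predictions and observations."""
    return sum(abs(p - o) for p, o in zip(predicted, observed)) / len(predicted)


# Illustrative flight times in minutes for five propeller variants (made up).
bench_predicted_min = [22.0, 24.5, 26.0, 27.5, 30.0]
field_measured_min = [21.0, 23.8, 25.1, 26.9, 28.6]

r = pearson_r(bench_predicted_min, field_measured_min)
mae = mean_abs_error(bench_predicted_min, field_measured_min)
```

The two numbers answer different questions. A high correlation shows that the bench test ranks the variants correctly, while the error term shows whether it also gets the absolute flight times right. In this example the bench predictions track the field results closely but are consistently optimistic.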

Concurrent Validity

Concurrent validity is the degree to which a test correlates with another established measure of the same construct taken at the same time. Imagine a new, faster method for calibrating a drone’s IMU (Inertial Measurement Unit). If the results from this new method closely align with the results from a well-established, albeit slower, calibration method performed concurrently, then the new method has good concurrent validity.

For sensor fusion algorithms, concurrent validity could be established by comparing the position and orientation estimated by the new algorithm with readings taken at the same time from a highly accurate, professional-grade inertial navigation system (INS).
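For concurrent validity, the comparison is between two measurements of the same quantity taken together. The sketch below summarizes agreement between a new method and a reference using the mean difference (bias), the root-mean-square error, and approximate 95% limits of agreement. The gyro bias values are invented, and the choice of statistics is an assumption rather than a prescribed procedure.

```python
import math
from statistics import mean, stdev


def agreement(new: list[float], reference: list[float]):
    """Return (bias, rmse, (lower, upper) limits of agreement)."""
    diffs = [n - r for n, r in zip(new, reference)]
    bias = mean(diffs)                          # systematic offset
    rmse = math.sqrt(mean(d * d for d in diffs))
    spread = stdev(diffs)                       # sample standard deviation
    limits = (bias - 1.96 * spread, bias + 1.96 * spread)
    return bias, rmse, limits


# Gyro bias estimates in deg/s for the same four units (illustrative only).
new_method = [0.12, -0.05, 0.30, 0.08]
reference_method = [0.10, -0.04, 0.27, 0.09]

bias, rmse, (lower, upper) = agreement(new_method, reference_method)
```

Whether the resulting limits are acceptable depends on how much calibration error the flight controller can tolerate, which is a decision to make before running the comparison.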

Ensuring and Enhancing Test Validity in Drone Development

Achieving and maintaining test validity is not a one-time event but an ongoing process. It requires a systematic approach throughout the development lifecycle of any drone system.

Rigorous Test Design and Planning

The foundation of valid testing lies in meticulous design and planning. This involves:

- Defining clear objectives and hypotheses: What specific aspect of the drone system are we trying to measure, and what do we expect to find?

- Selecting appropriate methodologies: Choosing testing techniques that are best suited to measure the intended construct or criterion. This might involve simulations, laboratory bench tests, controlled field trials, or a combination thereof.

- Developing standardized protocols: Establishing precise step-by-step procedures for conducting tests ensures consistency and replicability, which are essential for valid comparisons.

- Identifying potential confounding variables: Recognizing factors that could influence the test results and taking steps to control or account for them is critical. For example, ambient temperature can affect battery performance, so this needs to be monitored and standardized if battery life is being tested.
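To make the temperature example concrete, the sketch below separates battery endurance runs that fall inside a standardized ambient temperature band from those that do not. The 20 to 25 °C band, the field names, and the run data are all assumptions for illustration.

```python
def split_by_temperature(runs: list[dict], low_c: float = 20.0, high_c: float = 25.0):
    """Split runs into those inside and outside the allowed temperature band."""
    valid, excluded = [], []
    for run in runs:
        in_band = low_c <= run["ambient_c"] <= high_c
        (valid if in_band else excluded).append(run)
    return valid, excluded


# Hypothetical endurance runs; run 2 was flown on a cold day.
runs = [
    {"run_id": 1, "ambient_c": 22.0, "flight_min": 27.4},
    {"run_id": 2, "ambient_c": 5.0, "flight_min": 21.9},
    {"run_id": 3, "ambient_c": 24.0, "flight_min": 26.8},
]
valid, excluded = split_by_temperature(runs)
```

Excluded runs are still useful data. They simply belong in a separate analysis of cold-weather performance and should not be averaged into the baseline endurance figure.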

Validation Through Diverse Environments and Conditions

A crucial aspect of ensuring validity is exposing the drone system to a wide range of environments and conditions that mirror its intended operational scope.

- Environmental variability: Testing in different terrains (urban, rural, mountainous), weather conditions (wind, rain, temperature extremes), and lighting scenarios (daylight, dusk, night) is vital for assessing robustness and reliability.

- Operational scenarios: Simulating various flight missions, including complex maneuvers, long-duration flights, and emergency situations, helps to validate performance under diverse operational demands.

- Load conditions: For systems like propulsion or battery management, testing under varying payloads and flight demands is essential to understand performance limitations and efficiency across the operational spectrum.
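The three dimensions above can be combined into an explicit test matrix so that no combination is skipped by accident. The sketch below enumerates every combination with `itertools.product`; the specific levels for each factor are assumed for the example.

```python
from itertools import product

# Assumed levels for each factor; a real campaign would derive these
# from the drone's intended operational scope.
TERRAINS = ["urban", "rural", "mountainous"]
WEATHER = ["calm", "windy", "rain"]
LIGHTING = ["daylight", "dusk", "night"]
PAYLOAD_KG = [0.0, 0.5, 1.0]


def build_test_matrix() -> list[dict]:
    """Return one test case per combination of factor levels."""
    return [
        {"terrain": t, "weather": w, "lighting": l, "payload_kg": p}
        for t, w, l, p in product(TERRAINS, WEATHER, LIGHTING, PAYLOAD_KG)
    ]


matrix = build_test_matrix()
```

A full factorial matrix grows quickly (81 cases here), so in practice a team might fly only a prioritized subset while keeping the full list as a record of what remains untested.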

Ongoing Calibration and Verification

For systems that rely on precise measurements and calibrations, such as navigation systems or imaging sensors, ongoing calibration and verification are fundamental to maintaining test validity.

- Regular recalibration: Sensors and critical components should be periodically recalibrated to account for drift, environmental changes, or wear and tear.

- Cross-validation with known standards: Comparing the output of tested systems against established, highly accurate reference systems or known benchmarks (e.g., ground control points for photogrammetry) provides a continuous check on validity.

- Software updates and integrity: For systems driven by software algorithms, ensuring the integrity of code and validating that updates do not negatively impact performance is an ongoing task. Version control and rigorous regression testing are key.
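As an example of cross-validation against a known standard, the sketch below computes the horizontal root-mean-square error between photogrammetry-derived coordinates and surveyed ground control points, then compares it with a tolerance. The coordinates and the 0.10 m tolerance are invented for illustration. Run after each software update, a check like this can also serve as a simple regression test.

```python
import math

Point = tuple[float, float]  # (easting, northing) in metres


def horizontal_rmse(estimated: list[Point], surveyed: list[Point]) -> float:
    """Root-mean-square horizontal distance between matched point pairs."""
    squared = [
        (ex - sx) ** 2 + (ey - sy) ** 2
        for (ex, ey), (sx, sy) in zip(estimated, surveyed)
    ]
    return math.sqrt(sum(squared) / len(squared))


# Surveyed ground control points and the positions recovered from the
# drone's imagery (illustrative values only).
surveyed_gcps = [(0.0, 0.0), (100.0, 0.0), (100.0, 100.0)]
estimated_gcps = [(0.03, 0.04), (100.0, -0.05), (99.95, 100.0)]

TOLERANCE_M = 0.10  # hypothetical acceptance limit
rmse_m = horizontal_rmse(estimated_gcps, surveyed_gcps)
within_tolerance = rmse_m <= TOLERANCE_M
```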

The Impact of Test Validity on the Drone Ecosystem

The pursuit of test validity has far-reaching implications across the entire drone industry, influencing everything from product design and consumer trust to regulatory frameworks and the advancement of new capabilities.

Driving Innovation and Improvement

When tests are valid, they provide accurate feedback on what works well and where improvements are needed. This drives genuine innovation. Developers can confidently iterate on designs, knowing that their efforts are directed towards addressing real performance gaps. For example, if a test validly reveals that a particular flight control algorithm leads to excessive oscillations in high winds, engineers can focus on refining that specific aspect, leading to a more stable and reliable drone.

Building Consumer and Commercial Trust

For users, whether hobbyists or commercial operators, trust in drone technology is paramount. Test validity underpins this trust. When a drone is advertised with specific performance metrics (e.g., flight time, camera resolution, payload capacity), consumers expect these to be accurate. Valid testing ensures that these claims are substantiated, fostering confidence and encouraging wider adoption of drone technology for various applications.

Commercial entities relying on drones for critical tasks such as infrastructure inspection, agricultural monitoring, or emergency response need a high degree of confidence in their performance. Invalid testing could lead to missed defects, inaccurate data, or even mission failure, with potentially severe consequences.

Informing Regulatory Standards and Safety

Regulatory bodies responsible for the safe operation of drones rely heavily on valid testing to establish airworthiness standards and operational guidelines. Understanding the true capabilities and limitations of drone systems through valid testing is essential for developing effective regulations that balance innovation with safety. For instance, valid data on the reliability of obstacle avoidance systems is crucial for defining operational boundaries and mandated safety features.

Advancing Complex Drone Capabilities

As drone technology evolves to perform increasingly sophisticated tasks, the importance of test validity only grows. For example, the development of advanced AI-driven autonomous flight capabilities, such as complex path planning in dynamic environments or precise robotic manipulation by drones, requires highly rigorous and valid testing methodologies to ensure safety and reliability. Without valid tests, it would be impossible to gain the confidence needed to deploy such advanced systems in real-world applications.

In conclusion, test validity is not merely an academic concept; it is a practical cornerstone for the development, deployment, and continued advancement of drone technology. By understanding its principles, types, and the importance of rigorous application, we can ensure that the drones that navigate our skies are not only capable but also dependable and trustworthy.