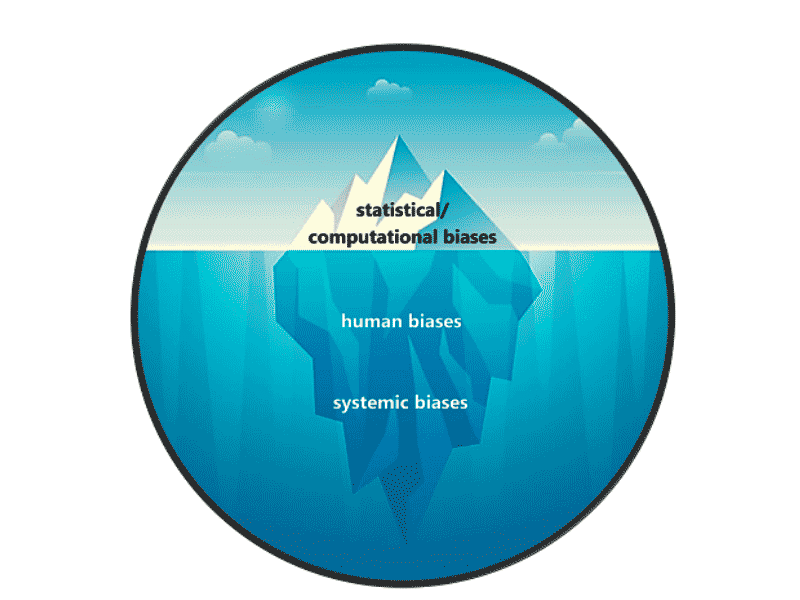

Systemic bias, at its core, refers to inherent tendencies, perspectives, or prejudices deeply embedded within the structures, policies, and practices of a system. Unlike individual bias, which stems from personal beliefs, systemic bias operates at an institutional level, influencing outcomes in a pervasive and often unintentional manner. In the rapidly evolving world of technology and innovation, particularly within areas like AI, autonomous systems, mapping, and remote sensing, understanding and addressing systemic bias is not merely an ethical imperative but a crucial factor in ensuring the fairness, reliability, and societal benefit of advanced technological deployments. When technology replicates or amplifies existing societal inequalities, it can lead to skewed results, perpetuate discrimination, and erode trust in intelligent systems.

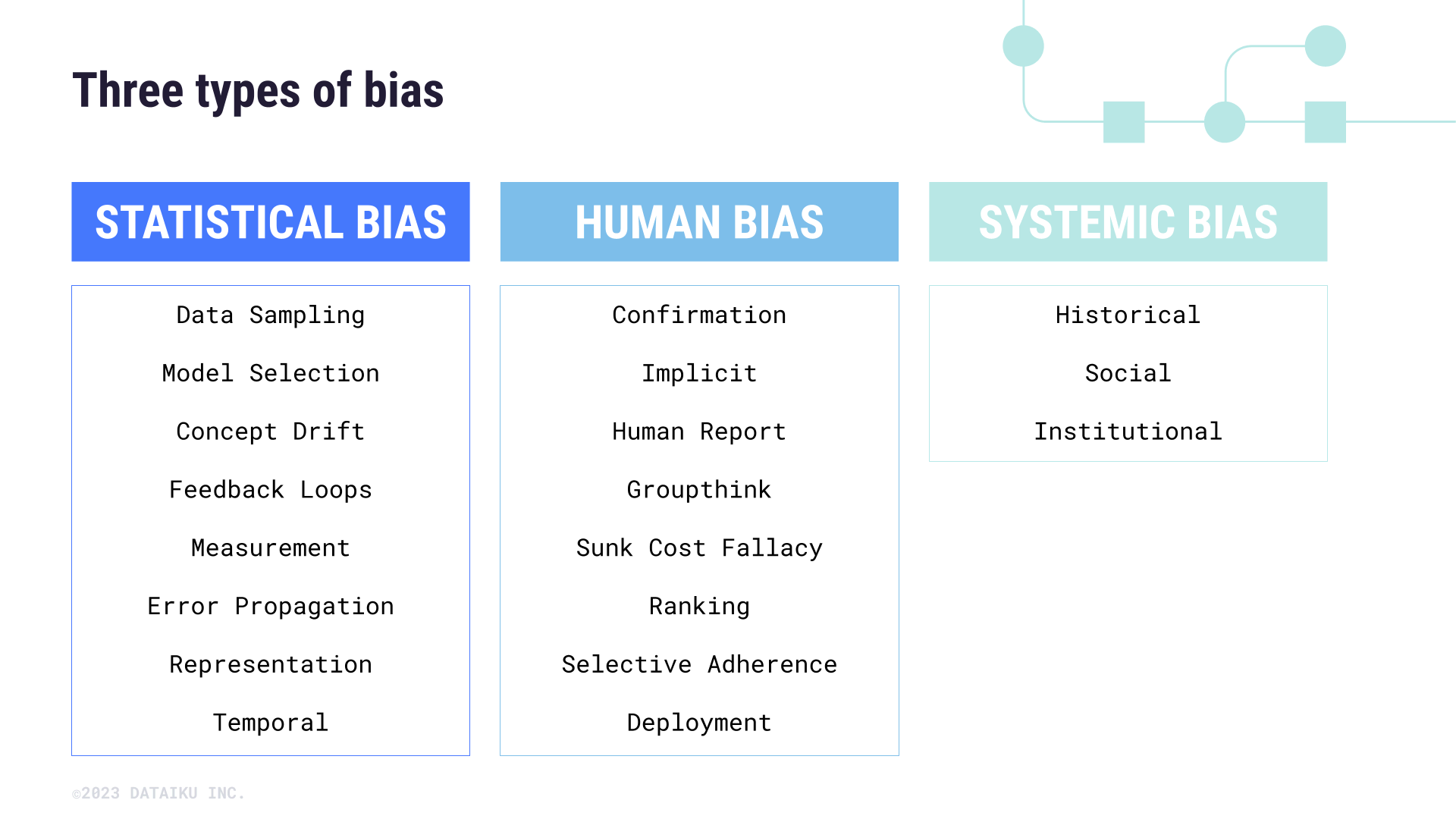

Defining Systemic Bias in a Technological Context

In the realm of tech and innovation, systemic bias often manifests when the underlying data, algorithms, or design choices reflect historical or societal prejudices. This bias is not necessarily the product of conscious malicious intent; more often it is an emergent property of complex systems built by humans who are themselves products of their environments. The data used to train AI models, for instance, often mirrors existing societal structures, including their inherent biases. When these biased datasets are fed into powerful algorithms, the systems learn and subsequently perpetuate these patterns, often at an amplified scale. Because a deployed system's outputs can shape the data that later systems are trained on, this can create a feedback loop that reinforces the initial imbalance.

Implicit vs. Explicit Bias

It’s crucial to distinguish between implicit and explicit bias in technology. Explicit bias would involve a system being intentionally programmed to favor or disfavor specific groups, an unethical practice that is generally avoided by design. More commonly, the issue is implicit bias, where the system’s design, data, or algorithms unintentionally lead to disparate outcomes. For example, an autonomous vehicle’s pedestrian detection system might perform less accurately on individuals with darker skin tones if its training data predominantly featured lighter-skinned individuals. The engineers did not explicitly code for this disparity, but the underlying data implicitly led to it.
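
One way to surface this kind of implicit disparity is to report a detector's recall separately for each group instead of as a single aggregate figure. The sketch below is a minimal illustration under stated assumptions: the group labels, the record format, and the counts are all hypothetical and are not taken from any real evaluation.

```python
from collections import defaultdict

def recall_by_group(records):
    """Return detection recall per group.

    records: iterable of (group, detected) pairs, one per ground-truth
    pedestrian, where detected is True if the detector found that person.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for group, detected in records:
        totals[group] += 1
        hits[group] += int(detected)
    return {g: hits[g] / totals[g] for g in totals}

# Hypothetical evaluation results, invented for illustration only.
records = (
    [("lighter", True)] * 95 + [("lighter", False)] * 5
    + [("darker", True)] * 80 + [("darker", False)] * 20
)

recall = recall_by_group(records)
# Gap between the best-served and worst-served group.
gap = max(recall.values()) - min(recall.values())
print(recall, round(gap, 2))
```

An aggregate recall over these invented records would hide the gap between the two groups, which is why the breakdown is computed per group.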

The Role of Data

Data is the lifeblood of modern tech, from AI to mapping. Consequently, data quality, quantity, and representativeness are paramount. Systemic bias frequently originates in the data collection phase. If data is collected from a limited demographic, geographical area, or under specific conditions, the resulting model or system will inherit these limitations. A remote sensing model trained exclusively on images of urban environments, for example, might perform poorly in rural or forested areas, demonstrating a geographical data bias. This bias is systemic because it does not come from individual faulty data points but from the consistent way the data was acquired, processed, and ultimately used to build the technological solution.
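
A simple representativeness check compares each category's share of the dataset with the share expected in the deployment setting. The sketch below assumes scene labels are available for every training image; the labels, counts, and reference shares are hypothetical.

```python
from collections import Counter

def representation_gap(samples, reference_shares):
    """Compare each category's share of the dataset with a reference share.

    Returns {category: dataset_share - reference_share}; negative values
    mean the category is under-represented relative to the reference.
    """
    counts = Counter(samples)
    total = len(samples)
    return {
        cat: counts[cat] / total - share
        for cat, share in reference_shares.items()
    }

# Hypothetical scene labels for a remote sensing training set.
samples = ["urban"] * 90 + ["rural"] * 8 + ["forest"] * 2
# Hypothetical target mix for the deployment area (an assumption).
reference = {"urban": 0.2, "rural": 0.4, "forest": 0.4}

gaps = representation_gap(samples, reference)
under_represented = sorted(cat for cat, gap in gaps.items() if gap < 0)
print(under_represented)
```

Choosing the reference shares is itself a judgment call, so the result is only as meaningful as the assumed target mix.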

Systemic Bias in Artificial Intelligence and Machine Learning

Artificial Intelligence (AI) and Machine Learning (ML) are particularly susceptible to systemic bias because their core function is to identify patterns and make predictions based on data. If the data itself contains biases, the AI will learn these biases and reproduce them in its outputs, often with significant societal implications.

Algorithmic Discrimination

Algorithmic discrimination occurs when AI systems produce unfair or discriminatory outcomes against certain groups. This can manifest in various applications:

- Facial Recognition: Historically, many facial recognition systems have exhibited higher error rates for women and people of color, leading to misidentification or failure to identify, which can have serious consequences in law enforcement or security.

- Hiring Algorithms: AI tools designed to screen job applicants can learn biases from historical hiring data, which might have implicitly favored certain demographics. This can lead to qualified candidates from underrepresented groups being unfairly overlooked.

- Credit Scoring: Loan approval algorithms might inadvertently use proxies for race or socioeconomic status (e.g., ZIP codes) if those factors were correlated with historical lending decisions, perpetuating economic disparities.

The discrimination is systemic because it’s embedded in the algorithm’s learned logic, influencing every decision it makes, rather than being an isolated incident.
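
The proxy problem in the credit scoring example can be probed by asking how well a supposedly neutral feature predicts a protected attribute. The sketch below is one rough heuristic, not a standard audit procedure: it compares a majority-class baseline with the accuracy of guessing the most common protected value within each feature value. The ZIP codes, group labels, and counts are hypothetical.

```python
from collections import Counter, defaultdict

def proxy_strength(rows):
    """Estimate how well a feature predicts a protected attribute.

    rows: list of (feature_value, protected_value) pairs.
    Returns (baseline, accuracy): the accuracy of always guessing the most
    common protected value, and the accuracy of guessing the most common
    protected value within each feature value.
    """
    overall = Counter(p for _, p in rows)
    baseline = overall.most_common(1)[0][1] / len(rows)
    by_feature = defaultdict(Counter)
    for feature, protected in rows:
        by_feature[feature][protected] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_feature.values())
    return baseline, correct / len(rows)

# Hypothetical applicants: (ZIP code, protected group), invented for illustration.
rows = (
    [("10001", "A")] * 45 + [("10001", "B")] * 5
    + [("20002", "A")] * 5 + [("20002", "B")] * 45
)

baseline, accuracy = proxy_strength(rows)
print(baseline, accuracy)
```

A large jump from the baseline to the per-feature accuracy suggests the feature carries much of the protected attribute's information, so removing the protected column alone would not remove its influence.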

Training Data Contamination

The primary vector for systemic bias in AI is often “training data contamination.” This refers to the presence of biased, incomplete, or unrepresentative information within the datasets used to train machine learning models. If a dataset primarily contains images of a particular gender performing specific roles, an AI model trained on this data might associate those roles predominantly with that gender, leading to biased predictions or classifications. Similarly, natural language processing (NLP) models can absorb gender stereotypes, racial biases, or cultural assumptions present in the vast amounts of text data they are trained on, subsequently generating biased language or interpretations. The scale of modern datasets makes manual review impractical, highlighting the systemic nature of this challenge.
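
Because manual review does not scale, skewed associations like the gender and role example are usually looked for with simple counts over annotations. The sketch below assumes each example carries a role label and a gender annotation; the labels, counts, and the 0.8 threshold are hypothetical choices made for illustration.

```python
from collections import Counter

def conditional_shares(pairs):
    """Return the share of each attribute within each label,
    i.e. an estimate of P(attribute | label)."""
    pair_counts = Counter(pairs)
    label_counts = Counter(label for label, _ in pairs)
    return {
        (label, attr): n / label_counts[label]
        for (label, attr), n in pair_counts.items()
    }

# Hypothetical (role, gender) annotations, invented for illustration.
pairs = (
    [("nurse", "woman")] * 18 + [("nurse", "man")] * 2
    + [("engineer", "woman")] * 3 + [("engineer", "man")] * 17
)

shares = conditional_shares(pairs)
# Flag label/attribute pairs that dominate their label (threshold is arbitrary).
skewed = {pair: share for pair, share in shares.items() if share >= 0.8}
print(sorted(skewed))
```

A flagged pair is a prompt for closer inspection, not proof of harm: some skews reflect the task itself, while others reflect who was photographed or written about.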

Autonomous Systems and Decision-Making

Autonomous systems, from self-driving cars to robotic assistants, rely on AI and sophisticated sensor fusion to make decisions without direct human intervention. The integration of systemic bias into these systems poses profound ethical and safety challenges.

Ethical Considerations in Robotic Autonomy

When autonomous systems are deployed in critical applications, their decision-making processes must be free from bias. Consider an autonomous vehicle faced with an unavoidable collision. If its object recognition or prediction algorithms have inherent biases that make it less likely to correctly identify or prioritize certain groups of people (e.g., pedestrians in wheelchairs, children, or specific ethnic groups), the ethical implications are severe. Such biases could inadvertently lead to decisions that disproportionately harm certain demographics, reflecting societal inequities rather than promoting universal safety. The ethical frameworks guiding the development of these systems must explicitly address and mitigate such possibilities.

Bias in Perception and Response

Autonomous systems perceive the world through sensors like cameras, lidar, and radar. If these sensors, or the algorithms interpreting their data, are not robustly trained and tested across diverse environmental conditions, lighting, and human characteristics, they can develop systemic biases. For instance, an obstacle avoidance system might perform optimally in bright daylight but struggle in low-light conditions, or it might have difficulty recognizing certain types of obstacles if its training data was not sufficiently varied. Similarly, drones employing AI for surveillance or package delivery must be able to accurately perceive and classify objects and individuals without exhibiting biases based on appearance, clothing, or other superficial characteristics that could lead to unfair or unsafe actions.
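
Testing "across diverse conditions" can be made concrete by checking that every combination of conditions has enough test samples before any accuracy figure is trusted. The sketch below assumes test samples are tagged with a lighting condition and an obstacle type; the tags, counts, and minimum count are hypothetical.

```python
from collections import Counter
from itertools import product

def undertested_cells(samples, lighting, obstacles, min_count):
    """Return (lighting, obstacle) combinations with fewer than
    min_count test samples, including combinations with none at all."""
    counts = Counter(samples)
    return sorted(
        cell for cell in product(lighting, obstacles)
        if counts[cell] < min_count
    )

# Hypothetical test-set tags, invented for illustration.
samples = (
    [("day", "pedestrian")] * 50 + [("day", "cyclist")] * 40
    + [("night", "pedestrian")] * 12 + [("night", "cyclist")] * 3
)

missing = undertested_cells(
    samples,
    lighting=["day", "night"],
    obstacles=["pedestrian", "cyclist"],
    min_count=10,
)
print(missing)
```

Enumerating the full grid with `product` matters here because a combination that never appears in the test set would otherwise be silently absent from the report.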

Bias in Mapping and Remote Sensing

Mapping and remote sensing technologies provide invaluable data about our planet, aiding in urban planning, disaster response, environmental monitoring, and more. However, these fields are not immune to systemic bias, especially concerning data collection, representation, and interpretation.

Data Collection and Representation Gaps

Systemic bias in mapping can arise from historical biases in cartography, where certain regions or populations were under-represented or misrepresented. In modern remote sensing, this can translate into:

- Unequal Coverage: Satellite or drone imagery acquisition might be concentrated on economically significant areas, leaving less developed regions with sparser or outdated data. This creates a systemic disadvantage for areas lacking comprehensive mapping data, affecting resource allocation and development planning.

- Under-sampling of Minorities: In urban mapping projects using ground-level data or citizen science contributions, participation or data availability might be uneven across different socioeconomic or ethnic neighborhoods, leading to biased representations of infrastructure, services, or population characteristics.

- Historical Data Inaccuracies: Using historical maps or datasets as baselines can perpetuate past inaccuracies or colonial biases in naming conventions and territorial delineations.

These gaps are systemic because they reflect established patterns of resource allocation, political power, or historical oversight, rather than random omissions.
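
Unequal coverage of the kind described above can be tracked with a basic freshness report over acquisition dates. The sketch below assumes a record of the most recent imagery date per region; the region names, dates, and one-year threshold are hypothetical.

```python
from datetime import date

def stale_regions(last_acquired, as_of, max_age_days):
    """Return regions whose most recent imagery is older than max_age_days."""
    return sorted(
        region for region, acquired in last_acquired.items()
        if (as_of - acquired).days > max_age_days
    )

# Hypothetical acquisition log, invented for illustration.
last_acquired = {
    "city_center": date(2024, 5, 1),
    "industrial_port": date(2024, 3, 15),
    "rural_district": date(2021, 8, 20),
    "informal_settlement": date(2020, 1, 10),
}

stale = stale_regions(last_acquired, as_of=date(2024, 6, 1), max_age_days=365)
print(stale)
```

If the stale regions cluster in less developed or less politically visible areas, that pattern is the systemic gap, as opposed to a random omission.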

Interpretive Biases

Even with comprehensive data, interpretation can introduce systemic bias. Algorithms used to classify land use, identify objects, or analyze environmental change from satellite imagery can carry embedded biases. For example, an algorithm trained to classify “informal settlements” might inadvertently rely on proxies that correlate with poverty or specific ethnic groups, leading to biased categorization or misrepresentation of communities. Human interpreters, too, are susceptible to confirmation bias or cultural lenses that influence how they understand and annotate remote sensing data, potentially reinforcing existing stereotypes or overlooking nuanced information in unfamiliar contexts. The frameworks, categories, and methodologies used for interpretation can therefore embody systemic biases that influence policy and resource allocation decisions derived from these maps.

Mitigating Systemic Bias in Tech & Innovation

Addressing systemic bias requires a multi-faceted approach, integrating ethical considerations throughout the entire lifecycle of technology development, from conception to deployment and ongoing maintenance.

Diversifying Data Sources

One of the most effective strategies is to consciously diversify and audit data sources. This involves:

- Broader Representation: Ensuring training datasets for AI and autonomous systems include diverse demographics, geographical regions, environmental conditions, and cultural contexts. This means actively seeking out and incorporating data from underrepresented groups and varied scenarios.

- Data Augmentation: Employing techniques to artificially increase data diversity, such as generating synthetic data for scenarios where real-world data is scarce or biased.

- Fair Data Collection Practices: Implementing ethical guidelines for data collection, including informed consent and privacy protection, while actively working to avoid perpetuating historical data imbalances.
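
When collecting or synthesizing more data is not immediately possible, a lighter-weight complement is to reweight existing samples so that each group contributes equal total weight during training. This is a different technique from the augmentation described above and does not add any new information about under-represented groups. The sketch below is a minimal illustration with hypothetical group labels.

```python
from collections import Counter

def balancing_weights(groups):
    """Weight each sample so every group contributes equal total weight.

    Each sample in group g gets total / (n_groups * count[g]), so the
    weights of every group sum to total / n_groups.
    """
    counts = Counter(groups)
    n_groups = len(counts)
    total = len(groups)
    return [total / (n_groups * counts[g]) for g in groups]

# Hypothetical group labels for ten training samples.
groups = ["urban"] * 8 + ["rural"] * 2
weights = balancing_weights(groups)

urban_total = sum(w for w, g in zip(weights, groups) if g == "urban")
rural_total = sum(w for w, g in zip(weights, groups) if g == "rural")
print(urban_total, rural_total)
```

Heavily up-weighting a handful of samples can make a model overfit to them, so reweighting is best treated as a stopgap alongside broader data collection.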

Algorithmic Auditing and Explainable AI (XAI)

Regular and rigorous auditing of algorithms is crucial. This involves:

- Bias Detection Tools: Using specialized tools and metrics to identify and quantify bias in model predictions and outputs.

- Fairness Metrics: Implementing fairness metrics (e.g., demographic parity, equalized odds) to assess whether a model is performing equitably across different groups.

- Explainable AI (XAI): Developing and utilizing XAI techniques that make the decision-making processes of complex AI models transparent. Understanding why an AI makes a particular decision can help pinpoint the sources of bias and allow developers to correct them.
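
The two fairness metrics named above can be computed directly from predictions. Demographic parity asks that the rate of positive predictions be the same across groups; equalized odds asks that both the true positive rate and the false positive rate be the same across groups. The sketch below reports the largest between-group difference for each, using hypothetical labels and predictions, and assumes every group contains both positive and negative examples.

```python
def rate(values):
    """Mean of a list of 0/1 values; None when the list is empty."""
    return sum(values) / len(values) if values else None

def group_rates(y_true, y_pred, groups):
    """Per-group selection rate, true positive rate, and false positive rate."""
    out = {}
    for g in sorted(set(groups)):
        idx = [i for i, gi in enumerate(groups) if gi == g]
        out[g] = {
            "selection_rate": rate([y_pred[i] for i in idx]),
            "tpr": rate([y_pred[i] for i in idx if y_true[i] == 1]),
            "fpr": rate([y_pred[i] for i in idx if y_true[i] == 0]),
        }
    return out

def spread(rates, key):
    """Largest difference in one rate between any two groups."""
    vals = [r[key] for r in rates.values()]
    return max(vals) - min(vals)

# Hypothetical labels, predictions, and group membership.
y_true = [1, 1, 1, 0, 0, 0, 1, 1, 1, 0, 0, 0]
y_pred = [1, 1, 1, 1, 0, 0, 1, 0, 0, 0, 0, 0]
groups = ["a"] * 6 + ["b"] * 6

rates = group_rates(y_true, y_pred, groups)
dp_diff = spread(rates, "selection_rate")                       # demographic parity gap
eo_diff = max(spread(rates, "tpr"), spread(rates, "fpr"))       # equalized odds gap
print(round(dp_diff, 3), round(eo_diff, 3))
```

A value of zero would mean the metric is perfectly satisfied on this data. The two metrics can disagree with each other, so which one to prioritize depends on the application.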

Ethical AI Design Frameworks

Integrating ethical considerations from the initial design phase is paramount. This includes:

- Value-Sensitive Design: Explicitly incorporating societal values and ethical principles into the design requirements of technology.

- Interdisciplinary Teams: Building development teams that include ethicists, social scientists, and experts from diverse backgrounds to provide varied perspectives and identify potential biases early on.

- Regulatory and Policy Guidelines: Advocating for and adhering to robust regulatory frameworks and policies that mandate fairness, transparency, and accountability in AI and autonomous systems.

By proactively addressing systemic bias, the tech and innovation sector can ensure that its powerful tools serve all of humanity equitably, fostering trust and delivering on the promise of a more just and efficient future.