System haptics on the iPhone represents a sophisticated evolution in human-computer interaction, moving beyond purely visual and auditory cues to incorporate the sense of touch. Far from the rudimentary vibrations of early mobile phones, Apple’s haptic feedback is a product of meticulous engineering and user experience design, making the device feel less like a passive screen and more like a responsive companion. At its core, system haptics refers to the tactile sensations the iPhone generates in response to user actions, system events, and application-specific interactions. The system is designed to provide precise, nuanced, and contextually relevant physical feedback that subtly guides users, confirms actions, and enriches the overall experience without being intrusive. It reflects the idea that technology can engage our senses in more natural and intuitive ways, extending what counts as an effective user interface.

The Technological Foundation of Haptic Feedback

The journey from simple vibration alerts to the sophisticated haptic feedback found in modern iPhones is a story of continuous technological innovation. Early mobile phones relied on eccentric rotating mass (ERM) motors, which spun an unbalanced weight to create a noticeable, albeit coarse, vibration. These were essentially binary: on or off, slow to spin up and down, and with vibration strength and frequency tied together by the motor’s speed, which led to a largely undifferentiated user experience. The iPhone’s adoption of the Taptic Engine, beginning with the iPhone 6s in 2015, marked a significant departure from this legacy and ushered in an era of high-fidelity haptics.

From Vibration Motor to Taptic Engine

The Taptic Engine, first introduced with the Apple Watch and subsequently integrated into iPhones, is a linear resonant actuator (LRA): a motor that oscillates a mass back and forth along a single axis. Unlike ERM motors, LRAs offer far greater control over the frequency, amplitude, and duration of vibrations. This precision allows the Taptic Engine to generate a wide array of distinct haptic effects, from sharp, quick taps to sustained, subtle pulses. It’s not just about vibrating; it’s about crafting specific tactile sensations that convey different meanings. The engine can start and stop almost instantaneously, delivering crisp sensations that feel localized rather than like a device-wide shake. This capability is critical for creating haptic feedback that feels directly tied to the on-screen action, making the interaction feel more direct and physical. The integration of this hardware underscores Apple’s commitment to refining every aspect of user interaction, making touch an integral part of the digital experience.

Precision and Nuance in Tactile Responses

The power of the Taptic Engine lies in its ability to generate nuanced tactile responses. It can simulate a button press with a satisfying click, provide a gentle thud when scrolling to the end of a list, or deliver a soft buzz for an incoming notification. These aren’t just vibrations; they are carefully designed micro-sensations intended to mimic real-world interactions or to create entirely new, intuitive cues. The precision with which these haptic effects are delivered means they can be subtle enough not to distract, yet distinct enough to convey meaningful information. For instance, a light tap might indicate a successful input, while a series of quick, firm taps could signal an error. This granularity elevates haptics from a mere alert system to a sophisticated layer of communication, adding depth and richness to the otherwise flat digital interface. This focus on precision is what differentiates system haptics on the iPhone from generalized vibration feedback.

Enhancing User Experience Through Sensory Interaction

The true genius of system haptics on the iPhone lies in its ability to profoundly enhance the user experience. By engaging the sense of touch, haptics provides a more immersive, intuitive, and reassuring interaction, bridging the gap between the virtual and physical worlds. This sensory feedback reinforces user actions, communicates system states, and even evokes emotional responses, making the device feel more alive and responsive.

Intuitive Interface Navigation

Haptics plays a crucial role in making the iPhone’s interface more intuitive. When navigating menus, adjusting sliders, or interacting with virtual buttons, subtle haptic feedback confirms that an action has been registered, eliminating guesswork and reducing cognitive load. For example, scrolling through a picker wheel often generates soft clicks that mimic the feel of a physical dial, making the digital interaction feel more grounded. Similarly, when performing system gestures like pulling down to refresh or invoking a context menu, a distinct haptic signature provides immediate confirmation of the action. This tactile reassurance fosters a sense of control and confidence, allowing users to interact with the device more naturally and efficiently without needing constant visual confirmation.

Emotional and Informational Cues

Beyond mere confirmation, system haptics can convey information and even emotional states. A gentle, persistent pulse for an urgent notification feels different from a playful bounce for a game event, preparing the user for the content of the alert before they even look at the screen. Developers can use Apple’s Core Haptics framework to design custom haptic patterns that align with their app’s specific functions and branding, adding another layer of depth to their user experience. Imagine a fitness app delivering a strong, encouraging pulse when you hit a goal, or a meditation app using soft, undulating vibrations to guide breathing exercises. These applications demonstrate how haptics can transcend simple feedback to become a powerful tool for conveying nuanced information and influencing user sentiment, thus elevating the interaction beyond the purely functional.
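As a rough illustration of the fitness-app example, the sketch below describes a "goal reached" pattern as plain data and then hands it to Core Haptics, the framework iOS offers for custom patterns. The `HapticStep` type, the `PatternPlayer` class, and every timing and intensity value are hypothetical choices made for this example, not anything Apple prescribes; only the `CHHaptic…` types come from Core Haptics.

```swift
#if os(iOS)
import CoreHaptics
#endif

/// One step of a custom pattern (hypothetical type, not part of Core Haptics).
struct HapticStep {
    let time: Double      // seconds from the start of the pattern
    let duration: Double  // 0 means a short transient tap
    let intensity: Float  // 0...1, how strong the step feels
    let sharpness: Float  // 0...1, how crisp the step feels
}

/// Illustrative "goal reached" pattern: a crisp tap followed by a softer swell.
let goalReached = [
    HapticStep(time: 0.0, duration: 0.0, intensity: 1.0, sharpness: 0.7),
    HapticStep(time: 0.1, duration: 0.4, intensity: 0.6, sharpness: 0.2)
]

/// Time at which the last step ends, in seconds.
func totalDuration(of steps: [HapticStep]) -> Double {
    steps.map { $0.time + $0.duration }.max() ?? 0
}

#if os(iOS)
/// Hypothetical wrapper that keeps one haptic engine alive for the app.
@available(iOS 13.0, *)
final class PatternPlayer {
    private let engine: CHHapticEngine

    /// Returns nil on hardware that cannot play Core Haptics patterns.
    init?() {
        guard CHHapticEngine.capabilitiesForHardware().supportsHaptics,
              let engine = try? CHHapticEngine() else { return nil }
        self.engine = engine
    }

    /// Converts the steps into Core Haptics events and plays them immediately.
    func play(_ steps: [HapticStep]) throws {
        let events = steps.map { step -> CHHapticEvent in
            let parameters = [
                CHHapticEventParameter(parameterID: .hapticIntensity, value: step.intensity),
                CHHapticEventParameter(parameterID: .hapticSharpness, value: step.sharpness)
            ]
            if step.duration > 0 {
                return CHHapticEvent(eventType: .hapticContinuous, parameters: parameters,
                                     relativeTime: step.time, duration: step.duration)
            }
            return CHHapticEvent(eventType: .hapticTransient, parameters: parameters,
                                 relativeTime: step.time)
        }
        let pattern = try CHHapticPattern(events: events, parameters: [])
        try engine.start()
        let player = try engine.makePlayer(with: pattern)
        try player.start(atTime: CHHapticTimeImmediate)
    }
}
#endif
```

On a supported iPhone, an app would create one `PatternPlayer` and call `try player.play(goalReached)` when the goal is hit. Keeping the pattern as plain data makes it easy to tune the feel without touching the playback code.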

Accessibility and Inclusive Design

System haptics also significantly contributes to accessibility, making the iPhone more usable for a wider range of individuals. For users with visual impairments, haptic feedback can provide non-visual cues for navigation, confirming selections, or alerting them to new content without relying solely on VoiceOver. For instance, different haptic patterns can be assigned to different types of notifications, allowing users to distinguish between an email, a text message, or a calendar reminder purely by touch. This tactile dimension adds a rich layer of information that can supplement or even replace visual cues, making the iPhone a more inclusive and adaptable device. The careful consideration of how haptics can serve accessibility needs highlights its role as an innovative element in responsible technology design.

The Mechanics Behind the Micro-Vibrations

Understanding the underlying mechanics of how system haptics work provides insight into the innovation required to achieve such precise tactile experiences. It’s a symphony of hardware engineering and software orchestration, meticulously tuned to deliver consistent and reliable feedback.

The Taptic Engine’s Engineering Marvel

The Taptic Engine itself is a marvel of miniaturization and precision engineering. Typically, it’s a compact rectangular component situated within the iPhone’s chassis that houses a small, precisely weighted mass driven by a voice coil. When an electrical current passes through the coil, it interacts with a magnetic field, causing the mass to move rapidly back and forth along one axis. By controlling the amplitude, frequency, and waveform of this drive signal, the iPhone can precisely shape the movement of the mass and generate a wide spectrum of tactile sensations. This level of control is what allows the Taptic Engine to produce distinct “taps” or “thuds” rather than just a continuous buzz. The engine is also designed to be energy efficient and durable, so that it can keep delivering consistent haptic events over the lifespan of the device.
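To make the idea of shaping a drive signal more concrete, here is a minimal sketch that generates samples of a sinusoidal signal with a simple linear fade-out. It is a toy model only: the 150 Hz frequency, the 8 kHz sample rate, the fade shape, and the function name `driveWaveform` are all assumptions chosen for illustration, and the signals Apple actually uses are not public.

```swift
import Foundation

/// Samples of a sine-wave drive signal that fades linearly to zero.
/// All parameters are illustrative assumptions, not Taptic Engine specifications.
func driveWaveform(frequency: Double,
                   amplitude: Double,
                   duration: Double,
                   sampleRate: Double = 8_000) -> [Double] {
    let count = Int((duration * sampleRate).rounded())
    guard count > 0 else { return [] }
    return (0..<count).map { index in
        let time = Double(index) / sampleRate
        // Linear envelope: full strength at the start, approaching zero at the end.
        let envelope = 1.0 - Double(index) / Double(count)
        return amplitude * envelope * sin(2.0 * Double.pi * frequency * time)
    }
}

// A short, strong burst reads as a "tap"; a long, weak one reads as a "buzz".
let tap = driveWaveform(frequency: 150, amplitude: 1.0, duration: 0.02)
let buzz = driveWaveform(frequency: 150, amplitude: 0.3, duration: 0.5)
```

The same three knobs named in the text (amplitude, frequency, and waveform shape) are the only things that differ between the two signals, which is the point of the sketch.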

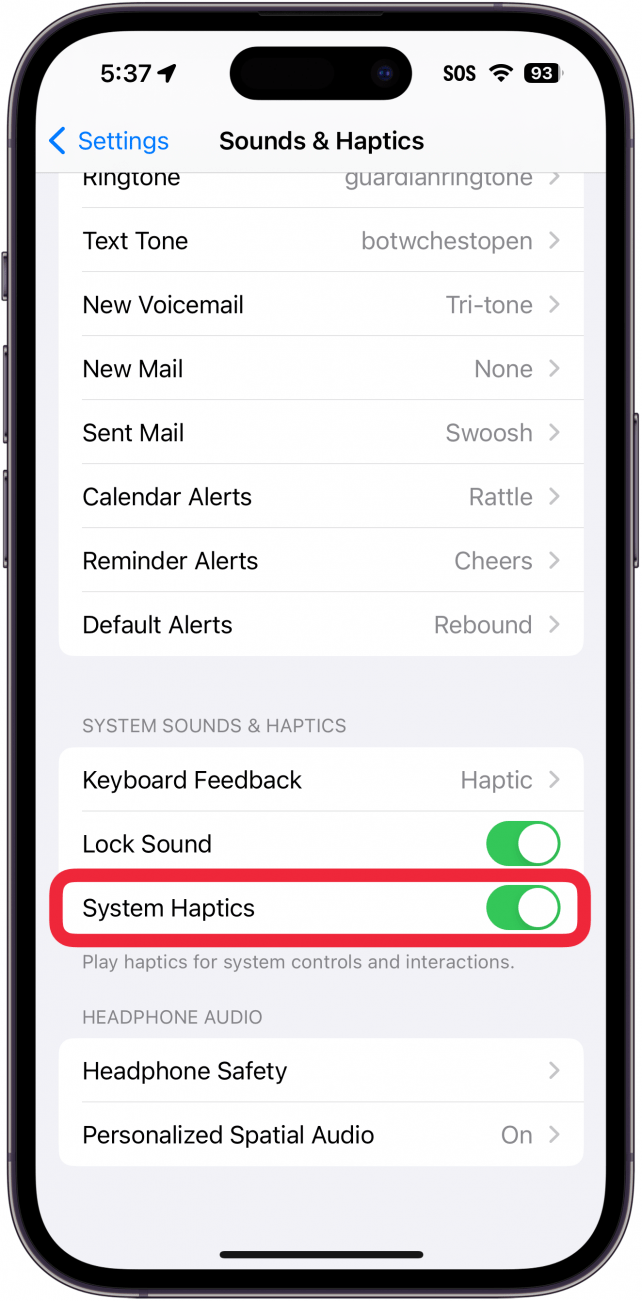

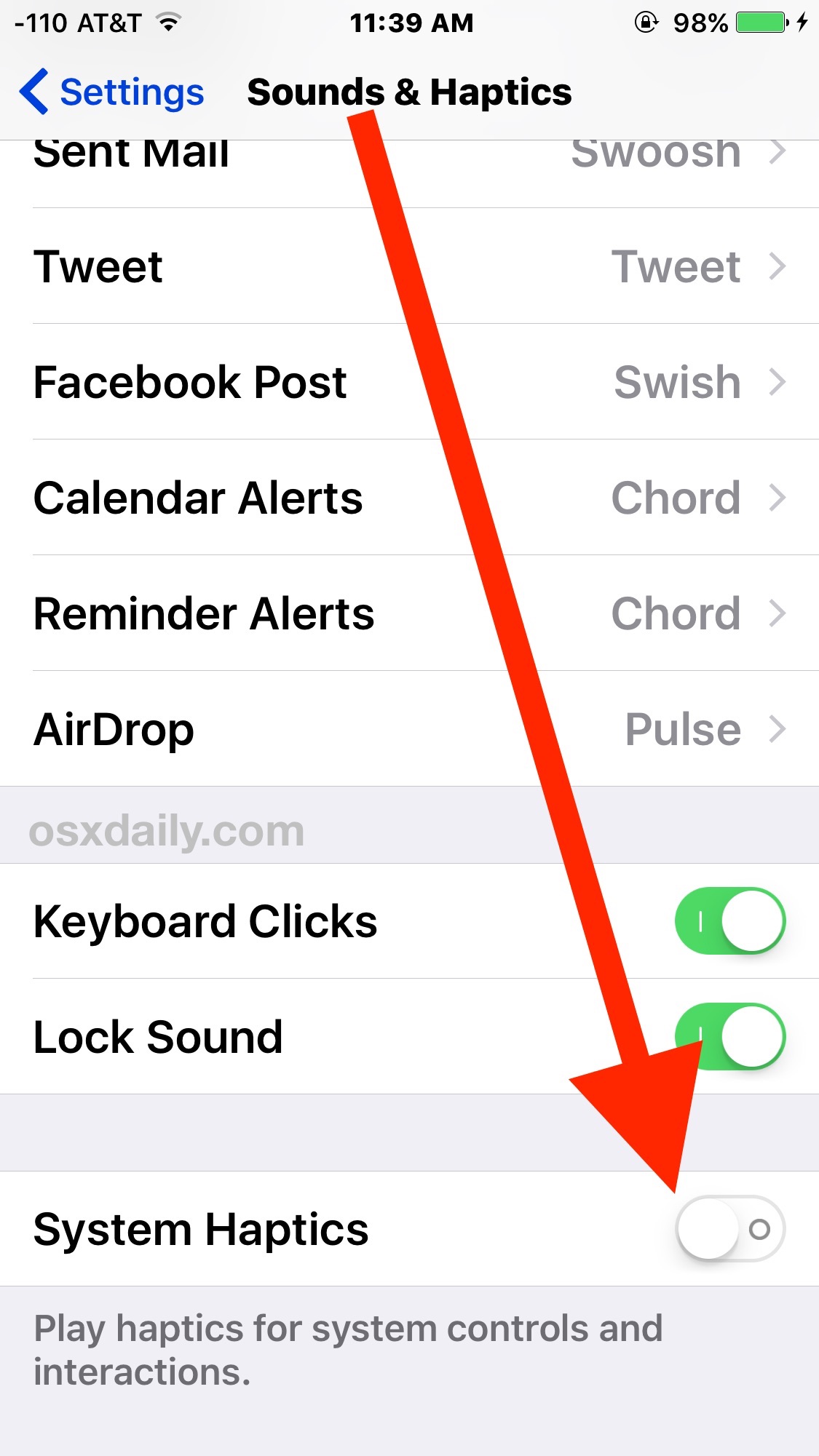

Software-Hardware Integration for Seamless Haptics

The brilliance of system haptics on the iPhone isn’t just in the hardware, but in the seamless integration between the Taptic Engine and iOS. Apple provides developers with a haptic feedback API built around UIFeedbackGenerator and its concrete subclasses (UIImpactFeedbackGenerator, UINotificationFeedbackGenerator, and UISelectionFeedbackGenerator), which abstracts away the complexities of directly controlling the motor. Developers simply request predefined haptic feedback types, such as a “light impact,” “success notification,” or “selection changed,” and the system handles the details of translating that request into the precise electrical signals needed to drive the Taptic Engine. This high-level API keeps feedback consistent across apps and maintains the quality of the haptic experience. iOS also decides whether and when a requested haptic actually plays, taking into account factors such as device capability and user settings. This prevents overwhelming sensations and lets feedback land at the appropriate moment, often synchronized with animations or sounds for a richer, multi-sensory experience. This tight coupling of software and hardware is fundamental to delivering a truly cohesive and innovative haptic experience.
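A minimal sketch of how an app might request these predefined feedback types is shown below. The `AppEvent` and `FeedbackRequest` enums and the `feedback(for:)` and `play(_:)` functions are hypothetical names invented for this example; only the three generator classes and their methods come from UIKit.

```swift
#if os(iOS)
import UIKit
#endif

/// App-level events (hypothetical names, for illustration only).
enum AppEvent {
    case buttonTap, pickerMoved, saveSucceeded, saveFailed
}

/// Predefined feedback types of the kind named in the text.
enum FeedbackRequest: Equatable {
    case lightImpact, selectionChanged, successNotification, errorNotification
}

/// Pure mapping from event to feedback, kept separate from playback
/// so that it can be checked without a device.
func feedback(for event: AppEvent) -> FeedbackRequest {
    switch event {
    case .buttonTap:     return .lightImpact
    case .pickerMoved:   return .selectionChanged
    case .saveSucceeded: return .successNotification
    case .saveFailed:    return .errorNotification
    }
}

#if os(iOS)
/// Asks the system to play the requested feedback. The system may still
/// skip it, for example on hardware without a Taptic Engine.
@MainActor
func play(_ request: FeedbackRequest) {
    switch request {
    case .lightImpact:
        UIImpactFeedbackGenerator(style: .light).impactOccurred()
    case .selectionChanged:
        UISelectionFeedbackGenerator().selectionChanged()
    case .successNotification:
        UINotificationFeedbackGenerator().notificationOccurred(.success)
    case .errorNotification:
        UINotificationFeedbackGenerator().notificationOccurred(.error)
    }
}
#endif
```

In an app, a button handler would call `play(feedback(for: .buttonTap))`. For latency-sensitive moments it is usually better to keep a generator around and call its `prepare()` method shortly before the feedback is needed, rather than creating one on the spot as this sketch does.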

Evolution and Future Trajectories of Haptic Technology

The current state of system haptics on the iPhone is impressive, but it represents just a foundational step in the broader evolution of tactile interfaces. As technology progresses, the capabilities of haptic feedback are poised to expand dramatically, offering even richer, more immersive, and personalized interactions.

Expanding Beyond Basic Feedback

While current iPhone haptics excels at conveying discrete events and subtle confirmations, future iterations could move towards more complex and continuous tactile experiences. Imagine haptic textures that simulate different surfaces when scrolling through a list of items, or nuanced vibrations that provide directional cues in augmented reality applications. The innovation lies in moving from merely confirming an action to actively conveying information about digital objects or environments. This could involve multi-frequency haptics, where different frequencies are used simultaneously to convey multiple layers of information, or even spatial haptics, where the tactile sensation appears to originate from a specific point on the screen or within a virtual space.

Potential for Advanced Sensory Immersion

The long-term vision for haptic technology points towards advanced sensory immersion. This could involve technologies such as microfluidic systems or electro-tactile feedback, which might eventually let devices simulate varying levels of pressure, the feeling of resistance, or even temperature changes. These technologies are still in research and development, but their potential application in future iPhones or related Apple devices could bring a new level of realism to digital interactions. Consider a device providing haptic feedback that makes touching a photo of water feel cool and fluid, or a game where taking damage produces a sharp, localized jolt. Such advancements would push the boundaries of what’s possible in user interface design, transforming passive consumption into active, multi-sensory engagement.

Haptics in a Multi-Device Ecosystem

As Apple’s ecosystem of devices continues to grow, the role of system haptics is likely to extend beyond the iPhone itself. Haptic feedback is already a cornerstone of the Apple Watch, and it could see expanded roles in AirPods (perhaps for touch controls or spatial audio cues), Apple Vision Pro (for object interaction or environmental feedback), and even potential future smart home devices. A cohesive haptic language across all these devices would create a more unified and intuitive experience, where users can instantly understand interactions regardless of the specific hardware. The innovation will be in designing haptic interactions that are appropriate for each device’s form factor and use case, while still maintaining a consistent and recognizable “feel.” This holistic approach to haptics across an integrated ecosystem represents a significant frontier, promising a future where our devices don’t just respond, but truly communicate through touch.