TLS (Transport Layer Security), the successor to SSL (Secure Sockets Layer) and still widely referred to by the older name, is the foundational technology that encrypts communication between a client (like your web browser) and a server. This encryption ensures that sensitive data, such as login credentials, credit card numbers, and personal information, is protected from eavesdropping and tampering as it travels across the internet. However, performing the handshake and the encryption and decryption work on every server in a complex network adds CPU overhead and operational complexity, which can hurt performance and scalability. This is where SSL termination comes into play.

Understanding the SSL/TLS Handshake

Before delving into termination, it’s crucial to grasp the fundamental SSL/TLS handshake process. When your browser connects to a website secured with HTTPS, a complex multi-step negotiation occurs:

Initial Connection and Certificate Exchange

The client initiates a connection to the server, sending a “Client Hello” message. This message includes the TLS versions supported by the client, a random number, and a list of cipher suites (encryption algorithms and key exchange methods) the client understands. The server responds with a “Server Hello,” indicating the chosen TLS version, another random number, and the selected cipher suite. The server then sends its SSL/TLS certificate, which contains its public key and is digitally signed by a trusted Certificate Authority (CA).
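To make the contents of a “Client Hello” more concrete, the following minimal sketch inspects what a default Python TLS client would advertise and enforce. It only reads local configuration and opens no connection; the function name `describe_client_offer` is made up for this illustration, and the exact versions and cipher suites depend on the local Python and OpenSSL build.

```python
import ssl


def describe_client_offer():
    """Summarise what a default Python TLS client would offer and enforce."""
    # Client-side context with the library's default settings.
    ctx = ssl.create_default_context()
    return {
        "minimum_version": ctx.minimum_version.name,
        "maximum_version": ctx.maximum_version.name,
        # Cipher suites enabled on this context (candidates for the Client Hello).
        "cipher_suites": [c["name"] for c in ctx.get_ciphers()],
        # Whether the server certificate must chain to a trusted CA.
        "verifies_certificate": ctx.verify_mode == ssl.CERT_REQUIRED,
        # Whether the certificate must match the requested hostname.
        "checks_hostname": ctx.check_hostname,
    }


offer = describe_client_offer()
print("TLS versions:", offer["minimum_version"], "to", offer["maximum_version"])
print(len(offer["cipher_suites"]), "cipher suites enabled")
print("verifies certificate:", offer["verifies_certificate"])
```

The server picks one version and one cipher suite from this offer and reports the choice in its “Server Hello”.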

Key Exchange and Verification

The client verifies the server’s certificate by checking its validity period, that it matches the requested hostname, and that it chains to a trusted CA. What happens next depends on the key exchange method. In the classic RSA key exchange (available up to TLS 1.2), the client encrypts a pre-master secret with the server’s public key and sends it to the server, which decrypts it with the corresponding private key. In an ephemeral Diffie-Hellman exchange (the only option in TLS 1.3 and the usual choice in TLS 1.2), both sides contribute key shares instead, and the server uses its private key only to sign the handshake. Either way, the client and the server independently combine the shared secret with the random numbers exchanged earlier to derive identical session keys. These session keys are symmetric, and symmetric encryption is much faster than asymmetric public-key cryptography.
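The following sketch illustrates how both sides can arrive at identical keys from a pre-master secret and the two random numbers. It follows the TLS 1.2 pseudorandom function built on HMAC-SHA256 as described in RFC 5246; TLS 1.3 uses a different, HKDF-based schedule that is not shown here. The secrets are random placeholder values, and the code is for illustration only, not for use in a real protocol implementation.

```python
import hashlib
import hmac
import os


def p_sha256(secret: bytes, seed: bytes, length: int) -> bytes:
    """TLS 1.2 P_hash expansion with HMAC-SHA256."""
    output = b""
    a = seed  # A(0) = seed
    while len(output) < length:
        a = hmac.new(secret, a, hashlib.sha256).digest()  # A(i) = HMAC(secret, A(i-1))
        output += hmac.new(secret, a + seed, hashlib.sha256).digest()
    return output[:length]


def master_secret(pre_master: bytes, client_random: bytes, server_random: bytes) -> bytes:
    """Derive the 48-byte master secret from the pre-master secret."""
    return p_sha256(pre_master, b"master secret" + client_random + server_random, 48)


def key_block(master: bytes, client_random: bytes, server_random: bytes, length: int) -> bytes:
    """Expand the master secret into session key material."""
    # Note the reversed order of the random values for key expansion.
    return p_sha256(master, b"key expansion" + server_random + client_random, length)


# Placeholder handshake values; in a real handshake these travel in the hello messages.
client_random = os.urandom(32)
server_random = os.urandom(32)
pre_master = os.urandom(48)

# Client and server run the same derivation independently.
client_keys = key_block(master_secret(pre_master, client_random, server_random),
                        client_random, server_random, 64)
server_keys = key_block(master_secret(pre_master, client_random, server_random),
                        client_random, server_random, 64)
print("keys match:", client_keys == server_keys)
```

Because the derivation is deterministic, neither side ever has to transmit the session keys themselves.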

Secure Communication Establishment

With the session keys established, both the client and server can now encrypt and decrypt all subsequent communication. This ensures that any data exchanged during the session is confidential and protected from unauthorized access. The handshake, while robust, consumes computational resources and adds network round trips before any application data can flow: two for a full TLS 1.2 handshake and one for TLS 1.3.

The Concept of SSL Termination

SSL termination is the process of decrypting SSL/TLS encrypted traffic at a point before it reaches the actual application servers. Instead of every web server or application server handling the computationally intensive task of decrypting SSL/TLS traffic, this burden is offloaded to a dedicated component. This component, often a load balancer, a reverse proxy, or an API gateway, terminates the SSL/TLS connection, decrypts the traffic, and then forwards the unencrypted (or sometimes re-encrypted with a different, internal certificate) traffic to the backend servers.

How SSL Termination Works

When a client initiates a connection to a service that employs SSL termination, the request first arrives at the termination point. This could be a hardware load balancer with SSL offloading capabilities, a software-based load balancer like Nginx or HAProxy, or a dedicated API gateway.

- Client Connection: The client establishes an SSL/TLS connection with the termination point, just as it would with a standard web server.

- SSL Handshake: The termination point performs the SSL/TLS handshake with the client, presenting its own SSL certificate. This is crucial for maintaining trust and security for the end-user.

- Decryption: Once the handshake is complete and a secure channel is established, the termination point decrypts the incoming traffic using the symmetric session keys negotiated during the handshake. Its private key is needed only for the handshake itself.

- Forwarding: The decrypted traffic is then forwarded to the appropriate backend server. There are two common approaches here:

  - Unencrypted Forwarding: The traffic is sent to the backend server without any encryption. This is common in secure, private internal networks where the risk of interception between the termination point and the backend servers is minimal.

  - Re-encrypted Forwarding: The termination point opens a separate SSL/TLS connection to the backend server, which presents its own certificate (often a self-signed or internal one), and sends the traffic over that connection. This adds an extra layer of security within the internal network.

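With unencrypted forwarding, the backend application servers receive plain traffic and do not need to manage SSL certificates or perform decryption themselves. They can focus solely on processing the application logic and serving the client’s request. With re-encrypted forwarding, they still handle TLS, but only with internal certificates and only for connections from the termination point.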
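The steps above can be sketched with Python’s standard library. This is a minimal illustration under several assumptions, not a production proxy: it handles only GET requests, the names `forwarding_headers`, `TerminatingHandler`, and `make_proxy` are invented for the example, and the `X-Forwarded-Proto` and `X-Forwarded-For` headers are a widely used convention (not something the steps above require) for telling the backend how the client originally connected.

```python
import http.client
import ssl
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


def forwarding_headers(client_headers, client_ip):
    """Copy the client's headers and record that the original hop used HTTPS."""
    headers = dict(client_headers)
    headers["X-Forwarded-Proto"] = "https"
    headers["X-Forwarded-For"] = client_ip
    return headers


class TerminatingHandler(BaseHTTPRequestHandler):
    """Receives already-decrypted requests and forwards them to one backend."""

    def do_GET(self):
        host, port = self.server.backend_addr
        if self.server.backend_tls is None:
            # Unencrypted forwarding over the internal network.
            upstream = http.client.HTTPConnection(host, port, timeout=10)
        else:
            # Re-encrypted forwarding over a separate TLS connection.
            upstream = http.client.HTTPSConnection(
                host, port, timeout=10, context=self.server.backend_tls)
        try:
            upstream.request("GET", self.path, headers=forwarding_headers(
                self.headers.items(), self.client_address[0]))
            reply = upstream.getresponse()
            status, body = reply.status, reply.read()
            content_type = reply.getheader("Content-Type", "application/octet-stream")
        except (OSError, http.client.HTTPException):
            self.send_error(502)  # backend unreachable or sent an invalid reply
            return
        finally:
            upstream.close()
        self.send_response(status)
        self.send_header("Content-Type", content_type)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)  # encrypted again by the TLS layer on the way out


def make_proxy(listen_addr, backend_addr, cert_file, key_file, backend_tls=None):
    """Build an HTTPS listener that terminates TLS and forwards to backend_addr."""
    server_ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    server_ctx.load_cert_chain(cert_file, key_file)  # public certificate and private key
    httpd = ThreadingHTTPServer(listen_addr, TerminatingHandler)
    httpd.backend_addr = backend_addr
    httpd.backend_tls = backend_tls
    # Sketch limitation: wrapping the listening socket means each TLS handshake
    # runs inside accept(), so a slow client can delay other new connections.
    httpd.socket = server_ctx.wrap_socket(httpd.socket, server_side=True)
    return httpd
```

Assuming hypothetical files `server.crt` and `server.key` and a backend on port 8080, `make_proxy(("0.0.0.0", 8443), ("127.0.0.1", 8080), "server.crt", "server.key").serve_forever()` would run the unencrypted variant. Passing `backend_tls=ssl.create_default_context(cafile="internal-ca.pem")` (again a hypothetical file) switches to re-encrypted forwarding.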

Benefits of SSL Termination

Implementing SSL termination offers a multitude of advantages for modern web infrastructures, primarily revolving around performance, scalability, and manageability.

Performance Enhancement

The main performance benefit of SSL termination is that cryptographic work moves off the application servers. The SSL/TLS handshake is CPU-intensive because of its asymmetric operations, and ongoing encryption and decryption add a smaller but steady cost. By offloading these tasks to a dedicated appliance or software designed for this purpose, the application servers are freed from this computational burden. This leads to:

- Reduced Latency: Less time spent on cryptographic operations means faster response times for client requests.

- Increased Throughput: Application servers can handle more requests per second because they are not bogged down by SSL processing.

- Lower Server Load: CPU cycles that would otherwise be consumed by SSL processing can be used for core application functions, leading to a more efficient use of resources.

Improved Scalability

As web traffic grows, scaling the infrastructure becomes paramount. SSL termination plays a vital role in enabling seamless scalability:

- Simplified Scaling of Backend Servers: With SSL processing handled externally, adding more application servers to handle increased traffic is straightforward. These new servers don’t need to be configured with SSL certificates or complex cryptographic setups.

- Centralized SSL Management: Managing SSL certificates can be a complex and time-consuming task, especially with a large number of servers. SSL termination allows for centralized management of SSL certificates on the termination point, simplifying renewals, updates, and security patching.
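With certificates concentrated on the termination point, renewal monitoring can be a small script. The sketch below computes how many days remain before a certificate expires, given the `notAfter` string in the format that Python’s `SSLSocket.getpeercert()` reports. The function names and the 30-day threshold are assumptions chosen for illustration.

```python
import ssl
import time

SECONDS_PER_DAY = 86400


def days_until_expiry(not_after, now=None):
    """Days remaining until a certificate's notAfter time (negative if expired)."""
    expires_at = ssl.cert_time_to_seconds(not_after)  # e.g. "Jan  5 09:34:43 2018 GMT"
    if now is None:
        now = time.time()
    return (expires_at - now) / SECONDS_PER_DAY


def needs_renewal(not_after, threshold_days=30, now=None):
    """True when the certificate expires within threshold_days."""
    return days_until_expiry(not_after, now) < threshold_days


# This sample date is in the past, so the result is negative and renewal is due.
sample = "Jan  5 09:34:43 2018 GMT"
print(round(days_until_expiry(sample)), "days left; renew:", needs_renewal(sample))
```

The `now` parameter exists so the check can be evaluated against a fixed point in time, which keeps it easy to test.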

Enhanced Security and Centralized Control

While SSL termination moves decryption away from backend servers, it can also enhance security when implemented correctly:

- Centralized Security Policy Enforcement: The termination point can act as a central point for enforcing security policies, such as intrusion detection, firewalling, and DDoS mitigation, before traffic reaches the backend.

- Simplified Certificate Management: As mentioned, managing certificates on a single or few termination points is far easier than managing them across hundreds or thousands of individual application servers. This reduces the risk of expired or misconfigured certificates.

- PCI DSS Compliance: For organizations handling payment card information, SSL termination can simplify compliance with Payment Card Industry Data Security Standard (PCI DSS) requirements by concentrating TLS configuration (protocol versions, cipher suites, and certificates) at a controlled point that is easier to audit. The termination point and any backend that receives decrypted cardholder data must still be handled according to strict security protocols.

Cost Efficiency

By optimizing resource utilization and simplifying management, SSL termination can lead to cost savings:

- Reduced Hardware Requirements: Application servers can be less powerful if they don’t need to handle SSL processing, potentially reducing hardware costs.

- Lower Operational Costs: Centralized management and simplified configurations reduce the administrative overhead associated with SSL.

SSL Termination Architectures and Use Cases

SSL termination is implemented in various architectural patterns, each suited for different scenarios.

Load Balancers with SSL Offloading

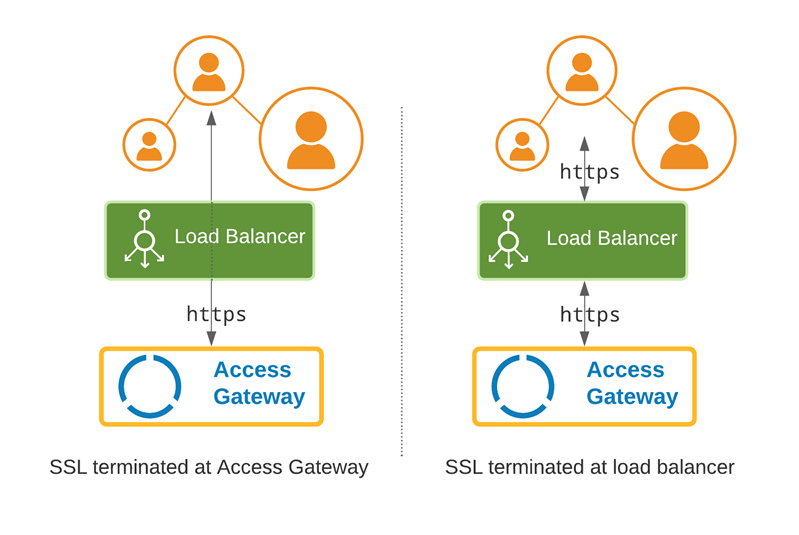

One of the most common implementations involves using hardware or software load balancers that have built-in SSL offloading capabilities. In this model:

- Client Request: A client’s request for a secure website (HTTPS) is directed to the load balancer.

- SSL Termination at Load Balancer: The load balancer establishes the SSL/TLS connection with the client, performs the handshake, decrypts the traffic, and terminates the SSL session.

- Health Checks and Distribution: The load balancer continuously health-checks the backend application servers and distributes the decrypted traffic to one of the healthy servers based on configured algorithms (e.g., round robin, least connections).

- Backend Server Interaction: The backend servers receive unencrypted HTTP requests, process them, and send back HTTP responses. The load balancer then encrypts each response with the session keys of the client’s SSL/TLS connection before returning it to the client.

Use Case: This is prevalent in large-scale web applications, e-commerce platforms, and any environment requiring high availability and efficient traffic distribution.
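The distribution step can be illustrated with a short sketch of the two algorithms named above, round robin and least connections, both skipping backends that failed their health check. The `Backend` class and the server names are hypothetical, and real load balancers track health and connection counts themselves rather than receiving them as plain fields.

```python
from dataclasses import dataclass


@dataclass
class Backend:
    name: str
    healthy: bool = True          # result of the most recent health check
    active_connections: int = 0   # connections currently being served


class RoundRobin:
    """Cycle through the backends in order, skipping unhealthy ones."""

    def __init__(self, backends):
        self.backends = list(backends)
        self._next = 0

    def pick(self):
        # Try each backend at most once per call.
        for _ in range(len(self.backends)):
            candidate = self.backends[self._next % len(self.backends)]
            self._next += 1
            if candidate.healthy:
                return candidate
        raise RuntimeError("no healthy backend available")


def least_connections(backends):
    """Pick the healthy backend with the fewest active connections."""
    healthy = [b for b in backends if b.healthy]
    if not healthy:
        raise RuntimeError("no healthy backend available")
    return min(healthy, key=lambda b: b.active_connections)


pool = [
    Backend("app-1", active_connections=2),
    Backend("app-2", healthy=False),
    Backend("app-3", active_connections=5),
]
balancer = RoundRobin(pool)
print([balancer.pick().name for _ in range(3)])  # ['app-1', 'app-3', 'app-1']
print(least_connections(pool).name)              # app-1
```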

Reverse Proxies

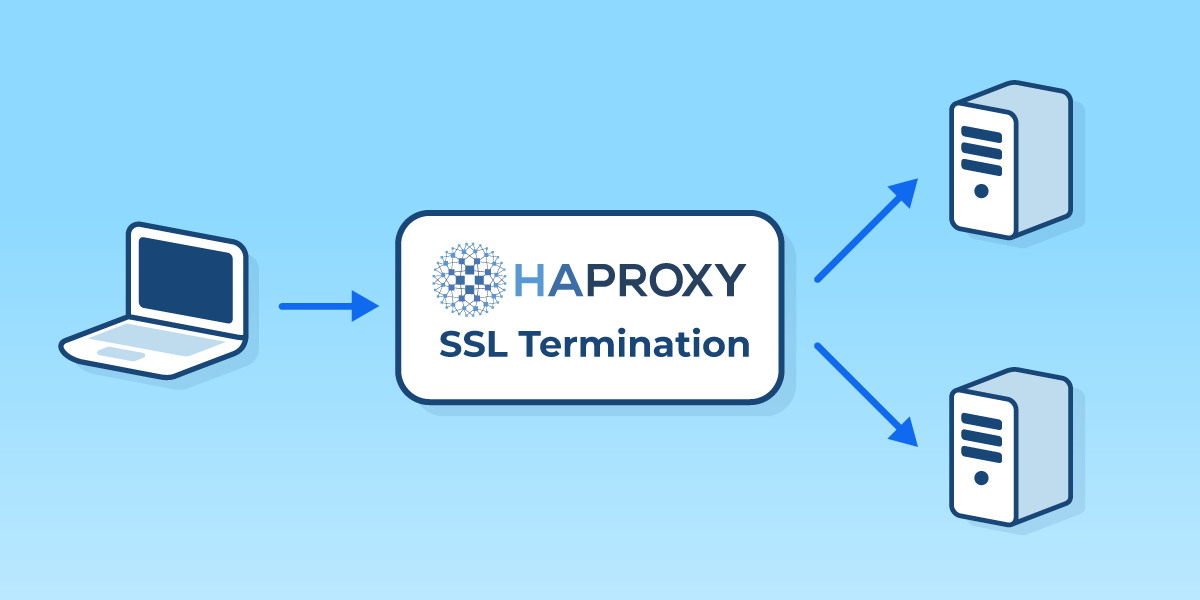

Reverse proxies, such as Nginx and HAProxy, are widely used for SSL termination, especially in microservices architectures and containerized environments. A reverse proxy sits in front of one or more web servers and intercepts client requests.

- Client Connection to Proxy: Clients connect to the reverse proxy over HTTPS.

- Proxy Handles SSL: The reverse proxy terminates the SSL connection, decrypts the traffic, and applies any configured security policies.

- Forwarding to Backend Services: The proxy then forwards the request to the appropriate backend service (which could be another microservice or a traditional web server) typically over HTTP, or re-encrypted with an internal certificate.

Use Case: Ideal for managing traffic to multiple microservices, providing a single point of entry for external traffic, and simplifying SSL certificate management across a distributed application landscape.
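Once the proxy has decrypted a request, it can read the path and choose a backend service. The following sketch shows one common approach, longest-prefix matching. The route table, hostnames, and ports are invented for the example, and real proxies such as Nginx or HAProxy express this in their own configuration languages rather than in code like this.

```python
# Hypothetical route table: path prefix -> (backend host, backend port).
ROUTES = {
    "/orders": ("orders.internal", 8080),
    "/users": ("users.internal", 8080),
    "/": ("web.internal", 8080),
}


def route(path, routes=ROUTES):
    """Return the backend for the longest matching path prefix, or None."""
    matches = [
        prefix for prefix in routes
        # Match the prefix exactly or at a "/" boundary, so "/ordersX"
        # does not fall under "/orders".
        if path == prefix or path.startswith(prefix.rstrip("/") + "/")
    ]
    if not matches:
        return None
    return routes[max(matches, key=len)]


print(route("/orders/42"))  # ('orders.internal', 8080)
print(route("/users"))      # ('users.internal', 8080)
print(route("/ordersX"))    # ('web.internal', 8080)
```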

API Gateways

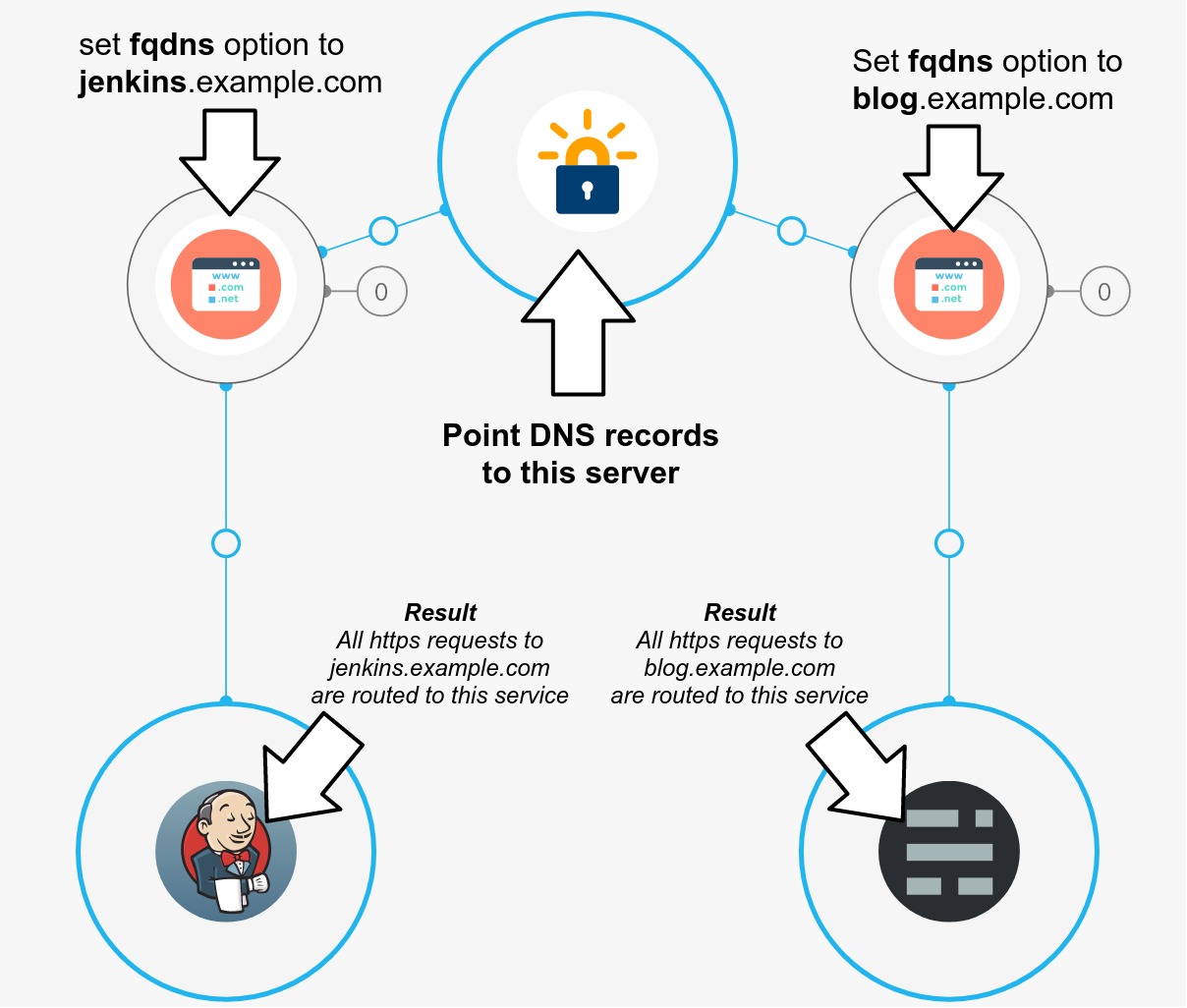

API gateways serve as a single entry point for all client requests to backend APIs. They often incorporate SSL termination as a core function.

- API Gateway as Termination Point: When an API client makes a request over HTTPS to the API gateway, the gateway terminates the SSL connection.

- Authentication and Authorization: The gateway can perform authentication and authorization before forwarding the request.

- Routing to Backend APIs: The decrypted request is then routed to the appropriate backend API service.

Use Case: Essential for managing, securing, and monitoring APIs, especially in microservices or cloud-native environments.
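The order of operations at the gateway (authenticate, authorize, then route) can be sketched as a single function. Everything concrete here is an assumption for illustration: the API key, the scope names, the route table, and the returned status codes. Production gateways typically validate signed tokens or consult an identity provider instead of a hard-coded key table.

```python
import hmac

# Hypothetical credentials: API key -> granted scopes.
API_KEYS = {
    "demo-key-123": {"orders:read"},
}

# Hypothetical routes: path prefix -> (required scope, upstream base URL).
GATEWAY_ROUTES = {
    "/orders": ("orders:read", "http://orders.internal:8080"),
    "/admin": ("admin:write", "http://admin.internal:8080"),
}


def handle(path, headers):
    """Return (status, upstream_url); upstream_url is None unless status is 200."""
    # 1. Authentication: extract the bearer token and compare in constant time.
    presented = headers.get("Authorization", "")
    token = presented[len("Bearer "):] if presented.startswith("Bearer ") else ""
    scopes = None
    for key, granted in API_KEYS.items():
        if hmac.compare_digest(key.encode(), token.encode()):
            scopes = granted
    if scopes is None:
        return 401, None

    # 2. Routing and authorization: find the route, then check its scope.
    for prefix, (required_scope, upstream) in GATEWAY_ROUTES.items():
        if path == prefix or path.startswith(prefix + "/"):
            if required_scope not in scopes:
                return 403, None
            return 200, upstream + path
    return 404, None


auth = {"Authorization": "Bearer demo-key-123"}
print(handle("/orders/7", auth))  # (200, 'http://orders.internal:8080/orders/7')
print(handle("/orders/7", {}))    # (401, None)
print(handle("/admin", auth))     # (403, None)
```

None of these checks would be possible on encrypted bytes, which is why termination happens before authentication and routing.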

Content Delivery Networks (CDNs)

CDNs also employ SSL termination at their edge locations, which are geographically distributed servers closer to end-users.

- Client Connects to Nearest Edge: A client connects to the nearest CDN edge server over HTTPS.

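- Edge Server Terminates SSL: The CDN edge server terminates the SSL connection, decrypts the request, and serves the content from its cache when a fresh copy is available.

- Origin Fetch (if needed): If the content is not cached, the CDN fetches it from the origin server and caches it for later requests. This fetch can be over HTTP or HTTPS, depending on configuration; because it usually crosses the public internet, HTTPS is the safer choice.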

Use Case: Enhances website performance by reducing latency for users worldwide and offloads SSL processing from origin servers.
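The edge behaviour described above (serve from cache when possible, otherwise fetch from the origin) can be sketched as a small time-limited cache. The `EdgeCache` class, the 60-second lifetime, and the stand-in origin function are assumptions for illustration; real CDNs honour HTTP caching headers and handle eviction, which this sketch omits.

```python
import time


class EdgeCache:
    """Serve cached content while it is fresh; otherwise fetch from the origin."""

    def __init__(self, fetch_from_origin, ttl_seconds=60, clock=time.monotonic):
        self._fetch = fetch_from_origin  # callable: path -> body
        self._ttl = ttl_seconds
        self._clock = clock              # injectable so expiry can be simulated
        self._store = {}                 # path -> (expires_at, body)

    def get(self, path):
        """Return (body, "HIT") from cache or (body, "MISS") after an origin fetch."""
        now = self._clock()
        entry = self._store.get(path)
        if entry is not None and entry[0] > now:
            return entry[1], "HIT"
        body = self._fetch(path)
        self._store[path] = (now + self._ttl, body)
        return body, "MISS"


def fake_origin(path):
    # Stand-in for an HTTP(S) request to the origin server.
    return ("content of " + path).encode()


edge = EdgeCache(fake_origin)
print(edge.get("/logo.png")[1])  # MISS
print(edge.get("/logo.png")[1])  # HIT
```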

Considerations and Potential Drawbacks

While SSL termination offers significant advantages, it’s essential to consider the implications and potential drawbacks.

Security Implications of Decryption

The primary concern is that the traffic exists in decrypted form at the termination point. If the termination point is compromised, an attacker can read or modify all traffic passing through it, and if traffic is forwarded unencrypted, anyone able to observe the internal network can read it in transit. Therefore, robust security measures are crucial for the termination infrastructure itself. This includes:

- Secure Network Segmentation: Isolating the termination point and backend servers in a secure, segmented network.

- Access Control: Implementing strict access controls and monitoring for the termination infrastructure.

- Internal Encryption: As mentioned, re-encrypting traffic between the termination point and backend servers can mitigate risks in the internal network.

Complexity in Network Design

Implementing SSL termination adds another layer to the network architecture, which can increase complexity. Careful planning and expertise are required to design, deploy, and manage these systems effectively.

Load Balancer/Proxy Performance Bottlenecks

If the SSL termination point becomes a bottleneck due to insufficient capacity, it can negate the performance benefits. Proper sizing and scaling of the termination infrastructure are critical.
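A rough sizing calculation can make this concrete. The sketch below estimates how many termination nodes are needed for a given peak rate of new TLS handshakes, keeping each node below a target utilisation and adding spare capacity for failures. The function and all numbers are hypothetical; real per-node capacity has to be measured for the specific hardware, key type, and cipher suites in use.

```python
def nodes_needed(peak_handshakes_per_s, handshakes_per_s_per_node,
                 target_utilisation_pct=60, spare_nodes=1):
    """Estimate the number of termination nodes for a peak handshake rate."""
    # Usable capacity per node is handshakes_per_s_per_node * pct / 100.
    # Integer ceiling division avoids floating-point rounding surprises.
    numerator = peak_handshakes_per_s * 100
    denominator = handshakes_per_s_per_node * target_utilisation_pct
    working_nodes = -(-numerator // denominator)
    return working_nodes + spare_nodes


# Made-up inputs: 12,000 new handshakes/s at peak, 5,000/s measured per node.
print(nodes_needed(12_000, 5_000))  # 5 (four working nodes plus one spare)
```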

Private Key Management

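The private key corresponding to the SSL certificate used at the termination point must be securely stored and managed. An attacker who obtains this private key can impersonate the service and, for connections that used a key exchange without forward secrecy (such as RSA key exchange), decrypt recorded traffic.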
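One basic safeguard is to verify that the key file is readable only by its owner. The sketch below performs that check on POSIX systems; the function name and the demonstration with a temporary file are assumptions for illustration, and file permissions are only one part of key protection (hardware security modules and secret managers go further).

```python
import os
import stat
import tempfile


def key_file_problems(path):
    """Return a list of permission problems for a private key file (POSIX only)."""
    info = os.stat(path)
    problems = []
    if not stat.S_ISREG(info.st_mode):
        problems.append("not a regular file")
    # Any read, write, or execute bit for group or others is too permissive.
    if info.st_mode & (stat.S_IRWXG | stat.S_IRWXO):
        problems.append("accessible by group or others")
    return problems


# Demonstration with a throwaway file standing in for a real key.
with tempfile.NamedTemporaryFile(delete=False) as handle:
    demo_path = handle.name
os.chmod(demo_path, 0o600)
strict = key_file_problems(demo_path)
os.chmod(demo_path, 0o644)
loose = key_file_problems(demo_path)
os.remove(demo_path)

print(strict)  # []
print(loose)   # ['accessible by group or others']
```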

The Future of SSL and Termination

As the internet continues to evolve, so too will the methods for securing and managing traffic. HTTP/3 uses QUIC, which runs over UDP, as its transport layer, whereas HTTP/1.1 and HTTP/2 rely on TCP. QUIC incorporates TLS 1.3 from the outset and reduces connection establishment time by combining the transport and cryptographic handshakes. While the underlying principles of encryption remain, a termination point must speak QUIC itself to terminate HTTP/3, so the specific implementation of termination will need to adapt to these new protocols.

Furthermore, the rise of edge computing and decentralized architectures may lead to more distributed forms of SSL termination, where processing occurs closer to the data source or the user, further optimizing performance and security.

In conclusion, SSL termination is a critical technique for optimizing the performance, scalability, and manageability of secure web applications. By strategically offloading the computationally intensive task of SSL/TLS encryption and decryption, organizations can achieve faster response times, handle increased traffic loads, and simplify certificate management, all while maintaining a secure communication channel for their users. Understanding its mechanics, benefits, and potential drawbacks is essential for any IT professional involved in modern web infrastructure.