The Fundamental Concept of Geometric Rays

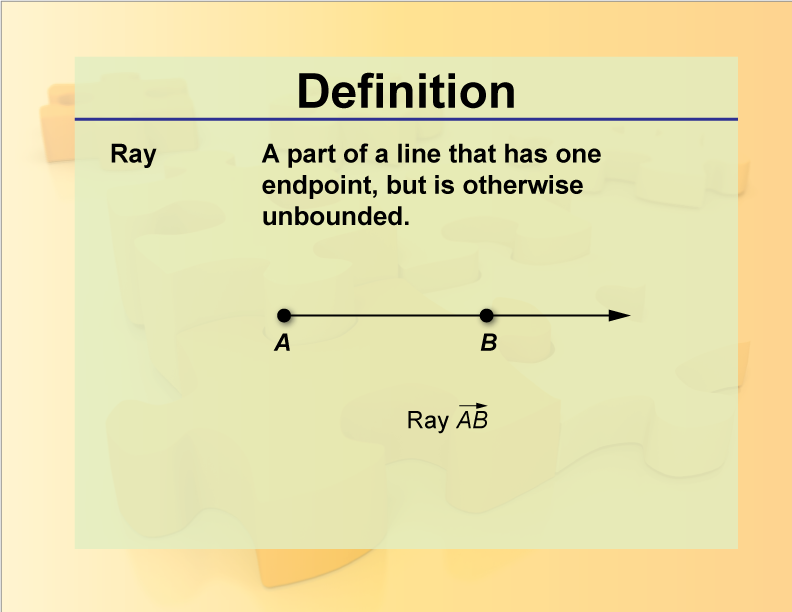

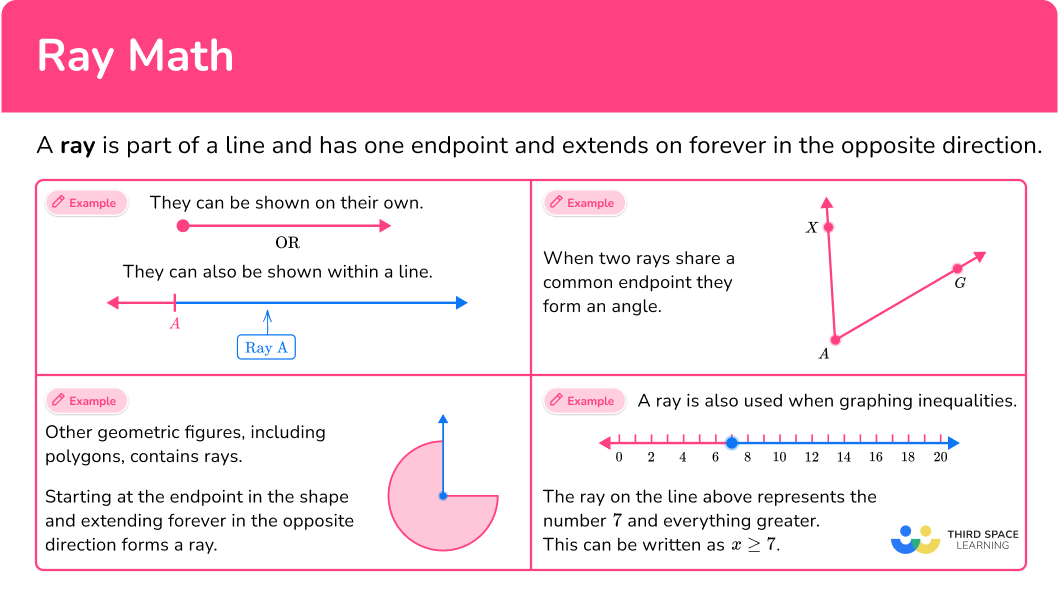

In geometry, a “ray” is a foundational concept: the part of a line that starts at a single fixed point and extends infinitely in one direction. Unlike a line segment, which has two endpoints, or a line, which extends infinitely in both directions, a ray has a distinct origin and an unending trajectory. This characteristic makes it an indispensable element in defining directions, angles, and projections, and a basis for understanding spatial relationships.

Imagine a flashlight beam; it originates from the flashlight and projects light outwards in a specific direction, seemingly without end. This visual serves as an excellent analogy for a geometric ray. It has a starting point, known as its endpoint or origin, and continues indefinitely along a straight path. In mathematical notation, a ray is often denoted by its endpoint and another point through which it passes. For instance, ray AB would start at point A and pass through point B, extending beyond B. The order of the points is crucial, as ray AB is distinct from ray BA (which would start at B and pass through A).

The concept of a ray is pivotal in defining angles, where two rays share a common endpoint (the vertex) to form an angle. It also underpins the understanding of vectors, which, while having both magnitude and direction, are fundamentally rooted in the directional aspect that a ray encapsulates. This simple yet profound geometric element, therefore, transcends theoretical mathematics, finding critical applications in various advanced technological domains.

Defining a Ray

Formally, a ray is a part of a line that is bounded on one side but unbounded on the other. It is determined by an ordered pair of distinct points, say (A, B), where A is the endpoint (origin) and B is any other point on the ray, indicating its direction. The ray comprises point A together with all points on the line through A and B that lie on the same side of A as B (B itself included). This precise definition ensures clarity when discussing directional properties in complex systems.

Rays in the Cartesian Coordinate System

When working within a Cartesian coordinate system, rays can be represented using algebraic equations. A ray originating from a point $P_0(x_0, y_0, z_0)$ and extending in the direction of a vector $\vec{d} = (d_x, d_y, d_z)$ can be parameterized as $P(t) = P_0 + t\vec{d}$, where $t \ge 0$. Here, $t$ is a scalar parameter that scales the direction vector, ensuring the ray only extends from $P_0$ in the specified direction. This mathematical representation is crucial for computational geometry, allowing algorithms to precisely define and manipulate directional paths, which is fundamental for many tech applications, from graphics rendering to autonomous navigation.
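As a minimal sketch of this parameterization, the ray can be stored as an origin and a direction and evaluated at any $t \ge 0$. The class name `Ray` and the method `point_at` are illustrative choices, not part of any particular library.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class Ray:
    """A ray P(t) = origin + t * direction, defined for t >= 0."""
    origin: Vec3
    direction: Vec3

    def point_at(self, t: float) -> Vec3:
        # A negative t would lie behind the origin, which is not on the ray.
        if t < 0:
            raise ValueError("t must be >= 0 for a point on the ray")
        ox, oy, oz = self.origin
        dx, dy, dz = self.direction
        return (ox + t * dx, oy + t * dy, oz + t * dz)


ray = Ray(origin=(1.0, 2.0, 0.0), direction=(0.0, 0.0, 1.0))
print(ray.point_at(0.0))  # the origin itself
print(ray.point_at(2.5))  # 2.5 direction-lengths along the ray
```

Rejecting negative `t` is what distinguishes the ray from the full line through the same two points.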

Rays as the Building Blocks of Sensor Perception in Tech & Innovation

The abstract geometric concept of a ray transforms into a tangible, indispensable tool in modern technology, particularly within sensor perception. Autonomous systems, mapping technologies, and remote sensing platforms inherently rely on the principle of sending out and receiving ‘rays’ of energy – be it light, sound, or radio waves – to build a comprehensive understanding of their environment. The interpretation of these emitted and reflected rays enables these systems to perceive depth, identify objects, and construct detailed 3D models of the world.

LiDAR and Radar: Emitting and Receiving Rays for 3D Mapping

Perhaps the most direct application of geometric rays in technology is found in LiDAR (Light Detection and Ranging) and radar systems. LiDAR sensors emit up to millions of laser pulses (rays of light) per second. Each pulse travels outward, strikes an object or surface, and reflects back to the sensor. Because the pulse covers the sensor-to-object distance twice, the distance is half the round-trip time multiplied by the pulse’s known speed (the speed of light). The direction of the emitted ray is also known, enabling the system to map the 3D coordinates (x, y, z) of each point where a ray made contact.
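The time-of-flight calculation can be sketched as follows. The function names and the angle convention (azimuth in the x-y plane from +x, elevation up from that plane) are assumptions made for illustration; real sensors document their own conventions.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second, in vacuum


def range_from_time_of_flight(round_trip_seconds: float) -> float:
    """Distance to the target; the pulse travels out and back, hence / 2."""
    return SPEED_OF_LIGHT * round_trip_seconds / 2.0


def lidar_point(round_trip_seconds: float, azimuth_rad: float, elevation_rad: float):
    """Convert one return into sensor-frame (x, y, z) coordinates.

    Assumed convention: azimuth is measured in the x-y plane from +x,
    elevation is measured upward from the x-y plane.
    """
    r = range_from_time_of_flight(round_trip_seconds)
    x = r * math.cos(elevation_rad) * math.cos(azimuth_rad)
    y = r * math.cos(elevation_rad) * math.sin(azimuth_rad)
    z = r * math.sin(elevation_rad)
    return (x, y, z)


# A return after 200 nanoseconds corresponds to a target roughly 30 m away.
print(range_from_time_of_flight(200e-9))
print(lidar_point(200e-9, math.radians(90.0), 0.0))
```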

The aggregated data from billions of these individual “ray-return” measurements creates a dense point cloud, a highly accurate 3D representation of the environment. This point cloud data is invaluable for various applications, including high-definition mapping for autonomous vehicles, precision agriculture, urban planning, and environmental monitoring. Similarly, radar (Radio Detection and Ranging) operates on the same principle but uses radio waves instead of light, offering advantages in adverse weather conditions where light penetration is limited. Both technologies fundamentally leverage the geometric properties of rays – their origin, direction, and travel time – to construct a spatial understanding.

Computer Vision and Photogrammetry: Interpreting Light Rays

While LiDAR and radar actively emit rays, computer vision and photogrammetry are passive systems that interpret ambient light rays. Cameras capture light rays reflecting off objects in the scene, projecting them onto a 2D image sensor. Each pixel in an image represents the intensity and color of the light ray that struck that specific point on the sensor. The challenge and innovation lie in reconstructing the 3D world from these 2D projections.

Photogrammetry, for instance, uses multiple overlapping images taken from different viewpoints (often by drones) to create 3D models. The core principle is triangulation: if a specific point in the 3D world appears in two or more images, the system can trace the light ray for each observation, running from the 3D point through the camera’s optical center to the pixel where it landed. Reversing each ray (starting at the optical center and passing back out through the pixel) and finding where the rays from different cameras meet yields the 3D coordinates of that point. Because of measurement noise the rays rarely intersect exactly, so in practice the point closest to all of them is used. This process is essentially solving for the intersection of multiple geometric rays in space, enabling the creation of detailed 3D maps, digital elevation models, and virtual reconstructions.
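A simplified two-camera version of this triangulation is sketched below. It assumes each camera's optical center and the viewing direction toward the feature are already known in a shared world frame, and it uses the midpoint of the shortest segment between the two rays as the estimate. Production photogrammetry pipelines typically refine many rays jointly; the function name `triangulate_midpoint` is hypothetical.

```python
def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def triangulate_midpoint(o1, d1, o2, d2):
    """Estimate a 3D point from two rays o1 + s*d1 and o2 + t*d2.

    Returns the midpoint of the shortest segment joining the two lines.
    This sketch does not check that s and t are non-negative.
    """
    w = [p - q for p, q in zip(o1, o2)]
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w), _dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are (nearly) parallel; no unique closest point")
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom
    p1 = [o + s * k for o, k in zip(o1, d1)]
    p2 = [o + t * k for o, k in zip(o2, d2)]
    return tuple((x + y) / 2.0 for x, y in zip(p1, p2))


# Two cameras one metre apart, both observing a point at (0.5, 0, 2).
point = triangulate_midpoint(
    (0.0, 0.0, 0.0), (0.5, 0.0, 2.0),
    (1.0, 0.0, 0.0), (-0.5, 0.0, 2.0),
)
print(point)
```

The parallel-ray check matters in practice: two views with almost no baseline between them give rays that barely converge, and the depth estimate becomes unreliable.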

Autonomous Navigation and Pathfinding: Leveraging Rays for Safe Operation

The ability to perceive and understand the environment through rays is critical for autonomous systems to navigate safely and effectively. Drones, autonomous vehicles, and robotic platforms constantly “sense” their surroundings to detect obstacles, plan optimal paths, and maintain specific relative positions to targets. This perception often involves a sophisticated interplay of various sensor data, all of which boil down to the analysis of directional information provided by geometric rays.

Obstacle Avoidance and Line-of-Sight Calculations

For an autonomous drone, collision avoidance is paramount. Sensors like ultrasonic, infrared, and computer vision cameras continuously scan the environment for potential hazards. Imagine an ultrasonic sensor emitting a “ray” of sound. If that sound ray encounters an obstacle, it reflects back, informing the drone of the obstacle’s presence and distance. Similarly, computer vision algorithms can analyze image streams to identify objects and calculate their depth, effectively determining if they lie within the drone’s projected flight path.

Crucially, autonomous systems perform constant “line-of-sight” calculations. A virtual ray is projected from the drone’s current position in its intended direction of travel. If this ray intersects with a perceived obstacle within a certain safety margin, the system triggers an avoidance maneuver. This involves re-calculating the ray’s path to a new, clear direction or adjusting altitude to fly over or under the obstacle. This real-time processing of potential ray intersections ensures the drone maintains a safe trajectory, preventing collisions and enabling reliable autonomous operation.
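The line-of-sight check described above can be sketched with a ray-sphere test. Modelling each obstacle as a sphere and enlarging its radius by the safety margin is a simplifying assumption for illustration, and the function names are hypothetical.

```python
import math


def first_hit_distance(origin, direction, center, radius, safety_margin=0.0):
    """Distance along the ray to a sphere inflated by the margin, or None."""
    norm = math.sqrt(sum(c * c for c in direction))
    if norm == 0.0:
        raise ValueError("direction must be non-zero")
    d = [c / norm for c in direction]
    r = radius + safety_margin
    oc = [c - o for c, o in zip(center, origin)]
    # Parameter of the point on the line closest to the sphere centre.
    t_closest = sum(a * b for a, b in zip(oc, d))
    dist_sq = sum(a * a for a in oc) - t_closest * t_closest
    if dist_sq > r * r:
        return None  # the ray passes outside the inflated sphere
    half_chord = math.sqrt(r * r - dist_sq)
    if t_closest + half_chord < 0.0:
        return None  # the sphere lies entirely behind the origin
    # If the origin is already inside the inflated sphere, report distance 0.
    return max(t_closest - half_chord, 0.0)


def needs_avoidance(origin, direction, center, radius, safety_margin, lookahead):
    """True if the inflated obstacle is hit within the look-ahead distance."""
    hit = first_hit_distance(origin, direction, center, radius, safety_margin)
    return hit is not None and hit <= lookahead


print(first_hit_distance((0, 0, 0), (1, 0, 0), (10, 0, 0), 1.0))
print(needs_avoidance((0, 0, 0), (1, 0, 0), (10, 1.5, 0), 1.0, 1.0, 15.0))
```

In the second call the ray would miss the bare obstacle by half a metre, but the one-metre safety margin turns it into a hit, which is the behaviour the text describes.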

AI Follow Mode and Relative Position Tracking

AI Follow Mode, a popular feature in many consumer and industrial drones, is another prime example of ray-based technology. When activated, the drone’s camera (and sometimes other sensors) locks onto a designated subject (e.g., a person, vehicle) and maintains a constant relative position and distance. This involves continuously “drawing” a virtual ray from the drone’s camera lens to the center of the tracked subject.

As the subject moves, the drone’s onboard AI algorithms constantly monitor changes in the direction of this virtual ray and the perceived distance. If the ray’s direction shifts significantly, or the distance changes, the drone adjusts its position and orientation to re-establish the desired configuration. This continuous process of tracking and adjustment relies heavily on the drone’s ability to:

- Identify the target (often using computer vision algorithms that detect features along the ray).

- Calculate the angular deviation of the target from the camera’s center (the direction of the ray).

- Estimate the distance to the target (using techniques like stereo vision or focus analysis, which involve interpreting light rays).

The drone then executes precise flight maneuvers to keep the target within the desired frame, essentially maintaining a dynamic, virtual geometric ray connection.
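The angular-deviation step in the list above can be illustrated with an ideal pinhole camera model. The parameter names (`cx`, `cy` for the principal point, `fx`, `fy` for focal lengths in pixels) follow common computer vision usage but are assumptions here, and lens distortion is ignored.

```python
import math


def angular_offset(u, v, cx, cy, fx, fy):
    """Angles (radians) between the optical axis and the ray through pixel (u, v).

    Each image axis is treated independently. Positive yaw means the target is
    right of centre; positive pitch means it is below centre, because image
    rows grow downward.
    """
    yaw = math.atan2(u - cx, fx)
    pitch = math.atan2(v - cy, fy)
    return yaw, pitch


# Target detected 200 px right of centre in a 1280x720 image, focal length 800 px.
yaw, pitch = angular_offset(840, 360, 640, 360, 800, 800)
print(math.degrees(yaw), math.degrees(pitch))
```

A follow controller could then turn the drone or gimbal to drive both angles back toward zero, which keeps the virtual ray aligned with the optical axis.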

Remote Sensing and Environmental Analysis: Rays Beyond Visible Light

The application of geometric rays extends significantly into remote sensing and environmental analysis, where information is gathered about the Earth’s surface and atmosphere without direct contact. This field utilizes a broad spectrum of electromagnetic radiation, from visible light to infrared, microwave, and radio waves, all of which travel as rays, each carrying unique information about the materials they interact with.

Spectral Analysis and Multispectral Imaging

Multispectral and hyperspectral imaging sensors mounted on drones or satellites capture reflected or emitted energy across multiple spectral bands: typically a handful of discrete bands for multispectral sensors, and dozens or even hundreds of narrow, contiguous bands for hyperspectral ones. Each band essentially represents the intensity of “rays” of specific wavelengths reflecting from the Earth’s surface. Different materials (such as healthy vegetation, stressed crops, water, concrete, or specific minerals) absorb and reflect electromagnetic radiation differently across this spectrum.

By analyzing the unique “spectral signature” (the pattern of absorption and reflection across multiple wavelengths) of the rays returning from a particular point on the ground, scientists can identify and classify surface features, assess vegetation health, detect pollution, and map geological formations. For example, a healthy plant reflects highly in the near-infrared (NIR) spectrum, while stressed plants show a decrease in NIR reflection. This insight, derived from interpreting the varying intensity of different spectral “rays,” is crucial for precision agriculture, forestry, and environmental monitoring.
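One widely used way to turn the NIR observation into a number is the Normalized Difference Vegetation Index (NDVI), which compares near-infrared and red reflectance. The text above does not name this index, so treat it as an illustrative choice; the reflectance values below are made up for the example.

```python
def ndvi(nir: float, red: float) -> float:
    """Normalized Difference Vegetation Index: (NIR - red) / (NIR + red)."""
    total = nir + red
    if total == 0.0:
        raise ValueError("NIR and red reflectance cannot both be zero")
    return (nir - red) / total


# Hypothetical reflectance values (fractions between 0 and 1).
healthy = ndvi(nir=0.50, red=0.10)
stressed = ndvi(nir=0.30, red=0.20)
print(healthy, stressed)
```

A drop in NIR reflectance lowers the index, so the stressed sample scores below the healthy one.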

Mapping Topography and Volumetric Changes

Beyond classification, remote sensing rays are used to precisely map topography and monitor volumetric changes over time. Synthetic Aperture Radar (SAR) interferometry, for instance, uses radar rays to detect tiny shifts in the Earth’s surface. By analyzing the phase difference between two received echoes, recorded either from slightly different positions (which reveals topography) or at different times (which reveals surface motion), scientists can measure ground deformation caused by earthquakes, volcanic activity, or subsidence with millimeter- to centimeter-level accuracy.
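The link between phase difference and ground motion can be sketched with the standard repeat-pass relation, in which a phase change of $2\pi$ corresponds to half a wavelength of motion along the radar's line of sight. The sketch assumes the phase has already been unwrapped and corrected for topography and atmosphere; sign conventions differ between processing tools, and the wavelength used is only an example value.

```python
import math


def line_of_sight_displacement(phase_diff_rad: float, wavelength_m: float) -> float:
    """Displacement along the line of sight for an unwrapped phase difference.

    The factor 4*pi (rather than 2*pi) reflects the two-way path of the radar ray.
    """
    return wavelength_m * phase_diff_rad / (4.0 * math.pi)


# Example: a C-band radar with an assumed wavelength of about 5.6 cm.
wavelength = 0.056
print(line_of_sight_displacement(2.0 * math.pi, wavelength))  # one full fringe
print(line_of_sight_displacement(math.pi / 4.0, wavelength))  # a small fraction
```

Because one full fringe at this wavelength is only about 2.8 cm, small fractions of a cycle correspond to millimeter-scale motion.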

Similarly, repeat LiDAR scans over a period can quantify volumetric changes in sand dunes, glaciers, or mining stockpiles. Each LiDAR ray provides a precise elevation measurement, and by comparing point clouds from different epochs, the change in volume can be accurately calculated. This makes ray-based remote sensing an indispensable tool for understanding dynamic Earth processes and managing natural resources.
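A minimal sketch of the volume comparison follows, assuming both point clouds have already been resampled onto the same regular elevation grid. The gridding step is not shown, and the function name and the use of `None` for empty cells are illustrative choices.

```python
def volume_change(dem_before, dem_after, cell_size):
    """Net volume change between two elevation grids of identical shape.

    Each grid is a list of rows of elevations in metres; cell_size is the
    side length of a square cell in metres. Cells marked None are skipped.
    """
    if len(dem_before) != len(dem_after):
        raise ValueError("grids must have the same number of rows")
    cell_area = cell_size * cell_size
    total = 0.0
    for row_before, row_after in zip(dem_before, dem_after):
        if len(row_before) != len(row_after):
            raise ValueError("grids must have the same number of columns")
        for z_before, z_after in zip(row_before, row_after):
            if z_before is None or z_after is None:
                continue  # no LiDAR return in this cell for one epoch
            total += (z_after - z_before) * cell_area
    return total


before = [[1.0, 1.0], [1.0, 1.0]]
after = [[1.5, 1.0], [1.0, 2.0]]
print(volume_change(before, after, cell_size=2.0))  # cubic metres gained
```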

The Future of Ray-Based Technologies in Autonomous Systems

The reliance on geometric rays in advanced technology is not merely a present reality but a fundamental pillar for future innovations in autonomous systems. As algorithms become more sophisticated and sensor technologies continue to miniaturize and improve, the capabilities derived from processing ray information will expand considerably.

Enhanced Real-time Perception

Future autonomous systems will boast even more refined real-time perception capabilities. This involves fusing data from multiple ray-based sensors (LiDAR, radar, cameras, ultrasonic) to create a hyper-accurate, low-latency 3D understanding of the environment. Imagine drones navigating complex indoor environments or dense urban canyons at high speeds, distinguishing between static obstacles, dynamic pedestrians, and environmental changes with unparalleled precision. This will be achieved by algorithms that can process billions of ray interactions per second, predicting outcomes and making instantaneous decisions. Ray tracing, historically a graphics rendering technique, is also finding new life in real-time simulation for autonomous systems, allowing for predictive environmental understanding.

Advanced Simulation and Digital Twins

The concept of “digital twins” – virtual replicas of physical assets, processes, or environments – is gaining traction across industries. Geometric rays are at the heart of building and interacting with these digital twins. For instance, in an industrial setting, a drone equipped with LiDAR could repeatedly scan a factory floor, creating a digital twin that precisely mirrors its physical counterpart. Engineers could then simulate modifications, test new layouts, or even train AI agents within this virtual environment, where virtual “rays” interact with the digital objects, mirroring real-world physics and sensor responses. This allows for risk-free experimentation and optimization, drastically reducing development costs and accelerating innovation.

Moreover, advanced simulations for autonomous flight and vehicle development rely on ray-casting techniques to model sensor performance in virtual environments. This allows developers to test millions of scenarios, including edge cases and hazardous conditions, by simulating how sensor “rays” would interact with a digitally rendered world. The precision and versatility of geometric ray concepts will continue to drive the evolution of smart, autonomous, and highly connected technological ecosystems.
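To make the ray-casting idea concrete, here is a small 2D sketch of a simulated range scanner: beams are cast at evenly spaced angles and each reports the distance to the nearest wall segment. The flat 2D world, the segment representation, and the function names are all assumptions for illustration; real simulators model 3D geometry, noise, and material response.

```python
import math


def ray_segment_distance(origin, direction, seg_a, seg_b):
    """Distance along the ray to segment a-b, or None if the ray misses it."""
    ex, ey = seg_b[0] - seg_a[0], seg_b[1] - seg_a[1]
    ax, ay = seg_a[0] - origin[0], seg_a[1] - origin[1]
    denom = direction[0] * ey - direction[1] * ex  # 2D cross product d x e
    if abs(denom) < 1e-12:
        return None  # ray is parallel to the segment
    t = (ax * ey - ay * ex) / denom  # position along the ray
    u = (ax * direction[1] - ay * direction[0]) / denom  # position along the segment
    if t >= 0.0 and 0.0 <= u <= 1.0:
        return t
    return None


def simulate_scan(origin, segments, n_beams, max_range):
    """Cast n_beams evenly spaced rays and return one range reading per beam."""
    ranges = []
    for k in range(n_beams):
        angle = 2.0 * math.pi * k / n_beams
        direction = (math.cos(angle), math.sin(angle))  # unit length
        best = max_range
        for seg_a, seg_b in segments:
            hit = ray_segment_distance(origin, direction, seg_a, seg_b)
            if hit is not None and hit < best:
                best = hit
        ranges.append(best)
    return ranges


walls = [((2.0, -1.0), (2.0, 1.0))]  # a single wall two metres ahead
print(simulate_scan((0.0, 0.0), walls, n_beams=4, max_range=10.0))
```

Only the forward beam strikes the wall; the other three report the maximum range, which is how a simulated sensor signals that nothing was detected.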