Unlocking Botanical Insights Through Advanced Drone Technology

In the context of modern technological innovation, the seemingly simple question “what are the parts of plants?” extends beyond its basic biological definition to the many features, structures, and physiological states of vegetation that can now be analyzed from the air. Drone technologies have greatly expanded our capacity to observe, measure, and understand these plant “parts” at a level of detail, scale, and efficiency that ground surveys alone rarely achieve. No longer confined to laborious manual observation, the study of plant components, from individual leaves to entire canopies, increasingly relies on specialized sensors, artificial intelligence (AI), and autonomous flight systems, with applications across agriculture, forestry, environmental science, and ecological research. Drones serve as versatile sensing platforms that change how we observe and manage the botanical world, providing data that informs precision management and sustainable practices.

Remote Sensing and Spectral Analysis of Vegetation

The ability to analyze the specific “parts of plants”—whether it be their overall vigor, hydration levels, or disease presence—is dramatically enhanced by remote sensing technologies integrated into drones. These systems move beyond what the human eye can perceive, offering a comprehensive view of plant health and structure.

Beyond the Visible: Multispectral and Hyperspectral Imaging

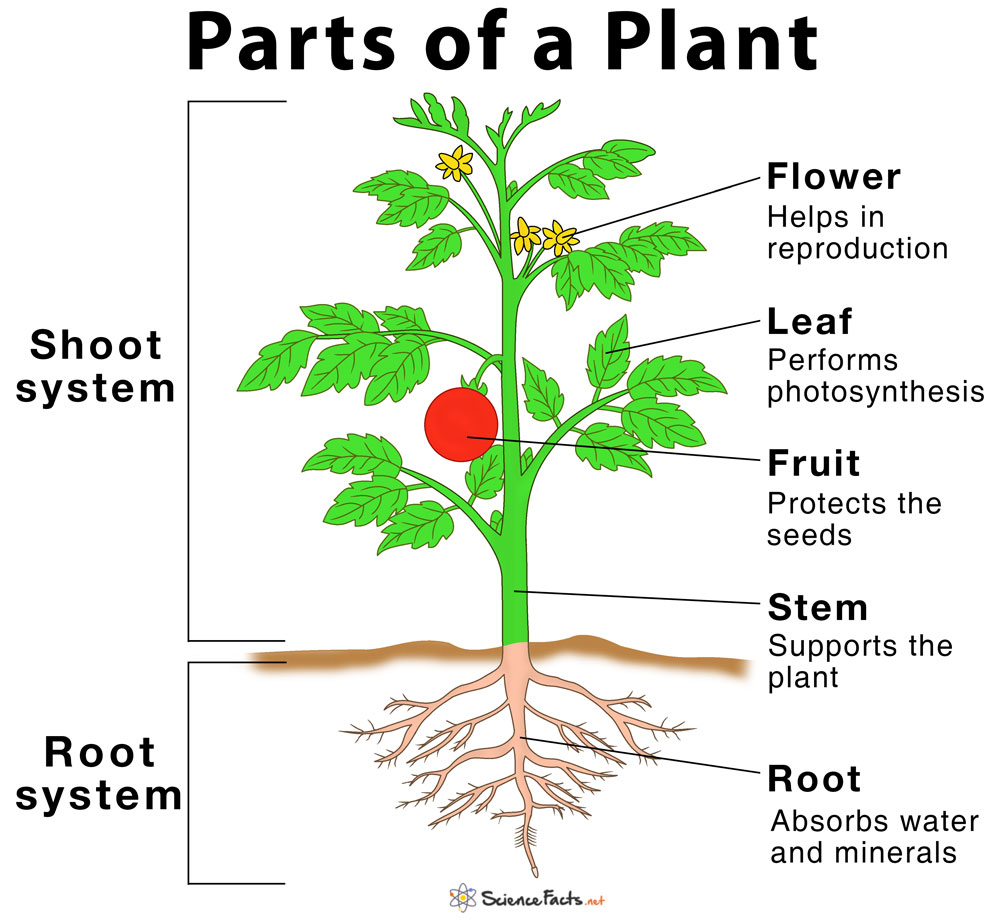

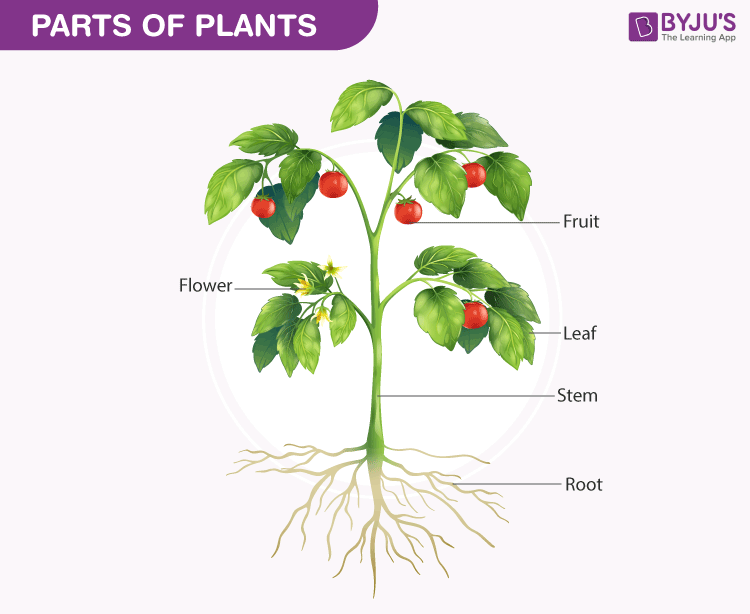

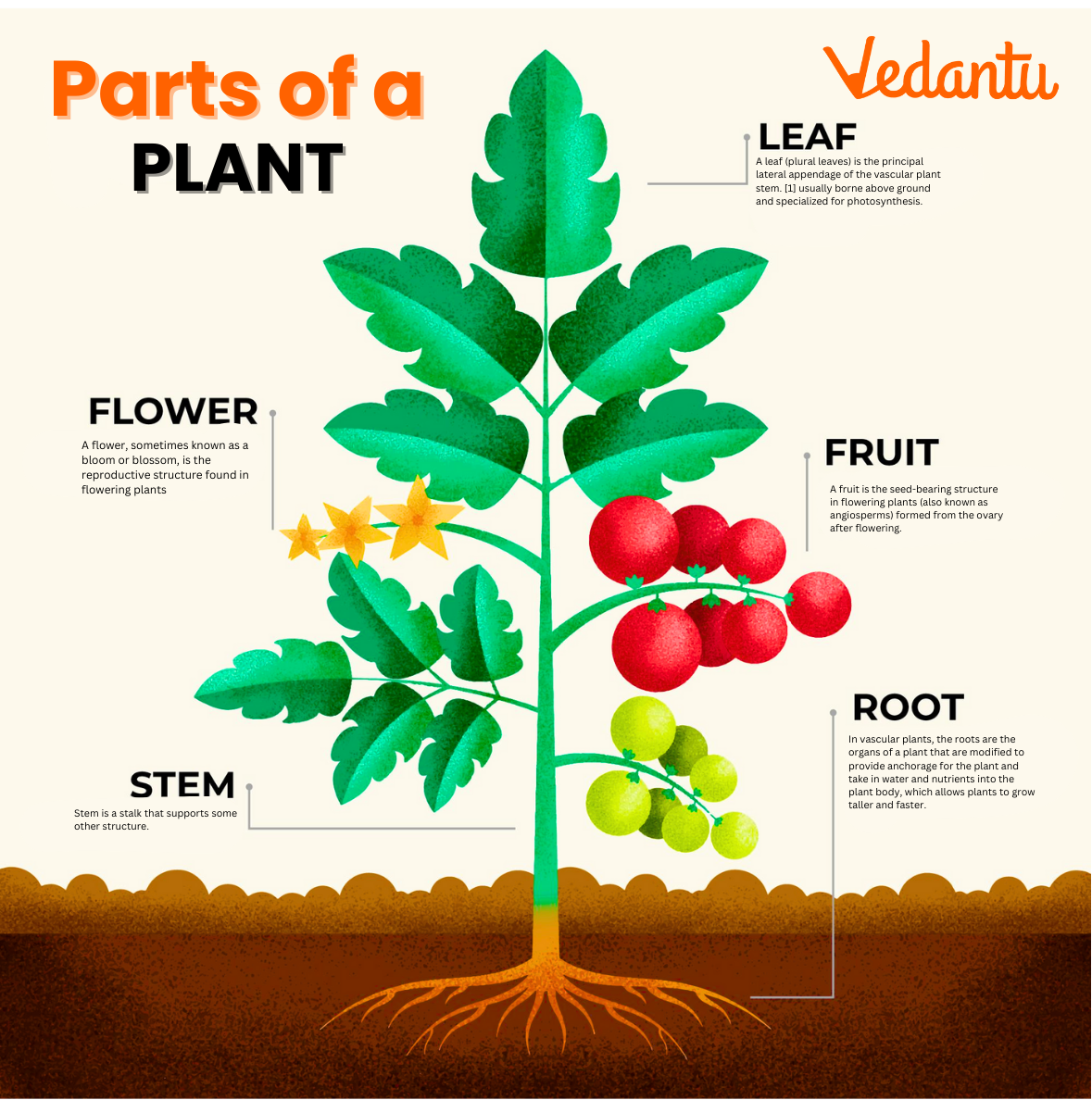

Multispectral and hyperspectral sensors are at the forefront of this shift. Standard RGB cameras capture data in three broad red, green, and blue bands. Multispectral sensors instead record a handful of discrete bands, typically including red-edge and near-infrared (NIR) wavelengths, while hyperspectral sensors record tens to hundreds of narrow, contiguous bands, with some instruments extending into the short-wave infrared (SWIR). Different “parts of plants,” such as leaves, stems, flowers, or fruits, exhibit distinct spectral signatures based on their biochemical composition, cellular structure, and physiological condition. For instance, healthy, photosynthetically active leaves typically absorb strongly in the red band (due to chlorophyll) and reflect strongly in the NIR band (due to their internal leaf structure).

By analyzing these spectral characteristics, scientists and agronomists can derive vegetation indices such as the Normalized Difference Vegetation Index (NDVI), computed as (NIR - Red) / (NIR + Red), and the Red-Edge Normalized Difference Vegetation Index (NDRE), computed as (NIR - Red Edge) / (NIR + Red Edge). These indices quantify aspects of plant health and vigor, helping distinguish the “parts” of a plant that are thriving from those under stress. NDVI correlates well with green biomass and leaf area, indicating overall plant greenness and density, but it tends to saturate in dense canopies. NDRE, by contrast, remains more sensitive to chlorophyll content in the middle to late growth stages, when canopies are dense; because red-edge light is absorbed less strongly than red light, it can reveal differences in chlorophyll and nitrogen status, including in lower canopy layers, that NDVI tends to miss. These techniques allow rapid, non-destructive assessment of large areas, enabling early detection of nutrient deficiencies, pest infestations, or disease outbreaks that might affect specific “parts” of a crop or forest.
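
Both indices are per-pixel normalized differences between two bands. The Python sketch below computes them from small reflectance grids; it assumes the bands are already radiometrically calibrated to reflectance and co-registered, and the function names and sample values are illustrative rather than taken from any particular processing package.

```python
def normalized_difference(a: float, b: float) -> float:
    """Return (a - b) / (a + b), or 0.0 when both bands are zero."""
    total = a + b
    if total == 0:
        return 0.0
    return (a - b) / total


def ndvi(nir: float, red: float) -> float:
    """NDVI = (NIR - Red) / (NIR + Red)."""
    return normalized_difference(nir, red)


def ndre(nir: float, red_edge: float) -> float:
    """NDRE = (NIR - RedEdge) / (NIR + RedEdge)."""
    return normalized_difference(nir, red_edge)


def index_map(nir_band, other_band, index_fn):
    """Apply a per-pixel index to two same-shaped 2D reflectance grids."""
    return [
        [index_fn(n, o) for n, o in zip(nir_row, other_row)]
        for nir_row, other_row in zip(nir_band, other_band)
    ]


if __name__ == "__main__":
    # Hypothetical 2x2 reflectance values (0..1) for three bands.
    nir = [[0.50, 0.45], [0.30, 0.10]]
    red = [[0.05, 0.06], [0.10, 0.08]]
    red_edge = [[0.25, 0.24], [0.20, 0.09]]
    print(index_map(nir, red, ndvi))
    print(index_map(nir, red_edge, ndre))
```

Low values in the resulting grids point to sparse or stressed vegetation worth a closer look.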

Thermal Imaging for Physiological Stress Detection

Thermal imaging adds a further layer of insight into the “parts of plants.” Thermal cameras detect long-wave infrared radiation emitted by surfaces and translate it into temperature maps. For plants, these temperature differences often indicate physiological state. Plants regulate their temperature largely through transpiration, the release of water vapor through tiny pores called stomata on their leaves. When plants are well hydrated, they transpire actively, and evaporative cooling keeps leaf surfaces cooler. Conversely, when plants experience water stress (drought), their stomata close to conserve water, reducing transpiration and causing leaf temperatures to rise.
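
One established way to turn this reasoning into a number is the Crop Water Stress Index (CWSI), which scales canopy temperature between a fully transpiring (wet) reference and a non-transpiring (dry) reference. The sketch below uses the simple empirical form; the reference temperatures in the example are hypothetical, and in practice they come from reference surfaces or energy-balance models.

```python
def crop_water_stress_index(canopy_c: float, wet_ref_c: float, dry_ref_c: float) -> float:
    """Empirical CWSI = (Tc - Twet) / (Tdry - Twet), clipped to [0, 1].

    Tc   - measured canopy temperature
    Twet - temperature of a fully transpiring (well-watered) reference
    Tdry - temperature of a non-transpiring (stomata closed) reference
    0 means no detectable water stress, 1 means maximum stress.
    """
    if dry_ref_c <= wet_ref_c:
        raise ValueError("dry reference must be warmer than wet reference")
    cwsi = (canopy_c - wet_ref_c) / (dry_ref_c - wet_ref_c)
    return min(1.0, max(0.0, cwsi))


if __name__ == "__main__":
    # Hypothetical references: 24 °C for a wet leaf, 34 °C for a dry leaf.
    for tc in (24.5, 29.0, 33.5):
        print(tc, round(crop_water_stress_index(tc, 24.0, 34.0), 2))
```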

Drone-mounted thermal cameras can quickly identify these temperature anomalies across a field or forest, highlighting “parts of plants” that are experiencing water deficit even before visible symptoms appear. This early detection is critical for precision irrigation strategies, allowing farmers to apply water only where and when it is needed, optimizing resource use and preventing irreversible damage to crops. Beyond water stress, thermal imaging can also detect subtle temperature changes associated with certain diseases or pest damage, as localized infections or infestations can impact a plant’s metabolic activity and heat exchange with the environment.
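
A minimal way to highlight such anomalies is to flag pixels that are much warmer than the rest of the field. The sketch below uses the field mean plus k standard deviations as the threshold; the value of k is a hypothetical tuning parameter, and a real workflow would first mask soil pixels and correct for emissivity and atmospheric effects.

```python
from statistics import mean, pstdev


def flag_hot_spots(temps_c, k: float = 2.0):
    """Return (row, col) cells warmer than the field mean + k * std.

    temps_c is a 2D grid (list of rows) of canopy temperatures in °C.
    """
    values = [t for row in temps_c for t in row]
    threshold = mean(values) + k * pstdev(values)
    return [
        (r, c)
        for r, row in enumerate(temps_c)
        for c, t in enumerate(row)
        if t > threshold
    ]


if __name__ == "__main__":
    # Hypothetical 3x3 field with one unusually warm plant in the centre.
    field = [[25.0, 25.0, 25.0], [25.0, 32.0, 25.0], [25.0, 25.0, 25.0]]
    print(flag_hot_spots(field))
```

The flagged cells could then become candidate zones for targeted irrigation or ground inspection.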

AI-Powered Identification and Health Monitoring

The immense volume of data collected by drone-based sensors would be impractical to interpret manually. AI, particularly machine learning and deep learning algorithms, provides the analytical backbone for interpreting complex aerial imagery and extracting actionable insights about the “parts of plants.”

Machine Learning for Feature Extraction and Classification

AI models are trained on large datasets of annotated aerial images to detect and classify specific “parts of plants,” often with high accuracy when the training data match field conditions. This includes everything from individual leaves, flowers, and fruits to entire plant architectures. For instance, in precision agriculture, AI models can be trained to distinguish crop plants from weeds based on visual features such as leaf shape, size, and texture. This enables targeted weed management, reducing herbicide use and its environmental impact. AI can also identify plant growth stages by recognizing the appearance and development of specific “parts,” such as flower buds or ripening fruits.
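
Production systems typically use deep convolutional networks, but the underlying idea of learning from labeled examples can be shown with a much simpler classifier. The sketch below swaps in a nearest-centroid classifier over two hypothetical leaf features (length-to-width ratio and leaf area); in practice features would be extracted from imagery and normalized to comparable scales before distances are computed.

```python
from math import dist
from statistics import mean


def train_centroids(samples):
    """samples: list of (feature_vector, label). Returns {label: centroid}."""
    by_label = {}
    for features, label in samples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(col) for col in zip(*vectors))
        for label, vectors in by_label.items()
    }


def classify(features, centroids):
    """Assign the label whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: dist(features, centroids[label]))


if __name__ == "__main__":
    # Hypothetical features: (leaf length/width ratio, leaf area in cm^2).
    training = [
        ((6.0, 40.0), "crop"),
        ((5.5, 38.0), "crop"),
        ((1.5, 4.0), "weed"),
        ((1.8, 6.0), "weed"),
    ]
    centroids = train_centroids(training)
    print(classify((5.0, 35.0), centroids))
    print(classify((2.0, 5.0), centroids))
```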

Beyond identification, machine learning is well suited to anomaly detection. By learning the characteristics of healthy plant “parts,” a model can flag deviations that indicate stress, disease, or nutrient deficiencies, such as discoloration, wilting patterns, or abnormal growth in leaves and stems associated with fungal infections, viral diseases, or nutrient imbalances. Because AI can process imagery of large areas at a speed that manual scouting cannot match, it can provide early warnings and enable proactive intervention at the level of individual plants.
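
The anomaly-detection idea, learning what healthy looks like and flagging departures from it, can be sketched with per-feature z-scores. The feature choice (NDVI and fraction of yellowed pixels) and the threshold of 3 standard deviations are hypothetical; real systems often learn far richer representations.

```python
import math
from statistics import mean, stdev


def fit_healthy_profile(healthy_samples):
    """Per-feature (mean, sample standard deviation) from healthy examples."""
    return [(mean(col), stdev(col)) for col in zip(*healthy_samples)]


def anomaly_score(features, profile):
    """Largest absolute z-score of any feature relative to the healthy profile."""
    scores = []
    for value, (mu, sigma) in zip(features, profile):
        if sigma > 0:
            scores.append(abs(value - mu) / sigma)
        else:
            # No spread in the healthy data: any difference counts as extreme.
            scores.append(0.0 if value == mu else math.inf)
    return max(scores)


def is_anomalous(features, profile, threshold=3.0):
    """Flag a sample whose largest z-score exceeds threshold."""
    return anomaly_score(features, profile) > threshold


if __name__ == "__main__":
    # Hypothetical healthy plants: (NDVI, fraction of yellowed pixels).
    healthy = [(0.80, 0.02), (0.78, 0.03), (0.82, 0.01), (0.79, 0.02)]
    profile = fit_healthy_profile(healthy)
    print(is_anomalous((0.60, 0.20), profile))
    print(is_anomalous((0.80, 0.02), profile))
```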

Autonomous Crop Scouting and Precision Agriculture

Combining AI with autonomous flight planning enables automated crop scouting and precision agriculture. Drones can be programmed to fly predefined routes, collecting high-resolution imagery and spectral data across entire fields. AI then processes this data in real time or after the flight, generating detailed maps and reports that highlight problem areas or individual plants requiring attention.
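
Predefined survey routes are commonly flown as a back-and-forth (“lawnmower”) pattern whose line spacing follows from the camera footprint and the desired side overlap. The sketch below derives the spacing from flight altitude and the camera's across-track field of view; the function and parameter names are illustrative, and it ignores terrain, wind, and turn dynamics that real flight planners handle.

```python
import math


def survey_waypoints(width_m, length_m, altitude_m, hfov_deg, side_overlap):
    """Return lawnmower-pattern (x, y) waypoints over a rectangular field.

    Coordinates are local metres from one field corner. Flight lines run
    along the y axis and are spaced across the x axis.
    """
    if not 0.0 <= side_overlap < 1.0:
        raise ValueError("side_overlap must be in [0, 1)")
    # Ground footprint width of one image across the flight direction.
    footprint_m = 2.0 * altitude_m * math.tan(math.radians(hfov_deg) / 2.0)
    max_spacing_m = footprint_m * (1.0 - side_overlap)
    if max_spacing_m <= 0:
        raise ValueError("altitude and field of view must be positive")
    # Small tolerance stops floating-point noise from adding an extra line.
    n_lines = math.ceil(width_m / max_spacing_m - 1e-9) + 1
    # Spread lines evenly so the actual spacing never exceeds max_spacing_m.
    step_m = width_m / (n_lines - 1) if n_lines > 1 else 0.0
    waypoints = []
    for i in range(n_lines):
        x = i * step_m
        # Alternate direction on each pass so turns happen at the field edge.
        ys = (0.0, length_m) if i % 2 == 0 else (length_m, 0.0)
        waypoints.extend((x, y) for y in ys)
    return waypoints


if __name__ == "__main__":
    # Hypothetical 100 m x 200 m field, 50 m altitude, 90° FOV, 75% overlap.
    for wp in survey_waypoints(100.0, 200.0, 50.0, 90.0, 0.75):
        print(wp)
```

Higher overlap shrinks the spacing and adds lines, trading flight time for better image matching.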

For example, an AI-powered drone system can count individual plants, estimate yields by analyzing fruit counts and sizes (the “parts” that make up the harvest), and generate prescription maps for variable-rate application of fertilizers or pesticides. This precision lets farmers apply inputs only to the specific “parts of plants” or areas that need them, optimizing resource allocation, reducing waste, and minimizing environmental impact. The move from field-level management to individual plant-level analysis, driven by AI and drone technology, marks a fundamental change in agricultural efficiency and sustainability.
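
At its simplest, a prescription map assigns each grid cell an application rate according to which index zone it falls in. The sketch below does this with NDVI break points; the breaks and rates are hypothetical placeholders, not agronomic recommendations, since the right mapping depends on the crop, the input being applied, and local calibration.

```python
from bisect import bisect_right


def prescription_map(ndvi_grid, breaks, rates):
    """Map each NDVI cell to an application rate.

    breaks: ascending NDVI boundaries; rates: one more entry than breaks.
    A cell with breaks[i-1] <= NDVI < breaks[i] receives rates[i].
    """
    if len(rates) != len(breaks) + 1:
        raise ValueError("rates must have exactly one more entry than breaks")
    return [[rates[bisect_right(breaks, v)] for v in row] for row in ndvi_grid]


if __name__ == "__main__":
    # Hypothetical zones: NDVI < 0.4, 0.4 to 0.7, >= 0.7, with rates in kg/ha.
    ndvi_grid = [[0.30, 0.55], [0.72, 0.40]]
    print(prescription_map(ndvi_grid, breaks=[0.4, 0.7], rates=[60, 40, 20]))
```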

Mapping and 3D Modeling of Plant Structures

Understanding the physical architecture and spatial distribution of “parts of plants” is crucial for various applications, from assessing biomass to managing forest resources. Drones equipped with advanced mapping technologies provide unprecedented capabilities for creating detailed 2D and 3D representations of vegetation.

Photogrammetry for Canopy Structure and Volume

Drone-based photogrammetry involves capturing many overlapping images of an area from different positions and angles. Structure-from-motion software then matches features across these images to reconstruct accurate 2D orthomosaics and detailed 3D models of the terrain and vegetation, with absolute accuracy depending on ground control points or precise onboard positioning. This allows measurement of physical “parts of plants” and their attributes, such as canopy height, density, and volume.
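
Canopy height is usually derived by subtracting a bare-ground terrain model (DTM) from the photogrammetric surface model (DSM). The sketch below assumes both are co-registered grids of elevations in metres with the same cell size; under dense canopy the DTM often has to come from a separate bare-soil survey or from LiDAR, because photogrammetry sees mostly the top surface.

```python
def canopy_height_model(dsm, dtm):
    """Per-cell canopy height in metres: surface minus bare-ground elevation.

    Small negative differences (reconstruction noise) are clamped to zero.
    """
    return [
        [max(0.0, s - g) for s, g in zip(dsm_row, dtm_row)]
        for dsm_row, dtm_row in zip(dsm, dtm)
    ]


def canopy_volume(chm, cell_size_m):
    """Sum of height x cell area over the grid, in cubic metres."""
    cell_area_m2 = cell_size_m ** 2
    return sum(h * cell_area_m2 for row in chm for h in row)


if __name__ == "__main__":
    # Hypothetical 2x2 grids with 0.5 m cells.
    dsm = [[105.0, 103.0], [99.8, 101.0]]
    dtm = [[100.0, 100.0], [100.0, 100.0]]
    chm = canopy_height_model(dsm, dtm)
    print(chm, canopy_volume(chm, 0.5))
```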

In forestry, photogrammetry is invaluable for conducting forest inventories, estimating timber volume, and tracking the growth of individual trees or stands. From 3D models of tree canopies, forest managers can estimate leaf area index (LAI), assess canopy cover, and monitor changes over time, gaining insights into the health and productivity of the forest ecosystem. In agriculture, these 3D models can help assess crop emergence, plant spacing, and overall canopy development, providing data for yield forecasting and planting decisions.
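
Canopy cover can be estimated from a canopy height model as the share of cells above a height threshold, and comparing two survey dates shows change over time. The 0.5 m threshold below is a hypothetical default; suitable values differ between forests and row crops.

```python
def canopy_cover(chm, min_height_m=0.5):
    """Fraction of grid cells whose canopy height exceeds min_height_m."""
    heights = [h for row in chm for h in row]
    if not heights:
        return 0.0
    return sum(1 for h in heights if h > min_height_m) / len(heights)


def cover_change(chm_before, chm_after, min_height_m=0.5):
    """Change in canopy cover fraction between two survey dates."""
    return canopy_cover(chm_after, min_height_m) - canopy_cover(chm_before, min_height_m)


if __name__ == "__main__":
    # Hypothetical canopy height grids (metres) from two flights.
    early = [[0.0, 0.0], [0.0, 1.0]]
    late = [[5.0, 3.0], [0.0, 1.0]]
    print(canopy_cover(late), cover_change(early, late))
```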

LiDAR for Sub-Canopy Penetration and Fine Detail

While photogrammetry reconstructs mainly the visible surface, LiDAR (Light Detection and Ranging) offers a distinct advantage: it can see through gaps in dense foliage. Drone-mounted LiDAR systems emit laser pulses and measure the time each pulse takes to return after hitting an object. Because part of a pulse can pass through openings in the canopy, a single pulse may produce several returns, from upper leaves, lower branches, and the ground. The result is a dense point cloud of highly accurate 3D surface coordinates.
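
The ranging step reduces to one formula: the pulse travels to the target and back, so range = c × t / 2, where t is the round-trip time. The sketch below applies it to each return of a single pulse to place points in 3D; it assumes the sensor position and beam direction are already known in a local metric frame, whereas real systems obtain them from GNSS/IMU trajectory data.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0  # in vacuum; light in air is slightly slower


def range_from_time_of_flight(round_trip_s: float) -> float:
    """The pulse travels to the target and back, so range = c * t / 2."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def returns_to_points(origin, direction, return_times_s):
    """Turn every return of one pulse into an (x, y, z) point.

    origin: sensor position (x, y, z) in metres; direction: beam vector.
    """
    norm = math.sqrt(sum(d * d for d in direction))
    unit = [d / norm for d in direction]
    points = []
    for t in return_times_s:
        r = range_from_time_of_flight(t)
        points.append(tuple(o + r * u for o, u in zip(origin, unit)))
    return points


if __name__ == "__main__":
    # Hypothetical nadir pulse from 100 m with a canopy return and a ground return.
    c = SPEED_OF_LIGHT_M_S
    print(returns_to_points((0.0, 0.0, 100.0), (0.0, 0.0, -1.0), [2 * 60 / c, 2 * 100 / c]))
```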

For analyzing “parts of plants,” LiDAR is especially valuable because it can map not only the top of the canopy but also the underlying branches, trunks, and ground topography beneath the vegetation. This allows detailed reconstruction of individual tree architecture, including, with sufficiently dense point clouds, stem diameter and branch structure, as well as estimates of overall biomass. In dense forests, LiDAR provides precise measurements of tree height and crown dimensions, which are difficult to obtain with photogrammetry alone. It also helps characterize light penetration within a crop canopy or forest, informing decisions about pruning or selective harvesting. This 3D information about the various “parts of plants” is valuable for ecological studies, carbon sequestration estimation, and sustainable resource management.
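
Two simple plot-level summaries illustrate how such point clouds are used: the height of the tallest vegetation return above ground, and the fraction of returns classified as ground, a rough proxy for how much light penetrates the canopy. The sketch assumes returns have already been classified as ground or vegetation and that a ground elevation for the plot is known; it uses the maximum height for brevity, although a high percentile is often preferred because it is less sensitive to stray points.

```python
def lidar_plot_metrics(returns, ground_elevation_m):
    """Summarise one plot's classified LiDAR returns.

    returns: list of (elevation_m, is_ground) tuples.
    Returns (top vegetation height above ground in m, fraction of ground returns).
    """
    if not returns:
        raise ValueError("no returns in plot")
    heights = [z - ground_elevation_m for z, is_ground in returns if not is_ground]
    top_height_m = max(heights) if heights else 0.0
    ground_fraction = sum(1 for _, is_ground in returns if is_ground) / len(returns)
    return top_height_m, ground_fraction


if __name__ == "__main__":
    # Hypothetical plot: three vegetation returns and two ground returns.
    plot = [(120.0, False), (115.0, False), (100.2, True), (100.1, True), (110.0, False)]
    print(lidar_plot_metrics(plot, ground_elevation_m=100.0))
```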

The Future of Autonomous Plant Analysis and Interaction

The ongoing evolution of drone technology promises an even more integrated and autonomous future for analyzing and interacting with the “parts of plants.” This future envisions real-time insights driving immediate, precise actions, fundamentally transforming our approach to botanical management and research.

Real-time Decision Making and Robotic Interaction

The next frontier lies in integrating drone-collected data with ground-based autonomous robots for real-time decision-making and targeted intervention. Acting as aerial scouts, drones can rapidly identify specific “parts of plants” in need of attention, such as a localized pest infestation, a nutrient deficiency in a particular plant, or an individual weed, and transmit precise location data to robotic ground vehicles. These robots can then execute targeted actions: spot-spraying only the affected plants with pesticides or herbicides, applying micro-doses of fertilizer to specific areas, or harvesting individual fruits as they ripen. This reduces the need for broadcast applications, cutting chemical usage, labor costs, and environmental impact. Drones become the essential “eyes” providing real-time intelligence on plant “parts,” enabling a responsive and efficient system of agricultural and environmental management.
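
The handoff from drone to ground robot could be as simple as a list of geolocated detections that the robot visits in turn. The sketch below uses a hypothetical Detection record and orders visits with a greedy nearest-neighbour heuristic, which gives a reasonable but not necessarily shortest route.

```python
import math
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical drone finding handed to a ground robot."""
    x_m: float  # field coordinates in metres
    y_m: float
    kind: str   # e.g. "weed", "pest", "nutrient"


def plan_visit_order(start, detections):
    """Greedy nearest-neighbour ordering of detections from a start point."""
    remaining = list(detections)
    route = []
    current = start
    while remaining:
        nearest = min(remaining, key=lambda d: math.dist(current, (d.x_m, d.y_m)))
        remaining.remove(nearest)
        route.append(nearest)
        current = (nearest.x_m, nearest.y_m)
    return route


if __name__ == "__main__":
    found = [Detection(10.0, 0.0, "weed"), Detection(1.0, 0.0, "pest"), Detection(5.0, 0.0, "weed")]
    for d in plan_visit_order((0.0, 0.0), found):
        print(d)
```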

Hyper-Resolution and Hyper-Temporal Monitoring

The trend in drone technology is toward higher-resolution imagery and more frequent data collection. Such hyper-resolution, hyper-temporal monitoring will allow minute changes in “parts of plants” to be tracked almost continuously: imagine monitoring the hydration status of individual leaves several times a day, or observing the growth of specific plant organs as they develop. Processed by increasingly sophisticated AI, this stream of detailed data could yield new insights into plant physiology, stress responses, and growth dynamics. Autonomous drone swarms operating collaboratively could cover large areas, repeatedly surveying every “part of the plant” within their range and building comprehensive spatiotemporal datasets. These datasets support predictive analytics, in which models forecast plant health issues, estimate yields more accurately, and assess environmental impacts in advance, informing proactive management and sustainable practices worldwide.
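
As a very small stand-in for such predictive models, the sketch below fits a least-squares linear trend to a time series of a plant health index and projects when it would cross an alert threshold. Linear extrapolation is an illustrative assumption (operational forecasting models are usually far more sophisticated), and the threshold and sample values are hypothetical.

```python
def fit_linear_trend(days, values):
    """Ordinary least-squares fit of values = slope * day + intercept."""
    n = len(days)
    if n != len(values) or n < 2:
        raise ValueError("need at least two paired observations")
    mean_x = sum(days) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("need at least two distinct days")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def projected_crossing_day(days, values, threshold):
    """Day on which the fitted declining trend reaches threshold, else None."""
    slope, intercept = fit_linear_trend(days, values)
    if slope >= 0:
        return None  # index is stable or rising, so no projected decline
    return (threshold - intercept) / slope


if __name__ == "__main__":
    # Hypothetical daily NDVI readings for one plant, alert threshold 0.6.
    print(projected_crossing_day([0, 1, 2, 3], [0.80, 0.78, 0.76, 0.74], 0.6))
```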