The idea of “mail days to sync” sits at the intersection of communication technology, network latency, and asynchronous data transfer. It is not a standard technical term, but it captures a real property of distributed systems: the time an update needs to reach every location that holds a copy of the data, so that all copies become consistent. Understanding this “sync time” matters to developers, system architects, and users alike.

The Essence of Data Synchronization

At its core, data synchronization refers to the process of ensuring that data stored in multiple locations is consistent. In a distributed computing environment, data is often replicated or partitioned across various servers, databases, or even end-user devices. For these distributed systems to function effectively, these disparate copies of data must, at some point, reflect the same state. “Mail days to sync” then becomes a metaphorical representation of the time it takes for these updates to propagate and for all participating entities to receive and apply them, thus achieving a synchronized state.

Asynchronous Communication and Latency

The primary reason for delays in synchronization is the inherent nature of asynchronous communication. In many systems, sending a piece of information does not guarantee its immediate reception or processing. Instead, the sender dispatches the data and continues with its operations, relying on a separate mechanism for confirmation or acknowledgment. This is akin to sending a physical letter – you drop it in a mailbox, and it undertakes a journey through various sorting facilities and transportation networks before reaching its destination. The time it takes for that letter to arrive is the “sync time” for that piece of information.

In the digital realm, this latency can be influenced by a multitude of factors:

- Network Bandwidth and Congestion: The sheer volume of data being transmitted and the capacity of the network infrastructure play a significant role. If the network is saturated or the bandwidth is limited, data packets will take longer to traverse.

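- Geographical Distance: The physical distance between the sender and receiver contributes to the propagation delay. Signals take time to travel through fiber optic cables or over wireless links; in optical fiber, light covers roughly 200,000 km per second, so every 1,000 km adds about 5 ms in each direction.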

- Server Load and Processing Power: Even if data reaches a destination quickly, the receiving server might be overloaded with other tasks, delaying its ability to process and apply the incoming updates.

- Protocol Overhead: The communication protocols used, such as TCP/IP, introduce their own overhead in terms of packet management, acknowledgments, and error checking, which can add to the overall sync time.

- Replication Strategies: Different replication strategies (e.g., master-slave, multi-master, peer-to-peer) have varying implications for sync times. Some prioritize immediate consistency, while others offer eventual consistency, where replicas are guaranteed to converge once updates stop arriving, but not instantaneously.
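
As a rough illustration of how the first few factors combine, the sketch below adds propagation delay, transmission delay, and a fixed processing cost. It is a simplified model, not a measurement tool: the function names, the 200,000 km/s figure for light in fiber, and the sample numbers are assumptions chosen for the example, and real networks add routing detours, queuing, and retransmissions on top.

```python
# Simplified one-way delay model for a single update (illustrative only).
SPEED_IN_FIBER_KM_PER_S = 200_000  # assumption: about two-thirds of c in vacuum


def propagation_delay_s(distance_km: float) -> float:
    """Time for the signal to cover the distance, ignoring routing detours."""
    return distance_km / SPEED_IN_FIBER_KM_PER_S


def transmission_delay_s(payload_bytes: int, bandwidth_bits_per_s: float) -> float:
    """Time needed to push the whole payload onto the link."""
    return payload_bytes * 8 / bandwidth_bits_per_s


def estimated_sync_time_s(
    distance_km: float,
    payload_bytes: int,
    bandwidth_bits_per_s: float,
    processing_s: float = 0.0,
) -> float:
    """Sum of propagation, transmission, and receiver processing time."""
    return (
        propagation_delay_s(distance_km)
        + transmission_delay_s(payload_bytes, bandwidth_bits_per_s)
        + processing_s
    )


# Hypothetical scenario: a 1 MB update sent 6,000 km over a 100 Mbit/s link,
# with 5 ms of processing at the receiver.
estimate = estimated_sync_time_s(6_000, 1_000_000, 100e6, processing_s=0.005)
print(f"Estimated sync time: {estimate * 1000:.1f} ms")
```

In this scenario the transmission delay (80 ms) outweighs the propagation delay (30 ms), which suggests that for large payloads bandwidth matters more than distance, while for small payloads the opposite tends to hold.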

Practical Manifestations of “Mail Days to Sync”

The impact of “mail days to sync” is felt across a wide spectrum of technological applications. While the term might evoke a quaint image of postal services, its modern equivalents are ubiquitous.

Email and Messaging Systems

The most direct, albeit still somewhat metaphorical, interpretation of “mail days to sync” could be found in older or less optimized email systems. Before the advent of instant messaging and real-time synchronization protocols, users would often experience delays in receiving emails. The time it took for an email to travel from the sender’s server to the recipient’s inbox could indeed feel like it took “days,” especially with dial-up internet and less robust server infrastructure.

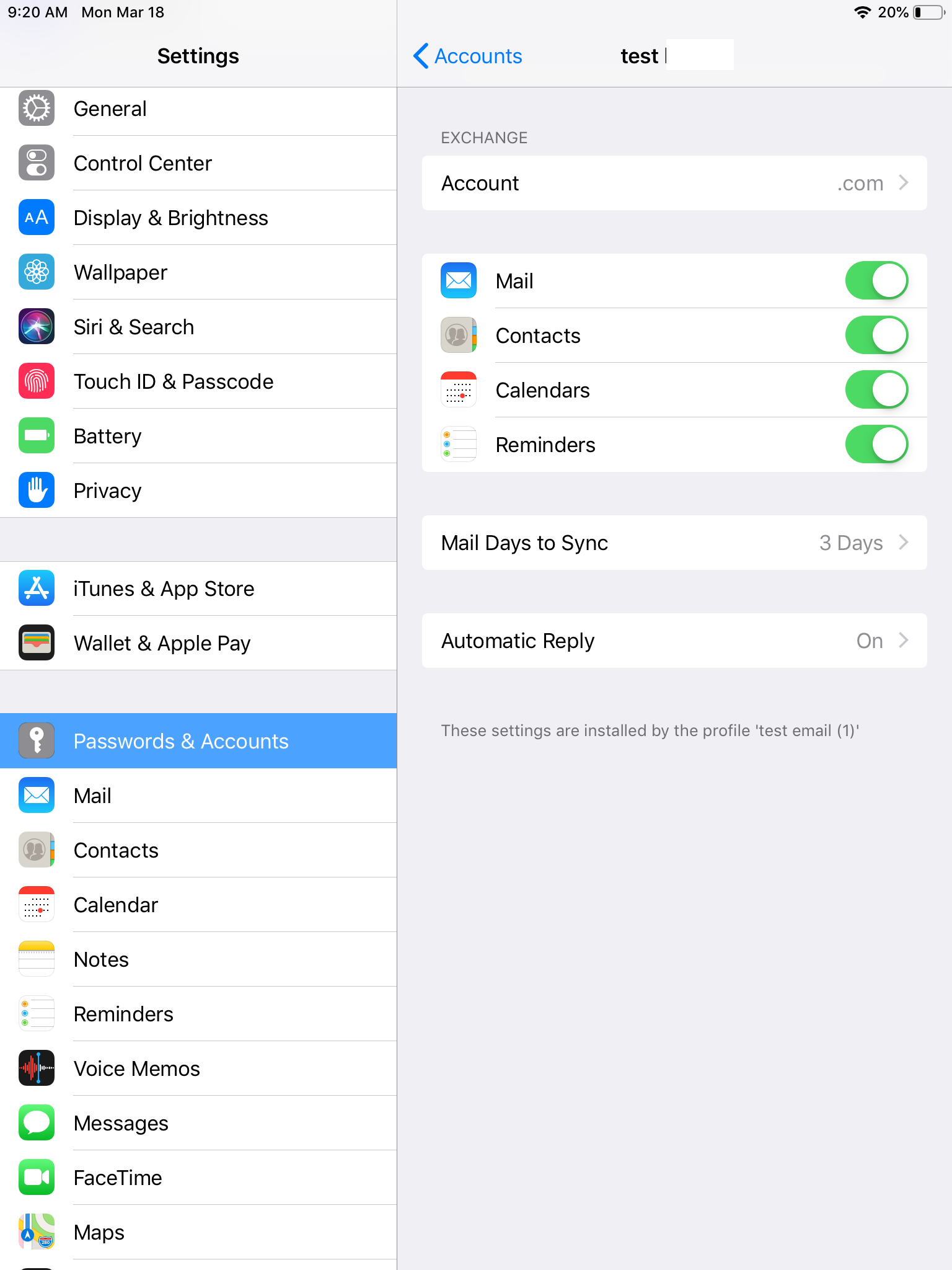

Even today, slight delays can occur due to server load, network issues, or the frequency at which a client is configured to check for new messages. SMTP carries messages between servers, while clients retrieve them over IMAP or POP3; a POP3 client has to poll for new mail, whereas an IMAP client can use the IDLE extension to be notified when a message arrives. While these delays are typically measured in seconds or minutes, the underlying principle of asynchronous delivery and the time taken for synchronization remains.
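
The effect of the polling interval can be sketched with a small calculation. The helper below is hypothetical and assumes a client that polls at fixed multiples of the interval; under that assumption a message waits, on average, about half the interval before the client sees it, and at most the full interval.

```python
import math


def polling_delay_s(arrival_s: float, poll_interval_s: float) -> float:
    """Seconds a message waits on the server until the next scheduled poll.

    Assumes polls happen at t = 0, interval, 2 * interval, and so on.
    """
    next_poll = math.ceil(arrival_s / poll_interval_s) * poll_interval_s
    return next_poll - arrival_s


# A message arriving 130 s into a 5-minute polling cycle waits 170 s.
print(polling_delay_s(130, 300))

# Averaged over arrivals spread evenly across one cycle, the wait is
# close to half the interval.
delays = [polling_delay_s(t, 300) for t in range(300)]
print(sum(delays) / len(delays))
```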

Distributed Databases and Data Replication

In the realm of databases, particularly distributed databases, the concept of “mail days to sync” is a critical consideration. When data is updated in one node of a distributed database cluster, that update needs to propagate to other nodes to maintain consistency.

- Master-Slave Replication: In a master-slave setup, changes made to the master database are asynchronously replicated to the slave databases. The time lag between a commit on the master and its appearance on the slave is a direct measure of the sync time. If the network is slow or the slave is heavily utilized, this lag can increase, leading to stale data being read from the slave.

- Multi-Master Replication: In multi-master configurations, writes can occur on any node, and these changes are then propagated to all other nodes. The challenge here is handling conflicts that arise from concurrent writes. The speed at which these writes are synchronized across all masters directly impacts the perceived consistency of the data.

- Eventual Consistency: Many NoSQL databases adopt an “eventual consistency” model. This means that, if no new updates are made, all read operations will eventually return the most recently written value. The “mail days to sync” in this context refers to the time it takes for this eventual state to be reached after a write operation.
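
The stale-read window described above can be made concrete with a toy model. The `Primary` and `Replica` classes below are hypothetical and use a fixed, simulated lag instead of a real network; they are only meant to show a read returning old data until the update has been applied.

```python
from dataclasses import dataclass, field


@dataclass
class Replica:
    """Toy replica that applies each update `lag_s` seconds after the commit."""

    lag_s: float
    data: dict = field(default_factory=dict)
    pending: list = field(default_factory=list)  # (apply_at, key, value)

    def receive(self, commit_time: float, key: str, value) -> None:
        self.pending.append((commit_time + self.lag_s, key, value))

    def read(self, now: float, key: str):
        # Apply, in delivery order, every update that has arrived by `now`.
        due = sorted((u for u in self.pending if u[0] <= now), key=lambda u: u[0])
        self.pending = [u for u in self.pending if u[0] > now]
        for _, k, v in due:
            self.data[k] = v
        return self.data.get(key)


class Primary:
    """Toy primary that commits locally and ships updates asynchronously."""

    def __init__(self, replicas: list):
        self.data = {}
        self.replicas = replicas

    def write(self, now: float, key: str, value) -> None:
        self.data[key] = value
        for replica in self.replicas:
            replica.receive(now, key, value)


replica = Replica(lag_s=2.0)
primary = Primary([replica])
primary.write(0.0, "balance", 100)
primary.write(10.0, "balance", 80)

stale = replica.read(11.0, "balance")  # second update not applied yet
fresh = replica.read(12.0, "balance")  # lag has elapsed, replica has caught up
print(stale, fresh)
```

Between t = 10 and t = 12 the replica still serves the old balance, which is exactly the sync time for that write in this model.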

Cloud Computing and Distributed Storage

Cloud platforms rely heavily on distributed systems for storing and serving data. Services like Amazon S3, Google Cloud Storage, and Azure Blob Storage use complex replication mechanisms to ensure data durability and availability. While these systems are designed for high availability and relatively fast replication, there are still internal synchronization processes.

When a file is uploaded or updated in one region, it might not be immediately readable from copies in other, geographically dispersed regions, because cross-region replication is typically asynchronous. Within a single region the picture can be different: Amazon S3, for example, now provides strong read-after-write consistency. The time it takes for an update to be propagated and for the data to become accessible across the entire distributed infrastructure can be considered its “sync time.” This is particularly relevant for applications that require low-latency access to data across different regions.

Real-time Collaboration Tools

Modern collaboration platforms, such as Google Docs, Microsoft 365, and project management tools, enable multiple users to work on the same document or project simultaneously. The magic behind these tools is their ability to synchronize changes in near real-time. However, even here, “mail days to sync” can be observed as subtle delays.

When one user makes a change, it’s sent to a central server or distributed network of servers. This update then needs to be propagated to all other connected clients. Network latency, server processing, and the sheer volume of changes being made by numerous users can introduce small delays, resulting in a slight lag before everyone sees the exact same version of the document. These delays are usually measured in milliseconds or seconds, a far cry from “days,” but the underlying principle of data propagation and synchronization remains.

Factors Influencing Synchronization Time

Understanding the variables that contribute to “mail days to sync” is crucial for optimizing system performance and user experience.

Network Infrastructure and Topology

The design and quality of the network are paramount. A well-connected network with high bandwidth and low latency will significantly reduce sync times. The topology, whether it’s a hub-and-spoke model or a fully meshed network, also plays a role. In highly distributed environments, geographical separation remains a fundamental physical constraint on signal travel time.

Data Volume and Update Frequency

Larger data payloads naturally take longer to transfer. Similarly, a high frequency of updates can place a strain on synchronization mechanisms, potentially leading to increased lag. Systems designed for high-volume, high-frequency updates often employ sophisticated techniques like delta synchronization (only sending the changed parts of data) and efficient queuing mechanisms.
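
A minimal sketch of delta synchronization, assuming the data is a flat key-value record: only the changed and removed keys are sent, and the receiver applies them to its own copy. The function names and delta layout are illustrative, not taken from any particular system.

```python
def compute_delta(old: dict, new: dict) -> dict:
    """Return only what changed between two versions of a record."""
    changed = {k: v for k, v in new.items() if k not in old or old[k] != v}
    removed = [k for k in old if k not in new]
    return {"set": changed, "delete": removed}


def apply_delta(state: dict, delta: dict) -> dict:
    """Apply a delta to a copy of the receiver's state."""
    result = dict(state)
    result.update(delta["set"])
    for key in delta["delete"]:
        result.pop(key, None)
    return result


old = {"title": "Draft", "body": "Hello", "tags": "wip"}
new = {"title": "Final", "body": "Hello", "author": "sam"}

delta = compute_delta(old, new)
print(delta)  # only the title, the new author, and the removed tags travel

synced = apply_delta(old, delta)
```

The unchanged `body` field never crosses the network, which is where the savings come from when records are large and edits are small.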

Synchronization Protocols and Algorithms

The choice of synchronization protocol and the underlying algorithms are critical. Protocols that offer strong consistency guarantees often come with higher latency penalties. Conversely, systems prioritizing availability and low latency might opt for eventual consistency, accepting a period of potential data divergence. The complexity of conflict resolution in multi-master systems also directly impacts how quickly a consistent state is achieved.
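
One common, deliberately simple conflict-resolution rule is last-write-wins, sketched below. This is only one of several possible strategies, and nothing in the text above prescribes it; it assumes each write carries a timestamp and a unique node identifier (used as a tie-breaker), and it silently discards the losing write.

```python
def merge_lww(a: dict, b: dict) -> dict:
    """Merge two replicas' states, keeping the newest entry for each key.

    Each state maps key -> (timestamp, node_id, value). Entries are compared
    by (timestamp, node_id), so equal timestamps are resolved deterministically.
    """
    merged = dict(a)
    for key, entry in b.items():
        if key not in merged or entry[:2] > merged[key][:2]:
            merged[key] = entry
    return merged


# Two masters accepted concurrent writes to the same key.
node_a = {"status": (105, "a", "shipped"), "owner": (90, "a", "kim")}
node_b = {"status": (107, "b", "cancelled")}

merged_ab = merge_lww(node_a, node_b)
merged_ba = merge_lww(node_b, node_a)
print(merged_ab)
```

Because the merge gives the same result in either order, both masters end up with the same state once they have exchanged updates, but the "shipped" write is lost. Whether that trade-off is acceptable depends on the application.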

Hardware and Software Performance

The processing power of the servers involved, the efficiency of the database engines or storage systems, and the performance of the synchronization software itself all contribute to the overall sync time. Slow hardware can become a bottleneck, even with a fast network.

Disaster Recovery and High Availability Considerations

In systems designed for high availability and disaster recovery, data is often replicated synchronously or asynchronously to multiple data centers. Synchronous replication, while providing the strongest consistency guarantees, can significantly increase latency as the primary operation must wait for acknowledgment from the replica. Asynchronous replication offers lower latency but introduces a window of potential data loss in the event of a failure. The choice between these strategies directly influences the “mail days to sync” for critical data.
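
The trade-off can be put into rough numbers. The sketch below is a back-of-the-envelope model with hypothetical function names and sample values: it assumes a synchronous commit costs one extra round trip to the replica, and that the writes exposed by asynchronous replication are roughly the write rate multiplied by the replication lag.

```python
def commit_latency_ms(local_write_ms: float, replica_rtt_ms: float, synchronous: bool) -> float:
    """Latency seen by the client for one commit under this simple model."""
    return local_write_ms + (replica_rtt_ms if synchronous else 0.0)


def writes_at_risk(write_rate_per_s: float, replication_lag_s: float) -> float:
    """Approximate number of acknowledged writes not yet on the replica."""
    return write_rate_per_s * replication_lag_s


# Hypothetical numbers: 2 ms local write, 70 ms round trip to a remote site.
sync_ms = commit_latency_ms(2.0, 70.0, synchronous=True)
async_ms = commit_latency_ms(2.0, 70.0, synchronous=False)
print(sync_ms, async_ms)

# With asynchronous replication, 500 writes/s and 0.2 s of lag leave
# about 100 writes exposed if the primary fails.
exposed = writes_at_risk(500, 0.2)
print(exposed)
```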

Mitigating Synchronization Delays

While eliminating sync delays entirely might be impossible in many distributed scenarios, several strategies can be employed to mitigate their impact:

- Optimizing Network Infrastructure: Investing in higher bandwidth, lower latency network connections, and strategically placing data centers closer to users.

- Efficient Data Transfer Protocols: Utilizing protocols designed for speed and efficiency, and implementing techniques like data compression and delta encoding.

- Asynchronous Processing and Queuing: Decoupling update operations from primary application logic using message queues allows systems to process updates in the background, reducing the immediate impact on user experience.

- Intelligent Replication Strategies: Choosing the appropriate replication model (e.g., eventual vs. strong consistency) based on application requirements, and employing advanced replication techniques like peer-to-peer synchronization.

- Caching Mechanisms: Implementing robust caching layers can reduce the need to access the original data source for every read operation, effectively masking some synchronization delays.

- Monitoring and Performance Tuning: Continuously monitoring synchronization performance, identifying bottlenecks, and tuning system parameters are essential for maintaining optimal sync times.
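
The queuing strategy from the list above can be sketched with Python's standard `queue` and `threading` modules. This is a single-process illustration under obvious simplifications: the two dictionaries stand in for a primary store and a replica, and the names `start_sync_worker` and `write` are made up for the example.

```python
import queue
import threading


def start_sync_worker(updates: queue.Queue, replica: dict) -> threading.Thread:
    """Start a background thread that applies queued updates to the replica."""

    def worker() -> None:
        while True:
            item = updates.get()
            if item is None:  # sentinel value: stop the worker
                updates.task_done()
                break
            key, value = item
            replica[key] = value
            updates.task_done()

    thread = threading.Thread(target=worker, daemon=True)
    thread.start()
    return thread


primary: dict = {}
replica: dict = {}
updates: queue.Queue = queue.Queue()
worker_thread = start_sync_worker(updates, replica)


def write(key: str, value: str) -> None:
    """Commit locally and return at once; replication happens in the background."""
    primary[key] = value
    updates.put((key, value))


write("title", "Draft 2")
write("status", "in review")

updates.join()  # block until the queue is drained (only needed for this demo)
print(replica == primary)
```

The caller of `write` never waits for the replica, so the sync delay is moved off the user's critical path instead of being removed; the queue depth is also a natural metric to monitor.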

In conclusion, “mail days to sync” serves as a potent metaphor for the inherent challenges of achieving data consistency in distributed systems. It highlights the critical interplay between network capabilities, processing power, and algorithmic choices. As technology continues to evolve, the quest for near-instantaneous synchronization remains a driving force, pushing the boundaries of innovation in networking, databases, and distributed computing to shrink those “mail days” into milliseconds.