In the fast-moving field of unmanned aerial vehicles (UAVs), “listening” means far more than picking up sound. For a drone, to “listen” covers its entire suite of capabilities for perceiving, interpreting, and reacting to the data streams flowing from its environment and its operators. This perceptive intelligence underpins modern drone innovation, enabling everything from autonomous navigation to precision remote sensing and AI-driven functions. To understand what it means for a drone to “listen” is to grasp the core of how it operates and why it has the potential to transform industries.

Sensory Perception: How Drones “Listen” to Their Environment

A drone’s ability to “listen” begins with its array of sensors, each designed to capture a specific type of environmental data. These sensors act as the drone’s eyes, ears, and even its sense of touch, feeding a constant stream of information to its onboard processing units.

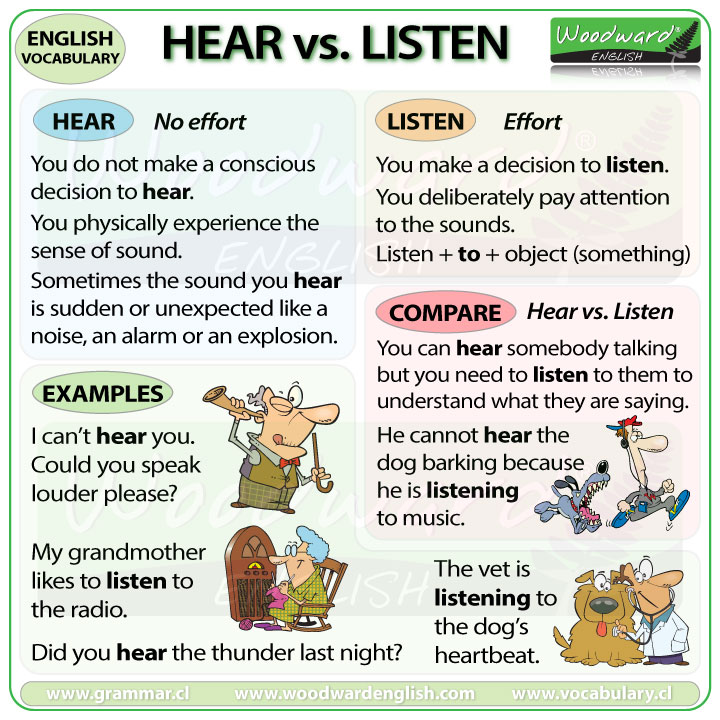

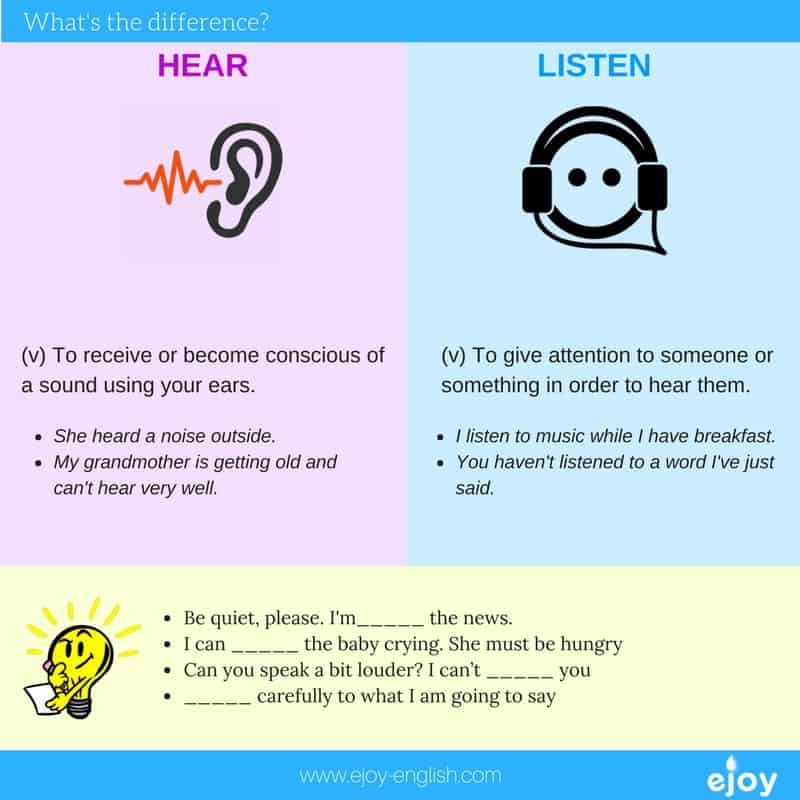

Acoustic Sensing and Noise Monitoring

While not as common as visual or inertial sensors, acoustic sensing plays a niche yet vital role, particularly in specific remote sensing applications. Microphones and advanced sound processing algorithms allow drones to “listen” for distinct auditory signatures. This can include monitoring wildlife populations by detecting specific animal calls without disturbing them, identifying anomalies in industrial machinery by listening for unusual vibrations or sounds, or even surveying human activity in remote areas. The challenge lies in filtering out the drone’s own operational noise, a feat achieved through sophisticated sound isolation and digital signal processing. As drone technology advances, acoustic listening is poised to contribute more significantly to environmental monitoring and infrastructure inspection.

Interpreting Environmental Data: Beyond the Visible Spectrum

Drones equipped with advanced payloads “listen” to the environment by capturing data across different parts of the electromagnetic spectrum. Lidar (Light Detection and Ranging) systems emit laser pulses and measure the time it takes for them to return, creating highly detailed 3D maps of terrain, vegetation, and structures. This allows drones to “listen” to the physical contours of the world, measuring distances, elevations, and volumes. Radar systems, by contrast, use radio waves, enabling them to “listen” through adverse conditions like fog, rain, or smoke, providing crucial data for navigation and object detection where optical sensors fail. Thermal cameras “listen” to infrared radiation, detecting heat signatures that reveal temperature differences, making them invaluable for search and rescue operations, inspecting solar panels for hot spots, or monitoring nocturnal wildlife activity. Hyperspectral and multispectral sensors “listen” to specific wavelength bands of light, providing detailed information about vegetation health, mineral composition, or water quality, far beyond what the human eye can perceive. These diverse data streams are the drone’s way of comprehensively “listening” to the subtle nuances of its surroundings.
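The time-of-flight idea behind lidar comes down to a single line of arithmetic: the pulse travels to the target and back, so the range is half the round-trip time multiplied by the speed of light. The following is a minimal sketch of that calculation; the function name and the sample timing are illustrative assumptions, not taken from any particular lidar unit.

```python
# Speed of light in vacuum (m/s); the slightly lower speed in air is ignored here.
SPEED_OF_LIGHT_M_S = 299_792_458.0


def lidar_range_m(round_trip_time_s: float) -> float:
    """Return the distance to a target given a laser pulse's round-trip time."""
    if round_trip_time_s < 0:
        raise ValueError("round-trip time must be non-negative")
    # The pulse covers the distance twice (out and back), hence the division by 2.
    return SPEED_OF_LIGHT_M_S * round_trip_time_s / 2.0


# A return that arrives 400 nanoseconds after emission is roughly 60 m away.
print(f"{lidar_range_m(400e-9):.2f} m")
```

The nanosecond scale of these timings is why lidar ranging depends on very precise clocks: an error of one nanosecond in the measured time shifts the range by about 15 cm.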

Visual and Optical Flow: The Drone’s “Sight” and Movement Interpretation

Visual cameras are perhaps the most intuitive way a drone “listens” to its environment. High-resolution cameras capture images and video, which are then processed by onboard computers to understand the visual landscape. Optical flow sensors, often small cameras pointing downwards, measure the apparent motion of the ground surface relative to the drone. By analyzing the shift in pixels between consecutive frames, these sensors allow the drone to “listen” to its own movement relative to the ground, providing crucial data for stable hovering, precise positioning without GPS, and low-altitude navigation. Combined with advanced computer vision algorithms, drones can identify objects, track targets, map terrain, and even understand semantic elements of their environment, like distinguishing between roads, buildings, and vegetation. This visual “listening” is fundamental for autonomous flight, object tracking, and accurate mapping.
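To make the pixel-shift idea concrete, here is a rough sketch of how a measured shift between two frames might be turned into a ground speed. It assumes a simple pinhole camera pointing straight down over flat ground and no rotation of the drone between frames; real optical flow modules typically also correct for rotation using gyroscope data. The function and parameter names are hypothetical.

```python
def ground_speed_m_s(
    pixel_shift_px: float,
    altitude_m: float,
    focal_length_px: float,
    frame_interval_s: float,
) -> float:
    """Estimate speed over ground from the pixel shift between two frames."""
    if focal_length_px <= 0 or frame_interval_s <= 0:
        raise ValueError("focal length and frame interval must be positive")
    # Pinhole model: one pixel spans (altitude / focal length) metres on the ground.
    metres_per_pixel = altitude_m / focal_length_px
    return pixel_shift_px * metres_per_pixel / frame_interval_s


# 4 px of shift at 2 m altitude, 400 px focal length, 50 frames per second:
# about 1 m/s over the ground.
print(ground_speed_m_s(4.0, 2.0, 400.0, 0.02))
```

Note that the same pixel shift means a higher speed at a higher altitude, which is why optical flow sensors are usually paired with a height measurement such as a downward rangefinder.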

Autonomous Decision-Making: Processing the “Heard” Information

Merely collecting data is insufficient; the true power of a drone’s “listening” capability lies in its capacity to process, interpret, and act upon that information autonomously. This processing forms the intelligence layer, transforming raw data into actionable insights and flight commands.

AI and Machine Learning for Data Interpretation

Artificial intelligence and machine learning algorithms are the brains behind a drone’s perceptive intelligence. They enable the drone to make sense of the vast amounts of data it “hears” from its sensors. Object recognition algorithms can identify specific items in an image or video stream, such as a person, a vehicle, or a defect on a structure. Predictive analytics allow drones to anticipate future states based on current and historical data, for instance, predicting the trajectory of a moving object for safer obstacle avoidance. Machine learning models can be trained to detect anomalies, such as unusual heat signatures from a thermal camera or irregular patterns in hyperspectral data, signaling potential issues like equipment failure or disease outbreaks. This intelligent interpretation elevates the drone from a data collector to a sophisticated analytical tool, capable of understanding and responding to its environment with minimal human intervention.
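As a simple illustration of trajectory prediction, the sketch below extrapolates a tracked object's position by assuming it keeps moving at constant velocity. This is a deliberately basic stand-in rather than a machine learning model; learned predictors and filters such as the Kalman filter build on the same idea of estimating motion from past observations. All names here are hypothetical.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def predict_position(
    previous: Vec3, current: Vec3, dt_s: float, horizon_s: float
) -> Vec3:
    """Extrapolate an object's position horizon_s seconds ahead.

    previous and current are positions observed dt_s seconds apart.
    """
    if dt_s <= 0:
        raise ValueError("dt_s must be positive")
    # Velocity estimated from the last two observations.
    velocity = tuple((c - p) / dt_s for p, c in zip(previous, current))
    # Constant-velocity assumption: position advances linearly with time.
    return tuple(c + v * horizon_s for c, v in zip(current, velocity))


# An object that moved from (0, 0, 10) to (1, 0.5, 10) in 0.5 s,
# predicted 2 s into the future.
print(predict_position((0.0, 0.0, 10.0), (1.0, 0.5, 10.0), 0.5, 2.0))
```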

Real-time Obstacle Avoidance and Navigation

One of the most critical applications of a drone’s “listening” intelligence is real-time obstacle avoidance. By continuously processing data from lidar, radar, ultrasonic, and vision sensors, the drone builds a dynamic 3D model of its immediate surroundings. It “listens” for potential collisions, identifying obstacles in its flight path – be it trees, buildings, power lines, or even birds. Advanced algorithms then calculate alternative flight paths in milliseconds, allowing the drone to autonomously reroute, hover, or land to prevent impact. This sophisticated navigational “listening” is essential for safe autonomous operations, particularly in complex or dynamic environments, ensuring the safety of both the drone and its surroundings.
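One small building block of obstacle avoidance can be sketched as a geometric check: does the straight line from the drone to its next waypoint pass too close to any detected obstacle? The example below treats obstacles as points in 3D space and uses a fixed safety clearance. Both are simplifying assumptions, the names are hypothetical, and a real planner would go on to search for an alternative path when the check fails.

```python
import math
from typing import Iterable, Sequence


def distance_to_segment(
    point: Sequence[float], start: Sequence[float], end: Sequence[float]
) -> float:
    """Shortest distance from a point to the line segment start-end."""
    seg = [e - s for s, e in zip(start, end)]
    rel = [p - s for s, p in zip(start, point)]
    seg_len_sq = sum(c * c for c in seg)
    if seg_len_sq == 0:
        return math.dist(point, start)  # start and end coincide
    # Project the point onto the segment and clamp to its endpoints.
    t = sum(r * c for r, c in zip(rel, seg)) / seg_len_sq
    t = max(0.0, min(1.0, t))
    closest = [s + t * c for s, c in zip(start, seg)]
    return math.dist(point, closest)


def path_is_clear(
    start: Sequence[float],
    goal: Sequence[float],
    obstacles: Iterable[Sequence[float]],
    clearance_m: float,
) -> bool:
    """True if every obstacle is at least clearance_m away from the path."""
    return all(distance_to_segment(o, start, goal) >= clearance_m for o in obstacles)


start, goal = (0.0, 0.0, 5.0), (10.0, 0.0, 5.0)
print(path_is_clear(start, goal, [(5.0, 1.0, 5.0)], clearance_m=2.0))  # too close
print(path_is_clear(start, goal, [(5.0, 3.0, 5.0)], clearance_m=2.0))  # far enough
```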

Swarm Intelligence and Collaborative Listening

The concept of a single drone “listening” can be expanded to multiple drones collaboratively “listening” and sharing information. Swarm intelligence enables groups of drones to communicate and cooperate, pooling their collective sensory data to achieve common goals more efficiently. For example, in a large-scale mapping mission, multiple drones can cover an area faster by sharing real-time positional and visual data, dynamically adjusting their flight paths to avoid redundant coverage or fill in gaps. In search and rescue, a drone swarm can collaboratively “listen” for survivors using thermal or acoustic sensors, sharing detected anomalies to rapidly pinpoint locations. This collaborative “listening” allows for a distributed, resilient, and more comprehensive understanding of an environment than any single drone could achieve, pushing the boundaries of what’s possible in large-scale autonomous operations.

Human-Drone Interaction: Listening to Commands and Intent

Beyond environmental perception, a drone’s ability to “listen” also extends to understanding and executing commands from its human operators or pre-programmed mission plans. This interaction layer is crucial for effective deployment and integration into human-centric workflows.

Advanced Control Interfaces

While traditional radio controllers remain prevalent, innovations in human-drone interaction are enabling drones to “listen” to commands in more intuitive ways. Voice command systems, still in their nascent stages for complex drone operations, allow operators to issue simple commands verbally, freeing their hands for other tasks. Gesture control interfaces allow drones to “listen” to specific hand movements or body language, translating them into flight maneuvers or camera controls. Though experimental, brain-computer interfaces (BCIs) represent the ultimate frontier, where a drone could potentially “listen” to an operator’s thoughts, enabling control through direct neural signals. These advancements aim to make drone interaction more natural and seamless, allowing humans to communicate their intent more directly.

Mission Planning and User Feedback

Drones also “listen” to predefined mission plans, which are essentially detailed sets of instructions uploaded prior to flight. These plans dictate flight paths, altitudes, camera settings, and data collection triggers, allowing the drone to execute complex tasks autonomously. During flight, drones can “listen” for real-time adjustments or feedback from operators via ground control software, allowing for dynamic changes to the mission based on evolving circumstances. This iterative “listening” between the drone’s autonomous system and human oversight ensures flexibility and responsiveness in dynamic operational scenarios.
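A mission plan of this kind can be pictured as an ordered list of waypoints, each carrying its own altitude and data collection settings. The sketch below is a hypothetical representation for illustration only and does not follow any particular autopilot or ground control format. It also shows how an in-flight adjustment from the operator might be applied without altering the original plan.

```python
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class Waypoint:
    latitude_deg: float
    longitude_deg: float
    altitude_m: float
    capture_image: bool = False  # data collection trigger at this point


def adjust_altitude(
    plan: List[Waypoint], index: int, new_altitude_m: float
) -> List[Waypoint]:
    """Return a copy of the plan with one waypoint's altitude changed."""
    updated = list(plan)
    updated[index] = replace(plan[index], altitude_m=new_altitude_m)
    return updated


plan = [
    Waypoint(47.3977, 8.5456, 30.0),
    Waypoint(47.3980, 8.5460, 40.0, capture_image=True),
    Waypoint(47.3983, 8.5464, 30.0),
]

# Operator feedback during flight: raise the second waypoint to 60 m.
revised = adjust_altitude(plan, 1, 60.0)
print(revised[1])
```

Keeping waypoints immutable and returning a new plan means the uploaded mission stays available for comparison or rollback after an operator's change.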

Predictive Analytics and Anomaly Detection

In a broader sense, drones can be programmed to “listen” for specific operational parameters or anomalies in their own performance data. Through self-monitoring and predictive analytics, a drone’s internal systems can “listen” for signs of impending component failure, battery degradation, or sensor malfunctions. By proactively identifying these issues, the drone can alert operators, recommend maintenance, or even initiate an autonomous return-to-home procedure, enhancing safety and reliability. This internal “listening” is a critical aspect of intelligent drone management and preventive maintenance.
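A basic form of this self-monitoring can be sketched as a statistical check: compare the latest reading of a parameter, such as battery voltage, against its recent history and flag it when it deviates by more than a few standard deviations. The threshold, the sample values, and the function name below are assumptions for illustration; production systems generally use richer models than this.

```python
from statistics import mean, stdev
from typing import Sequence


def is_anomalous(
    history: Sequence[float], latest: float, threshold_sigma: float = 3.0
) -> bool:
    """Flag a reading that lies far outside the spread of recent readings."""
    if len(history) < 2:
        return False  # not enough data to estimate a spread
    sigma = stdev(history)
    if sigma == 0:
        # History is perfectly flat, so any change at all is a deviation.
        return latest != history[0]
    return abs(latest - mean(history)) / sigma > threshold_sigma


# Recent battery voltage readings (volts), then two candidate new readings.
recent_voltage = [15.9, 16.0, 16.1, 16.0, 15.9, 16.1]
print(is_anomalous(recent_voltage, 16.05))  # within normal variation
print(is_anomalous(recent_voltage, 14.8))   # sudden sag worth an alert
```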

The Future of Drone Perception: Enhanced “Listening” Capabilities

The trajectory of drone innovation points towards even more sophisticated “listening” capabilities, driven by advancements in sensor technology, processing power, and artificial intelligence.

Hyper-Sensitive Sensors and Multi-Modal Fusion

Future drones will likely integrate even more diverse and sensitive sensors, pushing the boundaries of what they can perceive. This could include hyperspectral sensors with an even broader range of detectable wavelengths, miniature bio-sensors capable of detecting airborne pathogens, or quantum sensors that can “listen” to extremely subtle gravitational or magnetic field variations. The true breakthrough will come from multi-modal sensor fusion, where data from all these disparate sources is intelligently combined and correlated to create an extraordinarily rich and coherent understanding of the environment, surpassing human perceptual abilities.
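One of the simplest forms of sensor fusion is inverse-variance weighting, in which each sensor's estimate of the same quantity is weighted by how much it is trusted. The sketch below fuses altitude readings under the assumption that the sensors' errors are independent and unbiased. The sensor values and variances are made up for illustration, and full multi-modal fusion involves far more than this.

```python
from typing import Sequence, Tuple


def fuse_estimates(estimates: Sequence[Tuple[float, float]]) -> Tuple[float, float]:
    """Combine (value, variance) pairs into one estimate and its variance."""
    if not estimates:
        raise ValueError("at least one estimate is required")
    if any(variance <= 0 for _, variance in estimates):
        raise ValueError("variances must be positive")
    # Lower variance means higher confidence, hence a larger weight.
    weights = [1.0 / variance for _, variance in estimates]
    total_weight = sum(weights)
    fused_value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total_weight
    fused_variance = 1.0 / total_weight
    return fused_value, fused_variance


# Barometer: 50.0 m with variance 4.0; lidar altimeter: 52.0 m with variance 1.0.
value, variance = fuse_estimates([(50.0, 4.0), (52.0, 1.0)])
print(f"fused altitude {value:.1f} m, variance {variance:.1f}")
```

The fused result sits closer to the more precise sensor, and its variance is lower than that of either input, which is the basic payoff of combining sensors.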

Edge Computing and Onboard Processing

To handle the immense data streams from these advanced sensors in real time, future drones will increasingly rely on edge computing. This involves powerful processors directly on the drone, enabling complex AI and machine learning computations to occur onboard rather than transmitting data to a ground station for processing. Because the round trip over a radio link is removed, drones can “listen,” process, and react with far lower latency, unlocking new levels of autonomy and responsiveness for critical applications like high-speed autonomous racing, rapid emergency response, or highly dynamic environmental monitoring.

Ethical Considerations in Autonomous Listening

As drones become more adept at “listening” to their environment, ethical considerations will become paramount. The ability to collect vast amounts of detailed visual, acoustic, and other sensory data raises questions about privacy, data security, and the potential for misuse. Developers and operators must establish robust frameworks for responsible data collection, storage, and usage, ensuring that the drone’s powerful perceptive intelligence is deployed in ways that benefit society while respecting individual rights and public trust. The future of “listening” drones requires not just technological advancement, but also a commitment to ethical governance and transparency.