The Hypertext Transfer Protocol (HTTP), the application protocol that underpins the web, has evolved significantly to keep pace with the growing demands of the digital age. HTTP/1.1 served well for years, but its limitations became increasingly apparent as web pages grew more complex, mobile traffic surged, and users came to expect near-instantaneous loading. HTTP/2, standardized in 2015, was designed to address these shortcomings and make web communication faster and more efficient. This article covers the core principles, key features, and practical impact of HTTP/2.

The Genesis of HTTP/2: Addressing HTTP/1.1’s Bottlenecks

HTTP/1.1, while robust, suffered from several critical design limitations that hampered performance, especially on modern, data-rich websites. Understanding these bottlenecks is crucial to appreciating the advancements brought by HTTP/2.

Head-of-Line Blocking

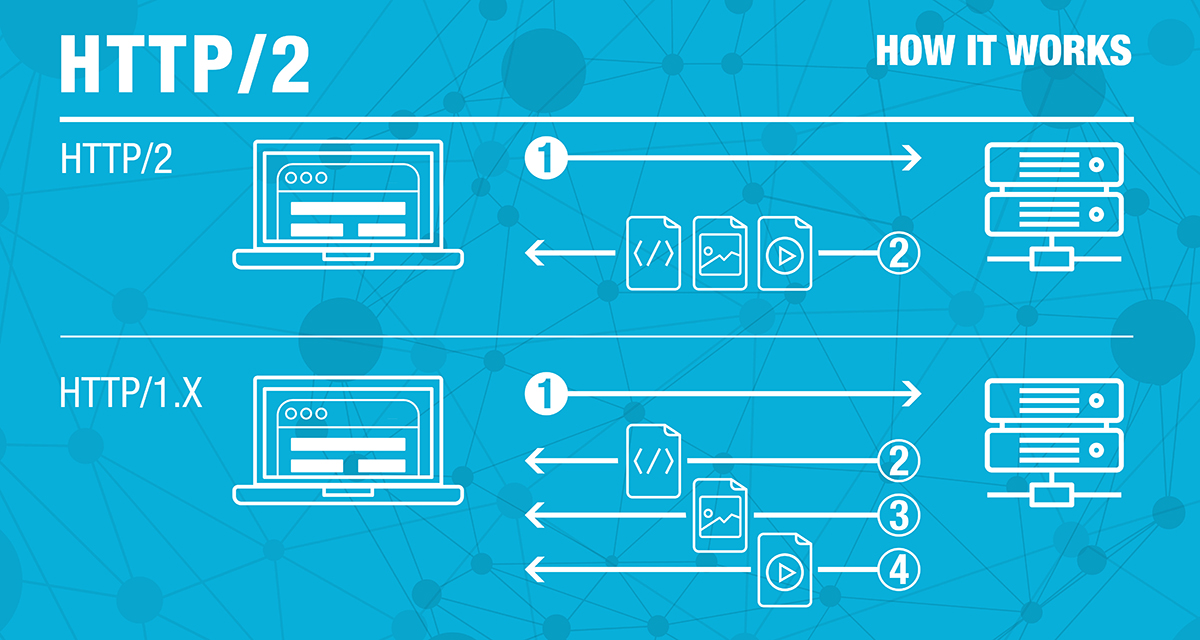

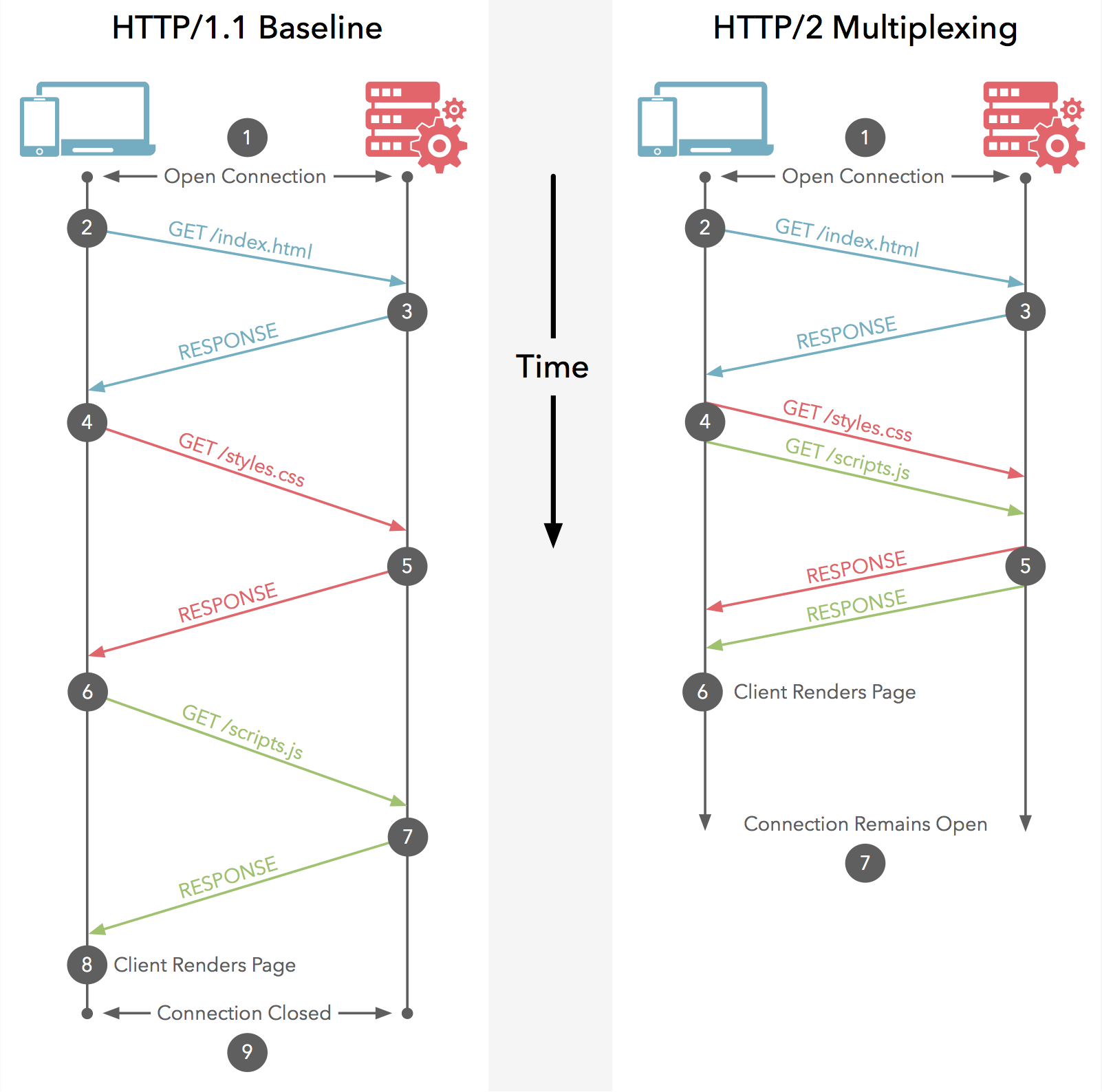

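One of the most significant issues with HTTP/1.1 was that each TCP connection could carry only one request/response exchange at a time. Connections could be reused across requests, and pipelining allowed several requests to be sent without waiting for each response, but responses still had to be returned in the order the requests were made, and pipelining was rarely enabled in practice. When multiple resources (HTML, CSS, JavaScript, images) were requested on a connection, they were therefore delivered sequentially. If one resource took a long time to download, it blocked everything queued behind it, even if the subsequent resources were small and ready to be sent. This “head-of-line blocking” meant that a single slow download could delay the rendering of an entire webpage, leading to a frustrating user experience.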
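The effect can be illustrated with a small calculation. The following is a minimal sketch, assuming responses on one connection are delivered strictly in order; the function name and the durations are made up for illustration.

```python
def in_order_finish_times(durations_ms):
    """Return when each response finishes if responses are delivered one after another."""
    finish_times = []
    clock = 0
    for duration in durations_ms:
        clock += duration  # each response must wait for all earlier ones
        finish_times.append(clock)
    return finish_times


# Hypothetical workload: one slow resource followed by two small ones.
durations_ms = [2000, 100, 100]
print(in_order_finish_times(durations_ms))  # [2000, 2100, 2200]
```

The two small resources need only 100 ms each, yet neither completes before the 2000 ms mark because they are queued behind the slow one.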

Multiple TCP Connections

To mitigate head-of-line blocking to some extent, browsers resorted to opening multiple TCP connections to the same server. While this helped parallelize requests, it came with its own set of problems. Each TCP connection has overhead associated with its establishment and maintenance, including the TCP handshake process (SYN, SYN-ACK, ACK), which adds latency. Furthermore, maintaining numerous concurrent connections consumes more server resources and can strain network infrastructure. The browser’s ability to open parallel connections was also limited, typically to around 6-8 connections per host.

Verbose Headers

HTTP/1.1 headers, while essential for conveying request and response metadata, could be quite verbose. With each request, identical information like cookies, user agent strings, and accepted content types were sent repeatedly, consuming valuable bandwidth and adding processing overhead on both the client and server. This redundancy became a significant factor in performance degradation, especially on mobile devices with limited bandwidth.
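To get a rough sense of the cost, the sketch below counts the bytes that a fixed set of headers occupies on the wire in HTTP/1.1 and multiplies by the number of requests. The header values and the request count are illustrative assumptions, not measurements.

```python
def header_bytes(headers):
    """Size of the header block in HTTP/1.1 text form: 'Name: value' plus CRLF per line."""
    return sum(len(f"{name}: {value}\r\n".encode("ascii")) for name, value in headers.items())


# Hypothetical headers that a browser would repeat on every request.
repeated_headers = {
    "User-Agent": "ExampleBrowser/1.0",
    "Accept": "text/html,application/xhtml+xml",
    "Cookie": "session=abc123; theme=dark",
}

requests_per_page = 50  # assumed number of resources on a page
per_request = header_bytes(repeated_headers)
print(per_request, "bytes per request,", per_request * requests_per_page, "bytes per page")
```

Real cookies and user agent strings are often much longer than these placeholders, so the repeated overhead on a real page can be considerably larger.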

Inefficient Resource Prioritization

HTTP/1.1 offered limited mechanisms for clients to communicate the relative importance of different resources to the server. While some rudimentary prioritization could be inferred through the order of requests, it was far from optimal. This meant that critical resources needed for initial page rendering might be delayed in favor of less important ones, further impacting perceived load times.

HTTP/2’s Architectural Innovations

HTTP/2 was not a complete rewrite of the HTTP protocol but rather an evolution. It preserves HTTP’s existing semantics (methods, status codes, header fields, and URIs) and changes how messages are framed and carried over the connection. It still runs over TCP, so the changes sit in the HTTP layer rather than in the transport layer. The key to its success lies in its binary framing layer.

Binary Framing Layer

At the heart of HTTP/2 lies the binary framing layer. Instead of text-based request/response messages, HTTP/2 breaks down all communications into smaller, manageable binary-encoded frames. These frames are then multiplexed over a single TCP connection. This fundamental shift allows for a more efficient and flexible exchange of data.
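Every HTTP/2 frame begins with a fixed 9-octet header: a 24-bit payload length, an 8-bit type, an 8-bit flags field, and a reserved bit followed by a 31-bit stream identifier. The sketch below packs and parses that header with Python's standard library; the helper names are my own, and payload handling is left out.

```python
DATA = 0x0        # frame type for DATA frames
END_STREAM = 0x1  # flag marking the last frame of a stream


def pack_frame_header(length, frame_type, flags, stream_id):
    """Build the 9-octet frame header."""
    if not 0 <= length < 2**24:
        raise ValueError("length must fit in 24 bits")
    if not 0 <= stream_id < 2**31:
        raise ValueError("stream_id must fit in 31 bits")
    return (
        length.to_bytes(3, "big")
        + bytes([frame_type, flags])
        + stream_id.to_bytes(4, "big")  # top (reserved) bit stays 0
    )


def parse_frame_header(data):
    """Split a 9-octet header into (length, type, flags, stream_id)."""
    if len(data) != 9:
        raise ValueError("frame header is exactly 9 octets")
    length = int.from_bytes(data[0:3], "big")
    frame_type, flags = data[3], data[4]
    stream_id = int.from_bytes(data[5:9], "big") & 0x7FFFFFFF  # mask the reserved bit
    return length, frame_type, flags, stream_id


header = pack_frame_header(5, DATA, END_STREAM, 3)
print(header.hex())                # 000005000100000003
print(parse_frame_header(header))  # (5, 0, 1, 3)
```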

Multiplexing

Multiplexing is arguably the most significant advantage of HTTP/2. It allows multiple requests and responses to be interleaved and sent concurrently over a single TCP connection without blocking each other. Each frame is tagged with a stream identifier, allowing the receiving end to reassemble the data in the correct order for each individual request. This effectively eliminates head-of-line blocking at the HTTP level: if a large image takes time to download, it won’t prevent smaller CSS or JavaScript files from being delivered at the same time. Head-of-line blocking can still occur at the TCP level, however, because a lost packet stalls every stream on the connection until it is retransmitted.
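The interleaving and reassembly can be sketched in a few lines. This is a toy model, not a protocol implementation: frames are plain `(stream_id, chunk)` tuples, and the round-robin schedule is an assumption, since a real sender is free to order frames differently.

```python
from collections import defaultdict
from itertools import zip_longest


def split_into_chunks(payload, size):
    return [payload[i:i + size] for i in range(0, len(payload), size)]


def interleave(streams, frame_size):
    """Take one frame from each stream in turn until all streams are drained."""
    per_stream = [
        [(stream_id, chunk) for chunk in split_into_chunks(body, frame_size)]
        for stream_id, body in streams.items()
    ]
    frames = []
    for round_of_frames in zip_longest(*per_stream):
        frames.extend(frame for frame in round_of_frames if frame is not None)
    return frames


def reassemble(frames):
    """Group chunks by stream identifier, preserving order within each stream."""
    bodies = defaultdict(bytes)
    for stream_id, chunk in frames:
        bodies[stream_id] += chunk
    return dict(bodies)


# Hypothetical responses: one large body and two small ones.
streams = {1: b"I" * 10, 3: b"css", 5: b"js"}
frames = interleave(streams, frame_size=4)
print(frames)
assert reassemble(frames) == streams
```

In the printed frame list, the small responses on streams 3 and 5 are complete after the first round, while stream 1 is still sending.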

Stream Prioritization

HTTP/2 introduces a sophisticated mechanism for stream prioritization. Clients can assign a weight (an integer from 1 to 256) and a dependency to each stream, signaling to the server which resources are more critical for rendering the page. For example, the HTML document would likely have a high priority, followed by essential CSS and JavaScript. These signals are advisory: they help a server allocate resources more intelligently so that critical content is delivered first, which can improve perceived performance, but the server is not required to follow them. Because implementations supported the scheme inconsistently, the 2022 revision of the HTTP/2 specification (RFC 9113) deprecated it.
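The weight part of this scheme amounts to proportional sharing among streams that depend on the same parent. Below is a minimal sketch, assuming a fixed capacity to divide and ignoring the dependency tree entirely; the stream names and numbers are illustrative.

```python
def share_capacity(weights, capacity):
    """Split capacity among sibling streams in proportion to their weights."""
    for name, weight in weights.items():
        if not 1 <= weight <= 256:
            raise ValueError(f"weight for {name!r} must be between 1 and 256")
    total = sum(weights.values())
    return {name: capacity * weight / total for name, weight in weights.items()}


# Hypothetical sibling streams competing for 100 units of bandwidth.
print(share_capacity({"style.css": 3, "photo.jpg": 1}, capacity=100))
# {'style.css': 75.0, 'photo.jpg': 25.0}
```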

Header Compression (HPACK)

To combat the verbosity of HTTP/1.1 headers, HTTP/2 employs HPACK (Header Compression for HTTP/2). HPACK combines three techniques: a static table of common header fields defined in the specification, a dynamic table of header fields seen earlier on the connection, which the encoder and decoder keep in sync, and Huffman coding for literal strings. When a header is sent again, it can be represented by a simple index into one of these tables rather than by the full name-value pair. This significantly reduces the amount of data transmitted, especially for requests that share many common header fields, leading to faster loading times and reduced bandwidth consumption.
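The indexing idea can be shown with a deliberately simplified table. This is not HPACK: there is no static table, no size limit or eviction, no Huffman coding, and the index numbering differs from the real format. The class name and the tuple-based encoding are assumptions made for illustration.

```python
class ToyHeaderTable:
    """Shared-state table: both sides add entries in the same order."""

    def __init__(self):
        self.entries = []  # list of (name, value) pairs

    def encode(self, headers):
        encoded = []
        for pair in headers:
            if pair in self.entries:
                encoded.append(("index", self.entries.index(pair)))
            else:
                self.entries.append(pair)
                encoded.append(("literal", pair))
        return encoded

    def decode(self, encoded):
        headers = []
        for kind, value in encoded:
            if kind == "index":
                headers.append(self.entries[value])
            else:
                self.entries.append(value)  # mirror the encoder's table
                headers.append(value)
        return headers


encoder, decoder = ToyHeaderTable(), ToyHeaderTable()
headers = [("user-agent", "ExampleBrowser/1.0"), ("cookie", "session=abc123")]

first = encoder.encode(headers)   # sent as full literals
second = encoder.encode(headers)  # sent as small indexes
print(second)  # [('index', 0), ('index', 1)]
assert decoder.decode(first) == headers
assert decoder.decode(second) == headers
```

The second request carries two small integers instead of the full strings, which is the effect the paragraph above describes.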

Server Push

HTTP/2 introduces the concept of “Server Push.” In traditional HTTP/1.1, a browser requests an HTML file, parses it, and then discovers the need for other resources (like CSS, JavaScript, or images), which it then requests individually. Server Push allows the server to proactively send resources to the client before the client explicitly requests them. For example, when a browser requests an HTML page, the server can anticipate that the browser will need the associated CSS and JavaScript files and send them along in advance. This can reduce the number of round trips required, accelerating page loads particularly on high-latency connections. In practice, push proved difficult to use well, since the server cannot know what the client already has cached, and Chrome has since disabled it by default.
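The round-trip saving can be expressed as a back-of-the-envelope model. The sketch below assumes transfer time is negligible and that the stylesheet is only discovered after the HTML arrives; the function name and the 100 ms round-trip time are illustrative.

```python
def time_until_stylesheet_ms(rtt_ms, pushed):
    """Latency until a stylesheet referenced by the HTML has arrived."""
    # Without push: one round trip for the HTML, then a second one for the stylesheet.
    # With push: the stylesheet is sent alongside the HTML in the first round trip.
    round_trips = 1 if pushed else 2
    return round_trips * rtt_ms


print(time_until_stylesheet_ms(100, pushed=False))  # 200
print(time_until_stylesheet_ms(100, pushed=True))   # 100
```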

Single TCP Connection

By leveraging multiplexing and header compression, HTTP/2 can effectively achieve all its communication goals over a single TCP connection per origin. This reduces the overhead associated with establishing and maintaining multiple connections, leading to lower latency and more efficient resource utilization on both the client and server.

Benefits and Impact of HTTP/2

The architectural changes in HTTP/2 translate into tangible benefits for users, developers, and infrastructure providers.

Faster Website Loading Times

The most immediate and noticeable benefit of HTTP/2 is improved performance. By removing head-of-line blocking at the HTTP level through multiplexing and by reducing header overhead, pages with many resources generally load faster. The gain is often largest on high-latency connections, such as mobile networks, where each avoided round trip matters most.

Improved User Experience

Faster loading times directly translate to a better user experience. Visitors are more likely to engage with a website that responds quickly, leading to lower bounce rates and increased conversions. The responsiveness of interactive elements and the overall fluidity of browsing are also enhanced.

Reduced Server Load

The efficiency gains of HTTP/2 can also lead to reduced server load. With fewer open TCP connections and less redundant data to process, servers can handle more concurrent requests with the same hardware. This can lead to cost savings and improved scalability.

Enhanced Mobile Performance

Mobile users, often on less reliable and slower networks, benefit immensely from HTTP/2. The protocol’s ability to efficiently handle requests and reduce data transmission makes mobile web browsing a much smoother experience.

Easier Deployment and Migration

HTTP/2 is designed to be largely transparent to application-level code. Most existing web applications can be migrated to use HTTP/2 simply by configuring their web servers to support it. This ease of adoption has contributed to its rapid widespread implementation.

Challenges and Considerations

While HTTP/2 offers substantial advantages, there are some challenges and considerations to keep in mind during adoption.

TLS Encryption Requirement

While the HTTP/2 specification itself does not mandate encryption, the major browsers have implemented HTTP/2 only over TLS (Transport Layer Security), that is, over HTTPS. The client and server agree to use HTTP/2 during the TLS handshake through the ALPN extension, using the protocol identifier “h2”. This means that to leverage HTTP/2 in modern browsers, websites must be served over HTTPS. While this is a security best practice, it requires obtaining and configuring a TLS certificate.
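Whether a server agrees to speak HTTP/2 over TLS can be checked by offering the “h2” protocol identifier during the handshake and reading back what was selected. The sketch below uses only Python's standard `ssl` and `socket` modules; the helper names are my own, and the host passed to it is whatever server you want to test.

```python
import socket
import ssl


def make_h2_client_context():
    """TLS client context that offers HTTP/2 first, then HTTP/1.1, via ALPN."""
    context = ssl.create_default_context()  # verifies certificates and hostnames
    context.set_alpn_protocols(["h2", "http/1.1"])
    return context


def negotiated_protocol(host, port=443, timeout=5.0):
    """Connect to host and return the ALPN protocol the server selected, or None."""
    context = make_h2_client_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.selected_alpn_protocol()
```

Calling `negotiated_protocol("example.com")`, with the hostname as a placeholder, would return `"h2"` for a server that supports HTTP/2 over TLS, `"http/1.1"` for one that does not, or `None` if the server ignores ALPN. This only performs the handshake; it does not send any HTTP/2 frames.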

Network Middleboxes

Some older network devices, known as “middleboxes,” may not be fully compliant with HTTP/2 and can sometimes interfere with its proper functioning. This can lead to performance issues or even prevent HTTP/2 connections from being established. However, as HTTP/2 becomes more prevalent, this issue is becoming less common.

Server-Side Implementation Complexity

While migration is often straightforward at the application level, implementing HTTP/2 efficiently on the server side requires careful configuration and tuning. Understanding the nuances of multiplexing, prioritization, and server push is crucial for maximizing its benefits.

The Future of Web Protocols

HTTP/2 has laid a strong foundation for a more performant and efficient internet. It addressed many of the limitations of its predecessor and paved the way for further innovation. Its successor, HTTP/3, was standardized in 2022 and runs over the QUIC transport protocol instead of TCP, which removes transport-level head-of-line blocking and further improves performance and reliability, especially in challenging network conditions. HTTP/2 nonetheless remains widely deployed, delivering a faster and more responsive experience to a very large number of users. Its adoption marks a crucial step in the ongoing evolution of the internet, helping it meet the demands of an increasingly connected and data-driven world.