Beyond Liquid: Filtrate in the Digital Age of Drones

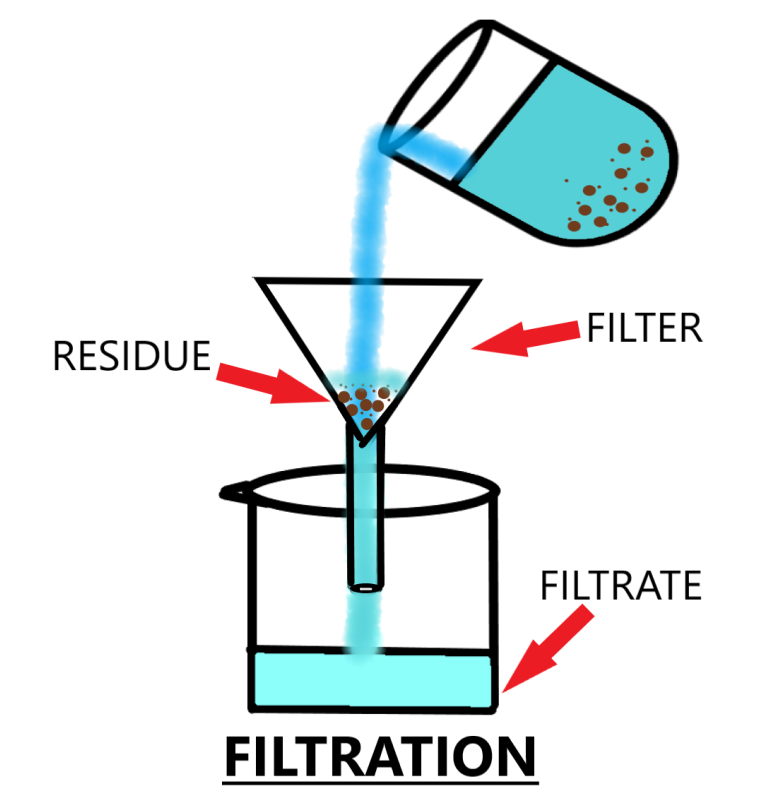

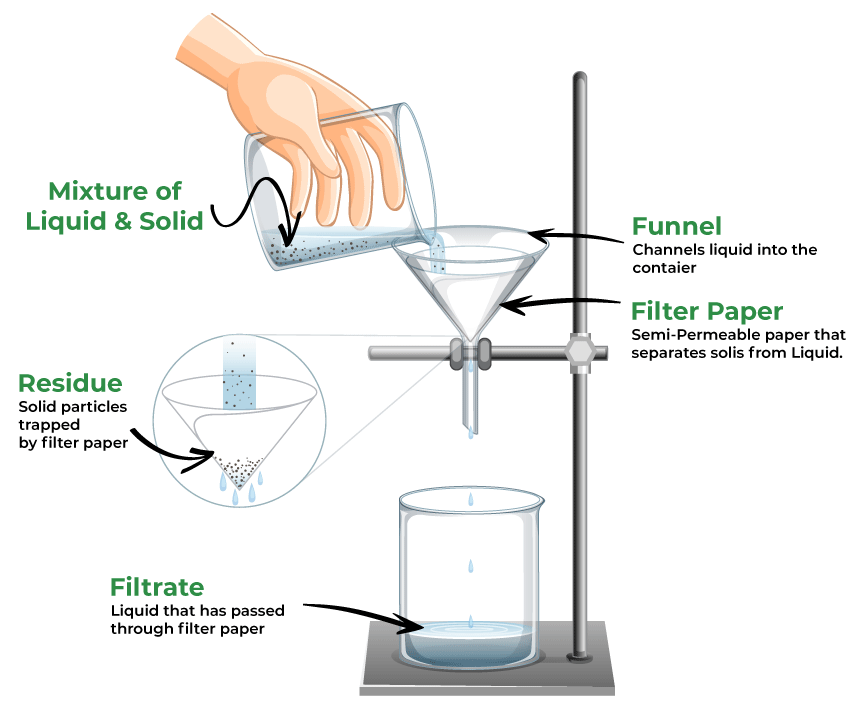

The term “filtrate” traditionally evokes images of laboratory settings, where a liquid or gas passes through a filter, leaving behind impurities and yielding a purified substance. In this context, the filtrate is the valuable, refined output—the essence extracted from a raw mixture. While drones certainly don’t filter physical liquids in the sky, the underlying principle of filtration is profoundly relevant to their operation and the revolutionary insights they provide.

In the realm of drone technology, particularly within advanced applications like mapping, remote sensing, and autonomous flight, “filtrate” takes on a powerful metaphorical meaning. Here, filtrate refers to the refined, processed, and actionable data derived from the often noisy, redundant, or incomplete raw inputs collected by a drone’s myriad sensors. Just as a physical filter separates desired elements from unwanted ones, digital filtration processes on drone data strip away noise, correct errors, and extract meaningful information, transforming raw observations into invaluable intelligence. This digital filtrate is the clean, dependable foundation upon which sophisticated drone functionalities and analytical applications are built.

The journey from raw sensor readings to meaningful filtrate is a complex one, involving sophisticated algorithms and computational power. Drones, operating in dynamic and often challenging environments, collect vast amounts of data—from precise GPS coordinates and inertial measurements to high-resolution imagery and intricate LiDAR point clouds. Without rigorous processing and filtration, this raw data would be largely unusable, leading to inaccurate navigation, flawed maps, or unreliable insights. Understanding “what is filtrate” in this digital context is crucial to appreciating the true innovation and utility of modern drone technology.

The Filtration Process: Refining Raw Drone Data

The transformation of raw drone data into valuable filtrate involves several layers of sophisticated processing, each designed to enhance accuracy, reduce noise, and extract specific information. These processes are fundamental to ensuring the reliability and utility of drone-derived insights.

Sensor Fusion and Navigation Filters

The most foundational layer of drone operation, particularly for stable flight and autonomous capabilities, is the real-time processing of sensor data. Drones are equipped with a suite of sensors: Inertial Measurement Units (IMUs) comprising accelerometers and gyroscopes, magnetometers, barometers, and Global Positioning System (GPS) receivers. Each of these sensors provides a piece of the puzzle regarding the drone’s position, velocity, and orientation. However, individual sensor readings are inherently noisy, susceptible to drift, and can be influenced by environmental factors.

This is where sensor fusion comes into play. Algorithms like Kalman filters and complementary filters are employed to combine data from multiple, diverse sensors. For instance, an accelerometer measures instantaneous acceleration at a high rate, but integrating those readings into velocity and position accumulates drift over time; a GPS receiver provides absolute position, but at a lower update rate and with its own error margins. A Kalman filter takes these disparate, noisy inputs, predicts the drone’s state (position, velocity, attitude) from a motion model, and then corrects that prediction each time new sensor data arrives, weighting each source by its estimated uncertainty. The output of such a filter, a stable and accurate estimate of the drone’s state, is a critical form of filtrate. This refined navigation data is what enables a drone to maintain a stable hover, follow a precise flight path, or execute complex autonomous maneuvers, forming the backbone of all advanced drone operations.
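The predict-and-correct cycle described above can be illustrated with a deliberately simplified, one-dimensional Kalman filter. This is a minimal sketch, not a flight-controller implementation: the class name `ScalarKalman`, the helper `fuse`, and the noise values `q` and `r` are assumptions chosen for illustration, and a real drone would track a multi-dimensional state.

```python
from dataclasses import dataclass


@dataclass
class ScalarKalman:
    """One-dimensional Kalman filter (illustrative names and values)."""
    x: float  # state estimate, e.g. altitude in metres
    p: float  # variance of the estimate
    q: float  # process noise variance added on every predict step (assumed)
    r: float  # measurement noise variance (assumed)

    def predict(self, velocity: float, dt: float) -> None:
        # Propagate the state with a motion input, e.g. a velocity
        # derived from the IMU, and grow the uncertainty.
        self.x += velocity * dt
        self.p += self.q

    def update(self, z: float) -> float:
        # Blend prediction and measurement according to their variances.
        k = self.p / (self.p + self.r)  # Kalman gain, between 0 and 1
        self.x += k * (z - self.x)
        self.p *= 1.0 - k
        return self.x


def fuse(measurements, velocity=0.0, dt=0.1):
    """Run the filter over a sequence of noisy position measurements."""
    kf = ScalarKalman(x=measurements[0], p=1.0, q=0.01, r=4.0)
    estimates = []
    for z in measurements:
        kf.predict(velocity, dt)
        estimates.append(kf.update(z))
    return estimates


# Noisy altitude readings around 10 m from a hovering drone (made-up data).
readings = [10.0, 12.0, 8.0, 11.0, 9.0, 10.5, 9.5]
smoothed = fuse(readings)
```

With a large measurement variance `r` relative to `q`, the filter trusts its own prediction more than any single reading, so `smoothed` varies far less than `readings`. Tuning those two values is the main design decision in practice.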

Remote Sensing Data Processing

Beyond flight stability, drone-based remote sensing applications generate even larger and more complex datasets that demand extensive filtration to become useful. Whether it’s photogrammetry, LiDAR, or multispectral imaging, the raw data needs significant refinement.

Photogrammetry, for example, involves capturing hundreds or thousands of overlapping images to create 2D maps or 3D models. The initial process generates a sparse point cloud from matched features across images. Filtering techniques are then applied to remove erroneous matches, outliers, and artifacts. Subsequent steps involve dense point cloud generation, often followed by further filtering to create clean Digital Surface Models (DSMs) and Digital Terrain Models (DTMs). The “filtrate” here is the geometrically accurate and clean 3D model or orthomosaic map, free from distortions and inconsistencies, ready for precise measurements and analysis.

LiDAR (Light Detection and Ranging) sensors emit laser pulses and measure the time it takes for them to return, creating a “point cloud” representing the 3D structure of the environment. Raw LiDAR point clouds often contain noise from atmospheric particles, multiple reflections, or sensor errors. Filtration in LiDAR involves several steps:

- Outlier removal: Identifying and eliminating points that do not belong to the actual surface.

- Ground classification: Separating ground points from non-ground points (buildings, vegetation, etc.) to generate bare-earth models.

- Noise reduction: Smoothing the point cloud to reduce random variations.

The resulting “filtrate” is a meticulously cleaned, classified, and often simplified point cloud that accurately depicts terrain, structures, and vegetation, enabling precise volume calculations, topographic mapping, and vegetation analysis.
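The outlier-removal step listed above is often done statistically: a point is dropped when it sits unusually far from its nearest neighbours. The following is a minimal brute-force sketch of that idea using only the Python standard library. The function name `remove_outliers` and the defaults `k=3` and `std_ratio=2.0` are assumptions for illustration; production tools use spatial indexes rather than this quadratic-time loop.

```python
import math
import statistics


def remove_outliers(points, k=3, std_ratio=2.0):
    """Statistical outlier removal on a list of (x, y, z) tuples.

    Assumes len(points) > k. Keeps a point when its mean distance to its
    k nearest neighbours is within std_ratio standard deviations of the
    average over the whole cloud.
    """
    mean_dists = []
    for i, p in enumerate(points):
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        mean_dists.append(sum(dists[:k]) / k)

    mu = statistics.mean(mean_dists)
    sigma = statistics.pstdev(mean_dists)
    threshold = mu + std_ratio * sigma
    return [p for p, d in zip(points, mean_dists) if d <= threshold]


# A flat 5 x 5 patch of ground plus one stray return high above it (made-up data).
cloud = [(float(x), float(y), 0.0) for x in range(5) for y in range(5)]
cloud.append((2.0, 2.0, 50.0))
cleaned = remove_outliers(cloud)
```

Ground classification and smoothing would follow as separate passes; they are not shown here.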

Multispectral and Hyperspectral Imaging involves capturing data across various wavelengths beyond the visible spectrum. This raw data can be affected by atmospheric conditions, sensor calibration issues, and noise. Filtration processes include:

- Atmospheric correction: Removing the effects of haze, aerosols, and water vapor to obtain true surface reflectance values.

- Radiometric calibration: Converting raw sensor values into consistent radiance or reflectance, so that brightness values are comparable across images and over time.

- Noise reduction: Eliminating random pixel variations.

The “filtrate” here is the spectrally accurate and clean image data, allowing for precise calculation of vegetation indices (e.g., NDVI), identification of plant stress, soil composition analysis, and mapping of different materials, which are crucial for precision agriculture and environmental monitoring.
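As a concrete case, NDVI is computed per pixel from the near-infrared (NIR) and red bands as (NIR - Red) / (NIR + Red). The sketch below applies that formula to two small reflectance grids. The function name `ndvi` is illustrative, and returning 0.0 where both bands are zero is an assumption made here to avoid division by zero, not a standard convention.

```python
def ndvi(nir, red):
    """Per-pixel NDVI for two equally sized 2D grids of reflectance values."""
    result = []
    for nir_row, red_row in zip(nir, red):
        row = []
        for n, r in zip(nir_row, red_row):
            total = n + r
            # Assumption: treat pixels with no signal in either band as 0.0.
            row.append((n - r) / total if total != 0 else 0.0)
        result.append(row)
    return result


# Made-up, already calibrated reflectance values for a 2 x 2 image.
nir_band = [[0.5, 0.2], [0.0, 0.4]]
red_band = [[0.1, 0.2], [0.0, 0.1]]
index = ndvi(nir_band, red_band)
```

Values near 1 generally indicate dense, healthy vegetation, while values near 0 or below indicate bare soil, water, or built surfaces. The result is only as trustworthy as the atmospheric and radiometric corrections applied beforehand.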

In all these remote sensing applications, the filtration process transforms raw, often overwhelming data into structured, accurate, and highly relevant information – the digital filtrate – that forms the basis for advanced analysis and decision-making.

The Value of Filtrate: Actionable Insights and Autonomous Capabilities

Producing the digital filtrate is not merely an academic exercise; this meticulously processed and refined data is the cornerstone of drone technology’s most impactful applications. It gives drones intelligent autonomy and gives users insights that are difficult to obtain by other means, driving innovation across numerous sectors.

Enhancing Autonomous Flight and Navigation

High-fidelity filtrate from sensor fusion and navigation filters translates directly into superior autonomous flight capabilities.

- Precise Navigation: With robust, filtered position and orientation data, combined with GNSS correction techniques such as RTK (Real-Time Kinematic) and PPK (Post-Processed Kinematic), drones can achieve centimeter-level positioning accuracy. This precision is vital for repetitive missions, highly accurate mapping, and seamless integration into automated workflows.

- Obstacle Avoidance: Filtered data from ultrasonic, optical, and LiDAR sensors allows a drone’s onboard intelligence to accurately perceive its environment, identify obstacles, and dynamically plan collision-free flight paths. This ensures operational safety and expands the range of environments in which drones can operate autonomously.

- AI Follow Mode: Advanced AI algorithms rely on a clean stream of visual and spatial data to identify, track, and follow moving targets. The “filtrate” in this context is the stabilized and isolated target information, enabling seamless and intelligent autonomous tracking without human intervention.
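For the obstacle-avoidance case above, one of the simplest filters is a sliding-window median over recent range readings, which suppresses isolated spikes such as a spurious ultrasonic echo. This is a minimal sketch; the class name `MedianRangeFilter` and the window size are assumptions for illustration, not taken from any particular autopilot.

```python
from collections import deque
from statistics import median


class MedianRangeFilter:
    """Sliding-window median over the most recent range readings."""

    def __init__(self, window=3):
        # deque with maxlen discards the oldest reading automatically.
        self.buffer = deque(maxlen=window)

    def update(self, reading_m):
        """Add a new reading in metres and return the filtered range."""
        self.buffer.append(reading_m)
        return median(self.buffer)


# Made-up ultrasonic readings with one spurious spike at 9.9 m.
raw = [2.0, 2.1, 9.9, 2.0, 1.9, 2.0]
range_filter = MedianRangeFilter(window=3)
filtered = [range_filter.update(r) for r in raw]
```

A median is preferred over a moving average here because a single bad reading never reaches the output, whereas an average would be pulled toward it. The trade-off is a delay of about half the window length before a genuine change in range is reported.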

Reliable filtered data is not just about stability; it’s about enabling a drone to make intelligent, real-time decisions, transforming it from a remote-controlled vehicle into an autonomous agent capable of complex tasks.

Driving Advanced Applications

The actionable insights derived from drone filtrate are revolutionizing industries and enabling new possibilities.

- Mapping and Surveying: The filtrate from photogrammetry and LiDAR (clean point clouds, accurate orthomosaics, DTMs, and DSMs) underpins the creation of highly precise 2D maps and 3D models. This allows for accurate volume calculations, site planning, progress monitoring in construction, and detailed topographic analysis. For instance, mapping the change in a stockpile volume month-over-month becomes a simple, automated process thanks to the clean, consistent data.

- Agriculture: Multispectral data filtrate provides detailed insights into crop health. By analyzing vegetation indices, farmers can identify areas of plant stress, nutrient deficiencies, or disease outbreaks, often before they are visible to the naked eye. This enables precision farming, targeted irrigation, optimized fertilization, and early intervention, which can increase yields and reduce resource waste.

- Infrastructure Inspection: Drones equipped with high-resolution cameras, thermal sensors, or LiDAR generate detailed filtrate that is invaluable for inspecting critical infrastructure like bridges, power lines, pipelines, and wind turbines. Filtered thermal data can reveal hot spots indicating electrical faults; filtered visual data can pinpoint structural damage or corrosion. This allows for proactive maintenance, improved safety, and reduced inspection costs and risks compared to traditional methods.

- Environmental Monitoring: Filtered data enables scientists and conservationists to monitor ecosystems, track wildlife populations, detect pollution sources, and assess disaster damage with unprecedented speed and detail. From mapping deforestation rates to identifying marine oil spills, the insights gleaned from drone filtrate are crucial for informed environmental management and conservation efforts.
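The stockpile example under Mapping and Surveying above comes down to simple arithmetic once a clean surface model exists: sum the height of each grid cell above a reference level and multiply by the cell area. The sketch below assumes a DSM stored as a 2D grid of elevations in metres, a flat base elevation, and square cells. The function name `stockpile_volume` and all values are hypothetical.

```python
def stockpile_volume(dsm, base_elevation, cell_size):
    """Volume in cubic metres above a flat base, from a gridded DSM.

    dsm            -- 2D list of surface elevations in metres
    base_elevation -- elevation of the assumed flat base in metres
    cell_size      -- edge length of one square grid cell in metres
    """
    cell_area = cell_size * cell_size
    heights = (max(h - base_elevation, 0.0) for row in dsm for h in row)
    return sum(heights) * cell_area


# Two made-up surveys of the same 2 x 2 grid, one month apart.
dsm_january = [[100.0, 101.0], [102.0, 100.0]]
dsm_february = [[100.0, 102.0], [103.0, 100.0]]

volume_january = stockpile_volume(dsm_january, base_elevation=100.0, cell_size=0.5)
volume_february = stockpile_volume(dsm_february, base_elevation=100.0, cell_size=0.5)
change = volume_february - volume_january  # 0.5 cubic metres added
```

The comparison is only meaningful if both surveys are georeferenced consistently and filtered the same way, which is why clean, consistent filtrate matters for this kind of month-over-month monitoring.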

In essence, the digital filtrate transforms raw observations into a powerful narrative, providing a clear, accurate, and unbiased understanding of the world from above. It moves drone technology beyond mere data collection to sophisticated data intelligence.

The Future of Data Filtration in Drones

The continuous evolution of drone technology is inextricably linked to advancements in data filtration. As drones become more sophisticated and their applications broaden, the demand for higher fidelity, more granular, and real-time filtrate will only intensify.

One significant trend is the increasing integration of Artificial Intelligence (AI) and Machine Learning (ML) into filtration pipelines. These algorithms can identify subtle patterns, predict outcomes, and perform classification tasks, and on some tasks they can match or surpass hand-tuned, rule-based methods in efficiency and accuracy. Future filtration systems are likely to use deep learning to automatically detect anomalies, segment objects, and build a semantic understanding of complex environments directly from raw sensor streams, further refining the “filtrate” into more insightful and contextually rich information.

Edge computing is another pivotal development. Performing sophisticated data filtration onboard the drone, rather than sending all raw data to a ground station for processing, reduces latency and bandwidth requirements and enables truly autonomous, real-time decision-making. Imagine a drone independently identifying a defect during an inspection and immediately rerouting to capture more detailed imagery of that specific area, all processed at the edge. This capability relies heavily on compact, efficient filtration algorithms that can run on limited onboard hardware.

The integration of drone filtrate with big data analytics platforms will unlock even deeper insights. By combining the precise, localized data from drones with broader datasets (e.g., historical weather patterns, demographic information, market trends), businesses and researchers can gain a holistic understanding of complex situations. The ability to cross-reference and correlate various data streams, all underpinned by high-quality drone filtrate, will drive new discoveries and optimize decision-making across virtually every sector.

Ultimately, the future of drone technology is a future of ever-improving filtrate. The ongoing quest for cleaner, more accurate, and more actionable data will continue to push the boundaries of what drones can achieve, solidifying their role as indispensable tools for innovation and progress in the digital age.