In the intricate world of drone technology, where precision flight, autonomous navigation, and sophisticated data collection are paramount, a fundamental mathematical concept underpins nearly every advanced capability: rotation math. Far more complex than simply turning left or right, rotation math deals with how objects reorient themselves in three-dimensional space. For drones, mastering this mathematical domain is not just an academic exercise; it’s the very bedrock of their ability to fly stably, navigate intelligently, capture accurate data, and execute complex autonomous missions. Without a deep understanding and precise application of rotation mathematics, the marvels of modern drone innovation—from AI follow modes to detailed 3D mapping—would simply not be possible.

The Cornerstone of Spatial Awareness and Control

At its core, a drone is an airborne robot. Like any robot operating in the physical world, it needs to understand its position and orientation to interact with its environment effectively. While position (where it is) is handled by translation, orientation (which way it’s facing) is entirely governed by rotation.

Defining Rotations in Three Dimensions

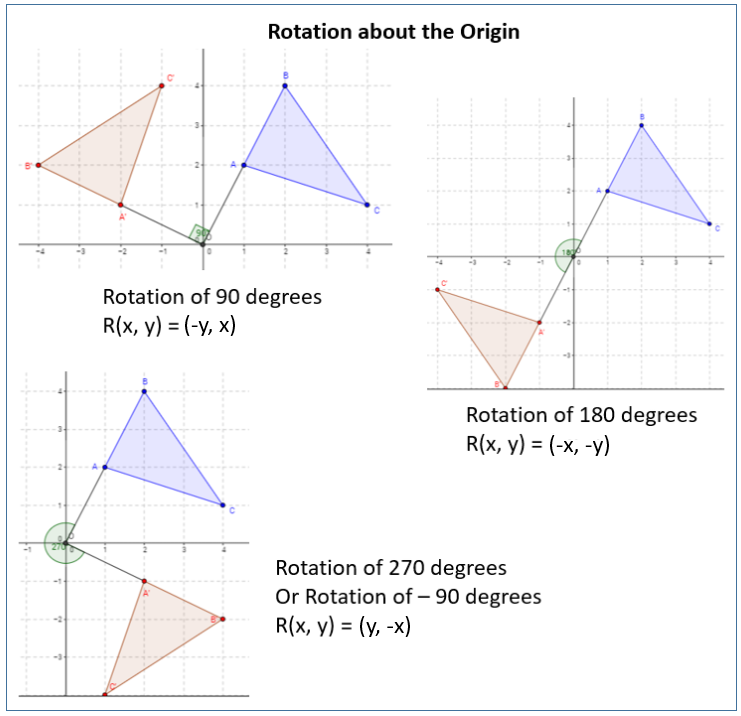

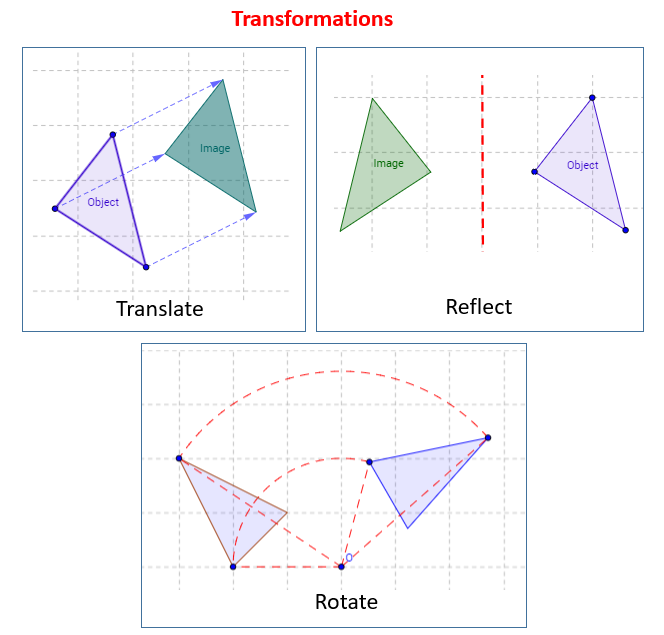

Imagine a drone hovering perfectly still. Now, picture it tilting forward, rolling to one side, or spinning on its vertical axis. These are rotations. Unlike a simple translation—moving from point A to point B without changing orientation—a rotation involves changing the angle of an object relative to a fixed point or axis. In three dimensions, this becomes significantly more complex than a simple 2D turn. A drone can simultaneously pitch (tilt up/down), roll (tilt side-to-side), and yaw (turn left/right). Each of these rotations needs to be precisely measured, calculated, and controlled for stable and accurate flight.

The challenge lies in representing these rotations mathematically in a way that is unambiguous, computationally efficient, and free of singularities. The Earth is constantly rotating, the drone itself is rotating, and sensors within the drone are measuring these rotational changes, each in its own frame of reference. Bringing all these rotational dynamics into a unified mathematical framework is what “rotation math” addresses.

Why Drones Need Rotation Math

The necessity for sophisticated rotation math in drones stems from several critical operational requirements:

- Attitude Estimation and Stability: To fly stably, a drone must continuously know its “attitude”—its orientation in space (roll, pitch, yaw). Inertial Measurement Units (IMUs) containing accelerometers and gyroscopes provide raw data on angular velocities and accelerations. Rotation math processes this data to estimate the drone’s current orientation relative to the Earth or a global frame of reference. This estimation is crucial for the flight controller to make tiny, rapid adjustments to motor speeds, counteracting disturbances like wind and maintaining stability.

- Navigation and Path Planning: For a drone to fly from point A to point B, especially along a complex trajectory or while avoiding obstacles, it needs to know not only its absolute position but also its current heading and orientation. Rotation math allows the drone to transform coordinates from its own “body frame” (relative to itself) to a global “Earth frame” (relative to the ground), enabling accurate path following and waypoint navigation.

- Sensor Data Interpretation: Many drone sensors, such as cameras, LiDAR, and even GPS antennas, are fixed to the drone’s body. Their readings are initially relative to the drone’s own orientation. To make sense of this data in a real-world context (e.g., georeferencing an image or building a 3D map), the raw sensor data must be “rotated” from the drone’s body frame into the Earth frame. This process relies heavily on accurate knowledge of the drone’s orientation.

Key Mathematical Representations of Rotation

Representing rotations mathematically is not a trivial task, and several methods have evolved, each with its strengths and weaknesses. In drone technology, the choice of representation often depends on the specific application, computational constraints, and desired robustness.

Euler Angles: Intuitive but Limited

Euler angles are perhaps the most intuitive way to describe rotations, representing a sequence of three successive rotations around distinct axes. For drones, these correspond directly to:

- Roll: Rotation around the drone’s longitudinal axis (nose to tail).

- Pitch: Rotation around the drone’s lateral axis (wingtip to wingtip).

- Yaw: Rotation around the drone’s vertical axis.

They are easy for humans to understand and visualize, and are often used in flight displays and for setting desired attitudes. However, Euler angles suffer from a critical limitation known as gimbal lock. This occurs when two of the rotation axes align, effectively reducing the system to only two degrees of freedom. For instance, if a drone pitches up by 90 degrees, its roll and yaw axes become aligned, so roll and yaw can no longer be told apart: many different roll/yaw pairs describe exactly the same orientation, and the angles can jump abruptly near that attitude. This singularity makes Euler angles unsuitable for continuous, high-precision attitude estimation and control, especially in acrobatic flight or situations where the drone might experience large angular changes.
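
The singularity is easy to reproduce numerically. The sketch below assumes the common aerospace Z-Y-X (yaw, then pitch, then roll) convention; the helper names `euler_zyx_to_matrix` and `matrices_close` are made up for this illustration. It shows that at 90 degrees of pitch, two different roll/yaw pairs produce the same rotation matrix, while the same pairs give different orientations when the drone is level.

```python
import math

def euler_zyx_to_matrix(roll, pitch, yaw):
    """Rotation matrix R = Rz(yaw) * Ry(pitch) * Rx(roll); angles in radians."""
    cr, sr = math.cos(roll), math.sin(roll)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cy, sy = math.cos(yaw), math.sin(yaw)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp, cp * sr, cp * cr],
    ]

def matrices_close(a, b, tol=1e-9):
    """True when every entry of the two 3x3 matrices agrees within tol."""
    return all(abs(a[i][j] - b[i][j]) <= tol for i in range(3) for j in range(3))

deg = math.radians

# Two different (roll, yaw) pairs that share the same difference roll - yaw.
pair_1 = (deg(30.0), deg(10.0))
pair_2 = (deg(50.0), deg(30.0))

# At 90 degrees of pitch only roll - yaw matters, so both pairs coincide.
same_at_lock = matrices_close(
    euler_zyx_to_matrix(pair_1[0], deg(90.0), pair_1[1]),
    euler_zyx_to_matrix(pair_2[0], deg(90.0), pair_2[1]),
)

# With the drone level, the same pairs are clearly different orientations.
same_when_level = matrices_close(
    euler_zyx_to_matrix(pair_1[0], 0.0, pair_1[1]),
    euler_zyx_to_matrix(pair_2[0], 0.0, pair_2[1]),
)

print(same_at_lock, same_when_level)
```

Other Euler conventions move the singularity to a different attitude, but they cannot remove it.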

Rotation Matrices: Precision and Transformation

A rotation matrix is a 3×3 matrix that describes the orientation of one coordinate system relative to another. When you multiply a vector (representing a point or direction) by a rotation matrix, the result is the rotated vector. Rotation matrices are powerful because they allow for precise transformations between different reference frames (e.g., transforming a point from the drone’s body frame to the Earth frame). They are unambiguous and don’t suffer from gimbal lock.

However, rotation matrices come with their own set of challenges. They are less compact than other representations, requiring nine numbers to describe a rotation that has only three degrees of freedom. More importantly, when rotation matrices are concatenated (multiplied together to combine successive rotations), small numerical errors can accumulate, and the matrix gradually loses its “orthonormal” properties (its columns should be mutually orthogonal unit vectors). This drift requires periodic re-orthonormalization, which adds computational overhead. Despite this, rotation matrices are fundamental for many coordinate transformations in mapping, sensor fusion, and robotics.
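
As a rough illustration of both points, the sketch below rotates a body-frame vector into the Earth frame and then repairs a slightly corrupted matrix. It assumes a pure-yaw rotation for simplicity, and it uses Gram-Schmidt as the repair step; that is only one option (an SVD-based projection is another), and all function names are hypothetical.

```python
import math

def yaw_rotation(yaw):
    """Body-to-Earth rotation matrix for a pure yaw about the vertical axis."""
    c, s = math.cos(yaw), math.sin(yaw)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def rotate(matrix, vector):
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(matrix[i][k] * vector[k] for k in range(3)) for i in range(3)]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

def normalize(a):
    n = math.sqrt(dot(a, a))
    return [x / n for x in a]

def columns(matrix):
    return [[matrix[i][j] for i in range(3)] for j in range(3)]

def orthonormalize(matrix):
    """Gram-Schmidt on the columns: keep x, correct y, rebuild z = x cross y."""
    x_raw, y_raw, _ = columns(matrix)
    x = normalize(x_raw)
    y = normalize([y_raw[i] - dot(y_raw, x) * x[i] for i in range(3)])
    z = cross(x, y)
    return [[x[i], y[i], z[i]] for i in range(3)]

def orthonormality_error(matrix):
    """Largest absolute entry of (R^T R - I); zero for a perfect rotation."""
    cols = columns(matrix)
    return max(abs(dot(cols[a], cols[b]) - (1.0 if a == b else 0.0))
               for a in range(3) for b in range(3))

# The drone's "forward" axis, seen from the Earth frame after a 90 degree yaw.
forward_earth = rotate(yaw_rotation(math.radians(90.0)), [1.0, 0.0, 0.0])

# Simulate accumulated numerical drift, then repair it.
drifted = [[v + 0.001 for v in row] for row in yaw_rotation(math.radians(30.0))]
repaired = orthonormalize(drifted)

print(forward_earth)
print(orthonormality_error(drifted), orthonormality_error(repaired))
```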

Quaternions: The Gold Standard for Drone Kinematics

Quaternions are a more advanced mathematical construct, extending complex numbers into four dimensions. A quaternion is represented by four numbers (a scalar and a 3-element vector part), and quaternions of unit length offer a highly efficient and robust way to represent 3D rotations.

For drone flight controllers and navigation algorithms, quaternions are the preferred method for several compelling reasons:

- No Gimbal Lock: Unlike Euler angles, quaternions are singularity-free, making them ideal for handling continuous, unrestricted rotations, essential for drones performing complex maneuvers or operating in challenging environments.

- Computational Efficiency: Although they have four components, combining two rotations with a quaternion product takes fewer arithmetic operations than a straightforward product of two 3×3 rotation matrices (16 multiplications versus 27), and numerical drift can be corrected simply by rescaling the quaternion back to unit length. This is crucial for real-time flight control systems, whose attitude loops typically run hundreds to thousands of times per second.

- Smooth Interpolation: Quaternions allow for smooth and shortest-path interpolation between two orientations (known as SLERP – Spherical Linear Interpolation). This is invaluable for generating smooth trajectories and transitions in autonomous flight.

Due to these advantages, quaternions are at the heart of most modern drone flight control systems, particularly in their inertial navigation systems and sensor fusion algorithms, where accurate and robust attitude estimation is critical.
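
To make these properties concrete, here is a minimal pure-Python sketch of the three operations mentioned above: composing rotations, rotating a vector, and SLERP. It assumes unit quaternions stored as `(w, x, y, z)` tuples and the Hamilton product convention; real flight stacks differ in storage order and convention, and the function names are illustrative only.

```python
import math

def quat_from_axis_angle(axis, angle):
    """Unit quaternion (w, x, y, z) for a rotation of `angle` radians about `axis`."""
    n = math.sqrt(sum(c * c for c in axis))
    s = math.sin(angle / 2.0) / n
    return (math.cos(angle / 2.0), axis[0] * s, axis[1] * s, axis[2] * s)

def quat_multiply(a, b):
    """Hamilton product a * b: apply rotation b first, then a."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )

def quat_conjugate(q):
    """Inverse rotation for a unit quaternion."""
    return (q[0], -q[1], -q[2], -q[3])

def rotate_vector(q, v):
    """Rotate 3-vector v by unit quaternion q via q * (0, v) * conj(q)."""
    p = (0.0, v[0], v[1], v[2])
    _, x, y, z = quat_multiply(quat_multiply(q, p), quat_conjugate(q))
    return (x, y, z)

def slerp(q0, q1, t):
    """Spherical linear interpolation from q0 (t=0) to q1 (t=1)."""
    d = sum(a * b for a, b in zip(q0, q1))
    if d < 0.0:
        # q and -q encode the same rotation; flip to take the shorter arc.
        q1 = tuple(-c for c in q1)
        d = -d
    if d > 0.9995:
        # Nearly identical orientations: normalized linear blend avoids dividing by ~0.
        mixed = tuple(a + t * (b - a) for a, b in zip(q0, q1))
        n = math.sqrt(sum(c * c for c in mixed))
        return tuple(c / n for c in mixed)
    theta = math.acos(d)
    s0 = math.sin((1.0 - t) * theta) / math.sin(theta)
    s1 = math.sin(t * theta) / math.sin(theta)
    return tuple(s0 * a + s1 * b for a, b in zip(q0, q1))

z_axis = (0.0, 0.0, 1.0)
identity = (1.0, 0.0, 0.0, 0.0)
q_yaw45 = quat_from_axis_angle(z_axis, math.radians(45.0))
q_yaw90 = quat_from_axis_angle(z_axis, math.radians(90.0))

rotated = rotate_vector(q_yaw90, (1.0, 0.0, 0.0))   # x axis turned 90 degrees about z
composed = quat_multiply(q_yaw45, q_yaw45)          # two 45 degree yaws in a row
halfway = slerp(identity, q_yaw90, 0.5)             # midpoint of a 90 degree yaw
```

In this example `composed` matches `q_yaw90` and `halfway` matches `q_yaw45`, which is the behavior a trajectory generator relies on when blending between two attitudes.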

Applications in Drone Tech & Innovation

The practical implications of rotation math extend across nearly all facets of drone technology, driving advancements in autonomy, data collection, and system reliability.

Autonomous Navigation and Path Planning

For a drone to truly fly autonomously, it must know its orientation relative to its environment accurately. GPS provides positional data, but it doesn’t directly tell the drone which way it’s facing or how it’s tilted. This is where rotation math, in conjunction with IMU data, provides the critical attitude information. Autonomous flight systems use rotation math to:

- Maintain a Desired Heading: Follow a specific compass direction.

- Execute Precise Maneuvers: Perform turns, ascents, and descents while maintaining stability and orientation.

- Follow Complex Paths: Interpret a predefined flight path in 3D space and translate it into a sequence of rotational and translational commands for the drone.

- Waypoint Navigation: Align the drone’s body with the vector pointing to the next waypoint, then command it to move in that direction.
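
The waypoint case can be sketched in a few lines. The example assumes a flat local North-East frame, with yaw measured from north and positive toward east, and the helper names are hypothetical. The important detail is wrapping the error into the range from -180 to +180 degrees so the drone turns the short way round.

```python
import math

def wrap_to_pi(angle):
    """Wrap any angle in radians into the interval [-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))

def heading_error(position_ne, yaw, waypoint_ne):
    """Signed yaw change (radians) needed to point the nose at the waypoint."""
    d_north = waypoint_ne[0] - position_ne[0]
    d_east = waypoint_ne[1] - position_ne[1]
    desired_yaw = math.atan2(d_east, d_north)
    return wrap_to_pi(desired_yaw - yaw)

# Facing north, waypoint to the north-east: turn 45 degrees to the right.
error_simple = heading_error((0.0, 0.0), 0.0, (10.0, 10.0))

# Facing 170 degrees, waypoint at a bearing of -170 degrees.
# A naive subtraction gives -340 degrees; the wrapped answer is +20 degrees.
bearing = math.radians(-170.0)
waypoint = (10.0 * math.cos(bearing), 10.0 * math.sin(bearing))
error_wrapped = heading_error((0.0, 0.0), math.radians(170.0), waypoint)

print(math.degrees(error_simple), math.degrees(error_wrapped))
```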

Stabilization and Flight Control Systems

The ability of a drone to hover steadily in place or resist gusts of wind is a testament to its advanced stabilization system, which relies heavily on rotation math. The flight controller continuously monitors the drone’s attitude using IMU data (often filtered through Kalman filters or complementary filters, which extensively use rotation math).

- Attitude Estimation: Rotation math is used to combine raw gyroscope and accelerometer data into a robust estimate of the drone’s current roll, pitch, and yaw. Quaternions are typically used here.

- PID Control: Proportional-Integral-Derivative (PID) controllers then take this estimated attitude and compare it to the desired attitude. The difference (error) is fed into the PID algorithm, which calculates the necessary adjustments to the motor speeds. These adjustments generate torques that rotate the drone back towards its desired orientation.

- Countering Disturbances: When a drone is hit by a gust of wind, it rotates slightly. Rotation math quickly identifies this deviation, and the flight controller uses this information to almost instantaneously apply counter-torques, bringing the drone back to its stable orientation.
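
The estimate-then-correct loop described above can be illustrated on a single axis. This is a toy sketch under strong assumptions, not how any particular flight controller is implemented: the "drone" is a unit-inertia body whose angular acceleration equals the commanded torque, the gains are arbitrary, and the integral gain is set to zero to keep the example easy to reason about. The names `complementary_filter` and `PID` are chosen for this example.

```python
def complementary_filter(prev_angle, gyro_rate, accel_angle, dt, alpha=0.98):
    """Blend the integrated gyro rate (smooth but drifting) with the
    accelerometer-derived angle (noisy but not drifting)."""
    return alpha * (prev_angle + gyro_rate * dt) + (1.0 - alpha) * accel_angle

class PID:
    """Textbook PID controller acting on an angle error in radians."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, error, dt):
        self.integral += error * dt
        # No derivative term on the very first call (no previous error yet).
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative

dt = 0.01  # 100 Hz loop, an assumed rate for this sketch

# Estimator: the gyro reports no rotation, the accelerometer says 0.1 rad of tilt.
# The estimate is pulled steadily toward the accelerometer reading.
estimate = 0.0
for _ in range(500):
    estimate = complementary_filter(estimate, gyro_rate=0.0, accel_angle=0.1, dt=dt)

# Controller: a gust has tilted the toy drone by 0.2 rad; drive it back to level.
angle, rate = 0.2, 0.0
controller = PID(kp=8.0, ki=0.0, kd=4.0)
for _ in range(500):
    torque = controller.update(0.0 - angle, dt)  # error = desired - actual
    rate += torque * dt                          # unit inertia: acceleration = torque
    angle += rate * dt
final_angle = angle

print(estimate, final_angle)
```

On a real vehicle the attitude error would usually be computed from quaternions rather than a single angle, and the torque command would be mixed into individual motor speeds.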

Sensor Fusion and Data Interpretation

Drones are equipped with a suite of sensors—GPS, IMUs (accelerometers, gyroscopes, magnetometers), altimeters, cameras, LiDAR, ultrasonic sensors, etc. Each sensor provides data in its own specific coordinate system, often relative to the drone’s body.

- Homogenizing Data: To create a coherent picture of the drone’s state and environment, data from all these sensors must be combined or “fused.” This often involves transforming sensor readings from their local body frame into a common reference frame (usually the Earth frame or a navigation frame). Rotation matrices and quaternions are essential for these transformations.

- Attitude and Heading Reference Systems (AHRS): These systems explicitly use rotation math to combine gyroscope, accelerometer, and magnetometer data to provide accurate, drift-corrected estimates of the drone’s roll, pitch, and yaw, often compensating for magnetic field disturbances and sensor biases.

- Georeferencing: When a camera captures an image, the pixel coordinates are expressed in the camera’s own frame. To place this image accurately on a map (georeference it), you need to know the camera’s exact position and orientation (roll, pitch, yaw) at the moment the photo was taken. Rotation math transforms the image coordinates into global map coordinates.
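
A heavily simplified version of the georeferencing step is sketched below. The assumptions are significant and are not taken from any specific product: a North-East-Down (NED) Earth frame, Z-Y-X Euler angles, a camera rigidly mounted looking straight down the body z axis with its image axes aligned to the body x and y axes, perfectly flat ground at zero height, and no lens distortion. The image coordinates `u` and `v` are assumed to be already normalized by the focal length, and `pixel_to_ground` is a hypothetical helper.

```python
import math

def euler_zyx_to_matrix(roll, pitch, yaw):
    """Body-to-Earth rotation matrix R = Rz(yaw) * Ry(pitch) * Rx(roll)."""
    cr, sr = math.cos(roll), math.sin(roll)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cy, sy = math.cos(yaw), math.sin(yaw)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp, cp * sr, cp * cr],
    ]

def pixel_to_ground(drone_ned, roll, pitch, yaw, u, v):
    """Project a normalized image point onto flat ground (down = 0).

    Returns the (north, east) coordinates where the viewing ray hits the ground.
    """
    rotation = euler_zyx_to_matrix(roll, pitch, yaw)
    ray_body = (u, v, 1.0)  # ray through the pixel, in the body/camera frame
    ray_earth = [sum(rotation[i][k] * ray_body[k] for k in range(3)) for i in range(3)]
    if ray_earth[2] <= 0.0:
        raise ValueError("viewing ray points at or above the horizon")
    height = -drone_ned[2]  # NED: altitude above ground is minus the down coordinate
    scale = height / ray_earth[2]
    return (drone_ned[0] + scale * ray_earth[0], drone_ned[1] + scale * ray_earth[1])

# Level drone at 100 m: the image center maps to the point directly below.
below = pixel_to_ground((5.0, -3.0, -100.0), 0.0, 0.0, 0.0, u=0.0, v=0.0)

# Nose pitched up 10 degrees: the belly camera now looks ahead of the drone,
# so the image center lands about 17.6 m north of the point below it.
ahead = pixel_to_ground((0.0, 0.0, -100.0), 0.0, math.radians(10.0), 0.0, u=0.0, v=0.0)

print(below, ahead)
```

Even a 10 degree attitude error shifts the ground point by many meters at this altitude, which is why accurate orientation matters as much as accurate position.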

Mapping, Remote Sensing, and 3D Reconstruction

The ability of drones to generate precise maps, digital elevation models, and highly detailed 3D models of structures and landscapes is a direct consequence of sophisticated rotation math.

- Photogrammetry: This technique involves taking overlapping photographs from different angles. To stitch these photos together and build a 3D model, software must accurately determine the camera’s position and orientation (rotation) for each image. Sophisticated algorithms use rotation math to align images, triangulate points in space, and correct for lens distortions and drone movements.

- LiDAR Point Cloud Registration: LiDAR sensors generate millions of 3D points. When a drone flies over an area, the LiDAR scanner creates multiple point clouds from different perspectives. To merge these into a single, comprehensive 3D model, the point clouds must be “registered”—meaning their relative rotations and translations must be calculated and applied to align them perfectly.

- Orthomosaics: Creating a perfectly scaled, distortion-free map (orthomosaic) from drone images requires not only knowing the drone’s position but also its exact attitude to correct for perspective distortions in each photo.
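
The registration idea can be shown with a deliberately reduced problem. The sketch below is a planar (2D) simplification that assumes the point correspondences are already known; real 3D pipelines have to find correspondences as well and typically solve for the rotation with an SVD-based method inside an iterative scheme. The function `register_2d` is hypothetical and computes the least-squares rotation angle and translation in closed form.

```python
import math

def register_2d(source, target):
    """Least-squares rigid alignment of matched 2D points.

    Returns (theta, (tx, ty)) such that rotating each source point by theta
    and then translating by (tx, ty) best matches the corresponding target point.
    """
    n = len(source)
    src_cx = sum(p[0] for p in source) / n
    src_cy = sum(p[1] for p in source) / n
    tgt_cx = sum(q[0] for q in target) / n
    tgt_cy = sum(q[1] for q in target) / n

    dot_sum = 0.0
    cross_sum = 0.0
    for (px, py), (qx, qy) in zip(source, target):
        ax, ay = px - src_cx, py - src_cy   # centered source point
        bx, by = qx - tgt_cx, qy - tgt_cy   # centered target point
        dot_sum += ax * bx + ay * by
        cross_sum += ax * by - ay * bx

    theta = math.atan2(cross_sum, dot_sum)
    c, s = math.cos(theta), math.sin(theta)
    # Translation maps the rotated source centroid onto the target centroid.
    tx = tgt_cx - (c * src_cx - s * src_cy)
    ty = tgt_cy - (s * src_cx + c * src_cy)
    return theta, (tx, ty)

# Build a second "scan" by rotating the first by 30 degrees and shifting it.
true_theta = math.radians(30.0)
true_shift = (2.0, -1.0)
scan_a = [(0.0, 0.0), (1.0, 0.0), (0.0, 2.0), (3.0, 1.0)]
scan_b = [
    (math.cos(true_theta) * x - math.sin(true_theta) * y + true_shift[0],
     math.sin(true_theta) * x + math.cos(true_theta) * y + true_shift[1])
    for x, y in scan_a
]

theta, shift = register_2d(scan_a, scan_b)
print(math.degrees(theta), shift)
```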

The Future of Drone Autonomy through Advanced Rotation Math

As drone technology continues to evolve, the demands on rotation math become even more stringent, pushing the boundaries of what’s possible in autonomous flight and intelligent interaction.

AI Follow Mode and Object Tracking

For drones to autonomously follow a subject or track a moving object (e.g., a person, a vehicle), they need to continuously predict the target’s movement and adjust their own position and orientation. Rotation math plays a crucial role in:

- Target Relative Orientation: Calculating the angle and direction of the target relative to the drone’s current orientation.

- Predictive Tracking: Using rotational dynamics to anticipate the target’s future position and orientation, allowing the drone to smoothly adjust its flight path and camera angle to keep the target in frame.

- Gimbal Control: Separately, the drone’s camera gimbal also uses its own set of rotation math calculations to stabilize the camera and point it accurately at the target, independent of the drone’s own movements.
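
One way to picture the target-relative calculation is to express the drone-to-target vector in the drone's own body frame and read off the pointing angles. The sketch assumes a North-East-Down Earth frame, body axes x forward, y right, z down, and Z-Y-X Euler angles; `pointing_angles` is a made-up helper, and a real gimbal would add its own mounting offsets and axis limits.

```python
import math

def euler_zyx_to_matrix(roll, pitch, yaw):
    """Body-to-Earth rotation matrix R = Rz(yaw) * Ry(pitch) * Rx(roll)."""
    cr, sr = math.cos(roll), math.sin(roll)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cy, sy = math.cos(yaw), math.sin(yaw)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp, cp * sr, cp * cr],
    ]

def pointing_angles(drone_ned, attitude, target_ned):
    """Pan (positive right) and tilt-down angles, in radians, from nose to target."""
    roll, pitch, yaw = attitude
    rotation = euler_zyx_to_matrix(roll, pitch, yaw)
    rel_earth = [t - d for t, d in zip(target_ned, drone_ned)]
    # Earth-to-body uses the transpose (inverse) of the body-to-Earth matrix.
    rel_body = [sum(rotation[i][j] * rel_earth[i] for i in range(3)) for j in range(3)]
    pan = math.atan2(rel_body[1], rel_body[0])
    tilt_down = math.atan2(rel_body[2], math.hypot(rel_body[0], rel_body[1]))
    return pan, tilt_down

# Drone 50 m up, facing east; target on the ground 50 m to the east.
pan_a, tilt_a = pointing_angles(
    (0.0, 0.0, -50.0), (0.0, 0.0, math.radians(90.0)), (0.0, 50.0, 0.0))

# Drone nose pitched up 10 degrees; target level with the drone, straight ahead.
pan_b, tilt_b = pointing_angles(
    (0.0, 0.0, -50.0), (0.0, math.radians(10.0), 0.0), (100.0, 0.0, -50.0))

print(math.degrees(pan_a), math.degrees(tilt_a))
print(math.degrees(pan_b), math.degrees(tilt_b))
```

In the second case the target has not moved vertically at all, yet the camera must tilt down by 10 degrees to compensate for the drone's own pitch.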

Swarm Robotics and Collaborative Flight

The future of drones includes intelligent swarms performing complex tasks collaboratively. This requires multiple drones to synchronize their movements and orientations.

- Relative Orientation Awareness: Each drone in a swarm needs to know its orientation relative to its neighbors, not just to the ground. This necessitates robust, distributed rotation math calculations to maintain formation and avoid collisions.

- Coordinated Maneuvers: When a swarm performs a synchronized turn or formation change, every drone must precisely execute its individual rotation in coordination with the others, relying on a shared understanding of rotational states.
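
For relative orientation between two drones, quaternions give a compact answer. The sketch assumes each drone reports its attitude as a unit quaternion `(w, x, y, z)` mapping its body frame to a shared Earth frame; how a swarm actually exchanges that state is outside the scope of this example, and the function names are illustrative.

```python
import math

def quat_from_yaw(yaw):
    """Unit quaternion (w, x, y, z) for a rotation of `yaw` radians about the vertical axis."""
    return (math.cos(yaw / 2.0), 0.0, 0.0, math.sin(yaw / 2.0))

def quat_multiply(a, b):
    """Hamilton product a * b."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )

def quat_conjugate(q):
    """Inverse rotation for a unit quaternion."""
    return (q[0], -q[1], -q[2], -q[3])

def relative_rotation(q_a, q_b):
    """Attitude of drone B expressed in drone A's body frame: conj(q_a) * q_b."""
    return quat_multiply(quat_conjugate(q_a), q_b)

def rotation_angle(q):
    """Smallest angle (radians) of the rotation encoded by unit quaternion q."""
    w = min(1.0, abs(q[0]))  # abs() treats q and -q as the same rotation
    return 2.0 * math.acos(w)

q_leader = quat_from_yaw(math.radians(30.0))
q_follower = quat_from_yaw(math.radians(75.0))

q_rel = relative_rotation(q_leader, q_follower)
misalignment_deg = math.degrees(rotation_angle(q_rel))
print(misalignment_deg)
```

A formation controller could drive this misalignment angle toward zero (or toward a commanded offset) for each neighbor.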

Real-time Obstacle Avoidance

Autonomous obstacle avoidance systems use sensors like cameras, LiDAR, and ultrasonic transducers to detect objects in the drone’s path. Rotation math is vital for:

- Object Localization: Transforming sensor readings (e.g., distance to an object at a specific angle) from the drone’s body frame to a global frame to determine the object’s precise location relative to the drone’s path.

- Path Planning Around Obstacles: Calculating the necessary rotational adjustments (yaw, pitch, roll) for the drone to smoothly steer around a detected obstacle while maintaining its overall trajectory.

- Dynamic Avoidance: In highly dynamic environments, rapidly changing relative rotations between the drone and moving obstacles are continuously computed to ensure safe passage.
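
The localization step can be sketched in two dimensions. The example assumes a flat North-East frame, yaw measured from north and positive toward east, and a sensor that reports each obstacle as a range plus a bearing relative to the drone's nose (positive to the right). Both helper functions are hypothetical, and the clearance check is just a point-to-segment distance against a straight path to the next waypoint.

```python
import math

def obstacle_world_position(drone_ne, yaw, obstacle_range, bearing):
    """Convert a body-relative (range, bearing) detection into North-East coordinates."""
    angle = yaw + bearing  # rotate the body-frame bearing by the drone's heading
    return (drone_ne[0] + obstacle_range * math.cos(angle),
            drone_ne[1] + obstacle_range * math.sin(angle))

def distance_to_segment(point, start, end):
    """Shortest distance from `point` to the straight segment start -> end."""
    sx, sy = end[0] - start[0], end[1] - start[1]
    px, py = point[0] - start[0], point[1] - start[1]
    length_sq = sx * sx + sy * sy
    if length_sq == 0.0:
        t = 0.0
    else:
        # Projection parameter, clamped so the closest point stays on the segment.
        t = max(0.0, min(1.0, (px * sx + py * sy) / length_sq))
    return math.hypot(px - t * sx, py - t * sy)

drone = (0.0, 0.0)
yaw = math.radians(90.0)   # facing east
waypoint = (0.0, 20.0)     # 20 m due east

# Detection dead ahead at 10 m: it sits right on the planned path.
ahead = obstacle_world_position(drone, yaw, 10.0, 0.0)
clearance_ahead = distance_to_segment(ahead, drone, waypoint)

# Detection 90 degrees to the left at 5 m: it is 5 m north of the path.
left = obstacle_world_position(drone, yaw, 5.0, math.radians(-90.0))
clearance_left = distance_to_segment(left, drone, waypoint)

print(ahead, clearance_ahead)
print(left, clearance_left)
```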

Empowering the Next Generation of Drones

From maintaining a steady hover to executing complex autonomous missions, rotation math is the silent, yet indispensable, force behind every sophisticated drone operation. It allows drones to not just move through space, but to truly understand their place within it, interact with their environment intelligently, and perform tasks with unprecedented precision and autonomy. As drone technology continues to push the boundaries of aerial robotics and AI, the underlying principles of rotation math will remain a critical field of innovation, empowering the next generation of smarter, safer, and more capable unmanned aerial vehicles.