In advanced drone technology, where autonomous systems interact with complex environments, the concepts of “direct object” and “indirect object” serve not as grammatical terms but as practical distinctions in how drones perceive, process, and act upon their surroundings. As AI, machine learning, and sophisticated sensor arrays drive the evolution of unmanned aerial vehicles (UAVs), understanding these operational ‘objects’ becomes fundamental to designing effective missions, optimizing data acquisition, and ensuring safe and intelligent flight. This re-contextualization lets engineers and operators separate primary objectives from contextual data, fostering a more nuanced approach to drone innovation.

Redefining “Objects” in Autonomous Drone Systems

Within the domain of drone technology, particularly in areas like remote sensing, mapping, AI-driven autonomy, and specialized inspections, the term “object” transcends its everyday meaning. It refers to any identifiable entity or phenomenon that a drone’s sensors detect and its onboard intelligence systems analyze. These objects can be physical, like buildings, vehicles, people, or natural formations, or they can be abstract, such as thermal signatures, electromagnetic fields, or atmospheric conditions. The drone’s suite of technologies—ranging from LiDAR and hyperspectral cameras to advanced computer vision algorithms—is engineered to perceive and interpret these objects, converting raw sensor data into actionable information.

From Grammar to Geospatial Entities

Unlike their grammatical counterparts, which define roles in a sentence, direct and indirect objects in drone operations describe the hierarchical importance and relationship of detected entities to a mission’s primary goal. This operational framework is essential for prioritizing processing power, allocating sensor resources, and defining the scope of data collection. For instance, in an AI-powered surveillance mission, distinguishing between the primary target and the surrounding environment is critical for efficient tracking and anomaly detection. Without such a framework, autonomous systems would struggle to filter relevant information from background noise, leading to inefficiencies and potential mission failures.

Sensing and Perception Frameworks

Modern drones employ sophisticated perception frameworks that allow them to build a comprehensive understanding of their operational space. This involves simultaneous localization and mapping (SLAM), object recognition, classification, and tracking. Each object identified by the drone’s sensors is assigned attributes—such as position, velocity, size, and classification (e.g., ‘tree’, ‘car’, ‘person’). These attributes are then fed into decision-making algorithms that determine how the drone should interact with or gather data about these objects. The ability to clearly differentiate between direct and indirect objects guides these frameworks, enabling more precise navigation, targeted data capture, and adaptive mission execution.
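To make the attribute-and-role idea concrete, here is a minimal Python sketch of how a perception pipeline might represent tracked objects. The `DetectedObject` and `Role` types, the field units, and the rule that a single mission-selected track ID becomes the direct object are illustrative assumptions, not the data model of any particular autopilot or SDK.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    DIRECT = "direct"      # the mission's primary target
    INDIRECT = "indirect"  # context: obstacles, terrain, bystanders


@dataclass
class DetectedObject:
    track_id: int
    label: str                              # classification, e.g. "tree", "car", "person"
    position: tuple[float, float, float]    # metres in a local frame (assumed)
    velocity: tuple[float, float, float]    # metres per second (assumed)
    size: float                             # approximate bounding radius in metres
    role: Role = Role.INDIRECT              # everything is context until promoted


def assign_roles(objects: list[DetectedObject], target_id: int) -> list[DetectedObject]:
    """Promote the mission's selected track to DIRECT; treat all others as INDIRECT."""
    for obj in objects:
        obj.role = Role.DIRECT if obj.track_id == target_id else Role.INDIRECT
    return objects


# Example: a runner being followed, with a tree nearby.
scene = [
    DetectedObject(1, "person", (10.0, 0.0, 0.0), (1.5, 0.0, 0.0), 0.5),
    DetectedObject(2, "tree", (12.0, 3.0, 0.0), (0.0, 0.0, 0.0), 2.0),
]
assign_roles(scene, target_id=1)
print([(o.label, o.role.value) for o in scene])  # [('person', 'direct'), ('tree', 'indirect')]
```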

The Direct Object: Purpose-Driven Engagement

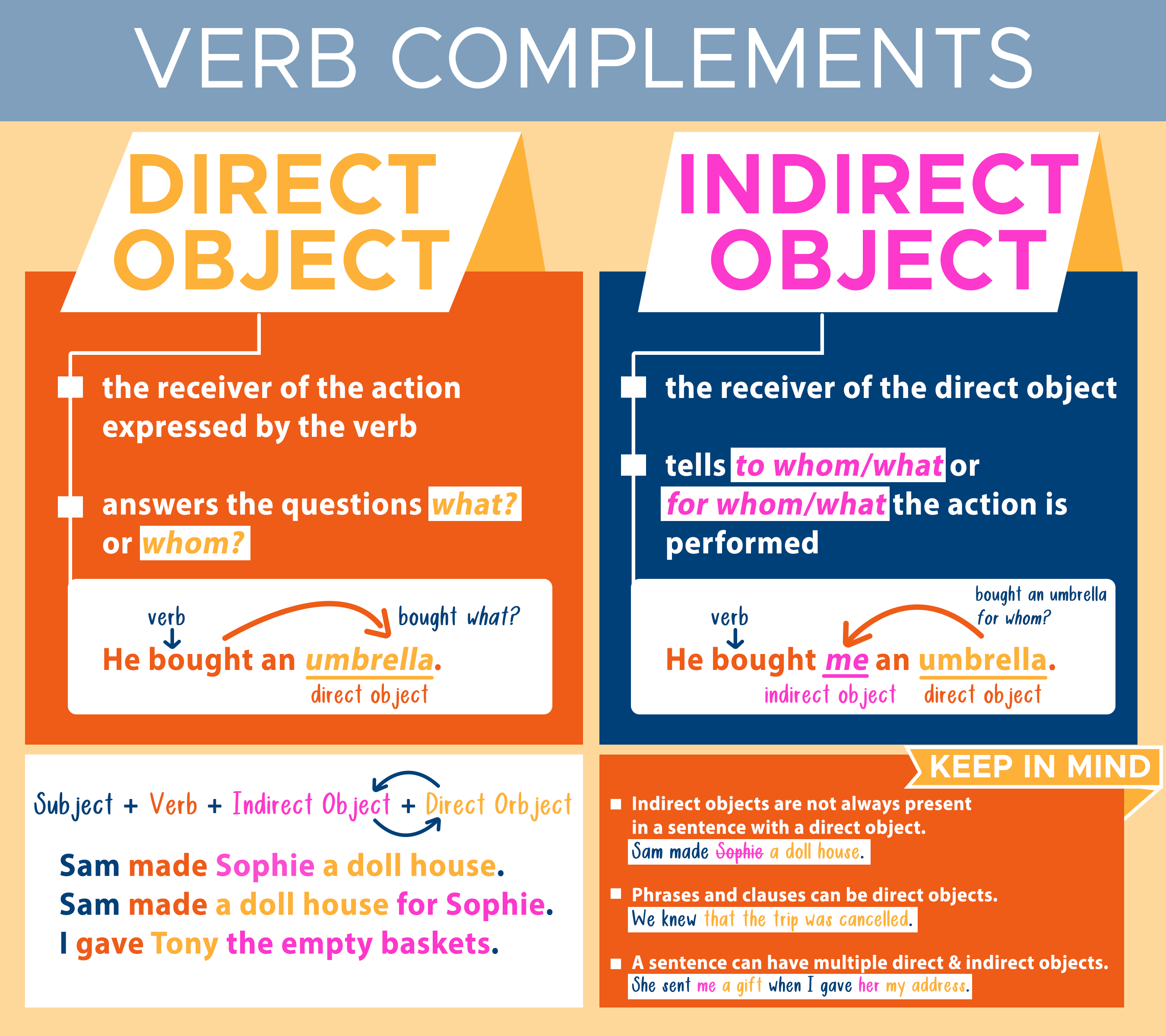

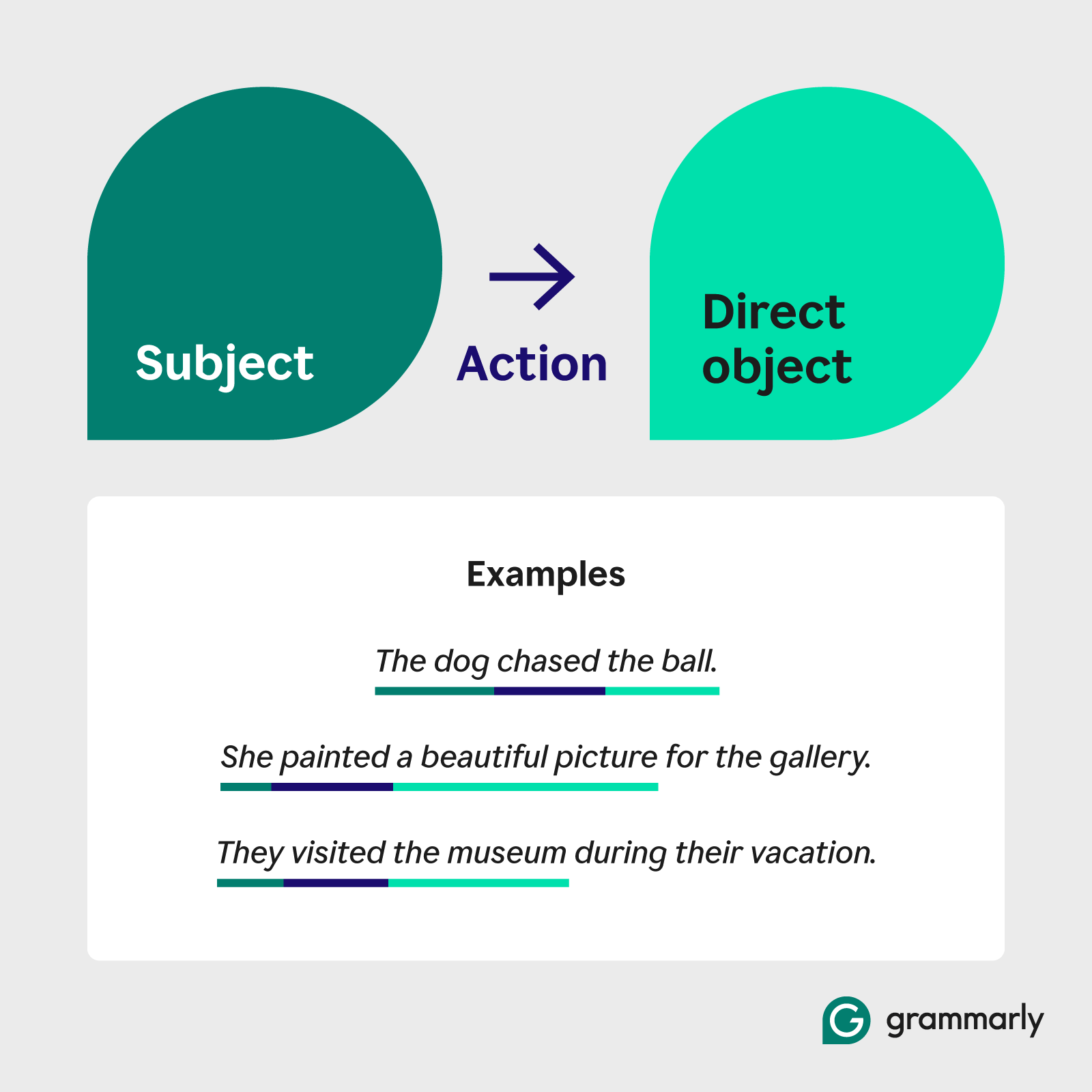

The “direct object” in drone operations refers to the primary entity, area, or phenomenon that is the explicit focus of a drone’s mission or a specific autonomous function. It is the “what” or “whom” that directly receives the drone’s primary action, data collection, or intelligent engagement. This concept is central to defining mission success, as the drone’s resources—its flight path, sensor orientation, processing power, and communication links—are predominantly dedicated to interacting with or analyzing this core objective.

Primary Targets in AI-Powered Missions

Across AI-driven applications, direct objects are pivotal for intelligent autonomy:

- AI Follow Mode: When a drone is programmed to follow a specific individual or vehicle, that entity becomes the direct object. The drone’s computer vision algorithms are constantly identifying, tracking, and predicting the movements of this specific target, adjusting its flight parameters (speed, altitude, camera angle) to maintain optimal perspective and distance. The direct object dictates the drone’s dynamic behavior.

- Precision Agriculture: In crop monitoring, a specific field or even an individual plant identified for anomaly detection (e.g., disease, pest infestation) can be the direct object. Hyperspectral or multispectral sensors are focused on it to gather highly specific data on its health and characteristics, informing targeted intervention strategies.

- Infrastructure Inspection: For tasks like inspecting a wind turbine blade or a section of a power line, the specific component being analyzed is the direct object. The drone employs high-resolution cameras and thermal sensors to acquire detailed imagery and data of this precise area, identifying structural fatigue or heat anomalies.

- Mapping and Surveying: When a drone is tasked with creating a detailed 3D model of a specific building or a designated construction site, that particular structure or site is the direct object. The mission planning software ensures comprehensive coverage and optimal photogrammetric data capture of this defined area.
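The follow-mode behavior above can be sketched as a simple proportional controller that holds a standoff distance from the direct object. This is a minimal illustration assuming 2-D positions in a local metric frame, a target velocity supplied by the tracker, and hypothetical tuning values (`standoff`, `gain`, `max_speed`); a real follow mode would also need to handle altitude, gimbal pointing, and obstacle avoidance.

```python
import math


def follow_velocity(drone_xy, target_xy, target_vel_xy,
                    standoff=8.0, gain=0.6, max_speed=12.0):
    """Return a (vx, vy) command that holds `standoff` metres from the target.

    The proportional term closes the range error along the line of sight; the
    target's own velocity is added as feed-forward so the drone keeps pace.
    """
    dx = target_xy[0] - drone_xy[0]
    dy = target_xy[1] - drone_xy[1]
    dist = math.hypot(dx, dy)
    if dist < 1e-6:
        ux, uy = 0.0, 0.0  # directly on top of the target: no defined line of sight
    else:
        ux, uy = dx / dist, dy / dist
    range_error = dist - standoff  # positive means too far, so move toward the target
    vx = target_vel_xy[0] + gain * range_error * ux
    vy = target_vel_xy[1] + gain * range_error * uy
    speed = math.hypot(vx, vy)
    if speed > max_speed:  # scale the command down to the assumed speed limit
        vx, vy = vx * max_speed / speed, vy * max_speed / speed
    return vx, vy


# Target 18 m ahead moving at 2 m/s: close the 10 m range error while matching its speed.
print(follow_velocity((0.0, 0.0), (18.0, 0.0), (2.0, 0.0)))  # (8.0, 0.0)
```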

The drone’s entire operational logic, from flight path generation to data storage and transmission, revolves around effectively engaging with its direct object, aiming to gather the most pertinent and high-quality information about it.
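For the mapping case, one widely used way to turn a defined site into a flight path is a back-and-forth ("lawnmower" or boustrophedon) pattern. The sketch below assumes an axis-aligned rectangular site in local metres and a hypothetical `max_spacing` between pass lines, which in practice would be derived from the camera's ground footprint and the desired side overlap.

```python
import math


def lawnmower_waypoints(x_min, y_min, x_max, y_max, max_spacing):
    """Back-and-forth pass lines covering an axis-aligned rectangle.

    Lines are spread evenly so the actual spacing never exceeds `max_spacing`.
    """
    if max_spacing <= 0:
        raise ValueError("max_spacing must be positive")
    height = y_max - y_min
    n_lines = math.ceil(height / max_spacing) + 1
    step = height / (n_lines - 1) if n_lines > 1 else 0.0
    waypoints = []
    for i in range(n_lines):
        y = y_min + i * step
        row = [(x_min, y), (x_max, y)]
        # Alternate direction so each pass starts where the previous one ended.
        waypoints.extend(row if i % 2 == 0 else row[::-1])
    return waypoints


# Example: a 100 m x 40 m site with pass lines at most 20 m apart.
for wp in lawnmower_waypoints(0.0, 0.0, 100.0, 40.0, 20.0):
    print(wp)
```

Spreading the lines evenly, rather than stepping by exactly `max_spacing`, keeps the final pass on the site boundary instead of stopping short of it.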

Actionable Data Streams and Focused Outcomes

Data collected about the direct object typically forms the core output of a drone mission. This data is usually prioritized for processing, analysis, and reporting. For example, in an agricultural context, data indicating stress levels in a specific crop (the direct object) would be immediately flagged for agronomists. In an inspection, images highlighting a crack in a bridge support (direct object) would be routed directly to maintenance teams. The quality and specificity of information derived from direct objects are paramount, as they directly lead to actionable insights and drive operational decisions. The drone’s systems are designed to ensure the integrity, precision, and relevance of this core data stream.
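For the crop-stress example, one common multispectral indicator is the Normalized Difference Vegetation Index, NDVI = (NIR - Red) / (NIR + Red), computed per pixel or per plot. The sketch below flags plots that fall below a threshold; the plot IDs, reflectance values, and the 0.4 cutoff are illustrative assumptions, since real thresholds depend on crop type, growth stage, and sensor calibration.

```python
def ndvi(nir: float, red: float) -> float:
    """Normalized Difference Vegetation Index from near-infrared and red reflectance."""
    total = nir + red
    return 0.0 if total == 0 else (nir - red) / total


def flag_stressed_plots(plots: dict[str, tuple[float, float]],
                        threshold: float = 0.4) -> list[str]:
    """Return IDs of plots whose NDVI falls below an (illustrative) stress threshold."""
    return sorted(pid for pid, (nir, red) in plots.items() if ndvi(nir, red) < threshold)


# Hypothetical per-plot mean reflectances: (NIR, Red).
plots = {"A1": (0.50, 0.08), "A2": (0.30, 0.20), "B1": (0.45, 0.10)}
print(flag_stressed_plots(plots))  # ['A2']
```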

The Indirect Object: Contextual Data and Secondary Impact

In contrast to the direct object, the “indirect object” in drone operations refers to elements that are not the primary focus but are either closely related to the direct object, provide essential contextual information, or are incidentally captured and contribute to a broader understanding of the operational environment. While not the main goal, indirect objects are crucial for comprehensive analysis, situational awareness, safety, and enhancing the utility of the primary data.

Environmental Factors and Ancillary Information

Indirect objects often represent the surrounding environment or secondary entities that influence or are influenced by the direct object. Examples include:

- AI Follow Mode: While tracking a person (direct object), the drone’s wider field of view will capture surrounding buildings, trees, other pedestrians, or changing weather conditions. These elements, though not actively tracked, are indirect objects providing vital context for safety (obstacle avoidance) and environmental awareness. For instance, the drone needs to avoid flying into a tree (indirect object) while following a runner (direct object).

- Precision Agriculture: When monitoring a specific crop (direct object), the drone’s sensors might also collect data on soil moisture in adjacent unplanted areas, the presence of weeds between rows, or the shadow patterns from nearby structures. These indirect objects provide a more complete ecological picture and can help interpret the direct object data more accurately.

- Infrastructure Inspection: While inspecting a specific power line (direct object), the drone might also capture images of surrounding vegetation, topography, or even wildlife in the vicinity. This contextual data, though not the primary inspection target, could inform vegetation management strategies or highlight potential environmental risks.

- Mapping and Surveying: When a construction site (direct object) is being mapped, data on neighboring properties, access roads, or geological features just outside the site boundary become indirect objects. This ancillary information can be crucial for logistical planning, regulatory compliance, or assessing environmental impact.
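The runner-and-tree case reduces to a geometric check: which indirect objects come too close to the drone's next flight leg? Here is a minimal 2-D sketch, assuming each indirect object is approximated by a center and radius and using a hypothetical `clearance` margin in metres.

```python
import math


def point_segment_distance(p, a, b):
    """Shortest distance from point p to segment a-b, all 2-D (x, y) in metres."""
    abx, aby = b[0] - a[0], b[1] - a[1]
    seg_len_sq = abx * abx + aby * aby
    if seg_len_sq == 0:  # degenerate leg: a and b coincide
        return math.hypot(p[0] - a[0], p[1] - a[1])
    # Project p onto the line through a and b, clamped to the segment.
    t = ((p[0] - a[0]) * abx + (p[1] - a[1]) * aby) / seg_len_sq
    t = max(0.0, min(1.0, t))
    closest = (a[0] + t * abx, a[1] + t * aby)
    return math.hypot(p[0] - closest[0], p[1] - closest[1])


def obstacles_on_leg(indirect_objects, start, end, clearance=3.0):
    """Labels of indirect objects whose edge comes within `clearance` metres of the leg."""
    return [label for label, center, radius in indirect_objects
            if point_segment_distance(center, start, end) - radius < clearance]


# Following a runner east along y = 0; a tree sits just off the path.
context = [("tree", (10.0, 2.0), 1.5), ("lamp post", (10.0, 20.0), 0.3)]
print(obstacles_on_leg(context, start=(0.0, 0.0), end=(20.0, 0.0)))  # ['tree']
```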

The drone’s intelligent systems often process indirect object data to enhance the understanding of the direct object, identify potential risks, or uncover unforeseen insights. For example, identifying an unmapped power line (indirect object) while surveying a construction site (direct object) is a critical safety output.

Broader System Interactions and Unintended Benefits

Beyond providing contextual data, indirect objects can also represent secondary beneficiaries or broader systemic interactions. For instance, a drone might be deployed to monitor traffic flow on a specific highway (direct object) for a city’s transportation department. The data collected might also incidentally reveal areas of increased pedestrian activity on adjacent sidewalks or identify illegally parked vehicles, which, though not the primary mission, can provide valuable information for other city services (indirect beneficiaries/objects).

The strategic collection and analysis of data pertaining to indirect objects allows for a richer, more holistic understanding of a drone’s operational environment. It facilitates the development of more robust AI models that can better adapt to changing conditions and predict potential challenges. This comprehensive data capture is a hallmark of advanced drone innovation, moving beyond single-point objectives to embrace a more integrated understanding of complex scenarios.

Strategic Implications for Drone Tech and Innovation

The distinction between direct and indirect objects, as applied to drone operations, has profound strategic implications for the future of UAV technology and its integration into various industries. This conceptual framework guides the development of more sophisticated AI, influences mission planning protocols, and shapes data architecture for optimal utility.

Algorithm Optimization and Machine Learning

Understanding direct and indirect objects is crucial for training and optimizing machine learning models that power drone autonomy. AI algorithms must be trained to:

- Prioritize and filter: Clearly identify and focus on direct objects while maintaining awareness of indirect objects for context and safety.

- Contextualize data: Use information from indirect objects to better interpret the state and behavior of direct objects (e.g., using weather conditions from indirect objects to predict how a direct object, like a fire, might spread).

- Detect anomalies: Flag unusual interactions between direct and indirect objects (e.g., a tracked vehicle – direct object – suddenly moving towards an unexpected obstacle – indirect object).
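The anomaly-detection case can be illustrated with a constant-velocity closest-approach test between the tracked direct object and an indirect obstacle: with relative position r and relative velocity v, the separation |r + vt| is smallest at t* = -(r·v)/|v|². This is a simplified sketch that assumes 2-D tracker estimates, straight-line motion over a short `horizon`, and an illustrative `min_separation`; none of these values come from a specific system.

```python
import math


def closest_approach(p_target, v_target, p_obstacle, v_obstacle):
    """Time (s) and distance (m) of closest approach, assuming constant 2-D velocities."""
    rx, ry = p_target[0] - p_obstacle[0], p_target[1] - p_obstacle[1]  # relative position
    vx, vy = v_target[0] - v_obstacle[0], v_target[1] - v_obstacle[1]  # relative velocity
    v_sq = vx * vx + vy * vy
    # |r + v t| is minimized at t* = -(r . v) / |v|^2; minima in the past are clamped to now.
    t = 0.0 if v_sq == 0 else max(0.0, -(rx * vx + ry * vy) / v_sq)
    return t, math.hypot(rx + vx * t, ry + vy * t)


def is_anomalous_approach(p_target, v_target, p_obstacle, v_obstacle,
                          min_separation=2.0, horizon=5.0):
    """Flag a direct object predicted to pass within `min_separation` of an obstacle soon."""
    t, d = closest_approach(p_target, v_target, p_obstacle, v_obstacle)
    return t <= horizon and d < min_separation


# Tracked vehicle heading east at 5 m/s toward a parked obstacle 20 m ahead, 1 m off its line.
print(closest_approach((0.0, 0.0), (5.0, 0.0), (20.0, 1.0), (0.0, 0.0)))  # (4.0, 1.0)
print(is_anomalous_approach((0.0, 0.0), (5.0, 0.0), (20.0, 1.0), (0.0, 0.0)))  # True
```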

This differential approach can lead to more efficient processing, lower latency, and more reliable autonomous decision-making.
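One way to read the "prioritize and filter" idea is as a per-frame compute budget: direct objects are analyzed first, and indirect objects fill whatever time remains. The greedy scheduler below is a hypothetical illustration; the tuple layout, the millisecond costs, and the cheapest-first policy for context objects are assumptions made for the example.

```python
def schedule_analysis(detections, budget_ms):
    """Choose which tracks get full analysis this frame within a compute budget.

    detections: list of (track_id, role, cost_ms), role being "direct" or "indirect".
    Direct objects are considered first; indirect objects then fill the remaining
    budget cheapest-first, so as much context as possible is still processed.
    """
    direct = [d for d in detections if d[1] == "direct"]
    indirect = sorted((d for d in detections if d[1] == "indirect"), key=lambda d: d[2])
    chosen, used = [], 0.0
    for track_id, _role, cost_ms in direct + indirect:
        if used + cost_ms <= budget_ms:  # skip anything that would overrun the frame
            chosen.append(track_id)
            used += cost_ms
    return chosen


# One direct target plus three context objects, with a 20 ms per-frame budget.
frame = [(1, "direct", 12.0), (2, "indirect", 5.0), (3, "indirect", 9.0), (4, "indirect", 2.0)]
print(schedule_analysis(frame, budget_ms=20.0))  # [1, 4, 2]
```

Filling the leftover budget cheapest-first maximizes the number of context objects analyzed per frame; a system that weights some indirect objects (for example, nearby obstacles) more heavily would sort on that weight instead.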

Mission Planning and Data Architecture

For complex drone missions, defining direct and indirect objects at the planning stage ensures comprehensive coverage and data utility. Mission planners can design flight paths and sensor configurations that not only capture high-resolution data on direct objects but also collect sufficient contextual information from indirect objects. Data architects, in turn, can design storage and processing pipelines that differentiate between primary and secondary data streams, ensuring critical direct object data is immediately available while valuable indirect object data is cataloged for later analysis or broader insights. This structured approach to data management maximizes the return on investment for drone operations.
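The split between immediately delivered and cataloged data can be sketched as a two-tier router. The `DataRouter` class and its record format (a dict with a `role` key) are hypothetical; a production pipeline would more likely sit on a message broker or database than on in-memory containers.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class DataRouter:
    """Hypothetical two-tier router for mission data records."""
    urgent: deque = field(default_factory=deque)  # direct-object data, delivered first
    catalog: list = field(default_factory=list)   # indirect-object data, kept for later analysis

    def ingest(self, record: dict) -> None:
        # Records are assumed to carry a "role" tag set during perception.
        if record.get("role") == "direct":
            self.urgent.append(record)
        else:
            # Anything not tagged direct, including untagged records, is cataloged.
            self.catalog.append(record)

    def next_urgent(self):
        """Pop the oldest direct-object record, or return None if none are waiting."""
        return self.urgent.popleft() if self.urgent else None


router = DataRouter()
router.ingest({"id": "crack-17", "role": "direct", "note": "bridge support"})
router.ingest({"id": "veg-03", "role": "indirect", "note": "vegetation near span"})
print(router.next_urgent()["id"], len(router.catalog))  # crack-17 1
```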

Advancing Autonomous Capabilities

Ultimately, the ability of drones to intelligently discern and interact with both direct and indirect objects pushes the boundaries of autonomous flight. It enables drones to perform more complex tasks with greater independence, adapting to dynamic environments and making informed decisions on the fly. This sophisticated understanding of operational ‘objects’ is a cornerstone of next-generation drone innovation, leading to safer, more efficient, and more versatile UAV applications across countless sectors, from urban planning and disaster response to scientific research and smart infrastructure management.