In flight technology, and particularly in the systems that govern drone navigation and operation, statistical concepts such as confounding variables matter a great deal. These variables, often unseen, can distort our perception of cause and effect and lead to flawed conclusions about the performance and reliability of our aircraft. Whether we are analyzing the efficacy of a new stabilization algorithm or the impact of sensor calibration on flight path accuracy, a confounding variable can make it appear that one factor influences another when a third factor, often unmeasured, is the true driver.

Understanding Confounding Variables in Flight Technology

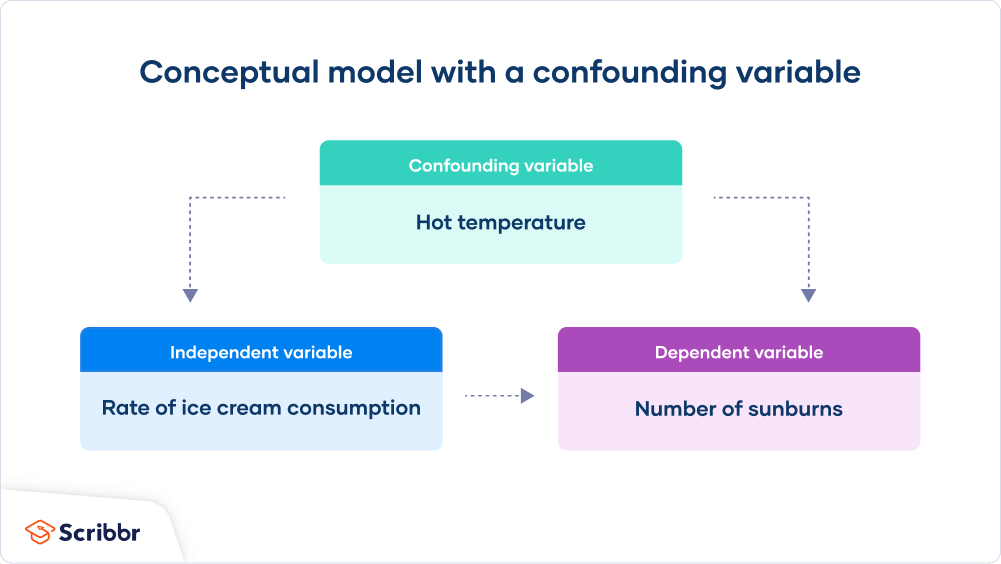

A confounding variable is an external factor that influences both the independent and dependent variables in a study. It creates a spurious association, making it seem that two variables are directly related when the confounding variable is in fact responsible for some or all of the observed effect. For a variable to be considered a confounder, it must be associated with the exposure (the independent variable), it must be a cause of the outcome (the dependent variable) independently of the exposure, and it must not lie on the causal pathway between the two. In flight technology, this can manifest in numerous ways, impacting everything from sensor performance to the precision of autonomous flight paths.
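For a simple linear case, the size of the distortion can be written down explicitly. The following is a sketch under stated assumptions, not a general result: the symbols $X$ (exposure), $Z$ (confounder), $Y$ (outcome) and the coefficients $\beta_0, \beta_1, \beta_2$ are introduced here for illustration, and the outcome is assumed to be linear in $X$ and $Z$ with noise $\varepsilon$ uncorrelated with both.

$$
Y = \beta_0 + \beta_1 X + \beta_2 Z + \varepsilon, \qquad \operatorname{Cov}(X,\varepsilon) = \operatorname{Cov}(Z,\varepsilon) = 0
$$

If $Z$ is left out and $Y$ is regressed on $X$ alone, the estimated slope converges to

$$
\frac{\operatorname{Cov}(X,Y)}{\operatorname{Var}(X)} = \beta_1 + \beta_2\,\frac{\operatorname{Cov}(X,Z)}{\operatorname{Var}(X)}
$$

The second term is the bias. It vanishes only when $Z$ has no effect on the outcome ($\beta_2 = 0$) or is unrelated to the exposure ($\operatorname{Cov}(X,Z) = 0$), which corresponds to the requirement that a confounder be tied to both.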

Consider a scenario where we are testing a new obstacle avoidance system designed to improve drone safety. Our independent variable might be the presence or absence of the new system, and our dependent variable could be the number of near-miss incidents recorded. We might observe that drones equipped with the new system have fewer near misses. However, if the test flights for the new system were exclusively conducted in clear, open airspace with excellent visibility, while the control group (without the new system) was tested in more complex, cluttered environments, then “environmental complexity” or “visibility conditions” becomes a confounding variable. Drones in the new-system group may have benefited not just from the new algorithm but also from inherently safer flying conditions, and the data alone cannot separate the two.

Similarly, when evaluating the impact of different GPS module manufacturers on flight path precision, we might notice that drones using Brand A’s GPS modules achieve significantly tighter flight paths than those using Brand B. If, however, Brand A modules were consistently installed in drones with more robust power management systems that ensured a more stable electrical supply, while Brand B modules were used in drones with less stable power, then “power supply stability” could be a confounding variable. The improved flight path precision might be due to the stable power, not solely the GPS module itself.

The Deception of Spurious Correlations

The core issue with confounding variables lies in their ability to create deceptive correlations. They can lead researchers and engineers to draw incorrect conclusions about the effectiveness of a particular technology or the reasons behind a specific performance outcome. In the pursuit of more reliable and efficient flight, understanding and mitigating confounding variables is paramount.

For instance, imagine we are assessing the impact of different sensor calibration techniques on the accuracy of a drone’s altitude hold feature. We hypothesize that a new, automated calibration process leads to more stable altitude holding. Our data might show fewer altitude deviations with the automated method. However, if the automated calibration was primarily implemented on newer drone models with upgraded gyroscopes and accelerometers, while the manual calibration was used on older models, then “hardware generation” becomes a confounding variable. The improved altitude hold might be due to the superior internal sensors of the newer drones, rather than the calibration method itself.
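A small simulation can make this kind of spurious correlation concrete. The sketch below is illustrative only: every number in it (the 0.5 m and 1.0 m error levels, the 90%/10% calibration rates, the noise level) is an assumption chosen for the example, and the calibration method is deliberately given no effect at all on altitude error.

```python
import random
import statistics

def simulate_flights(n=2000, seed=0):
    """Simulate altitude-hold error where calibration has NO true effect.

    Hypothetical setup: newer hardware has lower error and is also far
    more likely to receive the automated calibration.
    """
    rng = random.Random(seed)
    flights = []
    for _ in range(n):
        new_hardware = rng.random() < 0.5
        # Automated calibration on 90% of new drones, 10% of old ones (assumed).
        automated = rng.random() < (0.9 if new_hardware else 0.1)
        # Error depends only on hardware generation, never on calibration.
        error_m = (0.5 if new_hardware else 1.0) + rng.gauss(0, 0.1)
        flights.append((new_hardware, automated, error_m))
    return flights

def mean_error(flights, automated, new_hardware=None):
    """Mean altitude error for one calibration method, optionally within one hardware generation."""
    errors = [err for hw, auto, err in flights
              if auto == automated and (new_hardware is None or hw == new_hardware)]
    return statistics.mean(errors)

flights = simulate_flights()

# Naive comparison: manual minus automated, ignoring hardware generation.
naive_gap = mean_error(flights, False) - mean_error(flights, True)

# Same comparison within each hardware generation.
gap_new_hw = mean_error(flights, False, True) - mean_error(flights, True, True)
gap_old_hw = mean_error(flights, False, False) - mean_error(flights, True, False)

print(f"naive gap: {naive_gap:.3f} m")
print(f"gap on new hardware: {gap_new_hw:.3f} m")
print(f"gap on old hardware: {gap_old_hw:.3f} m")
```

Under these assumed numbers the naive gap is about 0.4 m in expectation, while the gap within each hardware generation is close to zero. The apparent benefit of automated calibration comes entirely from which drones received it.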

This highlights the critical need for rigorous experimental design. When testing new navigation systems, for example, it is essential to ensure that all other potentially influential factors are kept as constant as possible across the groups being compared. This includes environmental conditions, payload weight, battery charge levels, and even the specific flight controller firmware version. Failure to account for these factors can lead to conclusions that are not scientifically sound, potentially delaying the adoption of genuinely beneficial technologies or, worse, leading to the implementation of ineffective solutions.

Identifying and Controlling for Confounders

The first step in addressing confounding variables is to identify potential confounders. Doing so requires a deep understanding of the system being studied and of the factors that could plausibly influence the observed relationship, and it often involves brainstorming and consulting with domain experts. Once potential confounders are identified, researchers can employ various strategies to control for them.

One common method is randomization. In a randomized controlled trial, participants (in this case, drones or flight tests) are randomly assigned to different treatment groups. This helps ensure that, on average, potential confounding variables are distributed evenly across the groups, minimizing their influence. For example, when comparing two different stabilization algorithms, randomly assigning which drones receive which algorithm helps to distribute any inherent differences in the drones themselves (e.g., slight variations in motor performance, manufacturing tolerances) evenly between the groups.
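Random assignment is simple to implement. The following is a minimal sketch, assuming a hypothetical fleet of twelve drones and two arms named `algorithm_A` and `algorithm_B`; the function name `randomize_assignment` and the drone identifiers are made up for illustration.

```python
import random

def randomize_assignment(drone_ids, arms=("algorithm_A", "algorithm_B"), seed=None):
    """Randomly assign each drone to one arm, keeping arm sizes balanced."""
    rng = random.Random(seed)
    shuffled = list(drone_ids)
    rng.shuffle(shuffled)
    # Deal the shuffled drones round-robin so arm sizes differ by at most one.
    return {drone: arms[i % len(arms)] for i, drone in enumerate(shuffled)}

# Hypothetical fleet identifiers.
fleet = [f"drone-{i:02d}" for i in range(1, 13)]
assignment = randomize_assignment(fleet, seed=42)

for drone in sorted(assignment):
    print(drone, "->", assignment[drone])
```

Fixing the seed makes the assignment reproducible for the test log. Shuffling before dealing means neither the engineer nor the order of the fleet list decides which drone gets which algorithm.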

Another strategy is matching. This involves pairing units in the treatment and control groups that are similar with respect to the confounding variable. For example, if we suspect that ambient temperature might confound the results of a battery performance test, we could pair each test flight in one group with a flight in the other group that used the same battery type and took place at a similar ambient temperature, then compare results within pairs.
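One simple way to build such pairs is greedy nearest-neighbor matching. The sketch below is one possible approach rather than a prescribed method: the record fields (`id`, `battery`, `temp_c`), the 2 °C tolerance, and the sample records are all assumptions made for illustration.

```python
def match_pairs(treated, controls, caliper_c=2.0):
    """Greedy 1:1 matching on battery type and ambient temperature.

    Each unit is a dict with keys 'id', 'battery', and 'temp_c'.
    A control is eligible only if it has the same battery type and its
    temperature is within `caliper_c` degrees of the treated unit.
    """
    available = list(controls)
    pairs = []
    for t in treated:
        candidates = [c for c in available
                      if c["battery"] == t["battery"]
                      and abs(c["temp_c"] - t["temp_c"]) <= caliper_c]
        if not candidates:
            continue  # unmatched tests are left out of the paired comparison
        best = min(candidates, key=lambda c: abs(c["temp_c"] - t["temp_c"]))
        available.remove(best)  # each control is used at most once
        pairs.append((t["id"], best["id"]))
    return pairs

# Hypothetical test records.
treated = [
    {"id": "T1", "battery": "LiPo-4S", "temp_c": 21.0},
    {"id": "T2", "battery": "LiPo-4S", "temp_c": 5.0},
    {"id": "T3", "battery": "Li-ion", "temp_c": 30.0},
]
controls = [
    {"id": "C1", "battery": "LiPo-4S", "temp_c": 20.5},
    {"id": "C2", "battery": "LiPo-4S", "temp_c": 22.5},
    {"id": "C3", "battery": "Li-ion", "temp_c": 29.0},
    {"id": "C4", "battery": "LiPo-4S", "temp_c": 12.0},
]

pairs = match_pairs(treated, controls)
print(pairs)
```

Here `T2` finds no control within tolerance and is dropped. That is the usual cost of matching: a tighter tolerance gives cleaner comparisons but fewer usable pairs.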

Stratification is another technique. This involves dividing the study population into subgroups (strata) based on the confounding variable and then analyzing the relationship between the independent and dependent variables within each stratum. For example, if we are testing the effect of different propeller designs on flight duration and suspect that wind speed is a confounder, we could stratify our analysis by low wind conditions and high wind conditions. This allows us to see the effect of the propellers independently under different wind regimes.
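A stratified comparison can be sketched in a few lines. The example below assumes a hypothetical 5 m/s threshold between low and high wind, two propeller designs labeled `A` and `B`, and a handful of made-up flight records; none of these values come from real tests.

```python
from collections import defaultdict
from statistics import mean

def stratified_effect(records, threshold_ms=5.0):
    """Return {stratum: mean duration of design B minus design A}.

    `records` holds (design, wind_speed_ms, duration_min) tuples.
    """
    groups = defaultdict(list)
    for design, wind_ms, duration_min in records:
        stratum = "low_wind" if wind_ms < threshold_ms else "high_wind"
        groups[(stratum, design)].append(duration_min)
    effects = {}
    for stratum in ("low_wind", "high_wind"):
        a = groups.get((stratum, "A"))
        b = groups.get((stratum, "B"))
        if a and b:  # skip strata that lack one of the designs
            effects[stratum] = mean(b) - mean(a)
    return effects

# Made-up records: design B happens to be flown more often in high wind.
records = [
    ("A", 2.0, 24.0), ("A", 3.0, 26.0), ("A", 8.0, 19.0),
    ("B", 2.5, 27.0), ("B", 7.0, 21.0), ("B", 9.0, 23.0),
]

effects = stratified_effect(records)
pooled = (mean(d for design, _, d in records if design == "B")
          - mean(d for design, _, d in records if design == "A"))

print(effects)
print(f"pooled difference: {pooled:.2f} min")
```

In this toy data set the pooled difference understates the advantage seen within each wind stratum, because design B was flown more often in high wind, where every design flies for less time.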

Finally, statistical adjustment is often employed in the analysis phase. This involves using statistical models, such as regression analysis, to mathematically account for the influence of confounding variables. By including the confounding variable as a covariate in the model, its effect can be separated from that of the variable of interest, allowing for a clearer estimation of the relationship between the primary independent and dependent variables. For instance, if we are evaluating the impact of different sensor fusion techniques on navigation accuracy and have identified atmospheric pressure as a potential confounder, we can include atmospheric pressure measurements as a covariate in our regression model to adjust for its effect. Adjustment can only account for confounders that were actually measured, and its validity depends on the model describing their effect reasonably well.
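The effect of adding a covariate can be seen in a simulated example. The sketch below uses only the Python standard library and fits a two-predictor least-squares model by hand; in practice a regression library would normally be used instead. All numbers are assumptions chosen for illustration: a true technique effect of -0.3 m, a pressure effect of -0.05 m per hPa, and a new technique that happens to be used more often on high-pressure days.

```python
import random

def fit_two_predictors(x, z, y):
    """Ordinary least squares for y = b0 + b1*x + b2*z.

    Solves the normal equations on mean-centered data.
    Returns (b0, b1, b2).
    """
    n = len(y)
    mx, mz, my = sum(x) / n, sum(z) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    szz = sum((b - mz) ** 2 for b in z)
    sxz = sum((a - mx) * (b - mz) for a, b in zip(x, z))
    sxy = sum((a - mx) * (c - my) for a, c in zip(x, y))
    szy = sum((b - mz) * (c - my) for b, c in zip(z, y))
    det = sxx * szz - sxz ** 2
    b1 = (szz * sxy - sxz * szy) / det
    b2 = (sxx * szy - sxz * sxy) / det
    return my - b1 * mx - b2 * mz, b1, b2

# Simulated flights (all values hypothetical).
rng = random.Random(1)
technique, pressure, error = [], [], []
for _ in range(2000):
    p = rng.gauss(1013.0, 10.0)  # atmospheric pressure in hPa
    # The new technique (1.0) is more likely on high-pressure days.
    t = 1.0 if p + rng.gauss(0.0, 5.0) > 1013.0 else 0.0
    # True technique effect is -0.3 m; pressure also lowers the error.
    e = 2.0 - 0.3 * t - 0.05 * (p - 1013.0) + rng.gauss(0.0, 0.2)
    technique.append(t)
    pressure.append(p)
    error.append(e)

# Naive estimate: difference in mean error between the two techniques.
new = [e for t, e in zip(technique, error) if t == 1.0]
old = [e for t, e in zip(technique, error) if t == 0.0]
naive = sum(new) / len(new) - sum(old) / len(old)

# Adjusted estimate: coefficient on technique with pressure as a covariate.
_, adjusted, _ = fit_two_predictors(technique, pressure, error)

print(f"naive estimate: {naive:.2f} m")
print(f"adjusted estimate: {adjusted:.2f} m")
```

The naive comparison overstates the benefit of the new technique because it also absorbs the pressure effect. With pressure included as a covariate, the estimate lands close to the assumed true effect of -0.3 m.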

Confounding Variables in Navigation and Stabilization Systems

The intricate world of drone navigation and stabilization systems is particularly susceptible to confounding variables. The performance of GPS, inertial measurement units (IMUs), barometers, and other sensors is inherently influenced by a host of external and internal factors. When engineers and researchers are developing and testing new algorithms or hardware components for these systems, it’s essential to be acutely aware of these potential confounders.

Consider the development of an advanced waypoint navigation system. The independent variable might be which algorithm is used (new or baseline), and the dependent variable could be the deviation from the intended flight path. If the new algorithm is tested on a clear, sunny day with minimal wind and then compared to a baseline algorithm tested on a windy, overcast day, “weather conditions” becomes a powerful confounding variable. The new algorithm might appear superior not because of any inherent algorithmic advantage, but because it was tested in far more forgiving environmental conditions.

GPS Accuracy and Environmental Factors

GPS accuracy is a prime example of where confounding variables can easily creep in. While the number of visible satellites and signal strength are primary determinants of GPS accuracy, other factors can exert significant influence. For instance, urban canyons, dense foliage, and even atmospheric disturbances can degrade GPS signals. If a new GPS receiver module is tested in an open field with clear sky visibility, while the module it is compared against was evaluated in a dense urban environment, the new module may appear far more accurate, not because of any difference between the modules, but because signal multipath and obstruction confound the comparison. For the same reason, open-field results alone say little about how the module will perform once deployed among tall buildings.

When evaluating the impact of different IMU calibration techniques on flight stabilization, the ambient temperature can be a confounding variable. IMUs can exhibit temperature drift, meaning their readings can change as their temperature fluctuates. If one calibration technique is evaluated at a stable, cool temperature and another after prolonged operation at higher temperatures, any observed differences in stabilization performance could be due to temperature-induced drift rather than to a difference in the calibration methods’ effectiveness.

The Influence of Power Systems on Sensor Performance

The electrical power supplied to navigation and stabilization components can also introduce confounding variables. Fluctuations in voltage, electrical noise, or intermittent power interruptions can affect sensor readings and the stability of the flight controller. For example, if we are comparing two different obstacle avoidance sensors, and one is powered by a dedicated, clean power line while the other shares a power source with high-current motors that cause voltage dips, the observed performance differences may be attributable to the power supply rather than the sensors themselves.

Similarly, the battery’s state of charge can influence the performance of various electronic components. As a battery discharges, its voltage can drop, potentially affecting the stability and accuracy of sensors. If a comparison of two flight control algorithms is performed, with one tested using a fully charged battery and the other tested as the battery approaches depletion, the observed differences in responsiveness or precision might be confounded by the varying power levels.

Interplay of Software and Hardware

In complex flight technology, software algorithms and hardware capabilities are inextricably linked. It can be challenging to isolate the precise contribution of a software enhancement from the underlying hardware’s capabilities. For instance, if a new flight path planning algorithm is introduced that promises greater efficiency, and it’s tested on drones equipped with the latest, most powerful processors, its perceived advantage might be amplified by the superior processing power. A fairer comparison would involve testing the algorithm on a range of hardware configurations to discern its true algorithmic benefit, independent of the processing capabilities.

When assessing the impact of a new stabilization firmware update, the physical condition of the drone’s motors and propellers can be a confounding factor. A drone with worn-out propellers or slightly imbalanced motors might exhibit poorer stabilization even with an improved firmware, simply due to the mechanical inefficiencies. Therefore, controlling for the mechanical state of the drone is crucial for a valid comparison of firmware performance.

By meticulously identifying and controlling for these confounding variables – through robust experimental design, randomization, matching, stratification, and statistical adjustment – engineers and researchers can gain a clearer, more accurate understanding of the true performance and impact of the flight technologies they are developing. This rigorous approach is fundamental to advancing the safety, efficiency, and capabilities of unmanned aerial systems.