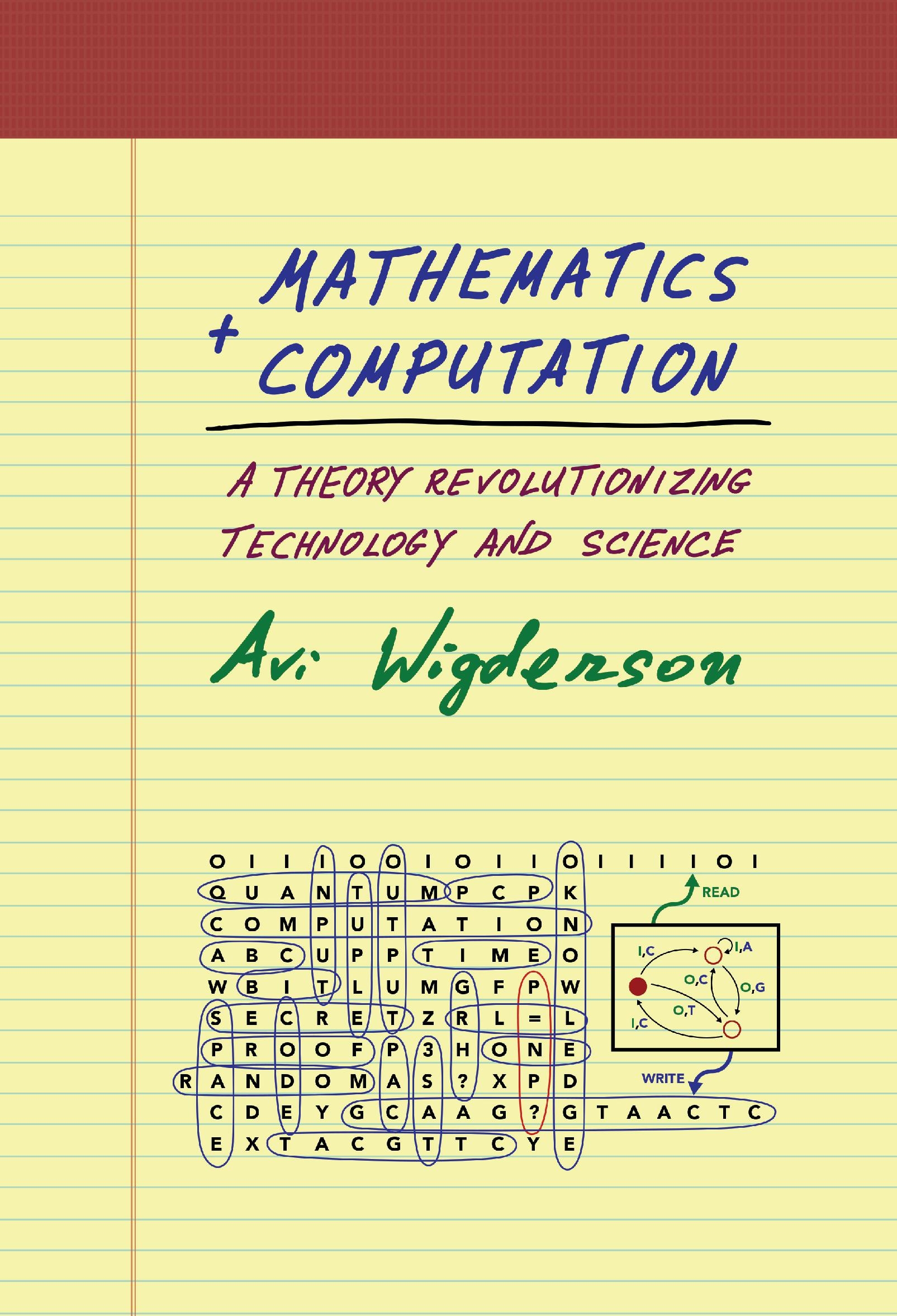

The Foundation of Algorithmic Thinking

At its core, a computation in mathematics is the process of performing a calculation or solving a problem using a defined set of rules and operations. It’s about transforming input data into output data through a sequence of discrete, unambiguous steps. This fundamental concept underpins vast areas of mathematics, from basic arithmetic to the most complex theoretical computer science. Without computation, the abstract world of numbers and symbols would remain inert, unable to be manipulated or understood.

The essence of computation lies in its methodical nature. Imagine a recipe: it’s a sequence of instructions—add flour, whisk eggs, bake at 350 degrees. Each step is precise and, when followed correctly, leads to a predictable outcome: a cake. Mathematical computation operates on a similar principle, but with the language of numbers and logic. For example, the computation of $2 + 3$ involves the rule of addition applied to the numbers 2 and 3, yielding the result 5. This seems simple, but it’s a powerful illustration of how rules can be applied to achieve a desired result.

The history of computation is inextricably linked to the development of mathematics itself. Early civilizations developed counting systems and methods for performing basic calculations to manage trade, agriculture, and astronomical observations. The formulation of algorithms, systematic procedures for solving problems, by mathematicians such as Euclid and, later, Al-Khwarizmi (whose name is the origin of the word “algorithm”) marked significant steps forward. Al-Khwarizmi’s work on algebra, for instance, set out systematic rules for solving linear and quadratic equations, laying groundwork for the algebraic manipulation that is a hallmark of modern computation.

In the modern era, computation has been revolutionized by the advent of digital computers. These machines are designed to execute computations at incredible speeds and with remarkable accuracy. However, the underlying mathematical principles of computation existed long before their physical realization. The abstract concept of an algorithm, a step-by-step procedure, is the theoretical bedrock upon which all computational systems are built. This abstraction is what allows us to analyze the complexity, efficiency, and limitations of any computational process, regardless of the hardware used to perform it.

The Language of Computation: Algorithms and Operations

The building blocks of any computation are algorithms and operations. An algorithm is a finite, well-defined sequence of instructions that, when executed, eventually terminates and produces a result. It’s the “how-to” guide for solving a particular problem. The Euclidean algorithm for finding the greatest common divisor (GCD) of two numbers is a classic example. It repeatedly divides one number by the other and replaces the pair with the divisor and the remainder, stopping when the remainder is zero; the last non-zero remainder is the GCD. For 48 and 18, the successive remainders are 12, 6, and 0, so the GCD is 6. This algorithm is not specific to any particular set of numbers; it’s a general procedure applicable to any pair of non-negative integers that are not both zero.
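The Euclidean algorithm described above fits in a few lines. This is a minimal Python sketch; the function name `gcd` and the decision to reject inputs that are both zero are illustrative assumptions (Python’s standard library already provides `math.gcd`).

```python
def gcd(a: int, b: int) -> int:
    """Return the greatest common divisor of two non-negative integers, not both zero."""
    if a < 0 or b < 0 or (a == 0 and b == 0):
        raise ValueError("expected non-negative integers, not both zero")
    # Replace (a, b) with (b, a mod b). The remainder shrinks on every step,
    # so the loop is guaranteed to terminate.
    while b != 0:
        a, b = b, a % b
    # Once the remainder is zero, a holds the last non-zero remainder.
    return a


print(gcd(48, 18))  # 6 (remainders: 12, 6, 0)
```

The same function works for any valid pair, which is exactly the generality the paragraph points to: nothing in the loop depends on the particular numbers.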

Operations are the individual steps within an algorithm. These are the basic actions performed on data. In arithmetic, operations include addition, subtraction, multiplication, and division. In logic, operations include AND, OR, NOT, and XOR. As mathematics advanced, more complex operations were developed, such as differentiation and integration in calculus, or matrix multiplication in linear algebra. Each operation has a precise definition of what it does and what kind of input it expects.

The efficiency of a computation is often measured by the number of operations it requires and the cost of each of those operations. An algorithm that requires fewer or simpler operations is generally considered more efficient. This is a critical consideration in computer science, where algorithms must handle vast amounts of data. Analyzing an algorithm’s “time complexity” and “space complexity”, which describe how its running time and memory use grow with the size of its input, is a key part of understanding its practical viability.
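One way to make “number of operations” concrete is simply to count them. The Python sketch below counts how many elements two search algorithms inspect while looking for a value in a sorted list: a linear scan inspects up to n elements, while binary search halves the remaining range on each probe and needs only about log2(n) probes. Both use a constant amount of extra memory. The function names, and the use of probe counts as a stand-in for running time, are illustrative assumptions.

```python
def linear_search(items, target):
    """Scan left to right; return (index or -1, number of elements inspected)."""
    probes = 0
    for i, value in enumerate(items):
        probes += 1
        if value == target:
            return i, probes
    return -1, probes


def binary_search(items, target):
    """Search a sorted list; return (index or -1, number of elements inspected)."""
    lo, hi = 0, len(items) - 1
    probes = 0
    while lo <= hi:
        mid = (lo + hi) // 2
        probes += 1  # one element inspected per iteration
        if items[mid] == target:
            return mid, probes
        if items[mid] < target:
            lo = mid + 1  # target can only be in the upper half
        else:
            hi = mid - 1  # target can only be in the lower half
    return -1, probes


for n in (1_000, 1_000_000):
    data = list(range(n))
    target = n - 1  # worst case for the linear scan
    print(n, linear_search(data, target)[1], binary_search(data, target)[1])
```

For a million elements the linear scan inspects a million of them in this worst case, while binary search needs about twenty probes. That growth rate, rather than the speed of any particular machine, is what time-complexity analysis captures.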

Furthermore, the concept of computability is central to understanding the limits of computation. Not all mathematical problems can be solved by an algorithm. Turing’s proof that the halting problem is undecidable showed that no algorithm can determine, for every program and input, whether that program eventually stops, and Gödel’s incompleteness theorems established related limits on what formal systems can prove. These theoretical findings have profound implications for which problems can be automated at all, and which may always depend on human insight rather than a single mechanical procedure.

Types of Computations: From Arithmetic to Symbolic Manipulation

Computations can be broadly categorized based on the type of data they operate on and the nature of the operations involved.

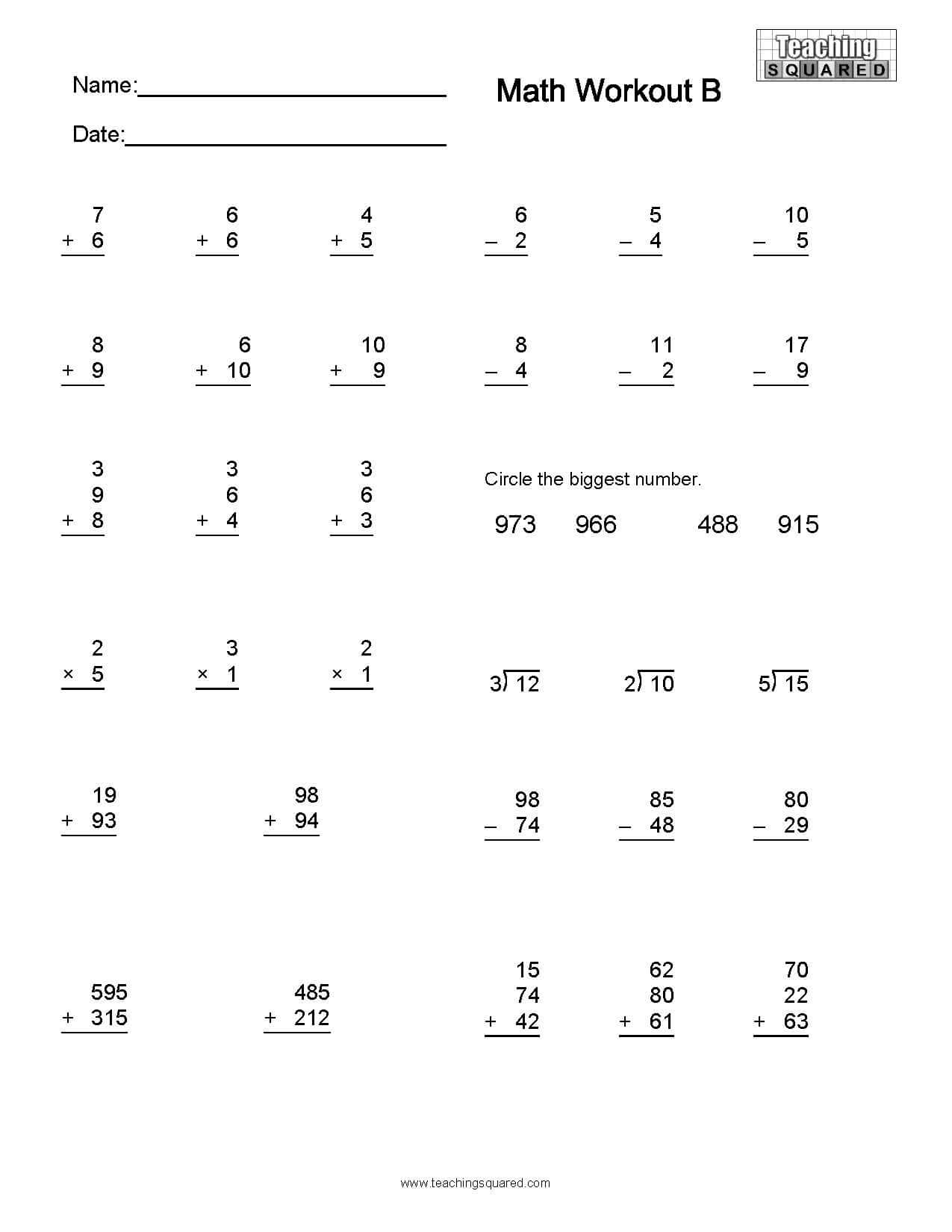

Arithmetic Computation

This is perhaps the most intuitive form of computation, dealing with numerical quantities. It involves performing operations like addition, subtraction, multiplication, and division on numbers. From simple sums in primary school to complex financial modeling and scientific simulations, arithmetic computation is ubiquitous. The precision of arithmetic computation is crucial in many fields, where even minor errors can have significant consequences. For example, in engineering, precise calculations are needed for structural integrity, and in physics, they are essential for validating theories.

Symbolic Computation

Symbolic computation, also known as symbolic mathematics or algebraic computation, deals with mathematical expressions rather than just their numerical values. Instead of calculating $2+3=5$, symbolic computation might manipulate expressions like $x^2 + 2x + 1$ to factor it into $(x+1)^2$. This is fundamental to areas like algebra, calculus, and differential equations. Computer algebra systems (CAS) are software tools that perform symbolic computations. They can solve equations, simplify expressions, find derivatives and integrals, and perform operations on matrices represented by symbols. This form of computation is invaluable in theoretical mathematics, physics, and engineering where understanding the structure and relationships within equations is as important as finding numerical solutions.
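To see what manipulating expressions rather than values means in practice, here is a minimal Python sketch of one CAS task, symbolic differentiation. Expressions are stored as nested tuples, an assumption made for brevity (real systems such as SymPy use far richer representations), and the sum, product, and power rules are applied to the structure of the expression. The names `d`, `simplify`, and `show` are hypothetical. Differentiating $x^2 + 2x + 1$ yields $2x + 2$ without ever substituting a number for $x$.

```python
import operator

# Expressions are nested tuples ("+", a, b), ("*", a, b) or ("^", base, n),
# where n is an integer constant; leaves are int constants or variable names.
FOLD = {"+": operator.add, "*": operator.mul, "^": operator.pow}


def simplify(expr):
    """Apply a few identities bottom-up: constant folding, x+0, x*0, x*1, x^0, x^1."""
    if not isinstance(expr, tuple):
        return expr
    op, a, b = expr
    a, b = simplify(a), simplify(b)
    if isinstance(a, int) and isinstance(b, int) and (op != "^" or b >= 0):
        return FOLD[op](a, b)
    if op == "+":
        if a == 0:
            return b
        if b == 0:
            return a
    elif op == "*":
        if a == 0 or b == 0:
            return 0
        if a == 1:
            return b
        if b == 1:
            return a
    elif op == "^":
        if b == 0:
            return 1
        if b == 1:
            return a
    return (op, a, b)


def d(expr, var):
    """Differentiate expr with respect to the variable named var."""
    if isinstance(expr, int):
        return 0  # constant rule
    if isinstance(expr, str):
        return 1 if expr == var else 0  # other variables act as constants
    op, a, b = expr
    if op == "+":  # sum rule
        result = ("+", d(a, var), d(b, var))
    elif op == "*":  # product rule
        result = ("+", ("*", d(a, var), b), ("*", a, d(b, var)))
    elif op == "^":  # power rule; assumes the exponent b is an integer constant
        result = ("*", ("*", b, ("^", a, b - 1)), d(a, var))
    else:
        raise ValueError(f"unknown operator {op!r}")
    return simplify(result)


def show(expr):
    """Render an expression tree with explicit parentheses."""
    if not isinstance(expr, tuple):
        return str(expr)
    op, a, b = expr
    return f"({show(a)} {op} {show(b)})"


poly = ("+", ("+", ("^", "x", 2), ("*", 2, "x")), 1)  # x^2 + 2x + 1
print(show(poly))          # (((x ^ 2) + (2 * x)) + 1)
print(show(d(poly, "x")))  # ((2 * x) + 2)
```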

Logical Computation

Logical computation deals with propositions and truth values. It involves operations like AND, OR, and NOT, and is the foundation of Boolean algebra and digital circuit design. Every digital computer, at its most fundamental level, performs logical computations to process information. The decisions made by a computer program, the routing of data within a circuit, and the evaluation of complex conditions all rely on logical computation. Predicate logic, a more advanced form, allows for reasoning about properties of objects and relations between them, underpinning areas like artificial intelligence and formal verification.
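Because a propositional formula has only finitely many inputs, its behavior can be checked exhaustively by building a truth table. The Python sketch below does this to confirm De Morgan’s law, $\neg(p \land q) \equiv \neg p \lor \neg q$; the helper names are illustrative.

```python
from itertools import product


def truth_table(formula, n_inputs):
    """Evaluate a Boolean formula on every combination of True/False inputs."""
    return [(inputs, formula(*inputs)) for inputs in product((False, True), repeat=n_inputs)]


def not_and(p, q):
    return not (p and q)


def or_of_nots(p, q):
    return (not p) or (not q)


for (p, q), value in truth_table(not_and, 2):
    print(p, q, value)

# Two formulas are logically equivalent exactly when their truth tables match.
print("De Morgan holds:", truth_table(not_and, 2) == truth_table(or_of_nots, 2))
```

Exhaustive checking works here because two variables give only four rows; with n variables the table has $2^n$ rows, which is why larger verification problems need cleverer methods.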

Numerical Computation

While arithmetic computation deals with exact numerical values, numerical computation often involves approximations, especially when dealing with real numbers or iterative processes. Many mathematical problems, particularly in science and engineering, do not have exact analytical solutions and must be approximated using numerical methods. Techniques like numerical integration, solving differential equations numerically, and matrix factorization are all examples of numerical computation. The focus here is on achieving a desired level of accuracy and understanding the sources of approximation errors, such as round-off error and truncation error.
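Both error sources mentioned above show up in a few lines of Python. The sketch uses the trapezoidal rule, one simple numerical integration method chosen here for illustration, on $\int_0^1 x^2\,dx = 1/3$. For this integrand the rule’s truncation error is exactly $1/(6n^2)$ with $n$ subintervals, so it shrinks a hundredfold each time $n$ grows tenfold. The last lines show round-off error in binary floating point.

```python
import math


def trapezoid(f, a, b, n):
    """Approximate the integral of f over [a, b] using n equal-width trapezoids."""
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b))
    for i in range(1, n):
        total += f(a + i * h)
    return h * total


exact = 1 / 3  # integral of x^2 from 0 to 1
for n in (10, 100, 1000):
    approx = trapezoid(lambda x: x * x, 0.0, 1.0, n)
    # Truncation error: for x^2 on [0, 1] the rule overestimates by 1 / (6 n^2).
    print(n, approx, abs(approx - exact))

# Round-off error: 0.1 and 0.2 have no exact binary representation.
print(0.1 + 0.2 == 0.3)              # False
print(math.isclose(0.1 + 0.2, 0.3))  # True
```

Comparing floating-point results with a tolerance, as `math.isclose` does, is the usual way to account for round-off.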

Computation in the Digital Age: The Role of Computers

The advent of digital computers has transformed the landscape of computation. Computers are general-purpose machines that execute algorithms with unprecedented speed and reliability. They operate by manipulating binary digits (bits), which represent information as sequences of 0s and 1s. The fundamental operations performed by a computer are based on logic gates, which implement Boolean operations.
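How arithmetic reduces to Boolean operations can be shown with a simulated adder. In this Python sketch, a full adder is built from AND, OR, and XOR gates, and eight of them are chained so that each carry feeds the next bit, a design known as a ripple-carry adder. The gate functions and the 8-bit width are illustrative choices; real processors typically use faster carry-handling circuits, but the principle is the same.

```python
def and_gate(a, b):
    return a & b


def or_gate(a, b):
    return a | b


def xor_gate(a, b):
    return a ^ b


def full_adder(a, b, carry_in):
    """Add three bits using only gates; return (sum_bit, carry_out)."""
    partial = xor_gate(a, b)
    sum_bit = xor_gate(partial, carry_in)
    carry_out = or_gate(and_gate(a, b), and_gate(partial, carry_in))
    return sum_bit, carry_out


def ripple_carry_add(x, y, width=8):
    """Add two unsigned integers bit by bit, as a chain of full adders would."""
    carry = 0
    result = 0
    for i in range(width):
        a = (x >> i) & 1  # i-th bit of each operand
        b = (y >> i) & 1
        s, carry = full_adder(a, b, carry)
        result |= s << i
    return result, carry  # a final carry of 1 signals overflow beyond `width` bits


print(ripple_carry_add(23, 42))    # (65, 0)
print(ripple_carry_add(200, 100))  # (44, 1): 300 does not fit in 8 bits
```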

Modern computers execute computations through a complex interplay of hardware and software. The Central Processing Unit (CPU) is the “brain” of the computer, responsible for fetching instructions from memory, decoding them, and executing them. This execution involves performing the basic arithmetic and logical operations that constitute the computation. The CPU’s clock rate, measured in cycles per second (hertz), is one key determinant of computational power, together with how many instructions it completes per cycle and how many cores it has.
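The fetch-decode-execute cycle can be imitated in software. The Python sketch below interprets a made-up instruction set for a machine with a single accumulator register; the opcodes and their meanings are hypothetical and do not correspond to any real CPU. Each loop iteration fetches the instruction at the program counter, decodes its opcode, and executes it, which is enough to multiply two numbers by repeated addition.

```python
def run(code, data, max_steps=10_000):
    """Run a program for a hypothetical accumulator machine; return final memory."""
    memory = dict(data)  # named memory cells
    pc = 0               # program counter: index of the next instruction
    acc = 0              # accumulator register
    for _ in range(max_steps):
        opcode, operand = code[pc]  # fetch
        pc += 1
        # decode and execute
        if opcode == "LOAD":
            acc = memory[operand]
        elif opcode == "ADD":
            acc += memory[operand]
        elif opcode == "SUB":
            acc -= memory[operand]
        elif opcode == "STORE":
            memory[operand] = acc
        elif opcode == "JUMP":
            pc = operand
        elif opcode == "JUMP_IF_ZERO":
            if acc == 0:
                pc = operand
        elif opcode == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode {opcode!r}")
    raise RuntimeError("step limit reached without HALT")


# Multiply a by b using only loads, stores, additions and jumps.
program = [
    ("LOAD", "b"),          # 0: acc = b
    ("JUMP_IF_ZERO", 9),    # 1: finished when b reaches 0
    ("LOAD", "product"),    # 2
    ("ADD", "a"),           # 3: product += a
    ("STORE", "product"),   # 4
    ("LOAD", "b"),          # 5
    ("SUB", "one"),         # 6: b -= 1
    ("STORE", "b"),         # 7
    ("JUMP", 0),            # 8: repeat
    ("HALT", None),         # 9
]
memory = run(program, {"a": 6, "b": 7, "one": 1, "product": 0})
print(memory["product"])  # 42
```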

Software, in the form of programs and applications, provides the algorithms and instructions that the computer executes. High-level programming languages allow humans to express complex computational tasks in a more abstract and human-readable form. Programs written in these languages are either compiled into machine code, the low-level instructions that the CPU can execute directly, or run by an interpreter, which is itself a machine-code program. This abstraction layer is crucial for making computation accessible and manageable.
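Real compilers are vastly more involved, but the translation step can be sketched in miniature. In this Python sketch, the standard `ast` module parses an arithmetic expression, a small recursive function turns the resulting tree into instructions for a stack machine, and a second function executes them. The PUSH/APPLY instruction set is invented here for illustration and is not Python’s own bytecode.

```python
import ast
import operator

BINARY_OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul, "/": operator.truediv}
AST_SYMBOLS = {ast.Add: "+", ast.Sub: "-", ast.Mult: "*", ast.Div: "/"}


def compile_expr(source):
    """Translate an arithmetic expression into hypothetical stack-machine instructions."""
    instructions = []

    def emit(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            instructions.append(("PUSH", node.value))
        elif isinstance(node, ast.BinOp) and type(node.op) in AST_SYMBOLS:
            emit(node.left)   # operands first, operator last (postfix order)
            emit(node.right)
            instructions.append(("APPLY", AST_SYMBOLS[type(node.op)]))
        else:
            raise ValueError(f"unsupported syntax: {ast.dump(node)}")

    emit(ast.parse(source, mode="eval").body)
    return instructions


def execute(instructions):
    """Run the instructions on an operand stack and return the final value."""
    stack = []
    for opcode, arg in instructions:
        if opcode == "PUSH":
            stack.append(arg)
        else:  # "APPLY": pop two operands, push the result
            right = stack.pop()
            left = stack.pop()
            stack.append(BINARY_OPS[arg](left, right))
    return stack.pop()


code = compile_expr("2 * (3 + 4) - 5")
print(code)
# [('PUSH', 2), ('PUSH', 3), ('PUSH', 4), ('APPLY', '+'), ('APPLY', '*'), ('PUSH', 5), ('APPLY', '-')]
print(execute(code))  # 9
```

The parentheses in the source disappear in the compiled form: evaluation order is encoded entirely in the instruction sequence, which is the kind of detail a compiler handles so the programmer does not have to.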

The impact of computers on computation is profound. They have enabled the solution of problems that were previously intractable, fueled advancements in scientific research, revolutionized industries, and fundamentally changed how we interact with information and the world around us. From the complex simulations that design aircraft to the algorithms that power search engines, computation, as executed by computers, is at the heart of modern technological progress.

The Future of Computation: Beyond Traditional Models

The evolution of computation is far from over. Researchers are continually exploring new paradigms and technologies that push the boundaries of what is possible.

Quantum Computation

Quantum computation represents a radical departure from classical computation. Instead of bits, quantum computers use qubits, which can exist in a superposition of the 0 and 1 states rather than holding only one of them. Superposition, together with phenomena like entanglement, lets quantum algorithms solve certain problems far faster than any known classical method: Shor’s algorithm, for example, factors large integers in polynomial time, whereas the best known classical algorithms require super-polynomial time. While still in its nascent stages, quantum computation holds the promise of revolutionizing fields like cryptography, drug discovery, and materials science by tackling problems that are currently intractable.
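Superposition and entanglement can be made concrete with a tiny state-vector simulation. A classical program can track n qubits by storing $2^n$ amplitudes, one reason classical simulation of large quantum systems becomes infeasible. The Python sketch below (function names are hypothetical, not the API of any quantum SDK) applies a Hadamard gate and then a CNOT gate to two qubits starting in $|00\rangle$. The result is an entangled state in which measurement yields 00 or 11 with equal probability, but never 01 or 10.

```python
import math
import random
from collections import Counter

# A two-qubit state is four amplitudes for the basis states |00>, |01>, |10>, |11>
# (left bit = qubit 0, right bit = qubit 1).
S = 1 / math.sqrt(2)


def hadamard_q0(state):
    """Apply a Hadamard gate to qubit 0, turning |0> into an equal superposition."""
    a00, a01, a10, a11 = state
    return [S * (a00 + a10), S * (a01 + a11), S * (a00 - a10), S * (a01 - a11)]


def cnot_q0_q1(state):
    """Flip qubit 1 when qubit 0 is 1, i.e. swap the |10> and |11> amplitudes."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


def measure(state, shots, rng):
    """Sample measurement outcomes, each with probability |amplitude|^2."""
    probabilities = [abs(a) ** 2 for a in state]
    return rng.choices(["00", "01", "10", "11"], weights=probabilities, k=shots)


bell = cnot_q0_q1(hadamard_q0([1, 0, 0, 0]))  # start in |00>
print([round(abs(a) ** 2, 3) for a in bell])   # [0.5, 0.0, 0.0, 0.5]
print(Counter(measure(bell, 1000, random.Random(0))))  # only '00' and '11' appear
```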

Neuromorphic Computing

Inspired by the structure and function of the human brain, neuromorphic computing aims to build hardware that mimics biological neural networks. These systems process information in a highly parallel and distributed manner, making them potentially very efficient for tasks involving pattern recognition, learning, and adaptation, such as those common in artificial intelligence. Traditional von Neumann architectures keep the processor and memory separate and spend time and energy moving data between them; neuromorphic chips instead place computation and memory close together, reducing energy consumption and improving processing speed for specific workloads.

Distributed and Cloud Computing

The rise of distributed and cloud computing has fundamentally changed how and where computations are performed. Instead of relying solely on a single machine, computations can be spread across vast networks of interconnected computers. Cloud computing platforms provide on-demand access to computing resources, allowing individuals and organizations to scale their computational power as needed without investing in expensive hardware. This paradigm shift has democratized access to high-performance computing and enabled the development of new applications and services that would have been impossible just a few decades ago.
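The split-process-combine pattern behind many distributed computations can be sketched on a single machine. In this Python sketch, threads from the standard `concurrent.futures` module stand in for separate networked computers, an assumption made to keep the example self-contained. Because of CPython’s global interpreter lock, it shows the structure of the work rather than a real speedup. The function names are illustrative.

```python
from concurrent.futures import ThreadPoolExecutor


def partial_sum_of_squares(chunk):
    """The work one worker does independently on its share of the data."""
    return sum(x * x for x in chunk)


def distributed_sum_of_squares(data, workers=4):
    """Split data into chunks, process them concurrently, then combine the results."""
    if not data:
        return 0
    size = -(-len(data) // workers)  # ceiling division so no element is dropped
    chunks = [data[i:i + size] for i in range(0, len(data), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(partial_sum_of_squares, chunks))
    return sum(partials)  # combine step


print(distributed_sum_of_squares(list(range(1, 1001))))  # 333833500
```

The same shape (partition the input, compute partial results independently, merge them) carries over when the workers are machines in a cluster; what changes is that data must then travel over a network.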

The concept of computation, from its foundational mathematical roots to its cutting-edge technological manifestations, remains a vibrant and dynamic field. As our understanding of mathematics deepens and our technological capabilities advance, the ways in which we define, perform, and utilize computations will continue to evolve, opening up new frontiers of discovery and innovation.