The concept of a “vertex” is fundamental to understanding how shapes are defined and manipulated, not just in traditional geometry, but increasingly in advanced technological domains like drone-based mapping, autonomous navigation, and remote sensing. In its simplest definition, a vertex is a point where two or more edges meet, or where several faces of a solid figure intersect. It represents a precise spatial coordinate, a cornerstone upon which complex digital representations of the physical world are built.

The Fundamental Building Blocks of Digital Geometry

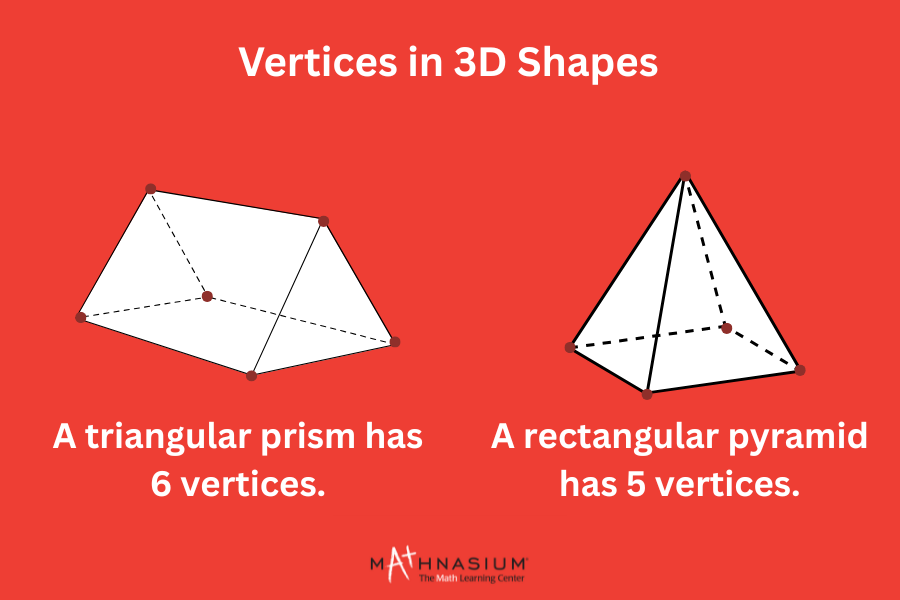

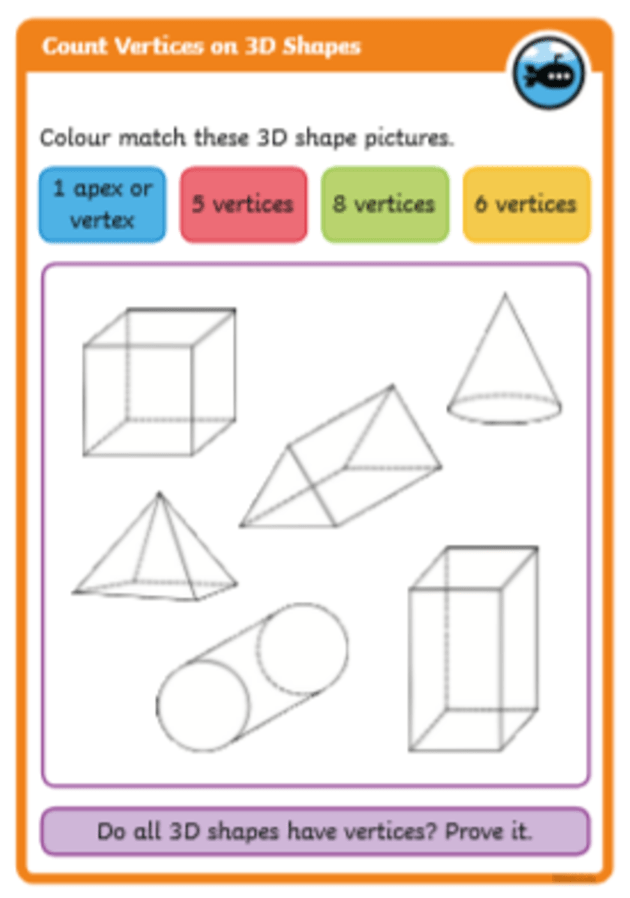

At its core, a vertex is a singular point in space, often represented by a set of coordinates—typically (x, y, z) in a 3D environment. Imagine a cube: each of its eight corners is a vertex. These vertices are connected by edges (the lines forming the sides of the cube), and these edges enclose faces (the flat surfaces of the cube). This triad of vertices, edges, and faces forms the basis of polygonal modeling, a ubiquitous method for representing three-dimensional objects in computer graphics and geospatial applications.
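To make the cube example concrete, here is a minimal sketch in Python. The variable names and the unit-cube coordinates are illustrative assumptions rather than any particular modeling library's format. It lists the eight vertices, derives the edges and faces from them, and checks the counts against Euler's formula for convex polyhedra.

```python
from itertools import product

# The eight corners of a unit cube: every combination of 0/1 for (x, y, z).
vertices = list(product((0, 1), repeat=3))

# Two vertices share an edge when they differ in exactly one coordinate.
edges = [
    (i, j)
    for i in range(len(vertices))
    for j in range(i + 1, len(vertices))
    if sum(a != b for a, b in zip(vertices[i], vertices[j])) == 1
]

# Each face groups the four vertices that share one fixed coordinate value.
# (The indices are unordered here; a renderer would need them in cyclic order.)
faces = [
    [i for i, v in enumerate(vertices) if v[axis] == value]
    for axis in range(3)
    for value in (0, 1)
]

# Euler's formula for a convex polyhedron: V - E + F = 2.
euler_characteristic = len(vertices) - len(edges) + len(faces)
print(len(vertices), len(edges), len(faces), euler_characteristic)
```

Note that the edges and faces store only vertex indices, not coordinates. Polygonal mesh formats commonly follow this pattern, so moving one vertex automatically moves every edge and face that references it.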

While the physical world is continuous, digital systems must approximate it using discrete data points, and vertices serve as those points of discretization. By defining the precise location of key points on an object’s surface, we can construct a mesh (a network of connected vertices, edges, and faces) that approximates the object’s form as closely as the sampling density allows. This digital geometry is not merely an abstract concept; it is the language through which drones perceive, interpret, and interact with the environment. Without robust handling of vertices, the sophisticated applications we associate with modern drone technology would be impossible: vertices are the initial data points that enable everything from detailed 3D models to real-time obstacle avoidance.

Vertices in Drone-Based 3D Modeling and Mapping

The power of drones in creating high-fidelity 3D models of the environment hinges directly on the accurate capture and processing of vertices. This capability is at the heart of photogrammetry and LiDAR scanning, two primary methods employed by drones for spatial data acquisition.

Photogrammetry and Point Clouds

Photogrammetry involves capturing multiple overlapping images of an area or object from various angles. Specialized software then analyzes these images to identify common features across different viewpoints. Each identifiable feature, which often corresponds to a distinct point or “vertex” on the object’s surface, is triangulated in 3D space. The result is a dense cloud of points, known as a point cloud, where each point is a vertex with its own unique (x, y, z) coordinates. The density and accuracy of this point cloud directly determine the detail and precision of the subsequent 3D model. Drones equipped with high-resolution cameras are ideal platforms for this, rapidly covering large areas to generate millions, even billions, of these individual vertex points.
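The triangulation step can be illustrated with a simplified two-view sketch. It assumes the camera centers and the viewing-ray directions toward a matched feature are already known, and it uses the midpoint method: find the closest points on the two rays and average them. The function name and the sample coordinates are hypothetical. Production photogrammetry software typically refines many views jointly instead of working with a single pair of rays.

```python
def dot(u, v):
    """Dot product of two 3D tuples."""
    return sum(a * b for a, b in zip(u, v))


def triangulate_midpoint(c1, d1, c2, d2):
    """Return the point midway between the closest points of two 3D rays.

    c1, c2 are camera centers; d1, d2 are ray directions toward the feature.
    """
    w = tuple(p - q for p, q in zip(c1, c2))
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; the point cannot be triangulated")
    s = (b * e - c * d) / denom  # parameter along the first ray
    t = (a * e - b * d) / denom  # parameter along the second ray
    p1 = tuple(ci + s * di for ci, di in zip(c1, d1))
    p2 = tuple(ci + t * di for ci, di in zip(c2, d2))
    return tuple((u + v) / 2 for u, v in zip(p1, p2))


# Two camera positions 4 m apart, both observing a feature at (1, 2, 5).
point = triangulate_midpoint((0, 0, 0), (1, 2, 5), (4, 0, 0), (-3, 2, 5))
print(point)
```

With noisy image measurements the two rays rarely intersect exactly, which is why the midpoint of their closest approach is used as the estimate.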

From Points to Meshes

Once a point cloud is generated, the next step in creating a usable 3D model is typically to convert these individual vertices into a cohesive surface representation, or mesh. Meshing algorithms connect neighboring vertices with edges, and those edges bound triangular or polygonal faces. This process transforms a disparate collection of points into a continuous surface that can be rendered, measured, and navigated. For instance, in reconstructing a building, the point cloud might contain millions of vertices sampled from its bricks, window frames, and roof tiles; meshing connects these points to form the visible surfaces of the building.
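As a rough illustration of how points become faces, the sketch below meshes the simplest possible case: heights sampled on a regular grid, as in a terrain raster. The regular grid and the `grid_mesh` helper are assumptions made for the example. Unstructured point clouds need more involved surface-reconstruction methods, such as Delaunay-based triangulation.

```python
def grid_mesh(heights):
    """Build (vertices, triangles) from a 2D list of heights on a unit grid.

    Each grid cell is split into two triangles that index into `vertices`.
    """
    rows, cols = len(heights), len(heights[0])
    vertices = [
        (float(c), float(r), float(heights[r][c]))
        for r in range(rows)
        for c in range(cols)
    ]
    triangles = []
    for r in range(rows - 1):
        for c in range(cols - 1):
            i = r * cols + c  # index of the cell's corner at (c, r)
            # Both triangles are wound counter-clockwise when seen from above.
            triangles.append((i, i + 1, i + cols))
            triangles.append((i + 1, i + cols + 1, i + cols))
    return vertices, triangles


# A 3 x 3 height grid with a single raised point in the middle.
vertices, triangles = grid_mesh([[0, 0, 0], [0, 1, 0], [0, 0, 0]])
print(len(vertices), "vertices,", len(triangles), "triangles")
```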

Applications Driven by Vertex Data

The accurate capture and reconstruction of objects using vertices unlock a multitude of applications across various industries:

- Architecture, Engineering, and Construction (AEC): Drones create precise “digital twins” of construction sites, buildings, and infrastructure. These models, built from countless vertices, allow for progress monitoring, volumetric calculations (e.g., earthworks), clash detection, and accurate as-built documentation.

- Agriculture: By mapping terrain and plant structures, vertices can define the topography of fields or the canopy structure of crops. Multispectral data attached to these vertices can reveal crop health, irrigation needs, or pest infestations at fine spatial resolution.

- Infrastructure Inspection: Detailed 3D models of bridges, power lines, wind turbines, and communication towers are generated. Specific points of interest, cracks, or wear can be identified and measured with high precision, as these anomalies manifest as unique vertex patterns or deviations from an expected surface.

- Environmental Monitoring: Monitoring erosion, mapping flood plains, tracking glacial retreat, or analyzing forest density all rely on capturing and comparing vertex data over time. Changes in the landscape are quantifiable through shifts in vertex positions.
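The volumetric calculations mentioned for earthworks follow directly from vertex data. The sketch below assumes a triangulated surface with no overhangs that sits above a flat reference plane. For each triangle it multiplies the footprint area by the mean vertex height, which is exact for a piecewise-linear surface. The function name and the pyramid-shaped "stockpile" are made up for illustration.

```python
def volume_above_base(vertices, triangles, base_z=0.0):
    """Volume between a triangulated surface and the plane z = base_z."""
    total = 0.0
    for i, j, k in triangles:
        (x1, y1, z1), (x2, y2, z2), (x3, y3, z3) = (
            vertices[i], vertices[j], vertices[k]
        )
        # Area of the triangle's footprint in the horizontal plane.
        area_xy = abs((x2 - x1) * (y3 - y1) - (x3 - x1) * (y2 - y1)) / 2
        # Mean height of the three vertices above the reference plane.
        mean_height = (z1 + z2 + z3) / 3 - base_z
        total += area_xy * mean_height
    return total


# A pyramid-shaped stockpile: 2 m x 2 m base with a 3 m apex in the middle.
pile_vertices = [(0, 0, 0), (2, 0, 0), (2, 2, 0), (0, 2, 0), (1, 1, 3)]
pile_triangles = [(0, 1, 4), (1, 2, 4), (2, 3, 4), (3, 0, 4)]
pile_volume = volume_above_base(pile_vertices, pile_triangles)
print(pile_volume)  # matches (1/3) * base area * height = 4 cubic metres
```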

Vertices for Autonomous Navigation and Obstacle Avoidance

Beyond static 3D modeling, vertices play an equally critical role in enabling drones to navigate autonomously and avoid collisions in dynamic environments. This involves real-time processing and interpretation of spatial data.

Simultaneous Localization and Mapping (SLAM)

For autonomous drones, especially in GPS-denied environments, Simultaneous Localization and Mapping (SLAM) is crucial. SLAM algorithms allow a drone to build a map of an unknown environment while simultaneously tracking its own position within that newly created map. In visual SLAM, the drone’s cameras identify unique, robust features—effectively, salient vertices—in its surroundings. By tracking how these vertices move across consecutive video frames, the drone can infer its own motion (localization) and update its understanding of the environment’s structure (mapping). LiDAR-based SLAM systems directly capture clouds of vertices, using these points to construct dense maps and estimate the drone’s pose within them.
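A full SLAM pipeline is well beyond a short example, but the core idea of inferring motion from tracked features can be sketched under strong simplifying assumptions: the landmarks are static, the feature correspondences are already known, and the drone translates without rotating. The helper names below are hypothetical. In this toy setting, each landmark's position relative to the drone shifts by exactly the opposite of the drone's own motion.

```python
def estimate_translation(prev_obs, curr_obs):
    """Estimate drone translation from landmark positions seen in its own frame.

    prev_obs[i] and curr_obs[i] are the same landmark observed before and
    after the move. Averaging over landmarks damps measurement noise.
    """
    n = len(prev_obs)
    return tuple(
        sum(p[axis] - c[axis] for p, c in zip(prev_obs, curr_obs)) / n
        for axis in range(3)
    )


def to_map_frame(drone_position, observation):
    """Place an observed landmark into the map, given the drone's position."""
    return tuple(d + o for d, o in zip(drone_position, observation))


# Three landmarks as seen from the starting pose, then after the drone moves.
prev_obs = [(5.0, 0.0, 1.0), (0.0, 4.0, 2.0), (3.0, 3.0, 0.0)]
curr_obs = [(4.0, -0.5, 1.0), (-1.0, 3.5, 2.0), (2.0, 2.5, 0.0)]

motion = estimate_translation(prev_obs, curr_obs)   # localization step
landmark = to_map_frame(motion, curr_obs[0])        # mapping step
print(motion, landmark)
```

Real systems must also estimate rotation, reject mismatched features, and correct accumulated drift, which is where most of the algorithmic complexity lies.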

Environmental Representation for Autonomy

For intelligent navigation, drones need a simplified, yet accurate, representation of their surroundings. This often involves converting raw sensor data (point clouds) into more abstract geometric primitives, which are still ultimately defined by vertices. For example, a large, flat surface might be represented by a few vertices defining its corners, rather than by millions of points. Obstacles can be modeled as bounding boxes or convex hulls, whose corners are themselves vertices. Because it contains far fewer vertices, this abstracted geometric model is much cheaper for path planning algorithms to process.
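The reduction from many points to a handful of vertices is easy to sketch for the bounding-box case. The example below computes an axis-aligned bounding box, which is one simple choice among several primitives. The sample `cloud` is invented for illustration.

```python
def bounding_box(points):
    """Return the (min corner, max corner) of an axis-aligned bounding box."""
    xs, ys, zs = zip(*points)
    return (min(xs), min(ys), min(zs)), (max(xs), max(ys), max(zs))


def box_corners(lo, hi):
    """Expand the two extreme corners into the box's eight vertices."""
    return [
        (x, y, z)
        for x in (lo[0], hi[0])
        for y in (lo[1], hi[1])
        for z in (lo[2], hi[2])
    ]


# A tiny stand-in for the points a sensor returned from one obstacle.
cloud = [(1.0, 2.0, 0.5), (3.5, 2.2, 4.0), (2.0, 6.0, 1.0), (1.5, 3.0, 2.5)]
lo, hi = bounding_box(cloud)
corners = box_corners(lo, hi)
print(lo, hi, len(corners))
```

However many points the obstacle produced, the planner only has to reason about eight vertices, or just the two extreme corners.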

Collision Detection and Path Planning

The ability to avoid obstacles is paramount for drone safety and mission success. Collision detection algorithms constantly compare the drone’s planned trajectory with the geometric representation of known obstacles. This involves checking for intersections between the drone’s own bounding geometry (also defined by vertices) and the vertices and faces of objects in the environment. If a collision is predicted, the drone’s flight control system computes an alternative path. This path planning often involves building a graph of navigable space, where “nodes” are safe points (often derived from clusters of free-space vertices) and edges represent traversable paths, allowing the drone to find an optimal, collision-free route.
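Both ideas in this paragraph can be sketched in a few lines, under deliberately simple assumptions: obstacles and the drone are axis-aligned boxes given as (min corner, max corner) pairs, and navigable space is a small 2D grid of free cells. Breadth-first search stands in for the planner here. Practical systems often use weighted searches such as A* or sampling-based planners instead.

```python
from collections import deque


def boxes_overlap(a, b):
    """True if two axis-aligned boxes, each a (lo, hi) corner pair, intersect."""
    (alo, ahi), (blo, bhi) = a, b
    return all(alo[i] <= bhi[i] and blo[i] <= ahi[i] for i in range(3))


def shortest_path(free_cells, start, goal):
    """Breadth-first search over 4-connected free grid cells."""
    parents = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:  # walk back through the parent links
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        x, y = cell
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            if nxt in free_cells and nxt not in parents:
                parents[nxt] = cell
                queue.append(nxt)
    return None  # no collision-free route exists


drone_box = ((0, 0, 0), (1, 1, 1))
tree_box = ((0.5, 0.5, 0), (2, 2, 3))
print(boxes_overlap(drone_box, tree_box))  # the boxes intersect

# 3 x 3 grid with an obstacle occupying cells (1, 0) and (1, 1).
blocked = {(1, 0), (1, 1)}
free_cells = {(x, y) for x in range(3) for y in range(3)} - blocked
path = shortest_path(free_cells, (0, 0), (2, 0))
print(path)
```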

The Role of Vertices in Advanced AI and Remote Sensing

The intersection of drone technology, artificial intelligence, and remote sensing further elevates the significance of vertices. AI models leverage vertex data to “understand” the environment in a more sophisticated way.

Machine Learning and Object Recognition

For drones to perform tasks like inspecting power lines for damage or monitoring specific animal populations, they need to recognize and classify objects in their environment. While 2D image processing is effective, 3D data, derived from drone-captured vertices, provides richer information, including size, shape, and orientation. AI models, particularly deep learning networks trained on 3D point clouds or mesh data, can accurately segment and classify objects. For example, a model might distinguish between trees, buildings, and vehicles by analyzing the distinct clusters and patterns of vertices associated with each object.

Semantic Segmentation of 3D Models

Semantic segmentation takes object recognition a step further by assigning a specific label to every single vertex in a 3D model. Imagine a 3D model of a city: semantic segmentation could label all vertices belonging to “roof,” “wall,” “window,” “road,” or “vegetation.” This level of detail transforms a raw geometric model into an “intelligent” model, enabling precise measurements, attribute extraction, and highly targeted analyses for urban planning, infrastructure management, and environmental assessment.
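In data terms, a semantically segmented model is simply geometry plus one label per vertex. The sketch below stores the two as parallel lists. The coordinates, labels, and the `vertices_with_label` helper are hypothetical, and the labels are written by hand where a real pipeline would take them from a trained model.

```python
from collections import Counter

# Parallel lists: labels[i] is the semantic class of vertices[i].
vertices = [
    (0.0, 0.0, 0.0),
    (4.0, 0.0, 0.0),
    (1.0, 2.0, 3.0),
    (1.0, 2.0, 6.0),
    (2.0, 2.0, 6.5),
    (6.0, 5.0, 2.0),
]
labels = ["road", "road", "wall", "roof", "roof", "vegetation"]


def vertices_with_label(vertices, labels, wanted):
    """Return every vertex whose label matches `wanted`."""
    return [v for v, label in zip(vertices, labels) if label == wanted]


roof_vertices = vertices_with_label(vertices, labels, "roof")
class_counts = Counter(labels)

# A targeted measurement that raw geometry alone could not provide.
max_roof_height = max(z for _, _, z in roof_vertices)
print(class_counts, max_roof_height)
```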

Change Detection and Environmental Dynamics

By capturing 3D models (and their underlying vertex data) of an area at different points in time, drones enable powerful change detection capabilities. Comparing successive point clouds allows for the precise identification and quantification of changes—whether it’s the growth of a construction project, the erosion of a coastline, the deforestation of an area, or the shift in a landslide. Algorithms can analyze vertex density, height, or position changes to highlight areas of significant alteration, providing invaluable data for monitoring and decision-making.
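One simple way to compare two surveys is sketched below. It bins each point cloud into horizontal grid cells, keeps the highest point per cell, and reports cells whose height changed by more than a threshold. The cell size, threshold, and sample points are illustrative assumptions, and the two clouds are assumed to be already aligned in the same coordinate system.

```python
def height_grid(points, cell_size=1.0):
    """Map each occupied (column, row) cell to its highest z value."""
    grid = {}
    for x, y, z in points:
        key = (int(x // cell_size), int(y // cell_size))
        grid[key] = max(z, grid.get(key, float("-inf")))
    return grid


def detect_changes(before, after, cell_size=1.0, threshold=0.5):
    """Return {cell: height difference} for cells that changed noticeably."""
    g0 = height_grid(before, cell_size)
    g1 = height_grid(after, cell_size)
    return {
        cell: g1[cell] - g0[cell]
        for cell in g0.keys() & g1.keys()  # only cells seen in both surveys
        if abs(g1[cell] - g0[cell]) > threshold
    }


survey_1 = [(0.2, 0.3, 1.0), (1.5, 0.5, 1.0), (0.7, 1.2, 2.0)]
survey_2 = [(0.3, 0.2, 1.1), (1.4, 0.6, 3.0), (0.6, 1.3, 2.0)]
changes = detect_changes(survey_1, survey_2)
print(changes)  # only cell (1, 0) rose by more than the threshold
```

The threshold matters in practice: it should sit above the combined measurement noise of the two surveys, otherwise ordinary sensor error is reported as change.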

Virtual Reality (VR) and Augmented Reality (AR) Integration

Drone-captured 3D models, rich in vertex detail, are becoming crucial assets for immersive technologies. These models can be directly integrated into VR platforms for remote site visualization, virtual tours, or planning simulations. In AR applications, the precise geometry defined by vertices allows digital information to be accurately overlaid onto the real world, enhancing on-site inspection, maintenance, and training experiences.

The Evolution of Vertex Capture and Processing

The continued innovation in drone technology is inextricably linked to advancements in how vertices are captured, processed, and utilized.

Sensor Technology Enhancements

The resolution of drone-mounted cameras, the ranging accuracy and point density of LiDAR sensors, and the computational power of on-board processors have all improved substantially. The result is ever denser and more accurate point clouds, meaning more precise vertices are available to define the intricacies of the real world. This enhanced fidelity allows for the detection of smaller features and subtler changes, pushing the boundaries of what drone-based remote sensing can achieve.

Advanced Processing Algorithms

Software innovation in photogrammetry, SLAM, and machine learning for point cloud analysis continues to refine the transformation of raw sensor data into meaningful geometric information. Algorithms are becoming more adept at filtering noise, fusing data from multiple sensor types, and extracting semantic information directly from vertex attributes. This translates to faster model generation, cleaner data, and more intelligent interpretation of the environment.

Real-time Vertex Processing

The trend towards on-board, real-time processing of vertex data is a significant leap. Instead of sending all raw data to a ground station for processing, advanced drones are now equipped to perform complex calculations in flight. This enables low-latency decision-making for autonomous navigation, dynamic obstacle avoidance in complex environments, and rapid delivery of analytical insights directly in the field.

The future promises even more sophisticated capabilities. We can anticipate drones generating not just dense point clouds, but intelligent 3D models with semantic labels attached to every vertex, all in real time. This continuous evolution will further cement vertices as the indispensable geometric primitives underpinning the next generation of autonomous and intelligent drone applications.