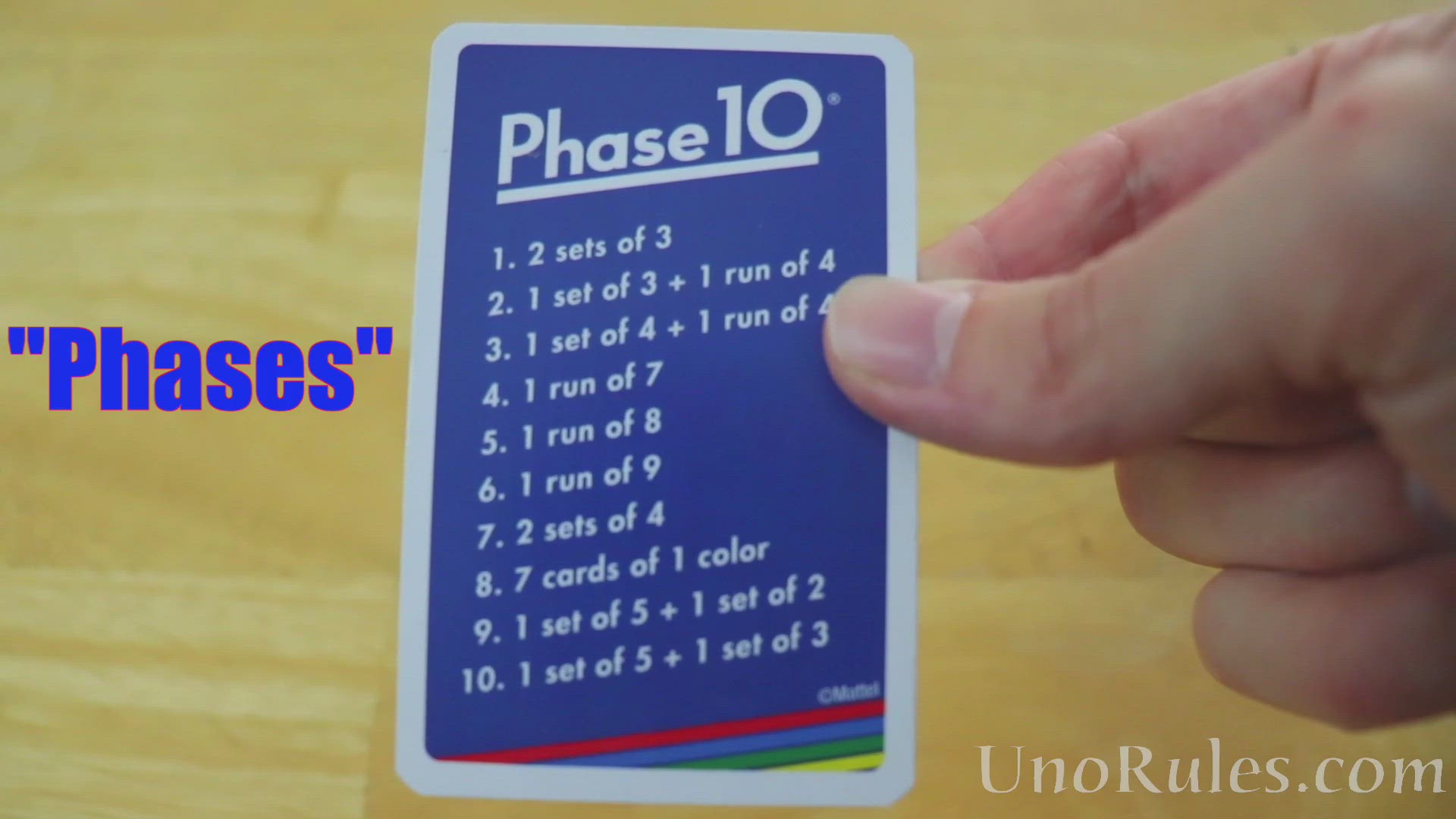

The evolution of drone technology, particularly in autonomy, intelligence, and integration, can be conceptualized as a progression through distinct developmental phases. From rudimentary remote control to sophisticated, self-governing systems, each phase represents a significant step in capability. This journey, from basic flight to what we might term “Phase 10” (a fully integrated, cognitive aerial robotic system), highlights the continuous advances in AI, autonomous flight, mapping, remote sensing, and related capabilities. Understanding these phases provides insight into the current state of drone technology and offers a glimpse into its future.

The Foundation: From Manual Flight to Autonomous Beginnings (Phases 1-3)

The initial stages of drone technology established the fundamental principles of flight control and laid the groundwork for future autonomy. These phases were characterized by increasing stability and the introduction of rudimentary programmed flight.

Phase 1: Manual Control and Basic Stability

At its genesis, drone operation was a purely manual endeavor. Pilots commanded every axis of movement – thrust, roll, pitch, and yaw – requiring significant skill and concentration. The core innovation here was the development of stable multi-rotor platforms capable of sustained, controlled flight. Early breakthroughs in gyroscope and accelerometer technology, coupled with electronic speed controllers (ESCs) and efficient motors, enabled these aerial vehicles to overcome inherent aerodynamic instabilities. The primary focus of this phase was achieving reliable, stable hover and controlled translation. Data acquisition, if any, was entirely at the discretion of the human operator, often limited to static photographs or shaky video footage captured by basic onboard cameras. The “innovation” here was making flight accessible beyond traditional RC aircraft, paving the way for eventual automation.
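To make the stabilization idea concrete, here is a minimal sketch of a single-axis attitude loop. It assumes a proportional-derivative (PD) controller acting on a unit-inertia roll axis; the class name, gains, and toy simulation are illustrative assumptions, not the firmware of any particular flight controller.

```python
from dataclasses import dataclass


@dataclass
class PDController:
    """Proportional-derivative controller for one attitude axis (illustrative)."""
    kp: float  # gain on the angle error (angle comes from the attitude estimate)
    kd: float  # gain on the angular rate (as a gyroscope would report it)

    def torque(self, angle_rad: float, rate_rad_s: float, target_rad: float = 0.0) -> float:
        # Push back toward the target angle and damp the rotation rate.
        return self.kp * (target_rad - angle_rad) - self.kd * rate_rad_s


def simulate_roll(controller: PDController, angle0: float = 0.3,
                  seconds: float = 10.0, dt: float = 0.01) -> float:
    """Integrate a unit-inertia roll axis under the controller; return the final angle."""
    angle, rate = angle0, 0.0
    for _ in range(int(seconds / dt)):
        rate += controller.torque(angle, rate) * dt  # angular acceleration = torque / 1
        angle += rate * dt
    return angle


# Start tilted by 0.3 rad and let the loop level the vehicle.
final_angle = simulate_roll(PDController(kp=4.0, kd=4.0))
print(f"roll after 10 s: {final_angle:.6f} rad")
```

Real flight controllers typically add an integral term, run nested rate and angle loops, and mix the output across several motors through the ESCs; the sketch shows only the core feedback idea.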

Phase 2: Programmed Waypoints and Basic Navigation

The introduction of GPS marked a pivotal moment, ushering in the era of programmed flight. Drones in Phase 2 could execute pre-defined flight paths by navigating between a series of waypoints. This capability freed the pilot from continuous manual input for routine maneuvers, allowing for repetitive and more precise flight patterns. Flight planning software, though basic, allowed users to plot routes on a map, which the drone would then follow autonomously. This significantly enhanced the drone’s utility for applications requiring systematic coverage, such as initial mapping efforts or basic aerial surveying. While still dependent on human input for mission setup and monitoring, the drone’s ability to autonomously maintain a trajectory and altitude represented a foundational step toward true autonomous flight. Basic remote sensing began to emerge, with drones capturing images along a pre-set grid for later stitching into orthomosaics.
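Waypoint following rests on two small calculations: how far away the next waypoint is, and in which direction. The sketch below uses the haversine formula and the standard initial-bearing formula on a spherical Earth. The function names and the 3 m acceptance radius are assumptions chosen for illustration.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius, spherical approximation


def distance_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two lat/lon points (haversine)."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def bearing_deg(lat1, lon1, lat2, lon2):
    """Initial compass bearing (0 = north, 90 = east) from point 1 to point 2."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dlmb = math.radians(lon2 - lon1)
    y = math.sin(dlmb) * math.cos(p2)
    x = math.cos(p1) * math.sin(p2) - math.sin(p1) * math.cos(p2) * math.cos(dlmb)
    return math.degrees(math.atan2(y, x)) % 360.0


def next_waypoint_index(position, waypoints, index, accept_radius_m=3.0):
    """Advance to the next waypoint once the current one is within the radius."""
    lat, lon = position
    if index < len(waypoints) and distance_m(lat, lon, *waypoints[index]) <= accept_radius_m:
        return index + 1
    return index


mission = [(47.0000, 8.0000), (47.0010, 8.0000), (47.0010, 8.0010)]
print(distance_m(*mission[0], *mission[1]), bearing_deg(*mission[0], *mission[1]))
```

A mission loop would call `next_waypoint_index` on every GPS fix and steer toward `bearing_deg` of the current target until the index runs past the end of the list.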

Phase 3: Environmental Sensing and Early Obstacle Detection

Phase 3 witnessed the integration of basic environmental awareness. Drones began incorporating rudimentary proximity sensors, such as ultrasonic or single-point infrared sensors, to detect nearby obstacles. This allowed for basic reactive avoidance, where the drone would either stop or attempt to re-route slightly upon detecting an object within a certain range. Optical flow sensors, particularly for indoor flight or low-altitude operations, provided enhanced positional stability without GPS. This phase marked the initial foray into sensor fusion, combining GPS for global positioning with local sensors for immediate environment perception. While the avoidance capabilities were often limited to large, well-defined obstacles and reactive rather than predictive, it was a critical step in making drones safer and more robust, reducing the cognitive load on the pilot and hinting at more sophisticated autonomous capabilities for tasks like inspecting structures or navigating confined spaces.
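Reactive avoidance of this kind can be sketched as a simple speed limiter driven by one forward range reading. The thresholds and function name below are assumptions for illustration; a real system would also filter noisy readings and handle sensor dropouts.

```python
def limit_speed(range_m: float, cruise_m_s: float = 5.0,
                stop_dist_m: float = 1.0, slow_dist_m: float = 4.0) -> float:
    """Return the allowed forward speed for a single forward range reading.

    Purely reactive: full speed when the path is clear, a linear ramp-down
    inside the slow zone, and a full stop inside the stop zone.
    """
    if range_m <= stop_dist_m:
        return 0.0
    if range_m >= slow_dist_m:
        return cruise_m_s
    return cruise_m_s * (range_m - stop_dist_m) / (slow_dist_m - stop_dist_m)


for reading in (10.0, 2.5, 0.5):
    print(f"obstacle at {reading} m -> speed {limit_speed(reading)} m/s")
```

Note that nothing here predicts where the obstacle will be next; the drone only reacts to the latest reading, which is the limitation later phases address.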

The Ascent: Integrating Intelligence and Awareness (Phases 4-6)

As sensor technology and processing power advanced, drones began to move beyond simple automation, integrating sophisticated intelligence to perceive, understand, and interact with their environments in more complex ways.

Phase 4: Advanced Obstacle Avoidance and Dynamic Path Planning

The limitations of simple proximity sensors were overcome with the advent of more sophisticated vision systems and advanced ranging technologies. Stereo vision, monocular visual-inertial odometry (VIO), and eventually LiDAR (Light Detection and Ranging) systems provided drones with a much richer understanding of their 3D environment. Drones in Phase 4 could construct real-time 3D maps of their surroundings, enabling predictive obstacle avoidance and dynamic path planning. Instead of merely stopping or reacting to an immediate threat, these drones could actively identify clear routes around multiple obstacles, adjusting their flight path on the fly. This breakthrough enabled operations in far more complex and cluttered environments, opening doors for autonomous inspection of intricate industrial infrastructure, navigating dense forests for environmental research, or performing precise movements within urban canyons. This represented a significant leap in environmental autonomy and safety.
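Dynamic path planning can be illustrated with a small grid planner that is rerun whenever the map changes. The sketch below uses A* search on a 2D occupancy grid with 4-connected moves, which is one common choice and an assumption here; production systems usually plan in 3D and over continuous space.

```python
import heapq


def astar(grid, start, goal):
    """Shortest 4-connected path on an occupancy grid (0 = free, 1 = blocked)."""
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance never overestimates on a 4-connected grid.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]
    came_from = {}
    best_cost = {start: 0}
    while open_heap:
        _, cost, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = [cell]
            while cell in came_from:
                cell = came_from[cell]
                path.append(cell)
            return path[::-1]
        if cost > best_cost[cell]:
            continue  # stale heap entry
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < best_cost.get(nxt, float("inf")):
                    best_cost[nxt] = new_cost
                    came_from[nxt] = cell
                    heapq.heappush(open_heap, (new_cost + heuristic(nxt), new_cost, nxt))
    return None  # no route exists


grid = [[0] * 4 for _ in range(4)]
first_path = astar(grid, (0, 0), (0, 3))

# The mapping sensors report new obstacles, so the drone replans around them.
grid[0][1] = 1
grid[1][1] = 1
replanned_path = astar(grid, (0, 0), (0, 3))
print(first_path)
print(replanned_path)
```

The key point is the replanning step: the same planner is called again on the updated map, so the route changes as soon as the obstacle is observed rather than when the drone is about to hit it.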

Phase 5: AI-Powered Object Tracking and Follow Modes

The integration of artificial intelligence, particularly machine learning and computer vision, transformed how drones perceived and interacted with specific targets. Phase 5 saw the development of robust AI-powered object tracking capabilities. Drones could now autonomously identify and follow a person, vehicle, or other designated object, maintaining optimal distance and framing without constant pilot input. Features like “ActiveTrack” or “Follow Me” became highly reliable, revolutionizing aerial filmmaking and personal drone usage. The on-board AI processed visual data in real-time, differentiating targets from background clutter and predicting their movement to ensure smooth and cinematic tracking. This capability extended beyond entertainment, finding practical applications in surveillance, search and rescue, and monitoring moving assets in industrial settings, showcasing the power of integrated AI for dynamic mission execution.
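The "predicting their movement" part of tracking can be sketched with an alpha-beta filter, a lightweight relative of the Kalman filter. This is an assumption for illustration: the article does not say which estimator commercial follow modes use, and the detector that supplies the measured positions is left out entirely.

```python
class AlphaBetaTracker:
    """Tracks one coordinate of a target (e.g., its x pixel position)."""

    def __init__(self, alpha: float = 0.5, beta: float = 0.1):
        self.alpha = alpha  # how strongly a measurement corrects the position
        self.beta = beta    # how strongly a measurement corrects the velocity
        self.x = 0.0        # estimated position
        self.v = 0.0        # estimated velocity

    def update(self, measured_x: float, dt: float = 1.0) -> float:
        predicted = self.x + self.v * dt
        residual = measured_x - predicted
        self.x = predicted + self.alpha * residual
        self.v += (self.beta / dt) * residual
        return self.x

    def predict(self, dt: float = 1.0) -> float:
        """Where the target is expected to be dt from now."""
        return self.x + self.v * dt


# A target drifting right at 2 pixels per frame, starting at x = 10.
tracker = AlphaBetaTracker()
for frame in range(60):
    tracker.update(10.0 + 2.0 * frame)
print(f"expected x in the next frame: {tracker.predict():.3f}")
```

Steering the gimbal or the airframe toward the predicted position, rather than the last measured one, is what keeps the subject framed when it moves or is briefly occluded.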

Phase 6: Multi-Sensor Fusion for Enhanced Situational Awareness

Phase 6 focused on aggregating and intelligently interpreting data from a diverse array of sensors, moving towards a truly comprehensive understanding of the drone’s operational environment and its mission objectives. Beyond navigation and obstacle avoidance sensors, drones began to integrate specialized payloads such as thermal cameras, multispectral or hyperspectral sensors, and advanced optical zooms. The innovation here lay in the sophisticated algorithms that could fuse data from these disparate sources to create a unified, enriched situational picture. For example, combining visual and thermal imagery could identify anomalies imperceptible to the human eye, crucial for precision agriculture, infrastructure inspection for heat leaks, or pinpointing individuals in search and rescue operations. This multi-sensor fusion capability drastically improved data quality, enabling highly accurate remote sensing, advanced mapping, and sophisticated environmental monitoring, transforming drones into versatile data collection and analysis platforms.
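As a toy example of fusing visual and thermal data, the sketch below flags thermal hot spots only where a visual mask says the pixel belongs to the structure being inspected. The mask, the 10 degree threshold, and the function name are all assumptions; real pipelines must first align the two images geometrically.

```python
from statistics import median


def find_hot_spots(thermal_c, structure_mask, threshold_c=10.0):
    """Return (row, col) cells that are on the structure and unusually hot.

    thermal_c      : 2D list of temperatures in degrees Celsius
    structure_mask : 2D list of booleans from a (hypothetical) visual classifier
    """
    masked = [
        temp
        for temp_row, mask_row in zip(thermal_c, structure_mask)
        for temp, on_structure in zip(temp_row, mask_row)
        if on_structure
    ]
    baseline = median(masked)  # robust estimate of the normal temperature
    return [
        (r, c)
        for r, (temp_row, mask_row) in enumerate(zip(thermal_c, structure_mask))
        for c, (temp, on_structure) in enumerate(zip(temp_row, mask_row))
        if on_structure and temp - baseline > threshold_c
    ]


thermal = [[20, 21, 20],
           [20, 35, 21],
           [40, 20, 20]]
mask = [[True, True, True],
        [True, True, True],
        [False, True, True]]  # bottom-left pixel is background, not structure
hot_spots = find_hot_spots(thermal, mask)
print(hot_spots)
```

The 40 degree reading is ignored because the visual channel says it is background, while the 35 degree cell on the structure is flagged; neither sensor alone would make that distinction.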

The Horizon: Towards Collaborative and Cognitive Autonomy (Phases 7-10)

The final stages of this evolutionary path push drones beyond mere automation, envisioning them as truly intelligent, adaptable, and collaborative entities that can operate within complex, dynamic systems with minimal human intervention.

Phase 7: Real-time Data Analysis and On-board Decision Making

Moving beyond raw data collection, Phase 7 drones gain the ability to perform significant analysis directly on-board in real time, turning data into actionable insights during the flight rather than after it. Leveraging edge computing hardware and embedded AI models, these drones can process complex sensor data (e.g., high-resolution imagery, LiDAR scans) to identify anomalies, classify objects, or detect critical changes as they happen. A drone inspecting a wind turbine can flag a hairline crack immediately, and one monitoring a pipeline can identify a leak in real time. This immediate feedback loop minimizes post-processing delays and enables adaptive mission adjustments: the drone can autonomously decide to re-inspect an area of interest, capture higher-resolution data, or alert human operators with a pre-analyzed summary. The result is greater efficiency and responsiveness in critical applications like industrial inspection, disaster assessment, and security.
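The decision step at the end of such a pipeline can be as simple as a thresholded policy over the model's confidence. The sketch below is a hypothetical example: the `Detection` type, the action names, and the thresholds are assumptions, and the on-board model that produces the detections is not shown.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    CONTINUE = "continue mission"
    REINSPECT = "re-inspect at higher resolution"
    ALERT = "alert operator with summary"


@dataclass
class Detection:
    label: str          # e.g., "hairline crack"
    confidence: float   # 0.0 to 1.0, from a hypothetical on-board model


def decide(detection: Detection, alert_at: float = 0.9, reinspect_at: float = 0.5) -> Action:
    """Map one detection to a mission action using two confidence thresholds."""
    if detection.confidence >= alert_at:
        return Action.ALERT
    if detection.confidence >= reinspect_at:
        return Action.REINSPECT  # uncertain: gather better data before escalating
    return Action.CONTINUE


for det in (Detection("hairline crack", 0.95),
            Detection("possible corrosion", 0.6),
            Detection("shadow", 0.2)):
    print(f"{det.label}: {decide(det).value}")
```

The middle band is the interesting one: instead of discarding or escalating an uncertain finding, the drone spends a little flight time to resolve it.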

Phase 8: Autonomous Mission Adaptation and Learning

Phase 8 introduces a higher level of intelligence: the capacity for drones to learn from their experiences and adapt their missions dynamically. These drones employ advanced machine learning techniques, including reinforcement learning, to self-optimize their flight paths, data capture strategies, and response protocols based on mission objectives, environmental feedback, and past performance. They can autonomously adjust to unforeseen conditions, such as sudden weather changes or newly appeared obstacles, re-planning their routes or modifying their data collection parameters without human intervention. This adaptive capability allows for increasingly complex and long-duration autonomous operations, where the drone continuously refines its understanding of its environment and improves its task execution over time, moving towards truly self-sufficient aerial agents that evolve their operational intelligence.
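As a deliberately small stand-in for the learning described above, the sketch below uses an epsilon-greedy multi-armed bandit, one of the simplest reinforcement learning setups. The scenario is hypothetical: the drone chooses among three capture settings, and each flight returns a noisy quality score whose mean is listed in `mean_rewards`.

```python
import random


def learn_best_setting(mean_rewards, episodes=200, epsilon=0.1, seed=0):
    """Epsilon-greedy bandit: learn which setting yields the best average reward."""
    rng = random.Random(seed)
    n_arms = len(mean_rewards)
    estimates = [0.0] * n_arms  # running average reward per setting
    counts = [0] * n_arms
    for episode in range(episodes):
        if episode < n_arms:
            arm = episode                      # try every setting once first
        elif rng.random() < epsilon:
            arm = rng.randrange(n_arms)        # explore
        else:
            arm = max(range(n_arms), key=lambda a: estimates[a])  # exploit
        # Simulated environment feedback: true mean plus bounded noise.
        reward = mean_rewards[arm] + rng.uniform(-0.05, 0.05)
        counts[arm] += 1
        estimates[arm] += (reward - estimates[arm]) / counts[arm]  # incremental mean
    best = max(range(n_arms), key=lambda a: estimates[a])
    return best, estimates


# Hypothetical mean image-quality scores for three capture altitudes.
best_setting, learned = learn_best_setting([0.2, 0.5, 0.8])
print(best_setting, [round(q, 3) for q in learned])
```

A bandit has no notion of state, so it cannot replan routes; full reinforcement learning adds that, but the loop of acting, observing a reward, and updating an estimate is the same.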

Phase 9: Multi-Drone Coordination and Swarm Intelligence

The individual intelligence of advanced drones converges in Phase 9 with the development of multi-drone coordination and swarm intelligence. Here, multiple autonomous drones operate as a unified, collaborative system, sharing information, communicating seamlessly, and executing complex tasks that would be impossible for a single drone. This involves sophisticated inter-drone communication protocols, distributed AI algorithms for collective decision-making, and synchronized movements. Applications range from rapid, large-area mapping and precision agriculture with optimized coverage, to complex synchronized aerial displays, and coordinated search and rescue operations that can cover vast territories efficiently. The power of the collective, with each drone contributing to a shared objective, dramatically expands the scope and efficiency of aerial robotics, laying the groundwork for truly transformative applications in various industries.
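Collective decision-making without a central controller can be sketched with average consensus: each drone repeatedly nudges its own estimate toward those of its direct neighbors until the whole swarm agrees. The ring topology, step size, and the quantity being agreed on are assumptions for illustration.

```python
def consensus_step(values, neighbors, step=0.25):
    """One round of average consensus; every drone uses only its neighbors' values."""
    return [
        v + step * sum(values[j] - v for j in neighbors[i])
        for i, v in enumerate(values)
    ]


# Four drones in a ring; each can only talk to the two drones next to it.
neighbors = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}

# Each drone starts with its own local estimate (e.g., of wind speed in km/h).
estimates = [10.0, 20.0, 30.0, 40.0]
for _ in range(50):
    estimates = consensus_step(estimates, neighbors)
print([round(e, 6) for e in estimates])
```

Because the links are symmetric, the sum of the estimates is preserved at every round, so the swarm converges on the average of the initial values even though no drone ever sees all of them.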

Phase 10: Fully Integrated, Cognitive, and Self-Governing Systems

Phase 10 represents the envisioned pinnacle of drone innovation: fully integrated, cognitive, and self-governing aerial robotic systems. These drones would operate with minimal to no human oversight, functioning as intelligent agents within broader smart ecosystems. They would possess comprehensive situational awareness, advanced predictive capabilities, and the ability to make complex decisions, including ethically sensitive ones, based on mission parameters and real-time environmental data. Beyond flight and data collection, these drones could self-diagnose, perform minor self-repairs, communicate with other autonomous systems (ground robots, smart infrastructure), and contribute to vast, long-duration missions. Integrating seamlessly into smart cities, IoT networks, and advanced remote sensing platforms, Phase 10 drones are not just tools but active members of intelligent operational frameworks, offering Aerial Robotics-as-a-Service and pushing the boundaries of what is possible with autonomous aerial technology. This phase envisions drones as cognitive partners, realizing the full potential of aerial automation for both society and industry.