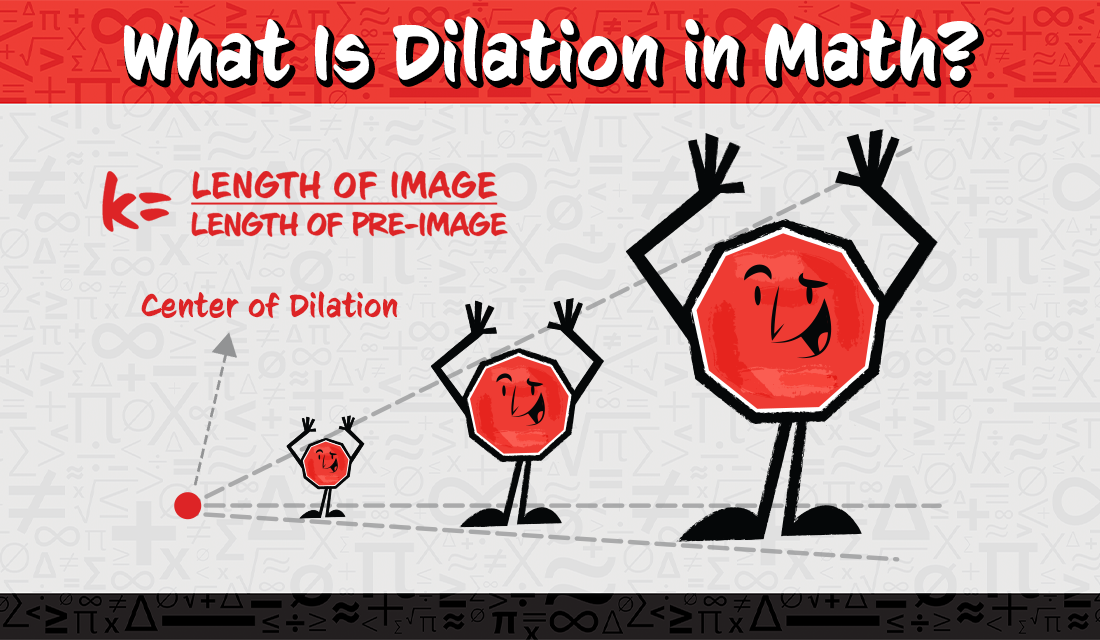

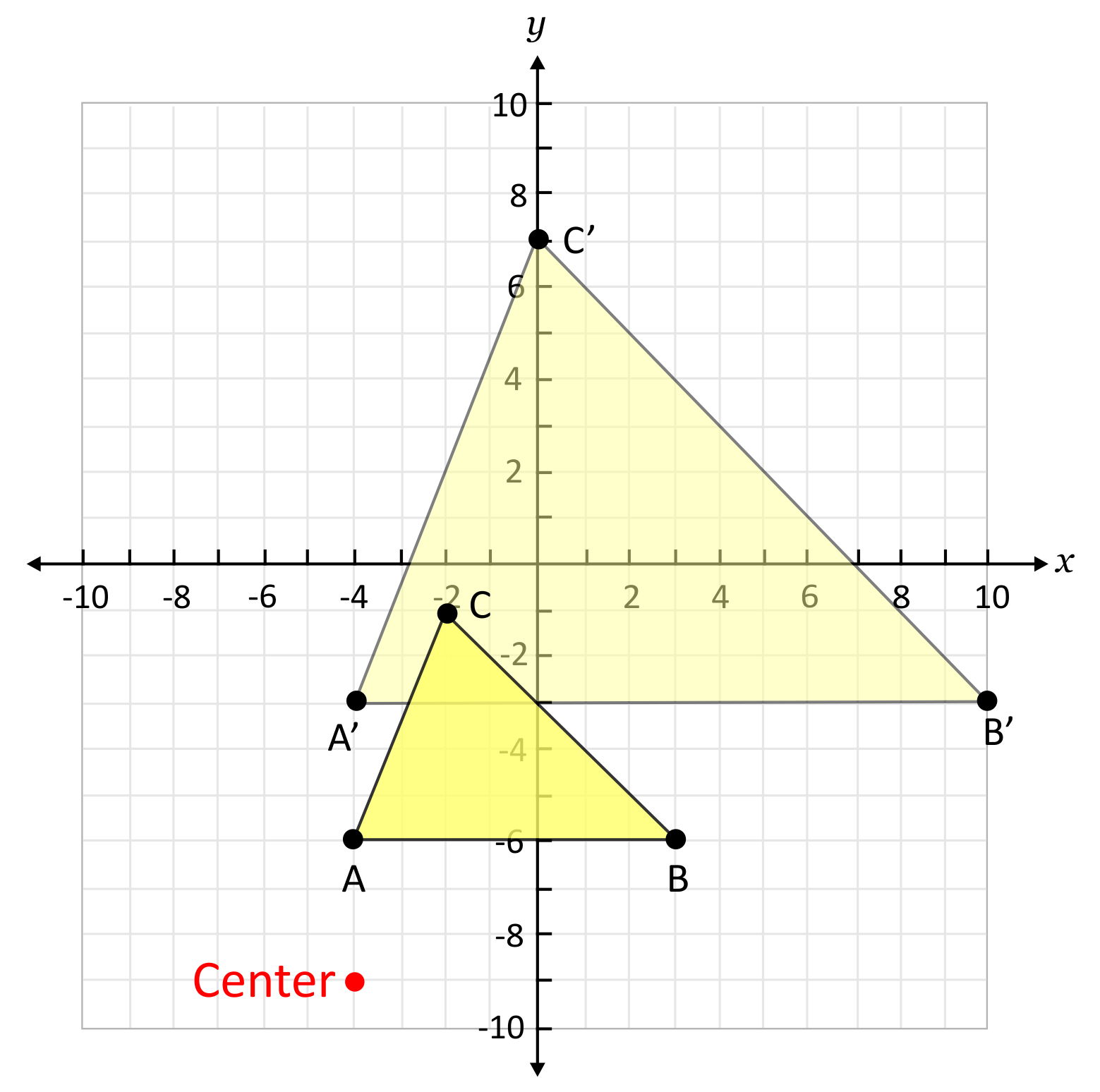

In its purest mathematical form, a dilation is a transformation that changes the size of an object without altering its shape. It is defined by a center point and a scale factor. Every point on the object moves along the line through the center point, and its distance from the center is multiplied by the scale factor. A scale factor greater than one produces an enlargement, a scale factor between zero and one produces a reduction, and a scale factor of exactly one leaves the object unchanged. A negative scale factor additionally rotates the object 180 degrees about the center. While this concept originates in geometry, its practical implications show up across many technologies, particularly in drone innovation, from aerial mapping to AI-driven autonomous flight.
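As a minimal sketch of the definition, a dilation with center C and scale factor k sends each point P to C + k(P - C). The function name `dilate` and the sample triangle are illustrative choices, not part of any drone software.

```python
from typing import Iterable, List, Tuple

Point = Tuple[float, float]


def dilate(points: Iterable[Point], center: Point, k: float) -> List[Point]:
    """Dilate 2D points about `center` by scale factor `k`.

    Each point P maps to C + k * (P - C), so its distance from the
    center is multiplied by |k|.
    """
    cx, cy = center
    return [(cx + k * (x - cx), cy + k * (y - cy)) for x, y in points]


# Hypothetical triangle used only for illustration.
triangle = [(1.0, 1.0), (3.0, 1.0), (1.0, 4.0)]

enlarged = dilate(triangle, center=(0.0, 0.0), k=2.0)   # enlargement
reduced = dilate(triangle, center=(0.0, 0.0), k=0.5)    # reduction
flipped = dilate(triangle, center=(0.0, 0.0), k=-1.0)   # 180-degree rotation
```

With `k=-1.0` the triangle keeps its size but ends up on the opposite side of the center, which is the 180-degree rotation described above.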

The Unseen Foundation: Dilations in Aerial Mapping and Photogrammetry

The seemingly simple mathematical concept of dilation forms a critical, often invisible, backbone for the accuracy and utility of modern drone-based mapping and photogrammetry. Drones equipped with high-resolution cameras capture vast amounts of visual data, which must then be meticulously processed to create precise two-dimensional maps (orthomosaics) and complex three-dimensional models of terrains, structures, and environments.

From Pixels to Geographic Scale: The GSD Connection

At the heart of drone mapping is the Ground Sample Distance (GSD), a direct manifestation of the dilation principle. GSD is the real-world distance between the centers of two adjacent pixels as projected onto the ground. When a drone flies higher, the area covered by each pixel increases, so the GSD value increases. Mathematically, this is a dilation: for a camera pointing straight down over flat terrain with a fixed lens and sensor, the scale factor between the ground and the image is proportional to altitude. A drone flying at 50 meters might achieve a GSD of 1 cm/pixel, meaning each pixel covers a 1 cm by 1 cm square on the ground. If the drone ascends to 100 meters, doubling its distance from the ground, the GSD doubles to 2 cm/pixel. Each pixel’s ground footprint is dilated by a scale factor of two, which is the same as saying that ground features are imaged at a scale factor of one half: objects appear half as large on the sensor, even though their real-world dimensions remain constant.
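The altitude-to-GSD relationship can be sketched with the standard pinhole formula, GSD = (sensor width × altitude) / (focal length × image width). The camera parameters below are hypothetical values chosen so that the result matches the 1 cm/pixel figure at 50 meters; a real camera will have different numbers.

```python
def gsd_cm_per_px(altitude_m: float,
                  sensor_width_mm: float,
                  focal_length_mm: float,
                  image_width_px: int) -> float:
    """Ground sample distance in cm/pixel for a nadir (straight-down) camera.

    Assumes flat terrain and an ideal pinhole camera with no lens distortion.
    """
    gsd_m = (sensor_width_mm * altitude_m) / (focal_length_mm * image_width_px)
    return gsd_m * 100.0  # metres -> centimetres


# Hypothetical camera: 12 mm sensor width, 12 mm lens, 5000 px wide image.
camera = dict(sensor_width_mm=12.0, focal_length_mm=12.0, image_width_px=5000)

gsd_50 = gsd_cm_per_px(50.0, **camera)    # about 1 cm/pixel
gsd_100 = gsd_cm_per_px(100.0, **camera)  # about 2 cm/pixel
scale_factor = gsd_100 / gsd_50           # dilation factor of the pixel footprint
```

Because altitude appears linearly in the formula, doubling the altitude doubles the GSD regardless of which camera parameters are plugged in.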

When drone images are stitched together to form orthomosaics or 3D models, photogrammetry software must account for varying GSDs, lens distortions, and camera angles. Each image needs to be accurately scaled and transformed relative to its neighbors and to a global coordinate system. Dilations are intrinsically involved in these transformations, ensuring that the combined output is a geometrically correct and uniformly scaled representation of the surveyed area, free from discrepancies that would arise from differing flight altitudes or camera perspectives. The process essentially takes numerous “dilated” views of the ground and mathematically “undilates” and realigns them to reconstruct a single, consistent, and precisely scaled model or map.

Accurate Measurement and Spatial Analysis

The ability to perform accurate measurements from drone-generated maps hinges entirely on the correct application and understanding of dilations. Surveyors, construction managers, and agricultural specialists rely on these maps to determine precise distances, calculate areas, and quantify volumes. Without the rigorous application of mathematical dilations during the processing phase, any measurement derived from a drone map would be arbitrary and unreliable.

For instance, in precision agriculture, drones are used to monitor crop health. By analyzing multispectral imagery, farmers can identify areas of stress. The ability to accurately delineate affected zones and calculate their precise area (often in acres or hectares) is vital for targeted intervention. This involves ensuring the orthomosaic is correctly scaled (dilated) to the real world. Similarly, in construction, drones track progress by comparing successive 3D models. Measuring the volume of excavated earth or stockpiled materials requires that the 3D models are perfectly scaled replicas of the physical site, a feat achieved through careful application of dilation principles in the photogrammetric pipeline. Georeferencing, the process of assigning real-world coordinates to image pixels, also inherently involves scaling and translating the image data to fit a specific projection system, which is another form of geometric transformation deeply intertwined with dilations.
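To see why correct scaling matters for measurement, note that a dilation by factor k multiplies lengths by k, areas by k², and volumes by k³, so a small error in scale grows quickly in area and volume estimates. The sketch below converts pixel counts and a height grid into real-world quantities. The function names, the pixel count, and the height values are made up for illustration, and the volume estimate assumes one height sample per pixel measured above a flat base.

```python
from typing import List


def area_m2(pixel_count: int, gsd_cm: float) -> float:
    """Ground area covered by `pixel_count` pixels at the given GSD."""
    gsd_m = gsd_cm / 100.0
    return pixel_count * gsd_m ** 2  # each pixel covers gsd_m x gsd_m


def volume_m3(heights_m: List[List[float]], gsd_cm: float) -> float:
    """Stockpile volume from a grid of heights above a flat base.

    Each grid cell is treated as a column with a footprint of one pixel.
    """
    gsd_m = gsd_cm / 100.0
    return sum(sum(row) for row in heights_m) * gsd_m ** 2


# Hypothetical stressed-crop zone: 2.5 million pixels at 2 cm/pixel.
zone_area = area_m2(2_500_000, gsd_cm=2.0)
zone_hectares = zone_area / 10_000.0

# Hypothetical 2x2 height grid (metres) sampled at 50 cm/pixel.
pile_volume = volume_m3([[1.0, 2.0], [0.5, 0.5]], gsd_cm=50.0)
```

If the GSD used in processing were off by a factor of two, the area would be off by a factor of four, which is why the scale of the orthomosaic has to be established before any measurement is trusted.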

Dilations in AI, Computer Vision, and Autonomous Navigation

Beyond static mapping, the dynamic capabilities of drones, particularly in autonomous flight and AI-driven applications, are profoundly influenced by the mathematical concept of dilations. Modern drone systems leverage sophisticated computer vision and machine learning algorithms that must constantly interpret their environment, identify objects, and navigate safely, often despite significant variations in object size due to distance or perspective.

Object Recognition and Scale Invariance

One of the most challenging aspects of computer vision for autonomous drones is object recognition. Whether it’s identifying power lines for inspection, recognizing specific wildlife species for conservation, or detecting people in search-and-rescue operations, the drone’s AI needs to classify objects accurately regardless of how large or small they appear in the camera’s field of view. An object far away will appear smaller (a reduced dilation), while the same object up close will appear larger (an enlarged dilation). Deep learning models, especially Convolutional Neural Networks (CNNs), are not scale-invariant by construction, so they are trained to approximate “scale invariance”: the ability to recognize an object across a range of sizes.

This scale invariance is a computational answer to the problem posed by dilations. Training datasets for these AI models often include numerous images of the same object at different scales and perspectives. The network learns to extract features that are robust to these changes in size, effectively learning to “undilate” or normalize the perceived object size internally. Without this capability, a drone’s AI could fail to recognize a critical target that appears at an unexpected size, severely limiting its utility in dynamic environments.
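One common way to expose a model to the same object at several scales is scale augmentation: generating resized copies of each training image. The sketch below does this with a simple nearest-neighbour resize on a nested-list image. It is an illustrative stand-in, not the method of any particular training framework, and the 2×2 image and scale factors are arbitrary.

```python
from typing import List

Image = List[List[int]]


def rescale_nearest(image: Image, k: float) -> Image:
    """Resize a 2D image by scale factor `k` using nearest-neighbour sampling."""
    h, w = len(image), len(image[0])
    new_h, new_w = max(1, round(h * k)), max(1, round(w * k))
    return [
        # Map each output pixel back to its source pixel in the original.
        [image[min(h - 1, int(r / k))][min(w - 1, int(c / k))]
         for c in range(new_w)]
        for r in range(new_h)
    ]


def scale_augment(image: Image, factors=(0.5, 1.0, 2.0)) -> List[Image]:
    """Return copies of `image` dilated by each factor in `factors`."""
    return [rescale_nearest(image, k) for k in factors]


tiny = [[1, 2],
        [3, 4]]
doubled = rescale_nearest(tiny, 2.0)       # 4x4 enlargement
restored = rescale_nearest(doubled, 0.5)   # back to 2x2
variants = scale_augment(doubled)          # 2x2, 4x4 and 8x8 versions
```

Production pipelines typically use interpolating resizes from an image library rather than nearest-neighbour sampling, but the idea of feeding the network several dilations of the same content is the same.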

Obstacle Avoidance and Environmental Perception

For safe autonomous flight, drones must perceive their environment and identify potential obstacles. Lidar sensors, stereo cameras, and monocular vision systems all generate data where objects are represented with varying scales (dilations) depending on their distance from the drone. An algorithm processing this data must accurately interpret the size and proximity of these “dilated” objects to make informed decisions about flight paths.

For instance, a tree branch close to the drone might fill a significant portion of the image (large dilation), while a distant building might appear as a tiny speck (small dilation). The drone’s perception system uses depth estimation and object sizing algorithms that implicitly account for these dilations to construct an accurate 3D model of its surroundings. Under a simple pinhole camera model, the perceived size of an object is inversely proportional to its distance: doubling the distance halves its apparent width and height. (The inverse square law describes light intensity and the object’s apparent area, not its linear size.) Using this relationship, the drone can estimate actual object sizes and distances, which is crucial for calculating collision probabilities and planning avoidance maneuvers. Feature scaling, where input data is normalized to a common numeric range, is a related machine learning technique: it rescales values rather than geometry, but it likewise helps algorithms generalize across inputs of differing magnitude.
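The size-distance relationship can be sketched with the pinhole camera model, in which an object of real height H at distance Z spans roughly f × H / Z pixels, where f is the focal length expressed in pixels. The focal length and the 1.8 m object height below are assumed values for illustration, and the estimate only works when the object's true size is known in advance.

```python
def distance_from_apparent_size(real_height_m: float,
                                pixel_height: float,
                                focal_length_px: float) -> float:
    """Estimate distance to an object of known size (pinhole model).

    pixel_height = focal_length_px * real_height_m / distance, solved for distance.
    """
    if pixel_height <= 0:
        raise ValueError("pixel_height must be positive")
    return focal_length_px * real_height_m / pixel_height


# Hypothetical camera with a 1000 px focal length observing a 1.8 m tall person.
near = distance_from_apparent_size(1.8, pixel_height=90.0, focal_length_px=1000.0)
far = distance_from_apparent_size(1.8, pixel_height=45.0, focal_length_px=1000.0)
```

Halving the apparent height from 90 to 45 pixels doubles the estimated distance from 20 m to 40 m, which is the inverse proportionality at work.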

AI Follow Mode and Relative Scaling

Features like “AI Follow Mode” in consumer and professional drones brilliantly demonstrate the real-time application of dilation principles. When a drone is tasked to follow a subject, say a cyclist or a car, it continuously monitors the subject’s size (its perceived dilation) within the camera frame. If the subject appears too small, the drone might interpret this as being too far away and close the distance, effectively enlarging the subject’s perceived dilation. Conversely, if the subject appears too large, the drone might back away.

The AI continuously adjusts the drone’s position, altitude, and even camera zoom to maintain an optimal and consistent framing of the subject. This dynamic adjustment is essentially a sophisticated feedback loop that manages the relative dilation of the subject in the camera’s view, ensuring cinematic shots or effective tracking even as the subject moves unpredictably. The drone’s internal models understand that the actual size of the subject is constant, and any change in its perceived size (dilation) is due to a change in relative distance, triggering a compensatory maneuver.
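The feedback loop described above can be sketched as a simple proportional controller on the subject's bounding-box height. This is an assumption about how such a mode might work, not the algorithm of any specific drone. The function name, gain, and speed limit are hypothetical.

```python
def follow_speed_command(bbox_height_px: float,
                         target_height_px: float,
                         gain: float = 2.0,
                         max_speed_mps: float = 5.0) -> float:
    """Forward speed command from the subject's apparent size.

    Positive values move toward the subject (it looks too small),
    negative values back away (it looks too large).
    """
    if bbox_height_px <= 0:
        return 0.0  # subject lost: hold position in this sketch
    # Relative size error: 0 when the subject is framed as desired.
    error = (target_height_px - bbox_height_px) / target_height_px
    speed = gain * error
    # Clamp to the allowed speed range.
    return max(-max_speed_mps, min(max_speed_mps, speed))


too_small = follow_speed_command(100.0, target_height_px=200.0)  # approach
too_large = follow_speed_command(300.0, target_height_px=200.0)  # retreat
on_target = follow_speed_command(200.0, target_height_px=200.0)  # hold
```

A real tracker would also smooth the measurements and control heading and altitude, but the core idea is the same: drive the perceived dilation of the subject back to a target value.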

Future Innovations: Adaptive Dilations and Dynamic Scaling

As drone technology continues to evolve, a deeper and more explicit integration of mathematical dilation concepts promises to unlock even more sophisticated capabilities. Future innovations will likely leverage adaptive dilations and dynamic scaling to optimize data collection, enhance situational awareness, and push the boundaries of autonomous operation.

Dynamic Resolution and Data Compression

Imagine a drone that can intelligently adjust its data capture resolution based on the immediate needs of a mission. By understanding the concept of effective GSD (a dilation factor), future drones could dynamically vary their altitude or sensor parameters to achieve higher resolution (smaller GSD, effectively “undilating” the ground view) in areas of high interest, while maintaining lower resolution (larger GSD, “dilating” the ground view) in less critical zones. This adaptive sampling strategy could dramatically optimize data storage, processing power, and transmission bandwidth, allowing for longer flight times and more efficient missions. Furthermore, advanced compression algorithms will increasingly leverage mathematical insights into scaling to discard redundant information in images or point clouds, effectively “dilating” the data representation for storage while maintaining the ability to “undilate” it for full detail when needed.
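One way to picture adaptive sampling is to invert the GSD formula and ask which altitude yields a desired GSD for each zone. The sketch below assumes a nadir pinhole camera over flat terrain. The zone names, target GSD values, and camera parameters are invented for illustration.

```python
from typing import Dict


def altitude_for_gsd(target_gsd_cm: float,
                     sensor_width_mm: float,
                     focal_length_mm: float,
                     image_width_px: int) -> float:
    """Altitude in metres that produces `target_gsd_cm` for a nadir camera."""
    gsd_m = target_gsd_cm / 100.0
    return gsd_m * focal_length_mm * image_width_px / sensor_width_mm


# Hypothetical camera and mission zones with desired GSD in cm/pixel.
camera = dict(sensor_width_mm=12.0, focal_length_mm=12.0, image_width_px=5000)
zones: Dict[str, float] = {"damaged_roof": 0.5, "parking_lot": 2.0}

# Fly low over the high-interest zone and high over the low-interest one.
flight_plan = {name: altitude_for_gsd(gsd, **camera) for name, gsd in zones.items()}
```

A practical planner would also have to respect airspace limits, obstacle clearance, and image overlap requirements, which this sketch ignores.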

Enhanced Situational Awareness and Augmented Reality

The integration of augmented reality (AR) overlays onto live drone feeds is another frontier where understanding dilations will be paramount. Imagine a drone transmitting a live feed where virtual markers or information panels are precisely overlaid onto real-world objects seen by the drone’s camera. For these AR elements to appear correctly scaled and positioned relative to the real environment, the system must continuously calculate the appropriate dilation factor. As the drone changes position, the virtual overlays must also “dilate” or “contract” in real-time to maintain their proportional accuracy, offering truly immersive and informative situational awareness for pilots and ground crews. Predictive modeling, which anticipates how the perceived size (dilation) of objects will change as the drone maneuvers, could further enhance path planning and decision-making, allowing drones to simulate future visual scenarios and optimize their flight strategies for various tasks.
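The overlay scaling can be sketched with a pinhole projection: a marker attached to a point at depth Z in the camera frame is drawn at a pixel position and size that both shrink in proportion to 1/Z. The function name, camera intrinsics, and marker size below are hypothetical, and lens distortion is ignored.

```python
from typing import Tuple


def project_overlay(point_cam: Tuple[float, float, float],
                    real_size_m: float,
                    focal_px: float,
                    principal_point: Tuple[float, float]) -> Tuple[float, float, float]:
    """Project a 3D anchor point (camera frame, metres) to pixels.

    Returns (u, v, size_px): the pixel position of the marker and the
    on-screen size that keeps it proportional to the real object.
    """
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point must be in front of the camera")
    cx, cy = principal_point
    u = cx + focal_px * x / z
    v = cy + focal_px * y / z
    size_px = focal_px * real_size_m / z  # the per-frame dilation factor is focal_px / z
    return u, v, size_px


# Hypothetical 1920x1080 feed, 1000 px focal length, 2 m wide marker.
near = project_overlay((1.0, 0.5, 10.0), 2.0, 1000.0, (960.0, 540.0))
far = project_overlay((1.0, 0.5, 20.0), 2.0, 1000.0, (960.0, 540.0))
```

As the drone backs away from 10 m to 20 m, the marker shrinks from 200 to 100 pixels and slides toward the image center, which is the real-time "contraction" the overlay needs to stay aligned with the scene.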

In conclusion, while “dilations in math” might sound like an abstract concept confined to geometry textbooks, its principles are foundational to the complex and rapidly evolving world of drone technology and innovation. From enabling accurate aerial maps and 3D models to empowering AI for robust object recognition, autonomous navigation, and dynamic subject tracking, the silent power of scaling transformations underpins many of the most impressive feats achieved by modern UAVs. As drones become more intelligent and autonomous, the explicit and implicit application of mathematical dilations will continue to drive advancements, shaping the future of aerial robotics.