In drone-based inspection, mapping, and surveillance, the environment a UAV operates in poses many challenges that directly affect the performance of its onboard imaging systems. Although drones are often associated with vast outdoor landscapes, they are increasingly deployed for intricate interior missions, from industrial facility inspections to confined-space analysis. In such environments, a seemingly trivial property of interior surfaces, much like the “paint sheen” one might choose for a domestic “bathroom,” can strongly affect data quality across a range of camera technologies. Understanding how different surface finishes interact with visible light and other parts of the electromagnetic spectrum is essential for optimizing the visual, thermal, and depth imaging that drones perform in enclosed, often reflective, settings.

Understanding Surface Reflectivity in Enclosed Environments for Drone Imaging

“Paint sheen” describes how glossy, or specularly reflective, a painted surface is. It is largely determined by the ratio of pigment to binder and by the size of the pigment particles, which together set how rough the dried surface is at a microscopic scale and therefore how much light is scattered diffusely rather than reflected in a mirror-like direction. In an interior drone mission, whether inspecting the tight quarters of a ventilation shaft, the machinery of a power plant, or a sealed experimental chamber (a “bathroom” only in the sense of being confined and potentially challenging), these surface properties become critical variables.

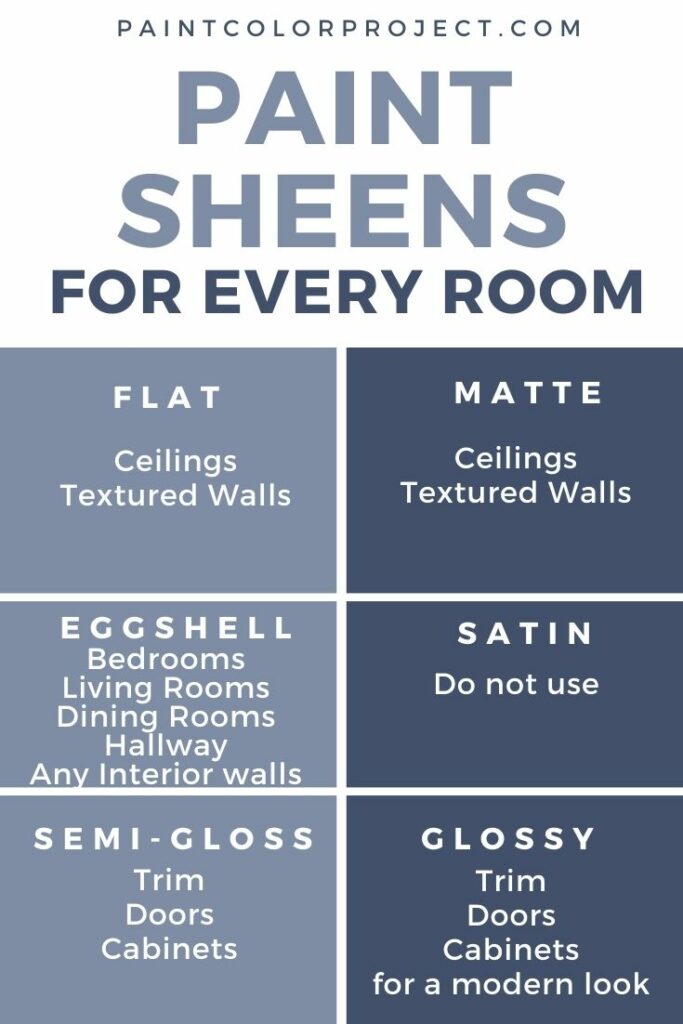

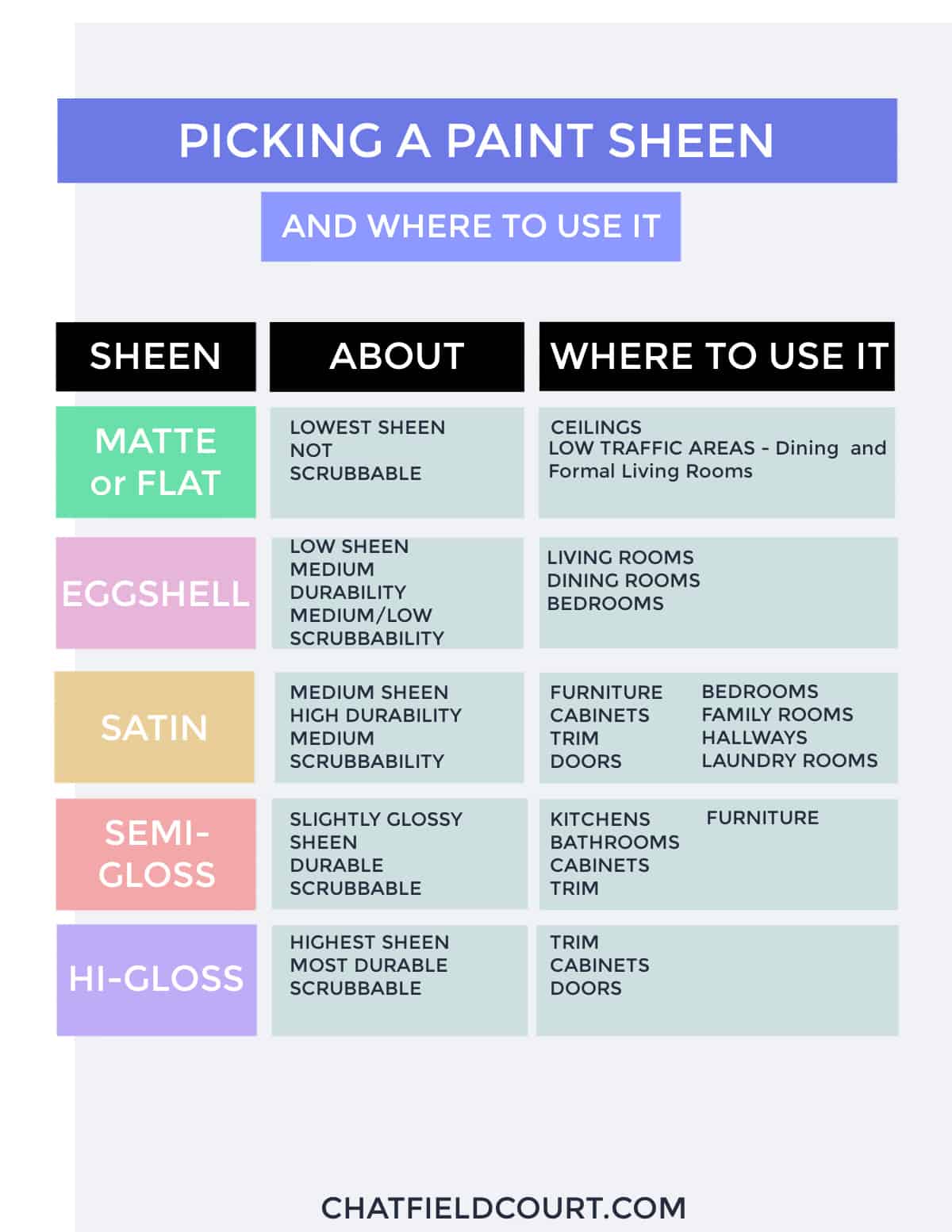

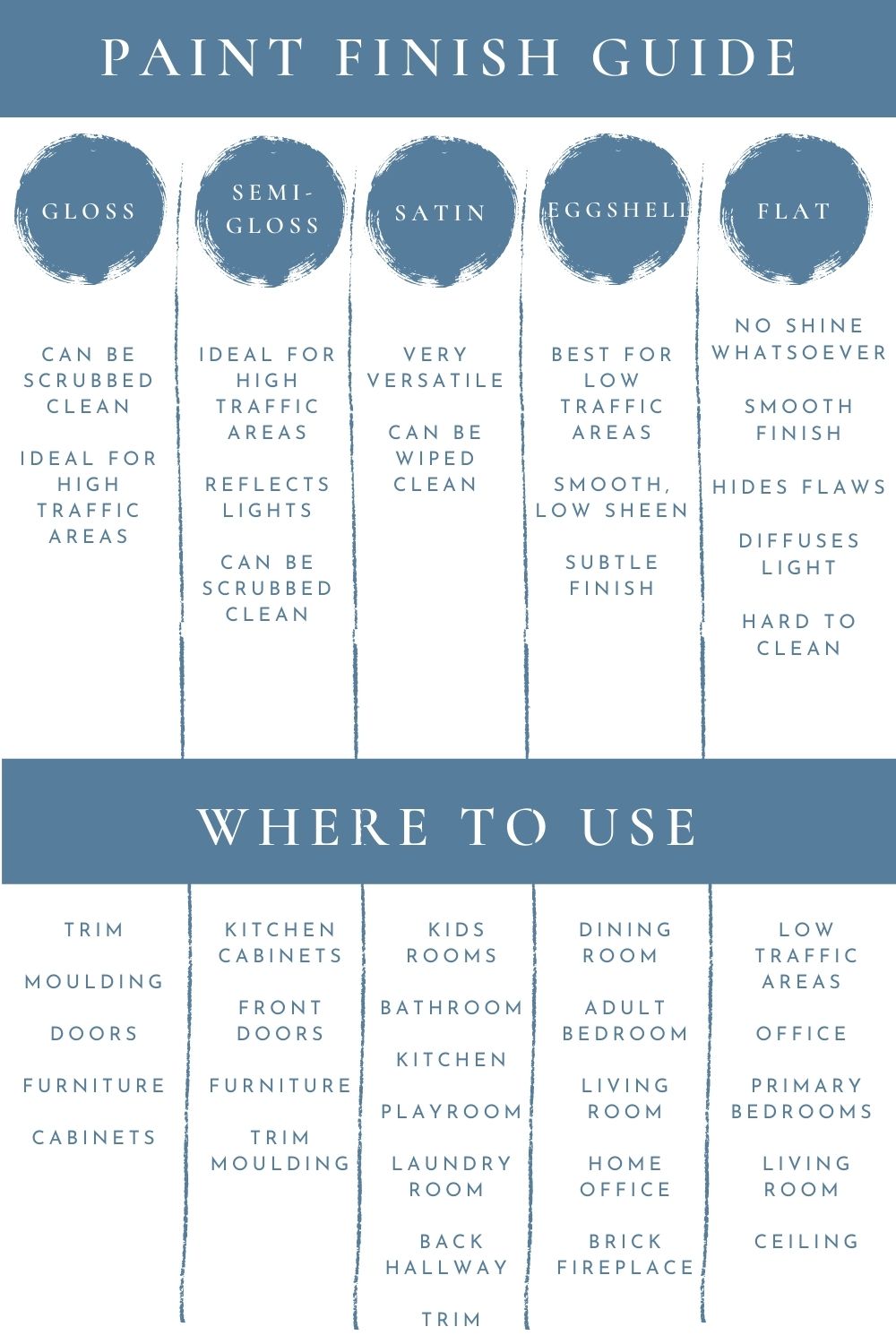

The Spectrum of Sheen: From Matte to Gloss

- Matte (Flat) Finishes: These surfaces are microscopically rough, so they scatter the light they reflect diffusely in all directions instead of concentrating it in a mirror direction. They exhibit very little shine and produce little glare. For drone cameras, matte surfaces generally offer the most forgiving photographic conditions, minimizing hotspots and reflections, leading to more uniform illumination and potentially clearer textural details.

- Eggshell/Satin Finishes: Offering a slight sheen, these surfaces present a subtle challenge. They diffuse some light but also reflect a portion directionally. This can introduce mild glare, particularly when lighting is direct or harsh, but generally maintains good color representation and detail.

- Semi-Gloss Finishes: Noticeably shinier, semi-gloss surfaces reflect a significant amount of light directionally. This can lead to pronounced specular highlights and glare, obscuring details and creating blown-out areas in drone footage, especially with high-intensity onboard lighting.

- Gloss/High-Gloss Finishes: These surfaces have a smooth, continuous top layer that sends a large share of the light they reflect into the mirror direction, so from certain viewpoints they behave almost like mirrors. When a drone’s camera encounters high-gloss surfaces, extreme glare, sharp reflections of the drone itself or its lights, and significant loss of surface detail are common. Such surfaces can also create complex light patterns that confuse camera auto-exposure and white balance algorithms.
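Physically, the sheen scale is a statement about how much light leaves the surface in the mirror direction. For the smooth top layer of a glossy paint, that specular part follows the Fresnel equations. The sketch below is a minimal illustration, assuming a clear binder with a refractive index of about 1.5 (a typical value for polymers and glass, not a measured value for any particular paint) and ignoring the light that pigment scatters back out diffusely; the function names are hypothetical.

```python
import math

def fresnel_reflectance(theta_deg, n1=1.0, n2=1.5):
    """Return (R_s, R_p, R_unpolarized) at a smooth boundary from n1 into n2.

    theta_deg is the angle of incidence measured from the surface normal.
    """
    ti = math.radians(theta_deg)
    sin_t = n1 / n2 * math.sin(ti)  # Snell's law
    if sin_t >= 1.0:  # total internal reflection (only possible if n1 > n2)
        return 1.0, 1.0, 1.0
    ci, ct = math.cos(ti), math.cos(math.asin(sin_t))
    rs = ((n1 * ci - n2 * ct) / (n1 * ci + n2 * ct)) ** 2  # s-polarized
    rp = ((n1 * ct - n2 * ci) / (n1 * ct + n2 * ci)) ** 2  # p-polarized
    return rs, rp, (rs + rp) / 2  # unpolarized light: average of the two

def brewster_angle_deg(n1=1.0, n2=1.5):
    """Angle of incidence at which p-polarized light is not reflected."""
    return math.degrees(math.atan2(n2, n1))

for angle in (0, 30, 60, 80):
    rs, rp, r = fresnel_reflectance(angle)
    print(f"{angle:>2} deg: R_s={rs:.3f}  R_p={rp:.3f}  unpolarized={r:.3f}")
print(f"Brewster angle: {brewster_angle_deg():.1f} deg")
```

At normal incidence both components come out at 0.040, so only about 4% of the light is reflected specularly; the rest enters the paint film, where pigment scatters part of it back out diffusely. Glare is a problem not because that share is large but because it is concentrated into one direction, and because it rises steeply toward grazing angles. The near-zero R_p around the Brewster angle (about 56.3° for n = 1.5) is also the reason a polarizer can suppress reflections from painted walls.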

Light Interaction and Image Fidelity

The way a surface’s sheen interacts with the drone’s illumination (ambient light, external light sources, or onboard LEDs) directly influences image fidelity. For RGB and 4K cameras, excessive reflectivity can wash out colors, hide defects, and reduce geometric accuracy, because highlights that shift with the viewpoint can be mistaken for fixed surface features during photogrammetric matching. For thermal cameras, variations in emissivity caused by different sheens can lead to inaccurate temperature readings. For LiDAR and structured light systems, the balance between diffuse scattering and specular reflection significantly affects point cloud density and accuracy. Understanding and anticipating these interactions is therefore a core component of effective interior drone imaging.

Visual Imaging Challenges and Solutions

Drone-mounted visual cameras, from high-resolution 4K sensors to standard RGB imagers, are the primary tools for capturing detailed visual data. However, highly reflective interior surfaces can significantly degrade the quality and utility of this data.

RGB and 4K Capture: Glare, Hotspots, and Color Distortion

The primary adversaries for visual cameras in environments with varied “paint sheens” are glare and hotspots.

- Glare occurs when light reflects directly into the camera lens, causing a bright bloom that can obscure large portions of the image. On semi-gloss or high-gloss surfaces, this is particularly prevalent.

- Hotspots are localized areas of extreme brightness, often leading to overexposure and loss of detail in those specific regions. These are direct consequences of specular reflections.

- Color Distortion and Shift: Reflections can also introduce color casts from ambient light or other reflective surfaces, leading to inaccurate color representation, which is critical for material analysis or defect detection.

Mitigating Reflectance: Camera Settings and Ancillary Tools

To combat these challenges, drone operators can employ several strategies:

- Adjusting Lighting: Utilizing diffuse lighting rather than direct, harsh light can significantly reduce glare. If the drone uses onboard LEDs, experimentation with their angle and intensity is crucial. In some scenarios, external, strategically placed softboxes or diffusers might be necessary.

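- Polarization Filters: A circular polarizer filter (CPL) is an invaluable tool. Specular reflections from non-metallic surfaces such as painted walls, glass, or water are partially polarized (almost completely so near Brewster’s angle, about 56° from the surface normal for materials with a refractive index near 1.5), so rotating the filter to block that polarization orientation can strongly reduce glare. A polarizer is much less effective on reflections from bare metal. Cutting the glare enhances color saturation and contrast, making details more visible.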

- Exposure Bracketing (HDR): Capturing multiple images at different exposure levels (under-exposed, correctly exposed, over-exposed) and combining them into a High Dynamic Range (HDR) image can help retain detail in both very bright and very dark areas, mitigating the effects of hotspots.

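- Adjusting Aperture and Shutter Speed: A narrower aperture (higher f-number) increases depth of field and, like a faster shutter speed, reduces the total light reaching the sensor, which can keep specular highlights from clipping. Neither setting changes how much brighter a highlight is than the surrounding surface, so darker regions lose detail as highlights are recovered; this must be balanced with adequate lighting.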

- Manual White Balance: Automated white balance systems can be confused by strong color casts from reflections. Manual white balance, set from a neutral gray card placed in the environment, ensures more accurate color reproduction.
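Two of the strategies above, exposure bracketing and gray-card white balance, reduce to simple per-pixel arithmetic. The following is a minimal sketch, not any camera vendor’s implementation: it assumes linear sensor data (for example decoded RAW), pixel values normalized to 0..1, and frames that are already aligned, and all function names are hypothetical. The HDR merge is a plain weighted average of value divided by exposure time, with no response-curve recovery.

```python
# Hypothetical helper names. Assumes linear sensor data (e.g. decoded RAW),
# pixel values normalized to 0..1, and frames that are already aligned.

def hat_weight(v, low=0.05, high=0.95):
    """Trust mid-tones most; ignore near-black and near-clipped pixels."""
    if v <= low or v >= high:
        return 0.0
    mid = (low + high) / 2
    return 1.0 - abs(v - mid) / (mid - low)

def merge_hdr(frames, times):
    """Merge bracketed frames (flat lists, same length) into relative radiance.

    Each frame's pixel value divided by its exposure time estimates the same
    scene radiance; the weighted average favors well-exposed samples.
    """
    merged = []
    for i in range(len(frames[0])):
        num = den = 0.0
        for frame, t in zip(frames, times):
            w = hat_weight(frame[i])
            num += w * frame[i] / t
            den += w
        if den > 0:
            merged.append(num / den)
        else:
            # Clipped (or black) in every frame: fall back to the shortest
            # exposure, which gives a lower bound for a blown-out hotspot.
            k = times.index(min(times))
            merged.append(frames[k][i] / times[k])
    return merged

def gray_card_gains(card_rgb):
    """Per-channel gains that make the gray card's mean color neutral."""
    r, g, b = card_rgb
    return (g / r, 1.0, g / b)  # green channel used as the reference

def apply_gains(rgb, gains):
    return tuple(c * k for c, k in zip(rgb, gains))

frames = [[0.02, 0.40, 0.99],   # 1/400 s: the hotspot is still clipped
          [0.08, 0.98, 1.00]]   # 1/100 s
print(merge_hdr(frames, [1 / 400, 1 / 100]))
gains = gray_card_gains((0.50, 0.40, 0.25))  # card reads warm under the LEDs
print(apply_gains((0.50, 0.40, 0.25), gains))
```

The weighting function is a design choice: zeroing near-clipped values keeps specular hotspots from dragging the estimate down, while the fallback still reports something for pixels that no bracket captured.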

Beyond Visible Light: Thermal and Depth Sensing Considerations

While visual imaging addresses what we see, drones are increasingly equipped with sensors that extend beyond the human visual spectrum. These systems also face unique challenges when confronted with varied surface sheens.

Emissivity and Thermal Camera Performance

Thermal cameras detect infrared radiation emitted by objects and convert it into a temperature estimate. The accuracy of these readings depends heavily on an object’s emissivity: the ratio of the thermal radiation it emits to that of an ideal blackbody at the same temperature, on a scale from 0 to 1.

- Matte surfaces generally have high and uniform emissivity, making them ideal for thermal imaging as they accurately radiate their intrinsic temperature.

- Glossy surfaces, conversely, tend to have somewhat lower emissivity and reflect infrared radiation from surrounding objects in a mirror-like way. A high-gloss wall might reflect the heat signature of a warm pipe behind the camera, so the camera reads a mixture of the wall’s own emission and the pipe’s reflected emission, producing an inaccurate temperature for the wall itself. This can create ghosting or false temperature differentials, complicating defect detection and heat signature analysis.

- Solutions: Operators must account for surface emissivity variations. Applying a piece of high-emissivity tape (e.g., matte black electrical tape) to a small section of a reflective surface provides a known reference point for calibration. Thermal cameras with emissivity correction settings can also help, but they need accurate inputs for both the surface’s emissivity and the reflected apparent temperature of its surroundings.
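The role of those two inputs can be seen in a simplified broadband model, in which the camera receives the surface’s own emission plus the reflected emission of its surroundings: W = εσT_obj⁴ + (1 − ε)σT_refl⁴. The sketch below inverts that model; it is an approximation that ignores atmospheric absorption, and real radiometric cameras use band-limited calibration rather than the T⁴ law, so their numbers will differ. Function names and example temperatures are hypothetical.

```python
# Broadband (Stefan-Boltzmann) approximation; the constant sigma cancels
# out, so only absolute temperatures to the fourth power appear.

def _k(celsius):
    return celsius + 273.15

def apparent_temperature_c(true_c, emissivity, reflected_c):
    """What a camera set to emissivity 1.0 would report for this surface."""
    w = emissivity * _k(true_c) ** 4 + (1 - emissivity) * _k(reflected_c) ** 4
    return w ** 0.25 - 273.15

def corrected_temperature_c(apparent_c, emissivity, reflected_c):
    """Invert the model: remove the reflected part, then scale by 1/e."""
    if not 0.0 < emissivity <= 1.0:
        raise ValueError("emissivity must be in (0, 1]")
    t4 = (_k(apparent_c) ** 4 - (1 - emissivity) * _k(reflected_c) ** 4) / emissivity
    if t4 <= 0:
        raise ValueError("inputs are inconsistent with the model")
    return t4 ** 0.25 - 273.15

# Wall at 30 C with emissivity 0.9, mirroring a 60 C pipe behind the drone.
reading = apparent_temperature_c(30.0, 0.9, 60.0)
print(f"uncorrected reading: {reading:.1f} C")
print(f"corrected: {corrected_temperature_c(reading, 0.9, 60.0):.1f} C")
```

Under this model the uncorrected reading is about 33.4 °C, a few degrees too warm purely because of the reflected pipe; entering the wrong reflected temperature biases the corrected value in the same way.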

Structured Light and LiDAR: Signal Interference

Depth sensing technologies like Structured Light and LiDAR (Light Detection and Ranging) rely on emitting light (infrared patterns or laser pulses) and analyzing its return.

- Structured Light systems project a known pattern onto a scene and analyze its deformation to calculate depth. Glossy surfaces reflect much of the projected pattern in the mirror direction, either away from the sensor (leaving the pattern too faint to decode) or straight into it (saturating the image); both lead to gaps or inaccuracies in the 3D model.

- LiDAR systems emit laser pulses and measure the time each pulse takes to return. Matte surfaces typically provide strong, diffuse returns, resulting in dense and accurate point clouds. Glossy or highly specular surfaces, however, can reflect the pulse away from the receiver, leading to “no-return” zones or sparse data. Highly reflective surfaces can also cause false returns: if the pulse bounces off one or more additional surfaces before reaching the receiver, the longer path makes the point appear farther away than the real surface, creating “multipath” errors.

- Solutions: Multi-sensor fusion, combining LiDAR data with visual imagery, can help fill in gaps. For glossy surfaces, viewing closer to normal incidence (sensor roughly perpendicular to the surface) usually improves returns, because that is the geometry in which the mirror reflection heads back toward the sensor. For critical areas, a scanning strategy that approaches the surface from multiple angles helps ensure comprehensive data capture.
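The LiDAR failure modes above can be illustrated numerically. The sketch below is a toy model, not a calibrated sensor model: it computes time-of-flight range, the apparent range of a multipath bounce, and the backscatter toward a co-located emitter and receiver using a Lambertian term plus a Phong-style specular lobe. The albedo, lobe weight, shininess, and path lengths are assumed illustrative values, and the function names are hypothetical.

```python
import math

C = 299_792_458.0  # speed of light in m/s

def tof_range_m(round_trip_s):
    """Range from a pulse's round-trip time: it travels out and back."""
    return C * round_trip_s / 2

def multipath_range_m(leg_lengths_m):
    """Apparent range when a pulse bounces along several legs: the receiver
    only knows the total flight time, so it halves the whole path."""
    return sum(leg_lengths_m) / 2

def relative_return(incidence_deg, diffuse_albedo, specular_weight, shininess):
    """Illustrative backscatter toward a co-located emitter and receiver.

    Lambertian term plus a Phong-style specular lobe; not a calibrated model.
    """
    theta = math.radians(incidence_deg)
    diffuse = diffuse_albedo * math.cos(theta)
    # The mirror direction is 2*theta away from the path back to the sensor.
    off_mirror = min(2 * theta, math.pi / 2)
    specular = specular_weight * math.cos(off_mirror) ** shininess
    return diffuse + specular

for angle in (0, 10, 30, 60):
    matte = relative_return(angle, 0.8, 0.0, 1)
    glossy = relative_return(angle, 0.2, 0.8, 200)
    print(f"{angle:>2} deg  matte={matte:.3f}  glossy={glossy:.3f}")

# Wall 4.0 m away, but the pulse first glances off a glossy floor.
print(f"multipath range: {multipath_range_m([4.2, 1.5, 4.0]):.2f} m")
```

In this toy model the glossy surface returns more than the matte one when hit head-on but collapses to its weak diffuse term a few degrees off normal, which is the no-return pattern described above; the multipath case reports 4.85 m for a wall that is 4.0 m away.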

Strategic Imaging Approaches for Confined, Reflective Spaces

Effective drone imaging in environments with diverse “paint sheens” requires more than just technical camera adjustments; it demands strategic planning and operational intelligence.

Pre-Mission Planning and Environment Analysis

Before deploying a drone into a challenging interior space, thorough pre-mission planning is essential. This includes:

- Surface Characterization: Identifying the types of surfaces present (matte, glossy, metallic, glass) and their distribution. This informs decisions about sensor selection and flight path.

- Lighting Assessment: Understanding ambient light conditions, including potential reflections from windows, artificial light sources, or even external light filtering through openings.

- Risk Assessment: Recognizing areas prone to severe glare or data dropouts based on surface properties and planning alternative data capture strategies.

- Software Simulation: Utilizing simulation tools to predict how light will interact with surfaces and optimize flight paths and camera angles.
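Even without a full rendering simulation, a planned pose can be screened for glare from the drone’s own lights with a little vector geometry: the light’s mirror reflection reaches the camera when the surface normal lines up with the half-vector between the directions to the light and to the camera. The sketch below assumes a locally flat surface and a fixed specular lobe half-width of 10°, a placeholder that would in practice be narrower for high-gloss finishes and wider for satin; the function names are hypothetical.

```python
import math

def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def _unit(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def specular_offset_deg(point, normal, camera, light):
    """Angle between the surface normal and the half-vector of the
    directions toward the light and toward the camera. At 0 deg the light's
    mirror reflection lands exactly on the camera."""
    to_cam = _unit(_sub(camera, point))
    to_light = _unit(_sub(light, point))
    half = _unit(tuple(a + b for a, b in zip(to_cam, to_light)))
    cos_angle = max(-1.0, min(1.0, _dot(half, _unit(normal))))
    return math.degrees(math.acos(cos_angle))

def glare_risk(point, normal, camera, light, lobe_half_width_deg=10.0):
    """Flag a pose when the half-vector falls inside the assumed lobe."""
    return specular_offset_deg(point, normal, camera, light) < lobe_half_width_deg

wall_point, wall_normal = (0.0, 0.0, 1.5), (1.0, 0.0, 0.0)
# Onboard LED mounted 10 cm beside the camera.
print(glare_risk(wall_point, wall_normal, (2.0, 0.0, 1.5), (2.0, 0.1, 1.5)))  # True
print(glare_risk(wall_point, wall_normal, (2.0, 2.0, 1.5), (2.0, 2.1, 1.5)))  # False
```

With co-located lights this check favors oblique camera angles, whereas LiDAR on glossy surfaces favors near-normal incidence; that conflict is one reason to plan separate passes for different sensors.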

Post-Processing Techniques for Image Enhancement

Even with meticulous in-field adjustments, some artifacts from challenging surfaces may persist. Post-processing can recover and enhance data:

- De-glaring and Highlight Recovery: Advanced image processing software can intelligently reduce glare and recover detail from overexposed highlights by analyzing adjacent, properly exposed pixels or leveraging data from HDR captures.

- Color Correction: Precisely adjusting white balance and color curves can mitigate color shifts introduced by reflections.

- Noise Reduction and Sharpening: While not directly related to sheen, these techniques can improve the overall clarity of images where subtle details might be obscured by residual noise.

- 3D Model Reconstruction Algorithms: For depth data, algorithms can interpolate missing data points in sparse areas caused by specular reflections, generating a more complete 3D model.
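The simplest form of the gap filling described above is an onion-peel infill: missing depth cells are repeatedly set to the mean of their valid neighbors, working inward from the edges of each hole. The sketch below operates on a 2D depth grid with `None` marking specular dropouts; it is a hypothetical helper, not the method any particular reconstruction package uses, and it cannot recover detail that was never measured.

```python
def fill_holes(depth, max_iters=100):
    """Fill None cells in a 2D depth grid by repeatedly averaging valid
    4-neighbors. A simple onion-peel infill, not a surface fit."""
    rows, cols = len(depth), len(depth[0])
    grid = [row[:] for row in depth]  # leave the caller's grid untouched
    for _ in range(max_iters):
        updates = {}
        for r in range(rows):
            for c in range(cols):
                if grid[r][c] is not None:
                    continue
                neighbors = [
                    grid[rr][cc]
                    for rr, cc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1))
                    if 0 <= rr < rows and 0 <= cc < cols and grid[rr][cc] is not None
                ]
                if neighbors:
                    updates[(r, c)] = sum(neighbors) / len(neighbors)
        if not updates:  # nothing left to fill (or no valid data at all)
            break
        # Apply a whole ring at once so the fill order does not bias values.
        for (r, c), value in updates.items():
            grid[r][c] = value
    return grid

# A 1 m-deep recess with one dropout where a glossy patch returned nothing.
depth = [[2.0, 2.0, 2.0],
         [2.0, None, 2.0],
         [2.0, 2.0, 2.0]]
print(fill_holes(depth))
```

Filling one ring per iteration keeps the result independent of scan order; a production pipeline would more likely fit a local surface so that sloped or curved regions are not flattened.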

Future Innovations in Drone Imaging for Complex Interiors

The challenges posed by varying surface “sheens” in confined spaces are driving innovation in drone imaging technology.

- Advanced Sensor Fusion: Integrating data from multiple sensor types (RGB, thermal, LiDAR, hyperspectral) and processing it with AI-driven algorithms will enable more robust environment understanding, automatically correcting for phenomena like reflections and emissivity variations.

- Adaptive Optics and Smart Lenses: Future drone cameras may incorporate liquid lenses or active optical elements that can rapidly adjust focus, aperture, and even polarization in real-time based on scene analysis, dynamically optimizing for changing surface conditions.

- AI-Powered Reflection Suppression: Machine learning models trained on vast datasets of images with various reflections could autonomously detect and suppress glare in real-time during flight, providing cleaner data directly from the drone.

- Swarm Robotics with Distributed Sensing: Multiple micro-drones, each equipped with specialized sensors, could cooperatively map and inspect complex interiors, with their combined data compensating for individual sensor limitations caused by challenging surfaces.

- Programmable Illumination Systems: Drones might feature multi-directional, programmable LED arrays that can adjust intensity, color temperature, and angle of incidence on the fly, actively mitigating reflections and illuminating surfaces optimally for different sensor types.

Ultimately, just as one chooses a “paint sheen” for a bathroom to achieve a specific aesthetic and functional outcome, drone operators must meticulously consider surface properties in interior environments to ensure their imaging systems capture the highest quality, most reliable data for critical analysis. The interplay of surfaces, light, and advanced sensor technology continues to push the boundaries of what autonomous systems can achieve in complex, human-built spaces.