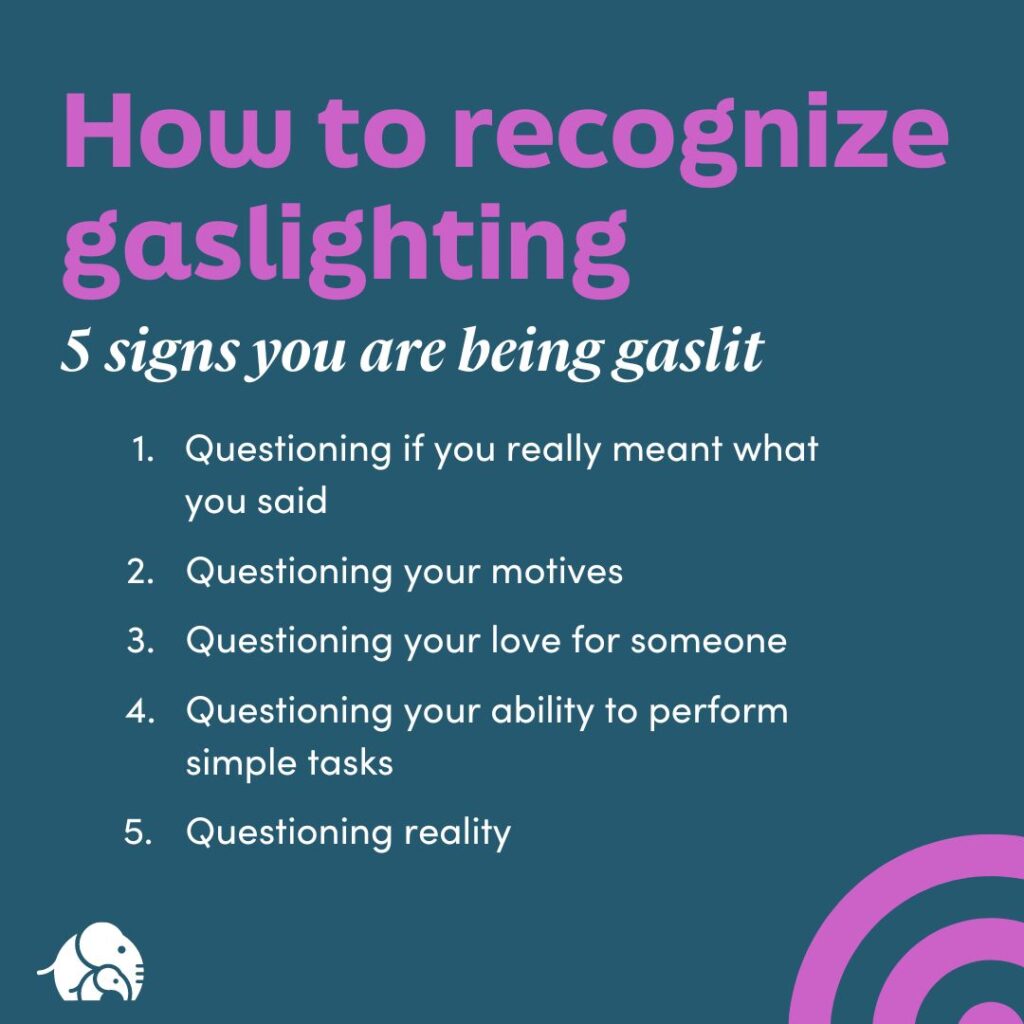

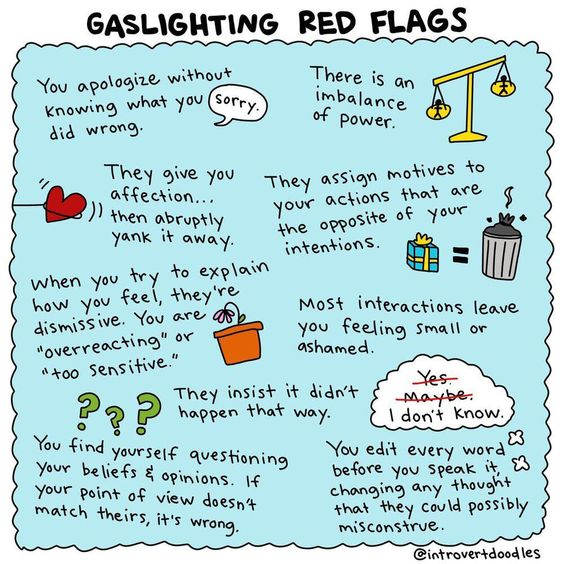

The term “gaslighting” has become a fixture of psychological discourse. It refers to a manipulative tactic in which an individual or group subtly undermines another person’s perception of reality, leading them to question their own sanity, memory, or judgment. Although the concept originates in interpersonal abuse, it can be applied metaphorically to other domains where manipulation and the distortion of information occur. In the fast-growing field of drone technology, understanding how misleading information or opaque functionality affects user perception and trust is crucial. This article explores how dynamics akin to gaslighting can arise in drone systems, focusing on the user experience, system reliability, and the ethical implications of advanced autonomous features. We examine how perceived malfunctions, intentional design choices, and genuine technological limitations can be misread by users, leading them to question the integrity and predictability of their drones.

The Subtle Art of Misdirection in Drone Operations

Modern drone systems offer unprecedented capabilities, but their complexity also means users can encounter behavior that feels like a form of “gaslighting.” This is not necessarily the result of malicious intent by the manufacturer; rather, it is a consequence of intricate systems interacting with user perception and expectation.

Perceived System Inconsistencies

Users often develop a mental model of how their drone should behave. When this model is contradicted by the actual performance, it can be disorienting. For instance, a drone might consistently hover perfectly in calm conditions but exhibit slight, unpredictable drifts in seemingly benign wind. A user who has only experienced the stable behavior might begin to doubt their own spatial awareness or the accuracy of their controls, especially if the drift is subtle and not immediately attributable to external factors.

The Illusion of Perfect Stability

Many advanced drones boast sophisticated stabilization systems, but these systems can only compensate for disturbances up to certain limits. When external forces such as sudden gusts or turbulent air push the drone beyond those limits, it may move in ways the user perceives as erratic. If the software or display does not communicate why the instability is occurring (for example, by failing to show wind speed and direction prominently at that moment), the user may conclude that the drone is “acting up” or that their own piloting skills have suddenly deteriorated. This gap between the expected flawless performance and the actual, explainable deviation is a subtle form of information distortion.
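
To make this concrete, one way an interface could pair visible drift with the wind estimate is sketched below, so the pilot sees a reason rather than an unexplained wobble. It is a minimal Python illustration, not any manufacturer’s implementation: it assumes the flight controller already produces a wind estimate, and the names (`HoverSample`, `explain_drift`) and both thresholds are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical limit: wind speed (m/s) beyond which the stabilization system
# is assumed unable to hold position fully. Real values vary by airframe.
WIND_COMPENSATION_LIMIT_MS = 10.0
# Hypothetical drift (metres from the hover point) large enough to explain.
DRIFT_ALERT_M = 1.0


@dataclass
class HoverSample:
    drift_m: float        # horizontal distance from the commanded hover point
    wind_speed_ms: float  # wind speed estimated by the flight controller (assumed available)
    wind_from_deg: float  # direction the wind blows from, in degrees


def explain_drift(sample: HoverSample) -> Optional[str]:
    """Return a pilot-facing explanation for noticeable drift, or None."""
    if sample.drift_m < DRIFT_ALERT_M:
        return None  # too small to need an explanation
    if sample.wind_speed_ms >= WIND_COMPENSATION_LIMIT_MS:
        return (f"Strong wind {sample.wind_speed_ms:.0f} m/s from "
                f"{sample.wind_from_deg:.0f}°: drift of {sample.drift_m:.1f} m is expected")
    # Drift without a wind explanation: say so rather than stay silent.
    return (f"Drift of {sample.drift_m:.1f} m in light wind: "
            "possible positioning or sensor issue")


print(explain_drift(HoverSample(drift_m=2.5, wind_speed_ms=12.0, wind_from_deg=270.0)))
# Strong wind 12 m/s from 270°: drift of 2.5 m is expected
```

The final branch is the design choice worth noting: when drift is visible but wind does not account for it, the message says so instead of staying silent, which is exactly the case where a pilot would otherwise blame their own inputs.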

Navigational Ambiguities

GPS errors still occur, particularly near tall buildings, under tree cover, or when few satellites are in view. A user might see their drone veer slightly off its intended path or exhibit “GPS drift” that the system does not clearly flag. If the navigation system provides no real-time, easily understood feedback on GPS signal quality or potential errors, the pilot may attribute the deviation to their own misjudgment and assume they made a small input error, when in fact the system was experiencing a temporary navigational anomaly. This lack of transparency about internal system states can lead users to question their own inputs or their memory of what they did.
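
A common way to summarize GPS fix quality is to combine the number of satellites used in the position solution with the horizontal dilution of precision (HDOP), where a lower HDOP indicates better satellite geometry. The Python sketch below turns those two values into a plain-language banner. The thresholds, class names, and message wording are illustrative assumptions, not values from any real receiver or flight app.

```python
from dataclasses import dataclass
from enum import Enum


class GpsQuality(Enum):
    GOOD = "good"
    DEGRADED = "degraded"
    POOR = "poor"


@dataclass
class GpsFix:
    satellites: int  # satellites used in the position solution
    hdop: float      # horizontal dilution of precision (lower is better)


# Hypothetical thresholds for illustration; real firmware tunes these per receiver.
MIN_SATS_GOOD = 10
MIN_SATS_USABLE = 6
MAX_HDOP_GOOD = 1.5
MAX_HDOP_USABLE = 3.0


def classify_fix(fix: GpsFix) -> GpsQuality:
    """Map satellite count and HDOP onto three coarse quality levels."""
    if fix.satellites >= MIN_SATS_GOOD and fix.hdop <= MAX_HDOP_GOOD:
        return GpsQuality.GOOD
    if fix.satellites >= MIN_SATS_USABLE and fix.hdop <= MAX_HDOP_USABLE:
        return GpsQuality.DEGRADED
    return GpsQuality.POOR


def gps_banner(fix: GpsFix) -> str:
    """Build the text a pilot would see, naming the likely consequence."""
    quality = classify_fix(fix)
    if quality is GpsQuality.GOOD:
        return f"GPS good ({fix.satellites} sats)"
    if quality is GpsQuality.DEGRADED:
        return f"GPS degraded ({fix.satellites} sats, HDOP {fix.hdop:.1f}): position may drift"
    return f"GPS poor ({fix.satellites} sats, HDOP {fix.hdop:.1f}): expect drift, fly with care"


print(gps_banner(GpsFix(satellites=8, hdop=2.2)))
# GPS degraded (8 sats, HDOP 2.2): position may drift
```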

The Role of User Interface and Feedback Mechanisms

The way a drone’s system communicates its status and intentions to the pilot plays a critical role in preventing or, conversely, inducing these feelings of doubt.

Inadequate Error Reporting

When a drone encounters an issue, the clarity of the error message is paramount. A cryptic error code or a vague warning such as “System Anomaly Detected” can leave a pilot feeling helpless and confused, unsure of the issue’s severity, its cause, or what to do next. This ambiguity can lead pilots to question their ability to operate the drone safely, or even their grasp of basic flight principles, especially if they have a history of successful flights. By withholding clear diagnostic information, the system implicitly suggests that the problem lies with the pilot’s interpretation or actions.

Over-reliance on Automation and Its Limits

Many modern drones offer sophisticated autonomous modes, such as “Follow Me” and the broader family of “Intelligent Flight Modes.” Useful as they are, these modes are not infallible: they depend on sensors, algorithms, and pre-programmed logic, each with its own limitations. Suppose a “Follow Me” mode loses track of its subject in an unexpected visual situation, for example when the subject blends into the background or a sudden shadow falls across it. If the drone does not transition smoothly to a safe hover and issue a clear “subject lost” alert, the user may feel that it has arbitrarily abandoned its task or is behaving erratically. Without an objective explanation from the system, they are left to wonder whether they activated the mode correctly, whether the subject moved too quickly, or whether the drone is simply malfunctioning.
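
A clear “subject lost” transition can be modeled as a small state machine. The sketch below assumes a vision tracker that reports a confidence value between 0 and 1 for each frame; `FollowMeTracker`, its thresholds, and the alert text are hypothetical. After a configurable run of low-confidence frames, it switches to a hover state, raises one explicit alert, and stays there until the pilot re-selects a subject.

```python
from enum import Enum, auto
from typing import Optional, Tuple


class FollowState(Enum):
    TRACKING = auto()   # subject confidently tracked
    SEARCHING = auto()  # brief low confidence; waiting for the subject to reappear
    HOLDING = auto()    # subject lost: hover in place until the pilot re-selects


class FollowMeTracker:
    """Hypothetical Follow Me supervisor driven by per-frame tracker confidence (0..1)."""

    def __init__(self, min_confidence: float = 0.5, frames_before_hold: int = 15):
        self.min_confidence = min_confidence          # illustrative threshold
        self.frames_before_hold = frames_before_hold  # illustrative grace period
        self.low_frames = 0
        self.state = FollowState.TRACKING

    def update(self, confidence: float) -> Tuple[FollowState, Optional[str]]:
        """Process one frame; return the new state and an alert message, if any."""
        if self.state is FollowState.HOLDING:
            return self.state, None  # stay put; the alert was already raised once
        if confidence >= self.min_confidence:
            self.low_frames = 0
            self.state = FollowState.TRACKING
            return self.state, None
        self.low_frames += 1
        if self.low_frames >= self.frames_before_hold:
            self.state = FollowState.HOLDING
            return self.state, ("Subject lost: hovering in place. "
                                "Re-select the subject or take manual control.")
        self.state = FollowState.SEARCHING
        return self.state, None

    def reselect(self) -> None:
        """Called when the pilot picks a subject again."""
        self.low_frames = 0
        self.state = FollowState.TRACKING
```

Making the hold state sticky is a deliberate choice in this sketch: resuming automatically when confidence flickers back could latch onto the wrong subject, producing exactly the kind of unexplained behavior this section describes.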

When Technology “Gaslights” the User: Intentional vs. Unintentional Distortions

The concept of gaslighting implies a degree of intentional manipulation. In drone technology, outright malicious intent from manufacturers is rare and would invite regulatory scrutiny, yet the effect of perceived manipulation can still arise from design choices, commercial pressures, or insufficient attention to user experience.

The Influence of Marketing and Hype

The drone industry is characterized by rapid innovation and aggressive marketing. Manufacturers often highlight the most impressive capabilities of their systems, sometimes setting user expectations unrealistically high. When a user purchases a drone based on marketing that suggests effortless, perfect flight in all conditions, and then encounters situations where the drone struggles, they may feel that the product hasn’t lived up to its advertised potential. This discrepancy can lead to a form of buyer’s remorse that borders on feeling deceived, where the user questions their own judgment in believing the marketing in the first place.

The “Smart” Drone Paradox

Drones are increasingly marketed as “smart” or “intelligent,” implying a level of autonomy and decision-making that can surpass human capability. However, these “smart” features are still based on complex algorithms and sensor data. When a “smart” mode makes a decision that the pilot finds counterintuitive or even unsafe, it can be disconcerting. For example, an obstacle avoidance system might engage aggressively, forcing the drone to make an abrupt maneuver that the pilot did not anticipate or desire. The pilot might question the algorithm’s logic, wondering if it correctly interpreted the environment, and subsequently question their own ability to override or anticipate such decisions. This creates a scenario where the technology’s “intelligence” appears to override the pilot’s own judgment, leading to a sense of being undermined.
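
Obstacle avoidance feels less arbitrary when every override carries a stated reason. As an illustration, the sketch below caps forward speed using the constant-deceleration stopping relation v_max = sqrt(2 * a * d) and returns a pilot-facing message whenever it overrides the stick. The deceleration limit, safety margin, and all names are hypothetical, and a real system would also account for sensor latency and reaction time, which this sketch ignores.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class AvoidanceDecision:
    override: bool           # True when the system overrides the pilot's command
    forward_speed_ms: float  # forward speed actually commanded
    reason: Optional[str]    # pilot-facing explanation; set whenever override is True


def limit_forward_speed(pilot_speed_ms: float, obstacle_distance_m: float,
                        max_decel_ms2: float = 4.0,
                        margin_m: float = 2.0) -> AvoidanceDecision:
    """Cap forward speed so the drone can stop before an obstacle.

    Uses v_max = sqrt(2 * a * d): the highest speed from which a constant
    deceleration a stops the drone within distance d (obstacle distance
    minus a safety margin). Latency and reaction time are ignored here.
    """
    usable_m = max(obstacle_distance_m - margin_m, 0.0)
    v_max = math.sqrt(2.0 * max_decel_ms2 * usable_m)
    if pilot_speed_ms <= v_max:
        return AvoidanceDecision(False, pilot_speed_ms, None)
    action = "Stopping" if v_max < 0.1 else f"Slowing to {v_max:.1f} m/s"
    return AvoidanceDecision(
        True, v_max, f"{action}: obstacle {obstacle_distance_m:.1f} m ahead")


print(limit_forward_speed(10.0, 6.5).reason)
# Slowing to 6.0 m/s: obstacle 6.5 m ahead
```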

Genuine Technological Limitations vs. Perceived Failures

It’s crucial to distinguish between genuine technological limitations and what might be perceived as deliberate obfuscation. All technologies have inherent limitations. The challenge lies in how these limitations are communicated to the user.

Environmental Dependencies

Drones are highly susceptible to environmental factors. Strong winds push the airframe off position, electromagnetic interference can corrupt compass readings, and poor lighting can degrade camera-based positioning and obstacle sensing. If the software does not proactively warn the user about such conditions, or if its warnings are subtle and easily missed, the resulting performance degradation may be read as a system failure or an inexplicable flight anomaly. The user may blame the drone for unstable flight when the environment is the primary culprit and the system simply failed to convey that crucial information.
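
A proactive warning pass can be as simple as checking current conditions against the aircraft’s limits before and during flight. The Python sketch below is illustrative only: `Conditions`, the 0 to 1 interference score, and every threshold are assumptions, and real limits should come from the aircraft’s own specifications.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical limits for illustration; real values belong in the aircraft's specifications.
MAX_WIND_MS = 10.0
MAX_COMPASS_INTERFERENCE = 0.3
MIN_LUX_FOR_VISION = 15.0


@dataclass
class Conditions:
    wind_speed_ms: float
    compass_interference: float  # assumed 0..1 score; higher means more magnetic disturbance
    ambient_lux: float           # ambient light level in lux


def condition_warnings(c: Conditions) -> List[str]:
    """Return plain-language warnings for conditions likely to degrade flight."""
    warnings: List[str] = []
    if c.wind_speed_ms > MAX_WIND_MS:
        warnings.append(f"Wind {c.wind_speed_ms:.0f} m/s exceeds {MAX_WIND_MS:.0f} m/s: "
                        "expect drift and faster battery drain")
    if c.compass_interference > MAX_COMPASS_INTERFERENCE:
        warnings.append("Magnetic interference: heading may be unreliable; "
                        "move away from metal structures")
    if c.ambient_lux < MIN_LUX_FOR_VISION:
        warnings.append("Low light: vision positioning and obstacle sensing may be degraded")
    return warnings
```

Each warning names the likely symptom (drift, unreliable heading, degraded sensing), so when that symptom later appears in flight, the pilot already has an explanation that points at the environment rather than at themselves.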

Software Glitches and Updates

Like any complex software, drone firmware can contain bugs and requires periodic updates. A glitch might cause unexpected behavior, such as a malfunctioning camera or an erratically behaving flight controller. If a firmware update subtly alters flight characteristics or introduces new quirks without clearly saying so, users may experience what feels like a regression in performance. They might question their own piloting skills, believing they have somehow forgotten how to fly, rather than recognizing the effect of a recent software change. This is particularly disorienting when the change is subtle and triggers no explicit error message.
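
One way to avoid silent changes is to compare the flight-tuning parameters shipped with the old and new firmware and show the differences to the pilot before the first flight after an update. The sketch below assumes the parameters are available as a name-to-value mapping; the function name and the parameter names in the example are hypothetical.

```python
from typing import Dict, List


def parameter_changes(old: Dict[str, float], new: Dict[str, float]) -> List[str]:
    """List flight-parameter differences between two firmware versions, sorted by name."""
    notes: List[str] = []
    for name in sorted(old.keys() | new.keys()):
        before, after = old.get(name), new.get(name)
        if before is None:
            notes.append(f"{name}: new parameter ({after})")
        elif after is None:
            notes.append(f"{name}: removed (was {before})")
        elif before != after:
            notes.append(f"{name}: {before} -> {after}")
    return notes


# Hypothetical parameter sets for two firmware versions.
old = {"max_tilt_deg": 30.0, "yaw_rate_dps": 100.0, "brake_strength": 0.8}
new = {"max_tilt_deg": 25.0, "yaw_rate_dps": 100.0, "descent_rate_ms": 3.0}
for line in parameter_changes(old, new):
    print(line)
```

In this example, the reduced tilt limit would surface as `max_tilt_deg: 30.0 -> 25.0`, giving the pilot a concrete explanation for a drone that might suddenly feel less responsive, instead of leaving them to doubt their own skills.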

Mitigating “Drone Gaslighting”: Towards Transparency and Trust

Addressing the potential for “gaslighting” within drone technology requires a proactive approach focused on user education, transparent system design, and clear communication. The goal is to build trust between the user and the machine, ensuring that perceived anomalies are understood and resolved collaboratively, rather than leading to user doubt.

Enhancing User Education and Expectation Management

A significant portion of perceived “gaslighting” can be mitigated by setting accurate expectations from the outset.

Comprehensive Onboarding and Training

Manufacturers should invest in thorough onboarding processes that go beyond simply demonstrating basic functions. This includes educating users about the environmental factors that can influence drone performance, the inherent limitations of sensors and autonomous systems, and the different operational modes. Interactive tutorials and simulations can help users develop a more nuanced understanding of how their drone operates in various scenarios. This proactive education helps users build a more accurate mental model, reducing the likelihood of being surprised by unexpected behavior.

Realistic Marketing and Product Information

The hype surrounding drone technology needs to be tempered with realism. Marketing materials should clearly articulate the operational boundaries and potential challenges associated with drone flight, rather than exclusively focusing on aspirational capabilities. Detailed product specifications and user manuals should be readily accessible and presented in an easy-to-understand format, covering everything from sensor limitations to the impact of weather conditions.

Designing for Transparency and Clarity

The design of drone systems, particularly their user interfaces and feedback mechanisms, is critical in fostering trust and preventing misinterpretation.

Intuitive Real-Time Feedback

Drone software should prioritize providing clear, actionable, and real-time feedback to the pilot. This includes intuitive visual cues and audible alerts for critical information such as GPS signal strength, battery levels, wind speed, and any detected system anomalies. For instance, during a flight where wind is a significant factor, the interface should prominently display wind speed and direction, allowing the pilot to correlate any slight movements with environmental conditions.
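
When several conditions need attention at once, ordering matters: a critical battery warning should not be buried under less urgent notices. The sketch below converts a hypothetical telemetry snapshot into alerts sorted by severity and decides when an audible cue is warranted. The thresholds, the two-level severity scale, and all names are assumptions for illustration only.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List

# All thresholds are hypothetical and only illustrate the prioritization idea.
LOW_BATTERY_PCT = 30.0
CRITICAL_BATTERY_PCT = 15.0
MIN_GPS_SATELLITES = 6
HIGH_WIND_MS = 10.0


class Severity(IntEnum):
    WARNING = 1
    CRITICAL = 2


@dataclass
class Alert:
    severity: Severity
    message: str


@dataclass
class Telemetry:
    battery_pct: float
    gps_satellites: int
    wind_speed_ms: float


def build_alerts(t: Telemetry) -> List[Alert]:
    """Turn one telemetry snapshot into pilot-facing alerts, most severe first."""
    alerts: List[Alert] = []
    if t.gps_satellites < MIN_GPS_SATELLITES:
        alerts.append(Alert(Severity.WARNING,
                            f"Weak GPS ({t.gps_satellites} sats): position hold may drift"))
    if t.wind_speed_ms > HIGH_WIND_MS:
        alerts.append(Alert(Severity.WARNING,
                            f"Wind {t.wind_speed_ms:.0f} m/s: some drift is expected"))
    if t.battery_pct < CRITICAL_BATTERY_PCT:
        alerts.append(Alert(Severity.CRITICAL, f"Battery {t.battery_pct:.0f}%: return home now"))
    elif t.battery_pct < LOW_BATTERY_PCT:
        alerts.append(Alert(Severity.WARNING, f"Battery {t.battery_pct:.0f}%: plan your return"))
    # Python's sort is stable (also with reverse=True), so equal-severity
    # alerts keep their insertion order.
    alerts.sort(key=lambda a: a.severity, reverse=True)
    return alerts


def needs_audible_cue(alerts: List[Alert]) -> bool:
    """Sound an audible alert only for critical items, to limit alarm fatigue."""
    return any(a.severity is Severity.CRITICAL for a in alerts)
```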

Clear Diagnostic and Error Reporting

When errors do occur, the system should provide specific, jargon-free explanations. Instead of a generic “error code,” the system should communicate what component is affected, the potential cause, and recommended actions. This empowers the user to understand the situation, take appropriate measures, and learn from the experience, rather than feeling confused or incompetent. Post-flight logs should also be easily accessible and interpretable, allowing users to review flight data and understand the sequence of events.
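
The component, cause, and action structure described above maps naturally onto a small lookup table, and the same descriptions can be reused when replaying a post-flight log. In the sketch below, the error codes (`E_IMU_TEMP`, `E_COMPASS_ERR`), their explanations, and the function names are hypothetical; the point is the shape of the message, not any real product’s error catalog.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class ErrorReport:
    component: str           # which part of the system is affected
    likely_cause: str        # plain-language cause
    recommended_action: str  # what the pilot should do next


# Hypothetical catalog: internal codes mapped to jargon-free explanations.
ERROR_CATALOG: Dict[str, ErrorReport] = {
    "E_IMU_TEMP": ErrorReport(
        "Inertial measurement unit (IMU)",
        "sensors are still warming up after power-on",
        "wait for warm-up to finish before taking off"),
    "E_COMPASS_ERR": ErrorReport(
        "Compass",
        "magnetic interference from nearby metal or electronics",
        "move to an open area and recalibrate the compass"),
}


def describe_error(code: str) -> str:
    """Render an error code as component, cause, and recommended action."""
    report = ERROR_CATALOG.get(code)
    if report is None:
        # Even an unknown code should leave the pilot with a safe next step.
        return (f"Unrecognized error {code}: land when safe and "
                "export the flight log for support")
    return (f"{report.component}: {report.likely_cause}. "
            f"What to do: {report.recommended_action}.")


def render_log(events: List[Tuple[float, str]]) -> List[str]:
    """Render (seconds since takeoff, error code) events in time order for review."""
    return [f"T+{t:6.1f}s  {describe_error(code)}" for t, code in sorted(events)]
```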

The Ethical Imperative of Trustworthy Technology

Ultimately, the concept of “gaslighting” in drone technology highlights an ethical imperative for manufacturers. The goal is not just to create powerful tools, but to create tools that users can rely on, understand, and trust.

Building a Collaborative Relationship

By prioritizing transparency, education, and clear communication, manufacturers can foster a collaborative relationship with their users. This moves beyond a simple transactional exchange of goods to one where the user feels empowered and informed. When a user understands why a drone is behaving in a certain way, they are more likely to adapt their piloting or understand the limitations, rather than question the integrity of the technology or their own capabilities.

The Future of Autonomous Flight and User Confidence

As drone technology advances towards greater autonomy, the challenge of maintaining user trust becomes even more critical. Autonomous systems must be designed with human oversight and understanding in mind. This means not just enabling machines to make decisions, but ensuring that those decisions are explainable and that the system provides a clear rationale for its actions. By actively working to prevent scenarios that could be perceived as “gaslighting,” the drone industry can pave the way for a future where advanced technology enhances human capabilities without undermining human confidence and judgment. The pursuit of robust, transparent, and user-centric drone technology is not merely about technical excellence, but about building an enduring foundation of trust.