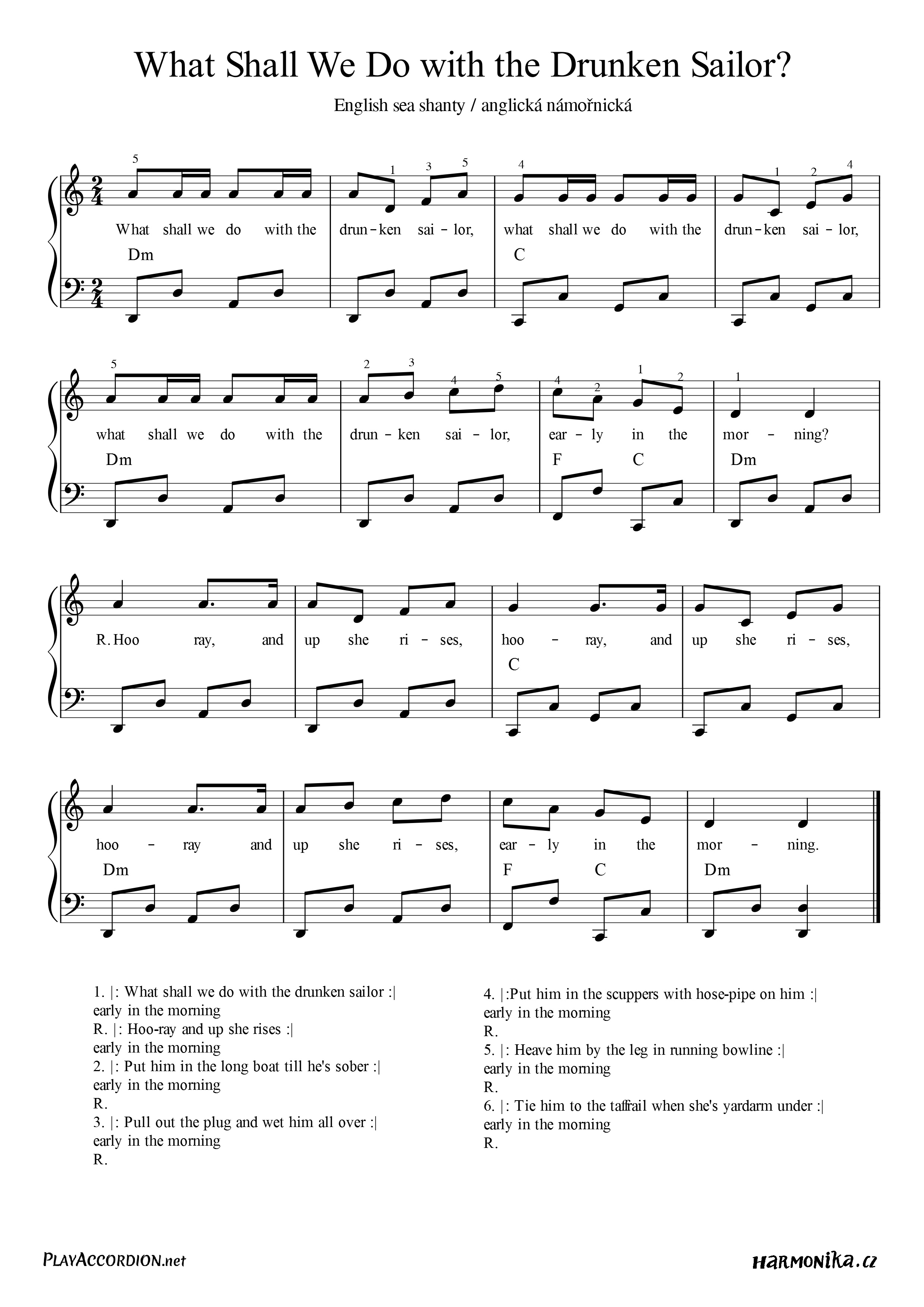

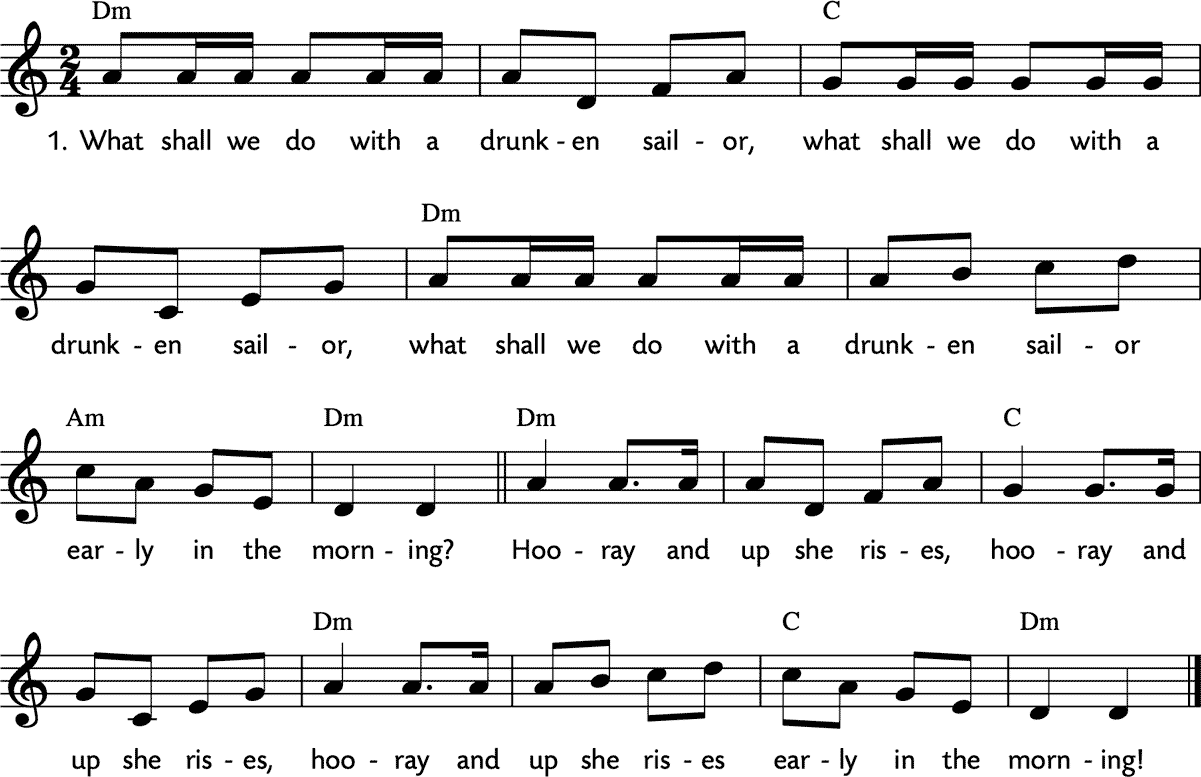

The dream of truly autonomous flight, in which drones operate independently and intelligently, is rapidly becoming a reality. The path to that level of operation is full of complications, echoing the old sea shanty that asks what to do with a “drunken sailor.” For drones, the question becomes how to cope with unpredictable environments, noisy sensors, and the need to make robust decisions without human intervention. This article explores the technologies being developed to give drones the intelligence and resilience required for autonomous navigation and operation in increasingly demanding scenarios.

The Perils of the Unpredictable Sky: Sensor Fusion and Environmental Awareness

Autonomous drones must possess a profound understanding of their surroundings to navigate safely and effectively. This requires integrating data from a multitude of sensors to create a coherent and accurate representation of the environment, a process known as sensor fusion. The “drunken sailor” analogy here refers to the potential for individual sensors to provide misleading or erroneous data, akin to a sailor’s impaired judgment.

Overcoming Sensor Inaccuracies

- LiDAR and Vision Integration: Light Detection and Ranging (LiDAR) provides precise depth information, while cameras offer rich visual texture and semantic understanding. Combining the two enables robust object detection and mapping, even in poor lighting or where visual features are scarce. For instance, LiDAR can detect an obstacle in low light where a camera might fail, while a camera can help distinguish a solid wall from a glass or mirror-like surface whose LiDAR returns might otherwise be misinterpreted.

- IMU Calibration and Drift Compensation: Inertial Measurement Units (IMUs), comprising accelerometers and gyroscopes, are crucial for estimating orientation and motion. However, IMU-based estimates drift over time: small sensor biases and noise are integrated into steadily growing errors that can significantly degrade navigation accuracy. Fusion algorithms, commonly Kalman filters or complementary filters, combine IMU data with other sources such as GPS or visual odometry, continuously correcting the drift and maintaining a stable pose estimate. This is akin to a navigator constantly recalibrating their compass to account for magnetic variations.

- GPS and GNSS Robustness: While the Global Positioning System (GPS) and other Global Navigation Satellite Systems (GNSS) are foundational for outdoor navigation, they are susceptible to signal blockage in urban canyons, multipath interference, and jamming. To counter this, autonomous systems increasingly use multi-constellation GNSS receivers and fuse GNSS data with visual-inertial odometry (VIO) or landmark-based navigation, so that operation can continue when satellite signals are degraded or unavailable. The “drunken sailor” might lose their bearings, but a well-equipped autonomous system has multiple ways to re-establish its position.
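To make the drift-correction idea concrete, here is a minimal sketch of a linear Kalman filter along a single axis, assuming an IMU that reports bias-free acceleration and a GNSS receiver that reports position. The class name, noise values, and the noise-free simulation are hypothetical; production autopilots typically run extended or error-state Kalman filters over the full 3D pose and also estimate sensor biases.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class KalmanFilter1D:
    """Hypothetical position/velocity filter along one axis.

    predict(): propagate the state with an IMU acceleration reading.
    update():  correct the state with a GNSS position fix, if one exists.
    """
    accel_noise: float = 0.5  # assumed IMU acceleration noise std-dev (m/s^2)
    gps_noise: float = 2.0    # assumed GNSS position noise std-dev (m)
    x: List[float] = field(default_factory=lambda: [0.0, 0.0])  # [position, velocity]
    P: List[List[float]] = field(
        default_factory=lambda: [[10.0, 0.0], [0.0, 1.0]])      # state covariance

    def predict(self, accel: float, dt: float) -> None:
        pos, vel = self.x
        # x' = F x + B u, with F = [[1, dt], [0, 1]] and B = [dt^2/2, dt]
        self.x = [pos + vel * dt + 0.5 * accel * dt * dt, vel + accel * dt]
        (a, b), (c, d) = self.P
        fp = [[a + dt * c, b + dt * d], [c, d]]                  # F P
        q = self.accel_noise ** 2
        # P' = F P F^T + Q, where Q = q * G G^T with G = [dt^2/2, dt]
        self.P = [
            [fp[0][0] + dt * fp[0][1] + q * dt**4 / 4, fp[0][1] + q * dt**3 / 2],
            [fp[1][0] + dt * fp[1][1] + q * dt**3 / 2, fp[1][1] + q * dt**2],
        ]

    def update(self, gps_pos: Optional[float]) -> None:
        if gps_pos is None:  # GNSS-denied: no correction, uncertainty keeps growing
            return
        s = self.P[0][0] + self.gps_noise ** 2          # innovation variance (H = [1, 0])
        k = [self.P[0][0] / s, self.P[1][0] / s]        # Kalman gain
        innov = gps_pos - self.x[0]
        self.x = [self.x[0] + k[0] * innov, self.x[1] + k[1] * innov]
        row0 = list(self.P[0])
        # P = (I - K H) P
        self.P = [[self.P[i][j] - k[i] * row0[j] for j in range(2)] for i in range(2)]


# Simulated flight: constant 0.2 m/s^2 acceleration, noise-free sensors for clarity.
# GNSS is available for the first 50 steps, then lost for the last 20.
kf, dt, true_acc = KalmanFilter1D(), 0.1, 0.2
true_pos = true_vel = 0.0
var_history = []
for step in range(70):
    true_pos += true_vel * dt + 0.5 * true_acc * dt * dt
    true_vel += true_acc * dt
    kf.predict(true_acc, dt)
    kf.update(true_pos if step < 50 else None)
    var_history.append(kf.P[0][0])
print(f"estimate {kf.x[0]:.3f} m, truth {true_pos:.3f} m, variance {kf.P[0][0]:.3f} m^2")
```

During the simulated outage the filter keeps integrating the IMU and its position variance `P[0][0]` grows at every step; that growing uncertainty is what signals downstream components to trust the estimate less until fixes return.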

Real-time Mapping and Localization

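Accurate localization, knowing precisely where the drone is within its environment, is paramount. Where no prior map exists, this is commonly achieved through simultaneous localization and mapping (SLAM), which builds a map of the surroundings while estimating the drone's position within it.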

- Visual SLAM (VSLAM): VSLAM algorithms use camera imagery to build a map of the environment while simultaneously tracking the drone's position within that map. This is particularly useful in GPS-denied areas. However, VSLAM can struggle in textureless environments or during rapid motion, which blurs images and causes large frame-to-frame changes.

- LiDAR SLAM: LiDAR-based SLAM offers high mapping and localization accuracy, especially in environments with distinct geometric features. Because LiDAR is an active sensor that supplies its own illumination, it is largely insensitive to lighting changes, making it a strong contender for challenging industrial inspections or search and rescue operations.

- Hybrid SLAM: The most advanced systems often employ hybrid SLAM approaches, combining visual and LiDAR sensors to achieve greater robustness and accuracy. Because the two sensors tend to fail under different conditions, the drone can often maintain situational awareness even if one of them is compromised.
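Many LiDAR SLAM front ends estimate motion by scan matching, aligning each new scan with a previous scan or map. The sketch below is a minimal 2D point-to-point Iterative Closest Point (ICP) routine, one common scan-matching technique, offered as an illustration rather than as what any particular SLAM system does. It assumes noise-free points and uses brute-force nearest neighbours; practical implementations add k-d trees, outlier rejection, and a back end that handles loop closure. All function names are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]
Pose = Tuple[float, float, float]  # (theta in radians, tx, ty)


def transform_points(theta: float, tx: float, ty: float, pts: List[Point]) -> List[Point]:
    """Rotate each point by theta about the origin, then translate by (tx, ty)."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in pts]


def nearest(p: Point, pts: List[Point]) -> Point:
    """Brute-force nearest neighbour of p among pts."""
    return min(pts, key=lambda q: (q[0] - p[0]) ** 2 + (q[1] - p[1]) ** 2)


def best_rigid_transform(src: List[Point], dst: List[Point]) -> Pose:
    """Closed-form 2D rotation + translation minimising sum |R p + t - q|^2 over pairs."""
    n = len(src)
    sx, sy = sum(p[0] for p in src) / n, sum(p[1] for p in src) / n
    dx, dy = sum(q[0] for q in dst) / n, sum(q[1] for q in dst) / n
    dot = cross = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        px, py, qx, qy = px - sx, py - sy, qx - dx, qy - dy  # centre both point sets
        dot += px * qx + py * qy
        cross += px * qy - py * qx
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    return theta, dx - (c * sx - s * sy), dy - (s * sx + c * sy)


def icp(scan: List[Point], ref_map: List[Point], iterations: int = 20) -> Pose:
    """Estimate the pose that maps `scan` onto `ref_map` (point-to-point ICP)."""
    theta_t = tx_t = ty_t = 0.0
    current = list(scan)
    for _ in range(iterations):
        # 1. Data association: pair every scan point with its nearest map point.
        matched = [nearest(p, ref_map) for p in current]
        # 2. Best rigid transform for these tentative pairs.
        theta, tx, ty = best_rigid_transform(current, matched)
        current = transform_points(theta, tx, ty, current)
        # 3. Compose with the running estimate: x -> R_step (R_t x + t_t) + t_step.
        c, s = math.cos(theta), math.sin(theta)
        tx_t, ty_t = c * tx_t - s * ty_t + tx, s * tx_t + c * ty_t + ty
        theta_t += theta
        if abs(theta) < 1e-9 and math.hypot(tx, ty) < 1e-9:
            break  # converged: the last step barely moved the scan
    return theta_t, tx_t, ty_t


# Synthetic L-shaped "scan" (e.g. a wall corner) and a map offset by a known pose.
scan = [(float(x), 0.0) for x in range(6)] + [(0.0, float(y)) for y in range(1, 4)]
true_pose = (0.05, 0.10, -0.05)
ref_map = transform_points(*true_pose, scan)
estimate = icp(scan, ref_map)
print("estimated pose (theta, tx, ty):", [round(v, 4) for v in estimate])
```

Because the synthetic map is only slightly offset from the scan, nearest-neighbour matching pairs every point correctly on the first pass and ICP recovers the generating pose. With larger initial offsets ICP can lock onto wrong correspondences, which is why it is usually seeded with an odometry or IMU prediction.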

The Decision-Making Labyrinth: Intelligent Path Planning and Obstacle Avoidance

Once the drone understands its environment, it needs to make intelligent decisions about how to navigate through it. This involves sophisticated path planning algorithms and robust obstacle avoidance systems, the digital equivalent of a sober captain making critical choices. The “drunken sailor” here represents a system that might make impulsive or ill-advised maneuvers.

Dynamic Path Planning

- Global Path Planning: This involves determining an overall route from a starting point to a destination, considering known environmental constraints and objectives. Algorithms like A* (A-star) or Dijkstra’s algorithm are commonly used for this purpose.

- Local Path Planning: Local planners make real-time adjustments to the global path to avoid newly detected obstacles or other dynamic elements in the environment. Techniques such as the Dynamic Window Approach (DWA) or the Vector Field Histogram (VFH) are used to produce smooth, safe trajectories.

- Model Predictive Control (MPC): MPC is a powerful control strategy that uses a dynamic model of the drone and its environment to predict future behavior and optimize control inputs over a finite horizon. Only the first input of the optimized sequence is applied before the problem is solved again with fresh measurements, which allows proactive adjustments and smoother trajectories and reduces the chance of sudden, erratic movements.
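As a concrete illustration of global planning, the following sketch runs A* on a small 2D occupancy grid, assuming 4-connected moves with unit cost and a Manhattan-distance heuristic (which never overestimates under those assumptions, so the returned path is a shortest one). The grid encoding (`#` for blocked cells) and the function name are illustrative; a real planner would typically work in 3D and weigh factors such as altitude limits, no-fly zones, or energy.

```python
import heapq
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]  # (row, column)


def a_star(grid: List[str], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Shortest 4-connected path on an occupancy grid ('#' = blocked), or None."""
    rows, cols = len(grid), len(grid[0])

    def h(cell: Cell) -> int:  # Manhattan distance: admissible for unit-cost 4-moves
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]          # entries are (f = g + h, g, cell)
    came_from: Dict[Cell, Cell] = {}
    g_cost: Dict[Cell, int] = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:                        # walk parent links back to the start
            path = [cell]
            while cell in came_from:
                cell = came_from[cell]
                path.append(cell)
            return path[::-1]
        if g > g_cost[cell]:
            continue                            # stale entry superseded by a cheaper one
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] != "#":
                ng = g + 1
                if ng < g_cost.get(nxt, float("inf")):
                    g_cost[nxt] = ng
                    came_from[nxt] = cell
                    heapq.heappush(open_heap, (ng + h(nxt), ng, nxt))
    return None                                 # goal unreachable


grid = [
    "..........",
    "########..",   # a wall with a gap on the right
    "..........",
]
path = a_star(grid, (0, 0), (2, 0))
print(len(path) - 1, "moves:", path)
```

Setting the heuristic to zero turns this into Dijkstra's algorithm, which explores more cells but needs no goal-distance estimate.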

Advanced Obstacle Avoidance

- Reactive Obstacle Avoidance: These systems respond to detected obstacles in real time by triggering immediate avoidance maneuvers. While effective, they can produce jerky movements and may not find the most efficient path.

- Proactive Obstacle Avoidance: This approach uses predictive models of the environment, including where moving obstacles are likely to be, to plan avoidance maneuvers in advance. The result is smoother, more efficient, and less disruptive flight paths, much like a seasoned pilot anticipating potential air traffic.

- Learning-Based Avoidance: Emerging techniques train neural networks on large datasets of real or simulated flight scenarios to learn avoidance strategies. This can yield nuanced, adaptive behaviors for complex situations that are hard to hand-code, although performance in genuinely unforeseen conditions depends on how well the training data covers them. The aim is a drone that not only avoids a wall but can also weave around a flock of birds.
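One simple way to make avoidance proactive is to predict where an obstacle will be rather than react to where it is now. The sketch below assumes that both the drone and the obstacle hold constant velocity, computes the time and distance of closest approach, and flags a conflict if the predicted miss distance falls below a safety radius within a look-ahead horizon. The function names and the 3 m / 5 s thresholds are hypothetical placeholders.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def closest_approach(rel_pos: Vec3, rel_vel: Vec3) -> Tuple[float, float]:
    """Time (s) and distance (m) of closest approach under constant velocities.

    rel_pos and rel_vel are the obstacle's position and velocity minus the drone's.
    """
    vv = sum(v * v for v in rel_vel)
    if vv < 1e-12:  # no relative motion: the separation never changes
        return 0.0, math.sqrt(sum(p * p for p in rel_pos))
    # Minimise |p + v t|^2  ->  t* = -(p . v) / (v . v)
    t = -sum(p * v for p, v in zip(rel_pos, rel_vel)) / vv
    t = max(t, 0.0)  # closest approach already in the past: it is "now"
    d = math.sqrt(sum((p + v * t) ** 2 for p, v in zip(rel_pos, rel_vel)))
    return t, d


def needs_avoidance(rel_pos: Vec3, rel_vel: Vec3,
                    safety_radius: float = 3.0, horizon: float = 5.0) -> bool:
    """Hypothetical trigger: plan a maneuver early if a conflict is predicted in time."""
    t, d = closest_approach(rel_pos, rel_vel)
    return t <= horizon and d < safety_radius


for label, pos, vel in [
    ("head-on, 1 m lateral offset", (20.0, 1.0, 0.0), (-5.0, 0.0, 0.0)),
    ("moving away", (20.0, 1.0, 0.0), (5.0, 0.0, 0.0)),
]:
    t, d = closest_approach(pos, vel)
    print(f"{label}: closest approach {d:.1f} m in {t:.1f} s, avoid={needs_avoidance(pos, vel)}")
```

In the head-on case the obstacle is closing at 5 m/s and would pass 1 m from the drone in 4 s, so the check fires while the obstacle is still 20 m away, leaving time for a smooth maneuver.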

Beyond Simple Navigation: Mission Autonomy and Intelligent Behavior

The ultimate goal of autonomous flight is to empower drones to perform complex missions without continuous human oversight. This requires an even higher level of intelligence, encompassing mission planning, task execution, and adaptive learning. The “drunken sailor” in this scenario is a drone that gets lost or fails to complete its assigned task.

Mission Planning and Execution

- Task Decomposition: Complex missions are broken down into a series of smaller, manageable sub-tasks. For example, an inspection mission might consist of surveying an area, identifying anomalies, and collecting relevant data.

- Contingency Planning: Autonomous systems must be programmed with contingency plans for unexpected events such as sensor failures, communication loss, or changes in mission objectives. These plans let the drone recover gracefully from disruptions and continue its mission where possible, or fall back to a safe behavior such as hovering, returning to its launch point, or landing when continuing is unsafe.

- Goal-Directed Behavior: Drones equipped with artificial intelligence can be programmed to pursue specific goals, dynamically adjusting their behavior to achieve those objectives. This could involve finding a specific object, monitoring a particular area, or responding to environmental triggers.
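Task decomposition and contingency handling are often expressed as a state machine. The sketch below assumes a mission already decomposed into named sub-tasks (mirroring the inspection example above) and hypothetical contingency rules: land if the battery is critically low, return home on a low battery or a lost link, and hold position while GNSS is unavailable. The mode names, thresholds, and rule priorities are illustrative, not a standard.

```python
from enum import Enum, auto
from typing import List


class Mode(Enum):
    EXECUTING = auto()
    HOLD = auto()            # pause the mission and hover until positioning recovers
    RETURN_TO_HOME = auto()
    LAND = auto()
    DONE = auto()


class MissionExecutor:
    """Steps through decomposed sub-tasks and applies simple contingency rules.

    Thresholds, rule priorities and task names are hypothetical examples.
    """

    def __init__(self, tasks: List[str], reserve_battery: float = 0.25):
        self.pending = list(tasks)
        self.completed: List[str] = []
        self.mode = Mode.EXECUTING
        self.reserve = reserve_battery  # assumed fraction of charge needed to fly home

    def step(self, battery: float, gnss_ok: bool, link_ok: bool,
             task_finished: bool) -> Mode:
        if self.mode in (Mode.LAND, Mode.DONE):
            return self.mode                    # terminal states in this sketch
        if battery < self.reserve / 2:
            self.mode = Mode.LAND               # too little charge to get home
        elif self.mode == Mode.RETURN_TO_HOME:
            pass                                # stay committed to returning
        elif battery < self.reserve or not link_ok:
            self.mode = Mode.RETURN_TO_HOME
        elif not gnss_ok:
            self.mode = Mode.HOLD               # e.g. hover on visual odometry
        else:
            self.mode = Mode.EXECUTING          # nominal, or resuming after HOLD
            if task_finished and self.pending:
                self.completed.append(self.pending.pop(0))
            if not self.pending:
                self.mode = Mode.DONE
        return self.mode


mission = MissionExecutor(["survey_area", "identify_anomalies", "collect_data"])
events = [  # (battery, gnss_ok, link_ok, task_finished)
    (0.90, True, True, True),
    (0.80, False, True, False),   # GNSS dropout
    (0.75, True, True, True),     # GNSS back, second sub-task done
    (0.20, True, True, False),    # battery below the return reserve
    (0.15, True, True, True),
]
modes = [mission.step(*event) for event in events]
print([m.name for m in modes], "completed:", mission.completed)
```

Checking the most conservative conditions first keeps the priority order explicit: a critically low battery overrides everything, and a committed return is not interrupted by a later task-completion signal.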

AI-Powered Decision-Making and Learning

- Reinforcement Learning (RL): RL algorithms allow drones to learn optimal behaviors through trial and error in simulated or real-world environments. By receiving rewards for desired actions and penalties for undesirable ones, the drone can gradually refine its decision-making processes.

- Computer Vision for Semantic Understanding: Advanced computer vision techniques allow drones not only to detect objects but also to understand their semantic meaning. This enables them to identify specific types of infrastructure for inspection, locate specific individuals in a search and rescue operation, or differentiate between various types of terrain for mapping.

- Human-Robot Interaction (HRI) for Collaborative Autonomy: While full autonomy is the goal, many applications will benefit from human-robot collaboration. This involves designing systems in which drones clearly communicate their intentions to human operators and accept high-level commands or guidance, creating a partnership rather than complete delegation. In terms of the analogy, this is a competent crew that can still steer the ship when one member is indisposed.
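To show the reinforcement-learning loop in miniature, the sketch below runs tabular Q-learning on a hypothetical one-dimensional corridor: the agent starts in cell 0, earns +10 for reaching the goal cell, and pays -1 for every other move. The environment, rewards, and hyperparameters are assumptions for illustration; drone applications typically train in high-fidelity simulators with neural-network function approximators (deep RL) rather than a lookup table.

```python
import random
from typing import List, Tuple

N_STATES = 6            # corridor cells 0..5 (hypothetical toy task)
GOAL = N_STATES - 1     # reaching cell 5 ends the episode
ACTIONS = (-1, +1)      # index 0 = move left, index 1 = move right


def env_step(state: int, action: int) -> Tuple[int, float, bool]:
    """Deterministic toy environment with walls at both ends."""
    nxt = min(max(state + action, 0), N_STATES - 1)
    if nxt == GOAL:
        return nxt, 10.0, True
    return nxt, -1.0, False


def train_q_learning(episodes: int = 500, alpha: float = 0.5, gamma: float = 0.9,
                     epsilon: float = 0.2, seed: int = 0) -> List[List[float]]:
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(100):                             # cap the episode length
            if rng.random() < epsilon:
                a = rng.randrange(2)                     # explore: random action
            else:
                a = max(range(2), key=lambda i: q[state][i])  # exploit: greedy action
            nxt, reward, done = env_step(state, ACTIONS[a])
            # Q-learning target: reward plus discounted value of the best next action
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][a] += alpha * (target - q[state][a])
            state = nxt
            if done:
                break
    return q


q_table = train_q_learning()
policy = ["right" if q[1] > q[0] else "left" for q in q_table[:GOAL]]
print(policy)
```

After training, the greedy policy moves right from every cell toward the goal. The single update line is the core of Q-learning: it nudges Q(s, a) toward the observed reward plus the discounted value of the best action available in the next state.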

The pursuit of truly autonomous flight is a testament to human ingenuity and our relentless drive to push the boundaries of what’s possible. By addressing the challenges of sensor fusion, intelligent path planning, and mission autonomy, we are gradually transforming drones from remote-controlled toys into sophisticated, intelligent agents capable of navigating the complexities of our world with unprecedented precision and resilience. The “drunken sailor” of the early days of unmanned systems is being replaced by a crew of highly capable, digitally intelligent navigators, ready to undertake missions once deemed impossible.