In precision agriculture and environmental monitoring, the question of which organelle photosynthesis takes place in is not merely a biological curiosity; it underpins much of the remote sensing now carried out from unmanned aerial vehicles (UAVs). The answer is the chloroplast. For the drone pilot or data analyst, understanding the structure and function of the chloroplast is essential for using multispectral and hyperspectral imaging to assess plant health, optimize crop yields, and monitor ecosystem vitality from the air.

The Biological Foundation: Understanding the Chloroplast through Remote Sensing

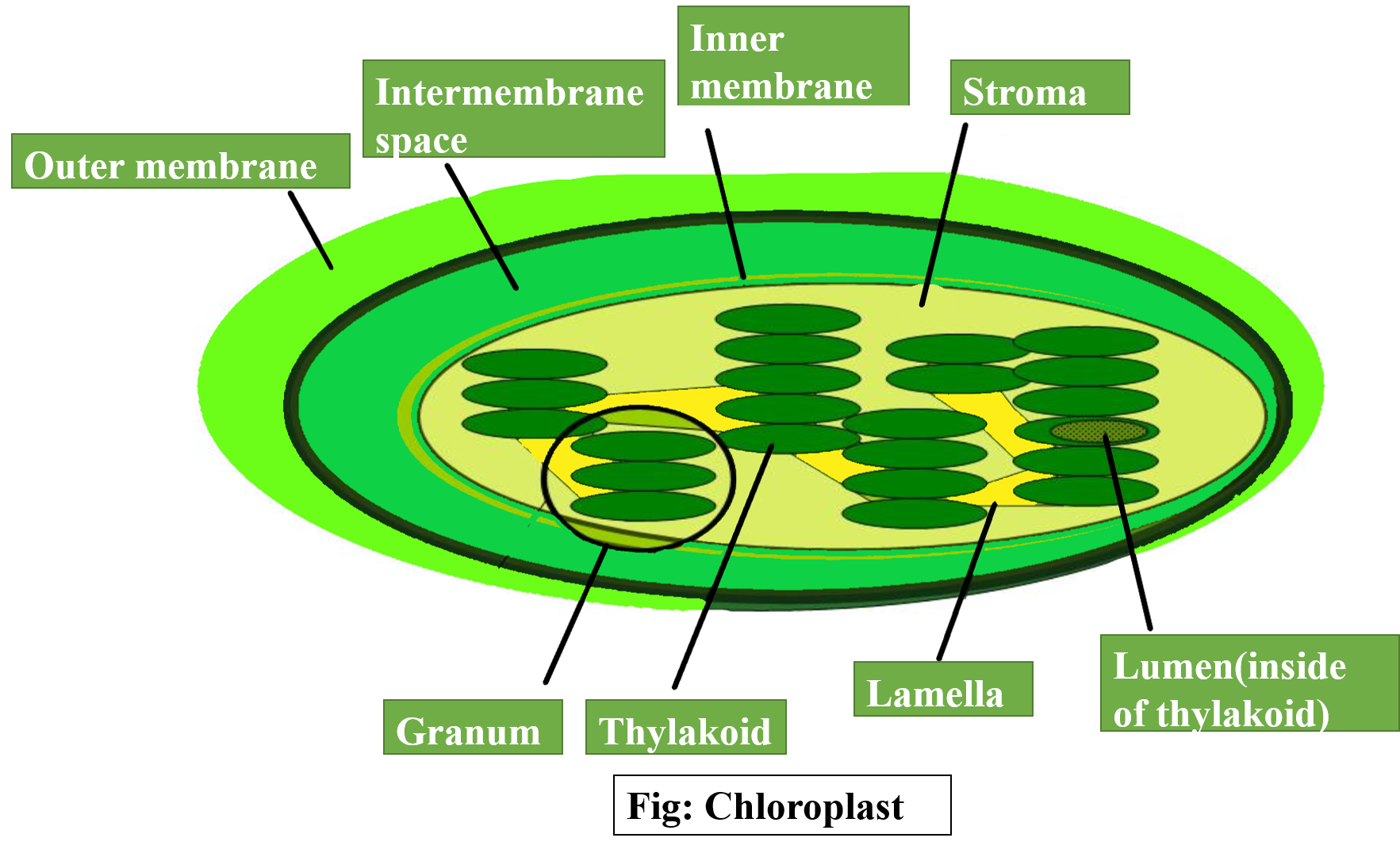

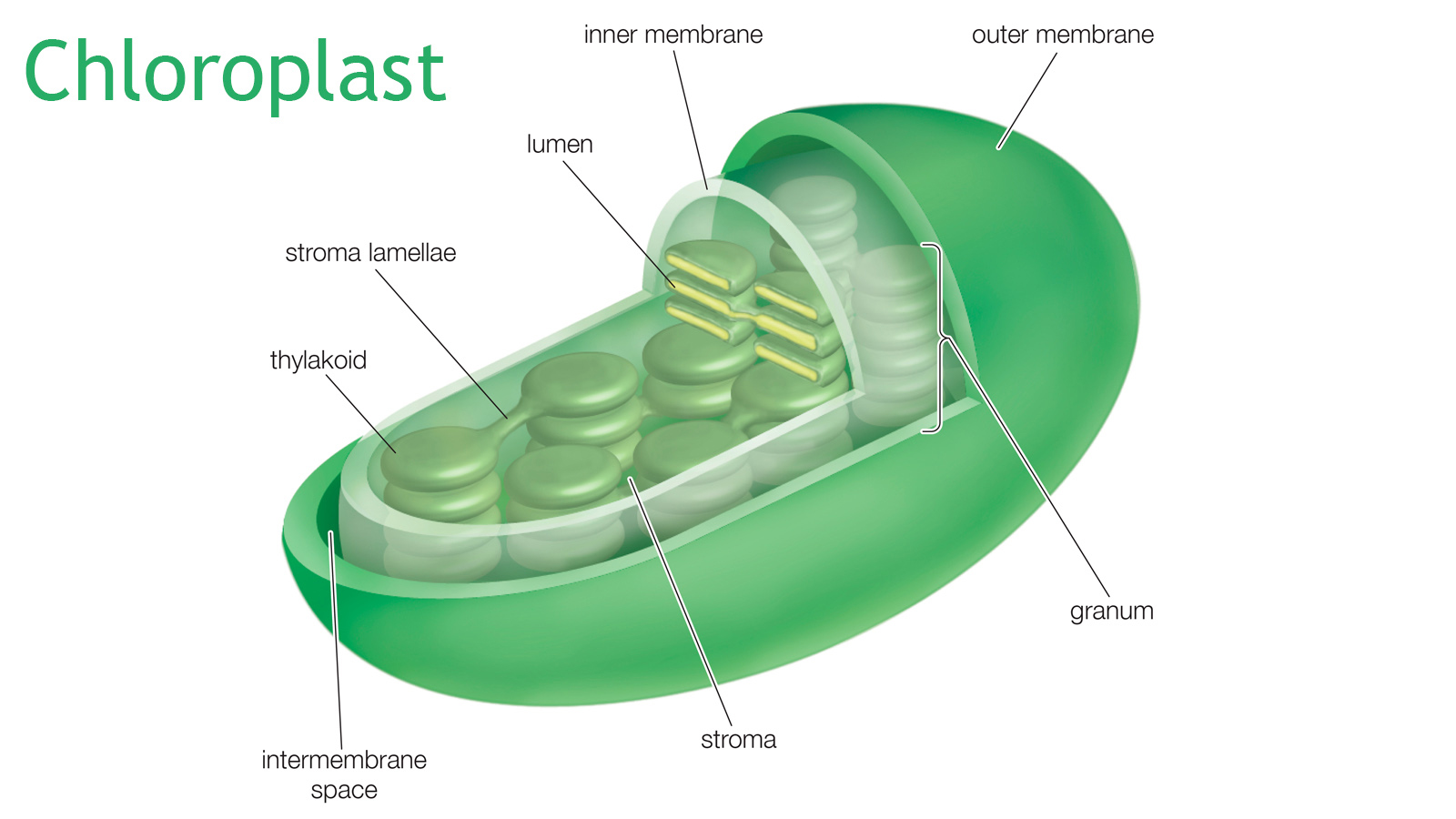

The chloroplast is the specialized organelle within plant cells where photosynthesis occurs. For those operating in the tech and innovation sector of the drone industry, the chloroplast acts as the primary “signal generator” for remote sensing equipment. Within these organelles, chlorophyll pigments absorb sunlight mainly in the blue and red regions of the spectrum and reflect more of the green light, which is why plants appear green to the human eye.

The Role of Chlorophyll and Light Reflectance

Chlorophyll a and b, housed within the thylakoid membranes of the chloroplast, are the drivers of energy conversion. However, for drone-based mapping, what the plant doesn’t use is just as important as what it does. Healthy chloroplasts absorb a vast majority of the visible red light they receive to power the synthesis of glucose. Simultaneously, the cellular structure of the leaf, particularly the spongy mesophyll, strongly reflects Near-Infrared (NIR) light. By comparing the absorption of red light (the power source for the chloroplast) with the reflection of NIR light, drone-mounted sensors can calculate the vigor of the photosynthetic process.

The Red Edge and Plant Stress

One of the most innovative areas in drone-based remote sensing is the “Red Edge” transition. This is the region of the electromagnetic spectrum between visible red light and invisible near-infrared light, roughly 680 to 750 nm, where leaf reflectance rises steeply. When chloroplasts begin to fail due to water stress, nutrient deficiency, or disease, chlorophyll content drops and the position and slope of this Red Edge shift, typically toward shorter wavelengths. Drone sensors cannot resolve individual organelles, but narrow-band filters capture the aggregate spectral change across the canopy, providing an early warning that is invisible to the naked eye but clear in multispectral imagery.
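As a rough illustration of how a Red Edge shift can be quantified, the sketch below estimates the red edge position as the wavelength of steepest reflectance rise. It assumes a finely sampled spectrum (as from a hyperspectral sensor); the function name `red_edge_position`, the 680 to 750 nm window, and the synthetic sigmoid spectra are illustrative assumptions, not the method of any particular vendor.

```python
import numpy as np

def red_edge_position(wavelengths_nm, reflectance, lo=680.0, hi=750.0):
    """Return the wavelength (nm) of maximum dR/d(lambda) inside [lo, hi]."""
    wl = np.asarray(wavelengths_nm, dtype=float)
    refl = np.asarray(reflectance, dtype=float)
    slope = np.gradient(refl, wl)              # first derivative of the spectrum
    window = (wl >= lo) & (wl <= hi)
    if not window.any():
        raise ValueError("no samples inside the red-edge window")
    # Ignore slopes outside the window when searching for the maximum.
    idx = np.argmax(np.where(window, slope, -np.inf))
    return float(wl[idx])

# Synthetic spectra (assumed shape): low red reflectance rising to high NIR.
wl = np.arange(650.0, 801.0, 1.0)

def synthetic_spectrum(center_nm):
    return 0.05 + 0.45 / (1.0 + np.exp(-(wl - center_nm) / 8.0))

healthy_rep = red_edge_position(wl, synthetic_spectrum(720.0))
stressed_rep = red_edge_position(wl, synthetic_spectrum(705.0))
print(healthy_rep, stressed_rep)
```

With only a handful of multispectral bands, the derivative cannot be computed this way, and an index such as NDRE is usually used instead.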

Advanced Sensor Integration: Measuring the Invisible

To capture the activity occurring within the chloroplast, drones must be equipped with specialized imaging payloads. Standard RGB (Red, Green, Blue) cameras are insufficient for deep analytical work because they do not capture the NIR or Red Edge bands necessary to quantify photosynthetic efficiency.

Multispectral vs. Hyperspectral Payloads

The drone industry has seen a massive leap in sensor miniaturization. Multispectral sensors, such as those produced by MicaSense or DJI, typically capture 5 to 10 discrete bands of light. These bands are chosen to target the wavelengths that correlate with chlorophyll absorption.

Hyperspectral imaging takes this a step further, capturing hundreds of narrow, contiguous bands. This allows for “chemical imaging,” where analysts can distinguish specific pigments within the chloroplast and estimate properties such as leaf nitrogen and protein content from their spectral signatures. While hyperspectral sensors were once restricted to satellites or large manned aircraft, new lightweight versions are now being integrated into professional-grade UAVs, allowing for unprecedented granularity in agricultural mapping.

Active vs. Passive Sensing in Aerial Mapping

Most drone-based remote sensing is passive, meaning it relies on sunlight reflecting off the plant canopy. Active sensing, by contrast, supplies its own illumination, as with LiDAR or laser-induced fluorescence. One frontier that remains passive but goes beyond reflectance is Solar-Induced Fluorescence (SIF). When chloroplasts absorb light, a small fraction of that energy is re-emitted as a faint glow in the red to near-infrared range. This fluorescence is a closer proxy for the actual rate of photosynthesis than reflectance-based indices. Detecting SIF from a drone requires sensors with very high spectral resolution and sophisticated noise-reduction algorithms, because the signal is tiny compared with reflected sunlight, and it represents the cutting edge of current aerial imaging technology.
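To make the idea of separating fluorescence from reflected sunlight concrete, here is a minimal sketch of the Fraunhofer Line Discrimination (FLD) principle, a technique not named in the text above but widely used for SIF retrieval. It assumes that measured radiance is reflectance times irradiance plus fluorescence, and that reflectance and fluorescence are the same just inside and just outside a dark absorption line. The function name `sif_fld` and the numbers are illustrative.

```python
def sif_fld(e_in, e_out, l_in, l_out):
    """Estimate fluorescence with the basic FLD formula.

    e_in, e_out: downwelling irradiance inside/outside the absorption line,
                 expressed in radiance-equivalent units (assumption).
    l_in, l_out: upwelling canopy radiance inside/outside the line.
    Model assumed: L = r * E + F, with r and F equal at both wavelengths.
    """
    denom = e_out - e_in
    if denom == 0:
        raise ValueError("irradiance must differ inside and outside the line")
    return (e_out * l_in - e_in * l_out) / denom

# Synthetic check: build radiances from a known reflectance and fluorescence.
r_true, f_true = 0.4, 1.5
e_in, e_out = 20.0, 100.0
l_in = r_true * e_in + f_true     # 9.5
l_out = r_true * e_out + f_true   # 41.5
f_est = sif_fld(e_in, e_out, l_in, l_out)
print(f_est)
```

The retrieval only works because sunlight is strongly dimmed inside the absorption line while the plant's own emission is not, which is why spectral resolution matters more than spatial resolution here.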

Mapping and Analytics: Translating Photosynthesis into Data

Collecting spectral data is only the first half of the equation. The true innovation lies in how drone flight software and post-processing platforms translate the activity of the chloroplast into actionable maps.

The Normalized Difference Vegetation Index (NDVI)

The most common output of a photosynthetic drone survey is the NDVI map. By applying the formula (NIR - Red) / (NIR + Red) to each pixel, software produces a color-coded map of values between -1 and 1 that indicates where green, photosynthetically active vegetation is densest. In these maps, high values represent dense, healthy canopy with strong chloroplast activity, while low values indicate bare soil or areas where plants are struggling or dormant. This allows farmers and land managers to apply fertilizers or water only where the data shows a physiological need, reducing waste and environmental impact.
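The NDVI formula above can be applied per pixel in a few lines. The following is a minimal sketch using NumPy, assuming the NIR and red bands are already aligned reflectance arrays of the same shape; the function name `ndvi` and the sample values are illustrative.

```python
import numpy as np

def ndvi(nir, red):
    """Compute (NIR - Red) / (NIR + Red) per pixel; NaN where both bands are 0."""
    nir = np.asarray(nir, dtype=float)
    red = np.asarray(red, dtype=float)
    total = nir + red
    out = np.full(total.shape, np.nan)
    # Divide only where the denominator is non-zero; other pixels stay NaN.
    np.divide(nir - red, total, out=out, where=total != 0)
    return out

# Illustrative reflectance values: dense canopy, moderate canopy,
# sparse cover, and a no-data pixel.
nir_band = np.array([[0.50, 0.40], [0.30, 0.00]])
red_band = np.array([[0.05, 0.10], [0.25, 0.00]])
values = ndvi(nir_band, red_band)
print(values)
```

Masking the zero-denominator pixels as NaN keeps no-data areas from being mistaken for low-vigor vegetation when the map is color-coded.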

Beyond NDVI: NDRE and SAVI

As drone technology matures, we are moving beyond simple NDVI. The Normalized Difference Red Edge (NDRE) index is more sensitive to chlorophyll content in permanent crops or dense canopies where traditional NDVI might “saturate.” Additionally, the Soil Adjusted Vegetation Index (SAVI) helps filter out “noise” from the ground in early-stage crops where the chloroplast density is low. These indices are calculated through autonomous processing pipelines, where raw imagery is uploaded to the cloud, stitched into orthomosaics, and analyzed by AI-driven algorithms.
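For comparison with NDVI, the sketch below computes NDRE and SAVI from band reflectances. NDRE uses the same normalized-difference form with the Red Edge band in place of red, and SAVI adds a soil brightness correction factor L, set here to the commonly used 0.5. Function names and sample values are illustrative assumptions.

```python
import numpy as np

def ndre(nir, red_edge):
    """Normalized Difference Red Edge: (NIR - RedEdge) / (NIR + RedEdge)."""
    nir = np.asarray(nir, dtype=float)
    red_edge = np.asarray(red_edge, dtype=float)
    total = nir + red_edge
    out = np.full(total.shape, np.nan)
    np.divide(nir - red_edge, total, out=out, where=total != 0)
    return out

def savi(nir, red, soil_factor=0.5):
    """Soil Adjusted Vegetation Index: (1 + L) * (NIR - Red) / (NIR + Red + L)."""
    nir = np.asarray(nir, dtype=float)
    red = np.asarray(red, dtype=float)
    # With L > 0 and non-negative reflectances the denominator is never zero.
    return (1.0 + soil_factor) * (nir - red) / (nir + red + soil_factor)

ndre_value = float(ndre(0.5, 0.3))   # (0.5 - 0.3) / (0.5 + 0.3)
savi_value = float(savi(0.4, 0.1))   # 1.5 * 0.3 / 1.0
print(ndre_value, savi_value)
```

Setting `soil_factor` to 0 reduces SAVI to NDVI, which is one way to see that the index only differs where soil background matters.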

AI and Machine Learning in Feature Extraction

Innovation in the drone space is increasingly dominated by Artificial Intelligence. Modern mapping platforms can now use machine learning to identify individual plants within a field and report on the photosynthetic health of each one. By training models on thousands of images of chloroplast-driven spectral signatures, these systems can automatically detect the onset of specific blights or pest infestations before they spread, essentially performing a “medical checkup” on a forest or farm from 400 feet in the air.

Innovation in Autonomous Flight and AI Analysis

The ability to monitor the chloroplast’s function at scale depends heavily on the evolution of drone flight technology. Precision is paramount when mapping photosynthetic activity, as even a slight misalignment in data can lead to incorrect analysis.

RTK and PPK for Precision Mapping

Real-Time Kinematic (RTK) and Post-Processed Kinematic (PPK) systems have revolutionized how we track drone position. For an accurate map of photosynthesis, every pixel must be georeferenced to within centimeters. This ensures that when a drone identifies a group of trees with failing chloroplasts, a ground team or an autonomous tractor can navigate to that exact spot. This level of precision is the backbone of “Variable Rate Application,” where drones work in tandem with ground machinery to optimize plant health.
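Once an orthomosaic is georeferenced, turning a flagged pixel into a ground coordinate is simple arithmetic. The sketch below assumes a north-up raster in a projected coordinate system (for example, UTM metres) with square pixels; the function name `pixel_to_map` and the origin and pixel size are hypothetical values.

```python
def pixel_to_map(col, row, origin_x, origin_y, pixel_size):
    """Return the map coordinates of a pixel's center.

    origin_x, origin_y: coordinates of the raster's top-left corner.
    pixel_size: ground size of one pixel, in map units.
    Assumes a north-up image, so y decreases as the row index increases.
    """
    x = origin_x + (col + 0.5) * pixel_size
    y = origin_y - (row + 0.5) * pixel_size
    return x, y

# Hypothetical orthomosaic: 5 cm pixels, top-left corner at (500000, 4200000).
x, y = pixel_to_map(col=200, row=100,
                    origin_x=500000.0, origin_y=4200000.0,
                    pixel_size=0.05)
print(x, y)
```

The arithmetic is exact, so the coordinate a ground team receives is only as good as the origin and scale of the map, which is where RTK or PPK positioning earns its keep.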

Autonomous Flight Paths and Overlap

To generate a reliable spectral map, drones utilize autonomous flight planning software. These systems calculate “lawnmower” patterns that ensure high levels of frontal and side overlap (often 80% or more). This overlap is necessary for photogrammetry software to stitch the images into an orthomosaic and reconstruct a 3D model of the canopy. Because the chloroplast-rich leaves are viewed from multiple angles, the software can also help account for “bidirectional reflectance distribution function” (BRDF) effects, that is, the way the apparent reflectance of the plant surface changes with the angle of the sun and the viewing angle of the camera.
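The relationship between overlap, altitude, and flight-line spacing can be sketched with basic pinhole-camera geometry. The example assumes a nadir-pointing camera over flat ground with the sensor's long side across the flight direction; the function name `survey_geometry` and the camera parameters are hypothetical and do not describe any specific product.

```python
def survey_geometry(altitude_m, focal_length_mm, sensor_width_mm,
                    sensor_height_mm, image_width_px,
                    side_overlap=0.8, front_overlap=0.8):
    """Derive ground footprint, GSD, and spacing for a lawnmower pattern."""
    if not (0 <= side_overlap < 1 and 0 <= front_overlap < 1):
        raise ValueError("overlap must be in [0, 1)")
    # Similar triangles: ground size / altitude = sensor size / focal length.
    footprint_w = sensor_width_mm * altitude_m / focal_length_mm
    footprint_h = sensor_height_mm * altitude_m / focal_length_mm
    return {
        "gsd_m": footprint_w / image_width_px,            # ground size of one pixel
        "footprint_m": (footprint_w, footprint_h),
        "line_spacing_m": footprint_w * (1.0 - side_overlap),
        "trigger_distance_m": footprint_h * (1.0 - front_overlap),
    }

# Hypothetical camera: 4.8 x 3.6 mm sensor, 6 mm lens, 1280 px wide, at 100 m.
plan = survey_geometry(100.0, 6.0, 4.8, 3.6, 1280)
print(plan)
```

With these assumed numbers, 80% overlap means adjacent flight lines sit only one fifth of a footprint apart, which is why high-overlap multispectral surveys take noticeably longer to fly than simple RGB mapping.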

The Role of Edge Computing

The next frontier in drone innovation is “Edge Computing.” Rather than waiting to land and upload data to a server, new drones are being equipped with onboard processors capable of calculating vegetation indices in real-time. This allows for immediate decision-making. For example, a drone could identify a zone of low photosynthetic activity and immediately deploy a high-resolution 4K gimbal camera to take “scouting” photos for closer inspection, all without human intervention.
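The kind of onboard decision described here can be as simple as averaging an index over tiles and flagging the weak ones. The following sketch assumes an NDVI array is already available in memory; the function name `low_vigor_tiles`, the tile size, and the 0.4 threshold are illustrative assumptions rather than an actual flight-controller API.

```python
import numpy as np

def low_vigor_tiles(ndvi_map, tile=2, threshold=0.4):
    """Return (tile_row, tile_col) indices whose mean NDVI is below threshold."""
    ndvi_map = np.asarray(ndvi_map, dtype=float)
    rows, cols = ndvi_map.shape
    flagged = []
    # Step through complete tiles only; partial tiles at the edges are skipped.
    for r in range(0, rows - rows % tile, tile):
        for c in range(0, cols - cols % tile, tile):
            block = ndvi_map[r:r + tile, c:c + tile]
            if np.isnan(block).all():
                continue                      # no valid data in this tile
            if np.nanmean(block) < threshold:
                flagged.append((r // tile, c // tile))
    return flagged

# Illustrative 4 x 4 NDVI map with one weak corner.
ndvi_map = np.array([
    [0.8, 0.8, 0.7, 0.7],
    [0.8, 0.8, 0.7, 0.7],
    [0.6, 0.6, 0.2, 0.3],
    [0.6, 0.6, 0.3, 0.2],
])
flagged = low_vigor_tiles(ndvi_map)
print(flagged)
```

Each flagged tile could then be converted to a ground coordinate and queued as a waypoint for a closer scouting pass.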

The Future of Remote Sensing and Environmental Monitoring

As we look toward the future, the study of the organelle where photosynthesis takes place will continue to drive drone innovation. We are moving toward a world of “persistent surveillance,” where autonomous drone nests (drones-in-a-box) launch daily to monitor the photosynthetic output of our planet’s green spaces.

Carbon Sequestration and Climate Mapping

With the global focus on climate change, drones are becoming important tools for estimating carbon sequestration. Since the chloroplast is responsible for pulling CO2 out of the atmosphere, measurements of photosynthetic activity are a key input to models of how much carbon a forest is taking up, alongside estimates of biomass and respiration. This data is increasingly used in the carbon credit market, where verifiable, drone-derived data can complement or extend manual ground estimates.

Scaling for Global Food Security

As the global population grows, the efficiency of the chloroplast must be maximized. Drones represent the most scalable technology for monitoring global food security. By providing high-resolution, high-frequency data on photosynthetic health, drones allow for a level of agricultural management that was previously impossible. From detecting nitrogen deficiencies in the corn belt to monitoring the health of palm oil plantations in Southeast Asia, the integration of biological knowledge and drone technology is creating a more resilient and efficient world.

In summary, the chloroplast is the biological engine that makes drone-based remote sensing possible. By understanding that this is the organelle where photosynthesis takes place, the tech industry has developed a suite of sensors, flight systems, and analytical tools that turn light reflection into life-saving data. The synergy between botany and robotics continues to be one of the most exciting and impactful areas of modern technological innovation.