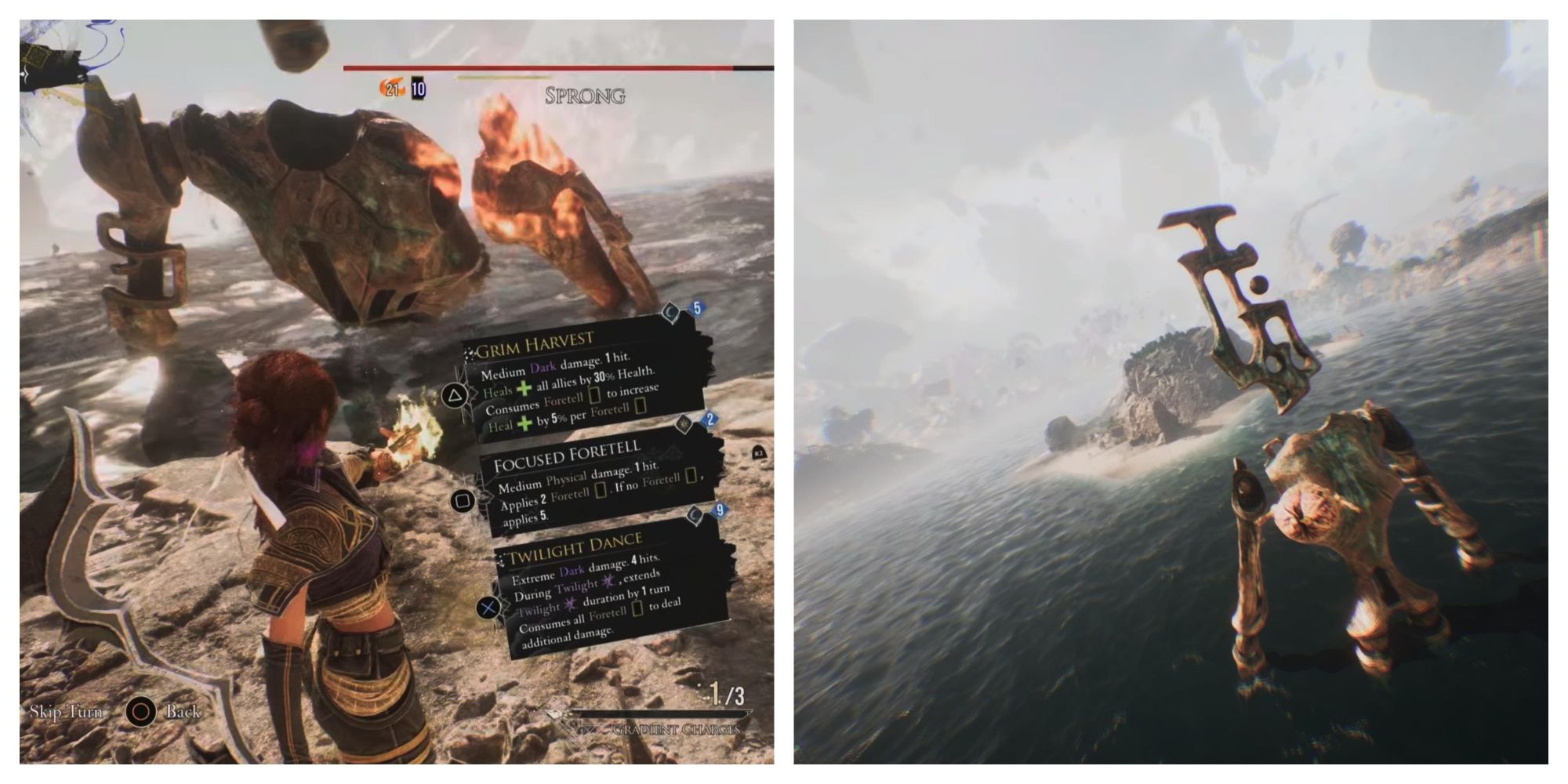

The evolution of autonomous drone operations has reached a new peak with the introduction of the Sprong Expedition 33 protocol. Often discussed in specialized tech circles as the ultimate test of unmanned aerial vehicle (UAV) endurance and intelligence, the mission represents a significant milestone in remote sensing and autonomous mapping. When professionals ask what “level” is required to beat Sprong Expedition 33, they are rarely talking about a simple difficulty setting. They mean the Technology Readiness Level (TRL), the sophistication of the AI pathfinding, and the precision of the sensor suite needed to navigate the complex “Sprong” environments: high-interference, signal-denied zones that push modern hardware and software to their limits.

To “beat,” or successfully complete, Expedition 33, an operator must field a system integrated to TRL 9 and equip it with advanced AI-driven spatial awareness. This article explores the technical architecture, the remote-sensing innovations, and the autonomous frameworks needed to complete the most demanding drone expedition to date.

Understanding the Sprong Expedition 33 Framework

The Sprong Expedition series originated as a standardized testing environment for drones designed for subterranean and high-altitude mapping. Expedition 33 is widely considered the “breakout” mission in which GPS-reliant flight becomes obsolete. Here, the “Sprong” factor refers to localized magnetic anomalies, which corrupt compass headings, and structural density, which blocks satellite signals; together they render traditional navigation systems useless.

The Genesis of Autonomous Mapping Missions

Before delving into the levels required for success, one must understand the shift from manual flight to total autonomy. Early expeditions relied on FPV (First Person View) pilots using low-latency links. However, Expedition 33 introduces a “blackout” phase where the drone must operate entirely on its own internal logic. This shift necessitated a transition from basic obstacle avoidance to complex environmental interpretation.

Autonomous mapping in these contexts isn’t just about taking pictures; it’s about real-time SLAM (Simultaneous Localization and Mapping). The drone must build a 3D model of an unknown space while simultaneously calculating its own position within that space. For Expedition 33, the level of precision required is sub-centimeter, making it a benchmark for industrial-grade tech innovation.
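
The mapping half of SLAM can be illustrated with an occupancy grid. The sketch below is a minimal illustration, not the Expedition 33 software: it assumes the drone's pose is already known (the localization half is omitted), works in 2D, and uses hypothetical log-odds constants. Each LiDAR beam lowers the occupancy estimate of the cells it passes through and raises it for the cell where it ends.

```python
import math

# Hypothetical log-odds increments; real values depend on the LiDAR's error model.
L_OCC = 0.85              # added to the cell where a beam ends (likely occupied)
L_FREE = -0.4             # added to each cell a beam passes through (likely free)
L_MIN, L_MAX = -5.0, 5.0  # clamp so cells can still change state later


def bresenham(x0, y0, x1, y1):
    """Return the integer grid cells on the segment (x0, y0) -> (x1, y1), inclusive."""
    cells = []
    dx, dy = abs(x1 - x0), -abs(y1 - y0)
    sx = 1 if x0 < x1 else -1
    sy = 1 if y0 < y1 else -1
    err = dx + dy
    while True:
        cells.append((x0, y0))
        if x0 == x1 and y0 == y1:
            break
        e2 = 2 * err
        if e2 >= dy:
            err += dy
            x0 += sx
        if e2 <= dx:
            err += dx
            y0 += sy
    return cells


def integrate_beam(grid, pose_cell, hit_cell):
    """Update a sparse log-odds grid (dict: cell -> log-odds) with one range beam.

    Assumes pose_cell is the drone's known position; estimating it is the other half of SLAM.
    """
    ray = bresenham(*pose_cell, *hit_cell)
    for cell in ray[:-1]:  # every cell before the hit was observed as free
        grid[cell] = max(L_MIN, grid.get(cell, 0.0) + L_FREE)
    grid[hit_cell] = min(L_MAX, grid.get(hit_cell, 0.0) + L_OCC)


def occupancy_probability(grid, cell):
    """Convert stored log-odds to a probability; unseen cells stay at 0.5."""
    return 1.0 - 1.0 / (1.0 + math.exp(grid.get(cell, 0.0)))
```

Storing log-odds rather than probabilities turns each update into a simple addition, and clamping keeps cells able to change state if the environment does (a moved piece of machinery, for example).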

Why the 33rd Iteration Demands Higher Tech Tiers

Previous iterations of the expedition focused on simple “Point A to Point B” navigation. Expedition 33 changes the game by introducing dynamic obstacles: moving machinery, changing light conditions, and fluctuating thermal vents. To beat this level, the UAV must possess a high “Cognitive Flight Level,” meaning the ability to keep prioritizing mission objectives (such as mapping a specific corridor) over simple survival (backing away from a wall). Without Level 4 or Level 5 autonomy on the 0-to-5 scales commonly used to grade drone autonomy, the drone is likely to fall into a repeated “failsafe” loop and fail the mission before it has properly begun.
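
One way to picture the difference between a mission-driven drone and one stuck in a failsafe loop is a simple action arbiter. The sketch below is hypothetical: the candidate actions, clearance thresholds, and weighting are illustrative assumptions rather than part of any autonomy standard. Safety acts as a hard filter, and among the safe options the drone prefers the one that advances the mission.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical thresholds, for illustration only.
HARD_CLEARANCE_M = 0.5  # candidates closer than this to an obstacle are rejected outright
SOFT_CLEARANCE_M = 2.0  # clearance beyond this earns no extra credit


@dataclass
class Candidate:
    name: str
    mission_progress: float  # 0..1, e.g. share of the target corridor this move would map
    clearance_m: float       # predicted minimum distance to any obstacle along the move


def choose_action(candidates, safety_weight=0.3) -> Optional[Candidate]:
    """Pick the safe candidate that best balances mission progress and clearance.

    Returns None when no candidate is safe; that is the point where a failsafe
    behavior (hover, return to home) should take over.
    """
    safe = [c for c in candidates if c.clearance_m >= HARD_CLEARANCE_M]
    if not safe:
        return None

    def score(c):
        margin = min(c.clearance_m, SOFT_CLEARANCE_M) / SOFT_CLEARANCE_M
        return (1 - safety_weight) * c.mission_progress + safety_weight * margin

    return max(safe, key=score)
```

Only when no candidate clears the hard limit does the function return `None`; a purely reactive system would instead retreat whenever anything came close, which is how a failsafe loop starts.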

Technological Readiness Levels (TRL) for Success

In the world of high-tech drone deployment, “beating a level” is synonymous with achieving a specific Technology Readiness Level. Sprong Expedition 33 is designed to be impossible for any system below TRL 7, with a 100% success rate achievable only at TRL 9.
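
For reference, the standard nine-level TRL scale (abbreviated from NASA's definitions) can be encoded alongside the thresholds this article describes. The `expedition_33_readiness` helper is a hypothetical illustration; treating TRL 8 like TRL 7 (supervised attempts only) is an assumption, since the article discusses only TRL 7 and TRL 9.

```python
# Nine-level Technology Readiness Level scale, abbreviated from NASA's definitions.
TRL_DESCRIPTIONS = {
    1: "Basic principles observed and reported",
    2: "Technology concept and/or application formulated",
    3: "Analytical and experimental proof of concept",
    4: "Component validation in a laboratory environment",
    5: "Component validation in a relevant environment",
    6: "System/subsystem prototype demonstration in a relevant environment",
    7: "System prototype demonstration in an operational environment",
    8: "Actual system completed and flight qualified through test and demonstration",
    9: "Actual system flight proven through successful mission operations",
}

MIN_TRL_TO_ATTEMPT = 7     # threshold stated in this article
TRL_FOR_FULL_AUTONOMY = 9  # level the article associates with unassisted success


def expedition_33_readiness(trl: int) -> str:
    """Hypothetical helper mapping a TRL to the article's readiness tiers."""
    if trl not in TRL_DESCRIPTIONS:
        raise ValueError(f"TRL must be 1-9, got {trl}")
    if trl < MIN_TRL_TO_ATTEMPT:
        return "not eligible"
    if trl < TRL_FOR_FULL_AUTONOMY:
        return "attempt with operator supervision"  # assumption: TRL 8 treated like TRL 7
    return "fully autonomous attempt"
```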

Achieving Level 7: System Prototype Demonstration

To even attempt Expedition 33, a system must reach TRL 7. At this level, the drone is no longer a laboratory experiment; it is a prototype demonstrated in an operational environment. Reaching it involves integrating solid-state LiDAR with an onboard processing unit capable of edge computing, that is, processing sensor data on the drone itself rather than relaying it to a ground station.

At TRL 7, the drone can withstand the physical rigors of the Sprong environment, but it may still struggle with high-level decisions when sensors provide conflicting data. For example, if the LiDAR registers a ghost image caused by dust or steam (a common occurrence in Expedition 33), a TRL 7 system might pause or deviate unnecessarily. “Beating” the mission at this level requires significant “babysitting” from a remote operator, which undercuts any claim to an autonomous victory.
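
A common way to suppress transient returns from dust or steam is to require an obstacle to persist across several scans before acting on it. The following is a minimal sketch of such a k-of-m persistence filter; the class name, the cell representation, and the default `k=3, m=5` are illustrative assumptions.

```python
from collections import Counter, deque


class PersistenceFilter:
    """Confirm an obstacle cell only if it appears in at least k of the last m scans.

    Hypothetical helper: k and m are tuning assumptions, not values from any real system.
    """

    def __init__(self, k=3, m=5):
        if not 1 <= k <= m:
            raise ValueError("need 1 <= k <= m")
        self.k = k
        self.history = deque(maxlen=m)  # the oldest scan drops out automatically

    def update(self, scan_cells):
        """Add one scan (an iterable of occupied cells) and return the confirmed cells."""
        self.history.append(set(scan_cells))
        counts = Counter(cell for scan in self.history for cell in scan)
        return {cell for cell, n in counts.items() if n >= self.k}
```

The trade-off is latency: a real obstacle is only confirmed after `k` scans, so `k` and the scan rate together bound how quickly the drone can react.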

The Leap to Level 9: Actual System Flight Proven

To truly master Expedition 33, the hardware and software must reach TRL 9. This means the system is “flight-proven” through successful mission operations. At this level, the drone utilizes a “Sensor Fusion” algorithm that cross-references LiDAR, ultrasonic sensors, and visual odometry to filter out noise.
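
Sensor fusion can be illustrated with range readings of the same obstacle from several sensors. The sketch below assumes each source has already been reduced to a range estimate with a known standard deviation, which is a simplification (visual odometry really estimates motion, and a flight-proven system would more likely run a Kalman-style filter). It discards readings that disagree sharply with the median, then combines the rest by inverse-variance weighting, so more precise sensors count for more.

```python
import math
import statistics


def fuse_ranges(readings, gate_m=0.5):
    """Fuse (range_m, std_dev_m) readings of one obstacle from different sensors.

    Simplifying assumption: every sensor reports a direct range with a known std dev.
    Readings more than gate_m from the (low) median are treated as noise and dropped;
    the rest are combined by inverse-variance weighting.
    Returns (fused_range_m, fused_std_dev_m).
    """
    if not readings:
        raise ValueError("no readings to fuse")
    # median_low returns an actual reading, so at least one reading always survives the gate
    median = statistics.median_low(r for r, _ in readings)
    kept = [(r, s) for r, s in readings if abs(r - median) <= gate_m]
    weights = [1.0 / (s * s) for _, s in kept]
    total = sum(weights)
    fused = sum(w * r for w, (r, _) in zip(weights, kept)) / total
    return fused, math.sqrt(1.0 / total)
```

For example, a precise LiDAR reading of 2.00 m and an ultrasonic reading of 2.10 m fuse to about 2.004 m, while a stray 3.50 m return from a steam plume is rejected by the gate.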

Innovation at Level 9 includes the use of AI “Relocalization.” If the drone loses its way in a featureless part of the Sprong corridor, it can search its internal memory for the last recognizable landmark and mathematically re-situate itself. This level of tech is what separates a successful expedition from a lost asset.
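
Relocalization can be sketched as a rigid alignment problem: once the drone recognizes landmarks whose map positions it stored earlier, it solves for the rotation and translation that best map its current observations onto the stored map. The function below is a minimal 2D illustration using the closed-form least-squares solution; it assumes the landmark correspondences (which observation is which landmark) are already known, which in practice is the hard part.

```python
import math


def relocalize(landmark_map, observed):
    """Estimate the drone pose (x, y, heading_rad) in the map frame.

    landmark_map: {landmark_id: (x, y)} stored earlier in the map frame.
    observed:     {landmark_id: (x, y)} measured now in the drone's body frame.
    Assumes IDs already match (data association solved). Solves
    map ~= R(heading) @ observed + (x, y) in the least-squares sense.
    """
    common = sorted(set(landmark_map) & set(observed))
    if len(common) < 2:
        raise ValueError("need at least two recognized landmarks")
    n = len(common)
    # Centroids of the matched landmarks in each frame.
    mx = sum(landmark_map[i][0] for i in common) / n
    my = sum(landmark_map[i][1] for i in common) / n
    ox = sum(observed[i][0] for i in common) / n
    oy = sum(observed[i][1] for i in common) / n
    # Closed-form 2D rotation from the summed cross and dot products of centered points.
    cross = dot = 0.0
    for i in common:
        px, py = observed[i][0] - ox, observed[i][1] - oy
        qx, qy = landmark_map[i][0] - mx, landmark_map[i][1] - my
        cross += px * qy - py * qx
        dot += px * qx + py * qy
    heading = math.atan2(cross, dot)
    c, s = math.cos(heading), math.sin(heading)
    # The translation maps the rotated observation centroid onto the map centroid.
    x = mx - (c * ox - s * oy)
    y = my - (s * ox + c * oy)
    return x, y, heading
```

Landmarks seen now but absent from the stored map are simply ignored, so one unfamiliar feature does not spoil the fix.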

Innovative AI and Sensor Integration

The core of the Sprong Expedition 33 challenge lies in the drone’s ability to see and think. The “level” of the camera and sensor suite is the most critical hardware variable in the mission.

LiDAR and Remote Sensing in High-Interference Zones

Traditional drone cameras are often blinded by the low-light or high-contrast conditions found in Expedition 33. To beat this level, high-definition LiDAR (Light Detection and Ranging) is mandatory. Unlike a camera, LiDAR supplies its own illumination: it emits laser pulses and times their reflections, allowing the drone to “see” in total darkness.
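
The ranging principle is simple arithmetic: a pulse travels to the surface and back, so the distance is half the round-trip time multiplied by the speed of light. The sketch below shows that calculation and the conversion of one beam into a 3D point; the function names and the azimuth/elevation convention are illustrative assumptions.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def tof_range_m(round_trip_s):
    """Distance to the reflecting surface: the pulse covers the range twice."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def beam_to_point(range_m, azimuth_rad, elevation_rad):
    """Convert one beam (range plus pointing angles) to an (x, y, z) point in the sensor frame.

    Assumed convention: azimuth measured from +x toward +y, elevation up from the x-y plane.
    """
    horizontal = range_m * math.cos(elevation_rad)
    return (
        horizontal * math.cos(azimuth_rad),
        horizontal * math.sin(azimuth_rad),
        range_m * math.sin(elevation_rad),
    )
```

The same formula shows why sub-centimeter precision is demanding: 1 cm of range corresponds to roughly 67 picoseconds of round-trip time, so the timing electronics (or averaging over many pulses) must resolve intervals that short.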

Recent innovations in “Flash LiDAR,” which illuminates the whole scene with a single pulse instead of scanning it, have allowed these sensors to become small enough for micro-drones. Mounted around the airframe, they can provide a 360-degree field of view, producing a real-time point cloud that the drone’s AI uses to navigate. In Expedition 33, the point rate, often exceeding 1 million points per second, lets the drone identify thin wires that standard obstacle-avoidance systems would miss. Transparent surfaces such as glass partitions remain difficult for LiDAR and are better caught by the ultrasonic sensors in the fusion stack.
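
At a million or more points per second, the onboard computer usually thins the cloud before planning. Voxel-grid downsampling is one standard way to do this; the sketch below replaces every group of points falling in the same cube with their centroid. The voxel size is a hypothetical parameter and a real trade-off: larger voxels save more computation but blur fine geometry such as thin wires into averaged positions.

```python
import math
from collections import defaultdict


def voxel_downsample(points, voxel_m=0.05):
    """Replace all points that fall in the same cubic voxel with their centroid.

    voxel_m (edge length in meters) is an illustrative default, not a tuned value.
    """
    buckets = defaultdict(list)
    for p in points:
        key = tuple(math.floor(coord / voxel_m) for coord in p)
        buckets[key].append(p)
    return [
        tuple(sum(axis) / len(pts) for axis in zip(*pts))
        for pts in buckets.values()
    ]
```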

AI Pathfinding: Moving Beyond Simple Obstacle Avoidance

Beating Expedition 33 requires an AI that can perform “Predictive Pathing.” Standard drones react to an obstacle when they get close to it. An Expedition 33-ready drone uses AI to predict the most efficient flight path through a maze-like structure 50 meters in advance.
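
The article does not specify which planner an Expedition 33-ready drone uses, so the sketch below substitutes A*, a standard grid-search algorithm, to show how a path through a maze can be computed over the whole known map rather than one obstacle at a time. It assumes a 2D grid, 4-connected moves of equal cost, and `#` marking occupied cells.

```python
import heapq


def astar(grid, start, goal):
    """Shortest 4-connected path on a grid of strings ('#' = occupied).

    A* is substituted here for illustration; the article does not name a planner.
    Returns the path as a list of (row, col) cells, or None if the goal is unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        # Manhattan distance never overestimates the cost of unit 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]
    came_from = {start: None}
    best_cost = {start: 0}
    while open_heap:
        _, cost, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = []
            while cell is not None:  # walk parent links back to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if cost > best_cost[cell]:
            continue  # stale heap entry; a cheaper route was already found
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] != "#":
                new_cost = cost + 1
                if new_cost < best_cost.get(nxt, float("inf")):
                    best_cost[nxt] = new_cost
                    came_from[nxt] = cell
                    heapq.heappush(open_heap, (new_cost + h(nxt), new_cost, nxt))
    return None
```

With 0.5 m cells, planning 50 meters ahead means searching a horizon of about 100 cells; because the heuristic never overestimates, A* still returns a shortest path on this grid.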

This draws on scene representations such as Neural Radiance Fields (NeRFs), which encode a scene's 3D structure and appearance learned from camera images, together with machine learning models trained on thousands of similar environments. The “innovation” here is the drone’s ability to learn on the fly: as it traverses the Sprong environment, the AI updates its navigation model and becomes more efficient with every meter traveled. Shorter, smoother paths mean less flight time per meter mapped, which reduces power consumption and lets the drone stay airborne long enough to complete the mission.

Strategic Logistics and Remote Sensing Calibration

Success in Expedition 33 isn’t just about the flight; it’s about the data. The “level” of data fidelity determines whether the expedition is a success or a failure in the eyes of the engineers.

Signal Propagation in the Sprong Environment

One of the greatest hurdles in Expedition 33 is the “Signal Shadow.” This is a phenomenon where the structural composition of the environment blocks all external radio frequencies. To beat this, innovation in “Mesh Networking” is required.

Drones designed for this level often deploy small signal repeaters as they fly, creating a “breadcrumb” trail of connectivity. This allows the drone to transmit mapping data back to the base station even when it is deep inside a signal-denied zone. The technology required to manage this network autonomously, ensuring each node is placed in an optimal position, represents a peak in remote sensing coordination.
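
Repeater placement can be sketched with the log-distance path-loss model, which predicts how quickly signal strength falls off with distance. The sketch below is a greedy illustration under stated assumptions: all link-budget numbers are hypothetical, straight-line distance stands in for the real propagation path, and the high path-loss exponent is a crude stand-in for the attenuation of the Sprong structures.

```python
import math

# Hypothetical link-budget values; real ones come from the radio datasheet and a site survey.
TX_POWER_DBM = 20.0
PATH_LOSS_AT_D0_DB = 40.0  # loss at the reference distance D0_M
D0_M = 1.0
PATH_LOSS_EXPONENT = 3.5   # values above 2 model cluttered, obstructed environments
MIN_RSSI_DBM = -85.0       # weakest signal the radio can still decode


def predicted_rssi_dbm(distance_m):
    """Log-distance path-loss model: PL(d) = PL(d0) + 10 * n * log10(d / d0)."""
    d = max(distance_m, D0_M)
    return TX_POWER_DBM - (PATH_LOSS_AT_D0_DB + 10 * PATH_LOSS_EXPONENT * math.log10(d / D0_M))


def link_ok(a, b, margin_db):
    """True if the predicted link between points a and b clears the threshold plus margin."""
    return predicted_rssi_dbm(math.dist(a, b)) >= MIN_RSSI_DBM + margin_db


def plan_repeaters(path, margin_db=5.0):
    """Return indices of path points where a repeater should be dropped.

    path[0] is the base station. Greedy rule: keep flying while the predicted link
    to the last node holds, and drop a repeater at the last point where it did.
    """
    drops = []
    anchor = 0
    for i in range(1, len(path)):
        if link_ok(path[anchor], path[i], margin_db):
            continue
        if not link_ok(path[i - 1], path[i], margin_db):
            raise ValueError(f"step {i - 1} -> {i} is too long for a single hop")
        drops.append(i - 1)
        anchor = i - 1
    return drops
```

With these example numbers the link budget allows roughly 52 m per hop, so on a straight 200 m run sampled every 10 m the sketch drops repeaters at 50, 100, and 150 m. The greedy rule is not guaranteed to be optimal; it simply places each node as late as the link budget allows.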

Battery Density and Power Management Innovation

You cannot beat Expedition 33 if your drone runs out of power at the 90% mark. Because the mission requires intense onboard processing for AI and LiDAR, battery drain is significantly higher than in standard flights.

The “level” of battery technology required points toward high-energy-density chemistries such as solid-state or lithium-sulfur cells, both still emerging. Beyond the hardware, “Smart Power Management” software is crucial. It lets the drone throttle its processor dynamically, lowering the AI update rate in a simple hallway and raising it when navigating complex geometry, to squeeze every possible second of flight time out of the cells.
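
The throttling idea can be sketched as a mapping from scene complexity to planner update rate, paired with a crude power model. Everything below is a hypothetical illustration: obstacle density stands in for "complexity," and the rates, thresholds, and energy-per-update figure are made-up numbers.

```python
# Hypothetical limits and power figures, for illustration only.
MIN_RATE_HZ = 5.0   # planner rate in open, simple spaces
MAX_RATE_HZ = 30.0  # planner rate in dense geometry


def planner_rate_hz(obstacle_density, low=0.05, high=0.40):
    """Map local obstacle density (fraction of occupied cells ahead, 0..1) to a planning rate.

    Below `low` the planner idles at MIN_RATE_HZ, above `high` it runs at MAX_RATE_HZ,
    and in between the rate rises linearly.
    """
    if obstacle_density <= low:
        return MIN_RATE_HZ
    if obstacle_density >= high:
        return MAX_RATE_HZ
    fraction = (obstacle_density - low) / (high - low)
    return MIN_RATE_HZ + fraction * (MAX_RATE_HZ - MIN_RATE_HZ)


def compute_power_w(rate_hz, idle_w=3.0, joules_per_update=0.4):
    """Crude model: constant idle draw plus a fixed energy cost per planning update."""
    return idle_w + joules_per_update * rate_hz
```

Under these made-up numbers, compute draw ranges from 5 W in an open hallway to 15 W in dense geometry, which is the kind of saving the throttling strategy is after.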

The Future of Expedition-Class Drone Tech

Beating Sprong Expedition 33 is more than a feat of piloting; it is a demonstration of the current ceiling of drone technology. The “level” required to succeed is a moving target, as new innovations in AI and sensor fusion continue to push the boundaries of what is possible.

Scalability and Autonomous Fleet Coordination

The next step in “beating” these challenges is the shift from a single drone to a “Swarm Level” operation. In future expeditions, success will be measured by how well a fleet of drones can divide and conquer the Sprong environment. This involves “Collaborative Mapping,” where multiple drones share their SLAM data in real-time to build a comprehensive map faster than any single unit could.
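
If each drone keeps an occupancy grid in log-odds form, merging their maps can be as simple as adding the log-odds cell by cell. The sketch below assumes the grids have already been registered to a shared coordinate frame and that the drones' observations are independent; aligning the maps is the harder problem and is not shown. The clamp value is a hypothetical choice.

```python
def merge_logodds(*grids, clamp=5.0):
    """Merge sparse log-odds occupancy grids (dict: cell -> log-odds) from several drones.

    Assumes all grids share one coordinate frame and hold independent observations,
    so evidence for each cell simply adds; the sum is clamped afterwards.
    """
    totals = {}
    for grid in grids:
        for cell, log_odds in grid.items():
            totals[cell] = totals.get(cell, 0.0) + log_odds
    return {cell: max(-clamp, min(clamp, value)) for cell, value in totals.items()}
```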

Innovation in swarm intelligence—where drones can “negotiate” who covers which sector—is the next frontier. To reach the level where Expedition 33 is considered “easy,” we must move toward these decentralized, fully autonomous ecosystems.
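
Sector negotiation is often modeled as an auction. The sketch below implements a simple sequential single-item auction: in each round every drone bids its travel distance to each unclaimed sector, and the lowest bid wins. It is written as a single function for clarity; in a decentralized fleet each drone would compute its own bids and the fleet would agree on the winner over the mesh network. Drone and sector names are hypothetical.

```python
import math


def auction_sectors(drones, sectors):
    """Assign sectors to drones with a sequential single-item auction.

    drones:  {drone_name: (x, y)} current positions
    sectors: {sector_name: (x, y)} sector centroids
    Each round, every drone bids its travel distance from its current position
    to every unclaimed sector; the lowest bid wins and the winner "moves" there.
    Centralized here for illustration only. Returns {sector_name: drone_name}.
    """
    position = dict(drones)
    remaining = dict(sectors)
    assignment = {}
    while remaining:
        _, drone, sector = min(
            (math.dist(position[d], centroid), d, s)
            for d in position
            for s, centroid in remaining.items()
        )
        assignment[sector] = drone
        position[drone] = remaining.pop(sector)
    return assignment
```

Because the bid is pure travel distance, one drone can end up with most of the work; adding a workload term to the bid is a common refinement.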

In conclusion, to beat Sprong Expedition 33, you need more than just a drone; you need a flying supercomputer. Success requires a system at TRL 9, high-density LiDAR, predictive AI pathfinding, and innovative power management. As these technologies mature, the lessons learned from Expedition 33 will pave the way for drones that can navigate any environment on Earth, and beyond, without human intervention.