The landscape of modern technology is undergoing a radical transformation, shifting from systems that merely follow programmed instructions to those that can perceive, interpret, and react to their environment in real-time. At the heart of this evolution lies a specialized piece of hardware that is often overshadowed by the more famous Central Processing Unit (CPU) and Graphics Processing Unit (GPU). This component is the Vision Processing Unit, or VPU.

As we push the boundaries of what is possible in the realm of Tech & Innovation—specifically regarding autonomous flight, AI-driven mapping, and remote sensing—the VPU has emerged as the critical “brain” enabling machines to see and understand the world. This article explores the intricate architecture of the VPU, its pivotal role in edge computing, and how it is driving the next wave of industrial and consumer innovation.

What is a Vision Processing Unit (VPU)?

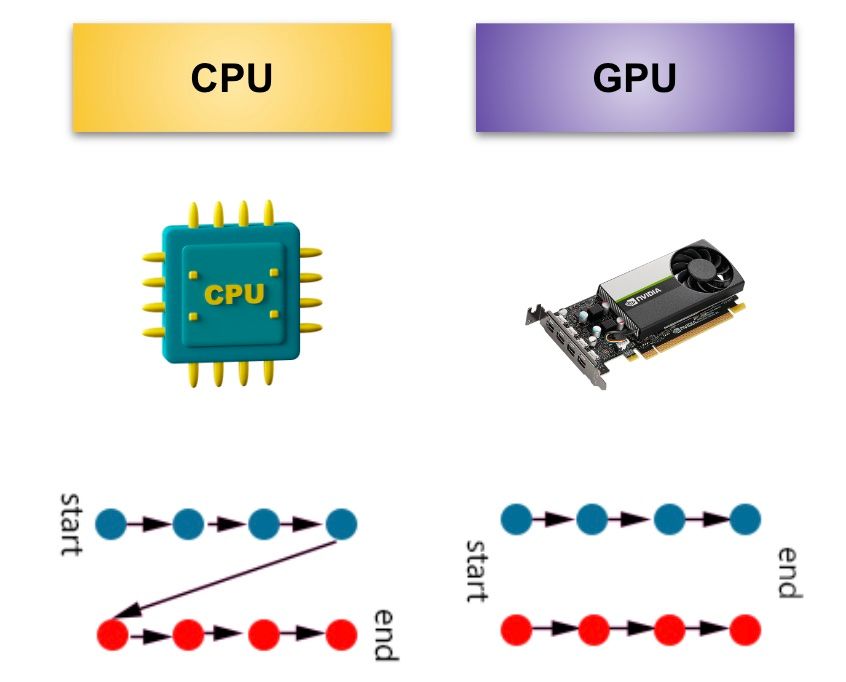

To understand the VPU, one must first understand the unique demands of visual data. Unlike text or basic numerical data, visual information is incredibly dense. A single high-resolution frame contains millions of pixels, so one second of video means tens of millions of pixels, each of which must be processed to identify shapes, depth, and movement. A standard CPU can handle these tasks, but it works through the data on a handful of cores, largely sequentially, which is far too slow for many real-time applications.
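To put that density in perspective, the short Python calculation below counts the pixels in one second of video. The 1080p, 30 frames-per-second stream is an assumed example, and `pixels_per_second` is a helper name chosen for illustration.

```python
def pixels_per_second(width: int, height: int, fps: int) -> int:
    """Return how many pixels a vision pipeline must examine each second."""
    return width * height * fps


# Assumed example stream: 1080p (1920 x 1080) at 30 frames per second.
per_frame = 1920 * 1080
per_second = pixels_per_second(1920, 1080, 30)
print(f"{per_frame:,} pixels per frame, {per_second:,} pixels per second")
# prints "2,073,600 pixels per frame, 62,208,000 pixels per second"
```

Every additional operation applied per pixel (a filter, a colour conversion, a neural-network layer) multiplies that workload, which is why hardware that works on many pixels at once matters.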

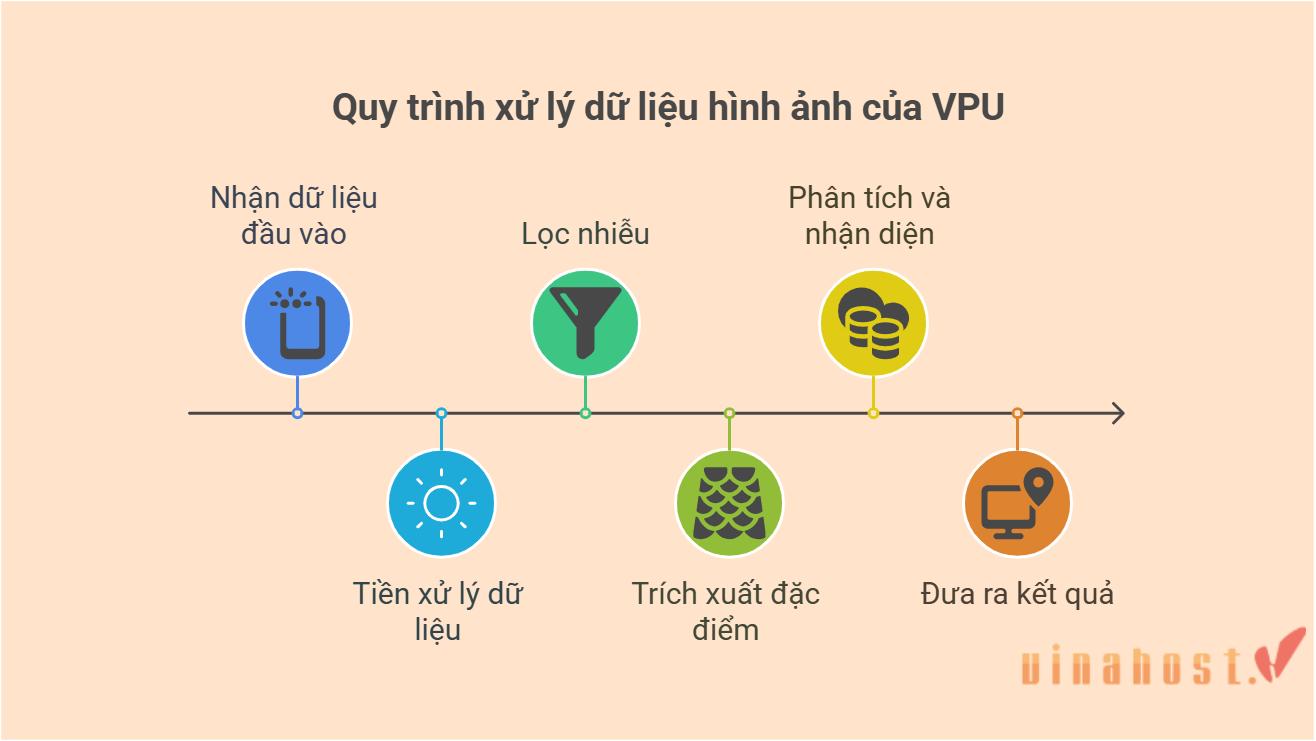

A Vision Processing Unit is a class of microprocessor specifically designed to accelerate machine vision tasks. It is an AI-optimized chip that excels at the parallel processing required for deep learning and neural networks, all while maintaining a remarkably low power profile.

Defining the Core Architecture

The architecture of a VPU is fundamentally different from traditional processors. While a CPU is a “generalist” designed to handle a wide variety of logic tasks through a few powerful cores, a VPU is a “specialist.” It typically consists of a series of highly parallel programmable compute engines combined with hardware accelerators specifically for image processing and neural network inference.

In the context of tech innovation, the VPU’s architecture allows it to perform complex mathematical operations—such as convolutions, which are the building blocks of modern AI vision—much faster than a general-purpose processor. By offloading these tasks to a dedicated VPU, the rest of the system’s hardware is freed up to manage flight controls, telemetry, and communication, ensuring a more stable and responsive autonomous platform.
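The core of a convolution can be sketched in a few lines of pure Python. The function below slides a small kernel over an image and performs a multiply-accumulate at every position; like most deep-learning frameworks, it does not flip the kernel, so strictly speaking it computes a cross-correlation. The name `conv2d_valid` and the edge-detecting example kernel are illustrative; a VPU runs many of these multiply-accumulates in parallel in dedicated hardware rather than in nested loops.

```python
from typing import List

Matrix = List[List[float]]


def conv2d_valid(image: Matrix, kernel: Matrix) -> Matrix:
    """'Valid' 2D convolution as used in CNN layers: no padding, stride 1."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = [[0.0] * out_w for _ in range(out_h)]
    for y in range(out_h):
        for x in range(out_w):
            acc = 0.0
            # Multiply-accumulate over the kernel window: the operation that
            # vision accelerators replicate across many parallel lanes.
            for ky in range(kh):
                for kx in range(kw):
                    acc += image[y + ky][x + kx] * kernel[ky][kx]
            out[y][x] = acc
    return out


# A Sobel-style kernel that responds to vertical edges.
sobel_x = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
# A tiny image with a dark-to-bright step between columns 2 and 3.
step = [[0, 0, 0, 10, 10]] * 3
print(conv2d_valid(step, sobel_x))  # [[0.0, 40.0, 40.0]]
```

The output is zero over the flat region and large wherever the kernel window straddles the step, which is how early layers of a vision network pick out edges.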

VPU vs. CPU and GPU: Key Differences

It is common to confuse VPUs with GPUs, as both handle parallel workloads. However, the distinction is vital for innovation in mobile and autonomous tech. GPUs were originally designed for rendering graphics; they are incredibly powerful but also power-hungry and heat-intensive. This makes them less ideal for small, battery-operated devices like autonomous drones or remote sensors.

The VPU, by contrast, is designed for “inference at the edge.” It is stripped of the overhead required for rendering complex 3D graphics and is instead laser-focused on analyzing data. A VPU can perform trillions of operations per second (TOPS) while consuming only a fraction of the wattage a GPU would require. This efficiency is what makes “edge AI” practical: the intelligence resides on the device itself rather than in a distant cloud server.
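A rough way to relate a TOPS rating to real-time vision is to count the operations a network needs per frame. The sketch below assumes the common convention of counting one multiply-accumulate (MAC) as two operations; the layer shape, the 10 GOPs-per-frame network, the 1 TOPS rating and the 50% utilization are illustrative assumptions, not specifications of any particular VPU.

```python
def conv_layer_ops(h_out: int, w_out: int, c_in: int, c_out: int, k: int) -> int:
    """Operations for one k x k convolution layer, counting each MAC as 2 ops."""
    macs = h_out * w_out * c_out * c_in * k * k
    return 2 * macs


def max_frames_per_second(ops_per_frame: float, tops: float, utilization: float) -> float:
    """Upper bound on throughput: usable ops per second divided by ops per frame."""
    return tops * 1e12 * utilization / ops_per_frame


# Illustrative 3x3 layer with 64 input and 64 output channels on a 112x112 map.
layer_ops = conv_layer_ops(112, 112, 64, 64, 3)
print(f"one layer: {layer_ops / 1e9:.2f} GOPs")  # one layer: 0.92 GOPs

# Hypothetical network needing 10 GOPs per frame, 1 TOPS accelerator, 50% utilization.
print(f"ceiling: {max_frames_per_second(10e9, 1.0, 0.5):.0f} frames/s")  # ceiling: 50 frames/s
```

Real throughput also depends on memory bandwidth and on how well each layer maps onto the hardware, so the result is a ceiling rather than a prediction.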

The Role of VPUs in Autonomous Systems and AI

The most significant impact of VPU technology is seen in the advancement of autonomous systems. In the field of Tech & Innovation, “autonomy” refers to a machine’s ability to navigate and make decisions without human intervention. This requires a sophisticated “Sense and Avoid” capability, which depends on the kind of high-speed onboard visual processing a VPU provides.

Enabling Real-Time Edge Computing

In traditional setups, data captured by a sensor would be sent to a cloud server to be analyzed, with the results sent back to the device. In an autonomous flight scenario, this latency (the delay in communication) could be catastrophic. If a drone is flying at 30 miles per hour, it cannot wait half a second for a cloud server to tell it there is a power line in its path.
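The cost of that delay is simple arithmetic: distance travelled equals speed multiplied by latency. The sketch below uses the 30 mph and half-second figures from the text; the 20 ms on-device inference time is an assumed value for comparison only.

```python
# Exact conversion: 1 mile = 1609.344 m and 1 hour = 3600 s.
MPH_TO_M_PER_S = 1609.344 / 3600


def distance_during_latency_m(speed_mph: float, latency_s: float) -> float:
    """Metres travelled before a perception result arrives."""
    return speed_mph * MPH_TO_M_PER_S * latency_s


cloud = distance_during_latency_m(30, 0.5)     # half-second cloud round trip
onboard = distance_during_latency_m(30, 0.02)  # assumed 20 ms on-device inference
print(f"cloud: {cloud:.2f} m, on-device: {onboard:.2f} m")
# prints "cloud: 6.71 m, on-device: 0.27 m"
```

At 30 mph the drone covers more than six metres while waiting for the cloud, which is easily enough to reach an obstacle it has already photographed.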

The VPU enables “Edge Computing,” meaning the AI processing happens directly on the device. Because the VPU can process visual frames in milliseconds, it allows for near-instantaneous decision-making. This is the technology behind “AI Follow Mode,” where a device can lock onto a subject and predict its movement patterns, adjusting its own trajectory in real-time to maintain a specific distance and angle, even in complex environments with moving obstacles.
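A follow mode of this kind can be sketched as two steps: predict where the subject will be, then steer toward a point at the desired distance from it. The sketch below assumes a constant-velocity prediction and a simple proportional controller in 2D, with the subject’s position and velocity supplied by the VPU’s detector; production systems typically use more robust estimators such as Kalman filters. `Vec2`, `predict` and `follow_command` are hypothetical names.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Vec2:
    x: float
    y: float


def predict(pos: Vec2, vel: Vec2, dt: float) -> Vec2:
    """Constant-velocity guess of where the subject will be dt seconds from now."""
    return Vec2(pos.x + vel.x * dt, pos.y + vel.y * dt)


def follow_command(drone: Vec2, subject: Vec2, subject_vel: Vec2,
                   standoff_m: float, gain: float, dt: float) -> Tuple[Vec2, float]:
    """Return (velocity command, camera yaw in radians) to trail the subject.

    The command matches the subject's velocity and adds a proportional
    correction along the line of sight to hold `standoff_m` metres of distance.
    """
    target = predict(subject, subject_vel, dt)
    dx, dy = target.x - drone.x, target.y - drone.y
    dist = math.hypot(dx, dy)
    yaw = math.atan2(dy, dx)  # keep the camera pointed at the predicted position
    if dist == 0.0:
        return Vec2(subject_vel.x, subject_vel.y), yaw
    error = dist - standoff_m  # positive: too far, close in; negative: back off
    return Vec2(subject_vel.x + gain * error * dx / dist,
                subject_vel.y + gain * error * dy / dist), yaw


cmd, yaw = follow_command(Vec2(0.0, 0.0), Vec2(10.0, 0.0), Vec2(1.0, 0.0),
                          standoff_m=5.0, gain=0.5, dt=1.0)
print(cmd, yaw)  # Vec2(x=4.0, y=0.0) 0.0
```

Feeding the subject’s own velocity forward means the controller only has to correct the distance error, rather than chase the subject from scratch every frame.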

Power Efficiency in Remote Sensing

Remote sensing—the process of gathering information about an object or phenomenon without making physical contact—relies heavily on the longevity of the hardware. Whether it is a drone surveying agricultural land or an autonomous rover inspecting an industrial site, battery life is the primary constraint.

The power efficiency of the VPU is a game-changer here. By utilizing specialized hardware to run AI models, developers can implement advanced features like automated crop health analysis or leak detection in pipelines without draining the battery in minutes. This efficiency allows for longer missions and the collection of more comprehensive data sets, which is the cornerstone of modern data-driven innovation.
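As one concrete example of crop health analysis, many agricultural surveys use the Normalized Difference Vegetation Index (NDVI), computed per pixel from near-infrared (NIR) and red reflectance. The sketch below assumes a multispectral camera that supplies both bands as aligned grids; the 0.3 “stressed” threshold and the helper names are hypothetical and would be calibrated per crop in practice.

```python
from typing import List

Grid = List[List[float]]


def ndvi(nir: Grid, red: Grid) -> Grid:
    """Per-pixel NDVI = (NIR - Red) / (NIR + Red); values fall in [-1, 1]."""
    result = []
    for nir_row, red_row in zip(nir, red):
        # Guard against division by zero on pixels with no signal in either band.
        result.append([(n - r) / (n + r) if (n + r) else 0.0
                       for n, r in zip(nir_row, red_row)])
    return result


def stressed_fraction(index: Grid, threshold: float = 0.3) -> float:
    """Fraction of pixels whose NDVI is below a (hypothetical) health threshold."""
    values = [v for row in index for v in row]
    return sum(v < threshold for v in values) / len(values)


# Illustrative 2x2 reflectance values, not real sensor data.
nir = [[0.5, 0.4], [0.3, 0.6]]
red = [[0.1, 0.3], [0.3, 0.1]]
print(stressed_fraction(ndvi(nir, red)))  # 0.5
```

Running a reduction like this on board means the drone can transmit a single summary figure or a small map rather than every raw multispectral frame.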

Mapping and Spatial Intelligence through VPU Integration

One of the most complex tasks for any autonomous system is understanding its place in three-dimensional space. This isn’t just about knowing GPS coordinates; it’s about “Spatial Intelligence.” The VPU is the primary engine that transforms raw 2D images into 3D environmental maps.
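One standard way to lift 2D images into 3D is stereo vision: the same point appears shifted (the “disparity”) between two side-by-side cameras, and depth follows from Z = f·B / d, where f is the focal length in pixels and B the distance between the cameras. The sketch below assumes a rectified stereo pair and a pinhole camera model; the focal length, baseline and principal point (`cx`, `cy`) are illustrative values, and computing the disparity map itself is not shown.

```python
from typing import List, Tuple

Point3 = Tuple[float, float, float]


def depth_from_disparity(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth Z = f * B / d for a rectified stereo pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def back_project(u: float, v: float, depth_m: float,
                 focal_px: float, cx: float, cy: float) -> Point3:
    """Pinhole back-projection of pixel (u, v) at a known depth to camera coordinates."""
    return ((u - cx) * depth_m / focal_px, (v - cy) * depth_m / focal_px, depth_m)


def disparity_to_points(disparity: List[List[float]], focal_px: float,
                        baseline_m: float, cx: float, cy: float) -> List[Point3]:
    """Turn a disparity map into a point cloud, skipping pixels with no match (d <= 0)."""
    points = []
    for v, row in enumerate(disparity):
        for u, d in enumerate(row):
            if d > 0:
                z = depth_from_disparity(d, focal_px, baseline_m)
                points.append(back_project(u, v, z, focal_px, cx, cy))
    return points


# Illustrative intrinsics: 800 px focal length, 12.5 cm baseline.
z = depth_from_disparity(50, 800, 0.125)
print(z, back_project(420, 240, z, 800, 320, 240))  # 2.0 (0.25, 0.0, 2.0)
```

Repeating this for every matched pixel, frame after frame, is what produces the dense point clouds that mapping systems build on.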

SLAM (Simultaneous Localization and Mapping)

SLAM is a hallmark of high-end tech innovation. It is the process by which a device builds a map of an unknown environment while simultaneously keeping track of its own location within that map. This is essential for autonomous flight in GPS-denied environments, such as inside warehouses, under bridges, or in dense forests.

A VPU processes data from multiple sources, such as stereoscopic cameras, LiDAR, and ultrasonic sensors, to create a “point cloud” of the environment. By tracking static “features” in the environment (like the corner of a building or a specific tree) from one frame to the next, the VPU can estimate the device’s own movement with high precision. This level of spatial awareness is what enables drones to fly through narrow gaps or perform complex indoor maneuvers.
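Estimating movement from static features can be sketched, in a simplified 2D form, as fitting the rotation and translation that best map feature positions in one frame onto their matched positions in the next (a 2D version of the Kabsch/Procrustes least-squares fit). Because the landmarks are assumed not to move, the device’s own motion is the inverse of that transform. This is a deliberately reduced sketch: real visual SLAM estimates full 3D pose, rejects bad matches (for example with RANSAC) and refines the map over time, and feature matching is assumed to have already happened. The function names are hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def estimate_rigid_2d(prev_pts: List[Point], curr_pts: List[Point]) -> Tuple[float, float, float]:
    """Least-squares (theta, tx, ty) such that curr ~= R(theta) * prev + t."""
    n = len(prev_pts)
    if n < 2 or n != len(curr_pts):
        raise ValueError("need at least two matched feature pairs")
    pcx = sum(p[0] for p in prev_pts) / n
    pcy = sum(p[1] for p in prev_pts) / n
    qcx = sum(q[0] for q in curr_pts) / n
    qcy = sum(q[1] for q in curr_pts) / n
    s_dot = s_cross = 0.0
    for (px, py), (qx, qy) in zip(prev_pts, curr_pts):
        # Work with centroid-relative coordinates so rotation is solved alone.
        px, py, qx, qy = px - pcx, py - pcy, qx - qcx, qy - qcy
        s_dot += px * qx + py * qy
        s_cross += px * qy - py * qx
    theta = math.atan2(s_cross, s_dot)
    c, s = math.cos(theta), math.sin(theta)
    # Translation maps the rotated previous centroid onto the current centroid.
    tx = qcx - (c * pcx - s * pcy)
    ty = qcy - (s * pcx + c * pcy)
    return theta, tx, ty


def device_motion(theta: float, tx: float, ty: float) -> Tuple[float, float, float]:
    """Invert the landmark transform: static landmarks appear to move opposite to the device."""
    c, s = math.cos(theta), math.sin(theta)
    return -theta, -(c * tx + s * ty), -(-s * tx + c * ty)


# Landmarks appear to shift 1 m toward -x, so the device moved about 1 m along +x.
prev = [(1.0, 0.0), (0.0, 1.0), (2.0, 2.0)]
curr = [(0.0, 0.0), (-1.0, 1.0), (1.0, 2.0)]
print(device_motion(*estimate_rigid_2d(prev, curr)))
```

Chaining these frame-to-frame estimates gives the device’s trajectory; SLAM systems then correct the accumulated drift by recognizing features they have seen before.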

Object Recognition and Semantic Segmentation

Innovation in AI isn’t just about seeing that something is there; it’s about knowing what it is. Object recognition answers that question for whole objects, while semantic segmentation goes a step further by assigning a category to every pixel in the field of view. For example, in a mapping mission, a VPU running a segmentation model can distinguish between a road, a vehicle, a pedestrian, and a building in real-time.
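Assuming the network outputs one score per class for every pixel, the final step of semantic segmentation is a per-pixel argmax: each pixel takes the label of its highest-scoring class. The pure-Python sketch below shows only that step; the class list, the example scores and the `segment` and `class_histogram` names are illustrative, and the network that produces the scores (the part a VPU accelerates) is not shown.

```python
from typing import Dict, List

# Hypothetical label set for a mapping mission.
CLASSES = ["road", "vehicle", "pedestrian", "building"]


def segment(scores: List[List[List[float]]]) -> List[List[int]]:
    """Per-pixel argmax: scores[y][x] holds one score per entry in CLASSES."""
    return [[max(range(len(pixel)), key=pixel.__getitem__) for pixel in row]
            for row in scores]


def class_histogram(labels: List[List[int]]) -> Dict[str, int]:
    """Count how many pixels were assigned to each class."""
    counts = {name: 0 for name in CLASSES}
    for row in labels:
        for idx in row:
            counts[CLASSES[idx]] += 1
    return counts


# Illustrative 2x2 score map, not real network output.
scores = [
    [[0.9, 0.05, 0.03, 0.02], [0.1, 0.7, 0.1, 0.1]],
    [[0.2, 0.1, 0.6, 0.1], [0.05, 0.05, 0.1, 0.8]],
]
labels = segment(scores)
print(labels)  # [[0, 1], [2, 3]]
print(class_histogram(labels))
```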

This capability is vital for urban planning and emergency response. In a search and rescue operation, a VPU-equipped drone can scan a disaster zone and automatically highlight “objects of interest” (like a person’s heat signature or a specific color of clothing) while ignoring the surrounding rubble. This automated filtering significantly reduces the cognitive load on human operators and speeds up critical decision-making.
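Once every pixel carries a label, highlighting “objects of interest” can be as simple as grouping neighbouring pixels of the target class into regions and reporting a bounding box for each. The sketch below does this with a breadth-first flood fill over 4-connected neighbours; the label values and the `min_pixels` threshold for discarding specks of noise are hypothetical.

```python
from collections import deque
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max), inclusive


def regions_of_interest(label_map: List[List[int]], target: int,
                        min_pixels: int = 1) -> List[Box]:
    """Bounding boxes of 4-connected regions whose label equals `target`."""
    h, w = len(label_map), len(label_map[0])
    seen = [[False] * w for _ in range(h)]
    boxes = []
    for y in range(h):
        for x in range(w):
            if label_map[y][x] != target or seen[y][x]:
                continue
            # Flood-fill one region, collecting the coordinates it covers.
            queue = deque([(y, x)])
            seen[y][x] = True
            ys, xs = [], []
            while queue:
                cy, cx = queue.popleft()
                ys.append(cy)
                xs.append(cx)
                for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                    if (0 <= ny < h and 0 <= nx < w and not seen[ny][nx]
                            and label_map[ny][nx] == target):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            if len(ys) >= min_pixels:  # drop regions too small to be meaningful
                boxes.append((min(xs), min(ys), max(xs), max(ys)))
    return boxes


# Label 2 stands for a hypothetical "person" class in this toy map.
label_map = [
    [0, 2, 2, 0],
    [0, 2, 0, 0],
    [0, 0, 0, 2],
]
print(regions_of_interest(label_map, target=2))                # [(1, 0, 2, 1), (3, 2, 3, 2)]
print(regions_of_interest(label_map, target=2, min_pixels=2))  # [(1, 0, 2, 1)]
```

Only these boxes, rather than the whole frame, need to be flagged to the operator, which is where the reduction in cognitive load comes from.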

The Future of VPU Technology in Innovation

As we look toward the future, the evolution of VPU technology will continue to dictate the pace of innovation in autonomous tech. We are moving toward an era of far more capable intelligence at the edge, where the VPU will not be just a secondary processor but the central hub of all situational awareness.

Advancements in Swarm Intelligence

One of the most exciting frontiers in Tech & Innovation is “Swarm Intelligence”—the coordination of multiple autonomous units to achieve a single goal. This requires massive amounts of data sharing and localized processing. Each unit in a swarm must be aware of its neighbors’ positions to avoid collisions while simultaneously executing its part of the mission.

VPUs are essential for swarms because they provide the localized “visual intelligence” needed for decentralized coordination. Instead of a central computer controlling fifty drones, each drone uses its VPU to “see” its peers and make micro-adjustments to its flight path. This leads to more resilient systems that can cover vast areas for mapping or environmental monitoring in a fraction of the time.
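One well-known way to express this kind of decentralized coordination is the “separation” rule from Craig Reynolds’ boids flocking model: each unit steers away from neighbours that come too close, using only what it can perceive itself. The sketch below assumes each drone already has 2D positions for the peers its VPU has detected; the radius and strength values are hypothetical, and a real swarm controller would blend this with mission goals and alignment and cohesion terms.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def separation_adjustment(own: Point, neighbours: List[Point],
                          radius: float, strength: float) -> Point:
    """Velocity correction pushing a drone away from peers closer than `radius`.

    The push from each peer points directly away from it and grows linearly
    from zero at `radius` toward `strength` as the distance shrinks.
    """
    ax = ay = 0.0
    for nx, ny in neighbours:
        dx, dy = own[0] - nx, own[1] - ny
        dist = math.hypot(dx, dy)
        if 0.0 < dist < radius:
            scale = strength * (radius - dist) / (radius * dist)
            ax += dx * scale
            ay += dy * scale
    return ax, ay


# One peer 1 m away on the right, one far outside the 2 m radius.
print(separation_adjustment((0.0, 0.0), [(1.0, 0.0), (5.0, 0.0)],
                            radius=2.0, strength=1.0))  # (-0.5, 0.0)
```

Because every drone runs the same rule on its own observations, there is no central computer to fail or to saturate its communication links.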

Integration with 5G and Cloud Connectivity

While the VPU excels at edge processing, the future lies in a hybrid approach. The integration of VPUs with 5G connectivity will allow for a seamless flow of data between the edge and the cloud. The VPU will handle immediate, time-sensitive tasks (like obstacle avoidance), while the 5G link sends processed, high-level data to the cloud for long-term storage and global analysis.

This synergy will enable “Remote Sensing 2.0,” where autonomous fleets can update global maps in real-time. Imagine a world where autonomous delivery drones or inspection units are constantly feeding data into a “Digital Twin” of a city. The VPU is the technology that makes this data stream manageable: by transmitting only relevant, processed information instead of raw imagery, each unit needs far less bandwidth, leaving more capacity for the rest of the fleet.
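The bandwidth argument can be made concrete by comparing raw video with a compact list of detections. The figures below are assumptions for illustration: 8-bit RGB video at 1080p and 30 frames per second, against a hypothetical 20 detected objects per frame at 32 bytes each (class, bounding box, confidence).

```python
def raw_video_bps(width: int, height: int, bytes_per_pixel: int, fps: int) -> int:
    """Bits per second for uncompressed video."""
    return width * height * bytes_per_pixel * 8 * fps


def detections_bps(objects_per_frame: int, bytes_per_object: int, fps: int) -> int:
    """Bits per second for a compact list of detections."""
    return objects_per_frame * bytes_per_object * 8 * fps


raw = raw_video_bps(1920, 1080, 3, 30)   # 8-bit RGB, 1080p at 30 fps
processed = detections_bps(20, 32, 30)   # hypothetical: 20 objects x 32 bytes
print(f"raw: {raw / 1e9:.2f} Gbit/s, processed: {processed / 1e3:.1f} kbit/s, "
      f"ratio: {raw // processed}x")
# prints "raw: 1.49 Gbit/s, processed: 153.6 kbit/s, ratio: 9720x"
```

In practice video would be compressed (for example with H.264) before transmission, so the real-world saving is smaller than this raw comparison suggests, although processed metadata typically remains much lighter than even compressed video.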

Conclusion

The Vision Processing Unit is much more than just a chip; it is the fundamental enabler of modern machine intelligence. By providing the specialized computational power needed for real-time visual perception, the VPU has unlocked the potential for true autonomy in drones, robotics, and remote sensing.

As we continue to innovate in fields like AI-driven mapping and autonomous flight, the VPU will remain at the forefront, turning raw visual data into actionable intelligence. For anyone looking to understand the future of technology, the VPU is the essential piece of the puzzle that bridges the gap between a machine that simply moves and a machine that truly understands its world.