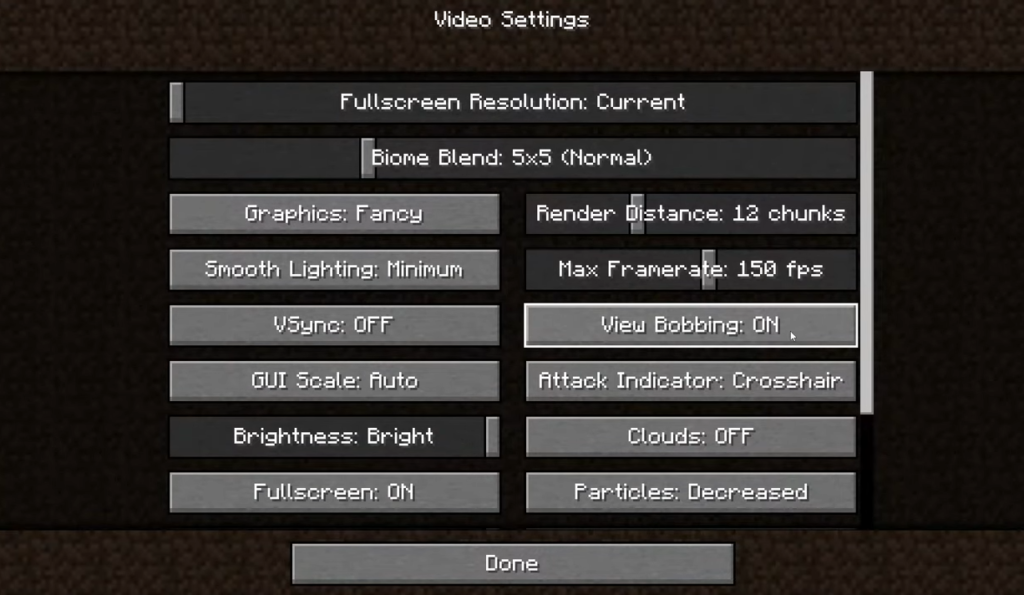

In Minecraft, “view bobbing” is a toggleable setting that simulates the rhythmic up-and-down motion of a human gait: when the player moves, the camera oscillates to mimic the natural movement of the head. In drone technology and aerial imaging, the same concept shifts from an aesthetic choice to a technical challenge. Here, view bobbing refers to visual oscillations, usually unintended but sometimes deliberate, caused by flight dynamics, wind, and mechanical vibration.

For drone pilots, cinematographers, and FPV (First Person View) enthusiasts, understanding the mechanics of view bobbing is essential for capturing professional-grade footage and maintaining flight precision. This article explores the technical nuances of camera movement, stabilization systems, and how the “bobbing” effect influences the viewer’s perspective and the pilot’s control.

The Mechanics of Camera Oscillation: From Simulated Gait to Aerial Flight

In the context of cameras and imaging, “view bobbing” refers to the vertical and lateral shifting of the frame during movement. While Minecraft uses this to increase immersion by simulating a walking person, a drone must contend with physical forces that produce similar, though often less desirable, effects.
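To make the idea concrete, here is a minimal sketch of a generic sinusoidal head-bob model of the kind games use for this effect. It is not Minecraft's actual code; the function name `bob_offset` and its amplitude and stride constants are hypothetical. The key property is that the vertical bounce repeats every step while the side-to-side sway repeats every two steps.

```python
import math


def bob_offset(distance_walked, amplitude=0.05, stride_length=1.0):
    """Return a (lateral, vertical) camera offset for a stylized walking gait.

    Hypothetical model: the head sways sideways once every two steps and rises
    and falls once per step, so the vertical term runs at twice the lateral rate.
    """
    phase = math.pi * distance_walked / stride_length  # advances by pi per step
    lateral = amplitude * math.sin(phase)        # left on one step, right on the next
    vertical = amplitude * abs(math.sin(phase))  # lowest at each footfall, highest mid-step
    return lateral, vertical


# Sample the offset every quarter step over two steps.
for i in range(9):
    d = i * 0.25
    lat, vert = bob_offset(d)
    print(f"distance={d:.2f}  lateral={lat:+.3f}  vertical={vert:.3f}")
```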

The Physics of Drone Motion and Image Stability

A drone is a dynamic platform. Unlike a tripod-mounted camera, it is constantly fighting gravity and environmental forces. When a quadcopter moves forward, it tilts its entire body, so if the camera is fixed to the frame (as on many racing and freestyle FPV drones), the view tilts with it immediately. At the same time, the flight controller adjusts motor speeds thousands of times per second to hold the drone steady, and vibration from the motors and propellers can reach the camera. On sensors with a rolling shutter, which read the image out row by row, this vibration produces a high-frequency version of view bobbing that warps the frame, often referred to in the industry as the “jello effect” or “shimmer.”
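The jello effect comes from timing: a rolling shutter exposes each row of the image at a slightly different moment, so a vibration that completes several cycles during one frame's readout displaces each row differently. The sketch below uses a hypothetical 24,000 RPM motor (a rotation frequency of 24,000 / 60 = 400 Hz) and an assumed 10 ms sensor readout time, and compares it with a slow 1 Hz sway, which shifts the whole frame almost uniformly.

```python
import math


def rolling_shutter_offsets(rows, readout_time_s, vib_freq_hz, vib_amp_px):
    """Horizontal displacement (pixels) captured by each row of one frame.

    A rolling shutter reads row 0 first and the last row about readout_time_s
    later, so each row samples the vibration at a slightly different moment.
    """
    offsets = []
    for row in range(rows):
        t = readout_time_s * row / rows  # moment this row is read out
        offsets.append(vib_amp_px * math.sin(2 * math.pi * vib_freq_hz * t))
    return offsets


motor_rpm = 24_000          # hypothetical motor speed
vib_hz = motor_rpm / 60     # rotation frequency: 400 Hz
fast = rolling_shutter_offsets(rows=1080, readout_time_s=0.010, vib_freq_hz=vib_hz, vib_amp_px=3.0)
slow = rolling_shutter_offsets(rows=1080, readout_time_s=0.010, vib_freq_hz=1.0, vib_amp_px=3.0)

# Spread of displacement across the frame: a large spread means visibly wavy "jello".
print(f"400 Hz vibration: rows differ by up to {max(fast) - min(fast):.2f} px")
print(f"1 Hz sway:        rows differ by up to {max(slow) - min(slow):.2f} px")
```

A near-uniform shift is ordinary camera motion that stabilization can remove; a shift that varies from row to row is a geometric warp, which is why vibration is commonly attacked at the source, for example by soft-mounting the camera or balancing the propellers.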

Simulated Movement vs. Physical Vibration

In digital imaging, there is a distinct difference between “intentional bobbing” and “mechanical noise.” Intentional bobbing is used in aerial filmmaking to convey a sense of speed or to make a shot feel more “organic.” Unintended bobbing, by contrast, is the enemy of the imaging professional: it distracts the viewer and can confuse AI-driven object tracking systems. Managing these oscillations starts with sensing, since the drone or camera must first estimate how it is moving relative to the horizon, typically using the gyroscope and accelerometer in its inertial measurement unit (IMU).
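Estimating attitude relative to the horizon usually means fusing two IMU sensors: the gyroscope, which is smooth but drifts over time, and the accelerometer, which senses gravity but is noisy under vibration and acceleration. A complementary filter is one simple, widely taught way to combine them. The sketch below assumes a single pitch axis and a hypothetical sensor axis convention, and is not meant to represent any specific product's firmware.

```python
import math


def complementary_pitch(samples, dt, alpha=0.98):
    """Estimate pitch (degrees) relative to the horizon from IMU samples.

    samples: sequence of (gyro_pitch_rate_dps, accel_x_g, accel_z_g) tuples.
    Axis convention (assumed): with the drone level, gravity reads as
    accel_z = 1 g, and a nose-up tilt shows up as positive accel_x.
    """
    pitch = 0.0
    estimates = []
    for gyro_rate, ax, az in samples:
        accel_pitch = math.degrees(math.atan2(ax, az))  # tilt implied by gravity alone
        gyro_pitch = pitch + gyro_rate * dt             # integrate the rotation rate
        # Trust the gyro short-term (smooth, but drifts) and gravity long-term
        # (drift-free, but corrupted by vibration and acceleration).
        pitch = alpha * gyro_pitch + (1 - alpha) * accel_pitch
        estimates.append(pitch)
    return estimates


# Drone held still at a 10 degree tilt: the estimate converges toward 10.
tilt = math.radians(10)
still = [(0.0, math.sin(tilt), math.cos(tilt))] * 500
print(round(complementary_pitch(still, dt=0.002)[-1], 3))
```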

The Role of Stabilization in Counteracting View Bobbing

To combat the natural tendency of a flying camera to bob and weave, the drone industry has developed two primary paths: mechanical stabilization and digital processing. Both technologies aim to neutralize the “view bob” to create a perfectly level, cinematic experience.

Mechanical 3-Axis Gimbals

The most common solution for eliminating view bobbing in professional drones is the 3-axis gimbal. Using brushless motors and high-speed IMUs, a gimbal compensates in real time for the drone's pitch (tilt), roll, and yaw (pan). When the drone pitches forward to accelerate, which would otherwise swing the camera downward, the gimbal tilts the camera up to hold a locked horizon. This produces the “floating camera” effect that is a hallmark of modern aerial cinematography.
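Reduced to one axis, the gimbal's job is subtraction: hold the pitch joint at the target camera angle minus the body's angle. This sketch treats pitch independently of roll and yaw (a small-angle simplification), uses a hypothetical function name, and its mechanical limits of -90 to +30 degrees are placeholder values rather than any specific gimbal's specification.

```python
def gimbal_pitch_command(body_pitch_deg, target_pitch_deg=0.0, limits=(-90.0, 30.0)):
    """Angle the pitch motor must hold, relative to the drone body, so the
    camera points at target_pitch_deg relative to the horizon.

    Small-angle simplification: pitch is treated independently of roll and yaw.
    Sign convention (assumed): negative pitch means nose or camera down.
    The mechanical limits are hypothetical placeholder values.
    """
    joint = target_pitch_deg - body_pitch_deg  # undo the body's tilt
    lo, hi = limits
    return max(lo, min(hi, joint))  # the motor cannot exceed its travel


# Drone pitches 15 degrees nose-down to accelerate; the gimbal tilts the camera up 15.
print(gimbal_pitch_command(-15.0))
# A 45 degree dive exceeds the assumed +30 degree limit, so the horizon drifts.
print(gimbal_pitch_command(-45.0))
```

Once the joint saturates, the camera's angle to the horizon is the body pitch plus the clamped joint angle, so the horizon is no longer locked and the view starts to bob with the airframe.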

Electronic Image Stabilization (EIS) and RockSteady

For smaller drones where a mechanical gimbal is too heavy or fragile, Electronic Image Stabilization (EIS) takes over. Technologies like DJI’s RockSteady or GoPro’s HyperSmooth use advanced algorithms to crop the image and shift the frame digitally to counteract movement.

Unlike a gimbal, which prevents the movement physically, EIS lets the camera bob and then removes the oscillation in software. This is particularly valuable on FPV drones, which undergo extreme physical stress while the pilot still needs a usable image to navigate tight spaces. The software reads the onboard gyroscope data, time-aligned with each frame, estimates how the camera rotated, and shifts the cropped frame to cancel that rotation. When applied to the live feed, this correction happens before the frame reaches the pilot's display, at the cost of some added processing latency.
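EIS can be sketched as a crop window that slides against measured motion. The example below assumes per-frame camera pitch angles already integrated from gyro data, a lens focal length expressed in pixels, and a fixed crop margin. The function name `eis_shifts`, its centered moving average, and the pure-translation correction are simplifications for illustration; production EIS typically also handles roll and rolling-shutter distortion.

```python
import math


def eis_shifts(pitch_angles_deg, focal_px, margin_px, window=5):
    """Per-frame vertical crop shift (pixels) that cancels high-frequency pitch jitter.

    pitch_angles_deg: camera pitch per frame, integrated from gyro data (assumed input)
    focal_px: lens focal length expressed in pixels
    margin_px: how far the crop window can move before hitting the sensor edge
    The sign convention (which way a positive shift moves the crop) is an assumption.
    """
    n = len(pitch_angles_deg)
    shifts = []
    for i in range(n):
        lo = max(0, i - window)
        hi = min(n, i + window + 1)
        # Centered moving average = the smooth path the camera "should" follow.
        smooth = sum(pitch_angles_deg[lo:hi]) / (hi - lo)
        correction = math.radians(smooth - pitch_angles_deg[i])
        shift = focal_px * math.tan(correction)  # angle -> pixel offset on the sensor
        shifts.append(max(-margin_px, min(margin_px, shift)))  # stay inside the crop margin
    return shifts


# A single 2 degree jolt in otherwise steady flight (hypothetical numbers).
angles = [0.0] * 5 + [2.0] + [0.0] * 5
print([round(s, 1) for s in eis_shifts(angles, focal_px=1000, margin_px=20.0)])
```

Because the centered average looks `window` frames ahead, running this on a live feed would require buffering frames, which is one source of the latency that EIS adds.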

View Bobbing and the Immersive Experience in FPV Systems

While the goal of most aerial imaging is to eliminate bobbing, there are specific scenarios in the FPV world where a certain degree of “view bobbing” or camera movement is actually beneficial. This brings us back to the immersive quality found in Minecraft’s implementation of the feature.

The Psychological Connection to Speed

In high-speed drone racing or cinematic “chase” shots, a perfectly stabilized image can feel sterile or disconnected. Pilots often prefer a fixed camera or a “limited” stabilization mode that lets the camera tilt and oscillate slightly with the drone's movements. This visual feedback gives the pilot a better sense of momentum and “proprioception” within the digital space. If the camera doesn't bob or tilt when the pilot pushes the sticks, the result can be a sensory mismatch, sometimes leading to motion sickness or a loss of spatial awareness.

Motion Sickness and the Digital Horizon

One of the primary reasons users disable view bobbing in Minecraft is to prevent motion sickness. The same principle applies to FPV goggles. If a drone’s camera bobs excessively due to wind or poor tuning, the pilot’s brain receives conflicting signals: the eyes see movement that the inner ear does not feel.

Imaging systems now utilize “Horizon Leveling” features to mitigate this. By keeping the horizon perfectly flat while allowing for slight lateral “bobbing,” manufacturers can maintain the feeling of flight without inducing the nausea associated with erratic camera movements. This balance is critical for long-range FPV missions where the pilot may be wearing a headset for extended periods.
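Horizon leveling counter-rotates each frame by the measured roll angle, which means it must zoom in so the rotated crop never reveals empty corners. The required zoom follows from a bounding-box argument: a crop of size (w/z, h/z) rotated by θ spans (w/z)·cos θ + (h/z)·sin θ horizontally and (w/z)·sin θ + (h/z)·cos θ vertically, and both spans must fit inside the w × h frame. The hypothetical helper below computes the smallest such z for roll angles up to 90 degrees.

```python
import math


def horizon_level_zoom(roll_deg, width, height):
    """Smallest zoom factor that lets a width x height frame be counter-rotated
    by roll_deg (|roll_deg| <= 90) without exposing empty corners.
    """
    theta = math.radians(abs(roll_deg))
    c, s = math.cos(theta), math.sin(theta)
    # A crop of size (width/z, height/z) rotated by theta must fit inside the frame.
    zoom_for_width = (width * c + height * s) / width
    zoom_for_height = (width * s + height * c) / height
    return max(zoom_for_width, zoom_for_height)


for roll in (0, 5, 10, 20, 30):
    print(f"roll {roll:>2} deg -> zoom {horizon_level_zoom(roll, 1920, 1080):.3f}x")
```

For a 1920×1080 frame, 10 degrees of roll already requires about 1.29× zoom, which helps explain why horizon-leveling modes trade field of view for a stable horizon.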

Advanced Post-Processing: Using AI to Refine Camera Motion

As we look toward the future of drone imaging, the management of view bobbing is moving from the hardware on the drone to the software in the editing suite. This transition allows for even more control over the “rhythm” of the footage.

Gyroflow and Post-Flight Stabilization

A significant innovation in the FPV and drone community is Gyroflow, an open-source stabilization application. Instead of stabilizing the image during flight, the camera or flight controller logs raw gyroscope data, either embedded in the video file's metadata or in a separate log file. In post-production, Gyroflow uses this data to smooth out the “view bobbing” with surgical precision.

The advantage is that the filmmaker can choose exactly how much bobbing to keep. For a high-action scene, they might keep around 20% of the movement to preserve a sense of grit and realism; for a commercial real estate shot, they would dial it down to 0% for a perfectly smooth glide. This control turns what was once a mechanical limitation into a creative tool.
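The “how much bobbing to keep” control can be illustrated with a smoothing pass followed by a partial add-back of the removed motion. This is a hypothetical sketch, not Gyroflow's implementation (Gyroflow provides its own smoothing algorithms and parameters); here `keep=0.2` corresponds to retaining 20% of the removed movement and `keep=0.0` to a fully smoothed path.

```python
def smooth_with_residual(angles, keep=0.2, alpha=0.1):
    """Smooth a camera-angle track, then add back a fraction of the removed motion.

    keep=0.0 -> fully smoothed path; keep=1.0 -> original motion untouched.
    alpha is the weight of each new sample in the exponential moving average.
    """
    if not angles:
        return []
    out = []
    smoothed = angles[0]
    for a in angles:
        smoothed += alpha * (a - smoothed)          # exponential moving average (low-pass)
        out.append(smoothed + keep * (a - smoothed))  # re-inject a share of the jitter
    return out


# Hypothetical jittery roll track, in degrees.
track = [0.0, 3.0, -2.0, 4.0, 1.0, -1.0]
print([round(x, 2) for x in smooth_with_residual(track, keep=0.2)])
```

A one-directional moving average lags behind genuine camera moves; because post-processing has the whole clip available, offline tools can also smooth forward and backward in time to avoid that lag.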

The Future of Dynamic Perspective

With the integration of AI, future drone cameras may be able to distinguish between different types of movement. Imagine a camera system that recognizes the “bobbing” caused by wind and removes it, while keeping the “bobbing” caused by a pilot's intentional banking turn. Trained on large volumes of flight data, such systems could become “context-aware,” so that the view bobbing the viewer sees is intentional rather than the result of technical failure.
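A learned model is beyond a short example, but the underlying idea can be shown with a rule-based stand-in: compare the rotation the pilot commanded with the rotation the gyro measured, keep the commanded part, and treat the remainder as disturbance to remove. The sketch assumes a linear stick-to-rate mapping with a hypothetical 600 deg/s maximum; real flight controllers use configurable, usually non-linear rate curves, and the residual here would also include the drone's normal lag in tracking the command.

```python
def split_roll_motion(gyro_roll_dps, stick_inputs, max_rate_dps=600.0):
    """Split measured roll rate into pilot-commanded and residual parts.

    gyro_roll_dps: measured roll rate per sample (deg/s)
    stick_inputs: roll stick deflection per sample, from -1.0 to 1.0
    Assumes a linear rate profile: full deflection requests +/- max_rate_dps.
    """
    commanded, residual = [], []
    for measured, stick in zip(gyro_roll_dps, stick_inputs):
        cmd = stick * max_rate_dps      # rotation the pilot asked for
        commanded.append(cmd)           # keep: intentional banking
        residual.append(measured - cmd)  # remove: wind and other disturbance
    return commanded, residual


# A gust while hovering, then a banking turn with a little extra wobble.
cmd, dist = split_roll_motion([100.0, 310.0], [0.0, 0.5])
print(cmd, dist)
```

A stabilizer built on this split would integrate only the residual and counter-rotate the frame by it, leaving the commanded bank visible to the viewer.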

Conclusion: Balancing Stability and Realism

What started as a simple visual toggle in a video game has profound implications in the world of drone technology. View bobbing—the rhythmic movement of the camera—is a fundamental element of how we perceive motion in a digital or remote-controlled space.

In the field of cameras and imaging, the goal is rarely to just “turn off” view bobbing, but rather to master it. Through the use of 3-axis gimbals, sophisticated EIS, and post-processing software like Gyroflow, the modern drone operator has more power than ever to dictate the viewer’s experience. Whether the goal is the clinical perfection of a stabilized cinematic pan or the visceral, bobbing rush of a 100-mph FPV dive, understanding the “view bob” is key to capturing the sky.