In drone technology, “variability” refers to the extent to which data points or system behaviors deviate from a central tendency, an expected outcome, or a consistent pattern. It is the inherent lack of perfect uniformity or predictability in any measurement, process, or performance. For advanced drone applications, from AI-driven autonomous flight to high-precision mapping and remote sensing, understanding, quantifying, and managing variability is not merely an academic exercise; it is fundamental to ensuring reliability, accuracy, and safety.

Variability manifests in countless ways: sensor readings might fluctuate due to environmental factors, an AI algorithm’s performance could differ based on input data nuances, or an autonomous drone’s flight path might deviate subtly from its planned trajectory. Ignoring variability leads to brittle systems, unreliable data, and ultimately, a failure to meet operational objectives. Conversely, a deep comprehension of variability empowers engineers and developers to design more robust systems, refine algorithms, and build truly innovative solutions that can thrive in complex, unpredictable real-world environments. This article delves into the multifaceted nature of variability within drone tech and innovation, exploring its sources, impacts, and the sophisticated strategies employed to mitigate its challenges.

Understanding Variability in Data, Sensors, and the Environment

The foundation of any intelligent drone system lies in its ability to gather, interpret, and act upon data. However, this data is rarely pristine; it is constantly influenced by various forms of variability. Recognizing these sources is the first step toward building resilient and accurate drone technologies.

Sensor Noise and Drift

Drones rely on a sophisticated array of sensors – GPS, IMUs (Inertial Measurement Units comprising accelerometers and gyroscopes), magnetometers, barometers, LiDAR, cameras, and more – to perceive their environment and determine their own state. Each of these sensors is susceptible to inherent noise. Sensor noise refers to random fluctuations in the electrical signal generated by the sensor, leading to slight inaccuracies in the measured values. This noise can be electronic (thermal noise, shot noise) or mechanical. For instance, an IMU might report slightly different accelerations or angular velocities even when the drone is perfectly still.

Beyond random noise, sensors also experience drift. Drift is a slow, gradual change in a sensor’s output over time, even under constant input conditions. This can be due to temperature changes, aging components, or other environmental factors. A gyroscope, for example, might report a small, persistent angular velocity when none exists, leading to accumulated errors in attitude estimation over longer flight durations. Managing sensor noise and drift is critical for maintaining accurate navigation and stable flight.
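The difference between noise and drift can be illustrated with a short simulation. This is a minimal sketch, assuming a stationary gyroscope with a hypothetical white-noise level and a constant bias (the numbers are illustrative, not taken from any datasheet). The noise averages out, while the bias accumulates when the rate is integrated into an angle.

```python
import random
import statistics

def simulate_stationary_gyro(duration_s, rate_hz, noise_std_dps, bias_dps, seed=0):
    """Return (samples, integrated_angle_deg) for a gyro that is not rotating.

    All parameter values are illustrative assumptions, not datasheet figures.
    """
    rng = random.Random(seed)
    dt = 1.0 / rate_hz
    samples = []
    angle_deg = 0.0
    for _ in range(int(duration_s * rate_hz)):
        # The true angular rate is 0 deg/s; the sensor reports bias plus noise.
        reading = bias_dps + rng.gauss(0.0, noise_std_dps)
        samples.append(reading)
        angle_deg += reading * dt  # naive integration of angular rate
    return samples, angle_deg

samples, heading_error = simulate_stationary_gyro(
    duration_s=600, rate_hz=100, noise_std_dps=0.05, bias_dps=0.01
)
print(f"sample std (noise): {statistics.stdev(samples):.3f} deg/s")
print(f"sample mean (bias): {statistics.mean(samples):.4f} deg/s")
print(f"heading error after 10 min: {heading_error:.2f} deg")
```

With these assumed values, a bias of only 0.01 deg/s integrates to roughly 6 degrees of attitude error over ten minutes, even though each individual reading looks nearly correct.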

Environmental Factors and Contextual Data Inconsistencies

The operational environment introduces a significant layer of variability. A drone operating outdoors is subject to winds, temperature fluctuations, humidity, and varying light conditions. Wind gusts can instantaneously alter a drone’s position and orientation, requiring active compensation from the flight controller. Temperature affects battery performance, sensor calibration, and even the air density, which influences propeller efficiency. Humidity can impact sensor performance, particularly optical or chemical sensors.

Furthermore, contextual data, such as GPS signals, can be highly variable. Satellite availability, multipath interference (signals bouncing off buildings or terrain), and atmospheric conditions can all degrade GPS accuracy, leading to position errors. In urban canyons or dense foliage, GPS signals might be intermittent or entirely lost. For mapping and remote sensing, changes in lighting conditions, shadows, and reflective surfaces introduce variability in captured imagery, challenging computer vision algorithms. Understanding and modeling these environmental variables are crucial for developing robust drone systems that can perform reliably across diverse conditions.
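The temperature effect on air density mentioned above can be estimated with the ideal gas law. The sketch below assumes dry air at constant sea-level pressure, and it assumes that thrust at a fixed propeller speed scales linearly with air density (a common first-order propeller model, not a measured result for any particular drone).

```python
R_DRY_AIR = 287.05  # specific gas constant of dry air, J/(kg*K)

def air_density(pressure_pa, temperature_c):
    """Dry-air density from the ideal gas law, in kg/m^3."""
    return pressure_pa / (R_DRY_AIR * (temperature_c + 273.15))

cool = air_density(101_325, 15.0)  # standard sea-level day
hot = air_density(101_325, 35.0)   # hot day, same pressure (assumption)

# Assumption: thrust at a fixed rpm is proportional to air density.
thrust_ratio = hot / cool

print(f"density at 15 C: {cool:.3f} kg/m^3")
print(f"density at 35 C: {hot:.3f} kg/m^3")
print(f"thrust at same rpm: {thrust_ratio:.1%} of the 15 C value")
```

Under these assumptions a 20 degree rise costs about 6 to 7 percent of thrust at the same motor speed, which the flight controller has to make up with higher throttle and therefore more battery draw.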

Data Inconsistencies for AI and Machine Learning

Artificial intelligence and machine learning models, particularly those powering features like AI follow mode, object detection, and autonomous navigation, are highly dependent on the quality and consistency of their training data. Variability in this training data can lead to models that generalize poorly or exhibit unpredictable behavior in real-world scenarios.

Inconsistencies can arise from various sources: variations in camera angles, lighting conditions, object poses, or occlusions during data collection. If an AI follow mode model is primarily trained on data where subjects move predictably in open spaces, it might struggle when encountering erratic movements or complex backgrounds. Similarly, for obstacle avoidance, if the training data lacks sufficient diversity of obstacle types or environmental conditions, the drone might fail to detect novel obstructions. Addressing this requires extensive, diverse, and carefully curated datasets, along with robust data augmentation techniques to introduce simulated variability and improve model resilience.
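As a rough illustration of data augmentation, the following sketch applies a random brightness gain and a random horizontal flip to a tiny grayscale image stored as nested lists. The function name, gain range, and flip probability are hypothetical choices for illustration; production pipelines typically use an image library and a wider set of transforms.

```python
import random

def augment(image, rng):
    """Return a randomly flipped and brightness-scaled copy of a grayscale image.

    image: list of rows, each a list of integer pixel values in 0..255.
    The gain range and flip probability are illustrative assumptions.
    """
    gain = rng.uniform(0.6, 1.4)   # simulate darker or brighter lighting
    flip = rng.random() < 0.5      # simulate the mirrored viewpoint
    augmented = []
    for row in image:
        new_row = [min(255, max(0, round(pixel * gain))) for pixel in row]
        if flip:
            new_row.reverse()
        augmented.append(new_row)
    return augmented

rng = random.Random(42)
frame = [[0, 100, 200],
         [50, 150, 250]]
variants = [augment(frame, rng) for _ in range(3)]
for variant in variants:
    print(variant)
```

For detection or tracking tasks, any geometric transform such as the flip must also be applied to the labels (for example bounding boxes); otherwise the augmentation introduces label errors instead of useful variability.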

Variability in Autonomous Systems and AI

The promise of fully autonomous drones hinges on their ability to make intelligent decisions and execute complex tasks without constant human intervention. However, the inherent variability in inputs, environmental conditions, and system interactions presents significant challenges to achieving truly consistent and reliable autonomous operation.

Path Planning and Execution Deviations

Autonomous flight relies on sophisticated path planning algorithms to generate trajectories that navigate the drone from one point to another while avoiding obstacles. However, the actual execution of these planned paths is rarely perfect. Variability here can stem from several sources:

- Environmental Disturbances: Wind gusts, air density changes, or unexpected thermal currents can push the drone off its intended course, requiring the flight controller to make continuous adjustments.

- Control System Limitations: Even the most advanced PID controllers have inherent response times and limitations, meaning the drone might overshoot or undershoot desired positions, especially during rapid maneuvers.

- Localization Errors: Inaccuracies in the drone’s estimated position (due to GPS variability or IMU drift) mean the drone might think it’s on the path when it’s slightly off, leading to cumulative deviations.

- Dynamic Environments: In scenarios with moving obstacles (other drones, vehicles, wildlife), the planned path might become obsolete, necessitating real-time replanning, which itself can introduce variability in the new trajectory.

These deviations, while often small, can accumulate over time or become significant in high-precision tasks, impacting mission success and safety.
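Two of the sources listed above, control overshoot and wind disturbance, can be seen in a toy simulation. This is a minimal sketch assuming a 1 kg point mass moving along one axis under a PID position loop; the gains, mass, and gust profile are illustrative assumptions, not tuned values for a real airframe.

```python
def simulate_position_hold(kp=4.0, ki=1.0, kd=3.0, setpoint=1.0,
                           duration_s=40.0, dt=0.01):
    """Simulate a 1-D point-mass drone (1 kg) holding a position with PID.

    A 2 N gust pushes the drone between t = 15 s and t = 16 s.
    Returns the list of positions, one per time step.
    """
    mass = 1.0
    x, v, integral = 0.0, 0.0, 0.0
    trajectory = []
    for step in range(int(duration_s / dt)):
        t = step * dt
        error = setpoint - x
        integral += error * dt
        # The derivative term acts on measured velocity to avoid a setpoint kick.
        thrust = kp * error + ki * integral - kd * v
        gust = 2.0 if 15.0 <= t < 16.0 else 0.0
        v += (thrust + gust) / mass * dt
        x += v * dt
        trajectory.append(x)
    return trajectory

trajectory = simulate_position_hold()
print(f"peak position before the gust: {max(trajectory[:1400]):.3f} m")
print(f"peak position overall:         {max(trajectory):.3f} m")
print(f"final position:                {trajectory[-1]:.3f} m")
```

The controller eventually settles on the 1 m setpoint, but it overshoots on the way there and is pushed off again by the gust. Changing the gains trades overshoot against how quickly disturbances are rejected.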

AI Model Performance Fluctuations (e.g., AI Follow Mode)

AI-powered features like “AI Follow Mode” are designed to intelligently track a moving subject. The performance of such models, however, can fluctuate significantly depending on the real-world conditions.

- Subject Variability: Different subjects move at different speeds, in varying patterns, and wear diverse clothing. An AI trained predominantly on uniform movement might struggle with unpredictable changes in direction or speed.

- Environmental Changes: Lighting conditions (bright sunlight, shadows, dusk), background clutter, and occlusions (subject moving behind trees or buildings) can all degrade the AI’s ability to accurately detect and track the target.

- Algorithm Robustness: The underlying algorithms might have varying levels of robustness to noise or partial occlusions. A sudden change in appearance (e.g., a subject putting on a hat) might temporarily confuse the tracker.

These fluctuations mean that while AI Follow Mode might work flawlessly in a controlled park environment, its performance could be inconsistent in a dense urban setting or a complex natural landscape, impacting the quality of the captured footage or the reliability of the tracking.

Decision-Making Under Uncertainty

At the heart of autonomous systems is the ability to make decisions. In a perfectly predictable world, this would be straightforward. However, due to inherent variability in sensor data, environmental conditions, and the behavior of other agents, drones must often make decisions under uncertainty.

- Probabilistic Assessments: Instead of definitive statements, sensors might provide probabilistic information (e.g., “there’s an obstacle with 80% certainty at this location”). Autonomous systems must weigh these probabilities to make the safest and most efficient decision.

- Ambiguous Situations: An object might be partially obscured, making its identity or trajectory uncertain. Should the drone proceed cautiously, alter its path, or pause? The decision-making process must account for this ambiguity.

- Conflicting Information: Different sensors might provide slightly conflicting information (e.g., GPS says one thing, visual odometry another). The system needs robust methods (like Kalman filters or sensor fusion) to reconcile these discrepancies and make a coherent decision.

Variability in the information available to the drone directly translates into variability in its decision-making process, demanding sophisticated algorithms that can reason and act effectively in the face of incomplete or uncertain data.
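One simple way to reason with probabilistic sensor output is a Bayes update followed by a threshold-based decision. The sketch below assumes a hypothetical obstacle detector with a known hit rate and false-alarm rate, and assumes successive readings are independent given the true state; the thresholds and action names are illustrative only.

```python
def update_obstacle_probability(prior, detected,
                                hit_rate=0.9, false_alarm_rate=0.2):
    """Bayes update of P(obstacle) after one detector reading.

    hit_rate: P(detection | obstacle); false_alarm_rate: P(detection | clear).
    Both rates are assumed values for a hypothetical sensor.
    """
    if detected:
        like_obstacle, like_clear = hit_rate, false_alarm_rate
    else:
        like_obstacle, like_clear = 1.0 - hit_rate, 1.0 - false_alarm_rate
    numerator = like_obstacle * prior
    return numerator / (numerator + like_clear * (1.0 - prior))

def choose_action(p_obstacle, avoid_above=0.8, slow_above=0.3):
    """Map a belief to an action using illustrative thresholds."""
    if p_obstacle >= avoid_above:
        return "replan"
    if p_obstacle >= slow_above:
        return "slow_down"
    return "proceed"

belief = 0.5  # no prior knowledge about the cell ahead
for reading in (True, True, False):
    belief = update_obstacle_probability(belief, reading)
    print(f"detected={reading}: P(obstacle)={belief:.3f} -> {choose_action(belief)}")
```

The belief rises with each detection and falls again after a miss, so a single contradictory reading softens the decision rather than flipping it outright.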

Impact of Variability on Advanced Drone Applications

The consequences of unmanaged variability reverberate across all advanced drone applications, directly influencing their accuracy, reliability, safety, and overall utility.

Mapping and Remote Sensing Accuracy

For applications like photogrammetry, 3D mapping, agricultural surveying, or infrastructure inspection, accuracy is paramount. Variability directly undermines this.

- Positional Errors: Inaccurate GPS data (due to variability) during image capture translates directly into positional errors in the generated maps or 3D models. If the drone’s reported position is off by a meter, every point georeferenced from that exposure inherits roughly the same one-meter shift.

- Image Georeferencing Issues: Even with precise GPS, if the drone’s attitude (roll, pitch, yaw) is estimated with variability (e.g., from IMU drift), the angles at which images are captured will be slightly incorrect, leading to distortions and misalignments when stitching images together.

- Sensor Calibration Drift: For LiDAR or multispectral sensors, changes in environmental temperature can subtly alter sensor calibration, leading to variability in the intensity or spectral data collected, which can affect analysis results (e.g., vegetation health indices).

These inaccuracies can render maps unreliable for critical decision-making, compromise the quality of 3D models for construction or simulation, or lead to incorrect assessments in agricultural or environmental monitoring.
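The size of these errors can be estimated with basic trigonometry. The sketch below assumes a nadir-pointing camera over flat terrain, so an attitude error of a given angle displaces the image center on the ground by altitude times the tangent of that angle. It also assumes the position and attitude errors are independent, so they combine as a root sum of squares. The function names are hypothetical.

```python
import math

def ground_error_from_tilt(altitude_m, attitude_error_deg):
    """Ground displacement of the image center caused by an attitude error.

    Assumes a nadir-pointing camera over flat terrain.
    """
    return altitude_m * math.tan(math.radians(attitude_error_deg))

def combined_horizontal_error(position_error_m, altitude_m, attitude_error_deg):
    """Root-sum-square of position and tilt errors (assumed independent)."""
    tilt_error = ground_error_from_tilt(altitude_m, attitude_error_deg)
    return math.hypot(position_error_m, tilt_error)

tilt = ground_error_from_tilt(altitude_m=100.0, attitude_error_deg=1.0)
total = combined_horizontal_error(position_error_m=1.0, altitude_m=100.0,
                                  attitude_error_deg=1.0)
print(f"ground error from 1 deg tilt at 100 m: {tilt:.2f} m")
print(f"combined with 1 m position error:      {total:.2f} m")
```

At 100 m altitude, a one-degree attitude error alone moves the footprint by about 1.75 m, which is larger than the one-meter position error in this example.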

Reliability of Autonomous Operations

The ultimate goal of many innovative drone applications is fully autonomous, reliable operation. Variability is a primary hurdle.

- Mission Success Rates: If an autonomous inspection drone frequently veers off its precise flight path due to wind variability, or if its AI object detection system occasionally fails to identify a critical anomaly due to lighting variability, the overall mission success rate will suffer.

- Predictability: For drones to integrate safely into airspace or operate alongside human workers, their behavior must be highly predictable. Unmanaged variability leads to unpredictable behavior, which erodes trust and limits the scope of autonomous deployment.

- Edge Cases: Variability often exposes “edge cases” – unusual but plausible scenarios where the drone’s system might fail. A robust autonomous system must be designed to handle a wide range of variable conditions, not just the typical ones.

Safety and Compliance Challenges

Perhaps the most critical impact of variability is on safety and regulatory compliance.

- Collision Risk: If obstacle avoidance sensors exhibit high variability in their detection range or accuracy, the risk of collisions with unforeseen obstacles increases dramatically.

- Geo-fencing Violations: Autonomous drones must adhere to virtual boundaries (geo-fences). Variability in GPS positioning or flight path execution could inadvertently lead to a drone drifting outside an approved operating zone, posing safety risks and violating regulations.

- Regulatory Hurdles: Aviation authorities worldwide are developing regulations for autonomous drone operations. Demonstrating a low level of variability in performance and a high degree of predictability is essential for obtaining flight certifications and expanding operational permissions. For instance, achieving “Type Certification” for autonomous package delivery drones requires rigorous proof that the drone will perform consistently and safely across diverse conditions. Uncontrolled variability makes such certification incredibly difficult.
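One common way to account for positioning variability near a geo-fence is to shrink the usable area by a safety margin derived from the position uncertainty. This is a minimal sketch assuming a circular fence in local metric coordinates and a single standard deviation for horizontal position error; the function name and the choice of a three-sigma margin are illustrative assumptions, not a regulatory requirement.

```python
import math

def inside_fence_with_margin(x_m, y_m, fence_radius_m,
                             position_sigma_m, k_sigma=3.0):
    """Return True if the drone is inside the fence even after allowing
    for k_sigma standard deviations of horizontal position error.

    Coordinates are relative to the fence center (hypothetical local frame).
    """
    distance = math.hypot(x_m, y_m)
    margin = k_sigma * position_sigma_m
    return distance + margin <= fence_radius_m

# 100 m fence, 2 m position sigma: the usable radius shrinks to 94 m.
print(inside_fence_with_margin(0.0, 90.0, 100.0, 2.0))  # True
print(inside_fence_with_margin(0.0, 95.0, 100.0, 2.0))  # False
```

The larger the positioning variability, the more of the approved zone has to be given up as buffer, which is one concrete way that sensor quality limits operational scope.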

Strategies for Mitigating and Managing Variability

Given the pervasive nature and critical impacts of variability, significant innovation in drone technology is dedicated to mitigating and managing it. These strategies range from sophisticated data processing to advanced control engineering and rigorous testing.

Advanced Sensor Fusion and Filtering

A cornerstone of managing sensor variability is sensor fusion. Instead of relying on a single sensor, drone systems integrate data from multiple, complementary sensors (e.g., GPS, IMU, barometer, vision sensors, LiDAR). Algorithms like the Extended Kalman Filter (EKF), Unscented Kalman Filter (UKF), or Particle Filters are used to combine these diverse inputs, estimate the drone’s state (position, velocity, attitude) more accurately, and filter out noise and drift. If GPS temporarily becomes unreliable, the IMU and vision sensors can help maintain an accurate position estimate, compensating for the GPS variability. Similarly, complementary filters can combine high-rate IMU data, which is smooth over short intervals but drifts over time, with lower-rate GPS data, which is noisier from sample to sample but does not drift, to provide a robust and smooth state estimate.
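The complementary-filter idea can be sketched in a few lines. The example below applies it to the common case of estimating pitch from a gyroscope and an accelerometer rather than the IMU and GPS pairing described above; the structure is the same, with one source trusted over short time scales and the other over long ones. The drone is assumed stationary at zero pitch, and the bias, noise levels, and blend factor are illustrative assumptions.

```python
import random

def run_pitch_filters(duration_s=60.0, rate_hz=100, gyro_bias_dps=0.5,
                      gyro_noise_dps=0.05, accel_noise_deg=2.0,
                      alpha=0.98, seed=0):
    """Compare gyro-only integration with a complementary filter.

    The true pitch is 0 degrees throughout. Returns (gyro_only_deg, fused_deg).
    """
    rng = random.Random(seed)
    dt = 1.0 / rate_hz
    gyro_only = 0.0
    fused = 0.0
    for _ in range(int(duration_s * rate_hz)):
        gyro_rate = gyro_bias_dps + rng.gauss(0.0, gyro_noise_dps)  # drifts
        accel_pitch = rng.gauss(0.0, accel_noise_deg)  # noisy, but no drift
        gyro_only += gyro_rate * dt
        # Trust the gyro over short time scales and the accelerometer over long ones.
        fused = alpha * (fused + gyro_rate * dt) + (1.0 - alpha) * accel_pitch
    return gyro_only, fused

gyro_only, fused = run_pitch_filters()
print(f"gyro-only pitch error after 60 s: {gyro_only:.1f} deg")
print(f"complementary filter pitch error: {fused:.2f} deg")
```

With these assumed values the integrated gyro drifts by roughly 30 degrees in a minute, while the fused estimate stays within a fraction of a degree. A Kalman filter pursues the same goal but computes the blend weight from modeled noise statistics instead of a fixed `alpha`.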

Robust Control Algorithms and Adaptive Systems

To counteract environmental variability and maintain stable flight, drone systems employ robust control algorithms. Proportional-Integral-Derivative (PID) controllers are common, but more advanced techniques like Model Predictive Control (MPC) or the Linear Quadratic Regulator (LQR) are used for complex maneuvers or in systems requiring higher precision and stability. These controllers are designed to compensate for external disturbances (like wind) and internal system variability (like motor efficiency changes).

Furthermore, adaptive control systems can learn and adjust their parameters in real-time to cope with changing conditions. For instance, an adaptive controller might modify its response to wind as it learns the drone’s aerodynamic characteristics in varying wind speeds. This allows the drone to maintain desired performance levels even as its environment or its own physical characteristics (e.g., payload weight) change.
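A very simple form of adaptation is estimating a changing physical parameter online and feeding it back into the controller. The sketch below is not a full adaptive control law. It assumes a hypothetical vertical-axis model in which thrust minus weight equals mass times acceleration, and it smooths the resulting mass estimate exponentially so that the hover thrust converges after a payload change. All values are illustrative.

```python
import random

G = 9.81  # gravitational acceleration, m/s^2

def adapt_hover_thrust(true_mass_kg, initial_mass_kg, steps=300,
                       gain=0.05, accel_noise=0.2, seed=0):
    """Estimate total mass online from commanded thrust and measured
    vertical acceleration, then return the final mass estimate.

    Model assumption: thrust - m*g = m*a, so m = thrust / (g + a).
    """
    rng = random.Random(seed)
    mass_hat = initial_mass_kg
    for _ in range(steps):
        thrust = mass_hat * G                    # feedforward hover thrust
        true_accel = thrust / true_mass_kg - G   # what the airframe does
        measured_accel = true_accel + rng.gauss(0.0, accel_noise)
        denominator = G + measured_accel
        if denominator > 1.0:                    # skip near-free-fall samples
            instantaneous = thrust / denominator
            mass_hat += gain * (instantaneous - mass_hat)  # exponential smoothing
    return mass_hat

# A 0.3 kg payload is attached, but the controller still assumes 1.2 kg.
estimate = adapt_hover_thrust(true_mass_kg=1.5, initial_mass_kg=1.2)
print(f"estimated mass: {estimate:.2f} kg")
print(f"adapted hover thrust: {estimate * G:.1f} N")
```

The smoothing gain sets the trade-off: a larger value adapts faster to a payload change but passes more accelerometer noise into the thrust command.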

Machine Learning for Anomaly Detection and Prediction

Machine learning plays an increasingly vital role in managing variability, particularly in detecting anomalies and predicting future behavior.

- Anomaly Detection: ML models can be trained to recognize unusual sensor readings, unexpected deviations from a planned trajectory, or irregular system behaviors that might indicate a problem caused by variability. This allows for early intervention or warnings.

- Predictive Maintenance: By analyzing historical data, ML can predict when a component (e.g., a motor, battery) might start exhibiting increased variability or failure characteristics, enabling proactive maintenance.

- Environmental Modeling: ML can be used to model complex environmental variables (like localized wind patterns based on terrain and weather data), providing the autonomous system with better context to anticipate and compensate for variability.

- AI Model Robustness: Techniques like adversarial training, where AI models are exposed to slightly perturbed or “adversarial” input data, help them become more robust to real-world variability and noise, improving their generalization capabilities.
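Before reaching for a trained model, anomaly detection is often prototyped with a statistical baseline. The sketch below is such a baseline rather than a machine learning model: it flags readings that lie more than a chosen number of standard deviations from a rolling window of recent values. The window size, threshold, and sample data are illustrative assumptions.

```python
from collections import deque
import statistics

def detect_anomalies(readings, window=20, threshold=4.0):
    """Return indices of readings that deviate strongly from recent history.

    A reading is flagged when it is more than `threshold` standard
    deviations away from the mean of the previous `window` normal readings.
    """
    history = deque(maxlen=window)
    anomalies = []
    for index, value in enumerate(readings):
        if len(history) == window:
            mean = statistics.fmean(history)
            std = statistics.pstdev(history)
            if std > 0 and abs(value - mean) > threshold * std:
                anomalies.append(index)
                continue  # keep the outlier out of the baseline
        history.append(value)
    return anomalies

# Synthetic barometer-style altitude readings with small regular ripple.
readings = [10.0 + 0.1 * ((i * 7) % 5 - 2) for i in range(60)]
readings[40] = 15.0  # injected spike
print(detect_anomalies(readings))
```

A learned model becomes worthwhile when normal behavior depends on context, such as flight phase or wind, that a single rolling window cannot capture.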

Rigorous Testing and Validation

Ultimately, the effectiveness of any variability mitigation strategy must be proven through rigorous testing and validation.

- Simulations: High-fidelity simulations allow engineers to test drone systems across a vast range of environmental conditions, sensor variabilities, and failure scenarios that would be impractical or dangerous to replicate in the real world. This helps identify weaknesses and refine algorithms.

- Hardware-in-the-Loop (HIL) Testing: This involves connecting actual drone hardware (flight controller, sensors) to a simulated environment. It provides a more realistic testbed than pure software simulation, accounting for real-world electronic noise and timing issues.

- Extensive Field Trials: Real-world flight testing across diverse geographical locations, weather conditions, and operational scenarios is indispensable. This helps uncover unforeseen interactions and validates the drone’s performance in unpredictable environments.

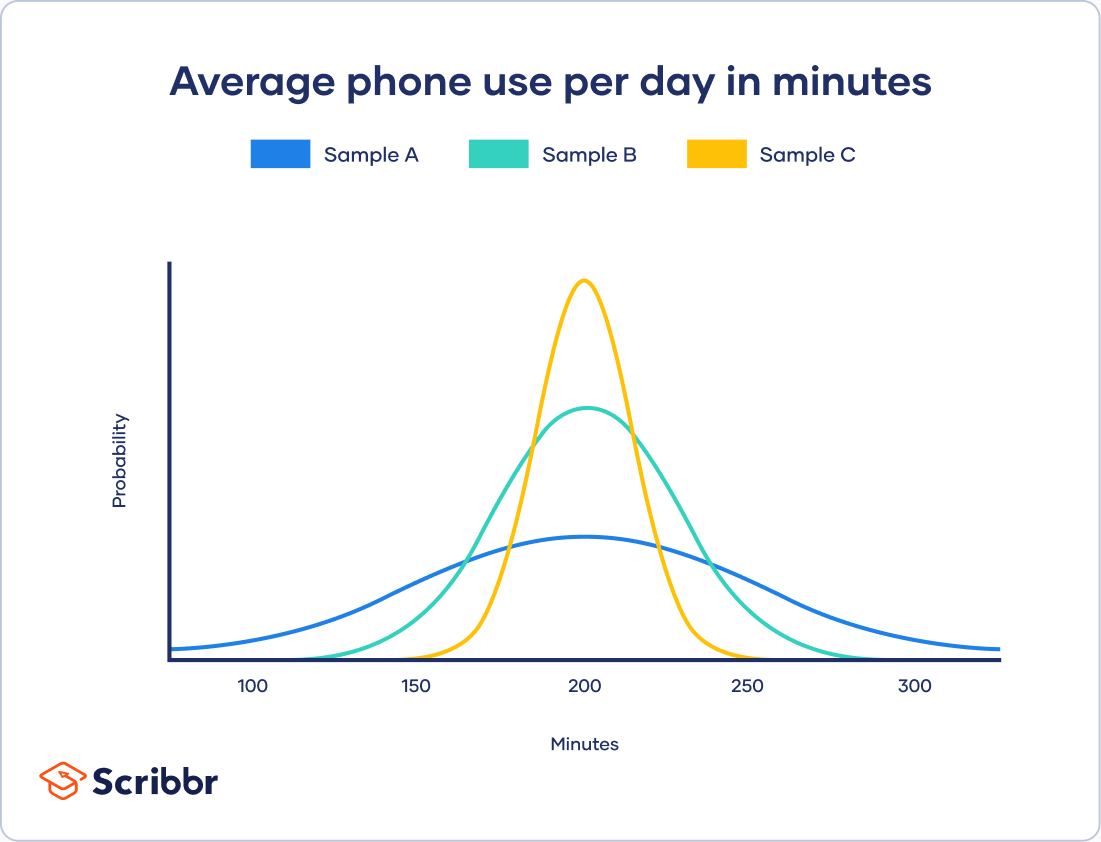

- Statistical Analysis: Collecting and statistically analyzing performance data from all testing phases helps quantify variability, identify performance bottlenecks, and provide empirical evidence for reliability and safety claims.
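The simulation and statistical analysis steps above can be combined in a small Monte Carlo sketch. It assumes a hypothetical precision-landing test in which the horizontal errors along each axis are independent and Gaussian with the same standard deviation. Real test data would replace the simulated list; the summary function stays the same.

```python
import math
import random
import statistics

def simulate_landing_errors(trials=2000, sigma_m=0.5, seed=0):
    """Simulate horizontal landing errors (meters) for repeated trials.

    Assumes independent Gaussian errors along x and y (illustrative only).
    """
    rng = random.Random(seed)
    return [math.hypot(rng.gauss(0.0, sigma_m), rng.gauss(0.0, sigma_m))
            for _ in range(trials)]

def summarize(errors):
    """Mean, spread, 95% confidence interval of the mean, and 95th percentile."""
    mean = statistics.fmean(errors)
    std = statistics.stdev(errors)
    half_width = 1.96 * std / math.sqrt(len(errors))  # normal approximation
    p95 = statistics.quantiles(errors, n=20)[-1]      # last of 19 cut points
    return {"mean": mean, "std": std,
            "ci95": (mean - half_width, mean + half_width), "p95": p95}

summary = summarize(simulate_landing_errors())
print(f"mean error: {summary['mean']:.2f} m (std {summary['std']:.2f} m)")
print(f"95% CI of mean: {summary['ci95'][0]:.2f} to {summary['ci95'][1]:.2f} m")
print(f"95th percentile: {summary['p95']:.2f} m")
```

Reporting a high percentile alongside the mean matters for safety claims, because the worst few percent of flights, not the average flight, determine whether a requirement is met.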

Conclusion

Variability is an intrinsic characteristic of the natural world and complex technological systems. Within the domain of drone tech and innovation, it is a constant companion, influencing everything from sensor accuracy and AI performance to autonomous reliability and overall safety. Far from being a mere nuisance, understanding and skillfully managing variability is a hallmark of truly advanced engineering. By employing sophisticated techniques like sensor fusion, robust control algorithms, predictive machine learning, and rigorous testing, developers are continuously pushing the boundaries of what drones can achieve. As drones become more integrated into our daily lives – from delivering packages and inspecting infrastructure to monitoring agriculture and facilitating emergency response – the mastery of variability will remain the defining factor in their continued evolution, ensuring their precision, reliability, and transformative impact on the future.