The digital landscape is characterized by an ever-increasing demand for computational power, agility, and seamless integration. As organizations grapple with the complexities of modern IT infrastructure, the concept of a unified computing system has emerged as a pivotal solution. Far from being a single piece of hardware, a unified computing system represents a paradigm shift in how computing resources are designed, deployed, and managed. It is an architectural approach that consolidates various computing components – servers, networking, storage, and management – into a single, cohesive, and intelligent platform. This integration aims to simplify operations, enhance efficiency, and unlock greater agility in responding to business needs.

At its core, a unified computing system seeks to break down traditional silos within data centers. Historically, servers, network switches, and storage arrays were separate entities, often managed by different teams using disparate tools. This fragmentation led to inefficiencies, increased operational overhead, and made it challenging to provision and scale resources dynamically. A unified computing system addresses these challenges by creating a highly integrated and software-defined environment where these components are tightly coupled and managed as a unified whole. This essay will delve into the fundamental principles, key components, benefits, and future implications of unified computing systems, highlighting their transformative potential for modern enterprises.

The Pillars of Unified Computing: Integration and Abstraction

The essence of a unified computing system lies in its ability to bring together disparate hardware and software functionalities into a unified, manageable fabric. This integration is not merely about physical proximity; it’s about creating a synergistic relationship that allows for intelligent resource allocation and streamlined operations.

Server and Network Convergence

One of the foundational aspects of unified computing is the convergence of server and network functionality. Traditionally, servers connected to the network through separate network interface cards (NICs) and external switches, with separate host bus adapters (HBAs) for Fibre Channel storage. In a unified system, this boundary is blurred. The convergence is often achieved through converged network adapters (CNAs), which carry both Ethernet and storage traffic over a single adapter, or through integrated network fabrics that handle both network traffic and storage I/O. This reduces the number of adapters and cables, simplifies cabling, and lowers power consumption. More importantly, it allows the network to be programmed and managed together with server resources, enabling more dynamic and efficient traffic management. For instance, network virtualization can be integrated directly with server virtualization, so that virtual networks are provisioned on demand and assigned to virtual machines. This removes much of the manual network configuration otherwise required for each new server or virtualized workload.
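The on-demand assignment of virtual networks to virtual machines can be sketched as a small controller that hands out VLAN IDs from a pool and binds each VM to a network, creating the network the first time it is requested. `FabricController`, its methods, and the 100 to 199 VLAN range are hypothetical illustrations, not any vendor's API; production systems may use overlay networks rather than VLANs.

```python
from dataclasses import dataclass, field


@dataclass
class FabricController:
    """Hypothetical unified control plane that owns a pool of VLAN IDs."""
    vlan_pool: range = range(100, 200)
    networks: dict[str, int] = field(default_factory=dict)     # network name -> VLAN ID
    attachments: dict[str, str] = field(default_factory=dict)  # VM name -> network name

    def create_network(self, name: str) -> int:
        # Idempotent: an existing network keeps its VLAN ID.
        if name in self.networks:
            return self.networks[name]
        used = set(self.networks.values())
        for vlan in self.vlan_pool:
            if vlan not in used:
                self.networks[name] = vlan
                return vlan
        raise RuntimeError("VLAN pool exhausted")

    def attach_vm(self, vm: str, network: str) -> int:
        # Provision the network on demand, then bind the VM's virtual NIC to it.
        vlan = self.create_network(network)
        self.attachments[vm] = network
        return vlan
```

For example, attaching `web-01` and `web-02` to `tenant-a` gives both VLAN 100, and a later `db-01` on `tenant-b` receives VLAN 101, with no per-VM switch configuration.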

Storage and Compute Interoperability

Similarly, unified computing systems emphasize the seamless interoperability of storage and compute. Instead of dedicated, siloed storage arrays, unified systems often incorporate technologies that bring storage closer to the compute or allow for unified management of storage resources. This can take the form of converged infrastructure appliances, where compute and storage are packaged together, or of software-defined storage (SDS) solutions that abstract the underlying storage hardware and present it as a unified pool of resources. The key is to let compute resources access and use storage efficiently, without the overhead of a separately managed storage area network (SAN) or the stranded capacity of direct-attached storage (DAS) that only one server can use. This allows for faster provisioning of storage for new applications and easier scaling of storage capacity as demand grows. Furthermore, features like data deduplication, compression, and thin provisioning can be managed at the system level, optimizing storage utilization across the entire unified environment.
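Thin provisioning and deduplication can be illustrated with a toy storage pool: volumes promise logical capacity without reserving physical space, and identical blocks are stored once, keyed by a content fingerprint. The 4 KiB block size, SHA-256 fingerprints, and the `StoragePool` class are assumptions for illustration only; real SDS products differ considerably, and this sketch does not reclaim blocks that are overwritten.

```python
import hashlib


class StoragePool:
    """Toy software-defined pool with thin provisioning and block-level dedup."""

    BLOCK = 4096  # assumed fixed block size in bytes

    def __init__(self, physical_bytes: int):
        self.physical_bytes = physical_bytes
        self.volumes: dict[str, int] = {}           # volume -> logical (promised) size
        self.blocks: dict[str, bytes] = {}          # fingerprint -> stored block
        self.maps: dict[tuple[str, int], str] = {}  # (volume, block index) -> fingerprint

    def create_volume(self, name: str, logical_bytes: int) -> None:
        # Thin provisioning: nothing is reserved physically at creation time.
        self.volumes[name] = logical_bytes

    def write(self, name: str, index: int, data: bytes) -> None:
        if (index + 1) * self.BLOCK > self.volumes[name]:
            raise ValueError("write beyond the volume's logical size")
        block = data.ljust(self.BLOCK, b"\0")[: self.BLOCK]
        fp = hashlib.sha256(block).hexdigest()
        if fp not in self.blocks:  # dedup: each unique block is stored once
            if self.used_bytes() + self.BLOCK > self.physical_bytes:
                raise RuntimeError("pool out of physical capacity")
            self.blocks[fp] = block
        self.maps[(name, index)] = fp

    def used_bytes(self) -> int:
        return len(self.blocks) * self.BLOCK

    def provisioned_bytes(self) -> int:
        return sum(self.volumes.values())
```

Two 10 MiB volumes can be created on a 1 MiB pool, and writing the same block to both consumes only 4 KiB of physical space.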

Key Components of a Unified Computing System

A unified computing system is not a monolithic entity but rather a carefully orchestrated collection of technologies working in concert. While specific implementations may vary, several core components are essential to its functionality.

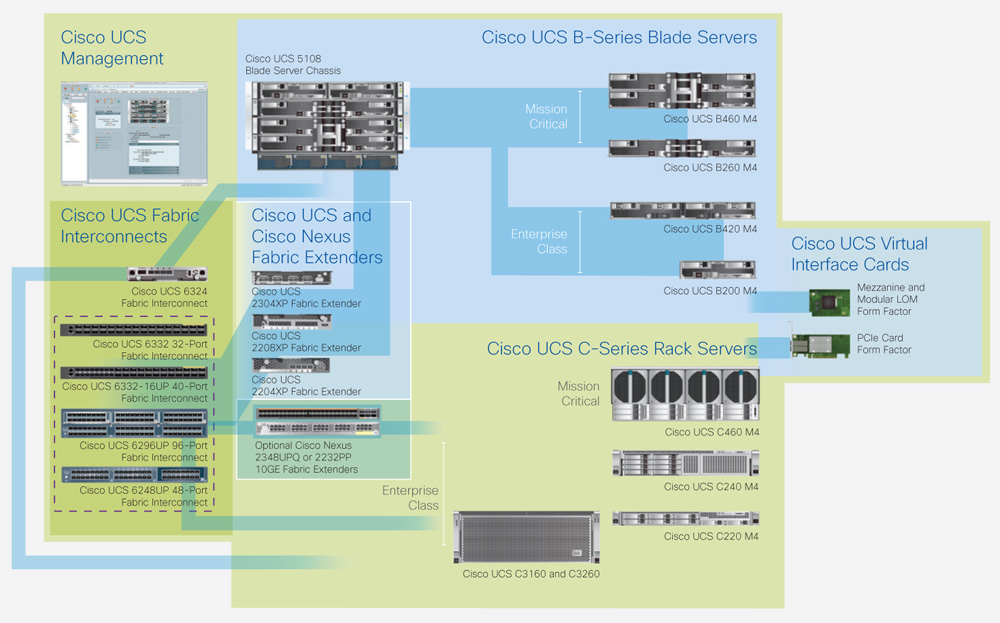

Converged Infrastructure and Blade Servers

Converged infrastructure represents a significant step towards unified computing. It typically involves pre-integrated bundles of compute, storage, and networking hardware designed to work together seamlessly. This often includes blade servers, which are compact, modular servers designed to slide into a chassis that provides shared power, cooling, and network connectivity. Blade server chassis act as a unified platform for multiple server blades, simplifying management and reducing the physical footprint. The network connectivity within the chassis can be highly integrated, with virtual switches and fabric interconnects that manage traffic flow between blades and to the external network. This approach consolidates physical hardware, reduces the complexity of rack-and-stack operations, and streamlines deployment. Because the chassis pools power supplies and cooling fans across all of its blades, it can provide redundancy with fewer units than standalone servers would need, which improves energy efficiency and can allow a failed supply or fan to be replaced without taking blades offline.
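A rough power-supply count shows why pooling helps. The sketch assumes N+1 redundancy for a shared chassis and a redundant pair (1+1) for each standalone server; the wattages in the example are hypothetical, not figures for any real product.

```python
import math


def psus_for_chassis(blade_watts: list[int], psu_watts: int, spares: int = 1) -> int:
    """Supplies a shared chassis needs: enough to carry the total load, plus spares."""
    load = sum(blade_watts)
    return math.ceil(load / psu_watts) + spares


def psus_for_rack_servers(server_count: int, psus_per_server: int = 2) -> int:
    """Assumes each standalone server carries its own redundant pair."""
    return server_count * psus_per_server
```

With eight hypothetical 350 W blades and 2,500 W supplies, the chassis needs three supplies for N+1 coverage, whereas eight standalone servers with redundant pairs need sixteen.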

Software-Defined Control Plane and Management

Perhaps the most critical element of a truly unified computing system is its software-defined control plane and comprehensive management layer. This is where the intelligence of the system resides. It abstracts the underlying physical hardware, allowing for programmatic control and automation. This management layer provides a single pane of glass for monitoring, configuring, and orchestrating all aspects of the unified system, including servers, network ports, storage allocation, and virtual machines. Through APIs (Application Programming Interfaces), this control plane can be integrated with higher-level orchestration tools and cloud management platforms, enabling true automation of IT operations. For instance, a developer could request a specific compute and storage configuration for an application through a self-service portal, and the unified computing system would automatically provision the necessary resources without manual intervention from IT administrators. This software-defined approach fosters agility, reduces the potential for human error, and allows for rapid adaptation to changing business requirements.
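The self-service flow can be sketched as a request object submitted through an API and checked against free capacity before all resources are reserved in a single step. `ProvisionRequest`, `ResourcePools`, and `provision` are hypothetical names for illustration; they do not model any particular product's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProvisionRequest:
    """What a self-service portal might submit (hypothetical schema)."""
    app: str
    vcpus: int
    memory_gib: int
    storage_gib: int
    network: str


@dataclass
class ResourcePools:
    vcpus: int
    memory_gib: int
    storage_gib: int


def provision(req: ProvisionRequest, pools: ResourcePools) -> dict:
    """Validate a request against free capacity, then reserve everything at once."""
    shortfalls = [
        name
        for name, need, free in (
            ("vcpus", req.vcpus, pools.vcpus),
            ("memory_gib", req.memory_gib, pools.memory_gib),
            ("storage_gib", req.storage_gib, pools.storage_gib),
        )
        if need > free
    ]
    if shortfalls:
        raise ValueError(f"insufficient capacity: {', '.join(shortfalls)}")
    # Reserve all resources together so a request never half-succeeds.
    pools.vcpus -= req.vcpus
    pools.memory_gib -= req.memory_gib
    pools.storage_gib -= req.storage_gib
    return {"app": req.app, "network": req.network, "status": "provisioned"}
```

Checking every resource before reserving any of them is what lets the request run without an administrator stepping in to undo a partial allocation.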

Fabric Interconnects and Virtualization Technologies

The “fabric” is a crucial concept in unified computing, referring to the high-speed, low-latency network that connects all components within the system. Fabric interconnects, such as those in Cisco’s Unified Computing System (UCS), consolidate network and storage I/O onto a single data path, carrying storage traffic between servers and the interconnect using Fibre Channel over Ethernet (FCoE) and connecting upstream to both Ethernet networks and Fibre Channel SANs. Hyperconverged appliances such as Dell EMC’s VxRail take a different route, running storage as software across the cluster’s nodes over standard Ethernet. Complementing the hardware fabric are virtualization technologies, such as server virtualization (e.g., VMware vSphere, Microsoft Hyper-V), network virtualization, and storage virtualization. These technologies allow for the creation of virtualized resources that can be dynamically allocated and managed by the unified control plane. Server virtualization, for example, enables multiple operating systems and applications to run on a single physical server, maximizing hardware utilization. Network virtualization allows for the creation of virtual networks that are decoupled from the physical network, offering greater flexibility and security.

The Transformative Benefits of Unified Computing

The adoption of unified computing systems offers a compelling array of advantages that directly address the pain points of traditional IT infrastructures, leading to significant operational and strategic improvements.

Operational Simplicity and Efficiency

One of the most immediate and profound benefits of a unified computing system is the drastic reduction in operational complexity. By consolidating hardware and software into a single, manageable platform, IT teams are no longer burdened by the intricacies of managing disparate systems and interfaces. This translates to fewer physical components to rack, cable, and maintain. The centralized management plane further simplifies tasks such as provisioning new resources, monitoring system health, and troubleshooting issues. Automation, driven by the software-defined control plane, reduces manual effort and the associated risk of human error. This increased efficiency allows IT staff to focus on more strategic initiatives rather than routine maintenance. For example, deploying a new application that previously required coordination between server, network, and storage teams could now be accomplished with a single set of commands or through a self-service portal within the unified system.
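Replacing cross-team coordination with a single set of commands usually means running the server, network, and storage steps as one ordered workflow that undoes completed steps if a later one fails. The sketch below assumes that compensating-rollback design; `run_workflow` and the step tuples are hypothetical, and in a real system each step would call the control plane's API.

```python
from typing import Callable

Step = tuple[str, Callable[[], None], Callable[[], None]]  # (name, do, undo)


def run_workflow(steps: list[Step], log: list[str]) -> bool:
    """Run steps in order; on failure, undo completed steps in reverse order."""
    done: list[Step] = []
    for step in steps:
        name, do, _undo = step
        try:
            do()
        except Exception as exc:
            log.append(f"failed: {name} ({exc})")
            for done_name, _do, undo in reversed(done):
                undo()
                log.append(f"rolled back: {done_name}")
            return False
        done.append(step)
        log.append(f"ok: {name}")
    return True
```

Undoing in reverse order releases the storage before the network and the network before the server, so dependent resources are never left pointing at something already removed.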

Enhanced Agility and Scalability

In today’s fast-paced business environment, the ability to adapt quickly to changing demands is paramount. Unified computing systems provide the agility and scalability necessary to meet these challenges. The abstraction and automation inherent in these systems allow for rapid provisioning and de-provisioning of resources, enabling businesses to scale their IT infrastructure up or down as needed. Whether it’s spinning up new virtual machines for a sudden increase in user demand or decommissioning resources that are no longer required, the process is significantly faster and more fluid. This dynamic allocation of resources lets IT keep pace with business growth and new project deployments without lengthy procurement and configuration cycles. The ability to scale compute, storage, and network resources independently or in concert within the unified fabric provides a flexible and responsive infrastructure.
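A simple scaling rule shows how such a system might decide when to add or remove capacity. This sketch borrows the proportional formula documented for the Kubernetes Horizontal Pod Autoscaler, desired = ceil(current × observed / target), with a tolerance band to avoid flapping; the function name, limits, and default tolerance are assumptions here, not a description of any specific unified computing product.

```python
import math


def desired_replicas(current: int, current_util: float, target_util: float,
                     min_r: int = 1, max_r: int = 20, tolerance: float = 0.1) -> int:
    """Proportional scaling: grow or shrink the instance count toward a target utilization."""
    ratio = current_util / target_util
    # Inside the tolerance band, keep the current count to avoid flapping.
    if abs(ratio - 1.0) <= tolerance:
        return current
    desired = math.ceil(current * ratio)
    # Clamp to configured bounds.
    return max(min_r, min(max_r, desired))
```

Four instances at 90% utilization against a 60% target scale out to six; ten instances at 15% scale in to three.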

Improved Resource Utilization and Cost Savings

By consolidating resources and optimizing their allocation, unified computing systems can substantially improve resource utilization and, in turn, lower costs. The convergence of compute, network, and storage reduces the need for redundant hardware and cabling. Furthermore, by enabling tighter integration and more intelligent management, these systems can raise the utilization of server CPUs, memory, and storage capacity, minimizing idle resources. Consolidation also reduces power consumption and cooling requirements within the data center, lowering operational expenses. Simplified management and automation reduce the staff time spent on routine administration, adding to these savings. Over time, these combined factors can significantly lower the total cost of ownership (TCO) of IT infrastructure.
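Consolidation is, at heart, a bin-packing problem: fit workloads onto as few hosts as possible. The text does not name a placement algorithm, so the sketch below swaps in first-fit decreasing, a standard bin-packing heuristic; the VM names and vCPU demands are hypothetical.

```python
def first_fit_decreasing(vm_demands: dict[str, float], host_capacity: float) -> list[list[str]]:
    """Place VMs on hosts using the first-fit-decreasing bin-packing heuristic."""
    hosts: list[list[str]] = []
    free: list[float] = []
    # Largest demands first: they are hardest to fit later.
    for vm, demand in sorted(vm_demands.items(), key=lambda kv: kv[1], reverse=True):
        if demand > host_capacity:
            raise ValueError(f"{vm} does not fit on any host")
        for i, remaining in enumerate(free):
            if demand <= remaining:
                hosts[i].append(vm)
                free[i] -= demand
                break
        else:
            # No existing host has room: power on another one.
            hosts.append([vm])
            free.append(host_capacity - demand)
    return hosts
```

Six VMs needing 6, 5, 4, 3, 2, and 2 vCPUs fit on three 8-vCPU hosts at about 92% utilization (22 of 24 vCPUs), instead of six lightly loaded hosts with one VM each.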

The Future of Computing: Towards Autonomous Operations

The evolution of unified computing systems points towards a future where IT infrastructure operates with increasing levels of autonomy, driven by artificial intelligence and machine learning.

AI-Driven Optimization and Predictive Maintenance

The intelligence embedded within the control plane of unified computing systems is poised to become even more sophisticated with the integration of artificial intelligence (AI) and machine learning (ML). AI algorithms can analyze vast amounts of operational data from the unified system to identify patterns, predict potential issues, and optimize resource allocation proactively. This could include predictive maintenance, where the system anticipates hardware failures and schedules proactive replacements before they impact operations. AI can also dynamically adjust resource provisioning based on real-time application performance and user demand, ensuring optimal performance and efficiency without manual intervention. This shift from reactive to proactive management is a hallmark of advanced IT operations. For example, an AI could detect a gradual increase in latency for a specific application and automatically reallocate resources or adjust network configurations to resolve the issue before users even notice.
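The latency example can be approximated without machine learning by fitting a least-squares trend line to recent samples and forecasting when it will cross a service-level objective (SLO). This is a deliberately simple stand-in for the AI/ML models the text describes; `forecast_breach`, the SLO, and the sample values are hypothetical.

```python
from typing import Optional


def forecast_breach(samples: list[float], slo_ms: float, horizon: int) -> Optional[int]:
    """Return how many future intervals until the linear trend reaches slo_ms,
    or None if no breach is predicted within `horizon` intervals."""
    n = len(samples)
    if n < 2:
        return None
    xs = range(n)
    mean_x = (n - 1) / 2
    mean_y = sum(samples) / n
    # Ordinary least-squares slope and intercept.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, samples))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    if slope <= 0:
        return None  # flat or improving latency: nothing to predict
    for step in range(1, horizon + 1):
        if intercept + slope * (n - 1 + step) >= slo_ms:
            return step
    return None
```

Latency rising 40, 42, 44, 46, 48 ms against a 60 ms SLO is forecast to breach six intervals ahead, early enough for the control plane to reallocate resources first.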

Autonomous Infrastructure and Self-Healing Capabilities

The ultimate goal of many unified computing initiatives is to achieve autonomous infrastructure – an IT environment that can manage itself with minimal human oversight. This involves developing systems that can automatically detect, diagnose, and resolve issues, a concept often referred to as self-healing. Unified computing systems, with their integrated architecture and sophisticated control plane, provide the foundation for such autonomy. This could involve automated provisioning of new workloads, self-correction of performance bottlenecks, automatic load balancing, and even self-remediation of security vulnerabilities. As AI and ML capabilities mature, we can expect unified computing systems to become increasingly capable of operating independently, freeing up IT professionals to focus on innovation and strategic business objectives. This move towards autonomy not only enhances efficiency but also significantly improves the resilience and reliability of IT services.
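Self-healing systems are commonly built as reconciliation loops: compare the desired state with what is observed, then issue corrective actions. The sketch covers only the detect-and-diagnose step and returns the actions a controller would take; `reconcile` and the service names are hypothetical, and executing the actions would go through the control plane's API.

```python
def reconcile(desired: dict[str, int], observed: dict[str, int]) -> list[str]:
    """Diff desired vs. observed instance counts and list corrective actions."""
    actions = []
    for service in sorted(desired.keys() | observed.keys()):
        want = desired.get(service, 0)
        have = observed.get(service, 0)
        if have < want:
            actions.append(f"start {want - have} x {service}")
        elif have > want:
            actions.append(f"stop {have - want} x {service}")
    return actions
```

Run periodically, the loop converges: once every failed instance has been replaced, `reconcile` returns an empty list and no further action is taken.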