In the rapidly evolving landscape of Tech & Innovation, particularly within areas like mapping, remote sensing, and autonomous systems, data-driven decisions are paramount. Understanding whether a new algorithm performs better, if a novel sensor provides significantly different readings, or if an updated autonomous flight system yields improved results often hinges on robust statistical analysis. Among the suite of tools available to researchers and developers, the two-sample t-test stands out as a fundamental method for comparing the means of two independent groups of data. It provides a clear, quantitative answer to the question: are these two groups truly different, or are any observed disparities merely due to random chance?

The Core Principle of Comparative Analysis

At its heart, the two-sample t-test is a hypothesis test used to determine if there is a statistically significant difference between the means of two independent populations. Imagine you’ve developed two distinct remote sensing algorithms designed to estimate vegetation health, or perhaps two different AI-powered obstacle avoidance systems for autonomous drones. You deploy both, collect data, and observe a difference in their average performance metrics – perhaps the new algorithm yields a slightly higher accuracy score, or the updated obstacle avoidance system records fewer near-misses. The critical question isn’t just if there’s a difference, but if that difference is statistically meaningful enough to conclude that one system is genuinely superior to the other, or if the observed variation is simply a fluke of the data collected.

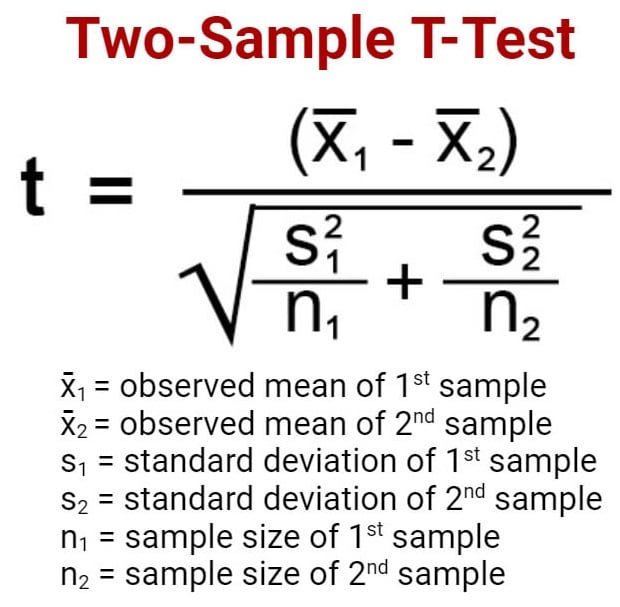

The test operates on the principle of null and alternative hypotheses. The null hypothesis (H0) typically states that there is no significant difference between the population means (i.e., µ1 = µ2). Conversely, the alternative hypothesis (H1) posits that there is a significant difference (i.e., µ1 ≠ µ2 for a two-tailed test, or µ1 > µ2 or µ1 < µ2 for a one-tailed test). By collecting sample data from each group and performing calculations, the t-test generates a “t-statistic” and a corresponding “p-value.” This p-value is the probability of observing a difference as extreme as, or more extreme than, the one in your sample data, assuming the null hypothesis is true. A small p-value (typically less than 0.05 or 0.01) indicates strong evidence against the null hypothesis, leading us to reject H0 and conclude that a statistically significant difference likely exists between the two population means.

Assumptions and Prerequisites for Robust Testing

Like any statistical test, the two-sample t-test relies on several key assumptions to ensure the validity and reliability of its conclusions. Violating these assumptions can lead to erroneous interpretations, making it crucial for professionals in Tech & Innovation to understand them before applying the test to critical operational data.

Independence of Observations

Perhaps the most crucial assumption is that the observations within each group, and between the two groups, must be independent. This means that the measurement of one drone’s mapping accuracy, for instance, should not influence or be related to the measurement of another drone’s accuracy. For remote sensing applications, this implies that data collected from different sites or at different times for comparison should not be inherently linked or correlated. Non-independent data often requires different statistical approaches, such as paired t-tests or repeated measures ANOVA.

Normality of the Data

The t-test assumes that the data in both populations from which the samples are drawn are approximately normally distributed. While the t-test is remarkably robust to minor deviations from normality, especially with larger sample sizes (due to the Central Limit Theorem), significant skewness or extreme outliers can distort the results. Tools like histograms, Q-Q plots, or formal normality tests (e.g., Shapiro-Wilk test) can be used to assess this assumption. If data is highly non-normal and sample sizes are small, non-parametric alternatives like the Mann-Whitney U test might be more appropriate.

Homogeneity of Variances

Another important assumption, particularly for the standard (Student’s) two-sample t-test, is that the population variances of the two groups are equal. This is often referred to as homoscedasticity. If the variances are significantly different (heteroscedasticity), a modified version of the t-test, known as Welch’s t-test, is more appropriate. Welch’s t-test adjusts the degrees of freedom to account for unequal variances and is often the default choice in many statistical software packages due to its robustness. Levene’s test or Bartlett’s test can be used to formally check for homogeneity of variances.

Interval or Ratio Scale Data

The dependent variable (the data being compared) should be measured on an interval or ratio scale. This means the data should have meaningful numerical properties where differences are consistent and interpretable. Examples include flight time, sensor accuracy (e.g., in meters), processing speed (in seconds), or energy consumption (in Joules).

Performing the Test: Steps and Interpretation

Applying the two-sample t-test typically involves a structured approach, whether conducted manually (for small datasets and educational purposes) or, more commonly and efficiently, using statistical software.

1. Formulate Hypotheses

Clearly define your null (H0) and alternative (H1) hypotheses. For instance:

- H0: The average mapping accuracy of Algorithm A is equal to Algorithm B.

- H1: The average mapping accuracy of Algorithm A is not equal to Algorithm B. (Two-tailed test)

2. Collect Data

Gather two independent samples of data, one for each group being compared. For example, run a series of identical mapping missions using Algorithm A and another series using Algorithm B, carefully recording the accuracy metric for each mission.

3. Choose Significance Level (Alpha)

Select a significance level (α), typically 0.05 (5%) or 0.01 (1%). This threshold represents the maximum probability you are willing to accept of making a Type I error – incorrectly rejecting a true null hypothesis.

4. Calculate Test Statistic

Using statistical software (e.g., R, Python with SciPy, SPSS, SAS, Excel’s Data Analysis Toolpak), input your data. The software will calculate the t-statistic based on the means, standard deviations, and sample sizes of the two groups. It will also typically perform a test for equal variances (like Levene’s test) to determine whether to use Student’s t-test or Welch’s t-test.

5. Determine P-value

The software will output a p-value corresponding to your calculated t-statistic and degrees of freedom.

6. Make a Decision and Interpret Results

Compare the p-value to your chosen significance level (α).

- If p < α: Reject the null hypothesis. There is statistically significant evidence to conclude that the means of the two groups are different.

- If p ≥ α: Fail to reject the null hypothesis. There is not enough statistically significant evidence to conclude that the means of the two groups are different. This does not mean the means are equal, just that your data doesn’t provide sufficient evidence to say they are different.

Always interpret the results in the context of your original research question. For example, “We found a statistically significant difference (p < 0.05) in mapping accuracy between Algorithm A (mean = 2.5m) and Algorithm B (mean = 1.8m), suggesting Algorithm B provides superior accuracy.”

Real-World Applications in Tech & Innovation

The utility of the two-sample t-test extends across numerous facets of Tech & Innovation, providing critical insights for development, deployment, and optimization.

1. Comparing Performance of AI/Autonomous Systems

Consider the development of AI Follow Mode capabilities for drones. Engineers might develop two different machine learning models (Model X and Model Y) for object tracking and following. To assess which model performs better, they could conduct multiple flight tests under controlled conditions, measuring a key performance indicator like “tracking error” (deviation from the target’s center) for each model. A two-sample t-test could then determine if the average tracking error of Model X is statistically different from Model Y, informing which model to integrate or further develop. Similarly, in autonomous navigation, comparing the average “obstacle avoidance success rate” or “path efficiency” between a new SLAM algorithm and a baseline algorithm would be a prime application.

2. Evaluating Remote Sensing Data Quality and Accuracy

In remote sensing, the quality and consistency of data are paramount. Suppose a team is testing two new spectral sensors (Sensor P and Sensor Q) for a specific agricultural application, like estimating crop biomass using Normalized Difference Vegetation Index (NDVI). They collect NDVI data from identical plots using both sensors. A two-sample t-test could be employed to determine if the mean NDVI values obtained from Sensor P are statistically different from those obtained from Sensor Q. This could indicate sensor calibration issues, inherent differences in spectral response, or differing levels of noise, guiding decisions on sensor selection or post-processing adjustments. Another example might involve comparing the accuracy of ground truth measurements taken by two different teams or two different GPS receivers.

3. Assessing Mapping and Surveying Technologies

When developing or deploying new mapping technologies, such as photogrammetry software or LiDAR processing pipelines, validating their output is essential. A common application of the t-test here would be to compare the average vertical accuracy (e.g., Root Mean Square Error in Z-axis) of digital elevation models (DEMs) generated by two different software packages (Software M vs. Software N) over the same terrain. By taking multiple sample points and comparing the observed elevation against known ground control points, the t-test can reveal if one software consistently produces more accurate elevation data than the other. This directly impacts the reliability of topographic maps and volumetric calculations.

4. Benchmarking Drone Hardware and Components

Beyond software and algorithms, the two-sample t-test can also be applied to evaluate physical components. For example, a drone manufacturer might test two new propeller designs (Design A vs. Design B) to see which offers better flight efficiency. By conducting repeated controlled flights and measuring average flight duration or power consumption under identical loads, a t-test can determine if one design provides a statistically significant improvement in performance. Similarly, comparing the average lifespan or cycle count of two different battery chemistries (LiPo vs. LiHV) under controlled discharge cycles would also be an appropriate use case.

In conclusion, the two-sample t-test is an indispensable statistical tool for professionals in Tech & Innovation. It provides a robust framework for evidence-based decision-making by objectively comparing the means of two independent groups. By understanding its underlying principles, assumptions, and practical applications within areas like AI, autonomous flight, remote sensing, and mapping, researchers and developers can confidently draw conclusions from their data, driving progress and ensuring the reliability of their cutting-edge solutions.