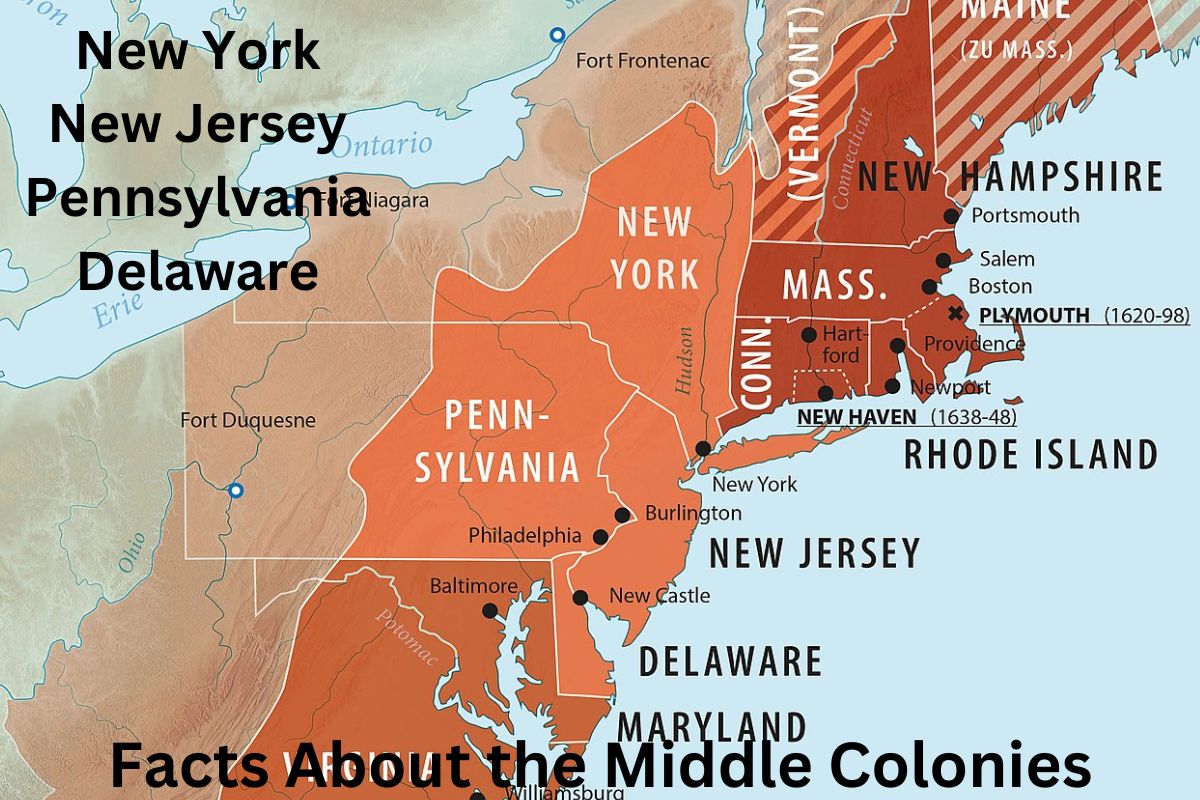

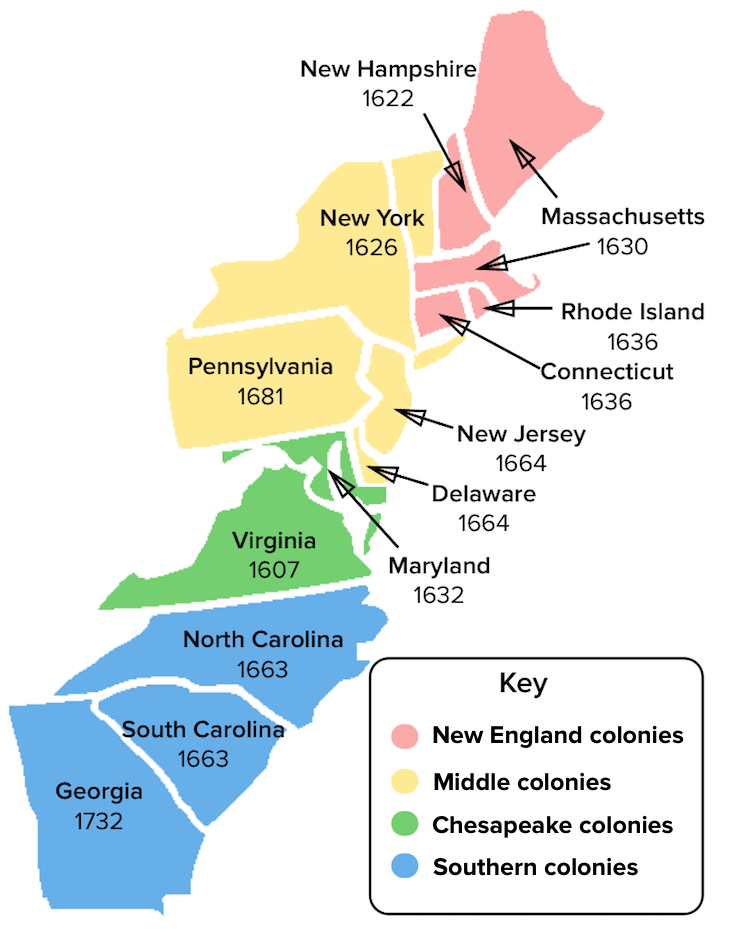

In the rapidly evolving landscape of unmanned aerial vehicles (UAVs), the industry is moving beyond the concept of the “lone pilot” and toward the sophisticated realm of collective autonomy. At the heart of this transition lies a concept increasingly referred to in high-level robotics and research circles as the “Middle Colony.” While the term historically referred to a geographic region of the early American colonies, in the context of modern tech and innovation, it represents the critical intermediary layer of autonomous drone swarms. This “Middle Colony” acts as the connective tissue between high-level human intent and the granular, localized actions of individual drone units.

As we delve into the complexities of remote sensing, AI-driven follow modes, and autonomous mapping, understanding the Middle Colony is essential for anyone looking to grasp the future of aerial technology. This article explores how this architectural layer is revolutionizing how we deploy drones in complex environments, from search and rescue to precision agriculture.

Understanding the Architecture of the Middle Colony

To understand the Middle Colony, one must first view a drone deployment not as a single machine, but as a hierarchical ecosystem. In traditional drone operations, the hierarchy is flat: a controller talks to a drone. However, in advanced autonomous systems, a multi-tiered architecture is required to manage the sheer volume of data and the complexity of flight paths.

The Bridge Between Command and Edge

The Middle Colony serves as the strategic management layer within a distributed drone network. In this framework, the “Command” layer consists of the human operator or a centralized cloud AI that sets the mission parameters—for example, “map this 500-acre forest.” The “Edge” layer consists of the individual drones executing physical tasks like motor adjustment and sensor data collection.

The Middle Colony is where most coordination decisions are made. It is the decentralized brain that resides across a subset of high-processing-power “leader” drones or localized ground stations. It translates the broad command (“map the forest”) into specific, real-time assignments for individual units, keeping drones from colliding and balancing battery use across the entire fleet. Because these decisions are made within the middle tier, the system no longer needs constant communication with a distant central server, which removes a network round trip from each decision and lowers latency.
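The translation from a broad command into per-drone assignments can be sketched as a greedy allocation. This is only an illustration under stated assumptions, not a description of any particular system: the `Drone` type, the sector centres, the `min_battery` cutoff, and the flat battery cost per unit of distance are all hypothetical.

```python
from dataclasses import dataclass, field
from math import hypot


@dataclass
class Drone:
    name: str
    x: float
    y: float
    battery: float  # remaining charge as a fraction, 0.0 to 1.0 (assumed unit)
    sectors: list = field(default_factory=list)


def assign_sectors(drones, sectors, min_battery=0.2):
    """Greedily give each sector centre (x, y) to the closest eligible drone.

    A drone is eligible while its battery is above min_battery. Each
    assignment moves the drone's planning position to the sector centre
    and debits an assumed battery cost proportional to the distance.
    Returns the sectors that nobody could take.
    """
    cost_per_unit = 0.001  # hypothetical battery fraction per distance unit
    unassigned = []
    for sx, sy in sectors:
        eligible = [d for d in drones if d.battery > min_battery]
        if not eligible:
            unassigned.append((sx, sy))
            continue
        best = min(eligible, key=lambda d: hypot(d.x - sx, d.y - sy))
        best.battery -= cost_per_unit * hypot(best.x - sx, best.y - sy)
        best.x, best.y = sx, sy
        best.sectors.append((sx, sy))
    return unassigned
```

A greedy pass like this is easy to reason about but is not optimal; a real planner would more likely solve the assignment jointly and also account for wind and return-to-base reserves.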

Communication Protocols in Distributed Networks

Communication is the lifeblood of the Middle Colony. Unlike standard FPV or consumer drones that rely on a direct radio link to a remote controller, Middle Colony drones utilize mesh networking. In a mesh network, every drone acts as a node, relaying information to its neighbors.

This creates a self-healing “colony” where, if one drone loses its connection or suffers a hardware failure, the rest of the swarm can reroute data through other nodes. This rerouting helps autonomous missions stay resilient where a direct link to the operator is unreliable, including “GNSS-denied” environments such as deep canyons or indoor industrial complexes. The Middle Colony manages these protocols, prioritizing critical telemetry data over high-bandwidth video feeds to preserve the safety and cohesion of the flight group.
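The two ideas in this section, rerouting around a failed node and sending telemetry ahead of video, can be sketched in a few lines. Everything here is an assumption made for illustration: the adjacency-list `links` format, the `find_route` helper, and the two traffic classes in `PRIORITY` are hypothetical and do not correspond to a specific mesh protocol.

```python
import heapq
from collections import deque


def find_route(links, src, dst, failed=frozenset()):
    """Breadth-first search for a fewest-hops path that avoids failed nodes.

    links maps each node name to the list of neighbours it can reach.
    Returns the path as a list of node names, or None if no path exists.
    """
    if src in failed or dst in failed:
        return None
    parents = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            path = []
            while node is not None:
                path.append(node)
                node = parents[node]
            return path[::-1]
        for nxt in links.get(node, ()):
            if nxt not in failed and nxt not in parents:
                parents[nxt] = node
                queue.append(nxt)
    return None


PRIORITY = {"telemetry": 0, "video": 1}  # lower number is sent first (assumed classes)


class OutboundQueue:
    """Send queue that always releases telemetry before video."""

    def __init__(self):
        self._heap = []
        self._seq = 0  # tie-breaker that keeps FIFO order within a class

    def push(self, kind, payload):
        heapq.heappush(self._heap, (PRIORITY[kind], self._seq, kind, payload))
        self._seq += 1

    def pop(self):
        _, _, kind, payload = heapq.heappop(self._heap)
        return kind, payload
```

Calling `find_route` again with the failed node added to `failed` is the "self-healing" step: the search simply ignores that node and returns an alternative path if one exists.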

The Role of Artificial Intelligence in Swarm Coordination

The true power of the Middle Colony is unlocked through Artificial Intelligence and Machine Learning. Without AI, a group of drones is merely a collection of machines; with it, they become a cohesive unit capable of emergent behavior—similar to a flock of birds or a colony of ants.

Peer-to-Peer Processing and Consensus Algorithms

In a Middle Colony setup, drones must constantly agree on their state and the state of their environment. This is achieved through consensus algorithms. For instance, if three drones in a swarm detect an obstacle simultaneously, the Middle Colony processing layer must reconcile these different perspectives into a single, actionable map.
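As a rough illustration of reconciling several detections into one map entry, the sketch below uses a quorum check followed by median-based outlier rejection. This is a deliberately simplified stand-in rather than a full distributed consensus protocol, and the `fuse_reports` function, the `quorum` count, and the `max_spread` tolerance are all assumed names and values.

```python
from statistics import median


def fuse_reports(reports, quorum=2, max_spread=5.0):
    """Fuse per-drone obstacle position estimates into a single position.

    reports maps a drone id to its (x, y) estimate. Estimates further than
    max_spread from the coordinate-wise median on either axis are treated
    as outliers. Returns the mean of the agreeing estimates, or None when
    fewer than `quorum` drones agree.
    """
    if len(reports) < quorum:
        return None
    points = list(reports.values())
    mx = median(p[0] for p in points)
    my = median(p[1] for p in points)
    agreeing = [
        p for p in points
        if abs(p[0] - mx) <= max_spread and abs(p[1] - my) <= max_spread
    ]
    if len(agreeing) < quorum:
        return None
    n = len(agreeing)
    return (sum(p[0] for p in agreeing) / n, sum(p[1] for p in agreeing) / n)
```

With three reports where one is far off, the outlier is dropped and the remaining two are averaged; a single unconfirmed report yields `None`, so no obstacle is written to the shared map.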

This peer-to-peer (P2P) processing allows the swarm to make collective decisions. If the “colony” determines that a specific area of a mapping target is obscured by smoke, the Middle Colony logic can autonomously reassign drones with thermal sensors to that specific sector without waiting for human intervention. This shift from “automated” (following a pre-set path) to “autonomous” (making decisions based on environmental changes) is the hallmark of modern drone innovation.

Dynamic Reconfiguration and Fault Tolerance

One of the most impressive feats of Middle Colony intelligence is dynamic reconfiguration. In complex missions, the “shape” of the drone colony may need to change. For example, during a search and rescue operation in a mountainous region, the drones might need to transition from a wide “search grid” formation to a tight “reconnaissance circle” once a target is identified.

The AI within the Middle Colony calculates the most efficient flight paths to achieve this transition, accounting for wind resistance, remaining battery life, and sensor coverage. Furthermore, it provides high-level fault tolerance. If a “leader” drone (a node in the Middle Colony) goes down, the system uses leader election algorithms to promote another drone to take over its coordination duties, so the mission can continue with minimal interruption.
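The promotion step can be sketched as a ranking over the drones that are still alive. This is a simplification that assumes every node already knows the status of its peers; real election protocols also handle message exchange, timeouts, and network partitions. The `Node` fields, the `min_battery` cutoff, and the ranking rule are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Node:
    node_id: int
    compute_score: float  # assumed measure of onboard processing power
    battery: float        # remaining charge as a fraction, 0.0 to 1.0
    alive: bool = True


def elect_leader(nodes, min_battery=0.3):
    """Pick a new coordinator from the nodes that are alive and charged.

    Ranking: highest compute score first, then highest battery, then
    lowest id so that every node running this rule reaches the same answer.
    Returns None when no node qualifies.
    """
    candidates = [n for n in nodes if n.alive and n.battery >= min_battery]
    if not candidates:
        return None
    return min(candidates, key=lambda n: (-n.compute_score, -n.battery, n.node_id))
```

Because the ranking is deterministic, any drone that sees the same peer list selects the same leader, which is the property that lets the swarm agree without asking a central server.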

Practical Applications of Middle Colony Technology

While the theory of decentralized drone colonies is fascinating, its real-world applications are where the technology truly shines. By utilizing a Middle Colony architecture, industries can scale their operations far beyond what was possible with manual or single-drone automated flights.

Agricultural Monitoring at Scale

In precision agriculture, the Middle Colony approach allows for “blanket coverage” with high-resolution detail. Rather than one drone flying for hours to cover a large vineyard, a swarm of smaller, cheaper drones can be deployed. The Middle Colony manages the swarm, ensuring that as drones return to docking stations for charging, new ones take their place without leaving gaps in the multispectral data.

The innovation here lies in the “on-the-fly” processing. Instead of uploading gigabytes of data to the cloud after the flight, the Middle Colony layer can process the data in real time. If it detects a “hot spot” of pest infestation or dehydration, it can immediately divert a sub-section of the colony to perform a more detailed, lower-altitude scan, providing the farmer with actionable insights before the drones even land.
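A minimal sketch of the detect-and-divert step, assuming the multispectral data has already been reduced to a grid of per-cell stress scores. The score scale, the threshold, the function names, and the 10 m re-scan altitude are placeholders chosen for illustration.

```python
def find_hot_spots(grid, threshold):
    """Return the (row, col) cells whose stress score exceeds the threshold."""
    return [
        (r, c)
        for r, row in enumerate(grid)
        for c, value in enumerate(row)
        if value > threshold
    ]


def plan_rescan(hot_spots, available_drones, low_altitude_m=10.0):
    """Pair hot spots with available drones, one cell per drone.

    Returns (tasks, leftover): the low-altitude scan tasks that could be
    staffed, and the hot spots still waiting for a free drone.
    """
    tasks = [
        {"drone": drone, "cell": cell, "altitude_m": low_altitude_m}
        for drone, cell in zip(available_drones, hot_spots)
    ]
    leftover = hot_spots[len(tasks):]
    return tasks, leftover
```

Cells left in `leftover` would be picked up on a later pass, for example when a drone returns from its charging dock.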

Urban Infrastructure Inspection and Mapping

Mapping complex urban environments or industrial sites like oil rigs presents unique challenges: signal interference, moving obstacles, and tight spaces. The Middle Colony excels here by providing a “collaborative mapping” framework. As individual drones move through different levels of a structure, they feed data back to the colony’s shared internal map.

This shared perception allows the drones to “see” around corners using the eyes of their peers. If one drone identifies a high-voltage line, that information is instantly propagated through the Middle Colony, and every other drone in the swarm updates its obstacle avoidance parameters accordingly. This level of collective awareness is vital for safe operations in high-stakes industrial environments.
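The propagation of a single detection to the whole swarm can be sketched with a small publish/subscribe map. In this sketch one in-memory object stands in for state that would, in practice, be replicated across the mesh; the class names, the grid-cell keys, and the `no_fly` set are assumptions.

```python
class SharedObstacleMap:
    """Colony-wide obstacle map that notifies every subscribed drone."""

    def __init__(self):
        self._obstacles = {}    # cell -> (kind, name of reporting drone)
        self._subscribers = []

    def subscribe(self, drone):
        self._subscribers.append(drone)

    def report(self, cell, kind, reporter):
        """Record a new obstacle and push it to all drones.

        Returns False if the cell was already known, so duplicate
        sightings do not trigger another round of updates.
        """
        if cell in self._obstacles:
            return False
        self._obstacles[cell] = (kind, reporter.name)
        for drone in self._subscribers:
            drone.on_obstacle(cell, kind)
        return True


class Drone:
    def __init__(self, name):
        self.name = name
        self.no_fly = set()  # cells this drone's planner must avoid

    def on_obstacle(self, cell, kind):
        self.no_fly.add(cell)
```

After one drone reports a power line at a cell, every subscribed drone has that cell in its `no_fly` set, including drones that never had line of sight to it.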

Future Innovations and the Path Toward Total Autonomy

As we look toward the next decade of drone technology, the Middle Colony will move from a specialized research concept to a standard feature in commercial drone ecosystems. The integration of 5G/6G connectivity and dedicated AI edge-processors will further accelerate this trend.

Edge Computing and the Reduction of Latency

The future of the Middle Colony is deeply tied to the advancement of edge computing. Currently, much of the heavy lifting for swarm coordination is done by powerful onboard computers that consume significant battery power. However, as specialized AI chips become smaller and more efficient, we will see drones capable of running complex neural networks natively.

As on-board inference gets faster, the loop between sensing and acting shortens, and this reduction in latency means that the Middle Colony can react to changes in the environment on the order of milliseconds. For example, in a “Follow Mode” scenario involving a high-speed vehicle, a swarm could hold a cinematic formation while dodging unforeseen obstacles faster than a human pilot could react. The “Middle” layer becomes a real-time reactive shield that helps the mission succeed in volatile conditions.

Collaborative Sensing and Shared Perception

Perhaps the most exciting innovation on the horizon is the concept of “Shared Perception.” In this future state, the Middle Colony doesn’t just share location data; it shares raw sensor data to synthesize a “Super-Sensor.” By combining the optical zoom of one drone, the thermal imaging of another, and the LiDAR data of a third, the Middle Colony creates a composite, multi-layered view of the world that no single drone could ever achieve.

This level of tech integration will redefine remote sensing. We will move away from viewing drone footage as a “video feed” and toward viewing it as a “live digital twin” of reality. The Middle Colony is the architect of this digital twin, organizing the chaos of individual data points into a coherent, intelligent, and highly functional whole.

In conclusion, the “Middle Colony” represents the next great leap in drone technology and innovation. By moving away from centralized control and toward decentralized, AI-driven swarm intelligence, we are unlocking the ability to perform tasks that were once the stuff of science fiction. As this technology matures, the Middle Colony will be the invisible force guiding the swarms that map our world, protect our environments, and revolutionize our industries.