In professional imaging, the quest for the “darkest black” is more than a poetic pursuit; it is a rigorous technical challenge that tests the limits of modern sensor technology. Whether in high-end cinematography, satellite remote sensing, or precision industrial inspection, how faithfully a system renders black (the near-total absence of light) sets the lower bound of its tonal range and shapes the contrast of everything above it. To understand what the darkest black truly is, we must look beyond the color palette and into the physics of light absorption, the architecture of CMOS sensors, and the algorithms that manage digital noise.

The Physics of Absence: Defining Black in Digital Imaging

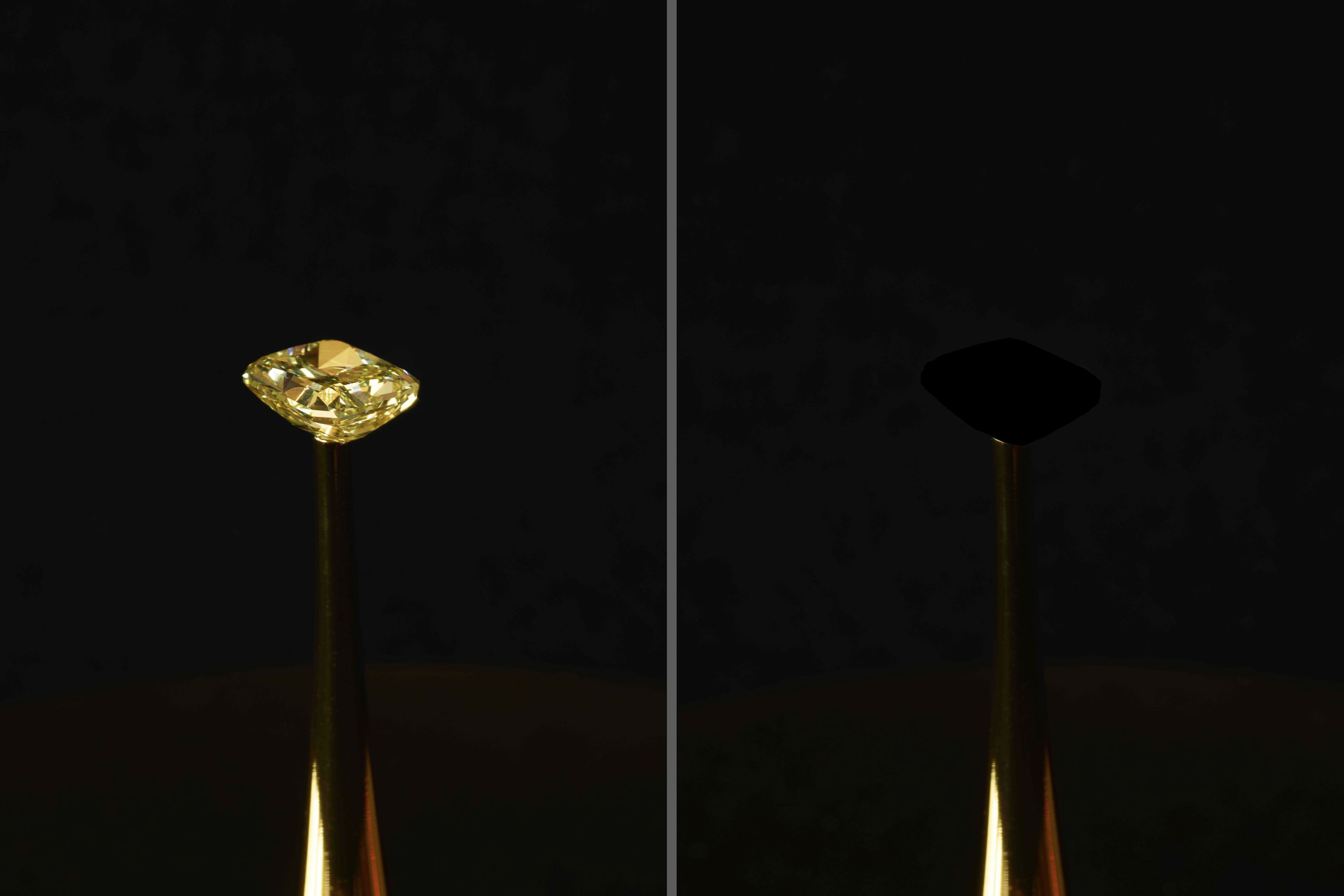

In a purely physical sense, “black” is the result of a surface or volume absorbing nearly all incoming light across the visible spectrum. In material science, coatings such as Surrey NanoSystems’ Vantablack have achieved absorption rates of 99.965%, making three-dimensional objects appear as two-dimensional voids. In cameras and imaging, however, the darkest black is governed not by carbon nanotubes but by the “noise floor” of the imaging system.

Light Absorption vs. Signal Floor

When a camera sensor captures an image, it is essentially counting photons. To achieve the “darkest black,” a pixel’s photodiode should ideally record zero photons, and with a perfect sensor in total darkness this would yield a signal of exactly zero. Real sensors, however, exhibit “dark current”: electrons, mostly thermally generated, that accumulate even when no light is hitting the sensor. Together with the noise added when the pixel is read out, this leakage creates a baseline signal. The darkest black in digital imaging is therefore defined as the point where the signal is indistinguishable from this inherent electronic noise.
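As a rough illustration of how that baseline grows, the sketch below uses a common simplified noise model in which read noise and dark-current shot noise add in quadrature. The function name and the sensor figures (2 e- read noise, 0.5 e-/s dark current) are hypothetical values chosen for the example, not measurements of any real camera.

```python
import math

def noise_floor_electrons(read_noise_e, dark_current_e_per_s, exposure_s):
    """RMS noise, in electrons, of a pixel that receives no light.

    Simplified model (an assumption of this sketch): read noise and
    dark-current shot noise are independent, so they add in quadrature.
    The shot noise of the dark signal is the square root of the number
    of dark electrons collected, so its square is that count itself.
    """
    dark_electrons = dark_current_e_per_s * exposure_s
    return math.sqrt(read_noise_e ** 2 + dark_electrons)

# Hypothetical sensor: 2 e- read noise, 0.5 e-/s dark current.
video_frame = noise_floor_electrons(2.0, 0.5, 1 / 50)   # short exposure
night_shot = noise_floor_electrons(2.0, 0.5, 30.0)      # long exposure
print(f"1/50 s exposure: {video_frame:.2f} e-")
print(f"30 s exposure:   {night_shot:.2f} e-")
```

Under these assumptions the short exposure is dominated by read noise, while the long exposure is dominated by accumulated dark current, which is why long exposures struggle most to hold a clean black.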

The Role of the Photodiode in Capturing Darkness

The photodiode is the heart of the imaging sensor. Its job is to convert light into an electrical charge. The challenge in achieving deep blacks lies less in “well capacity,” which sets how much charge a pixel can hold before it clips, than in how little unwanted charge the pixel collects and how cleanly that charge is transferred. If a photodiode is “leaky,” it introduces stray electrons into the dark areas of the frame, turning a deep midnight sky into a muddy, charcoal gray. Modern high-end imaging systems also isolate their photodiodes to prevent “crosstalk,” ensuring that a pixel intended to be black isn’t contaminated by light or charge from an adjacent bright pixel.

Dynamic Range and the Pursuit of True Black

The quality of an image is often measured by its dynamic range—the ratio between the brightest highlights and the darkest shadows that a camera can capture simultaneously without losing detail. When we ask “what is the darkest black,” we are essentially asking about the bottom end of a camera’s dynamic range.
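One common way to put a number on this is to divide the largest signal a pixel can hold (its full-well capacity) by the noise floor, then express the ratio in stops or decibels. The sketch below does that; the 32,768 e- full well and 2 e- noise floor are hypothetical figures picked to give round numbers.

```python
import math

def dynamic_range(full_well_e, noise_floor_e):
    """Return dynamic range as (ratio, stops, decibels).

    Each stop is a doubling of signal, hence log base 2.
    The decibel figure uses the 20*log10 convention for amplitudes.
    """
    ratio = full_well_e / noise_floor_e
    stops = math.log2(ratio)
    decibels = 20 * math.log10(ratio)
    return ratio, stops, decibels

# Hypothetical sensor values, for illustration only.
ratio, stops, db = dynamic_range(full_well_e=32768, noise_floor_e=2.0)
print(f"{ratio:.0f}:1 = {stops:.1f} stops = {db:.1f} dB")
```

Because the noise floor is the denominator, halving it adds a full stop of dynamic range at the shadow end without changing anything about the highlights.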

Measuring Bit Depth and Tonal Gradation

Bit depth plays a crucial role in how we perceive black. An 8-bit image offers only 256 levels per channel, meaning the transition from “almost black” to “true black” is abrupt and prone to banding. Professional 12-bit and 14-bit imaging systems offer 4,096 and 16,384 levels respectively. This allows for a much more nuanced “crushing” of blacks, where the filmmaker or technician can decide exactly where the shadow detail ends and the “true black” begins. The deeper the bit depth, the more “room” there is to define the darkest black without introducing artifacts.
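The arithmetic behind those level counts is easy to check. The sketch below also counts how many code values fall in the darkest 1% of the range, assuming a plain linear encoding (real cameras often apply a log or gamma curve that allocates more codes to the shadows); both function names are made up for this example.

```python
def code_values(bit_depth):
    """Number of distinct levels an integer encoding of this depth can hold."""
    return 2 ** bit_depth

def shadow_code_values(bit_depth, percent=1):
    """Whole code values inside the darkest `percent` of a linear range.

    Assumes a linear encoding, which is a simplification.
    """
    return code_values(bit_depth) * percent // 100

for bits in (8, 12, 14):
    print(f"{bits}-bit: {code_values(bits)} levels, "
          f"{shadow_code_values(bits)} in the darkest 1%")
```

With only a couple of code values available near black, an 8-bit linear file has almost no steps to describe a gradient in deep shadow, which is where banding comes from.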

HDR and the Contrast Ratio Revolution

High Dynamic Range (HDR) technology has redefined our expectations of darkness. In traditional imaging, “black” was often limited by the display technology’s inability to turn off pixels entirely. OLED and advanced MicroLED displays can turn individual pixels completely off, giving them an effectively infinite contrast ratio, and this makes the camera’s ability to record “true black” more critical than before. If the sensor captures even a tiny amount of noise in a dark area, an HDR display will reveal it as a flickering grain rather than a solid black void.

Noise Floor: The Enemy of the Darkest Black

The primary obstacle to achieving the darkest black in digital photography and videography is noise. In low-light environments, such as deep-sea exploration or night-time surveillance, the camera must amplify the small amount of available signal. This amplification also boosts the noise, creating “grain” that obscures the true black levels.
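A minimal sketch of why amplification alone cannot rescue the blacks: if gain is applied after the noise is already present, signal and noise scale together and the signal-to-noise ratio does not move. This deliberately ignores noise added after the gain stage, and the electron counts are hypothetical.

```python
def amplify(signal, noise, gain):
    """Apply the same gain to signal and noise (noise assumed pre-gain)."""
    return signal * gain, noise * gain

def snr(signal, noise):
    """Signal-to-noise ratio as a plain ratio."""
    return signal / noise

# Hypothetical dim pixel: 10 e- of signal over 2 e- of noise.
signal_e, noise_e = 10.0, 2.0
amplified_signal, amplified_noise = amplify(signal_e, noise_e, gain=8.0)

print("SNR before gain:", snr(signal_e, noise_e))
print("SNR after gain: ", snr(amplified_signal, amplified_noise))
```

The image gets brighter, but the shadows are no cleaner: the grain is lifted by exactly the same factor as the detail it obscures.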

Thermal Noise and Long Exposure Challenges

One of the most significant contributors to “non-black” blacks is heat. As a sensor warms up during operation, thermal energy frees electrons in the silicon, and the sensor cannot tell these apart from electrons generated by light. The result is “hot pixels” or a general haze known as thermal noise, and it grows with exposure time. This is why high-end scientific cameras, such as those used in astrophotography, often feature integrated cooling systems. By lowering the temperature of the sensor, engineers can suppress thermal noise, allowing the camera to see a “blacker” version of the night sky than a standard consumer camera ever could.
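To get a feel for how much cooling helps, the sketch below applies a widely quoted rule of thumb that dark current in silicon roughly doubles for every few degrees of temperature rise. The 6 °C doubling interval and the 1 e-/s reference value are assumptions for illustration; real sensors vary and their datasheets should be consulted.

```python
def dark_current_at(temp_c, ref_current_e_per_s,
                    ref_temp_c=25.0, doubling_c=6.0):
    """Estimate dark current at temp_c from a reference measurement.

    Rule-of-thumb model (assumed, not sensor-specific): dark current
    doubles for every `doubling_c` degrees Celsius of warming and
    halves for every `doubling_c` degrees of cooling.
    """
    return ref_current_e_per_s * 2 ** ((temp_c - ref_temp_c) / doubling_c)

# Hypothetical sensor with 1 e-/s of dark current at 25 C.
for temp in (25.0, 31.0, -5.0):
    print(f"{temp:6.1f} C: {dark_current_at(temp, 1.0):.5f} e-/s")
```

Under this model, cooling the sensor by 30 °C cuts dark current by a factor of 32, which is the kind of gain that makes dedicated cooling worthwhile for long exposures.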

Signal Processing and Internal De-noising Algorithms

Modern image signal processors (ISPs) use sophisticated mathematics to identify and remove noise from dark areas. Techniques like “Black Level Compensation” involve measuring the signal from “masked pixels” (often called optical black pixels), which sit on the edge of the sensor and are physically covered so they never see light. Whatever signal these pixels report must come from the electronics alone, so the processor can subtract that baseline offset from the rest of the image. This removes the constant lift in the shadows; the random, frame-to-frame grain is left to separate de-noising stages. Computational correction of this kind is one of the most practical ways to reach the “darkest black” in compact imaging systems where physical cooling is impractical.
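The core of black level compensation can be sketched in a few lines. This is a simplified illustration, not any particular ISP’s implementation: it averages the masked pixels into a single offset and clips negative results to zero, whereas real pipelines often work per channel or per row and may defer clipping. The function names and pixel values are hypothetical.

```python
def black_level(masked_pixels):
    """Mean raw value of the light-shielded pixels: the dark offset."""
    return sum(masked_pixels) / len(masked_pixels)

def subtract_black_level(active_pixels, masked_pixels):
    """Remove the dark offset from the active pixels, clipping at zero."""
    offset = black_level(masked_pixels)
    return [max(0.0, value - offset) for value in active_pixels]

# Hypothetical raw values: masked pixels hover around 64.
masked = [64, 66, 63, 63]
active = [64, 70, 1000, 62]

print(black_level(masked))                    # 64.0
print(subtract_black_level(active, masked))   # [0.0, 6.0, 936.0, 0.0]
```

Note that the pixel reading 62 ends up at zero rather than at a negative value; clipping like this is convenient for display but discards information that later noise reduction could have used.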

Hardware Innovations: From Vantablack Coatings to CMOS Advancements

To capture the darkest black, the physical construction of the camera hardware must be as sophisticated as the software. This involves preventing light from bouncing around inside the lens barrel and ensuring that the sensor can capture every single available photon.

Anti-Reflective Coatings in Lens Design

True black can be ruined before the light even reaches the sensor. “Flare” and “ghosting” occur when light reflects off the internal elements of a lens, scattering into the dark areas of the frame. To combat this, manufacturers use nano-coatings—microscopic structures that trap light. Some of the most advanced lenses now use materials inspired by the same principles as Vantablack to ensure that the “blacks” remain pure and untainted by stray light.

Back-Illuminated Sensors (BSI) and Low-Light Performance

The physical layout of a sensor impacts its black levels. In traditional front-illuminated sensors, the wiring sits in front of the light-sensitive area, blocking some of the incoming light and reducing efficiency. Back-illuminated (BSI) sensors flip this architecture, putting the wiring behind the photodiodes. Because each pixel then collects more light, less amplification is needed for a given scene, which helps keep the signal in the “blacks” cleaner and lower in noise. This hardware evolution has been one of the biggest leaps in allowing small-format cameras to approach professional-grade black levels.

The Future of Deep Blacks in Aerial and Industrial Imaging

As we look toward the future, the definition of the “darkest black” continues to evolve alongside Artificial Intelligence and new sensor materials. We are moving toward an era where “black” is not just a lack of light, but a perfectly reconstructed data point.

AI-Enhanced Low Light Recovery

Artificial Intelligence is now being used to “predict” what the darkest black should look like. Neural networks trained on pairs of noisy, short-exposure images and clean, long-exposure images learn to strip away the “veil” of noise, in some cases in real time. This technology allows cameras to see into shadows that were previously considered “pure black” voids, extracting detail while maintaining a clean, deep black floor where no data exists.

Organic CMOS and the Next Frontier

The next revolution in imaging may come from Organic CMOS sensors. Unlike traditional silicon sensors, which detect light in silicon photodiodes, organic sensors detect it in a thin organic photoconductive film layered over the readout circuitry. These films can offer much higher light absorption coefficients than silicon, and developers aim to pair that with very low dark current. If successful, Organic CMOS could record a deeper black than current digital devices, moving closer to the theoretical limits of physics and providing a level of contrast that currently exists only in the most advanced laboratory settings.

In conclusion, the “darkest black” in cameras and imaging is a moving target. It is the boundary between the known (signal) and the unknown (noise). As sensor technology moves closer to the quantum limit of light detection, our ability to capture the void improves, giving us images with more depth, more realism, and a more profound sense of immersion. Whether it is through cooling the sensor, applying nano-coatings to glass, or using AI to scrub away electronic interference, the industry’s obsession with the darkest black continues to drive some of the most significant innovations in modern visual technology.