In the field of unmanned aerial vehicles (UAVs) and autonomous systems, “language learning” is best read as a metaphor rather than a linguistic term. In the context of drone technology, the skeletal structure of language learning refers to the layered architecture that allows a machine to interpret data, communicate via protocols, and build a working model of its environment through machine learning and artificial intelligence. This structural framework is what separates a simple remote-controlled toy from an autonomous industrial tool capable of mapping, sensing, and decision-making.

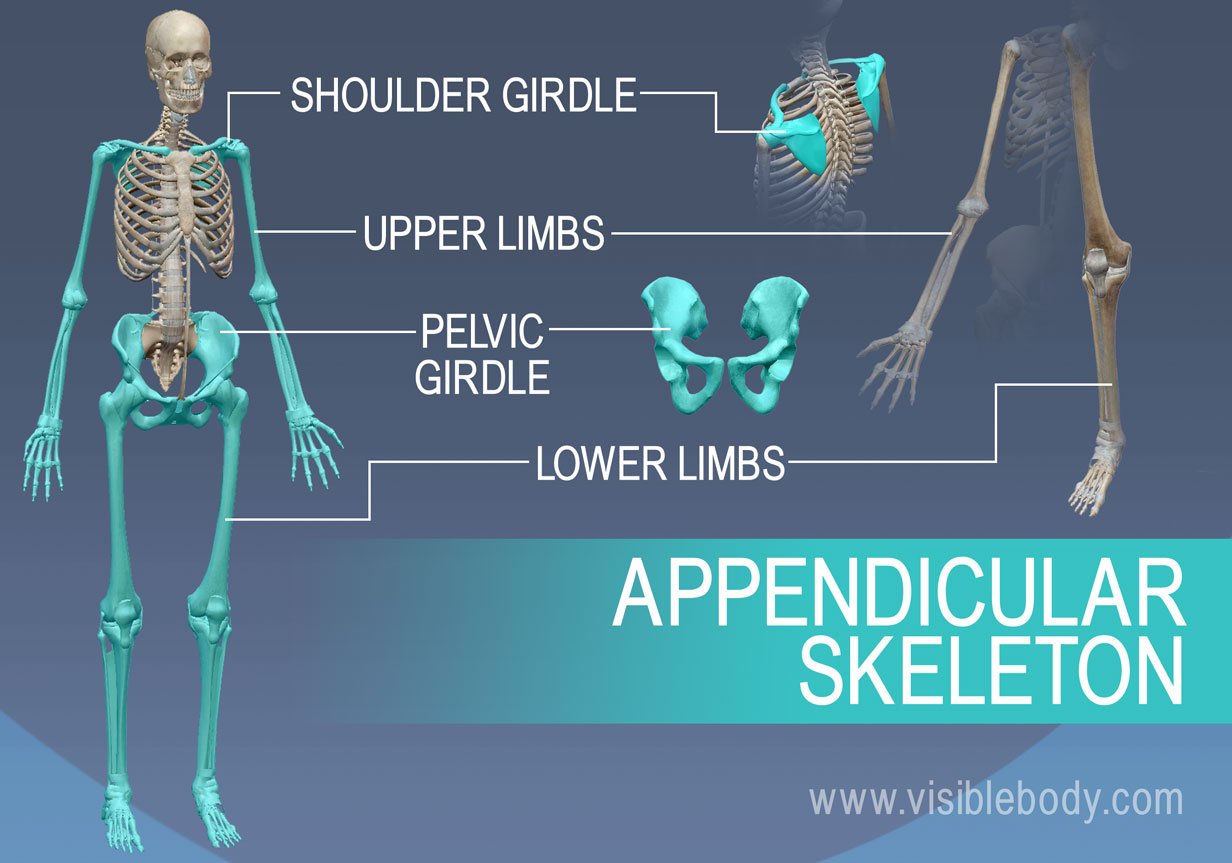

The skeletal structure of this learning process is built upon three primary pillars: the communication protocols (the syntax), the neural network architectures (the cognitive brain), and the sensor fusion algorithms (the sensory perception). Together, these elements allow a drone to acquire “fluency” in its operational theater.

The Syntactic Backbone: Communication Protocols and Software Frameworks

Just as human language relies on a rigid grammar and syntax to convey meaning, the skeletal structure of drone “learning” begins with its communication protocols. These are the standardized sets of rules that govern how data is packaged, transmitted, and interpreted between the flight controller, the peripheral sensors, and the ground control station (GCS).

MAVLink: The Universal Grammar of UAVs

Many open-source drone ecosystems, including PX4 and ArduPilot, are built around MAVLink (Micro Air Vehicle Link), a very lightweight messaging protocol distributed as a header-only message marshaling library. If we view drone operation as a language, MAVLink is the fundamental grammar. It defines how “heartbeat” messages are sent to confirm a connection, how telemetry data (GPS position, altitude, attitude) is reported, and how mission commands are issued.

The skeletal importance of MAVLink lies in its efficiency. For an AI to “learn” from or respond to its environment, it needs a steady stream of low-latency data. MAVLink's compact binary framing keeps per-message overhead small, and because the message definitions are shared, the “language” stays consistent across any hardware that implements the protocol, regardless of manufacturer.
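As a rough illustration of that grammar, the sketch below packs and parses a MAVLink 1 HEARTBEAT frame using only the Python standard library. The helper names (`x25_crc`, `pack_heartbeat`, `parse_heartbeat`) and the default field values are assumptions chosen for this example; real projects would normally rely on a generated MAVLink library rather than hand-packing bytes.

```python
import struct

STX_V1 = 0xFE             # MAVLink 1 start-of-frame marker
HEARTBEAT_ID = 0          # message id of HEARTBEAT
HEARTBEAT_CRC_EXTRA = 50  # per-message seed mixed into the checksum


def x25_crc(data: bytes) -> int:
    """CRC-16/MCRF4XX, the X.25-style checksum MAVLink uses."""
    crc = 0xFFFF
    for byte in data:
        tmp = byte ^ (crc & 0xFF)
        tmp = (tmp ^ (tmp << 4)) & 0xFF
        crc = ((crc >> 8) ^ (tmp << 8) ^ (tmp << 3) ^ (tmp >> 4)) & 0xFFFF
    return crc


def pack_heartbeat(seq, sysid, compid, mav_type=2, autopilot=3,
                   base_mode=0, custom_mode=0, system_status=4):
    """Build one frame; defaults are illustrative (quadrotor, active)."""
    # Wire order: custom_mode (uint32) first, then five uint8 fields.
    payload = struct.pack("<IBBBBB", custom_mode, mav_type, autopilot,
                          base_mode, system_status, 3)  # 3 = protocol version
    header = struct.pack("<BBBBB", len(payload), seq, sysid, compid,
                         HEARTBEAT_ID)
    crc = x25_crc(header + payload + bytes([HEARTBEAT_CRC_EXTRA]))
    return bytes([STX_V1]) + header + payload + struct.pack("<H", crc)


def parse_heartbeat(frame: bytes) -> dict:
    """Validate the framing and checksum, then decode the payload."""
    if frame[0] != STX_V1:
        raise ValueError("not a MAVLink 1 frame")
    length, seq, sysid, compid, msgid = struct.unpack_from("<BBBBB", frame, 1)
    body = frame[1:6 + length]  # header (without STX) plus payload
    (crc,) = struct.unpack_from("<H", frame, 6 + length)
    if msgid != HEARTBEAT_ID:
        raise ValueError("not a HEARTBEAT message")
    if crc != x25_crc(body + bytes([HEARTBEAT_CRC_EXTRA])):
        raise ValueError("checksum mismatch")
    custom_mode, mav_type, autopilot, base_mode, status, version = \
        struct.unpack("<IBBBBB", frame[6:6 + length])
    return {"seq": seq, "sysid": sysid, "compid": compid, "type": mav_type,
            "autopilot": autopilot, "base_mode": base_mode,
            "custom_mode": custom_mode, "system_status": status,
            "mavlink_version": version}


frame = pack_heartbeat(seq=7, sysid=1, compid=1)
print(len(frame), parse_heartbeat(frame)["system_status"])
```

The whole heartbeat fits in 17 bytes (a 6-byte header, a 9-byte payload, and a 2-byte checksum), which hints at why the protocol suits low-bandwidth telemetry links.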

ROS (Robot Operating System) and Middleware

While MAVLink handles communication with the flight controller, the Robot Operating System (ROS) provides the framework for higher-level software. ROS is not an operating system in the traditional sense but a middleware suite: a collection of libraries and tools for robot software development. In the skeletal structure of drone learning, ROS serves as the “vocabulary” builder. Developers create “nodes,” individual software modules that handle specific tasks such as obstacle avoidance or SLAM (Simultaneous Localization and Mapping), and these nodes exchange messages through a publish-subscribe model. This modularity is essential for innovation because it lets the drone gain new “skills” (like AI-based object tracking) without rewriting the entire flight code.
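The publish-subscribe idea can be sketched in a few lines of plain Python. This is a toy in-process message bus, not the ROS API; the `MessageBus` class, the topic names, and the 2 m braking rule are all hypothetical and exist only to show how loosely coupled nodes exchange messages.

```python
from collections import defaultdict


class MessageBus:
    """Toy stand-in for a pub-sub middleware (not the ROS API)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, callback):
        self._subscribers[topic].append(callback)

    def publish(self, topic, message):
        # Deliver the message to every callback registered on this topic.
        for callback in self._subscribers[topic]:
            callback(message)


bus = MessageBus()
commands = []


def avoidance_node(distance_m):
    # Hypothetical rule: brake when an obstacle is closer than 2 m.
    bus.publish("/cmd", "brake" if distance_m < 2.0 else "cruise")


bus.subscribe("/range", avoidance_node)  # the node listens to range readings
bus.subscribe("/cmd", commands.append)   # a logger listens to commands

for reading in (5.0, 1.5):
    bus.publish("/range", reading)

print(commands)  # ['cruise', 'brake']
```

Neither node calls the other directly, so a new “skill” can be added by subscribing another callback to an existing topic.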

The Cognitive Architecture: Neural Networks and Deep Learning

For a drone to truly “learn,” it must move beyond pre-programmed responses toward autonomous decision-making. This is where the skeletal structure of neural networks becomes vital. Here, “language learning” for a drone is essentially the process of training a model to recognize patterns in high-dimensional data.

Convolutional Neural Networks (CNNs) and Computer Vision

The primary “eye” of the drone is the camera, but the “vision” is provided by Convolutional Neural Networks. These are deep learning models designed to process grid-like data such as image pixels. The skeletal structure of a CNN consists of an input layer, hidden layers (convolutional, pooling, and fully connected), and an output layer, an arrangement loosely inspired by the visual cortex.
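To make the hidden layers concrete, here is a minimal pure-Python sketch of one convolution, one ReLU activation, and one max-pooling step on a tiny grayscale image. The image and the hand-picked edge kernel are assumptions for illustration; a trained network would learn its kernels from data and stack many such layers.

```python
def conv2d(image, kernel):
    """Valid-mode 2D cross-correlation, the 'convolution' used in CNNs."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [[sum(image[r + i][c + j] * kernel[i][j]
                 for i in range(kh) for j in range(kw))
             for c in range(out_w)]
            for r in range(out_h)]


def relu(fmap):
    """Zero out negative activations."""
    return [[max(0, v) for v in row] for row in fmap]


def max_pool(fmap, size=2):
    """Keep the strongest activation in each size x size window."""
    return [[max(fmap[r + i][c + j]
                 for i in range(size) for j in range(size))
             for c in range(0, len(fmap[0]) - size + 1, size)]
            for r in range(0, len(fmap) - size + 1, size)]


# 5x5 image: dark on the left, bright on the right (a vertical edge).
image = [[0, 0, 1, 1, 1] for _ in range(5)]
# Hand-picked kernel that responds to dark-to-bright transitions.
edge_kernel = [[-1, 1],
               [-1, 1]]

pooled = max_pool(relu(conv2d(image, edge_kernel)))
print(pooled)  # [[2, 0], [2, 0]]
```

The nonzero column in the output marks where the edge sits, which is the kind of low-level feature that later layers combine into object-level patterns.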

Through supervised learning, a model is trained on thousands of labeled images of power lines, agricultural crops, or human figures. During training, the weights of the neural network are adjusted to reduce the error between its predictions and the labels, until it can “translate” a raw video feed into a semantic map. This is the basic skeletal structure of how a drone learns to distinguish a tree from a building. When every pixel is assigned a class label in this way, the task is known as semantic segmentation.
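The last step of semantic segmentation can be sketched as a per-pixel choice of the highest-scoring class. The class list and the score values below are made up for illustration; in practice the scores would come from the final layer of a trained network.

```python
CLASSES = ["sky", "tree", "building"]  # hypothetical label set


def segment(scores):
    """Turn per-pixel class scores into a label map.

    scores[r][c] is a list with one score per entry in CLASSES.
    """
    return [[CLASSES[max(range(len(px)), key=px.__getitem__)] for px in row]
            for row in scores]


# Made-up scores for a 2x2 image.
scores = [
    [[0.9, 0.05, 0.05], [0.2, 0.7, 0.1]],
    [[0.1, 0.6, 0.3], [0.1, 0.2, 0.7]],
]

label_map = segment(scores)
print(label_map)  # [['sky', 'tree'], ['tree', 'building']]
```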

Reinforcement Learning and Behavioral Adaptation

Beyond simple recognition, drones use reinforcement learning (RL) to learn the “language” of flight dynamics. In this structure, an agent (the drone) takes actions in an environment to maximize a cumulative reward. For example, in developing autonomous racing drones, the “language” being learned is the optimal racing line. The skeleton of this process is a feedback loop of state, action, and reward. By crashing thousands of times in a simulated environment, the AI “learns” the physics of its own airframe, eventually mastering complex maneuvers that would be impractical for a human engineer to program by hand.
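The state, action, and reward loop can be illustrated with tabular Q-learning on a toy problem. The five-cell corridor, the reward values, and the hyperparameters below are assumptions for this sketch; real flight controllers are typically trained with far richer simulators and function approximators.

```python
import random

N_STATES = 5         # positions 0..4 along a corridor
CRASH, GOAL = 0, 4   # terminal states: a wall and the finish gate
ACTIONS = (-1, +1)   # index 0 = move left, index 1 = move right


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn action values by trial and error in the simulated corridor."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _episode in range(episodes):
        state = rng.choice([1, 2, 3])
        for _step in range(100):  # cap the episode length
            if rng.random() < epsilon:
                action = rng.randrange(2)  # explore
            else:
                action = max((0, 1), key=lambda a: q[state][a])  # exploit
            nxt = state + ACTIONS[action]
            reward = 1.0 if nxt == GOAL else -1.0 if nxt == CRASH else 0.0
            done = nxt in (CRASH, GOAL)
            # Q-learning target: reward plus discounted best future value.
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
            if done:
                break
    return q


def greedy_policy(q):
    """Best learned action for each non-terminal state."""
    return ["left" if row[0] > row[1] else "right" for row in q[1:GOAL]]


q_table = train()
print(greedy_policy(q_table))
```

Early episodes end in many simulated crashes; the negative rewards from those crashes are what push the learned values toward the goal.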

Sensor Fusion: The Alphabet of Environmental Perception

A drone cannot learn if it cannot perceive. The “alphabet” of its language consists of the raw data points provided by its sensor suite. However, raw data is often noisy and contradictory. The skeletal structure of language learning in drones relies heavily on “sensor fusion”—the integration of data from multiple sources to create a more accurate representation of reality.

The Kalman Filter and State Estimation

The Kalman Filter is a recursive estimation algorithm that serves as a cornerstone of the drone’s internal logic. It acts as a real-time “spell checker” for the drone’s sensors. If the GPS says the drone is at one coordinate, but the IMU (Inertial Measurement Unit) suggests a sudden acceleration in the opposite direction, the Kalman Filter weights each source by its estimated uncertainty and produces the statistically most probable “truth.” This skeletal layer of logic is what allows a drone to maintain a stable hover and provides the reliable data foundation necessary for higher-level AI learning.
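A one-dimensional version shows the predict and update cycle in miniature. This sketch tracks position along a single axis, and the noise variances and sensor readings are assumed values; a real flight stack estimates a full state vector (position, velocity, attitude) with matrix-valued equations.

```python
def predict(x, p, velocity, dt, process_var):
    """Propagate the position estimate using an IMU-derived velocity."""
    return x + velocity * dt, p + process_var


def update(x, p, z, meas_var):
    """Blend in a GPS position fix z, weighted by the Kalman gain."""
    k = p / (p + meas_var)  # gain: how much to trust the measurement
    return x + k * (z - x), (1.0 - k) * p


x, p = 0.0, 1.0  # initial position estimate (m) and its variance
x, p = predict(x, p, velocity=1.0, dt=1.0, process_var=0.1)
x, p = update(x, p, z=1.2, meas_var=0.5)
print(round(x, 4), round(p, 5))  # 1.1375 0.34375
```

The fused estimate lands between the prediction (1.0 m) and the GPS fix (1.2 m), and its variance is lower than that of either source alone.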

LiDAR and Ultrasonic Integration

For drones involved in mapping and remote sensing, the skeletal structure of learning includes the interpretation of spatial depth. LiDAR (Light Detection and Ranging) sends out laser pulses to create a 3D point cloud of the environment. The “learning” here involves the drone’s ability to take this massive, unstructured cloud of points and convert it into a navigable 3D map. By combining LiDAR with ultrasonic sensors (for close-range obstacle detection) and optical flow sensors (for position holding in GPS-denied environments), the drone builds a comprehensive “understanding” of its physical surroundings.
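One simple way to turn an unstructured point cloud into something navigable is to bin the points into a 2D occupancy grid. The cell size, the height threshold, and the sample points below are assumptions for illustration; production mapping stacks use more elaborate structures and filtering.

```python
import math


def occupancy_grid(points, cell_size=1.0, min_height=0.2):
    """Return the set of (col, row) ground cells that contain an obstacle.

    points is an iterable of (x, y, z) coordinates in meters.
    """
    occupied = set()
    for x, y, z in points:
        if z >= min_height:  # ignore returns from the ground itself
            occupied.add((math.floor(x / cell_size),
                          math.floor(y / cell_size)))
    return occupied


cloud = [
    (0.4, 0.3, 1.0),    # obstacle
    (0.6, 0.9, 2.0),    # same obstacle, same cell
    (2.5, 1.2, 0.05),   # ground return, filtered out
    (3.1, -0.2, 1.5),   # second obstacle
]

grid = occupancy_grid(cloud)
print(sorted(grid))  # [(0, 0), (3, -1)]
```

A path planner can then treat occupied cells as blocked and search for a route through the free ones.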

Autonomous Follow Mode and Predictive Analytics

One of the most visible applications of the skeletal structure of AI learning in drones is “Follow Mode,” or autonomous tracking. Here the drone must not only see a target but also predict its future movements.

Transforming Visual Data into Predictive Flight Paths

When a drone uses AI Follow Mode, it is engaging in a continuous loop of language processing. It identifies the “subject” (the noun), determines its “action” (the verb), and calculates a “response” (the syntax of the flight path). The skeletal structure here can involve “Transformers,” the same family of AI architecture used in Large Language Models like GPT.

In a drone, a Transformer model can be used to process sequences of visual data over time, allowing the drone to understand context. For instance, if a mountain biker disappears behind a tree, a drone equipped with this skeletal learning structure doesn’t simply stop; it uses its predictive model to anticipate where the biker will emerge based on their previous speed and trajectory.
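The occlusion case can be illustrated with a much simpler stand-in than a Transformer: constant-velocity extrapolation from the last two sightings. This baseline is swapped in for clarity and is not how a learned sequence model works internally; the `predict_position` helper and the track values are hypothetical.

```python
def predict_position(track, horizon_s):
    """Extrapolate a target's position assuming constant velocity.

    track is a list of (t, x, y) observations, oldest first.
    """
    (t0, x0, y0), (t1, x1, y1) = track[-2], track[-1]
    dt = t1 - t0
    vx, vy = (x1 - x0) / dt, (y1 - y0) / dt
    return x1 + vx * horizon_s, y1 + vy * horizon_s


# Last two sightings of the biker before they pass behind a tree.
track = [(0.0, 0.0, 0.0), (1.0, 5.0, 1.0)]

# Where to point the camera two seconds after the last sighting.
print(predict_position(track, horizon_s=2.0))  # (15.0, 3.0)
```

A learned model aims to improve on this baseline by using a longer history and scene context, for example anticipating that the rider will slow down for a corner.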

Mapping and Remote Sensing as a Form of Translation

In industrial applications, the learning structure is focused on translation: translating physical reality into digital twins. Through photogrammetry and remote sensing, a drone “learns” the topography of a construction site or the health of a vineyard. The skeletal structure of this innovation involves the alignment of 2D images into a 3D space using “keypoints,” distinctive image features that are matched across overlapping photos. This mathematical language allows the drone’s software to reconstruct the world with centimeter-level accuracy under good conditions (for example, with surveyed ground control points or RTK positioning), providing actionable insights that would be invisible to the naked eye.
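The geometric core of lifting a matched keypoint into 3D can be sketched with the textbook stereo relation: depth equals focal length times baseline divided by disparity. This assumes an idealized, rectified pair of images taken from two positions a known distance apart; real photogrammetry pipelines estimate the camera poses as well and refine everything jointly. The function name and the numbers are illustrative.

```python
def depth_from_disparity(focal_px, baseline_m, x_left_px, x_right_px):
    """Depth of a keypoint seen in two rectified images.

    focal_px: focal length in pixels; baseline_m: distance between the
    two camera positions; x_*_px: the keypoint's column in each image.
    """
    disparity = x_left_px - x_right_px
    if disparity <= 0:
        raise ValueError("keypoint must shift between the two views")
    return focal_px * baseline_m / disparity


# Two photos taken 10 m apart; the same keypoint shifts by 600 pixels.
depth_m = depth_from_disparity(focal_px=3000, baseline_m=10.0,
                               x_left_px=2100, x_right_px=1500)
print(depth_m)  # 50.0
```

Because depth is inversely proportional to disparity, small matching errors matter more for distant points, which is one reason overlap and image sharpness affect survey accuracy.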

The Future of the Skeletal Structure: Edge Computing and On-Board AI

The next frontier in the skeletal structure of drone language learning is the move toward “Edge AI.” Traditionally, complex learning and processing were done in the cloud or on powerful ground-based computers. However, innovation in hardware, specifically the development of specialized NPU (Neural Processing Unit) chips, is allowing more of this skeletal structure to run on the aircraft itself.

Real-Time Decision Making with Minimal Latency

By hosting the learning architecture on-board, drones avoid the round trip to a ground station or cloud server and can process the “language” of their environment within milliseconds. This is critical for obstacle avoidance at high speeds or for autonomous swarming, where multiple drones must communicate and “learn” from each other’s positions to avoid collisions. The skeletal structure of a drone swarm is a form of distributed AI, where the “language” is a constant, high-speed exchange of positional data and intent.
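A minimal sketch of the swarm idea: each drone shares its position, and a pairwise check flags any two drones that are closer than a safety distance. The `conflicts` helper, the drone ids, and the 6 m threshold are assumptions; a real swarm would also exchange velocities and planned trajectories.

```python
import math
from itertools import combinations


def conflicts(positions, min_separation_m):
    """Return id pairs of drones closer together than the safety limit.

    positions maps a drone id to its (x, y, z) position in meters.
    """
    return [(a, b)
            for (a, pa), (b, pb) in combinations(sorted(positions.items()), 2)
            if math.dist(pa, pb) < min_separation_m]


shared_positions = {
    "d1": (0.0, 0.0, 10.0),
    "d2": (3.0, 4.0, 10.0),   # 5 m from d1
    "d3": (50.0, 0.0, 10.0),
}

print(conflicts(shared_positions, min_separation_m=6.0))  # [('d1', 'd2')]
```

The pairwise check grows quadratically with swarm size, so larger swarms usually restrict it to nearby neighbors.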

Natural Language Processing (NLP) in Human-Drone Interaction

Finally, literal language learning is beginning to enter drone tech. Through NLP, operators can give high-level commands to drones using natural speech. Instead of manually inputting coordinates, a pilot might say, “Survey the northern perimeter and alert me to any structural anomalies.” The skeletal structure of the drone’s AI then breaks this sentence down: it identifies the task (survey), the location (northern perimeter), and the specific condition to look for (structural anomalies). This represents a convergence of human language and machine learning, built upon the skeletal structures described above.
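The breakdown of that sentence can be mimicked with simple pattern matching. This is a rule-based toy, not a trained language model; the `TASKS` vocabulary and the regular expressions are assumptions that only fit commands shaped like the example.

```python
import re

TASKS = ("survey", "inspect", "follow")  # hypothetical command vocabulary


def parse_command(text):
    """Extract task, location, and condition from a spoken-style command."""
    lowered = text.lower()
    task = next((t for t in TASKS if re.search(rf"\b{t}\b", lowered)), None)
    location = re.search(r"\bthe ([\w\s]+?) and\b", lowered)
    condition = re.search(r"\bany ([\w\s]+?)[.!]?$", lowered)
    return {
        "task": task,
        "location": location.group(1) if location else None,
        "condition": condition.group(1) if condition else None,
    }


command = "Survey the northern perimeter and alert me to any structural anomalies."
parsed = parse_command(command)
print(parsed)
```

For the example sentence this yields the task `survey`, the location `northern perimeter`, and the condition `structural anomalies`; a production system would replace the patterns with a learned intent and slot model.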