In the rapidly evolving landscape of Unmanned Aerial Vehicles (UAVs) and remote sensing, the transition from hobbyist flight to professional-grade data acquisition has necessitated a shift toward rigorous scientific validation. As drone technology integrates deeper into sectors like autonomous mapping, precision agriculture, and infrastructure inspection, the ability to prove the reliability of captured data is paramount. At the heart of this validation process lies a fundamental statistical tool: the T-test.

While the term might sound like a relic from a college statistics course, the T-test is a critical component in the development and deployment of drone innovations. Whether a developer is testing a new AI-based obstacle avoidance algorithm or a surveyor is validating the accuracy of a LiDAR sensor against ground truth data, the T-test provides a principled, quantitative way to move from “observation” to “evidence.”

What is a T-Test and Why Does it Matter for Drone Innovation?

In the context of drone tech and innovation, a T-test is a statistical hypothesis test used to determine if there is a significant difference between the means of two groups. In the world of high-tech sensors and autonomous systems, data is rarely “perfect.” There is always noise, environmental interference, and margin for error. The T-test helps engineers and data scientists distinguish between a genuine performance improvement and a mere fluke caused by random variation.

Defining the Statistical Foundation

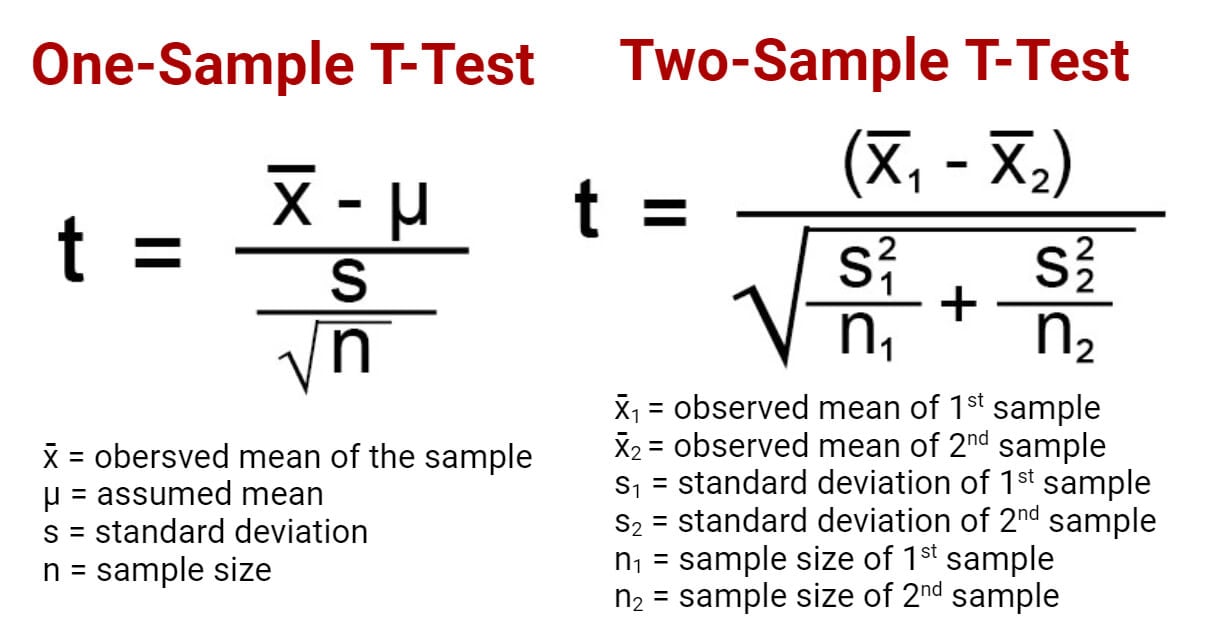

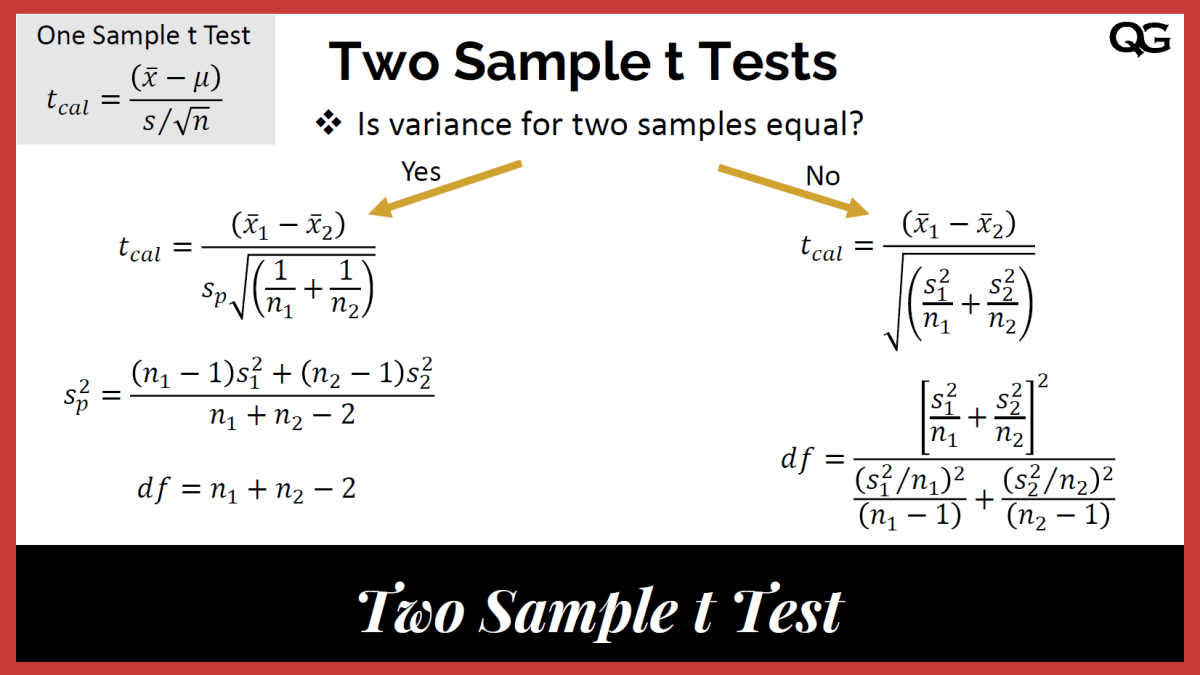

The T-test computes a “T-statistic”: the difference between the group means divided by the standard error of that difference, which reflects both how much the measurements vary within each group and how many were taken. In drone research, such as testing the battery efficiency of a new propulsion system, one might compare the flight times of ten drones using a standard ESC (Electronic Speed Controller) against ten drones using a newly developed AI-optimized ESC. The T-test then indicates whether the AI-optimized drones genuinely fly longer or whether the gap could plausibly be explained by random variation, such as changing wind conditions during the test.
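As a worked formula, the classic (Student’s) two-sample statistic for the ESC comparison can be written as follows, assuming both groups share a common variance. Here $\bar{x}_1, \bar{x}_2$ are the mean flight times, $s_1^2, s_2^2$ the sample variances, $n_1 = n_2 = 10$ the group sizes, and $\nu$ the degrees of freedom used to look up the p-value (18 in this example).

$$
t = \frac{\bar{x}_1 - \bar{x}_2}{s_p \sqrt{\dfrac{1}{n_1} + \dfrac{1}{n_2}}},
\qquad
s_p^2 = \frac{(n_1 - 1)\, s_1^2 + (n_2 - 1)\, s_2^2}{n_1 + n_2 - 2},
\qquad
\nu = n_1 + n_2 - 2
$$

A large $|t|$ means the gap between the means is big relative to the noise in the measurements.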

The Role of Null and Alternative Hypotheses

Innovation thrives on proving that a new method is better than the old one. In statistical terms, this involves the Null Hypothesis ($H_0$) and the Alternative Hypothesis ($H_1$).

- The Null Hypothesis suggests that there is no real difference between the two groups being tested (e.g., “The new firmware update does not improve GPS lock-on speed”).

- The Alternative Hypothesis suggests that there is a statistically significant difference.
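For the firmware example, writing $\mu_{\text{old}}$ and $\mu_{\text{new}}$ (illustrative notation) for the true mean GPS lock-on times before and after the update, the hypotheses could be stated as:

$$
H_0: \mu_{\text{new}} = \mu_{\text{old}}
\qquad \text{vs.} \qquad
H_1: \mu_{\text{new}} \neq \mu_{\text{old}}
$$

This two-sided form tests for a change in either direction. If the team committed in advance to testing only for faster lock-on, the one-sided alternative $H_1: \mu_{\text{new}} < \mu_{\text{old}}$ could be used instead; picking the direction after seeing the data inflates the false-positive rate.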

For a tech company looking to market a “more accurate” mapping drone, rejecting the null hypothesis with a well-designed T-test gives stakeholders statistical evidence, rather than anecdote, that the improvement is real.

P-Values and Significance Levels in Aerial Data

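The “P-value” is the result of the T-test that everyone looks for. It is the probability of observing a difference at least as large as the one measured if the null hypothesis were true, that is, if the change had no real effect. Researchers compare it against a significance level (α) chosen in advance, usually 0.05 (5%). If a new autonomous flight mode shows a 10% reduction in power consumption with a P-value of 0.01, the result is significant at the 5% level: a difference at least that large would arise by chance only about 1% of the time if the flight mode made no difference. This is strong evidence for the improvement, but it is not the same as being “99% sure” that the technical changes caused it; that conclusion also depends on a sound experimental design.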
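To make the definition concrete, the sketch below computes a two-sided p-value from a t-statistic and its degrees of freedom using only Python’s standard library, by numerically integrating the Student’s t density. The function names and the example value t = 2.9 are hypothetical; in practice `scipy.stats.t.sf(abs(t), df) * 2` returns the same quantity directly.

```python
import math


def t_pdf(x: float, df: int) -> float:
    """Density of Student's t distribution with df degrees of freedom."""
    log_norm = (
        math.lgamma((df + 1) / 2)
        - math.lgamma(df / 2)
        - 0.5 * math.log(df * math.pi)
    )
    return math.exp(log_norm) * (1 + x * x / df) ** (-(df + 1) / 2)


def two_sided_p_value(t: float, df: int, steps: int = 2000) -> float:
    """P(|T| >= |t|) when H0 is true, via Simpson's rule on [0, |t|].

    The t density is symmetric and has area 0.5 on [0, inf), so the
    area in both tails beyond |t| equals 1 - 2 * (area on [0, |t|]).
    """
    upper = abs(t)
    if upper == 0:
        return 1.0
    if steps % 2:  # Simpson's rule needs an even number of intervals
        steps += 1
    h = upper / steps
    total = t_pdf(0.0, df) + t_pdf(upper, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    area = total * h / 3
    return max(0.0, 1.0 - 2.0 * area)  # clamp tiny negative rounding error


ALPHA = 0.05  # significance level, fixed before looking at the data

# Hypothetical result: t = 2.9 from two groups of ten drones (df = 18).
p = two_sided_p_value(2.9, df=18)
print(f"p = {p:.4f}; reject H0 at alpha = {ALPHA}: {p < ALPHA}")
```

The decision rule is simply `p < ALPHA`; the p-value itself says nothing about how large or practically useful the improvement is.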

Types of T-Tests Applied in Aerial Mapping and Remote Sensing

Not all data sets are structured the same way, and as such, different versions of the T-test are applied depending on the drone technology being evaluated. Choosing the right test is essential for maintaining data integrity in remote sensing and AI development.

Independent Samples T-Test: Comparing Different Hardware

The independent samples T-test (or two-sample T-test) is used when comparing the means of two distinct, unrelated groups. In drone innovation, this is most commonly used for A/B testing hardware configurations.

- Example: An engineering firm wants to know if a triple-frequency GNSS receiver provides significantly better positioning accuracy than a dual-frequency receiver. They fit each receiver to otherwise identical drones, fly both over the same path multiple times, and compare the mean horizontal error. Because the two sets of flights are independent of each other, this test indicates whether the accuracy difference between the two hardware configurations is statistically significant, which is what matters when choosing equipment for precision mapping.
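A minimal standard-library sketch of the independent samples test for the receiver comparison follows. The error values are hypothetical, the function name is illustrative, and the critical value 2.145 is the two-sided 5% point of the t distribution for 14 degrees of freedom from a standard table.

```python
import math
import statistics


def pooled_t_test(a: list[float], b: list[float]) -> tuple[float, int]:
    """Student's two-sample t-statistic (equal variances assumed) and its df."""
    n1, n2 = len(a), len(b)
    # statistics.variance uses the n - 1 (sample) denominator.
    pooled_var = ((n1 - 1) * statistics.variance(a)
                  + (n2 - 1) * statistics.variance(b)) / (n1 + n2 - 2)
    se = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    t = (statistics.mean(a) - statistics.mean(b)) / se
    return t, n1 + n2 - 2


# Hypothetical mean horizontal error (cm) per pass, 8 passes per receiver.
dual_freq = [4.1, 3.8, 4.5, 4.0, 4.3, 3.9, 4.6, 4.2]
triple_freq = [3.2, 3.5, 3.0, 3.4, 3.1, 3.6, 3.3, 2.9]

t, df = pooled_t_test(dual_freq, triple_freq)
T_CRIT = 2.145  # two-sided 5% critical value for df = 14 (standard t table)
print(f"t = {t:.2f}, df = {df}, significant: {abs(t) > T_CRIT}")
```

With SciPy available, `scipy.stats.ttest_ind(dual_freq, triple_freq)` computes the same statistic (its default is `equal_var=True`) and also returns the p-value.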

Paired Samples T-Test: Pre- and Post-Processing Calibration

The paired samples T-test is used when the same subjects are measured twice, for example before and after a change, so that every observation has a natural partner. This is invaluable for software and firmware innovation within the drone industry.

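- Example: A developer creates a new image-denoising algorithm for thermal sensors. They take a set of raw thermal images (the “before” group) and process them through the new AI filter (the “after” group). By using a paired T-test on the per-image differences, they can estimate the mean improvement in signal-to-noise ratio across the same set of images and test whether it differs significantly from zero. Because each image is compared with itself, differences in scene content drop out, which makes the test more sensitive than comparing two unrelated groups. Inspecting the individual differences is still worthwhile, since a positive average can hide thermal signatures that the filter makes worse.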
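The paired test reduces to a one-sample test on the per-image differences, as this standard-library sketch shows. The SNR values and the function name are hypothetical; 2.571 is the two-sided 5% critical value for 5 degrees of freedom from a standard t table.

```python
import math
import statistics


def paired_t_test(before: list[float], after: list[float]) -> tuple[float, float, int]:
    """Return (mean difference, t-statistic, df) for paired measurements.

    A paired t-test is a one-sample t-test on the per-item differences,
    testing whether their mean differs from zero.
    """
    if len(before) != len(after):
        raise ValueError("paired samples must have the same length")
    diffs = [post - pre for pre, post in zip(before, after)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    se = statistics.stdev(diffs) / math.sqrt(n)
    return mean_diff, mean_diff / se, n - 1


# Hypothetical SNR (dB) for the same six thermal frames, raw vs. denoised.
raw = [18.2, 20.1, 17.5, 22.0, 19.3, 21.4]
denoised = [21.0, 22.4, 20.9, 24.1, 21.7, 23.9]

gain, t, df = paired_t_test(raw, denoised)
T_CRIT = 2.571  # two-sided 5% critical value for df = 5 (standard t table)
print(f"mean gain = {gain:.2f} dB, t = {t:.2f}, significant: {abs(t) > T_CRIT}")
```

`scipy.stats.ttest_rel(denoised, raw)` is the SciPy equivalent when that library is in use.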

One-Sample T-Test: Benchmarking Against Ground Truth

In mapping and remote sensing, drones are often used to generate digital twin models. A one-sample T-test compares the mean of a single group against a known value or “standard.”

- Example: A drone-based LiDAR system captures the elevation of a runway. The “ground truth” elevation is already known through traditional land surveying. A one-sample T-test is performed to see if the drone’s mean elevation data deviates significantly from the surveyed standard. A non-significant result is consistent with no systematic bias but does not prove it, especially when few measurements are available; if the observed errors also fall within the project’s tolerance, the drone system can be considered validated for professional use in civil engineering.
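A one-sample sketch of the runway check follows, assuming ten repeated LiDAR readings at a single surveyed checkpoint. The elevations, the checkpoint value, and the function name are hypothetical; 2.262 is the two-sided 5% critical value for 9 degrees of freedom from a standard t table.

```python
import math
import statistics


def one_sample_t(sample: list[float], reference: float) -> tuple[float, int]:
    """t-statistic and df for testing whether the sample mean equals `reference`."""
    n = len(sample)
    se = statistics.stdev(sample) / math.sqrt(n)
    return (statistics.mean(sample) - reference) / se, n - 1


SURVEYED_ELEVATION_M = 152.40  # hypothetical ground-truth value at a checkpoint

# Hypothetical LiDAR elevations (m) at that checkpoint from ten flights.
lidar = [152.43, 152.38, 152.41, 152.45, 152.37,
         152.42, 152.39, 152.44, 152.40, 152.41]

t, df = one_sample_t(lidar, SURVEYED_ELEVATION_M)
T_CRIT = 2.262  # two-sided 5% critical value for df = 9 (standard t table)
bias = statistics.mean(lidar) - SURVEYED_ELEVATION_M
print(f"mean bias = {bias:+.3f} m, t = {t:.2f}, significant: {abs(t) > T_CRIT}")
```

The test only addresses mean bias: a system can have near-zero average bias and still scatter widely, so reporting the standard deviation of the errors alongside t gives a fuller picture.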

Practical Applications: Measuring Precision in Autonomous Systems

As we move toward a future of fully autonomous drone swarms and remote sensing networks, the T-test serves as a gatekeeper for safety and reliability.

Evaluating GPS and GNSS Accuracy

Precision is the backbone of autonomous flight. When developers implement RTK (Real-Time Kinematic) or PPK (Post-Processed Kinematic) systems, they must quantify the improvement over standard GPS. By applying T-tests to the positioning errors derived from the coordinate data, innovators can estimate how much “drift” is reduced and whether that reduction is larger than random variation would explain. This statistical backing is what allows drones to perform high-stakes tasks like bridge inspections or automated docking where centimeters matter.
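Before any T-test, raw coordinates have to be turned into an error measure. The sketch below assumes fixes have already been projected into local east/north metres (for example from UTM) and computes each fix’s horizontal distance from a surveyed checkpoint; all coordinates and names are hypothetical.

```python
import math
import statistics


def horizontal_errors(fixes: list[tuple[float, float]],
                      truth: tuple[float, float]) -> list[float]:
    """2D distance of each (east, north) fix from the surveyed (east, north) point."""
    e0, n0 = truth
    return [math.hypot(e - e0, n - n0) for e, n in fixes]


# Hypothetical checkpoint and fixes in local east/north metres.
CHECKPOINT = (500.000, 200.000)
standalone = [(500.9, 200.4), (499.2, 200.7), (500.5, 199.1), (501.1, 200.2)]
rtk = [(500.012, 199.991), (499.985, 200.018), (500.020, 200.004), (499.994, 199.987)]

err_standalone = horizontal_errors(standalone, CHECKPOINT)
err_rtk = horizontal_errors(rtk, CHECKPOINT)
reduction = statistics.mean(err_standalone) - statistics.mean(err_rtk)
print(f"mean error: standalone {statistics.mean(err_standalone):.3f} m, "
      f"RTK {statistics.mean(err_rtk):.3f} m, reduction {reduction:.3f} m")
```

These per-fix error lists can then serve as the two samples for a two-sample T-test. One caution: fixes logged seconds apart are strongly autocorrelated, so treating each one as an independent observation overstates the evidence; using one summary error per flight or per checkpoint is safer.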

Validating AI-Driven Object Detection Models

In modern drone innovation, AI “Follow Mode” and obstacle avoidance are high-demand features. However, an AI is only as good as its training. When a developer updates an object detection model (e.g., training it to better recognize power lines), they use a T-test to compare the detection success rates of the old model versus the new one, for example across the same set of test flights. This helps confirm that adding new layers to a neural network actually improves detection rather than introducing new errors, and the same approach can be applied to latency measurements.

Assessing the Impact of Environmental Factors on Flight Stability

Drone tech must be resilient. Research teams often use T-tests to study how environmental variables, like wind speed or electromagnetic interference, affect flight stability. By comparing flight telemetry data from a “controlled environment” (a hangar) against an “active environment” (an offshore wind farm), engineers can use the T-test to determine whether stability degrades significantly and, from the difference in means, estimate by how much. These findings feed into the development of better stabilization sensors and more robust flight controllers.
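A T-test answers whether the degradation is significant, not how large it is. A common complement, swapped in here rather than taken from the article, is Cohen’s d: the mean difference divided by the pooled standard deviation. The telemetry values and function name below are hypothetical.

```python
import math
import statistics


def cohens_d(a: list[float], b: list[float]) -> float:
    """Standardized mean difference: (mean(a) - mean(b)) / pooled standard deviation."""
    n1, n2 = len(a), len(b)
    pooled_var = ((n1 - 1) * statistics.variance(a)
                  + (n2 - 1) * statistics.variance(b)) / (n1 + n2 - 2)
    return (statistics.mean(a) - statistics.mean(b)) / math.sqrt(pooled_var)


# Hypothetical RMS attitude error (degrees) per flight in each environment.
offshore = [1.9, 2.4, 2.1, 2.8, 2.2, 2.6]
hangar = [0.8, 1.1, 0.9, 1.0, 1.2, 0.9]
print(f"Cohen's d = {cohens_d(offshore, hangar):.2f}")
```

Cohen’s rough benchmarks are 0.2 (small), 0.5 (medium) and 0.8 (large). Because the pooled standard deviation assumes similar spreads, it is worth checking the two variances first when one environment is clearly noisier.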

Best Practices for Implementing T-Tests in Drone Research and Development

To get meaningful results from a T-test in the tech and innovation sector, one must adhere to specific data protocols. Misapplying statistics can lead to “false positives,” where a company thinks their technology is better than it actually is.

Ensuring Data Normality and Homogeneity

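The T-test is a “parametric” test: it assumes the data in each group are approximately normally distributed (the bell curve), although it tolerates moderate departures when samples are reasonably large. When collecting data from drone sensors, outliers are common; perhaps a sudden gust of wind caused a single massive error in a GPS log. Before running a T-test, data scientists should inspect the data, remove outliers only where there is a documented reason, and check whether the variances of the two groups are roughly equal (homogeneity of variance), which Student’s two-sample T-test assumes. If the variances differ, Welch’s T-test is the usual alternative; if the data are strongly non-normal, a non-parametric test such as the Mann-Whitney U test may be more appropriate. Ignoring these assumptions makes T-test results unreliable.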
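When the equal-variance assumption is doubtful, Welch’s variant drops it by giving each group its own standard error and using the Welch-Satterthwaite approximation for the degrees of freedom. The sketch below uses only the standard library; the error values and function name are hypothetical, and comparing sample variances side by side is just an informal check (Levene’s test, available as `scipy.stats.levene`, is a formal one).

```python
import math
import statistics


def welch_t_test(a: list[float], b: list[float]) -> tuple[float, float]:
    """Welch's t-statistic and Welch-Satterthwaite df (no equal-variance assumption)."""
    n1, n2 = len(a), len(b)
    v1 = statistics.variance(a) / n1  # squared standard error of mean(a)
    v2 = statistics.variance(b) / n2  # squared standard error of mean(b)
    t = (statistics.mean(a) - statistics.mean(b)) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df


# Hypothetical horizontal errors (m); receiver_b's errors are far more spread out.
receiver_a = [0.52, 0.48, 0.55, 0.50, 0.47, 0.53]
receiver_b = [0.61, 0.95, 0.40, 1.10, 0.72, 0.85]

var_a = statistics.variance(receiver_a)
var_b = statistics.variance(receiver_b)
ratio = max(var_a, var_b) / min(var_a, var_b)
t, df = welch_t_test(receiver_a, receiver_b)
print(f"variance ratio = {ratio:.1f}, Welch t = {t:.2f}, df = {df:.1f}")
```

The degrees of freedom are usually fractional; `scipy.stats.ttest_ind(receiver_a, receiver_b, equal_var=False)` performs the same test and handles the p-value lookup.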

Avoiding “P-Hacking” in Sensor Testing

In the rush to bring new drone tech to market, there is a temptation to “P-hack”—the practice of running multiple tests or manipulating data until a significant P-value is found. In the drone industry, this is dangerous. If an obstacle avoidance system is marketed as “99% effective” based on flawed T-test results, it could lead to hardware failure or accidents. True innovation relies on honest statistical reporting, including the publication of “null” results where a new feature didn’t actually perform as expected.
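The core arithmetic behind P-hacking is easy to show. Assuming independent tests on features that in fact have no effect, the chance of at least one spurious “significant” result grows quickly with the number of tests; the Bonferroni correction (one simple remedy among several) divides α by the number of tests. The function name is illustrative.

```python
def family_wise_error_rate(alpha: float, m: int) -> float:
    """Chance of at least one false positive across m independent tests when H0 is true for all."""
    return 1 - (1 - alpha) ** m


for m in (1, 5, 10, 20):
    naive = family_wise_error_rate(0.05, m)           # each test at alpha = 0.05
    bonferroni = family_wise_error_rate(0.05 / m, m)  # each test at alpha / m
    print(f"{m:>2} tests: uncorrected {naive:.1%}, Bonferroni {bonferroni:.1%}")
```

With ten uncorrected tests at α = 0.05, there is roughly a 40% chance of at least one false positive even if no new feature works, while the Bonferroni-corrected rate stays just under 5%.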

Tools and Software for Statistical Analysis in UAV Tech

Modern drone developers don’t do these calculations by hand. Integration of statistical libraries into the development workflow is key.

- Python (SciPy/Statsmodels): Widely used for analyzing flight logs and sensor data.

- R: The gold standard for academic research in remote sensing and geospatial analysis.

- MATLAB: Often used in the aerospace industry for simulating flight dynamics and validating control systems through statistical testing.

Conclusion: The Path to Verifiable Innovation

The “T-test” might seem like a small cog in the massive machine of drone innovation, but it is the tool that transforms a “good idea” into a “proven technology.” In an era where aerial mapping, AI-driven flight, and remote sensing are becoming critical infrastructure, we cannot rely on anecdotal evidence or impressive-looking marketing videos.

By employing the T-test, drone innovators can provide rigorous statistical evidence that their sensors are more accurate, their algorithms are more efficient, and their autonomous systems are safer. As the industry moves forward, the marriage of high-tech hardware and classical statistical analysis will continue to be the foundation upon which the next generation of aerial technology is built. Whether you are developing the next breakthrough in LiDAR or optimizing a drone’s AI brain, understanding the T-test is not just a statistical requirement; it is a competitive necessity.