Drone technology now combines sophisticated AI, intricate autonomous systems, and advanced sensing capabilities, and each of these needs rigorous validation. The term “randomized controlled trial” (RCT, often written “randomized control trial”) traditionally evokes medical research or clinical studies. However, the fundamental principles underpinning RCTs (systematic comparison, minimization of bias through randomization, and the establishment of cause-and-effect relationships) apply equally well to drone technology and innovation, and are becoming increasingly important there. Understanding what RCTs are and, more importantly, how their core methods can be adapted to drone technology is crucial for building reliable, safe, and effective unmanned aerial systems.

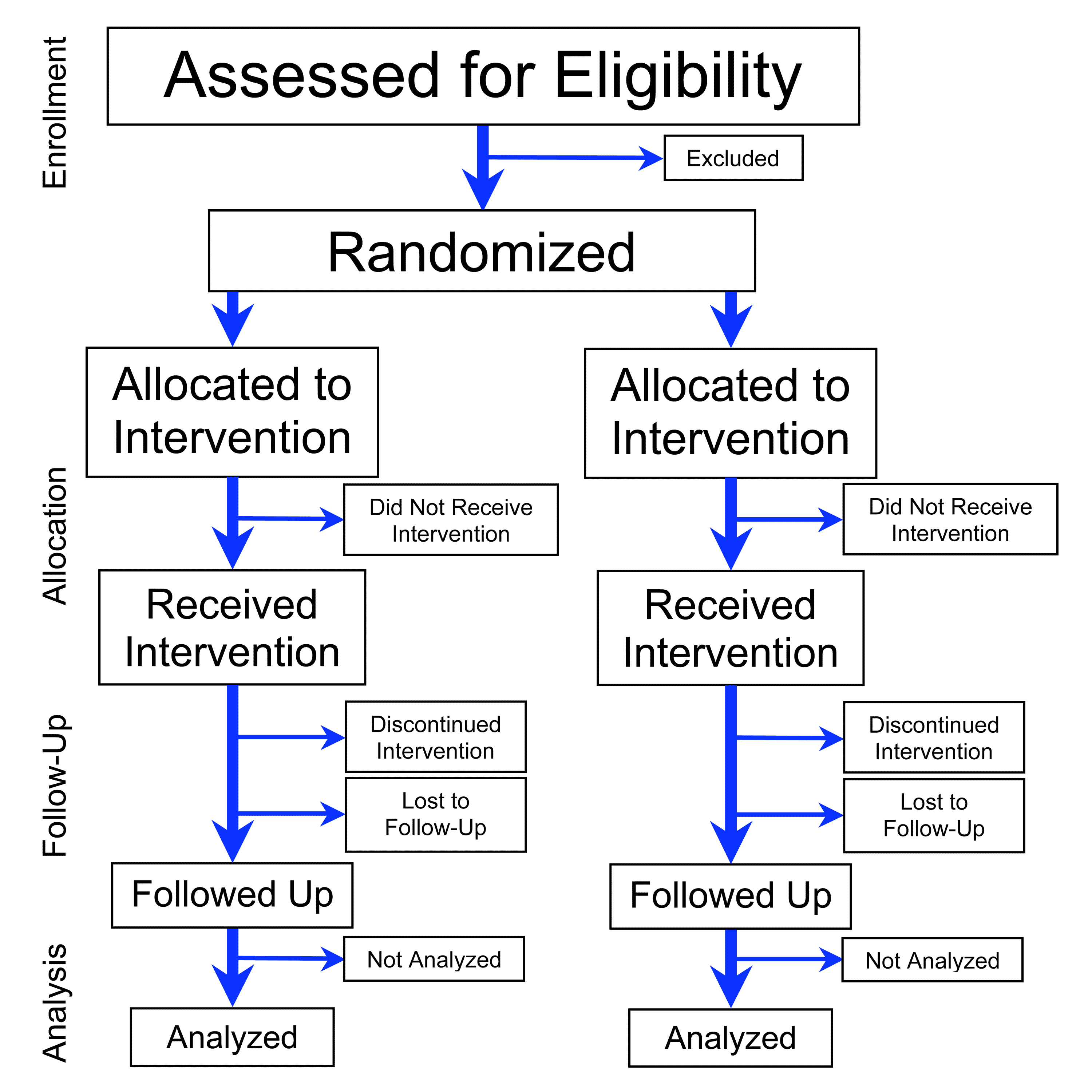

At its heart, an RCT is an experimental design for evaluating the effectiveness of an intervention by comparing it against a control or alternative. The comparison is made between groups of subjects who have been randomly assigned either to receive the intervention or not. The power of randomization lies in its ability to balance known and unknown confounding factors between groups on average, so that a difference in outcomes can be attributed to the intervention. In the realm of drones, an “intervention” could be a new obstacle avoidance algorithm, an AI-driven object tracking system, a refined autonomous navigation protocol, or a novel remote sensing payload. The “subjects” might be identical drone platforms, diverse flight environments, or various data sets used for training and testing. By embracing the spirit of RCTs, drone developers can move beyond anecdotal evidence and subjective evaluations and support claims about the performance and safety of their innovations with quantitative evidence.

The Imperative for Rigorous Testing in Drone Innovation

The stakes in drone technology are high. From autonomous package delivery and critical infrastructure inspection to environmental monitoring and public safety operations, the reliability and performance of drones directly affect economic viability, operational efficiency, and, most critically, human safety. No testing regime can remove uncertainty entirely, but this reality calls for one that measures and bounds it, and RCT-inspired methodologies are well suited to that role.

The Complexity of Autonomous Systems

Modern drones are no longer simple remote-controlled aircraft; they are complex cyber-physical systems integrated with sophisticated software, artificial intelligence, and intricate sensor arrays. Each component, from flight controllers and navigation modules to computer vision algorithms and communication protocols, interacts in multifaceted ways. When introducing a new feature, say, an AI-powered “follow mode” or an enhanced autonomous landing sequence, its performance is influenced by countless variables: wind conditions, lighting, terrain, communication latency, and battery levels, among others. Without a structured, controlled, and randomized approach, isolating the true impact of the new feature amidst this complexity becomes a monumental, if not impossible, task. Rigorous testing, informed by RCT principles, allows developers to dissect these interactions, understanding precisely how an upgrade performs under a variety of conditions and why.

Ensuring Safety and Reliability

The safety implications of drone operation cannot be overstated. A failure in an autonomous navigation system could lead to a collision, property damage, or even injury. A flaw in an AI-driven anomaly detection system for industrial inspection could result in missed critical defects, leading to catastrophic infrastructure failures. To gain public trust and regulatory approval, drone technologies must demonstrate an extremely high level of safety and reliability. This requires more than just functional testing; it demands comparative studies where new systems are pitted against established benchmarks or human-operated controls in diverse, real-world (or meticulously simulated) scenarios. By randomizing test conditions and comparing outcomes, developers can systematically identify potential vulnerabilities, quantify risk, and robustly validate safety assurances before deployment.

Validating Performance Metrics

Beyond safety, performance is a key differentiator in the competitive drone market. Whether it’s the accuracy of a mapping payload, the efficiency of an automated inspection route, or the precision of an AI tracking algorithm, performance needs to be objectively measured and validated. Simply observing that a new system “seems to work better” is insufficient. RCT-inspired methodologies provide the framework to define measurable outcomes (e.g., mapping error rates, inspection completion time, tracking accuracy), randomize the conditions under which these outcomes are measured (e.g., different lighting, varying target speeds, diverse landscapes), and statistically analyze the results to test whether a new innovation actually outperforms its predecessors or alternatives, and by how much. This empirical evidence is vital for marketing claims, engineering decisions, and investor confidence.
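One way to carry out the statistical analysis step is a permutation test, which asks how often a difference at least as large as the observed one would appear if the group labels were dealt at random. The sketch below is only illustrative: the completion times are made-up numbers, and the function name `permutation_test` and its parameters are assumptions, not part of any drone toolkit.

```python
import random
from statistics import mean

def permutation_test(control, treatment, n_resamples=10_000, seed=0):
    """Two-sided permutation test on the difference in group means."""
    rng = random.Random(seed)
    observed = mean(treatment) - mean(control)
    pooled = list(control) + list(treatment)
    n_control = len(control)
    extreme = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)  # re-deal the group labels at random
        diff = mean(pooled[n_control:]) - mean(pooled[:n_control])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add-one correction keeps the estimated p-value strictly above zero.
    p_value = (extreme + 1) / (n_resamples + 1)
    return observed, p_value

# Hypothetical inspection completion times in seconds (illustrative only).
old_route = [412, 398, 431, 405, 420, 415, 409, 427]
new_route = [388, 395, 379, 402, 391, 384, 399, 390]

diff, p = permutation_test(old_route, new_route)
print(f"mean difference: {diff:.1f} s, estimated p-value: {p:.4f}")
```

A permutation test is used here because it makes few distributional assumptions and mirrors the random assignment itself. A t-test or a regression model could serve the same purpose when their assumptions hold.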

Adapting RCT Principles for Drone Technology Development

While a medical RCT directly compares a drug to a placebo, the drone industry can creatively adapt these core tenets to its unique challenges. The underlying logic remains the same: create controlled comparisons to evaluate an “intervention” objectively.

Defining “Interventions” in Drone Tech

In drone tech, an “intervention” is any specific change, enhancement, or new feature whose effectiveness or performance needs to be assessed. This could include:

- Software Upgrades: A new flight controller firmware version, an updated AI model for object recognition, a revised path planning algorithm for autonomous navigation.

- Hardware Modifications: A new sensor type (e.g., a novel LiDAR system), a more efficient propeller design, or a redesigned gimbal for camera stabilization.

- Operational Protocols: A new method for swarm coordination, an optimized battery swap procedure for continuous operation, or a specific flight pattern for maximizing data capture.

The key is that the intervention must be clearly defined and quantifiable in its application, allowing for a distinct comparison against a baseline.

Establishing Control and Test Groups

Just as a medical RCT has a control group receiving standard care (or a placebo) and a test group receiving the new treatment, drone testing requires analogous groups.

- Control Group: This could be a drone operating with the current, established technology (e.g., the previous version of an AI algorithm, a standard GPS navigation system), a drone following a manually piloted route, or even a human operator performing the task for comparison against automation. The control provides the baseline against which the “intervention’s” performance is measured.

- Test Group: This group of drones or test scenarios incorporates the new “intervention.” For instance, a fleet of drones running the experimental AI follow mode, or a single drone performing multiple flights with the new obstacle avoidance system engaged.

Careful selection and configuration of these groups are crucial to ensure that any observed differences in outcome can be attributed to the intervention, rather than other confounding factors.
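A minimal sketch of forming the two groups by random assignment follows. It assumes a small fleet of interchangeable airframes. The drone IDs and the function name `assign_groups` are hypothetical.

```python
import random

def assign_groups(unit_ids, seed=0):
    """Randomly split units into control and test groups of (near) equal size."""
    rng = random.Random(seed)      # a fixed seed makes the allocation reproducible
    shuffled = list(unit_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {
        "control": sorted(shuffled[:half]),  # keeps the current technology
        "test": sorted(shuffled[half:]),     # receives the intervention
    }

# Hypothetical airframe identifiers.
drones = [f"drone-{i:02d}" for i in range(1, 9)]
groups = assign_groups(drones, seed=42)
print(groups)
```

Recording the seed alongside the results lets a reviewer confirm that the allocation was not hand-picked.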

The Role of Randomization in Drone Testing

Randomization is the cornerstone of RCTs. It minimizes bias by ensuring that unknown variables are, on average, evenly distributed between comparison groups, so that no systematic difference other than the intervention separates them. In drone testing, pure “random assignment of subjects” might not always be feasible in the same way as in human trials, but the principle can be adapted:

- Randomized Test Environments/Scenarios: When evaluating a new autonomous navigation system, drones could be randomly assigned to fly in different types of environments (urban, rural, open field), varying weather conditions (windy, calm, cloudy), or at different times of day (dawn, noon, dusk).

- Randomized Flight Paths/Tasks: For testing a new AI object tracking algorithm, the target could be instructed to move along randomly generated paths, at random speeds, or perform random maneuvers to challenge the system.

- Randomized Data Sets: In the development of machine learning models for drone vision or mapping, training and validation data sets should be randomly sampled and strictly partitioned, so that overfitting shows up as a gap between training and held-out performance and the reported results reflect how well the model generalizes.

- Randomized Order of Interventions: If comparing multiple versions of an algorithm on the same drone, the order in which each version is tested should be randomized to prevent learning effects or environmental drift from biasing the results.

By incorporating randomness, developers can be more confident that observed performance differences are due to the innovation itself, rather than systemic biases in the testing procedure.
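The scenario and ordering ideas above can be combined into a single randomized test schedule. The sketch below reuses the example conditions from the list. The version names `baseline` and `candidate` and the function `build_schedule` are placeholders introduced for illustration.

```python
import itertools
import random

# Condition levels taken from the examples above; version names are placeholders.
ENVIRONMENTS = ["urban", "rural", "open field"]
WEATHER = ["windy", "calm", "cloudy"]
TIMES_OF_DAY = ["dawn", "noon", "dusk"]
VERSIONS = ["baseline", "candidate"]

def build_schedule(seed=0):
    """Return a flight list in which every scenario is flown by every version."""
    rng = random.Random(seed)
    scenarios = list(itertools.product(ENVIRONMENTS, WEATHER, TIMES_OF_DAY))
    rng.shuffle(scenarios)                  # randomize the order of scenarios
    schedule = []
    for environment, weather, time_of_day in scenarios:
        order = VERSIONS[:]
        rng.shuffle(order)                  # randomize which version flies first
        for version in order:
            schedule.append({
                "environment": environment,
                "weather": weather,
                "time_of_day": time_of_day,
                "version": version,
            })
    return schedule

schedule = build_schedule(seed=7)
for flight in schedule[:4]:
    print(flight)
```

In practice, weather cannot be ordered on demand, so a real schedule would randomize only the factors that the test team controls and record the rest as covariates.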

Practical Applications of RCT-Inspired Methodologies

The application of RCT principles within the drone tech and innovation sector spans various critical functionalities, leading to more robust and reliable systems.

Autonomous Navigation and Obstacle Avoidance Systems

Testing a new autonomous navigation algorithm or an improved obstacle avoidance system is a prime candidate for RCT-inspired methodology. Developers could:

- Define a set of complex, varied test environments (e.g., dense forest, urban canyon with dynamic obstacles, open terrain with sparse obstacles).

- Randomly assign which drones run the new algorithm and which run the control (old) algorithm, then have both groups navigate these environments.

- Measure metrics like collision rate, path efficiency, time to complete the mission, and energy consumption.

- Randomize the initial starting positions, wind conditions (simulated or real), and types/speeds of simulated moving obstacles.

This approach provides statistically sound data on the new system’s robustness and efficiency across diverse, unpredictable scenarios.
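As a rough illustration of how one of these metrics might be compared, the sketch below computes the difference in collision rates between two groups with a simple Wald (normal approximation) 95% confidence interval. The outcome counts are invented, and the function name `collision_rate_difference` is an assumption.

```python
import math

def collision_rate_difference(control_outcomes, test_outcomes, z=1.96):
    """Collision-rate difference (test minus control) with a Wald interval."""
    n_c, n_t = len(control_outcomes), len(test_outcomes)
    p_c = sum(control_outcomes) / n_c
    p_t = sum(test_outcomes) / n_t
    diff = p_t - p_c
    # Standard error of a difference between two independent proportions.
    se = math.sqrt(p_c * (1 - p_c) / n_c + p_t * (1 - p_t) / n_t)
    return diff, (diff - z * se, diff + z * se)

# Hypothetical outcomes per flight: 1 = collision, 0 = clean run.
control = [1] * 12 + [0] * 188     # old algorithm, 200 flights
candidate = [1] * 4 + [0] * 196    # new algorithm, 200 flights

diff, (low, high) = collision_rate_difference(control, candidate)
print(f"difference: {diff:+.3f}, 95% CI: ({low:+.3f}, {high:+.3f})")
```

The Wald interval is a coarse approximation when collisions are rare. An exact or score-based interval would be a safer choice for real safety claims.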

AI-Driven Object Recognition and Tracking

For AI models that enable drones to recognize objects (e.g., agricultural pests, critical infrastructure defects, missing persons) or track moving targets, RCT-inspired testing is essential.

- Intervention: A new deep learning model trained for specific object detection.

- Control: A previous model version or a human-annotated baseline.

- Randomization: Presenting the AI with randomly selected images or video clips from a diverse, annotated dataset, containing varying lighting, angles, occlusions, and clutter. For tracking, targets could move with randomized patterns and speeds.

- Outcome: Precision, recall, F1-score for recognition; tracking accuracy, re-identification rate for tracking.

This helps confirm that the AI’s measured performance reflects genuine robustness rather than overfitting to specific test cases.
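The recognition outcomes listed above can be computed directly from binary labels. The sketch below is a plain-Python version, assuming one label per image (1 = object present). The labels and predictions are invented, and `detection_scores` is a hypothetical helper name.

```python
def detection_scores(y_true, y_pred):
    """Return (precision, recall, F1) for binary labels where 1 means 'present'."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t and p)        # true positives
    fp = sum(1 for t, p in pairs if not t and p)    # false positives
    fn = sum(1 for t, p in pairs if t and not p)    # false negatives
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1

# Hypothetical ground truth and model output for ten randomly drawn images.
labels      = [1, 1, 1, 0, 0, 1, 0, 0, 1, 0]
predictions = [1, 0, 1, 0, 1, 1, 0, 0, 1, 0]

precision, recall, f1 = detection_scores(labels, predictions)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

Running the same function on the control model's predictions for the same randomly drawn images gives a like-for-like comparison.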

Mapping Accuracy and Data Integrity

When developing advanced mapping solutions or photogrammetry techniques, the accuracy and integrity of the collected data are paramount.

- Intervention: A new mapping software pipeline, a different sensor configuration, or an optimized flight pattern for 3D model generation.

- Control: Standard industry mapping practices or a previously validated method.

- Randomization: Mapping different geographical areas selected randomly, under varying atmospheric conditions, or with different ground control point distributions.

- Outcome: Root Mean Square Error (RMSE) for topographical accuracy, completeness of 3D models, consistency of measurements across multiple flights.

Such rigorous comparisons demonstrate the real-world advantages of new mapping innovations.
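For reference, RMSE against surveyed checkpoints can be computed as in the sketch below. The elevations are made-up values in metres, and the function name `rmse` is illustrative.

```python
import math

def rmse(measured, reference):
    """Root mean square error between measured and reference values."""
    if len(measured) != len(reference) or not measured:
        raise ValueError("inputs must be non-empty and of equal length")
    squared = [(m - r) ** 2 for m, r in zip(measured, reference)]
    return math.sqrt(sum(squared) / len(squared))

# Hypothetical checkpoint elevations in metres (surveyed vs. derived from the map).
surveyed = [102.10, 98.45, 110.30, 105.00]
mapped   = [102.13, 98.41, 110.35, 104.98]

error = rmse(mapped, surveyed)
print(f"RMSE: {error:.3f} m")
```

Computing this value separately for the intervention and the control pipeline, over the same randomly selected areas, turns the accuracy comparison into two numbers that can be tested statistically.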

Remote Sensing Payload Performance

For specialized remote sensing payloads (e.g., thermal cameras for inspection, multispectral sensors for agriculture, LiDAR for volumetric analysis), comparative trials can quantify improvements.

- Intervention: A new thermal camera with enhanced resolution or a novel spectral band filter.

- Control: A standard thermal camera or a conventional multispectral setup.

- Randomization: Capturing data over randomly chosen targets (e.g., crops, industrial components, animal populations) at different times of day, varying altitudes, or under different environmental conditions.

- Outcome: Accuracy in temperature measurement, precision in plant health indices, detection rates of specific anomalies.

This helps establish clear performance benchmarks for advanced sensing capabilities.
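When both payloads can image the same targets, a paired design is a natural fit: each target yields one error per sensor, and the analysis looks at the per-target differences. The sketch below runs an exact sign-flip test on such differences. The temperature errors are invented, and `paired_sign_flip_test` is a hypothetical name.

```python
from itertools import product

def paired_sign_flip_test(errors_control, errors_test):
    """Exact two-sided sign-flip test on paired differences (test minus control)."""
    diffs = [t - c for c, t in zip(errors_control, errors_test)]
    n = len(diffs)
    observed = sum(diffs) / n
    extreme = 0
    total = 0
    # Under the null hypothesis each difference is equally likely to be + or -.
    for signs in product((1, -1), repeat=n):
        total += 1
        flipped = sum(s * d for s, d in zip(signs, diffs)) / n
        if abs(flipped) >= abs(observed) - 1e-12:   # tolerance for float rounding
            extreme += 1
    return observed, extreme / total

# Hypothetical absolute temperature errors in degrees Celsius for eight targets.
standard_camera = [1.2, 0.9, 1.5, 1.1, 1.3, 0.8, 1.4, 1.0]
new_camera      = [0.7, 0.6, 0.9, 0.8, 0.9, 0.7, 0.8, 0.6]

mean_diff, p_value = paired_sign_flip_test(standard_camera, new_camera)
print(f"mean paired difference: {mean_diff:+.2f} C, p-value: {p_value:.4f}")
```

The exhaustive enumeration grows as 2^n, so this exact form only suits small target counts. Larger trials would sample sign flips at random instead.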

Challenges and Future Directions

Implementing RCT-inspired methodologies in drone tech is not without its challenges, primarily due to the complexity and variability of real-world drone operations.

Ethical Considerations and Real-World Limitations

While randomization is ideal, conducting large-scale, fully randomized real-world drone trials can be expensive, time-consuming, and sometimes impractical or ethically complex, especially when safety is a concern. For instance, deliberately randomizing flight paths that might encounter unexpected obstacles for an untested system could be irresponsible. Therefore, much of this work relies heavily on high-fidelity simulation environments where thousands of randomized “trials” can be run safely and efficiently, complemented by smaller, highly controlled real-world validation tests. The ethical imperative remains to ensure that any real-world testing prioritizes safety and regulatory compliance above all else.
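The simulation-first approach can be sketched as a seeded Monte Carlo loop. Everything in the example is a toy assumption: `simulate_flight` stands in for a real simulator, its risk formula is invented and not a physical model, and the parameter `avoidance_gain` is hypothetical. Both arms reuse the same seed so that they face identical randomized conditions, a technique known as common random numbers.

```python
import random

def simulate_flight(avoidance_gain, wind_speed, obstacle_count, rng):
    """Toy stand-in for one simulator run; returns True if the flight collides."""
    # Invented risk model for illustration only.
    risk = 0.002 * obstacle_count * (1 + wind_speed / 10) / avoidance_gain
    return rng.random() < min(risk, 1.0)

def run_trials(avoidance_gain, n_trials, seed):
    """Estimate the collision rate over randomized wind and obstacle conditions."""
    rng = random.Random(seed)
    collisions = 0
    for _ in range(n_trials):
        wind = rng.uniform(0, 15)          # wind speed in m/s
        obstacles = rng.randint(0, 20)     # number of obstacles in the scene
        collisions += simulate_flight(avoidance_gain, wind, obstacles, rng)
    return collisions / n_trials

# Same seed for both arms, so each trial pairs identical conditions.
baseline_rate = run_trials(avoidance_gain=1.0, n_trials=5000, seed=123)
candidate_rate = run_trials(avoidance_gain=2.0, n_trials=5000, seed=123)
print(f"baseline: {baseline_rate:.4f}, candidate: {candidate_rate:.4f}")
```

Sharing the seed removes scenario-to-scenario noise from the comparison, which is something real-world flights cannot offer. Results from such a model still need confirmation in controlled physical tests.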

Data Volume and Analysis

RCTs generate significant amounts of data, and drone trials are no exception. From flight logs and sensor readings to image and video data, the sheer volume requires sophisticated data management, processing, and statistical analysis techniques. The future of applying RCT principles in drone innovation will heavily depend on advancements in automated data annotation, big data analytics, and machine learning models capable of identifying patterns and anomalies in complex trial data. This will include developing specialized statistical methods tailored to the unique characteristics of drone performance metrics.

The Evolving Landscape of Drone Regulations

As drone technology advances, so too do the regulatory frameworks governing its use. Demonstrating robust safety and reliability through RCT-inspired testing will become increasingly critical for obtaining certifications and operational approvals for new autonomous capabilities. Regulatory bodies will likely look for evidence of systematic validation processes that minimize bias and provide statistically sound evidence of performance. The integration of RCT principles into industry best practices could standardize how new drone technologies are evaluated, fostering greater confidence from regulators, industry, and the public alike.

In conclusion, applying randomized controlled trials to the drone industry means adopting a mindset of rigorous, unbiased, and systematic experimentation. It’s about ensuring that every new AI algorithm, every autonomous function, and every sensor upgrade is not just conceptually sound but empirically shown to be better, safer, and more reliable than what it replaces. By embracing the spirit of RCTs, drone developers can build a future where unmanned aerial systems operate with greater precision, efficiency, and trustworthiness.