In the fast-paced world of technology and innovation, the development and deployment of new systems, algorithms, and solutions are constant. From advanced AI models powering autonomous vehicles to sophisticated remote sensing platforms providing critical data, understanding the real-world impact and effectiveness of these innovations is paramount. However, the ideal conditions for a true randomized controlled experiment are often unattainable in practical technological contexts. This is where quasi-experimental research designs emerge as an indispensable methodological tool.

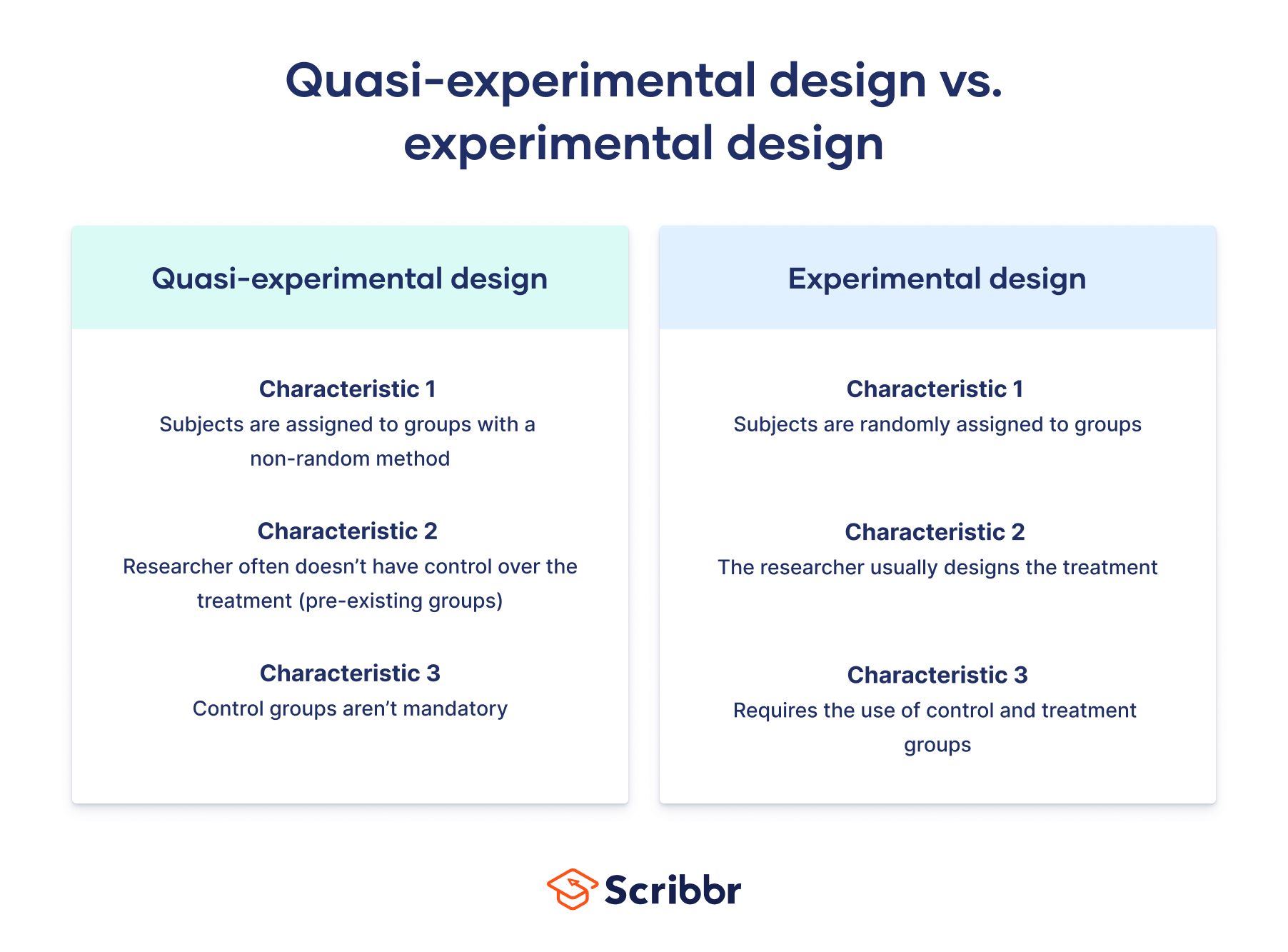

Quasi-experimental research, at its core, refers to empirical intervention studies used to estimate the causal impact of an intervention on target populations without random assignment. Unlike true experiments, which rely on randomization to ensure comparability between treatment and control groups, quasi-experiments typically work with pre-existing groups or naturally occurring comparisons. In the realm of tech and innovation, this means evaluating new software features, AI algorithms, drone applications, or smart city initiatives when it’s impractical, unethical, or impossible to randomly assign users or environments to receive or not receive the intervention.

The ability to rigorously assess the efficacy, safety, and societal implications of emerging technologies without perfect experimental control is critical for informed decision-making, policy development, and continuous improvement. This article delves into what quasi-experimental research entails within the context of tech and innovation, its unique value proposition, and how it enables robust evaluation in complex, dynamic environments.

The Imperative for Quasi-Experimental Approaches in Technology

The evaluation of technological advancements presents a unique set of challenges that often preclude the use of traditional randomized controlled trials (RCTs). While RCTs are the gold standard for establishing causality, their stringent requirements for randomization can be a significant hurdle when dealing with real-world tech deployment.

Limitations of True Experiments in Real-World Tech Deployment

Consider the deployment of a new AI-powered traffic management system across a city, or the introduction of a novel autonomous drone delivery service in a specific region. In such scenarios, randomly assigning entire city districts or neighborhoods to either receive or not receive the technology would be logistically complex, politically contentious, and potentially unethical. Stakeholders often demand the best available technology be implemented where it’s most needed, not where a random number generator dictates.

Furthermore, ethical considerations frequently limit the applicability of true experiments. Denying a potentially beneficial technology, such as a new health monitoring system or an advanced disaster response tool, to a randomly selected control group might be deemed unacceptable. Similarly, the “Hawthorne effect,” where subjects alter their behavior simply because they know they are being studied, can be amplified in tech contexts, making clean experimental control difficult. The sheer scale and interconnectedness of modern technological systems also make isolation for experimental purposes challenging. A new security patch for a widespread operating system cannot responsibly be withheld from a randomly chosen set of users, leaving them vulnerable for the sake of an experiment.

Evaluating the Impact of Emerging Technologies

Despite these limitations, the need to evaluate the impact of emerging technologies remains paramount. Tech companies, governments, and research institutions require robust evidence to justify investments, understand unintended consequences, and guide future development. How do we assess if a new remote sensing algorithm improves agricultural yield predictions, or if an AI-driven predictive maintenance system for industrial machinery genuinely reduces downtime? How do we measure the effectiveness of new drone-based inspection techniques compared to traditional methods?

Quasi-experimental designs provide a pragmatic and powerful framework for answering these questions. They enable researchers and innovators to draw credible inferences about cause and effect by meticulously analyzing existing data, constructing plausible comparison groups, and employing statistical techniques that adjust for measured confounding factors. The resulting causal claims rest on assumptions that must be stated and checked, but they allow for evidence-based decision-making even when perfect experimental conditions are out of reach, bridging the gap between theoretical potential and real-world performance.

Core Characteristics and Design Types Relevant to Tech Evaluation

Quasi-experimental designs encompass a variety of structures, each suited to different evaluative contexts in tech and innovation. While they lack random assignment, they compensate through careful design, data collection, and statistical analysis to bolster the validity of causal claims.

Nonequivalent Groups Design (NEG)

The Nonequivalent Groups Design (NEG) is one of the most common quasi-experimental approaches. It involves comparing two or more pre-existing groups that are not formed through random assignment, with one group receiving the intervention (e.g., a new software update, an AI-powered feature) and the other serving as a comparison.

For instance, a company might roll out a new AI-powered predictive maintenance feature to customers in one geographical region (the treatment group) while continuing to serve customers in another, similar region with the existing system (the comparison group). Researchers would then compare key metrics like equipment uptime, maintenance costs, or failure rates between the two regions before and after the new feature’s deployment. The challenge lies in ensuring the groups are as similar as possible on relevant characteristics. Statistical methods like propensity score matching or ANCOVA are often employed to adjust for measured pre-existing differences, which increases confidence in attributing observed changes to the new technology, although unmeasured differences between the regions can still bias the result.
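One common way to formalize this before-and-after comparison between two regions is a difference-in-differences (DiD) estimate: the change in the treated region minus the change in the comparison region. The sketch below is illustrative only. The function name, the region labels, and the downtime figures are all hypothetical, and the estimate is only meaningful if the two regions would have followed parallel trends without the feature.

```python
from statistics import mean


def diff_in_diff(treat_pre, treat_post, comp_pre, comp_post):
    """Change in the treated group minus change in the comparison group."""
    treat_change = mean(treat_post) - mean(treat_pre)
    comp_change = mean(comp_post) - mean(comp_pre)
    return treat_change - comp_change


# Hypothetical monthly downtime hours per site (made-up numbers).
region_a_pre = [12.0, 11.0, 13.0, 12.0]   # treated region, before rollout
region_a_post = [8.0, 9.0, 7.0, 8.0]      # treated region, after rollout
region_b_pre = [10.0, 11.0, 10.0, 9.0]    # comparison region, same "before" period
region_b_post = [9.0, 10.0, 9.0, 8.0]     # comparison region, same "after" period

effect = diff_in_diff(region_a_pre, region_a_post, region_b_pre, region_b_post)
print(f"Estimated change in downtime attributable to the feature: {effect:+.1f} hours")
```

Subtracting the comparison region's change removes any shift that affected both regions alike, such as a mild season that reduced failures everywhere.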

Interrupted Time-Series Design

The Interrupted Time-Series Design is particularly powerful for evaluating tech interventions whose effects are expected to manifest over time. This design involves repeatedly measuring an outcome variable at multiple points before and after a specific technological intervention is introduced to a single group.

Imagine a municipality implementing a new smart traffic light system designed to optimize flow and reduce congestion. Researchers could collect data on traffic volume, average speed, and accident rates for several months or years before the system’s installation and then continue collecting the same data for an equivalent period after its implementation. By analyzing the trends and changes in the time series data, controlling for other concurrent events, it’s possible to discern whether the smart traffic lights had a significant impact beyond pre-existing trends or seasonal variations. This design is invaluable for assessing the long-term impact of autonomous flight regulations, widespread cybersecurity updates, or large-scale data mapping initiatives.
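A frequent way to analyze such a series is segmented regression, which fits a pre-intervention trend and then estimates a level change and a slope change at the intervention point. The following is a minimal sketch under strong assumptions: the data are hypothetical and noise-free, the helper names are invented for illustration, and the model ignores autocorrelation and seasonality, which a real analysis would need to address.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def fit_segmented_regression(y, start):
    """Least-squares fit of y_t = b0 + b1*t + b2*post_t + b3*(t - start)*post_t.

    b2 is the level change at the intervention; b3 is the change in slope.
    """
    rows = []
    for t in range(len(y)):
        post = 1.0 if t >= start else 0.0
        rows.append([1.0, float(t), post, (t - start) * post])
    k = 4
    # Normal equations: (X'X) b = X'y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yt for r, yt in zip(rows, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)


# Hypothetical average commute delay (seconds) for 24 months;
# the smart traffic lights are assumed to go live in month 12.
installation_month = 12
delays = []
for month in range(24):
    value = 100.0 + 1.0 * month
    if month >= installation_month:
        value += -10.0 - 0.5 * (month - installation_month)
    delays.append(value)

b0, b1, level_change, slope_change = fit_segmented_regression(delays, installation_month)
print(f"level change: {level_change:.2f}, slope change: {slope_change:.2f}")
```

Because the pre-intervention trend is modeled explicitly, a delay that was already rising or falling before installation is not mistaken for an effect of the new system.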

Regression Discontinuity Design (RDD)

Regression Discontinuity Design (RDD) is a particularly strong quasi-experimental approach when an intervention is assigned based on a continuous “assignment variable” exceeding a specific cut-off point. It capitalizes on the often arbitrary nature of eligibility criteria.

Consider a tech program offering advanced AI training tools only to companies whose annual innovation spending exceeds a certain threshold. Companies just above the threshold are compared to those just below it. The key assumption is that companies cannot precisely manipulate which side of the threshold they land on, so those just above and just below it are otherwise very similar in relevant characteristics (e.g., size, industry, general innovation capacity). Under that assumption, a discontinuity in outcomes (e.g., successful patent applications, new product launches) precisely at the cut-off can be attributed to the AI training tools, although the estimate applies only to companies near the threshold. This design is well suited to evaluating the impact of tech grants, specialized software licenses, or access to exclusive beta programs based on performance metrics or resource levels.
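The comparison at the cut-off is often implemented by fitting a separate line on each side of the threshold and measuring the gap between the two fitted values at the threshold itself. The sketch below illustrates that idea with hypothetical, noise-free data. The spending figures, the cut-off of 500, the bandwidth, and the function names are assumptions made for the example, and practical analyses usually add kernel weighting and a data-driven bandwidth choice.

```python
def fit_line(xs, ys):
    """Simple least-squares line; returns (intercept, slope)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def rdd_estimate(running, outcome, cutoff, bandwidth):
    """Jump in the outcome at the cutoff, using a line fitted on each side."""
    below_x, below_y, above_x, above_y = [], [], [], []
    for x, y in zip(running, outcome):
        if abs(x - cutoff) > bandwidth:
            continue  # ignore units far from the threshold
        if x > cutoff:  # "exceeds the threshold" means treated
            above_x.append(x - cutoff)
            above_y.append(y)
        else:
            below_x.append(x - cutoff)
            below_y.append(y)
    # After centering at the cutoff, each intercept is the fitted value at the cutoff.
    intercept_below, _ = fit_line(below_x, below_y)
    intercept_above, _ = fit_line(above_x, above_y)
    return intercept_above - intercept_below


# Hypothetical annual innovation spending (in thousands) and patent counts.
cutoff = 500.0
spending = [452.5 + 5.0 * i for i in range(20)]  # 452.5 ... 547.5
patents = [
    2.0 + 0.02 * (x - cutoff) + (1.5 if x > cutoff else 0.0)
    for x in spending
]

jump = rdd_estimate(spending, patents, cutoff, bandwidth=50.0)
print(f"Estimated effect at the threshold: {jump:.2f} patents")
```

Fitting lines rather than comparing raw means matters here: spending is itself related to patent output, so a plain difference in means would mix the program's effect with the underlying trend.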

Practical Applications Across Tech & Innovation Domains

The versatility of quasi-experimental designs makes them applicable across a broad spectrum of tech and innovation sectors, providing valuable insights where true experimental control is impractical.

Autonomous Systems and AI Development

In the development of autonomous systems, such as self-driving cars, drones with AI-powered navigation, or robotic automation in manufacturing, testing in fully controlled environments is crucial but insufficient for real-world validation. Quasi-experimental methods can bridge this gap. For instance, evaluating a new AI decision-making algorithm for drone delivery in a pilot urban area versus a comparable control area allows researchers to assess its impact on delivery efficiency, error rates, and public perception without the ethical and logistical challenges of randomizing individual drone missions. Similarly, assessing the safety improvements introduced by new autonomous flight software can involve comparing accident rates before and after its deployment across a fleet, using an interrupted time-series approach.

Remote Sensing and Mapping Technologies

New remote sensing payloads, satellite imagery processing algorithms, and advanced mapping software promise enhanced data for environmental monitoring, urban planning, and resource management. Quasi-experimental designs help validate these promises. For example, comparing the accuracy of agricultural yield forecasts generated by a new hyperspectral imaging system against traditional methods in specific farm regions (nonequivalent groups) can demonstrate its practical value. An interrupted time-series design could track changes in deforestation rates or land use patterns before and after the widespread adoption of a new high-resolution satellite mapping service. If the shift coincides with adoption and no other concurrent change (such as new enforcement policy) explains it, the shift can reasonably be attributed to the improved monitoring capabilities.

Smart City Initiatives and IoT Solutions

Smart city initiatives often involve the integrated deployment of various Internet of Things (IoT) devices, sensors, and intelligent systems to improve urban living. Evaluating the holistic impact of such complex interventions necessitates quasi-experimental approaches. For instance, measuring the effect of smart street lighting on energy consumption and crime rates in a pilot neighborhood versus a similar, non-smart neighborhood (nonequivalent groups) can provide concrete evidence of its benefits. Similarly, assessing the effectiveness of an IoT-enabled waste management system on collection efficiency or environmental sustainability can be done by comparing performance metrics before and after its implementation across specific urban zones.

Methodological Rigor and Mitigating Threats to Validity

While quasi-experimental designs offer practical advantages, their lack of randomization means researchers must pay extra attention to potential threats to internal validity (the extent to which the observed effect is truly caused by the intervention). Robust methodological practices are essential to strengthen causal inferences.

The Importance of Strong Baselines and Comparison Groups

A critical aspect of quasi-experimental research is the meticulous selection and characterization of comparison groups and the collection of extensive baseline data. When random assignment is absent, researchers must strive to select comparison groups that are as similar as possible to the treatment group on all relevant pre-intervention characteristics. This could involve matching based on demographics, prior performance, resource levels, or environmental factors. Collecting baseline data for both groups (or for the single group in time-series designs) over an extended period helps establish pre-existing trends and differences, allowing evaluators to statistically control for these factors. For instance, when evaluating a new cybersecurity tool, comparing pre-intervention breach rates between two similar organizations can help establish a credible baseline.
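Similarity on a baseline characteristic can be quantified rather than judged by eye. One widely used summary is the standardized mean difference (SMD), the gap between group means expressed in pooled standard deviations. The sketch below uses hypothetical pre-intervention breach counts, and the function name is invented for illustration. A commonly cited rule of thumb treats an absolute SMD above roughly 0.1 as a sign of imbalance, though that threshold is a convention rather than a guarantee.

```python
from math import sqrt
from statistics import mean, variance


def standardized_mean_difference(treated, comparison):
    """Difference in means divided by the pooled standard deviation."""
    pooled_sd = sqrt((variance(treated) + variance(comparison)) / 2)
    return (mean(treated) - mean(comparison)) / pooled_sd


# Hypothetical pre-intervention breaches per year at each organization.
treated_orgs = [10.0, 12.0, 14.0]
comparison_orgs = [11.0, 13.0, 15.0]

smd = standardized_mean_difference(treated_orgs, comparison_orgs)
print(f"Baseline SMD for breach rate: {smd:.2f}")
```

In this made-up example the groups differ by half a standard deviation before the tool is introduced, which would argue for choosing a different comparison group or adjusting for the baseline in the analysis.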

Statistical Controls and Advanced Analytics

To further bolster the validity of quasi-experimental findings, statistical adjustment techniques are frequently employed. Propensity score matching (PSM) is a popular method in which units from non-randomized groups are matched on their estimated probability (propensity score) of receiving the intervention given their observed characteristics, creating groups that are more comparable on those characteristics. Other techniques include regression analysis, analysis of covariance (ANCOVA), difference-in-differences (DiD) models, and advanced time-series analysis. These methods help to statistically adjust for pre-existing differences between groups and to control for confounding variables that might otherwise obscure the true effect of the technological intervention. Most of them can only adjust for confounders that were actually measured; DiD additionally removes unmeasured group differences that stay constant over time, provided the groups would otherwise have followed parallel trends. In the context of AI evaluation, for example, statistical models can control for user base characteristics, hardware variations, or prior software versions to isolate the impact of a new AI feature.
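The matching step of PSM can be illustrated with a short sketch. It assumes the propensity scores have already been estimated elsewhere (for example with a logistic regression on observed covariates). The scores, outcomes, unit labels, caliper value, and function names below are all hypothetical. The sketch uses greedy one-to-one nearest-neighbour matching without replacement, which is only one of several matching strategies.

```python
def match_on_propensity(treated, controls, caliper=0.05):
    """Greedy 1:1 nearest-neighbour matching without replacement.

    Each unit is a tuple (unit_id, propensity_score, outcome).
    Treated units with no control within the caliper stay unmatched.
    """
    available = list(controls)
    pairs = []
    for t in sorted(treated, key=lambda unit: unit[1]):
        if not available:
            break
        best = min(available, key=lambda c: abs(c[1] - t[1]))
        if abs(best[1] - t[1]) <= caliper:
            pairs.append((t, best))
            available.remove(best)
    return pairs


def mean_matched_difference(pairs):
    """Average outcome difference (treated minus control) over matched pairs."""
    return sum(t[2] - c[2] for t, c in pairs) / len(pairs)


# Hypothetical units: (id, estimated propensity score, failures per year).
treated_units = [("t1", 0.62, 5.0), ("t2", 0.48, 7.0), ("t3", 0.91, 4.0)]
control_units = [
    ("c1", 0.60, 8.0),
    ("c2", 0.50, 9.0),
    ("c3", 0.30, 10.0),
    ("c4", 0.65, 7.0),
]

pairs = match_on_propensity(treated_units, control_units, caliper=0.05)
matched_ids = [(t[0], c[0]) for t, c in pairs]
effect = mean_matched_difference(pairs)
print(matched_ids, f"estimated effect: {effect:+.1f}")
```

The caliper leaves treated unit `t3` unmatched because no control has a similar score. Dropping such units narrows the population the estimate describes, but it avoids comparing units that were never realistically comparable.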

Ethical Considerations in Tech Evaluation

The ethical implications of deploying and evaluating new technologies are profound, and quasi-experimental designs often align well with ethical research practices. Since randomization is avoided, no group is arbitrarily denied a potentially beneficial technology, and interventions can be deployed based on need, policy, or feasibility. However, ethical considerations remain paramount. Researchers must ensure transparency with all participants, obtain informed consent where applicable, protect data privacy, and ensure that the evaluation itself does not create undue risks or burdens. Furthermore, the findings from quasi-experimental studies often inform policies and future tech development, placing an ethical imperative on ensuring the highest possible methodological rigor to avoid misleading conclusions.

Conclusion

Quasi-experimental research designs are indispensable tools in the modern landscape of tech and innovation. While they diverge from the traditional experimental ideal of random assignment, they offer a powerful, practical, and ethical pathway to rigorously evaluate the impact of new technologies, AI systems, autonomous solutions, and remote sensing applications in real-world settings. By leveraging careful design, robust data collection, sophisticated statistical controls, and a keen awareness of potential biases, researchers and innovators can draw strong, defensible conclusions about what works, what doesn’t, and why. As technology continues to evolve at an unprecedented pace, mastering the art and science of quasi-experimental evaluation will remain critical for fostering responsible innovation, driving progress, and ensuring that technological advancements truly serve humanity’s best interests.