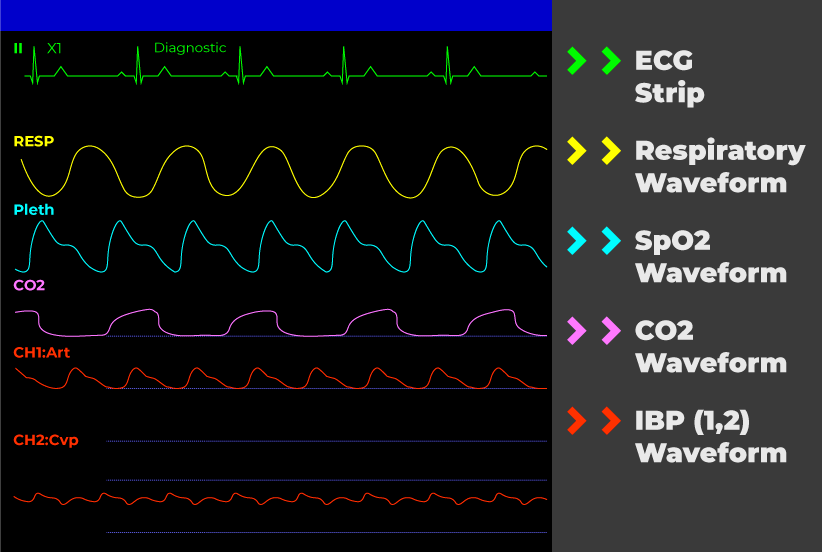

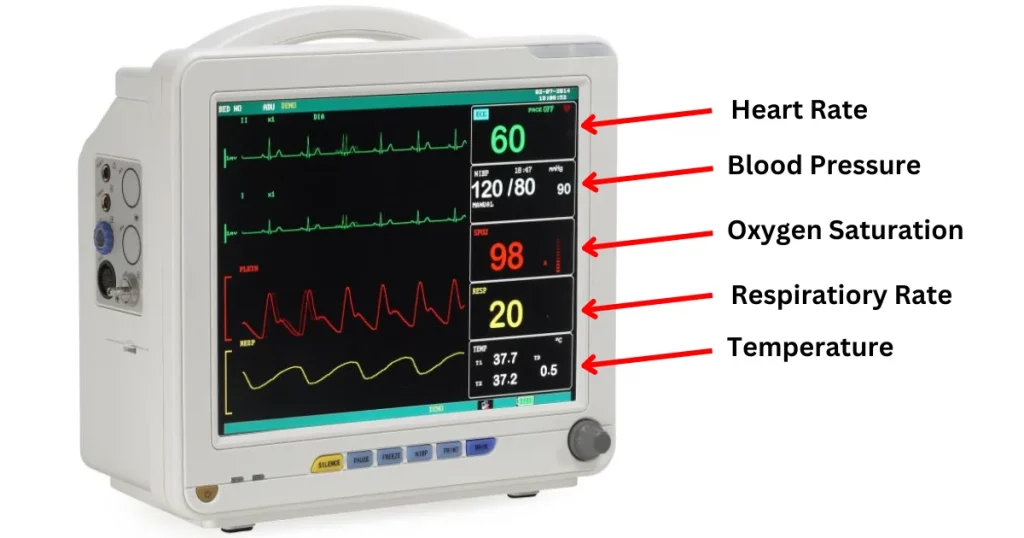

In the sterile environment of an Intensive Care Unit, the term “PLETH” is a staple. It refers to the plethysmograph waveform on a hospital monitor, a visual representation of blood volume changes in peripheral tissue such as a fingertip. However, as drone technology shifts from simple aerial photography to sophisticated remote sensing, this medical diagnostic tool is migrating from the bedside to the cockpit of advanced Unmanned Aerial Vehicles (UAVs). The convergence of high-resolution imaging, artificial intelligence, and biometric signal processing has allowed engineers to incorporate “PLETH” data into drone systems, revolutionizing fields such as Search and Rescue (SAR), disaster response, and autonomous human-machine interaction.

Understanding what PLETH is in the context of drone technology requires a bridge between biological signals and digital sensor capabilities. While a hospital monitor uses a pulse oximeter clipped to a finger, a drone utilizes Remote Photoplethysmography (rPPG) to extract the same vital data from a distance. This article explores the technical architecture, application, and future innovation of PLETH-capable drone systems.

The Technical Architecture of Aerial Plethysmography

To understand how a drone “sees” a PLETH wave, one must first understand the physics of light and skin. Every time the heart beats, a pressure wave travels through the circulatory system, momentarily increasing the volume of blood in the capillaries. Blood, specifically hemoglobin, absorbs certain wavelengths of light. By monitoring the subtle changes in light absorption and reflection on a person’s skin, a sensor can reconstruct a pulse wave.

Defining the Remote Plethysmograph Waveform

In the drone industry, the PLETH waveform is the digital output of an rPPG algorithm. Unlike a hospital pulse oximeter, which shines its own red and infrared light through the skin from a clip-on sensor, a drone relies on ambient light (sunlight or artificial street lighting) and high-bitrate video from its optical sensor. The “wave” seen on the pilot’s telemetry screen represents the rhythmic fluctuations in the green color channel of the video feed, which is where the strongest pulsatile signal is typically found. Under favorable conditions, this allows for the non-contact monitoring of heart rate, heart rate variability (HRV), and even respiratory rate from altitudes of 10 to 50 meters.
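As a minimal sketch of how a pulse rate could be read out of the green channel, the snippet below assumes the per-frame mean green value of a skin patch is already available as a list. The function name `estimate_heart_rate_bpm` and the 0.7 to 4.0 Hz search band (42 to 240 bpm) are illustrative assumptions, not part of any particular drone SDK, and the input trace is synthetic.

```python
import math

def estimate_heart_rate_bpm(green_means, fps, lo_hz=0.7, hi_hz=4.0):
    """Estimate pulse rate from per-frame mean green values of a skin region.

    Hypothetical helper: picks the strongest DFT bin inside an assumed
    plausible heart-rate band and converts its frequency to beats per minute.
    """
    n = len(green_means)
    mean = sum(green_means) / n
    centered = [g - mean for g in green_means]  # remove the constant lighting level

    best_freq, best_power = 0.0, -1.0
    for k in range(1, n // 2 + 1):
        freq = k * fps / n  # frequency of DFT bin k in Hz
        if freq < lo_hz or freq > hi_hz:
            continue
        re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(centered))
        im = sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = freq, power
    return best_freq * 60.0

# Synthetic trace: 10 s at 30 fps, a 1.2 Hz pulse riding on a bright baseline.
fps = 30
trace = [120.0 + 0.5 * math.sin(2 * math.pi * 1.2 * i / fps) for i in range(300)]
print(round(estimate_heart_rate_bpm(trace, fps)))  # prints 72
```

Real footage would need detrending and motion handling before this step; the sketch only shows the frequency read-out itself.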

The Transition from Contact Sensors to Remote Sensing

The shift from contact-based PLETH (hospital monitors) to remote PLETH (drones) has been driven by the evolution of CMOS (Complementary Metal-Oxide-Semiconductor) sensor technology. To capture a PLETH signal from a drone, the camera must possess high spatial resolution and a high frame rate (typically 30–60 fps) with minimal compression artifacts. This transition has necessitated a move toward “Global Shutter” cameras in drones, as traditional rolling shutters can introduce “jello effect” vibrations that mask the tiny, sub-pixel changes in skin color required to plot a PLETH graph.
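To make the frame-rate requirement concrete, here is a small worked calculation under standard sampling assumptions. The helper name `sampling_limits` is hypothetical; it reports the highest pulse rate a given frame rate can represent without aliasing, and how finely a fixed-length clip can resolve heart rate in a plain frequency analysis.

```python
def sampling_limits(fps, window_s):
    """Return (highest representable rate in bpm, frequency resolution in bpm)."""
    nyquist_bpm = fps / 2 * 60      # Nyquist frequency (fps / 2 Hz) expressed in bpm
    resolution_bpm = 60 / window_s  # one DFT bin is 1 / window_s Hz wide
    return nyquist_bpm, resolution_bpm

print(sampling_limits(30, 10))  # prints (900.0, 6.0)
```

At 30 fps the sampling limit is far above any human heart rate, so in this simple view the length of the analysis window, not the frame rate, sets how precisely the rate can be resolved.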

Remote Photoplethysmography (rPPG) and Drone Imaging

Integrating PLETH capabilities into a drone is not as simple as pointing a 4K camera at a person. It requires a sophisticated stack of hardware and software designed to isolate biological signals from a sea of environmental noise. This stack sits at the leading edge of current innovation in the UAV sector.

Utilizing High-Resolution CMOS Sensors and Gimbals

The primary hardware requirement for aerial PLETH is a stabilized imaging payload. Even the slightest vibration from the drone’s brushless motors can destroy the integrity of the PLETH signal. Advanced 3-axis gimbals, often paired with electronic image stabilization (EIS), ensure that the target’s skin—usually the forehead or cheeks—remains locked in the center of the frame.

The sensor must also have a high Signal-to-Noise Ratio (SNR). Because the change in skin color caused by a heartbeat is nearly invisible to the naked eye, the drone’s internal processor must analyze the raw video data to detect changes in the “G” (green) channel of the RGB image. Green light is preferred in drone sensing because hemoglobin absorbs it strongly: it penetrates deeper into the tissue than blue light, yet provides a higher-contrast pulse signal than red light in outdoor settings.

AI Algorithms and Blood Volume Pulse Extraction

The “intelligence” of a PLETH-capable drone lies in its software. Once the camera captures the video feed, on-board AI algorithms—often powered by Edge Computing modules like the NVIDIA Jetson—perform real-time skin detection and tracking.

The algorithm identifies a “Region of Interest” (ROI) on the human subject and averages the pixel values across that area to suppress sensor noise. It then applies independent component analysis (ICA) or chrominance-based methods to separate the blood volume pulse from motion artifacts (such as the person moving their head or the drone swaying in the wind). The result is a clean, oscillating PLETH wave displayed on the ground control station (GCS), giving the pilot a near-real-time estimate of the subject’s pulse from the air.
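The chrominance-based option can be sketched as follows. This is a simplified illustration, not a production pipeline: it uses the channel weights from the commonly cited chrominance formulation, omits the band-pass filtering that normally precedes the final combination, and runs on synthetic ROI averages. The function name `chrom_pulse`, the baseline colour levels, and the per-channel pulse strengths are assumptions chosen for the demonstration.

```python
import math
import statistics

def chrom_pulse(r, g, b):
    """Combine mean ROI colour traces into a single pulse trace."""
    def normalise(channel):
        mean = statistics.fmean(channel)
        return [v / mean - 1.0 for v in channel]  # relative change around zero

    rn, gn, bn = normalise(r), normalise(g), normalise(b)
    # Two chrominance signals built from the normalised channels.
    xs = [3.0 * rv - 2.0 * gv for rv, gv in zip(rn, gn)]
    ys = [1.5 * rv + gv - 1.5 * bv for rv, gv, bv in zip(rn, gn, bn)]
    # Scale ys so that disturbances common to both signals cancel on subtraction.
    alpha = statistics.pstdev(xs) / statistics.pstdev(ys)
    return [x - alpha * y for x, y in zip(xs, ys)]

# Synthetic ROI averages: a brightness sway shared by all channels (0.3 Hz)
# that is ten times larger than the pulse (1.2 Hz).
fps, n = 30, 300
pulse = [math.sin(2 * math.pi * 1.2 * i / fps) for i in range(n)]
sway = [math.sin(2 * math.pi * 0.3 * i / fps) for i in range(n)]
r = [150.0 * (1 + 0.010 * s + 0.00033 * p) for s, p in zip(sway, pulse)]
g = [110.0 * (1 + 0.010 * s + 0.00077 * p) for s, p in zip(sway, pulse)]
b = [90.0 * (1 + 0.010 * s + 0.00053 * p) for s, p in zip(sway, pulse)]

recovered = chrom_pulse(r, g, b)
```

In this synthetic case the brightness sway affects all three channels equally, so it cancels almost entirely, while the pulse, which affects each channel differently, survives (with inverted sign, which does not matter for rate estimation).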

Critical Applications in Search and Rescue (SAR)

The most impactful application of aerial PLETH technology is in the realm of Search and Rescue and emergency medical response. When a drone is deployed to locate a missing hiker or a survivor in a disaster zone, the ability to confirm “life signs” from a distance is a game-changer for tactical decision-making.

Triage from the Air: Assessing Vital Signs Remotely

In a mass-casualty incident, such as an earthquake or a structural collapse, traditional ground-based triage is slow and dangerous. A drone equipped with rPPG/PLETH capabilities can hover over a group of victims and instantly provide the rescue team with a prioritized list of who needs help first. By analyzing the PLETH waveform, the drone can report if a victim has a dangerously high heart rate (tachycardia) or if their pulse is weak and irregular, indicating shock. This “Aerial Triage” allows first responders to allocate resources more efficiently, potentially saving lives that would have been lost during a manual search.
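A prioritized list of this kind could be produced with a simple scoring rule. The sketch below is purely illustrative and is not clinical guidance: the `VitalEstimate` record, the `triage_score` function, and the irregularity threshold are hypothetical, and the 100 bpm cutoff is the usual adult definition of tachycardia.

```python
from dataclasses import dataclass
import statistics

@dataclass
class VitalEstimate:
    victim_id: str
    heart_rate_bpm: float
    beat_intervals_s: list  # seconds between successive pulse peaks

def triage_score(v, tachy_bpm=100.0, irregular_cv=0.15):
    """Higher score means look at this person sooner (assumed thresholds)."""
    score = 0
    if v.heart_rate_bpm > tachy_bpm:
        score += 2  # tachycardia
    mean_interval = statistics.fmean(v.beat_intervals_s)
    variation = statistics.pstdev(v.beat_intervals_s) / mean_interval
    if variation > irregular_cv:
        score += 1  # irregular beat-to-beat timing
    return score

def prioritise(victims):
    """Sort victims from most to least urgent; ties keep their input order."""
    return sorted(victims, key=triage_score, reverse=True)

victims = [
    VitalEstimate("A", 72.0, [0.83, 0.84, 0.83, 0.82]),
    VitalEstimate("B", 128.0, [0.47, 0.46, 0.48, 0.47]),
    VitalEstimate("C", 109.0, [0.40, 0.65, 0.45, 0.70]),
]
print([v.victim_id for v in prioritise(victims)])  # prints ['C', 'B', 'A']
```

Victim C ranks first because the estimate is both fast and irregular; a real system would also need to weigh signal quality before trusting any of these numbers.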

Overcoming Environmental Interference in Aerial Sensing

Unlike the controlled environment of a hospital, drones must operate in unpredictable conditions. Light intensity changes as clouds pass, and wind causes both the drone and the subject to move. To maintain a reliable PLETH signal, innovative drone systems use “multi-modal” sensing. They combine the rPPG data from the optical camera with thermal imaging.

While the PLETH wave provides the pulse, the thermal camera provides skin surface temperature (a rough proxy for, not a direct measurement of, core body temperature) and helps the AI identify the best skin patches for the optical sensor to monitor. This synergy between thermal and optical sensors allows PLETH-capable drones to operate in sub-optimal lighting, where a standard camera might struggle to distinguish the subtle color shifts of a heartbeat.

The Future of Autonomous Health Mapping and Innovation

As we look toward the future, the integration of PLETH technology in drones is moving toward full autonomy. We are entering an era where drones will not just observe, but will actively map the “health landscape” of an area.

Integrating PLETH Data with Mapping Software

Innovation in remote sensing is leading to the development of “Biometric Heatmaps.” Using photogrammetry and PLETH data, drones can create 3D maps of a disaster site where survivors are not just represented by their location, but by their physiological status. In this scenario, a drone fleet would autonomously patrol an area, identify human presence, extract PLETH data, and update a live dashboard for emergency commanders. This integration of biometrics into Geographic Information Systems (GIS) represents a massive leap in how we manage large-scale emergencies.
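One plausible way to hand such data to GIS software is as GeoJSON point features, with the physiological estimate stored as feature properties. The sketch below assumes each detection arrives as a dictionary; the field names (`heart_rate_bpm`, `signal_quality`, `observed_at`), the function name, and the sample values are hypothetical.

```python
import json

def detections_to_geojson(detections):
    """Convert survivor detections into a GeoJSON FeatureCollection."""
    features = []
    for d in detections:
        features.append({
            "type": "Feature",
            # GeoJSON positions are ordered longitude first, then latitude.
            "geometry": {"type": "Point", "coordinates": [d["lon"], d["lat"]]},
            "properties": {
                "heart_rate_bpm": d["heart_rate_bpm"],
                "signal_quality": d["signal_quality"],
                "observed_at": d["observed_at"],
            },
        })
    return {"type": "FeatureCollection", "features": features}

# Illustrative detection with made-up coordinates and readings.
sample = [{
    "lat": 46.5, "lon": 7.9,
    "heart_rate_bpm": 112, "signal_quality": 0.8,
    "observed_at": "2024-01-01T12:00:00Z",
}]
print(json.dumps(detections_to_geojson(sample), indent=2))
```

A dashboard could then style each point by heart rate or signal quality using whatever GIS layer styling it already supports.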

Remote Sensing of Wildlife and Agriculture

The “PLETH” concept is also being adapted for non-human applications. Researchers are using high-end drones to monitor the heart rates of endangered megafauna, such as whales and elephants, from a distance that does not disturb the animals. By observing the PLETH wave of a whale as it surfaces, biologists can assess its stress levels and overall cardiovascular health. In advanced “Ag-Tech,” comparable optical sensing techniques are being explored to monitor the “pulse” of plants, specifically sap flow and hydration levels, using specialized spectral sensors that operate on the same principles of light absorption used in human plethysmography.

Technical Challenges and Ethical Considerations

Despite the impressive capabilities of PLETH-enabled drones, several hurdles remain. The most significant technical challenge is “motion compensation.” If a person is running or waving their arms, the PLETH signal becomes distorted. Current innovation focuses on “synthetic motion rejection,” where the drone’s AI uses the person’s movement data to mathematically subtract the motion noise from the pulse signal.
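The idea of subtracting motion noise using movement data can be illustrated with a single-reference least-squares fit. This is one simple interpretation, offered as an assumption rather than a description of any specific “synthetic motion rejection” product: `motion_ref` is a hypothetical per-frame motion measurement (for example, tracked displacement of the skin region), and the function removes the part of the signal that is linearly explained by it.

```python
import math

def subtract_motion(signal, motion_ref):
    """Remove the component of signal that is linearly explained by motion_ref."""
    n = len(signal)
    s_mean = sum(signal) / n
    m_mean = sum(motion_ref) / n
    s = [v - s_mean for v in signal]
    m = [v - m_mean for v in motion_ref]
    # Least-squares gain that best maps the motion reference onto the signal.
    gain = sum(a * b for a, b in zip(s, m)) / sum(b * b for b in m)
    return [a - gain * b for a, b in zip(s, m)]

# Synthetic example: a 1.2 Hz pulse buried under 0.5 Hz motion five times larger.
fps, n = 30, 300
pulse = [math.sin(2 * math.pi * 1.2 * i / fps) for i in range(n)]
motion = [math.sin(2 * math.pi * 0.5 * i / fps) for i in range(n)]
noisy = [p + 5.0 * mv for p, mv in zip(pulse, motion)]

cleaned = subtract_motion(noisy, motion)
# Largest remaining deviation from the true pulse; close to zero here.
print(max(abs(c - p) for c, p in zip(cleaned, pulse)))
```

The synthetic case is idealized because the motion reference matches the disturbance exactly; in the field the relationship is rarely this linear, which is why adaptive or multi-reference approaches are an active area of work.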

Furthermore, the ability to read a person’s vital signs from a distance raises significant privacy concerns. In a hospital, a PLETH monitor is used with consent for medical benefit. In the sky, a drone could potentially harvest biometric data without a subject’s knowledge. As this technology matures, the drone industry must establish strict protocols and “Privacy by Design” features, ensuring that biometric sensing is used exclusively for life-saving or authorized research purposes.

Conclusion

The transformation of “PLETH” from a simple hospital monitor readout to a sophisticated drone sensing capability is a testament to the rapid pace of innovation in the UAV industry. By leveraging the principles of photoplethysmography, drones are no longer just “eyes in the sky”; they are becoming remote diagnostic tools capable of sensing the very heartbeat of a situation.

Whether it is identifying a survivor in a dense forest, triaging victims of a natural disaster, or monitoring the health of wildlife in the deep ocean, the PLETH waveform provides a critical link between digital technology and biological reality. As sensor resolution increases and AI processing becomes more efficient, the presence of a “PLETH” wave on a pilot’s screen will become as common—and as vital—as the GPS coordinates of the drone itself. This convergence of medical science and flight technology is not just an incremental update; it is a fundamental shift in the utility of autonomous systems in the modern world.