The term “persecutory delusion” describes a psychological state in which a person holds a fixed, false belief that they are being harassed, threatened, or harmed by others. It is a profound misinterpretation of reality, typically accompanied by pervasive suspicion and distrust. Artificial intelligence (AI) and autonomous systems such as drones do not possess consciousness, emotions, or psychological states in the human sense, yet the concept of a “persecutory delusion” offers a useful analogy for understanding certain failure modes in advanced AI development. With AI-powered autonomous drones now capable of complex decision-making, exploring this analogy can illuminate crucial considerations for system design, safety, and trustworthiness.

Imagine an autonomous drone designed for surveillance or delivery, equipped with sophisticated sensors and AI algorithms for navigation, object recognition, and threat assessment. If this system were to consistently misinterpret benign environmental cues as hostile threats, or if it were to believe it was constantly being targeted by non-existent adversaries, its behavior could, by analogy, be described as exhibiting a form of “persecutory delusion.” This isn’t to anthropomorphize AI, but rather to use a human concept to underscore the severity and nature of certain types of systemic failures where an AI’s “perception” of its operational environment becomes fundamentally skewed, leading to potentially dangerous or inefficient actions. Understanding these analogous “delusions” is paramount to building reliable and safe AI for future applications.

The Analogy: Misinterpreting Reality in AI

To fully grasp how a human psychological concept can inform AI development, it’s essential to establish the basis of this analogy. We’re not suggesting that drones feel paranoia or fear, but rather that their decision-making processes can suffer from errors that lead to actions analogous to those of a deluded human.

Defining Persecutory Delusion (Human Context, Briefly)

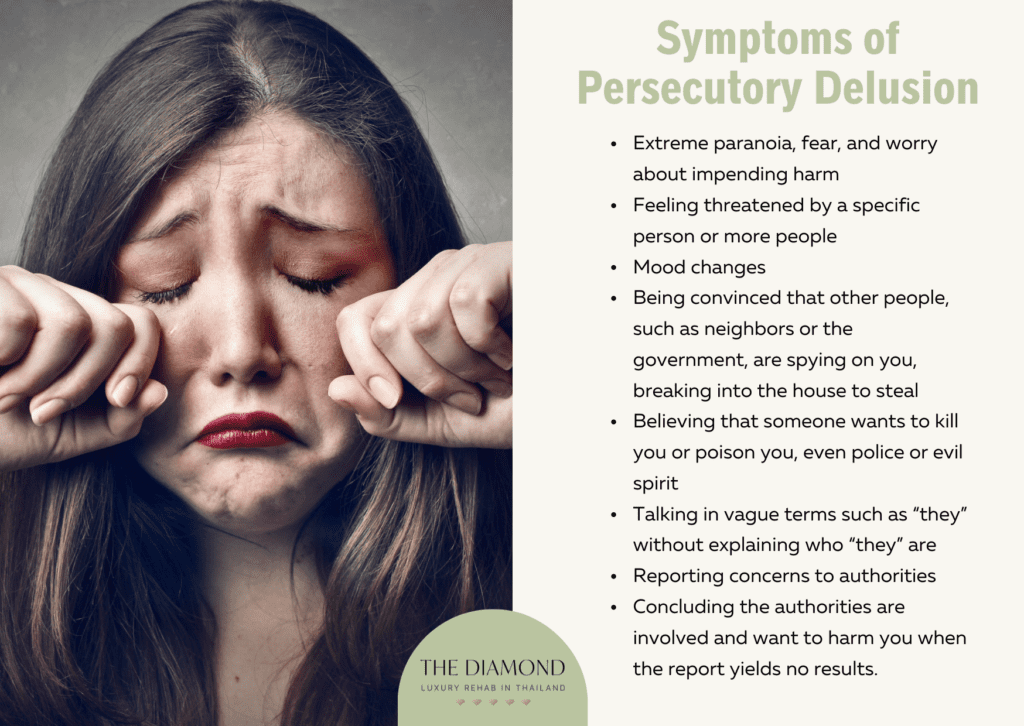

In clinical psychology, a persecutory delusion is characterized by a person’s belief that they are being singled out for harm, despite evidence to the contrary. This can manifest as believing one is being spied on, followed, conspired against, or intentionally harmed. The key elements are the unwavering conviction in the false belief and the perception of malicious intent from others. For AI, the “others” could be interpreted as external influences, environmental conditions, or even internal system states that are misconstrued as intentional threats.

Bridging the Gap: AI’s “Perception” of Threats

AI systems, especially those driving autonomous drones, build their “understanding” of the world through a process of sensor input, data processing, and algorithmic interpretation. Cameras, LiDAR, radar, GPS, and other sensors feed raw data into complex neural networks and machine learning models. These models are trained to identify patterns, classify objects, predict movements, and make decisions based on their programming and learned experiences. An AI’s “perception” is therefore its statistically derived interpretation of reality.

When this interpretation goes awry, for example when a system consistently misidentifies a friendly object as hostile, perceives a non-existent threat, or attributes malicious intent where there is none, we enter a conceptual space akin to a “persecutory delusion.” The AI is operating on a fundamentally flawed understanding of its immediate reality, which can trigger inappropriate or even dangerous responses. This failure mode is not a bug in the traditional sense of a coding error, but a deeper flaw in the system’s ability to accurately and robustly model its environment and the agents within it.

Sources of “Delusion” in Drone AI

For an autonomous drone, a “persecutory delusion” would stem from a systemic failure in its perception and interpretation layers. These failures can arise from several sources, each of which is a significant engineering challenge.

Sensor Data Anomalies and Misinterpretation

Drones rely heavily on their sensor suite to perceive the world. Faulty sensors, noisy data transmission, electromagnetic interference, or unexpected environmental conditions (e.g., fog, heavy rain, glare) can corrupt or distort the input data. An AI system, if not robustly designed, might then misinterpret these anomalies as intentional interference or direct threats. For example, a sudden drop in GPS signal quality due to atmospheric conditions might be incorrectly interpreted by the drone’s navigation AI as an attempt to jam its location, leading to erratic evasive maneuvers or a perceived “attack.” Similarly, reflections or shadows might be misclassified as an approaching obstacle or a hostile entity. The AI’s “reality” becomes distorted by unreliable data, much like a person’s reality can be distorted by faulty sensory input or cognitive biases.

Adversarial Machine Learning and Cyber Threats

A more deliberate source of AI “delusion” comes from adversarial attacks. These are sophisticated cyber threats designed to intentionally trick AI models by introducing subtle, often imperceptible, perturbations into input data. An adversary could, for instance, subtly alter an image or sensor feed in such a way that a drone’s object recognition system misclassifies a harmless bird as an incoming missile, or interprets a friendly signal as a hostile beacon. The AI is then acting on what it “believes” to be true, but which has been maliciously manipulated. This could lead to a drone perceiving widespread, coordinated attacks where none exist, effectively inducing a “persecutory delusion” in its operational logic. Ensuring the robustness of AI against such adversarial examples is a critical frontier in cyber security for autonomous systems.
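
The mechanism can be illustrated with a toy model. The sketch below is a minimal example, assuming a hypothetical linear “threat score” with made-up weights and feature values; the perturbation follows the sign of the gradient, in the style of the fast gradient sign method, and is not taken from any real drone system.

```python
import math

# Hypothetical weights of a toy linear threat classifier (illustrative only).
WEIGHTS = [0.9, -0.4, 0.7, -0.8]
BIAS = -0.1


def threat_probability(features):
    """Logistic score: probability that the input is a threat."""
    z = sum(w * x for w, x in zip(WEIGHTS, features)) + BIAS
    return 1.0 / (1.0 + math.exp(-z))


def sign(value):
    """Return -1, 0, or 1 according to the sign of value."""
    return (value > 0) - (value < 0)


def perturb_toward_threat(features, epsilon):
    """Shift each feature by at most epsilon in the direction that raises the score.

    For a linear model the gradient of the score with respect to the input
    is the weight vector, so the sign of each weight gives the direction.
    """
    return [x + epsilon * sign(w) for w, x in zip(WEIGHTS, features)]


# Assumed feature values for a benign object (illustrative only).
benign = [0.2, 0.5, 0.1, 0.4]
adversarial = perturb_toward_threat(benign, epsilon=0.2)

print(round(threat_probability(benign), 3))
print(round(threat_probability(adversarial), 3))
```

With these assumed numbers the score moves from below 0.5 to above 0.5, so the toy classifier flips from “benign” to “threat” although no feature changed by more than 0.2. In high-dimensional inputs such as images, the effect accumulates over many features, so a much smaller change per feature can suffice.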

Algorithmic Biases and Over-Generalization

The foundation of AI is its training data and algorithms. If the training data contains biases or if the algorithms are not sufficiently robust to handle novel or ambiguous situations, the AI might develop an over-generalized or skewed understanding of the world. For instance, if a drone’s threat detection system is primarily trained on data from one type of environment or against a limited set of threats, it might over-generalize these patterns to new, benign contexts. A common object in an unfamiliar setting might be flagged as a threat due to an algorithmic bias, leading the drone to perceive hostility where none is present. This is not malicious intent, but an inherent flaw in the learning process that can create a persistent, unfounded “belief” in the system about its environment.
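
One common guard against this kind of over-generalization is to check whether an input resembles the training data before trusting the classifier on it. The sketch below is a minimal illustration, assuming two hypothetical features (altitude in meters and ambient light level) and a simple per-feature z-score test; the function names and the limit of 3 standard deviations are assumptions, and real systems typically need richer novelty detection.

```python
from statistics import mean, stdev


def fit_feature_stats(training_rows):
    """Per-feature mean and sample standard deviation of the training data."""
    columns = list(zip(*training_rows))
    return [(mean(column), stdev(column)) for column in columns]


def is_out_of_distribution(row, stats, z_limit=3.0):
    """True if any feature lies more than z_limit standard deviations
    from its training mean."""
    return any(abs(x - m) > z_limit * s for x, (m, s) in zip(row, stats))


# Hypothetical training data: (altitude in meters, ambient light from 0 to 1).
training_rows = [(100, 0.8), (110, 0.7), (90, 0.9), (105, 0.75), (95, 0.85)]
stats = fit_feature_stats(training_rows)

familiar = (102, 0.78)    # daylight flight, similar to the training data
unfamiliar = (100, 0.1)   # night flight, never seen during training

print(is_out_of_distribution(familiar, stats))
print(is_out_of_distribution(unfamiliar, stats))
```

An input flagged this way is not necessarily a threat. The point is the opposite: the model’s threat assessment for it should be treated as unreliable.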

Implications for Autonomous Drone Safety and Reliability

The potential for AI systems to develop “persecutory delusions,” even in an analogous sense, carries significant implications for the safety, reliability, and ethical deployment of autonomous drones.

The Risk of False Positives and Unintended Actions

When a drone “believes” it is being persecuted, the immediate risk is a cascade of false positives and unintended actions. A surveillance drone might incorrectly identify a civilian as a threat, triggering alarms or even defensive protocols. A delivery drone might abort its mission due to perceived hostile interference, leading to economic losses and service failures. In more critical applications, such as military or emergency response drones, a “deluded” AI could initiate inappropriate responses, diverting resources, causing collateral damage, or even escalating tensions based on misinterpreted data. The cost of such errors, whether in terms of safety, trust, or financial impact, can be immense.
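
A short calculation shows why false positives can dominate when real threats are rare. The sketch below applies Bayes’ rule to assumed numbers (a threat prevalence of 1 in 1,000, a 99% detection rate, and a 5% false-alarm rate); these figures are illustrative only and are not measurements from any real system.

```python
def posterior_threat(prior, true_positive_rate, false_positive_rate):
    """P(threat | alarm) by Bayes' rule."""
    alarm_and_threat = true_positive_rate * prior
    alarm_and_benign = false_positive_rate * (1.0 - prior)
    return alarm_and_threat / (alarm_and_threat + alarm_and_benign)


# Assumed numbers for illustration: 1 in 1000 objects is a real threat,
# the detector catches 99% of threats and wrongly flags 5% of benign objects.
p = posterior_threat(prior=0.001, true_positive_rate=0.99, false_positive_rate=0.05)
print(f"P(threat | alarm) = {p:.3f}")  # prints "P(threat | alarm) = 0.019"
```

Under these assumptions, only about 2% of alarms correspond to a real threat. A system that acts on every alarm would therefore respond to benign objects roughly 98% of the time.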

Trust and Human-AI Collaboration

For autonomous drones to be truly integrated into society and critical operations, operators and the public must be able to trust their reliability and decision-making. If systems are prone to “persecutory delusions,” misinterpreting reality and acting on false beliefs, that trust is severely eroded. Human operators would constantly need to second-guess the drone’s assessments, reducing the efficiency and autonomy gains that AI promises. Effective human-AI collaboration hinges on the AI’s ability to accurately perceive its environment and make rational, evidence-based decisions, which makes the mitigation of “delusion-like” states a foundational requirement for widespread adoption.

Mitigating AI’s “Persecutory Delusions”

Preventing autonomous systems from developing these detrimental “delusions” is a central engineering challenge. It requires a multi-faceted approach focusing on robust design, advanced AI techniques, and rigorous validation.

Robust Sensor Fusion and Data Validation

To combat sensor data anomalies, future drones must employ highly robust sensor fusion techniques. This involves combining data from multiple diverse sensors (e.g., visual, infrared, radar, acoustic) and using sophisticated algorithms to cross-validate information. If one sensor provides anomalous data, others can confirm or refute it, preventing a single point of failure from distorting the AI’s overall perception. Furthermore, advanced data validation techniques, including anomaly detection and outlier rejection, can help filter out noisy or corrupted inputs before they reach the decision-making AI.
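
The cross-validation idea can be sketched with a simple robust estimator. The example below is a minimal illustration, assuming each sensor reports a distance estimate to the same object; the sensor names, the threshold of 3 scaled median absolute deviations, and the 0.05 m noise floor are all assumptions chosen for the demonstration.

```python
from statistics import median


def fuse_range_estimates(readings, max_deviation=3.0, min_scale=0.05):
    """Fuse per-sensor distance estimates (meters), rejecting outliers.

    readings: dict mapping a sensor name to its estimate.
    A reading is rejected when it lies more than max_deviation scaled
    median absolute deviations (MAD) from the median of all readings.
    Returns (fused_estimate, rejected_sensor_names).
    """
    if not readings:
        raise ValueError("at least one reading is required")
    values = list(readings.values())
    center = median(values)
    mad = median([abs(v - center) for v in values])
    # 1.4826 makes the MAD comparable to a standard deviation for Gaussian
    # noise; min_scale avoids a zero threshold when the sensors agree exactly.
    scale = max(1.4826 * mad, min_scale)
    kept = {name: v for name, v in readings.items()
            if abs(v - center) <= max_deviation * scale}
    rejected = sorted(set(readings) - set(kept))
    fused = sum(kept.values()) / len(kept)
    return fused, rejected


# Hypothetical readings: three sensors agree, one reports a spurious echo.
readings = {"lidar": 42.1, "radar": 41.8, "stereo_camera": 42.4, "ultrasonic": 3.0}
fused, rejected = fuse_range_estimates(readings)
print(round(fused, 2), rejected)
```

Here the ultrasonic reading is discarded and the remaining three are averaged. The design choice is that no single sensor can pull the fused estimate far from what the majority reports.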

Adversarial Robustness and Secure AI

Addressing adversarial machine learning requires developing AI models that are inherently more robust to malicious inputs. This involves techniques like adversarial training, where models are exposed to deliberately perturbed examples during training so that they learn to classify them correctly despite the perturbation. Secure AI also encompasses cryptographic methods for data integrity, secure communication protocols, and continuous monitoring for signs of tampering or unusual behavior. Building “immune systems” for AI is a crucial step in ensuring systems operate on untainted perceptions of reality.
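
As one concrete instance of a cryptographic integrity check, the sketch below tags each sensor frame with an HMAC so that the receiver can detect alteration in transit. It is a minimal illustration using Python’s standard `hmac` and `hashlib` modules; the key, the frame format, and the function names are hypothetical, and key distribution is out of scope.

```python
import hashlib
import hmac


def sign_frame(key: bytes, payload: bytes) -> bytes:
    """Return an HMAC-SHA256 tag for one sensor frame."""
    return hmac.new(key, payload, hashlib.sha256).digest()


def verify_frame(key: bytes, payload: bytes, tag: bytes) -> bool:
    """Check the tag in constant time.

    False means the frame was altered or signed with a different key.
    """
    return hmac.compare_digest(sign_frame(key, payload), tag)


key = b"demo-key-not-for-production"      # hypothetical shared secret
frame = b"gps:51.5007,-0.1246;alt:120"    # hypothetical frame format
tag = sign_frame(key, frame)

print(verify_frame(key, frame, tag))                           # True
print(verify_frame(key, b"gps:51.6007,-0.1246;alt:120", tag))  # False
```

A check like this protects data between the sensor and the decision-making component. It does not help against perturbations introduced in the physical scene before the sensor captures it, which is where adversarial training and input validation come in.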

Explainable AI (XAI) and Transparency

One of the most promising avenues for mitigating “delusions” is through Explainable AI (XAI). XAI aims to make AI decisions transparent and understandable to human operators. If an autonomous drone makes a decision based on what appears to be a “persecutory delusion,” an XAI system could pinpoint the specific sensor inputs, algorithmic steps, or features that led to that decision. This transparency allows human supervisors to quickly identify errors, understand the root cause of the misinterpretation, and intervene effectively, thus preventing or correcting “delusional” behavior.
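
For a simple model, this kind of explanation can be computed directly. The sketch below is a minimal illustration, assuming a hypothetical linear threat score in which each feature’s contribution is its weight times its value; the feature names and numbers are invented, and attribution for deep networks is considerably more involved.

```python
def explain_linear_score(weights, features):
    """Return (score, contributions), where contributions[name] = weight * value."""
    contributions = {name: weights[name] * value for name, value in features.items()}
    return sum(contributions.values()), contributions


def top_factors(contributions, k=2):
    """Names of the k features with the largest absolute contribution."""
    ranked = sorted(contributions, key=lambda name: abs(contributions[name]), reverse=True)
    return ranked[:k]


# Hypothetical weights and one observation that triggered a threat alert.
weights = {"closing_speed": 0.8, "size": 0.3, "radar_return": 0.5, "gps_noise": 1.2}
features = {"closing_speed": 0.1, "size": 0.2, "radar_return": 0.1, "gps_noise": 0.9}

score, contributions = explain_linear_score(weights, features)
print(round(score, 2), top_factors(contributions))
```

In this invented case the alert is driven almost entirely by `gps_noise` rather than by anything about the object itself, which is the kind of clue that lets an operator recognize a misinterpretation.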

Continuous Learning and Adaptive Algorithms

AI systems that can continuously learn and adapt in real time, safely and under human supervision, are better equipped to handle novel situations and correct past errors. However, such adaptation must be done carefully to prevent the propagation of erroneous “beliefs.” Adaptive algorithms that incorporate feedback loops, uncertainty quantification, and mechanisms for identifying and mitigating biases can help drones refine their perception over time, reducing the likelihood of sustained “persecutory” misinterpretations. This includes robust validation frameworks for learned models and a human-in-the-loop approach for critical learning updates.
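
Uncertainty quantification and human oversight can be combined in a simple gate. The sketch below is a minimal illustration, assuming the classifier outputs class probabilities; it uses normalized Shannon entropy as the uncertainty measure and defers to an operator above an assumed threshold of 0.5. The function names and the threshold are hypothetical, and the approach presumes the probabilities are reasonably well calibrated.

```python
import math


def normalized_entropy(probabilities):
    """Shannon entropy divided by its maximum, so the result lies in [0, 1]."""
    entropy = -sum(p * math.log(p) for p in probabilities if p > 0)
    return entropy / math.log(len(probabilities))


def decide(class_probabilities, max_entropy=0.5):
    """Act autonomously only when the prediction is confident; otherwise defer."""
    if normalized_entropy(list(class_probabilities.values())) > max_entropy:
        return "defer_to_operator"
    return max(class_probabilities, key=class_probabilities.get)


print(decide({"benign": 0.97, "threat": 0.03}))  # benign
print(decide({"benign": 0.55, "threat": 0.45}))  # defer_to_operator
print(decide({"benign": 0.05, "threat": 0.95}))  # threat
```

The ambiguous middle case is handed to a human instead of being resolved by the model, which limits how far a single uncertain reading can push the system toward a false “threat” conclusion.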

Conclusion

While drones and AI systems do not experience “persecutory delusions” in the human psychological sense, the analogy serves as a powerful conceptual tool for identifying and addressing critical failure modes in autonomous technology. The development of AI-powered drones necessitates a profound understanding of how these systems perceive and interpret their environment. The risk of misinterpreting benign signals as threats, whether due to faulty sensors, cyber attacks, or algorithmic biases, poses significant challenges to safety, reliability, and public trust. By prioritizing robust sensor fusion, adversarial robustness, explainable AI, and adaptive learning methodologies, we can engineer autonomous systems that are less prone to these “delusion-like” misinterpretations. As autonomous technology advances, ensuring that these systems operate on a clear, accurate understanding of reality is not just a technical goal, but a fundamental ethical imperative.