In the intricate world of computing, where complex applications run seamlessly and artificial intelligence processes vast datasets, a fundamental mechanism works silently behind the scenes to make it all possible: paging. Far from a mere technicality, paging is a cornerstone of modern operating systems, a brilliant solution to the perennial challenge of managing limited physical memory efficiently. It’s the invisible hand that enables your computer to run multiple demanding programs concurrently, from sophisticated drone flight control software incorporating AI-driven navigation to advanced mapping applications processing remote sensing data. Understanding paging is key to appreciating the robust foundation upon which today’s most innovative technologies are built, ensuring stability, security, and the performance we’ve come to expect from our digital devices.

The Core Concept: Managing Virtual Memory

At its heart, paging is a memory management scheme that underpins virtual memory, allowing systems to operate as if they have far more physical memory (RAM) than is actually installed. This clever abstraction is critical for empowering the multitasking capabilities essential to any modern computing environment, from a desktop PC to an embedded system controlling an autonomous drone.

Beyond Physical RAM Limitations

The primary challenge paging addresses is the inherent limitation of physical Random Access Memory (RAM). Programs, especially those involved in AI, big data processing, or complex simulations, often require a significant amount of memory. If every running program had to reside entirely in physical RAM simultaneously, even high-end machines would quickly run out of resources, because the total memory demanded by all concurrently executing processes often exceeds the physical RAM available. Virtual memory, enabled by paging, resolves this by giving each program the illusion of its own large, contiguous memory space, independent of other programs and of the actual physical memory layout. This allows developers to write software without constant concern for the physical memory constraints of the target system.

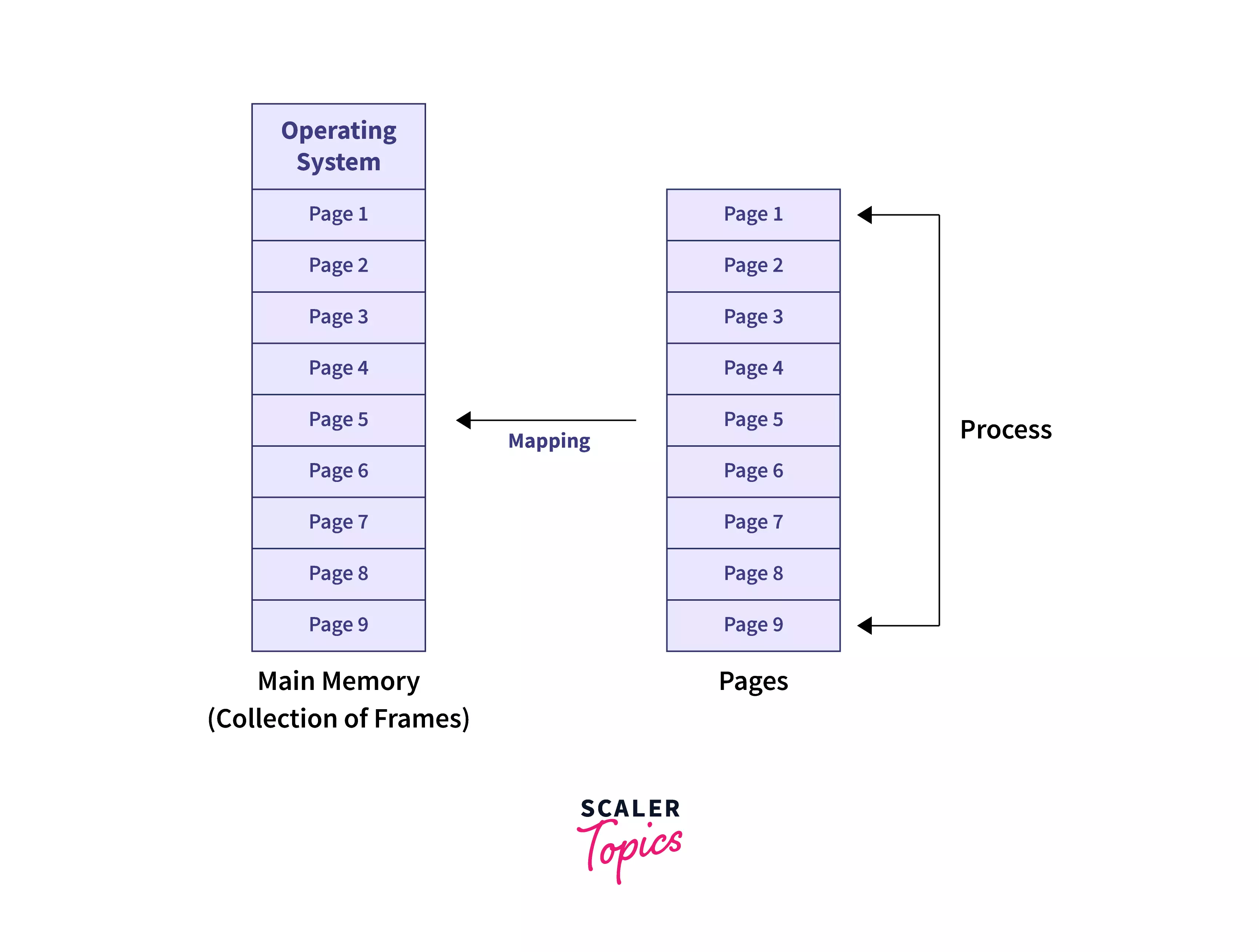

Pages and Frames

To achieve this illusion, paging divides memory into fixed-size blocks. From a process’s perspective, its logical memory space is divided into units called pages. The base page size is commonly 4KB, and many architectures also support larger pages (for example 2MB or 1GB on x86-64, often called “huge pages” or “superpages”) for specific performance optimizations. Physical RAM is divided into blocks of the same size called frames. The operating system’s role is to map the logical pages used by a process to available physical frames. This mapping is dynamic and non-contiguous; a process’s pages do not need to occupy adjacent frames in physical memory. Because any free frame can hold any page, the OS can allocate and deallocate memory as programs start, run, and terminate without the external fragmentation that plagued older contiguous-allocation schemes. The remaining cost is internal fragmentation: the last page of an allocation is usually only partly used.
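The page and frame arithmetic can be sketched in a few lines of Python. This is a minimal illustration that assumes 4KB pages; the names `PAGE_SIZE`, `split_address`, and `pages_needed` are made up for this example and do not come from any operating system API.

```python
from typing import Tuple

PAGE_SIZE = 4096  # assumed 4KB base page size


def split_address(virtual_address: int, page_size: int = PAGE_SIZE) -> Tuple[int, int]:
    """Split a virtual address into (page number, offset within the page)."""
    if virtual_address < 0:
        raise ValueError("addresses are non-negative")
    return divmod(virtual_address, page_size)


def pages_needed(num_bytes: int, page_size: int = PAGE_SIZE) -> int:
    """Number of whole pages required to hold num_bytes (ceiling division)."""
    return -(-num_bytes // page_size)


page, offset = split_address(0x1234)   # page 1, offset 0x234
allocated = pages_needed(10_000) * PAGE_SIZE
wasted = allocated - 10_000            # internal fragmentation in the last page
```

In this example a 10,000-byte allocation occupies three pages, and the unused tail of the third page is the internal fragmentation.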

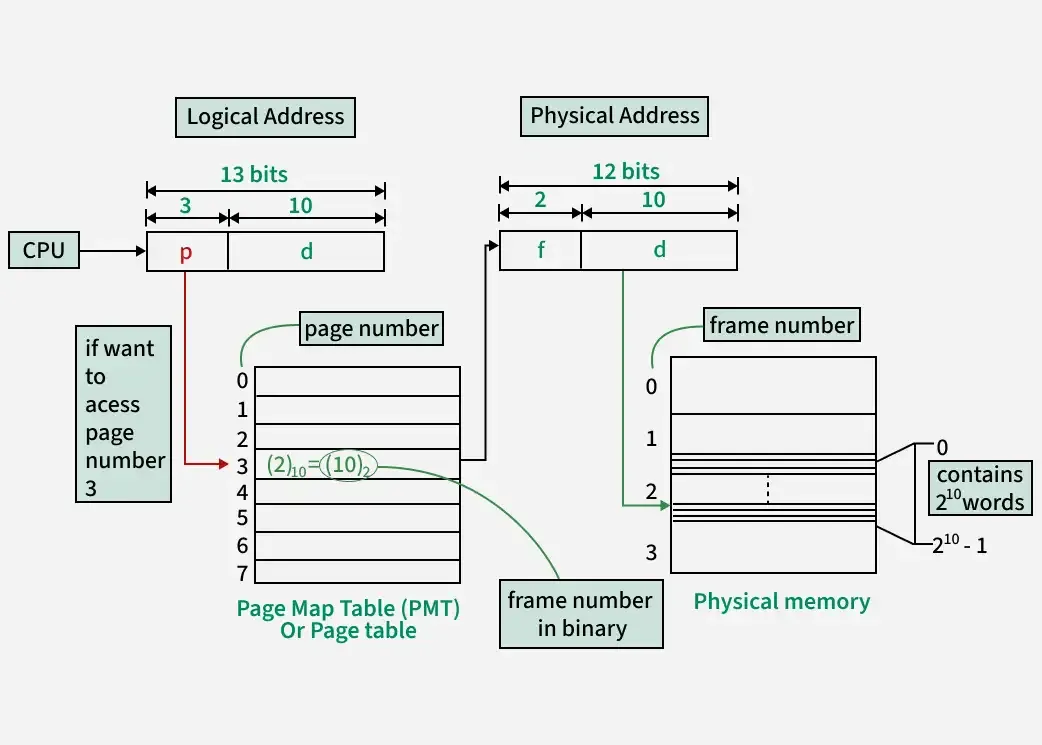

The Page Table

The crucial component responsible for this page-to-frame mapping is the page table. Each running process has its own page table, which is maintained by the operating system. When a program accesses a memory address (a virtual address), the CPU’s Memory Management Unit (MMU) uses the process’s page table to translate that virtual address into a physical address in RAM. Conceptually, the page table holds an entry for each page in the process’s virtual address space, recording whether that page is currently in RAM and, if so, which physical frame it occupies. If the page is not in RAM (because it has been evicted or has not been loaded yet), the entry is marked as not present, and an access to it raises a “page fault,” which hands control to the operating system to load the page.
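A simplified model of this lookup is shown below. It assumes a flat, single-level page table stored as a Python dictionary and 4KB pages; `PageTableEntry`, `PageFault`, and `translate` are hypothetical names for illustration, and real MMUs perform this step in hardware using multi-level tables.

```python
from dataclasses import dataclass
from typing import Dict, Optional

PAGE_SIZE = 4096  # assumed 4KB pages


class PageFault(Exception):
    """Raised when the referenced page is not resident in RAM."""


@dataclass
class PageTableEntry:
    frame: Optional[int] = None  # physical frame number, if resident
    present: bool = False        # True when the page is in RAM


def translate(page_table: Dict[int, PageTableEntry], virtual_address: int) -> int:
    """Translate a virtual address to a physical address, or raise PageFault."""
    page, offset = divmod(virtual_address, PAGE_SIZE)
    entry = page_table.get(page)
    if entry is None or not entry.present or entry.frame is None:
        raise PageFault(f"page {page} is not resident")
    # The offset is unchanged; only the page number is replaced by a frame number.
    return entry.frame * PAGE_SIZE + offset


table = {0: PageTableEntry(frame=5, present=True), 1: PageTableEntry()}
physical = translate(table, 0x0123)  # page 0 lives in frame 5
```

Accessing any address in page 1 of this table raises `PageFault`, which stands in for the trap the hardware delivers to the operating system.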

How Paging Works: A Deep Dive into the Mechanism

The elegance of paging lies not just in its conceptual simplicity but also in the sophisticated mechanisms that enable its efficient operation, ensuring a balance between memory utilization and system performance.

Demand Paging and Swapping

A key optimization in paging systems is demand paging. Instead of loading an entire program into memory when it starts, demand paging loads pages only when they are actually needed by the CPU. This significantly reduces the amount of physical RAM required for a process at any given time, allowing more programs to run concurrently and reducing startup times. When a program attempts to access a page that is not currently in a physical frame (as indicated by the page table), a page fault occurs. The operating system handles the fault, identifies the required page, and initiates an I/O operation to load it from secondary storage (like a hard drive or SSD): either the file the page originally came from, such as the program’s executable, or an area set aside as swap space or a page file. Once the page is loaded into an available physical frame, the page table is updated and the interrupted instruction is restarted. Moving pages between RAM and swap space is commonly called swapping, although the term originally referred to moving entire processes out of memory. While essential, excessive swapping can lead to significant performance degradation due to the much slower access times of secondary storage compared to RAM.
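The fault-then-load sequence can be imitated with a toy model. The sketch below is not how a kernel is written; it assumes a dictionary standing in for secondary storage and a fixed pool of frames, and the class `DemandPagedMemory` and its methods are hypothetical names chosen for this example.

```python
from typing import Dict, List


class DemandPagedMemory:
    """Toy model: pages are loaded from a backing store only on first access."""

    def __init__(self, backing_store: Dict[int, bytes], num_frames: int) -> None:
        self.backing_store = backing_store          # page number -> page contents
        self.free_frames: List[int] = list(range(num_frames))
        self.page_table: Dict[int, int] = {}        # resident page -> frame
        self.frames: Dict[int, bytearray] = {}      # frame -> contents in "RAM"
        self.fault_count = 0

    def read(self, page: int, offset: int) -> int:
        if page not in self.page_table:             # not resident: page fault
            self._handle_fault(page)
        return self.frames[self.page_table[page]][offset]

    def _handle_fault(self, page: int) -> None:
        self.fault_count += 1
        if not self.free_frames:
            # A real system would pick a victim with a replacement algorithm here.
            raise MemoryError("no free frame available")
        frame = self.free_frames.pop(0)
        self.frames[frame] = bytearray(self.backing_store[page])  # simulated I/O
        self.page_table[page] = frame               # update the mapping


store = {0: b"abcd", 1: b"wxyz"}
memory = DemandPagedMemory(store, num_frames=2)
first = memory.read(1, 2)    # faults, loads page 1, then returns the byte
second = memory.read(1, 0)   # page 1 is now resident, so no new fault
```

Page 0 is never loaded in this run because nothing touches it, which is the saving demand paging is designed to deliver.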

Page Replacement Algorithms

When a page fault occurs and all physical frames are already occupied, the operating system must decide which existing page to evict from RAM to make room for the newly demanded page. This decision is made by a page replacement algorithm. The goal of these algorithms is to minimize the number of future page faults by selecting a page that is least likely to be needed soon. Common algorithms include:

- First-In, First-Out (FIFO): Evicts the page that has been in memory the longest. Simple but often inefficient.

- Least Recently Used (LRU): Evicts the page that has not been accessed for the longest period. More effective, but harder to implement.

- Optimal (OPT): Evicts the page that will not be used for the longest period in the future. This is a theoretical ideal, impossible to implement in practice, but used as a benchmark.

Modern operating systems often use approximations of LRU, such as clock-style algorithms, or more sophisticated schemes that combine several strategies. These typically rely on per-page reference (accessed) bits to track recent use and dirty bits to record whether a page has been modified since it was loaded; a dirty page must be written back to storage before its frame can be reused.
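To compare the policies listed above, the following sketch counts page faults for FIFO and exact LRU over a reference string. It is a teaching simulation, not a kernel implementation; the function names and the reference string are chosen for this example.

```python
from collections import OrderedDict, deque
from typing import Iterable


def fifo_faults(refs: Iterable[int], num_frames: int) -> int:
    """Count page faults when the oldest-loaded page is evicted first."""
    if num_frames < 1:
        raise ValueError("need at least one frame")
    resident, queue, faults = set(), deque(), 0
    for page in refs:
        if page in resident:
            continue                          # hit: FIFO ignores recency
        faults += 1
        if len(resident) == num_frames:
            resident.remove(queue.popleft())  # evict the page loaded earliest
        resident.add(page)
        queue.append(page)
    return faults


def lru_faults(refs: Iterable[int], num_frames: int) -> int:
    """Count page faults when the least recently used page is evicted."""
    if num_frames < 1:
        raise ValueError("need at least one frame")
    resident: "OrderedDict[int, None]" = OrderedDict()  # oldest use first
    faults = 0
    for page in refs:
        if page in resident:
            resident.move_to_end(page)        # hit: mark as most recently used
            continue
        faults += 1
        if len(resident) == num_frames:
            resident.popitem(last=False)      # evict the least recently used
        resident[page] = None
    return faults


refs = [1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5]
results = {
    "fifo": (fifo_faults(refs, 3), fifo_faults(refs, 4)),
    "lru": (lru_faults(refs, 3), lru_faults(refs, 4)),
}
```

On this reference string FIFO incurs 9 faults with three frames but 10 with four, an effect known as Belady’s anomaly, while LRU improves from 10 faults to 8 when the extra frame is added.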

Translation Lookaside Buffer (TLB): Speeding Up Address Translation

Every memory access in a paged system requires a virtual-to-physical address translation. Without optimization, this would mean at least two memory accesses for every data access: one to read the page table entry and another to access the actual data. With the multi-level page tables used by most modern architectures, a single translation can require several memory reads. This overhead would severely impact performance. To mitigate this, most modern CPUs incorporate a hardware cache specifically for page table entries, known as the Translation Lookaside Buffer (TLB). The TLB stores recent virtual-to-physical address translations. When a virtual address needs translating, the MMU first checks the TLB. If the translation is found (a “TLB hit”), the physical address is available without consulting the page table. If not (a “TLB miss”), the page table in main memory is walked, and the TLB is updated with the new translation. The high hit rate of the TLB is crucial for the efficient operation of paging, making virtual memory practically viable without prohibitive performance costs.
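The effect of the hit rate can be estimated with a simple weighted average. The function below is a back-of-the-envelope model with assumed, illustrative latencies (1 ns for a TLB lookup, 100 ns for a memory access); it ignores data caches and other effects that real hardware has, and `effective_access_time` is a name invented for this sketch.

```python
def effective_access_time(hit_rate: float, tlb_ns: float, mem_ns: float,
                          walk_accesses: int = 1) -> float:
    """Average time per memory access given a TLB hit rate.

    walk_accesses is the number of memory reads needed to walk the page
    table on a miss (1 for a single-level table, more for multi-level).
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    hit_cost = tlb_ns + mem_ns                         # lookup + data access
    miss_cost = tlb_ns + (walk_accesses + 1) * mem_ns  # lookup + walk + data
    return hit_rate * hit_cost + (1.0 - hit_rate) * miss_cost


with_tlb = effective_access_time(0.99, tlb_ns=1, mem_ns=100)
no_hits = effective_access_time(0.0, tlb_ns=1, mem_ns=100)
```

Under these assumed figures, a 99% hit rate yields roughly 102 ns per access, compared with 201 ns if every access missed, which is why a high hit rate matters so much.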

Paging’s Critical Role in Modern Tech & Innovation

Paging is not merely an academic concept; it’s a practical necessity that empowers the cutting-edge technologies defining our future, especially in fields like autonomous systems, AI, and advanced data processing.

Enabling Complex Software and Multitasking

The most direct and universal impact of paging is its ability to enable robust multitasking. Modern operating systems frequently run dozens, if not hundreds, of processes simultaneously, each with its own memory requirements. Paging allows all these applications to coexist in a shared physical memory space without interfering with each other. This isolation and efficient resource allocation are fundamental to user experience, system stability, and the very architecture of contemporary software. Without paging, operating systems would be far less capable, severely limiting the complexity and number of applications we could run.

Security and Isolation

Beyond performance, paging provides a critical layer of security and isolation. Each process operates within its own private virtual address space, effectively sandboxing it from other processes and the operating system’s kernel. This means that a bug or malicious act in one program cannot directly corrupt the memory of another program or the operating system itself. Page table entries also include protection bits that define access rights (read, write, execute) for different memory regions, preventing unauthorized access and further bolstering system security. This isolation is paramount in environments where system integrity is crucial, such as the embedded systems controlling critical functions in autonomous vehicles or drones.
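The protection bits can be pictured as a small set of flags checked on every access. The sketch below is a conceptual model only: `Perm`, `ProtectionFault`, and `check_access` are hypothetical names, and real hardware encodes these rights in architecture-specific bits of each page table entry.

```python
from enum import Flag


class Perm(Flag):
    """Access rights attached to a page (illustrative encoding)."""
    READ = 1
    WRITE = 2
    EXEC = 4


class ProtectionFault(Exception):
    """Raised when an access is not permitted by the page's protection bits."""


def check_access(page_perms: Perm, requested: Perm) -> None:
    """Allow the access only if every requested right is granted on the page."""
    if (page_perms & requested) != requested:
        raise ProtectionFault(f"requested {requested}, page allows {page_perms}")


code_page = Perm.READ | Perm.EXEC    # typical for program text: no writes
data_page = Perm.READ | Perm.WRITE   # typical for data: no execution

check_access(code_page, Perm.EXEC)   # permitted
check_access(data_page, Perm.WRITE)  # permitted
```

An attempt to write to `code_page` or execute from `data_page` raises `ProtectionFault` in this model, mirroring the fault the hardware would deliver to the operating system.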

Impact on AI, Autonomous Systems, and Big Data

The demanding nature of Artificial Intelligence (AI), autonomous systems, and big data processing makes paging an indispensable technological enabler.

- AI and Machine Learning: Training large AI models or processing vast datasets for inference often requires memory footprints that far exceed physical RAM. Paging allows these applications to effectively use virtual memory, seamlessly moving data between RAM and swap space as needed, without crashing due to memory exhaustion. This is vital for tasks like real-time object recognition in drone camera feeds or complex path planning algorithms.

- Autonomous Flight Systems: Drones capable of autonomous flight incorporate numerous sophisticated processes running concurrently: real-time sensor data fusion (Lidar, camera, GPS), navigation algorithms, obstacle avoidance systems, and communication protocols. Each of these components can be memory-intensive. Paging lets them run concurrently in isolated address spaces. Because a page fault that goes to storage can take far longer than a control loop can tolerate, real-time systems usually keep time-critical code and data resident in RAM (for example, by locking pages in memory) so that paging does not compromise the timing needed for safe and effective autonomous operation.

- Mapping and Remote Sensing: Applications for generating detailed 3D maps or analyzing large volumes of remote sensing data (e.g., from drone-mounted multispectral cameras) deal with enormous datasets. Paging enables these applications to load and process only the necessary portions of these datasets in RAM, facilitating tasks like image stitching, terrain modeling, and environmental monitoring, which would otherwise be impractical on systems with limited physical memory. For workloads with real-time constraints, avoiding excessive swapping is paramount.

Optimizing Paging: Best Practices and Considerations

While paging is a powerful tool, its effectiveness depends on proper system configuration and thoughtful software design. Optimizing its use can significantly impact the performance and stability of any system.

The Importance of Sufficient RAM

Despite the capabilities of paging and virtual memory, physical RAM remains king for performance. Paging allows a system to run with less physical RAM than its programs collectively request, but it does so at the cost of speed. Accessing data from RAM is orders of magnitude faster than retrieving it from secondary storage (even fast SSDs). Therefore, providing a system with ample physical RAM reduces the frequency of page faults and the need for swapping, leading to a much more responsive and efficient computing experience. For high-performance applications like AI model training or real-time autonomous control, maximizing physical RAM is a primary optimization strategy.

Configuring Swap Space

The size and location of swap space can have a notable impact on system performance. A traditional heuristic is to set swap space to 1.5 to 2 times the amount of physical RAM, but modern systems with large amounts of RAM often need proportionally less. The goal is to provide enough swap space to absorb peak memory demands. Swap space does not in itself prevent “thrashing,” a state where the system spends almost all its time swapping pages in and out rather than doing useful work; thrashing occurs when the combined working sets of the active processes exceed physical RAM, and it is addressed by adding RAM or reducing the load. On systems using fast NVMe SSDs, swap performance is vastly improved compared to traditional hard drives, though it still falls far short of RAM speeds. Careful configuration ensures that the system has a safety net for memory overcommitment without becoming a bottleneck.

Software Design for Memory Efficiency

Developers also play a crucial role in optimizing paging by writing memory-efficient code. This involves understanding memory access patterns, reducing unnecessary memory allocations, and structuring data to improve cache locality. By designing applications that minimize their working set (the set of pages actively used by a process), developers can reduce the likelihood of page faults and ensure that their applications perform well even under memory pressure. Techniques like memory pooling, efficient data structures, and avoiding redundant data copies contribute significantly to a lower memory footprint and better overall system performance in a paged environment.
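The influence of access patterns on the working set can be shown with a small calculation. The sketch assumes a 1024 x 1024 array of 8-byte elements stored in row-major order and 4KB pages; these sizes and the helper name `pages_touched` are assumptions made for illustration.

```python
from typing import Iterable, Tuple

PAGE_SIZE = 4096     # assumed 4KB pages
ELEMENT_SIZE = 8     # assumed 8-byte elements (e.g. 64-bit floats)
N_COLS = 1024        # assumed row length of a row-major 2D array


def pages_touched(indices: Iterable[Tuple[int, int]], n_cols: int = N_COLS) -> int:
    """Count distinct pages touched when visiting (row, col) elements."""
    pages = set()
    for row, col in indices:
        byte_offset = (row * n_cols + col) * ELEMENT_SIZE  # row-major layout
        pages.add(byte_offset // PAGE_SIZE)
    return len(pages)


# The first 1024 accesses of two traversal orders over the same array.
row_major = [(0, col) for col in range(1024)]     # walk along one row
column_major = [(row, 0) for row in range(1024)]  # walk down one column

row_pages = pages_touched(row_major)
column_pages = pages_touched(column_major)
```

With these assumptions the row-wise walk touches 2 pages while the column-wise walk touches 1024 for the same number of accesses, so the column-wise loop has a far larger working set and is much more likely to page under memory pressure.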

Conclusion

Paging, though often unseen and unappreciated by the end-user, is an indispensable and foundational component of modern computing. It is the silent architect that enables our operating systems to manage memory effectively, providing the illusion of vast memory resources, ensuring process isolation, and facilitating robust multitasking. From the complex AI algorithms driving autonomous drones to the intricate data processing in remote sensing and mapping applications, paging underpins the very possibility of these innovations. As computational demands continue to grow with the advent of even more sophisticated technologies, the principles of paging will remain critical, continuously evolving to ensure that our digital systems are not just powerful, but also stable, secure, and performant.