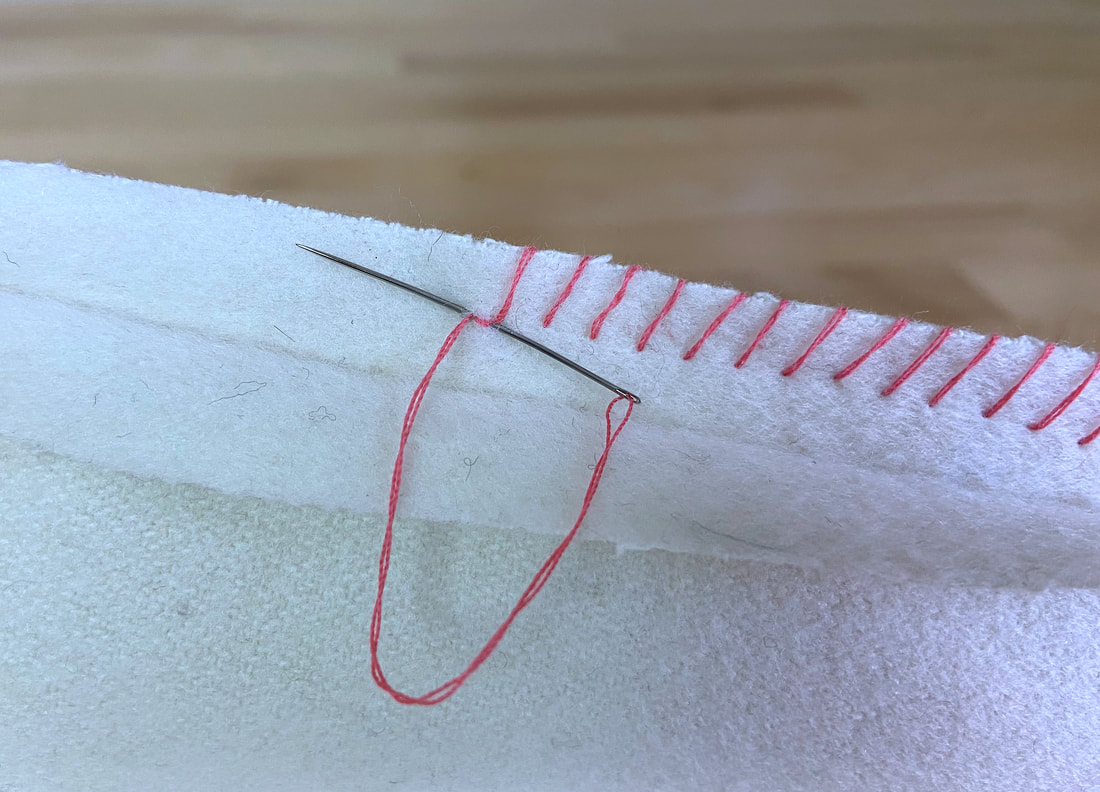

In the rapidly evolving landscape of drone technology, particularly in spatial data acquisition for mapping and remote sensing, “stitching” data fragments (photographic imagery, LiDAR point clouds, or multispectral scans) into a coherent, comprehensive model is foundational. As demands for precision, volumetric accuracy, and semantic integrity intensify, however, simple concatenation is no longer enough. This brings us to the conceptual framework of “overcasting stitch”: an algorithmic paradigm that extends traditional data fusion by securing the boundaries between fragments, mitigating edge artifacts, and keeping integration consistent across large datasets. It is not a literal sewing technique but a metaphor for a suite of computational methods that give drone-derived spatial models a durable finish at their seams, much as an overcasting stitch keeps a raw fabric edge from fraying.

The Evolution of Spatial Data Integration: From Basic Stitching to Overcasting

Early drone-based mapping relied on rudimentary photogrammetry, where overlapping images were aligned and blended to create orthomosaics or 3D models. This process, often referred to as “image stitching,” aimed to create a seamless visual representation. While effective for many applications, these methods frequently grappled with several inherent challenges: radiometric inconsistencies between images, geometric distortions at overlap zones, parallax errors, and a general lack of volumetric integrity, especially in complex environments. These imperfections, analogous to a loosely sewn seam, could lead to visual artifacts, measurement inaccuracies, and reduced confidence in the derived data.

As drone capabilities advanced to include GNSS receivers with RTK/PPK correction, high-resolution cameras, and multi-sensor payloads (e.g., LiDAR alongside RGB), the volume and complexity of the data grew dramatically. This called for more robust integration techniques, and the focus shifted toward improved bundle adjustment, more robust feature matching, and global optimization strategies that minimize geometric discrepancies. Even with these advances, however, residual errors often persisted at the seams where distinct datasets converged.

The “overcasting stitch” philosophy grows out of the need to address these residual imperfections at a finer granularity. It shifts the emphasis from merely joining data to actively reinforcing and refining the integration points. Instead of simply blending pixels or merging point clouds, overcasting stitch methodologies analyze, correct, and optimize the transitional zones, so that the final output is not only visually seamless but also geometrically, volumetrically, and semantically consistent. This matters most in high-stakes applications such as digital twin creation, volumetric calculations in construction, detailed environmental monitoring, and autonomous navigation planning, where minor discrepancies can have significant consequences.

Core Principles of the “Overcasting Stitch” Paradigm

The effectiveness of an overcasting stitch in spatial data processing hinges on several interconnected principles that go beyond conventional stitching algorithms. Together they aim for holistic data integrity, addressing the visual, geometric, and semantic aspects of data fusion.

Multi-Modal Data Harmonization

One of the defining features of an overcasting stitch approach is its ability to harmonize diverse data types. Drones frequently carry multiple sensors: RGB cameras for visual context, multispectral cameras for vegetation health, thermal cameras for heat signatures, and LiDAR for precise 3D geometry. Traditional stitching often processes these streams independently or merges them only in post-processing, which can leave misalignments or inconsistencies. An overcasting stitch methodology involves:

- Intrinsic and Extrinsic Calibration Refinement: Beyond initial sensor calibrations, continuous, data-driven recalibration loops identify and correct subtle sensor biases or drift during flight, keeping all sensor outputs tightly synchronized in space and time.

- Feature-Rich Cross-Sensor Matching: Advanced algorithms identify common features across different sensor modalities (e.g., correlating object edges from RGB with intensity changes in LiDAR or with thermal gradients). These shared features act as robust anchor points for multi-modal alignment, minimizing inter-sensor parallax and misregistration and supporting radiometric and photometric consistency.

- Adaptive Weighting for Data Fusion: Instead of uniform blending, an overcasting stitch system intelligently assigns weights to different data sources based on their local quality, confidence metrics, and relevance to specific features. For instance, LiDAR data might be prioritized for vertical accuracy, while RGB data drives textural detail, with the weighting dynamically adjusted at boundaries.
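One simple, well-established way to weight sources by confidence is inverse-variance weighting: each estimate of the same quantity is weighted by 1/σ², where σ is its standard deviation. The Python sketch below fuses one grid cell's elevation from several sensors under that rule. It assumes each sensor already supplies a per-cell value and σ; the function name, the σ values, and the readings in the example are hypothetical, and production systems may derive confidence very differently.

```python
import math
from typing import Iterable, Optional, Tuple


def fuse_inverse_variance(
    estimates: Iterable[Tuple[Optional[float], float]],
) -> Tuple[float, float]:
    """Fuse one grid cell's estimates of the same quantity from several sensors.

    `estimates` holds (value, sigma) pairs, where sigma is the sensor's
    standard deviation for that cell. A value of None means the sensor has
    no sample there, so it is skipped rather than treated as zero.
    Returns (fused_value, fused_sigma).
    """
    weighted_sum = 0.0
    weight_total = 0.0
    for value, sigma in estimates:
        if value is None:
            continue
        if sigma <= 0.0:
            raise ValueError("sigma must be positive")
        weight = 1.0 / (sigma * sigma)  # inverse-variance weight
        weighted_sum += weight * value
        weight_total += weight
    if weight_total == 0.0:
        raise ValueError("no valid estimates for this cell")
    # The fused standard deviation shrinks as more confident sources are added.
    return weighted_sum / weight_total, 1.0 / math.sqrt(weight_total)


# Hypothetical cell: LiDAR elevation (sigma 5 cm) vs. photogrammetric elevation (sigma 15 cm).
z, z_sigma = fuse_inverse_variance([(10.0, 0.05), (10.3, 0.15)])
print(f"{z:.3f} +/- {z_sigma:.3f}")
```

With σ = 0.05 for LiDAR and 0.15 for photogrammetry, the LiDAR variance is nine times smaller, so it receives nine times the weight and the fused elevation is 10.03 rather than the plain average of 10.15. Near a boundary where one sensor has no coverage, its `None` entry simply drops out of the weighting.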

Volumetric and Semantic Consistency at Boundaries

The true power of an overcasting stitch is most evident in how it handles the critical boundary regions where different datasets meet. Rather than just smoothing visual differences, it delves into the underlying geometric and semantic structure.

Geometric Overcasting

Geometric overcasting addresses the 3D accuracy and consistency of models. This includes:

- Advanced Mesh/Point Cloud Refinement: Instead of simple merging, the overlapping sections of 3D meshes or point clouds undergo iterative refinement processes. This might involve deep learning models that learn to identify and correct minor distortions, subtle deformations, or stair-stepping effects often found at model boundaries.

- Constraint-Driven Optimization: The system applies geometric constraints derived from ground control points (GCPs), independent verification points, or known structural properties to actively pull and align adjacent data segments, ensuring that edges and surfaces maintain their true spatial relationships. This is crucial for applications requiring highly accurate measurements.

- Volumetric Hole Filling and Smoothing: When stitching introduces small gaps or irregular surfaces, advanced algorithms can intelligently interpolate or ‘fill’ these voids based on surrounding data, creating a watertight and volumetrically sound model. This is akin to carefully sewing a patch onto fabric, ensuring the repair is indistinguishable from the original.
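Constraint-driven alignment can be illustrated in its simplest form: fitting a rigid transform that pulls model coordinates onto surveyed GCP coordinates in the least-squares sense, then checking the residual at independent points. The Python sketch below assumes the model is already levelled, so only a rotation about the vertical axis and a 3D shift remain; that reduces the fit to the closed-form 2D Procrustes (Kabsch) solution. Full photogrammetric pipelines typically solve for a 3D similarity transform or feed GCPs directly into bundle adjustment instead. All function names are hypothetical.

```python
import math
from typing import Sequence, Tuple

Point3 = Tuple[float, float, float]


def fit_yaw_and_shift(
    model_pts: Sequence[Point3], gcp_pts: Sequence[Point3]
) -> Tuple[float, Point3]:
    """Least-squares rotation about Z plus a 3D shift mapping model -> GCP coords.

    Assumes the model is already levelled, so tilt is not estimated.
    Returns (yaw_radians, (tx, ty, tz)).
    """
    n = len(model_pts)
    if n < 2 or n != len(gcp_pts):
        raise ValueError("need at least two matched model/GCP pairs")
    mc = [sum(p[k] for p in model_pts) / n for k in range(3)]  # model centroid
    gc = [sum(q[k] for q in gcp_pts) / n for k in range(3)]    # GCP centroid
    # Closed-form 2D Procrustes: maximise sum(q . R p) over centred XY coordinates.
    s_dot = s_cross = 0.0
    for p, q in zip(model_pts, gcp_pts):
        px, py = p[0] - mc[0], p[1] - mc[1]
        qx, qy = q[0] - gc[0], q[1] - gc[1]
        s_dot += px * qx + py * qy
        s_cross += px * qy - py * qx
    yaw = math.atan2(s_cross, s_dot)
    c, s = math.cos(yaw), math.sin(yaw)
    # The shift carries the rotated model centroid onto the GCP centroid.
    t = (gc[0] - (c * mc[0] - s * mc[1]),
         gc[1] - (s * mc[0] + c * mc[1]),
         gc[2] - mc[2])
    return yaw, t


def apply_yaw_and_shift(yaw: float, t: Point3, p: Point3) -> Point3:
    """Rotate p about the Z axis by `yaw`, then translate by t."""
    c, s = math.cos(yaw), math.sin(yaw)
    return (c * p[0] - s * p[1] + t[0], s * p[0] + c * p[1] + t[1], p[2] + t[2])


def rmse(yaw: float, t: Point3, model_pts: Sequence[Point3],
         check_pts: Sequence[Point3]) -> float:
    """3D root-mean-square error at check points after alignment."""
    sq = [sum((a - b) ** 2 for a, b in zip(apply_yaw_and_shift(yaw, t, p), q))
          for p, q in zip(model_pts, check_pts)]
    return math.sqrt(sum(sq) / len(sq))
```

In practice the transform would be fitted on the GCPs and `rmse` evaluated on separate check points, so that the reported accuracy is not computed on the same points the fit was tuned to.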

Semantic Overcasting

Semantic consistency ensures that features and objects are correctly classified and consistently represented across dataset boundaries.

- Contextual Feature Recognition: Utilizing AI and machine learning, the system recognizes and classifies objects (e.g., buildings, trees, vehicles, roads) within the overlapping regions. It then ensures that these classifications remain consistent even as the data transitions from one acquisition pass or sensor to another.

- Boundary-Aware Object Segmentation: Instead of allowing object segments to be arbitrarily cut by stitch lines, semantic overcasting intelligently adjusts segmentation boundaries to align with real-world object edges, preventing fragmented or inconsistent object representations. This is vital for downstream analytics like asset management or change detection.

- Temporal Coherence in Change Detection: For repeat missions, the overcasting stitch approach aligns historical data precisely with new acquisitions, enabling change detection with far fewer false positives caused by stitching inaccuracies.
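A common way to suppress false positives in elevation change detection is a DEM of difference (DoD) with a level-of-detection (LoD) threshold: differences smaller than the combined uncertainty of the two surveys are treated as noise. The Python sketch below assumes both DEMs are already co-registered on the same grid and that each survey's vertical error can be summarized by a single σ; the function name and the example values are hypothetical. A residual vertical step along a stitch line that exceeds the threshold would still show up as apparent change, which is why seam consistency matters for this kind of analysis.

```python
import math
from typing import List, Sequence, Tuple

Grid = Sequence[Sequence[float]]


def significant_change(
    dem_old: Grid, dem_new: Grid, sigma_old: float, sigma_new: float, t: float = 1.96
) -> Tuple[List[List[float]], float]:
    """DEM of difference with a level-of-detection threshold.

    Both DEMs must already be co-registered on the same grid. Elevation
    changes no larger than LoD = t * sqrt(sigma_old^2 + sigma_new^2) are set
    to 0.0 as indistinguishable from noise; t = 1.96 corresponds to roughly
    a 95% level for normally distributed errors.
    """
    lod = t * math.sqrt(sigma_old ** 2 + sigma_new ** 2)
    changes = []
    for row_old, row_new in zip(dem_old, dem_new):
        changes.append([(z1 - z0) if abs(z1 - z0) > lod else 0.0
                        for z0, z1 in zip(row_old, row_new)])
    return changes, lod


# Hypothetical 2 x 2 example with 5 cm vertical uncertainty per survey (LoD ~ 0.14 m).
dod, lod = significant_change([[10.0, 10.0], [10.0, 10.0]],
                              [[10.1, 10.5], [9.8, 10.0]], 0.05, 0.05)
```

Here the 0.1 m difference falls below the threshold and is reported as no change, while the +0.5 m and -0.2 m differences are kept.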

Algorithmic Foundations: Driving the Overcasting Revolution

The realization of the “overcasting stitch” paradigm is heavily dependent on breakthroughs in computational algorithms, particularly in the realm of artificial intelligence and machine learning.

Deep Learning for Feature Extraction and Alignment

Convolutional Neural Networks (CNNs) and transformer models are increasingly employed to extract rich, invariant features from raw sensor data. Unlike traditional hand-crafted features, these deep learning models can learn complex patterns and contextual information, leading to more robust and accurate matches across different lighting conditions, perspectives, and sensor types. This significantly reduces initial alignment errors, laying a solid groundwork for the subsequent overcasting process.
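Learned descriptors are frequently still paired with classical match filtering. A typical combination is nearest-neighbour search with Lowe's ratio test (reject a match if the second-best candidate is nearly as close) plus a mutual-nearest-neighbour check. The Python sketch below applies both to descriptor vectors assumed to have been computed already, by a CNN or otherwise; the 0.8 ratio, the function names, and the toy 2D descriptors are illustrative assumptions. Real systems use much higher-dimensional descriptors and approximate nearest-neighbour indexes rather than this brute-force loop.

```python
import math
from typing import List, Sequence, Tuple

Descriptor = Sequence[float]


def _two_nearest(query: Descriptor, pool: Sequence[Descriptor]) -> Tuple[int, float, float]:
    """Brute-force search: (index of nearest, nearest distance, second-nearest distance)."""
    best_i, best_d, second_d = -1, math.inf, math.inf
    for i, cand in enumerate(pool):
        d = math.dist(query, cand)  # Euclidean distance (Python 3.8+)
        if d < best_d:
            best_i, best_d, second_d = i, d, best_d
        elif d < second_d:
            second_d = d
    return best_i, best_d, second_d


def match_descriptors(
    desc_a: Sequence[Descriptor], desc_b: Sequence[Descriptor], ratio: float = 0.8
) -> List[Tuple[int, int]]:
    """Ratio-test plus mutual-nearest-neighbour matching between two images."""
    matches = []
    for i, q in enumerate(desc_a):
        j, d1, d2 = _two_nearest(q, desc_b)
        # Ratio test: reject if the runner-up is almost as close (ambiguous match).
        if j < 0 or d1 >= ratio * d2:
            continue
        # Mutual check: i must also be the nearest neighbour of desc_b[j].
        back_i, _, _ = _two_nearest(desc_b[j], desc_a)
        if back_i == i:
            matches.append((i, j))
    return matches
```

For example, `match_descriptors([(0.0, 0.0), (10.0, 0.0), (5.0, 5.0)], [(0.1, 0.0), (10.0, 0.2), (4.5, 5.0), (5.5, 5.0)])` keeps the two unambiguous pairs `(0, 0)` and `(1, 1)` and rejects the third query, whose two closest candidates are equally distant.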

Generative Adversarial Networks (GANs) for Seamless Blending

GANs hold considerable promise for the visual aspect of overcasting. A generator network is trained to produce seamless transitions at data boundaries while a discriminator network learns to detect any remaining artifacts; the adversarial pressure between them can yield blends that are very difficult for the human eye to detect. This goes beyond simple feathering or color correction by synthesizing missing information or adjusting radiometric properties to achieve visual continuity.
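For contrast, the “simple feathering” that GAN-based blending aims to improve on is a distance-weighted cross-fade across the overlap. The Python sketch below is that baseline, not a GAN: it joins two co-registered scanlines whose ends cover the same ground, using a linear weight ramp. Reducing the problem to one dimension and the function name are simplifications for illustration.

```python
from typing import List, Sequence


def feather_blend(left: Sequence[float], right: Sequence[float], overlap: int) -> List[float]:
    """Join two scanlines whose last/first `overlap` samples cover the same ground.

    Inside the overlap the weight on `left` falls linearly toward 0, so a
    brightness step between the two images becomes a gradual ramp.
    """
    if overlap < 1 or overlap > min(len(left), len(right)):
        raise ValueError("overlap must be between 1 and the shorter scanline length")
    head = list(left[: len(left) - overlap])
    tail = list(right[overlap:])
    blended = []
    for k in range(overlap):
        w = 1.0 - (k + 1) / (overlap + 1)  # weight on `left`, strictly between 0 and 1
        a = left[len(left) - overlap + k]
        b = right[k]
        blended.append(w * a + (1.0 - w) * b)
    return head + blended + tail
```

Joining `[100, 100, 100, 100]` and `[120, 120, 120, 120]` with an overlap of 3 yields `[100, 105.0, 110.0, 115.0, 120]`: the brightness step becomes a ramp. Feathering cannot correct misregistration, though; if the two inputs are offset, the cross-fade produces ghosted double edges.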

Graph-Based Optimization and Global Consistency

Many overcasting stitch methodologies rely on graph-based optimization. Each data fragment or sensor reading is represented as a node in a graph, and edges encode the measured relationships and relative transformations between nodes. The resulting global problem, typically solved with sparse bundle adjustment or similar nonlinear least-squares methods, adjusts all nodes simultaneously to minimize the total error across the entire dataset. This “global stitch” approach keeps local adjustments from creating inconsistencies elsewhere in the model.
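The graph formulation can be illustrated with the smallest possible case: nodes that are 1D positions and edges that are measured offsets between them, such as a chain of relative measurements plus one loop-closure measurement. In 1D the problem is linear, so weighted least squares reduces to solving the normal equations once; real pose graphs have 6-DoF nodes and need iterative nonlinear solvers such as Gauss-Newton or Levenberg-Marquardt. The function names and the edge format below are hypothetical.

```python
from typing import List, Sequence, Tuple

# (i, j, measured offset x_j - x_i, weight); the weight plays the role of 1 / variance.
Edge = Tuple[int, int, float, float]


def _solve(matrix: List[List[float]], rhs: List[float]) -> List[float]:
    """Solve a small dense linear system by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[r]] for r, row in enumerate(matrix)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[piv][col]) < 1e-12:
            raise ValueError("singular system: some node is not connected to node 0")
        aug[col], aug[piv] = aug[piv], aug[col]
        for r in range(col + 1, n):
            f = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= f * aug[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (aug[r][n] - sum(aug[r][c] * x[c] for c in range(r + 1, n))) / aug[r][r]
    return x


def optimize_pose_graph_1d(n_nodes: int, edges: Sequence[Edge]) -> List[float]:
    """Weighted least-squares node positions; node 0 is fixed at 0 to remove the gauge freedom."""
    n = n_nodes - 1  # unknowns are x_1 .. x_{n_nodes-1}
    normal = [[0.0] * n for _ in range(n)]  # accumulates J^T W J
    rhs = [0.0] * n                          # accumulates J^T W z
    for i, j, meas, w in edges:
        # Residual (x_j - x_i) - meas has derivative +1 w.r.t. x_j and -1 w.r.t. x_i.
        jac = {}
        if j != 0:
            jac[j - 1] = jac.get(j - 1, 0.0) + 1.0
        if i != 0:
            jac[i - 1] = jac.get(i - 1, 0.0) - 1.0
        for a, ja in jac.items():
            rhs[a] += w * ja * meas
            for b, jb in jac.items():
                normal[a][b] += w * ja * jb
    return [0.0] + _solve(normal, rhs)
```

Calling `optimize_pose_graph_1d(4, [(0, 1, 10.0, 1.0), (1, 2, 10.0, 1.0), (2, 3, 10.0, 1.0), (0, 3, 29.0, 1.0)])` spreads the 1-unit disagreement between the chained offsets (30) and the direct measurement (29) evenly over all four edges, giving positions 0, 9.75, 19.5, 29.25. No single seam absorbs the whole error, which is the “global stitch” property in miniature.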

Real-time Edge Processing and Adaptive Refinement

For applications requiring rapid data delivery, real-time or near real-time overcasting is becoming essential. This involves adaptive algorithms that can prioritize processing critical areas, leverage parallel computing architectures, and continually refine the model as new data streams in. Edge computing on the drone itself or in localized ground stations can perform preliminary overcasting steps, reducing the computational load for final processing.
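One plausible way to prioritize critical areas on constrained hardware is a greedy scheduler: tiles are scored (for instance by how much seam residual they contain), and the highest-scoring ones are refined first until an onboard time budget runs out, with the rest deferred to ground processing. The scoring, cost estimates, tile names, and function name below are hypothetical.

```python
import heapq
from typing import Iterable, List, Tuple


def schedule_tiles(
    tiles: Iterable[Tuple[str, float, float]], budget_s: float
) -> Tuple[List[str], List[str]]:
    """Greedy onboard scheduling of refinement work.

    tiles: (tile_id, priority, estimated_cost_seconds). Tiles are considered in
    descending priority; each one that still fits in the remaining time budget
    is processed onboard, and the rest are deferred to ground processing.
    """
    heap = [(-priority, tile_id, cost) for tile_id, priority, cost in tiles]
    heapq.heapify(heap)  # min-heap on negated priority = highest priority first
    onboard, deferred = [], []
    remaining = budget_s
    while heap:
        _, tile_id, cost = heapq.heappop(heap)
        if cost <= remaining:
            onboard.append(tile_id)
            remaining -= cost
        else:
            deferred.append(tile_id)
    return onboard, deferred


# Hypothetical tiles: seam tiles scored higher than interior tiles, 7 s budget.
onboard, deferred = schedule_tiles(
    [("seam_A", 0.9, 4.0), ("interior_B", 0.2, 1.0),
     ("seam_C", 0.7, 5.0), ("interior_D", 0.1, 2.0)], 7.0)
```

A heap is used because it extends naturally to streaming input, where newly arrived tiles can be added with `heapq.heappush` between pops; this sketch takes all tiles up front for simplicity. The greedy rule is cheap to run but is not guaranteed to maximize total priority within the budget.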

Real-World Applications and the Future of Robust Data Integration

The implications of an “overcasting stitch” approach are profound across various drone-based industries:

- Construction and Infrastructure Monitoring: Creating highly accurate digital twins of construction sites or infrastructure for precise volumetric calculations, change detection, and quality control. Every seam in the model must be geometrically accurate to within the project's tolerance for progress tracking and measurement to be reliable.

- Environmental and Agricultural Monitoring: Generating robust orthomosaics and 3D models of vast agricultural fields or sensitive ecosystems for detailed analysis of crop health, deforestation, or water management. Consistent data at boundaries ensures accurate area calculations and trend analysis.

- Urban Planning and Smart Cities: Developing highly detailed 3D city models for urban planning, emergency response simulations, and autonomous vehicle navigation. The integrity of building facades and street layouts across model boundaries is paramount.

- Mining and Quarrying: Accurate stockpile volume measurement and pit analysis depend on flawlessly integrated 3D models, where inconsistencies at stitch lines could lead to significant financial errors.

- Security and Surveillance: Creating seamless and highly reliable composite views from multiple drone feeds for comprehensive situational awareness, where even minor visual discrepancies could obscure critical details.
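For the mining case, the arithmetic is simple enough to show why seams matter. The volume of a gridded surface model above a base is the sum of per-cell heights times the cell area, so a small vertical offset along a seam is multiplied by every cell on one side of it. The Python sketch below assumes a flat base plane (real surveys often use a triangulated base surface instead); the function name and the grid values are hypothetical.

```python
from typing import Sequence


def stockpile_volume(dem: Sequence[Sequence[float]], base_z: float, cell_size: float) -> float:
    """Volume above a flat base plane from a gridded surface model (DEM).

    Each cell contributes (height above base) * (cell area); cells below the
    base contribute nothing. `cell_size` is the grid spacing in metres.
    """
    cell_area = cell_size * cell_size
    return sum(max(z - base_z, 0.0) * cell_area for row in dem for z in row)


# Hypothetical 100 x 100 grid of 0.5 m cells: a flat-topped pile 3 m above base.
dem = [[103.0] * 100 for _ in range(100)]
# The same surface with a 5 cm vertical step along a seam down the middle.
dem_seam = [[103.0] * 50 + [103.05] * 50 for _ in range(100)]
print(round(stockpile_volume(dem, 100.0, 0.5), 1), round(stockpile_volume(dem_seam, 100.0, 0.5), 1))
```

The clean surface gives 10,000 × 3 × 0.25 = 7,500 m³. With the 5 cm step lifting half the grid, the result grows by 5,000 × 0.05 × 0.25 = 62.5 m³, an error that comes entirely from the stitch line.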

As drone technology continues to miniaturize and integrate more powerful sensors, and as demand for accurate and reliable spatial data grows, the “overcasting stitch” is likely to move from a conceptual ideal to a standard requirement. It represents an advanced form of data fusion, one that ensures drone-derived insights are not just visually appealing but fundamentally sound, precise, and ready for critical decision-making. The future of drone-based mapping and remote sensing is closely tied to algorithms that not only stitch data but truly overcast it, rendering seams invisible and creating an enduring, high-fidelity digital reality.