In the intricate dance of human physiology, the concept of a “normal blood calcium level” is paramount. It represents a finely tuned equilibrium: in healthy adults, total serum calcium is typically kept at roughly 8.5 to 10.5 mg/dL (about 2.1 to 2.6 mmol/L), although exact reference ranges vary slightly between laboratories. Within this narrow range, countless biological processes, from nerve transmission to bone health, function optimally. Deviations from this norm signal distress, prompting the body’s sophisticated homeostatic mechanisms to restore balance. This principle of maintaining a critical “normal level” for systemic health is not exclusive to biology; it resonates profoundly within the realm of advanced technology and innovation. Just as the human body requires balanced calcium, complex AI-driven autonomous systems demand meticulously defined and rigorously maintained “normal operating thresholds” to ensure stability, efficiency, and reliability.

This article will pivot from the literal biological meaning to explore the metaphorical and practical significance of “normal levels” in the context of Tech & Innovation. We will delve into how modern technological systems, especially those venturing into autonomy and intelligence, grapple with defining, monitoring, and maintaining their own versions of “normal” to ensure robust performance and prevent catastrophic failures. Understanding these technological “normal levels” is crucial for developing resilient, self-regulating systems that can adapt to dynamic environments, much like biological organisms adapt to internal and external changes.

The Analogy of ‘Normal Levels’ in Complex Systems

The human body’s intricate regulatory systems, constantly striving for homeostasis, offer a compelling analogy for the challenges faced in advanced technology. Just as blood calcium is a critical biomarker, every complex technological system has its own set of vital parameters that define its “normal” operational state.

Biological Homeostasis vs. Technological Stability

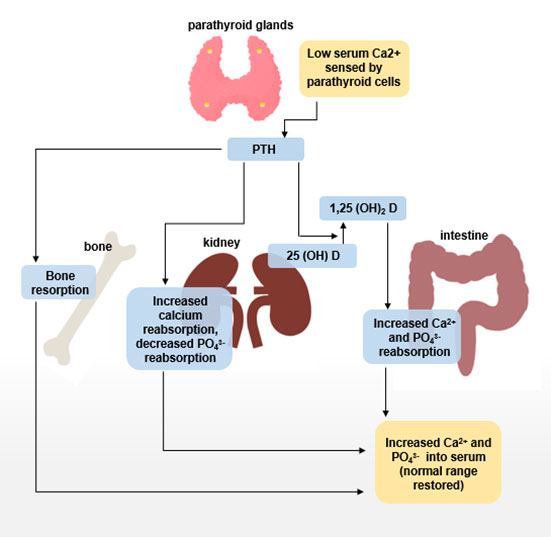

Homeostasis is the property of a system to regulate its internal environment and maintain a stable, relatively constant condition. For humans, this includes maintaining body temperature, pH levels, and, crucially, calcium levels within narrow ranges. These biological systems are inherently adaptive, equipped with feedback loops that detect deviations and initiate corrective actions.

In the world of Tech & Innovation, achieving technological stability mirrors this biological imperative. Autonomous drones, AI-powered predictive maintenance systems, and self-driving vehicles are not merely assemblages of hardware and software; they are complex ecosystems that must operate within defined parameters. Their “normal levels” might include optimal battery voltage, stable data transmission rates, acceptable sensor error margins, processing unit temperature, or the consistency of AI model outputs. A deviation in any of these, much like an abnormal blood calcium level, can indicate a problem that requires immediate attention, impacting performance, safety, or longevity. The aim is to design systems that are not just robust but also “homeostatic,” capable of self-regulation and maintaining optimal function despite internal fluctuations or external disturbances.
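The feedback idea can be illustrated with a minimal proportional control loop. This is a sketch under simple assumptions: the function name `regulate`, the gain, and the temperature figures are hypothetical, and production controllers typically use richer schemes such as full PID control with rate limits. Each step measures the deviation from the setpoint and applies a correction that opposes it, which is the essence of negative feedback.

```python
def regulate(value: float, setpoint: float, gain: float = 0.5, steps: int = 10) -> list[float]:
    """Simulate a proportional negative-feedback loop and return the trajectory."""
    history = [value]
    for _ in range(steps):
        error = setpoint - value  # measured deviation from "normal"
        value += gain * error     # corrective action that opposes the deviation
        history.append(value)
    return history


if __name__ == "__main__":
    # Hypothetical example: a processor pushed to 80 °C, regulated toward 60 °C.
    trace = regulate(80.0, 60.0)
    print([round(t, 2) for t in trace])
```

With a gain of 0.5 the deviation halves on every step. In this discrete model a gain between 1 and 2 overshoots before settling, and a gain above 2 diverges instead of returning to normal.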

Critical Parameters for System Health

Identifying what constitutes “normal” in a technological context requires a deep understanding of the system’s architecture, operational environment, and performance objectives. For a sophisticated AI system designed for real-time decision-making, critical parameters might include:

- Processing Latency: The time taken for data input to generate a decision output. A sudden increase could indicate an overload or a fault.

- Sensor Data Integrity: The consistency and accuracy of incoming data from various sensors (e.g., GPS, LiDAR, cameras). Noise or unexpected patterns could corrupt decision-making.

- Energy Consumption Rate: A sudden spike or drop might signal an inefficient operation or a hardware malfunction.

- Network Bandwidth Utilization: For connected devices, maintaining a “normal” range ensures effective communication and data exchange.

- AI Model Confidence Scores: In machine learning, these scores indicate the certainty of a prediction. A consistent drop could point to data drift or a need for model retraining.

Each of these, when maintained within its “normal level,” contributes to the overall health and reliability of the technological system, just as balanced blood calcium supports human vitality. Establishing these critical parameters is the first step towards building truly resilient and intelligent technologies.
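One way to make these “normal levels” explicit is a table of bounds checked against every telemetry snapshot. The sketch below assumes hypothetical parameter names and placeholder limits; real bounds would come from the system’s specifications and measured behaviour. Sensor data integrity is left out because it is rarely a single scalar.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NormalRange:
    """Inclusive bounds defining the "normal level" of one parameter."""
    low: float
    high: float

    def contains(self, value: float) -> bool:
        return self.low <= value <= self.high


# Hypothetical thresholds for illustration only; real values depend on the system.
NORMAL_RANGES = {
    "processing_latency_ms": NormalRange(0.0, 50.0),
    "energy_draw_w": NormalRange(5.0, 40.0),
    "bandwidth_utilization": NormalRange(0.0, 0.8),
    "model_confidence": NormalRange(0.7, 1.0),
}


def out_of_range(readings: dict[str, float]) -> dict[str, float]:
    """Return the known parameters whose reading falls outside its normal range."""
    return {
        name: value
        for name, value in readings.items()
        if name in NORMAL_RANGES and not NORMAL_RANGES[name].contains(value)
    }


if __name__ == "__main__":
    snapshot = {
        "processing_latency_ms": 72.0,  # possible overload
        "energy_draw_w": 18.5,
        "bandwidth_utilization": 0.45,
        "model_confidence": 0.62,       # possible data drift
    }
    print(out_of_range(snapshot))
```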

Defining Baseline Performance in AI and Autonomous Tech

The proliferation of artificial intelligence and autonomous systems introduces new complexities to defining “normal.” Unlike traditional, deterministic systems, AI models can exhibit emergent behaviors, and their performance can fluctuate based on data inputs, environmental conditions, and continuous learning.

Establishing ‘Normal’ in Machine Learning Outputs

For AI, “normal” isn’t always a fixed numerical range; it’s often a statistical distribution or a behavioral pattern. For instance, in an AI vision system, the “normal” output might be consistent object recognition accuracy under varying light conditions. If the accuracy suddenly drops significantly in familiar scenarios, that’s a deviation from normal. In natural language processing, a “normal” response might involve maintaining a certain coherence, relevance, and sentiment. Anomalies in these characteristics could signal a problem.

Establishing this baseline requires extensive training data, rigorous testing, and continuous monitoring in real-world environments. Machine learning algorithms, particularly those used for anomaly detection, are crucial here. They learn the “normal” patterns and flag anything that deviates significantly, acting as the technological equivalent of a body’s internal alarm system for abnormal calcium levels. This continuous learning aspect means that “normal” can also evolve over time, requiring adaptive baselines.
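A minimal sketch of such a learned, evolving baseline follows. It assumes the monitored output can be summarised as one number per evaluation window (for example, recognition accuracy), models “normal” as a mean and variance, and flags values more than `k` standard deviations away. The class name and the exponentially weighted update rule are illustrative choices, not a prescribed method; practical anomaly detectors are often far more elaborate.

```python
import math
import statistics


class AdaptiveBaseline:
    """Learns a "normal" distribution for a scalar signal and flags outliers."""

    def __init__(self, reference: list[float], alpha: float = 0.05, k: float = 3.0):
        # Initial baseline from reference data, e.g. accuracy measured in validation.
        self.mean = statistics.fmean(reference)
        self.var = statistics.pvariance(reference, mu=self.mean)
        self.alpha = alpha  # adaptation rate: higher means "normal" moves faster
        self.k = k          # standard deviations beyond which a value is abnormal

    def z_score(self, x: float) -> float:
        std = math.sqrt(self.var) or 1e-12  # guard against zero variance
        return (x - self.mean) / std

    def is_anomalous(self, x: float) -> bool:
        return abs(self.z_score(x)) > self.k

    def update(self, x: float) -> None:
        # Exponentially weighted update of mean and variance, so the
        # baseline can drift slowly as the environment legitimately changes.
        diff = x - self.mean
        incr = self.alpha * diff
        self.mean += incr
        self.var = (1 - self.alpha) * (self.var + diff * incr)


if __name__ == "__main__":
    baseline = AdaptiveBaseline([0.91, 0.93, 0.92, 0.90, 0.92, 0.91, 0.93])
    for accuracy in [0.92, 0.915, 0.78]:
        if baseline.is_anomalous(accuracy):
            print("deviation from normal:", accuracy)
        else:
            baseline.update(accuracy)  # only accepted values shape the baseline
```

Updating only on accepted observations keeps a sudden failure from being absorbed into the baseline, while slow, legitimate drift is still tracked.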

Optimal Sensor Data Ranges and Calibration

Autonomous systems heavily rely on sensor data for perceiving their environment. GPS, accelerometers, gyroscopes, LiDAR, radar, and cameras all feed crucial information into the system’s decision-making algorithms. The “normal level” for each sensor includes its operational range, accuracy, precision, and consistency of output.

For example, a GPS module has a normal accuracy range (e.g., within 1-3 meters under clear skies). If it consistently reports positions with much larger errors, that’s abnormal. LiDAR sensors have a normal range for detecting objects and a normal reflectivity reading for various materials. Deviations could indicate sensor obstruction, degradation, or environmental interference. Regular calibration and self-diagnosis routines are essential to ensure these sensors maintain their “normal” operational states, preventing corrupted inputs that could lead to erroneous decisions by the autonomous system. The integrity of sensor data is foundational to the safe operation of any autonomous platform.
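Because individual sensor readings are noisy, a practical check often asks whether errors stay abnormal rather than whether a single reading is. The sketch below assumes the receiver reports its own estimated horizontal error in metres and reuses the 3-metre upper bound from the example above as a placeholder; the class name and window length are hypothetical.

```python
from collections import deque


class PersistentDeviationCheck:
    """Flags a sensor only when its error stays outside the normal bound."""

    def __init__(self, max_error_m: float = 3.0, window: int = 5):
        self.max_error_m = max_error_m
        # Remembers whether each of the last `window` readings was out of bounds.
        self.recent = deque(maxlen=window)

    def observe(self, error_m: float) -> bool:
        """Record one error estimate; return True if the sensor looks abnormal."""
        self.recent.append(error_m > self.max_error_m)
        # Abnormal only if the window is full and every reading in it was too large.
        return len(self.recent) == self.recent.maxlen and all(self.recent)
```

A single multipath spike is ignored, while a run of large errors (for example from an obstructed antenna) is reported.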

Autonomy and Predictive Maintenance Thresholds

One of the most powerful applications of defining “normal levels” in tech is predictive maintenance. By continuously monitoring the operational parameters of components—like drone motor temperatures, battery cycle counts, or propeller vibration levels—AI can establish a “normal” operational signature. Any deviation from this signature, such as unusually high vibration or a rapid increase in temperature, can be identified as a precursor to failure.
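Spotting “a rapid increase in temperature” amounts to estimating a trend. A minimal sketch, assuming evenly spaced samples, fits an ordinary least-squares slope and compares it with a hypothetical maximum normal rate of rise; the function names and limits are illustrative.

```python
def slope_per_sample(values: list[float]) -> float:
    """Ordinary least-squares slope of `values` against their sample index."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two samples")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(values))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den


def rising_too_fast(temps_c: list[float], max_rise_per_sample: float) -> bool:
    """Flag a component whose temperature trend exceeds its normal rate of rise."""
    return slope_per_sample(temps_c) > max_rise_per_sample


if __name__ == "__main__":
    # Hypothetical motor temperatures sampled once per minute.
    print(rising_too_fast([40.0, 40.5, 41.0, 43.0, 46.0, 50.0], max_rise_per_sample=0.5))
```

Using a fitted slope rather than the difference between two readings makes the check less sensitive to a single noisy sample.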

These “normal” thresholds are not just about preventing breakdowns; they’re about optimizing performance and extending the lifespan of components. An autonomous system might decide to reduce its operational intensity if a certain parameter approaches its upper “normal” limit, effectively self-regulating to prevent overload. This proactive approach, driven by a deep understanding of normal operational behavior, minimizes downtime, reduces repair costs, and enhances the overall reliability of complex machinery. It’s the technological equivalent of early diagnosis based on subtle changes in biological markers.
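The self-regulation described here can be sketched as a derating curve. Assuming a hypothetical soft limit (where the normal band ends) and hard limit (where operation must stop), the function below returns a factor by which the system could scale its operational intensity; the linear ramp is one simple choice among many.

```python
def throttle_factor(value: float, soft_limit: float, hard_limit: float) -> float:
    """Scale factor for operational intensity (1.0 = full load, 0.0 = halt the load).

    Below the soft limit the system runs normally; between the soft and hard
    limits intensity is reduced linearly; at or above the hard limit it stops.
    """
    if hard_limit <= soft_limit:
        raise ValueError("hard_limit must exceed soft_limit")
    if value <= soft_limit:
        return 1.0
    if value >= hard_limit:
        return 0.0
    return (hard_limit - value) / (hard_limit - soft_limit)
```

For example, with a soft limit of 80 °C and a hard limit of 100 °C, a motor at 90 °C would run at half intensity.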

Maintaining Equilibrium: Diagnostics and Anomaly Detection

Just as a physician relies on blood tests to diagnose imbalances, advanced technological systems employ sophisticated diagnostic tools and anomaly detection algorithms to monitor their internal states and maintain equilibrium.

Monitoring Key Performance Indicators (KPIs)

Every technological system has its KPIs, which serve as its vital signs. For a drone, KPIs might include flight stability metrics, power consumption, GPS lock quality, and communication link strength. For an AI model, KPIs could be inference speed, accuracy, and resource utilization. Establishing what is “normal” for each KPI involves collecting vast amounts of operational data during optimal conditions and using statistical methods to define acceptable ranges and variance.
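Deriving “acceptable ranges” from data recorded under optimal conditions can be as simple as taking empirical percentiles. The sketch below uses Python’s standard `statistics.quantiles`; the 5th and 95th percentile defaults and the function name are assumptions for illustration, and the right band width depends on how many false alarms operators will tolerate.

```python
import statistics


def normal_band(samples: list[float], lower_pct: int = 5, upper_pct: int = 95) -> tuple[float, float]:
    """Derive a KPI's normal band from data recorded under known-good conditions.

    Empirical percentiles make no assumption that the KPI is normally distributed.
    """
    if not 0 < lower_pct < upper_pct < 100:
        raise ValueError("percentiles must satisfy 0 < lower < upper < 100")
    # 99 cut points: cuts[p - 1] is the p-th percentile.
    cuts = statistics.quantiles(samples, n=100, method="inclusive")
    return cuts[lower_pct - 1], cuts[upper_pct - 1]
```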

Dashboards displaying these KPIs in real time allow human operators to quickly gauge system health. More importantly, AI-driven monitoring systems continuously analyze these KPIs against their established “normal levels.” Any sustained deviation, even if subtle, triggers alerts and diagnostic routines. This continuous vigilance is critical for systems that operate autonomously in complex, dynamic environments, where a minor anomaly can quickly escalate into a critical failure.
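Catching a sustained but subtle deviation is a classic job for a cumulative-sum (CUSUM) detector, which accumulates small excesses over a target until they add up to a clear signal. The sketch below is a textbook two-sided CUSUM; the class name is hypothetical, and the `target`, `slack`, and `threshold` values for any real KPI would have to be tuned.

```python
class Cusum:
    """Two-sided CUSUM detector for small but sustained shifts in a KPI.

    target: the KPI's normal mean; slack: per-sample shift ignored as noise;
    threshold: accumulated evidence required before alerting.
    """

    def __init__(self, target: float, slack: float, threshold: float):
        self.target = target
        self.slack = slack
        self.threshold = threshold
        self.high = 0.0  # accumulated evidence of an upward shift
        self.low = 0.0   # accumulated evidence of a downward shift

    def update(self, x: float) -> bool:
        """Feed one observation; return True when a sustained shift is detected."""
        self.high = max(0.0, self.high + (x - self.target - self.slack))
        self.low = max(0.0, self.low + (self.target - x - self.slack))
        return self.high > self.threshold or self.low > self.threshold
```

A reading of 102.5 against a target of 100 might pass a single-sample check, yet four such readings in a row trip this detector with a slack of 1 and a threshold of 5. A deployed version would normally reset its sums after raising an alert.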

AI for Early Anomaly Detection (The ‘Low Calcium’ Warning)

AI plays a pivotal role in detecting anomalies that might be too subtle for human observation or rule-based systems. Machine learning models, particularly unsupervised learning algorithms, can identify patterns that deviate from learned “normal” behavior without explicit programming. For example, if a drone’s flight path suddenly exhibits a slight, uncommanded yaw that is outside its normal operational variance, an AI anomaly detection system could flag it, even if the yaw is not severe enough to trigger immediate safety protocols.
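As one concrete example of an unsupervised method, the sketch below scores a new observation by its average distance to the nearest samples recorded during normal flight. It assumes each observation is a small feature vector, here hypothetically (commanded yaw rate, measured yaw rate) in degrees per second. k-nearest-neighbour distance is a deliberately simple stand-in for the richer models used in practice, and an alert threshold on the score would still need to be chosen.

```python
import math


def knn_anomaly_score(point: tuple[float, ...], normal: list[tuple[float, ...]], k: int = 3) -> float:
    """Mean Euclidean distance from `point` to its k nearest "normal" samples.

    No labels are needed: the reference set is simply unlabelled observations
    from ordinary operation. A large score means the point is unlike them.
    """
    if not 0 < k <= len(normal):
        raise ValueError("k must be between 1 and len(normal)")
    dists = sorted(math.dist(point, ref) for ref in normal)
    return sum(dists[:k]) / k


if __name__ == "__main__":
    # Hypothetical normal flight: measured yaw rate tracks the commanded rate.
    normal = [(0.0, 0.1), (0.0, -0.1), (5.0, 5.1), (5.0, 4.9), (-5.0, -5.0), (0.0, 0.0)]
    print(knn_anomaly_score((0.0, 0.05), normal))  # small: matches normal behaviour
    print(knn_anomaly_score((0.0, 4.0), normal))   # large: uncommanded yaw
```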

This is akin to the body detecting a slightly low blood calcium level before it manifests as severe symptoms. Early detection allows for proactive intervention, such as a system reboot, a software patch, or scheduled maintenance, before the anomaly compromises the system’s primary mission or safety. The AI acts as a sophisticated digital immune system, constantly scanning for deviations from the norm and predicting potential issues.

Proactive Adjustments and System Self-Correction

The ultimate goal of monitoring “normal levels” is not just detection but also self-correction. Modern autonomous systems are increasingly designed with capabilities for proactive adjustments. If an internal sensor reports readings that are slightly off but still within acceptable bounds, the system might automatically recalibrate that sensor. If an AI model’s confidence scores for a particular type of input start to dip, the system might automatically request more data, switch to a backup model, or even self-retrain with new information to restore its “normal” performance.
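The confidence-based fallback described above can be sketched as a small wrapper. It assumes each model is a callable returning a prediction and a confidence between 0 and 1; the class name, the rolling-mean rule, and the default thresholds are hypothetical, and a real system might request more data or schedule retraining instead of, or as well as, switching models.

```python
from collections import deque
from typing import Callable

# Assumed model interface: input -> (prediction, confidence in [0, 1]).
Model = Callable[[object], tuple[object, float]]


class SelfCorrectingPredictor:
    """Switches to a backup model when the primary's confidence stays low."""

    def __init__(self, primary: Model, backup: Model, min_confidence: float = 0.6, window: int = 20):
        self.primary = primary
        self.backup = backup
        self.min_confidence = min_confidence
        self.confidences = deque(maxlen=window)  # rolling window of recent confidences
        self.using_backup = False

    def predict(self, x: object) -> object:
        if not self.using_backup:
            prediction, confidence = self.primary(x)
            self.confidences.append(confidence)
            full = len(self.confidences) == self.confidences.maxlen
            if full and sum(self.confidences) / len(self.confidences) < self.min_confidence:
                # Sustained low confidence: route future inputs to the backup.
                self.using_backup = True
            return prediction
        prediction, _ = self.backup(x)
        return prediction
```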

These self-correcting mechanisms are vital for resilience, enabling systems to maintain their “normal” operational state without constant human intervention. They represent the technological embodiment of the body’s physiological responses to maintain homeostasis, making the systems more robust, reliable, and capable of operating safely in diverse and challenging conditions.

The Future of Self-Regulating Tech

As technology continues to advance, the concept of “normal levels” will evolve from static thresholds to dynamic, context-aware baselines, paving the way for truly adaptive and resilient intelligent systems.

Towards Adaptive and Resilient Systems

Future autonomous systems will not only define but also learn and adapt their “normal levels” based on evolving operational contexts. For instance, what is “normal” for a drone operating in a calm indoor environment is different from what is “normal” for the same drone battling high winds outdoors. AI will enable systems to dynamically adjust their expectations of “normal” performance parameters based on environmental conditions, mission objectives, and even their own degradation over time. This makes systems more resilient, capable of maintaining functionality even when components are suboptimal, much like an athlete adapts their performance when slightly fatigued.
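Context-aware baselines can start as simply as keeping a separate normal limit per operating context. In the sketch below the context names, the 8 m/s wind cut-off, and the attitude-error limits are all hypothetical placeholders; a learning system would estimate them from data rather than hard-code them.

```python
# Hypothetical per-context limits on a drone's attitude error, in degrees.
NORMAL_ATTITUDE_ERROR_DEG = {
    "indoor_calm": 2.0,
    "outdoor_light_wind": 5.0,
    "outdoor_high_wind": 10.0,
}


def classify_context(indoor: bool, wind_speed_ms: float) -> str:
    """Pick the operating context whose baseline applies (hypothetical cut-off)."""
    if indoor:
        return "indoor_calm"
    return "outdoor_high_wind" if wind_speed_ms >= 8.0 else "outdoor_light_wind"


def attitude_is_normal(error_deg: float, indoor: bool, wind_speed_ms: float) -> bool:
    """Judge the same reading against the baseline for the current context."""
    limit = NORMAL_ATTITUDE_ERROR_DEG[classify_context(indoor, wind_speed_ms)]
    return abs(error_deg) <= limit
```

The same 6-degree error is abnormal indoors but normal in high winds, which is exactly the distinction a fixed threshold cannot make.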

This adaptive “normal” will allow for graceful degradation, where a system can acknowledge that it’s no longer operating at peak “normal” but can still function safely within a modified set of parameters, communicating its reduced capabilities. This is a crucial step towards creating technologies that are not just intelligent but also wise, understanding their own limitations and operating within them.
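Graceful degradation can be sketched as a mapping from a health estimate to the most demanding operating mode still considered safe, plus a message that communicates the reduced capability. The health score, mode names, and thresholds are assumptions for illustration only.

```python
from enum import Enum


class Capability(Enum):
    FULL = "full mission"
    REDUCED = "reduced speed and range"
    SAFE_RETURN = "return to base"
    GROUNDED = "do not operate"


def capability_for(health: float) -> Capability:
    """Map a 0-1 health score to the most demanding mode still considered safe.

    Thresholds are hypothetical placeholders.
    """
    if not 0.0 <= health <= 1.0:
        raise ValueError("health must be in [0, 1]")
    if health >= 0.9:
        return Capability.FULL
    if health >= 0.6:
        return Capability.REDUCED
    if health >= 0.3:
        return Capability.SAFE_RETURN
    return Capability.GROUNDED


def status_report(health: float) -> str:
    """Human-readable message announcing the current operating mode."""
    return f"health={health:.2f}, operating mode: {capability_for(health).value}"
```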

Ethical Considerations in Autonomous ‘Self-Care’

As systems become more autonomous in their self-monitoring and self-correction, new ethical considerations emerge. Who defines the “normal” when AI is learning it on its own? What happens when a system’s “self-care” decisions conflict with human objectives or safety protocols? For example, if an autonomous vehicle detects a critical anomaly, its “normal” response might be to pull over immediately. Is this always the optimal decision if it means blocking traffic or causing its occupants minor inconvenience?

These questions highlight the need for transparency, explainability, and human oversight in the development of self-regulating technologies. While striving for systems that can maintain their own “normal levels” with minimal human intervention, we must ensure that the definition of “normal” is aligned with human values, safety, and societal expectations. The journey to build truly autonomous, self-healing systems is not just an engineering challenge; it’s a profound ethical and philosophical endeavor that mirrors our ongoing quest to understand and maintain the delicate balance of life itself.

In conclusion, the simple question, “What is normal blood calcium level?” serves as a powerful metaphor for the fundamental challenges and innovations in modern technology. From defining critical parameters to implementing AI-driven anomaly detection and self-correction, the pursuit of “normal levels” is at the heart of building robust, reliable, and intelligent systems. As we push the boundaries of AI and autonomy, understanding and maintaining these technological equilibria will be paramount for unlocking the full potential of future innovation.