Much of modern computing depends on foundational technologies that operate behind the scenes, and the Network File System (NFS) is one of them. NFS is a distributed file system protocol that lets a user on a client computer access files over a network much as local storage is accessed. Introduced by Sun Microsystems in 1984, NFS has evolved through several versions and remains a cornerstone of enterprise IT, cloud infrastructure, and various specialized environments.

At its core, NFS addresses a fundamental challenge: how to let multiple machines share and work on files stored on a central server without manual file transfers. It provides a transparent, platform-independent mechanism for remote file access, hiding the network details and presenting remote filesystems as if they were local. This streamlines data management and collaboration, and it lays the groundwork for more resilient, scalable, and cost-effective computing architectures. Understanding NFS is therefore not only about appreciating a legacy protocol; it is about understanding a building block of the distributed computing, cloud services, and data operations in use today.

The Core Principles of NFS

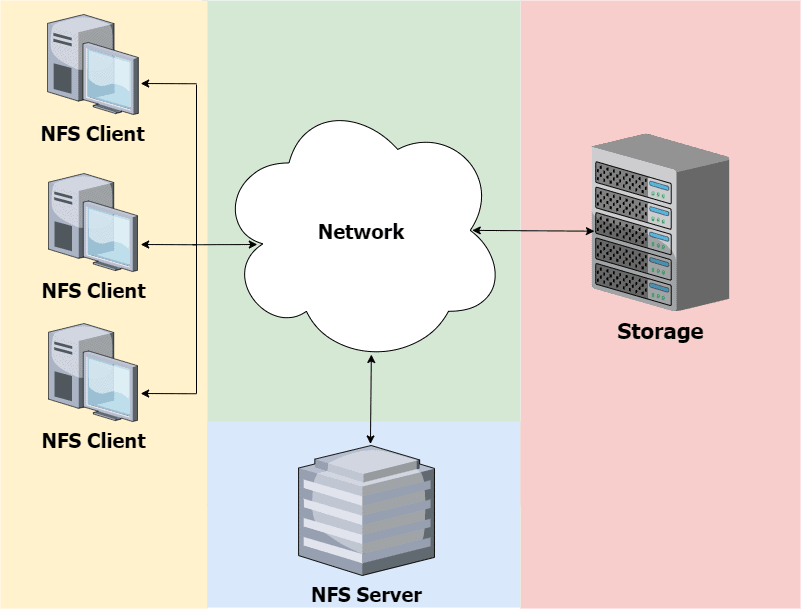

To appreciate the enduring relevance of NFS, it’s essential to delve into its fundamental architectural principles. NFS is built upon a client-server model, designed for efficiency, robustness, and flexibility in a networked environment.

Client-Server Architecture

The operational backbone of NFS is its client-server architecture. In this setup, a machine designated as the NFS server hosts one or more filesystems that it makes available for sharing. These shared directories, known as “exports,” are then accessible to other machines on the network. A machine that wishes to access these filesystems acts as an NFS client. The client sends requests to the server to read, write, create, or delete files and directories located on the exported filesystem.

Crucially, from the client’s perspective, the remote filesystem appears seamlessly integrated into its local directory tree. This transparency is key to NFS’s utility, allowing applications and users to interact with network-stored data using standard file system commands and APIs, without needing to be aware that the files are physically residing on a different machine. This abstraction greatly simplifies application development and user experience, centralizing data management while distributing access.

Protocol and Communication

NFS communication is primarily built upon the Remote Procedure Call (RPC) mechanism. RPC allows a program on one computer to execute a procedure (a function or subroutine) on another computer across a network, without the programmer explicitly coding the details for remote interaction. In the context of NFS, when an NFS client wants to perform an operation like reading a file, it sends an RPC request to the NFS server. The server then executes the requested operation on its local filesystem and sends the result back to the client via another RPC response.
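The request/response pattern described above can be sketched in a few lines of Python. This is a toy, in-memory illustration rather than a real ONC RPC implementation: the program number 100003 and the NFSv3 procedure numbers for GETATTR and READ are the registered values, but the `RpcCall`, `RpcReply`, `FILES`, and `serve` names are hypothetical and exist only for this example.

```python
from dataclasses import dataclass

NFS_PROGRAM = 100003  # ONC RPC program number registered for NFS
PROC_GETATTR = 1      # NFSv3 procedure numbers
PROC_READ = 6


@dataclass
class RpcCall:
    xid: int          # transaction id, echoed in the reply so the client can match it
    program: int
    procedure: int
    args: dict


@dataclass
class RpcReply:
    xid: int
    status: str
    result: dict


# Toy "exported filesystem": file handle -> contents (illustrative only).
FILES = {b"fh-1": b"hello over the wire"}


def handle_getattr(args):
    return {"size": len(FILES[args["fh"]])}


def handle_read(args):
    data = FILES[args["fh"]]
    start = args["offset"]
    return {"data": data[start:start + args["count"]]}


DISPATCH = {PROC_GETATTR: handle_getattr, PROC_READ: handle_read}


def serve(call: RpcCall) -> RpcReply:
    """Look up the requested procedure, run it locally, and wrap the result."""
    handler = DISPATCH.get(call.procedure)
    if call.program != NFS_PROGRAM or handler is None:
        return RpcReply(call.xid, "PROC_UNAVAIL", {})
    return RpcReply(call.xid, "OK", handler(call.args))


reply = serve(RpcCall(xid=7, program=NFS_PROGRAM, procedure=PROC_READ,
                      args={"fh": b"fh-1", "offset": 6, "count": 4}))
print(reply.result["data"])  # b'over'
```

In a real deployment the call and reply would be serialized and sent over the network; here both sides live in one process so the dispatch step is easy to see.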

Early versions of NFS typically used UDP (User Datagram Protocol) for its RPC communications, valuing speed over guaranteed delivery, which required the NFS protocol itself to implement retransmission and timeout mechanisms. While UDP offers lower overhead, it can be less reliable over congested or lossy networks. Later versions of NFS, particularly NFSv3 and especially NFSv4, increasingly adopted TCP (Transmission Control Protocol) as the default transport protocol. TCP provides reliable, ordered, and error-checked data delivery, simplifying error handling for the NFS protocol and making it more suitable for wide-area networks (WANs) and environments where data integrity and consistent performance are paramount.
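To make the retransmission idea concrete, here is a minimal sketch of a client that resends a request until a reply arrives, doubling its timeout after each loss. The transport is a hypothetical stand-in that drops a fixed number of datagrams; real NFS clients implement this in the kernel, and the function names and default values below are assumptions for illustration.

```python
def call_with_retransmit(send_once, request, timeout=0.1, max_tries=5):
    """Resend `request` until a reply arrives, doubling the timeout after each loss.

    Returns (reply, number_of_attempts).
    """
    for attempt in range(1, max_tries + 1):
        reply = send_once(request, timeout)
        if reply is not None:
            return reply, attempt
        timeout *= 2  # back off before the next attempt
    raise TimeoutError(f"no reply to {request!r} after {max_tries} tries")


def make_lossy_transport(drops):
    """Hypothetical transport that silently loses the first `drops` datagrams."""
    state = {"remaining": drops}

    def send_once(request, timeout):
        if state["remaining"] > 0:
            state["remaining"] -= 1
            return None  # simulates a timeout with no reply
        return f"reply to {request}"

    return send_once


reply, attempts = call_with_retransmit(make_lossy_transport(2), "READ")
print(reply, attempts)  # reply to READ 3
```

With TCP, this loop moves out of the NFS layer, because the transport itself retransmits lost segments.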

Stateless vs. Stateful Operations

A significant distinction across NFS versions lies in their approach to state management. NFSv2 and NFSv3 are largely stateless protocols. This means that the server does not maintain information about the client’s current state (e.g., open files, file pointers) between successive requests. Each client request is treated independently, containing all the necessary information for the server to fulfill it. While this design simplifies server recovery from crashes (as it doesn’t need to rebuild client state), it can lead to complexities. For instance, file locking mechanisms often require additional auxiliary protocols (like NLM – Network Lock Manager) to provide stateful locking services.
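A small sketch may help show why statelessness makes crash recovery simple. The `StatelessServer` class and the file handle string are hypothetical; the point is only that each read carries the file handle, offset, and count, so a freshly restarted server can answer it without any memory of earlier requests.

```python
class StatelessServer:
    """Toy server that keeps persistent data but no per-client tables."""

    def __init__(self, storage):
        self.storage = storage  # survives "reboots"; nothing else is remembered

    def read(self, file_handle, offset, count):
        # Everything needed to serve the request is in its arguments.
        data = self.storage[file_handle]
        return data[offset:offset + count]


storage = {"fh-42": b"abcdefghij"}

server = StatelessServer(storage)
first = server.read("fh-42", 0, 4)

server = StatelessServer(storage)  # simulate a crash and restart
second = server.read("fh-42", 4, 4)

print(first, second)  # b'abcd' b'efgh'
```

The client tracks its own position in the file, which is why the second read succeeds even though the new server instance never saw the first one.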

NFSv4 introduced a fundamental shift towards a stateful protocol. In NFSv4, the server maintains state information for clients, such as open files, byte-range locks, and delegations (where the server delegates caching responsibilities to the client). This statefulness simplifies the protocol, eliminating the need for separate locking protocols and improving efficiency, especially over WANs. However, it also means that a server restart requires a recovery mechanism to re-establish client state. NFSv4 handles this with lease-based state management and a grace period after restart during which clients reclaim their opens and locks; callbacks are used separately, to recall delegations. The move to statefulness in NFSv4 was a critical step in addressing performance and security challenges faced by its predecessors.

How NFS Works Under the Hood

Understanding the core principles provides a high-level view, but the true ingenuity of NFS becomes apparent when examining its underlying mechanisms.

Mount Process and Exporting Filesystems

Before a client can access files on an NFS server, a crucial step known as the “mount process” must occur. On the server side, an administrator explicitly designates which directories are available for sharing using a configuration file, typically /etc/exports on Unix-like systems. This process is called “exporting” a filesystem. The export entry specifies the directory path, the client machines or networks allowed to access it, and the permissions (read-only, read-write, user mapping, etc.).
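As an illustration of what an export entry contains, the sketch below parses one line in the common Linux /etc/exports style (a path followed by client specifications with options in parentheses). The parser is deliberately simplified and is an assumption of this example: it ignores quoted paths, line continuations, and default-option syntax, and the sample path and hosts are made up.

```python
import re


def parse_exports_line(line):
    """Parse one simplified exports entry into a path and per-client options.

    Returns None for blank lines and comments.
    """
    line = line.split("#", 1)[0].strip()
    if not line:
        return None
    path, *client_specs = line.split()
    entry = {"path": path, "clients": {}}
    for spec in client_specs:
        match = re.fullmatch(r"([^()]*)(?:\(([^)]*)\))?", spec)
        if match is None:
            raise ValueError(f"unrecognized client spec: {spec!r}")
        host, options = match.group(1), match.group(2)
        entry["clients"][host] = options.split(",") if options else []
    return entry


sample = "/srv/share 192.168.1.0/24(rw,sync,root_squash) backup.example.com(ro)  # team share"
print(parse_exports_line(sample))
```

Here the first client specification grants read-write access to a subnet, while the second grants read-only access to a single host.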

On the client side, a client machine uses the mount command to connect to an exported filesystem. For example, mount server_ip:/path/to/export /local/mount/point. When executed, the client’s operating system contacts the NFS server, verifies access permissions, and then integrates the remote filesystem into its own file hierarchy at the specified local mount point. From that point onward, any application or user interacting with /local/mount/point will transparently be accessing files on the NFS server. This seamless integration is what makes NFS so powerful and user-friendly from an operational perspective.
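For scripted setups, the mount command above can be assembled programmatically. The helper below is a hypothetical sketch that only builds the argument list; it does not run anything. The address 192.0.2.10 is a documentation placeholder, and the `vers` and `hard` options are standard Linux NFS mount options chosen here as examples.

```python
def build_mount_command(server, export, mount_point, options=None):
    """Return the argv list for mounting server:export at mount_point.

    `options` maps option names to values; a value of None means a bare flag.
    """
    argv = ["mount", "-t", "nfs"]
    if options:
        rendered = [name if value is None else f"{name}={value}"
                    for name, value in options.items()]
        argv += ["-o", ",".join(rendered)]
    argv += [f"{server}:{export}", mount_point]
    return argv


command = build_mount_command(
    "192.0.2.10", "/path/to/export", "/local/mount/point",
    options={"vers": "4.2", "hard": None},
)
print(" ".join(command))
```

The resulting list could be passed to `subprocess.run` by a process with sufficient privileges; building it as a list rather than a string avoids shell quoting problems.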

Remote Procedure Calls (RPC)

As mentioned, RPC is the backbone of NFS communication. When a client performs an operation, such as opening a file or listing a directory, its operating system translates this local file system call into a corresponding NFS RPC request. This request is then packaged and sent over the network to the NFS server. The server receives the RPC, executes the necessary action on its local filesystem (e.g., reads blocks from disk, updates metadata), and then packages the result into an RPC response, which is sent back to the client.

Key components in this process include:

- XDR (eXternal Data Representation): A standard data serialization format used by RPC to ensure that data can be exchanged between different types of computer architectures (e.g., a big-endian client communicating with a little-endian server).

- Portmap/rpcbind: Earlier versions of NFS used a service called portmap (or rpcbind) to dynamically register the port numbers of various RPC services (like nfsd, mountd, lockd). Clients would query portmap to find out which port to connect to for a specific NFS service. NFSv4 simplified this by consolidating all operations into a single TCP port (2049), reducing firewall complexities.
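The XDR rules mentioned in the list above are simple enough to sketch by hand: integers are four bytes in big-endian order, and variable-length data is prefixed with its length and zero-padded to a multiple of four bytes. The helper names below are hypothetical, and only three of XDR's types are covered.

```python
import struct


def xdr_uint(value):
    """Encode an unsigned 32-bit integer as 4 big-endian bytes."""
    return struct.pack(">I", value)


def xdr_opaque(data):
    """Encode variable-length bytes: 4-byte length, data, zero padding to 4 bytes."""
    padding = (4 - len(data) % 4) % 4
    return struct.pack(">I", len(data)) + data + b"\x00" * padding


def xdr_string(text):
    """Encode text as variable-length opaque data (UTF-8 is an assumption here)."""
    return xdr_opaque(text.encode("utf-8"))


print(xdr_uint(2049))     # b'\x00\x00\x08\x01'
print(xdr_string("nfs"))  # b'\x00\x00\x00\x03nfs\x00'
```

Because both sides agree on this byte layout, a little-endian server and a big-endian client decode the same values regardless of their native representation.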

Data Locking and Consistency

Maintaining data consistency when multiple clients are accessing and potentially modifying the same files concurrently is a critical challenge for any distributed file system. NFS addresses this through various locking mechanisms.

- NFSv2/v3: These versions rely on an auxiliary protocol, the Network Lock Manager (NLM), to provide advisory byte-range locking. When a client wants to lock a portion of a file, it sends an NLM request to the server. The server tracks these locks and grants or denies requests based on existing locks. NLM is stateful, which means that a server crash can lose lock state; a companion status-monitoring protocol (NSM) notifies clients after a restart so they can reclaim their locks.

- NFSv4: This version integrates locking directly into the core NFS protocol. It uses a lease-based locking mechanism, where clients acquire locks for a specific period (a lease). If a client crashes or becomes unresponsive, its leases eventually expire, allowing other clients to acquire those locks. NFSv4 also introduced delegations, where the server “delegates” control over a file to a client, allowing the client to perform certain operations (like caching) locally without server interaction, which can significantly boost performance. This stateful approach simplifies lock management and improves efficiency.
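The lease idea from the list above can be illustrated with a small lock table. This is a simplified sketch, not the NFSv4 state model: it supports only exclusive byte-range locks, the `LeaseLockTable` class is hypothetical, time is passed in explicitly to keep the example deterministic, and the 90-second lease is just an illustrative value.

```python
class LeaseLockTable:
    """Toy server-side table of exclusive byte-range locks guarded by leases."""

    def __init__(self, lease_seconds=90):
        self.lease_seconds = lease_seconds
        self.locks = []        # (client, start, end) with end exclusive
        self.renewed_at = {}   # client -> time of last lease renewal

    def renew(self, client, now):
        self.renewed_at[client] = now

    def _expire(self, now):
        # Drop locks held by clients whose lease has run out.
        live = {c for c, t in self.renewed_at.items()
                if now - t < self.lease_seconds}
        self.locks = [lock for lock in self.locks if lock[0] in live]

    def try_lock(self, client, start, end, now):
        self._expire(now)
        self.renew(client, now)  # any request from a client renews its lease
        for owner, s, e in self.locks:
            if owner != client and start < e and s < end:
                return False     # overlaps a range locked by another client
        self.locks.append((client, start, end))
        return True


table = LeaseLockTable(lease_seconds=90)
print(table.try_lock("A", 0, 100, now=0))     # True
print(table.try_lock("B", 50, 150, now=10))   # False: A holds an overlapping range
print(table.try_lock("B", 50, 150, now=100))  # True: A's lease expired unrenewed
```

Client A never renews after time 0, so by time 100 its lease has lapsed and its lock is discarded, which is how an unresponsive client stops blocking others.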

Key Benefits and Advantages of NFS

The architectural elegance and robust functionality of NFS translate into several tangible benefits that make it an indispensable technology in many modern IT infrastructures.

Centralized Data Management

One of the most compelling advantages of NFS is its ability to centralize data storage. Instead of having data scattered across numerous client machines, all critical information can reside on a dedicated NFS server or a cluster of servers. This centralization simplifies backup and recovery procedures, enhances data security (as access points are consolidated), and ensures data integrity by preventing data divergence. Administrators can manage storage resources, implement quotas, and perform maintenance from a single point, significantly reducing operational overhead.

Simplified Data Sharing and Collaboration

NFS fosters a highly collaborative environment. Multiple users and applications on different client machines can concurrently access and work on the same set of files. This is invaluable in scenarios like software development, scientific research, media production, and any team-based project where shared access to common resources is essential. Developers can share code repositories, researchers can share datasets, and artists can share project assets without the need for manual file transfers or complex synchronization tools, leading to increased productivity and streamlined workflows.

Scalability and Performance Optimization

NFS deployments can scale in several ways. As storage needs grow, capacity (disks, memory, processing power) can be added to an existing NFS server, or additional NFS servers can be deployed. Load-balancing techniques can distribute client requests across multiple servers to prevent bottlenecks. Performance can be optimized through proper network configuration, high-performance storage on the server side, client-side caching, and protocol tuning (e.g., adjusting read/write block sizes). The evolution from NFSv2/v3 to NFSv4 also brought performance improvements, particularly for small file operations and wide-area networks, by reducing chatty communication and improving caching mechanisms.

Cost-Effectiveness

By centralizing storage and facilitating resource sharing, NFS can lead to substantial cost savings. Organizations can leverage less expensive “diskless” workstations or thin clients for users, relying on the central NFS server for all data storage. This reduces hardware costs, simplifies client-side maintenance, and extends the lifespan of client machines. Furthermore, fewer independent storage silos mean reduced administrative complexity and lower licensing costs for specialized data management software, making NFS a pragmatic choice for optimizing IT budgets.

Challenges and Considerations

While NFS offers numerous benefits, its deployment and management are not without challenges. Addressing these considerations is crucial for a successful and secure implementation.

Security Implications

Security is paramount in any networked environment, and NFS, especially older versions, presents specific challenges.

- Authentication: Traditional NFS (NFSv2/v3) often relies on IP addresses for client authentication, which is vulnerable to IP spoofing. User authentication typically uses UID/GID mapping, which requires consistent user management across client and server machines.

- Encryption: Data transmitted over NFSv2/v3 is generally unencrypted, making it susceptible to eavesdropping on the network.

- NFSv4 Security: NFSv4 significantly improved security by integrating robust authentication mechanisms like Kerberos, which provides strong cryptographic authentication and data integrity/privacy (encryption). This makes NFSv4 far more suitable for environments with stringent security requirements. Proper firewall configuration and network segmentation are also essential to restrict access to NFS ports.
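The UID/GID mapping mentioned in the list above is often combined with "squashing" on the server. The sketch below models the behavior of the `root_squash` and `all_squash` export options found on Linux NFS servers; the function itself is hypothetical, and 65534 is assumed as the anonymous ID because it is a common value for the "nobody" account.

```python
NOBODY = 65534  # assumed anonymous uid/gid; the real value is configurable


def map_credentials(uid, gid, root_squash=True, all_squash=False,
                    anon_uid=NOBODY, anon_gid=NOBODY):
    """Map client-asserted ids to the ids the server will actually use."""
    if all_squash:
        return anon_uid, anon_gid          # every user becomes anonymous
    if root_squash:
        uid = anon_uid if uid == 0 else uid  # remote root loses its privileges
        gid = anon_gid if gid == 0 else gid
    return uid, gid


print(map_credentials(0, 0))        # (65534, 65534)
print(map_credentials(1000, 1000))  # (1000, 1000)
```

Note that ordinary IDs pass through unchanged, which is why the client and server must agree on what each numeric ID means unless stronger authentication such as Kerberos is used.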

Performance Tuning and Network Latency

Achieving optimal performance with NFS requires careful tuning. Network latency is a major factor, as every file operation involves network communication. High latency can severely impact performance, especially for applications performing many small, sequential I/O operations.

- Network Bandwidth: Sufficient network bandwidth is critical. A saturated network will inevitably degrade NFS performance.

- Server Hardware: The NFS server’s hardware (disk I/O speed, CPU, memory) must be capable of handling the aggregate load from all connected clients. Fast storage (SSDs, NVMe) and ample RAM for caching are often necessary.

- Client Caching: Effective client-side caching (e.g., using cachefilesd on Linux or application-level caching) can reduce the number of requests sent over the network, improving perceived performance.

- Mount Options: Various mount options (e.g., rsize and wsize for read/write transfer sizes, hard/soft for error handling, noatime to reduce metadata writes) can significantly influence performance.
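A back-of-the-envelope model shows how latency, bandwidth, and the read transfer size interact. The function below is a rough sketch under strong assumptions: one request in flight at a time, no caching, and no read-ahead. Real clients pipeline requests, so actual times are usually better; the numbers are illustrative, not measurements.

```python
import math


def estimate_read_seconds(file_bytes, rsize, rtt_seconds, bandwidth_bytes_per_s):
    """Crude estimate: one round trip per rsize-sized chunk plus raw transfer time."""
    round_trips = math.ceil(file_bytes / rsize)
    return round_trips * rtt_seconds + file_bytes / bandwidth_bytes_per_s


one_gib = 2**30
gigabit = 125_000_000  # bytes per second on a 1 Gbit/s link

small = estimate_read_seconds(one_gib, 32 * 1024, 0.001, gigabit)
large = estimate_read_seconds(one_gib, 1024 * 1024, 0.001, gigabit)
print(f"rsize=32 KiB: {small:.1f} s, rsize=1 MiB: {large:.1f} s")
```

Under this model, with a 1 ms round trip the 32 KiB transfer size spends most of its time waiting on round trips, while the 1 MiB size is limited mainly by bandwidth.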

Compatibility and Versioning (NFSv3, NFSv4, etc.)

NFS has evolved through several major versions, each introducing new features and improvements.

- NFSv2: The oldest widely used version. It is largely stateless, uses UDP, and limits files to 2 GB because it uses 32-bit offsets.

- NFSv3: A significant improvement over v2, supporting larger file sizes (64-bit offsets), more robust error handling, and capable of using TCP. Still largely stateless.

- NFSv4 (4.0, 4.1, 4.2): A complete redesign, bringing statefulness, integrated locking, improved security (Kerberos), compound operations (reducing network round trips), and better performance over WANs. NFSv4.1 introduced pNFS (parallel NFS) for direct client-to-storage device access, further enhancing scalability and performance in clustered environments. NFSv4.2 added features like server-side cloning and sparse files.
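The effect of compound operations on round trips can be sketched by counting requests for a path lookup. The operation names (LOOKUP, GETATTR, PUTROOTFH, GETFH) are real protocol operations, but the functions are hypothetical, and the example shows what the protocol permits rather than what any particular client actually sends.

```python
def path_components(path):
    return [part for part in path.split("/") if part]


def v3_round_trips(path):
    """NFSv3 style: one LOOKUP request per component, then one GETATTR request."""
    return len(path_components(path)) + 1


def v4_compound_ops(path):
    """NFSv4 style: the same work expressed as operations inside one COMPOUND."""
    lookups = [f"LOOKUP {part}" for part in path_components(path)]
    return ["PUTROOTFH"] + lookups + ["GETFH", "GETATTR"]


path = "/export/projects/report.txt"
print(v3_round_trips(path))        # 4
print(len(v4_compound_ops(path)))  # 6 operations, sent in a single round trip
```

On a high-latency link, collapsing four request/response exchanges into one is where much of the WAN improvement comes from.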

Organizations must carefully choose the appropriate NFS version based on their specific requirements for security, performance, and compatibility with existing infrastructure. While older versions might still be found, NFSv4.x is generally recommended for new deployments due to its advanced features and enhanced security.

NFS in the Modern Tech Landscape

Despite its origins dating back to the 1980s, NFS remains a relevant and widely used technology that underpins many contemporary infrastructure initiatives. Its adaptability and feature set ensure its continued importance.

Supporting Cloud and Virtualization Infrastructures

NFS is a fundamental component in many private and hybrid cloud environments, as well as virtualization platforms. Virtual machines (VMs) often rely on NFS shares to store their disk images, configuration files, and application data. This allows for live migration of VMs between physical hosts without downtime, as the VM’s storage remains accessible from anywhere on the network. Cloud providers utilize NFS for shared file storage services, enabling multiple instances to access a common data pool, simplifying application deployment and scaling. Its ability to provide network-attached storage with good performance makes it ideal for supporting dynamic and elastic cloud workloads.

Facilitating Big Data and High-Performance Computing (HPC)

In the realm of Big Data and High-Performance Computing (HPC), where massive datasets are processed by clusters of machines, efficient data sharing is paramount. While specialized distributed file systems like HDFS exist, NFS still plays a critical role for shared scratch spaces, configuration files, and results data that need to be universally accessible. In HPC clusters, NFS can provide a high-throughput, low-latency shared storage layer for applications that require POSIX-compliant file access across many compute nodes. The advent of pNFS (Parallel NFS) in NFSv4.1 further boosts its capabilities for HPC, allowing clients to access storage devices directly and in parallel, circumventing bottlenecks inherent in single-server architectures.
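To give a feel for parallel access, the sketch below splits a byte-range read into chunks that different data servers could serve at the same time. It assumes plain round-robin striping with a fixed stripe unit, which is a simplification; actual pNFS layouts are handed out by the metadata server and can be more elaborate. The function and server names are hypothetical.

```python
def plan_parallel_read(offset, length, stripe_unit, data_servers):
    """Split [offset, offset + length) into (server, offset, length) chunks."""
    plan = []
    end = offset + length
    while offset < end:
        stripe = offset // stripe_unit
        chunk_end = min(end, (stripe + 1) * stripe_unit)
        server = data_servers[stripe % len(data_servers)]  # round-robin placement
        plan.append((server, offset, chunk_end - offset))
        offset = chunk_end
    return plan


print(plan_parallel_read(0, 10, 4, ["ds1", "ds2"]))
# [('ds1', 0, 4), ('ds2', 4, 4), ('ds1', 8, 2)]
```

Each tuple could be issued as an independent request, so the aggregate throughput is no longer capped by a single server.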

Enterprise Storage and Backup Solutions

For decades, NFS has been a workhorse in enterprise IT for network-attached storage (NAS). It provides a reliable and cost-effective way for departments and teams to share files, host user home directories, and centralize application data. Beyond primary storage, NFS is also heavily utilized in backup and disaster recovery strategies. Backup servers can mount NFS shares from various production systems to efficiently transfer and store backup archives in a centralized repository. Its mature ecosystem and wide OS support make it a go-to choice for managing corporate data assets and ensuring business continuity.

Enabling DevOps and Containerized Environments

The rise of DevOps and containerization (Docker, Kubernetes) has introduced new paradigms for application deployment and management. NFS plays a crucial role in providing persistent storage for stateful applications running in containers. While containers are designed to be ephemeral, many applications require persistent data that outlives the container instance. By mounting an NFS share as a volume within a container, applications can store their data externally, ensuring that it persists even if the container is restarted, moved, or updated. This capability is vital for databases, logging services, and other stateful microservices, enabling flexible and scalable container deployments without sacrificing data durability.
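As one concrete example of this pattern, Kubernetes can expose an NFS export as a PersistentVolume. The sketch below builds such a manifest as a Python dictionary and prints it as JSON. The field names follow the Kubernetes PersistentVolume schema, while the volume name, server hostname, export path, and size are placeholders invented for this example.

```python
import json


def nfs_persistent_volume(name, server, path, size="10Gi"):
    """Build a PersistentVolume manifest backed by an NFS export."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolume",
        "metadata": {"name": name},
        "spec": {
            "capacity": {"storage": size},
            # ReadWriteMany lets pods on several nodes mount the volume at once.
            "accessModes": ["ReadWriteMany"],
            "nfs": {"server": server, "path": path},
        },
    }


manifest = nfs_persistent_volume("shared-data", "nfs.example.internal", "/exports/app")
print(json.dumps(manifest, indent=2))
```

The printed JSON could be saved to a file and applied with the cluster's usual tooling; pods would then claim the volume through a PersistentVolumeClaim.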

Conclusion

The Network File System (NFS) is more than a venerable protocol; it is a testament to robust design and continuous evolution in the face of changing technological demands. From its origins as a solution for simplifying file sharing in localized networks, NFS has adapted and advanced, integrating features like statefulness, enhanced security through Kerberos, and parallel data access with pNFS. It continues to be a critical enabling technology across diverse domains.

Whether underpinning the flexible storage of cloud infrastructures, facilitating the massive data processing in HPC environments, serving as the bedrock for enterprise-wide file sharing, or providing persistent volumes for modern containerized applications, NFS demonstrates remarkable versatility and enduring utility. Its core value proposition – providing seamless, transparent, and efficient remote file access – remains as relevant today as it was nearly four decades ago. As the digital world continues to generate and consume ever-increasing volumes of data, the principles and implementations of technologies like NFS will continue to be fundamental to building scalable, resilient, and collaborative computing systems that drive future innovation.