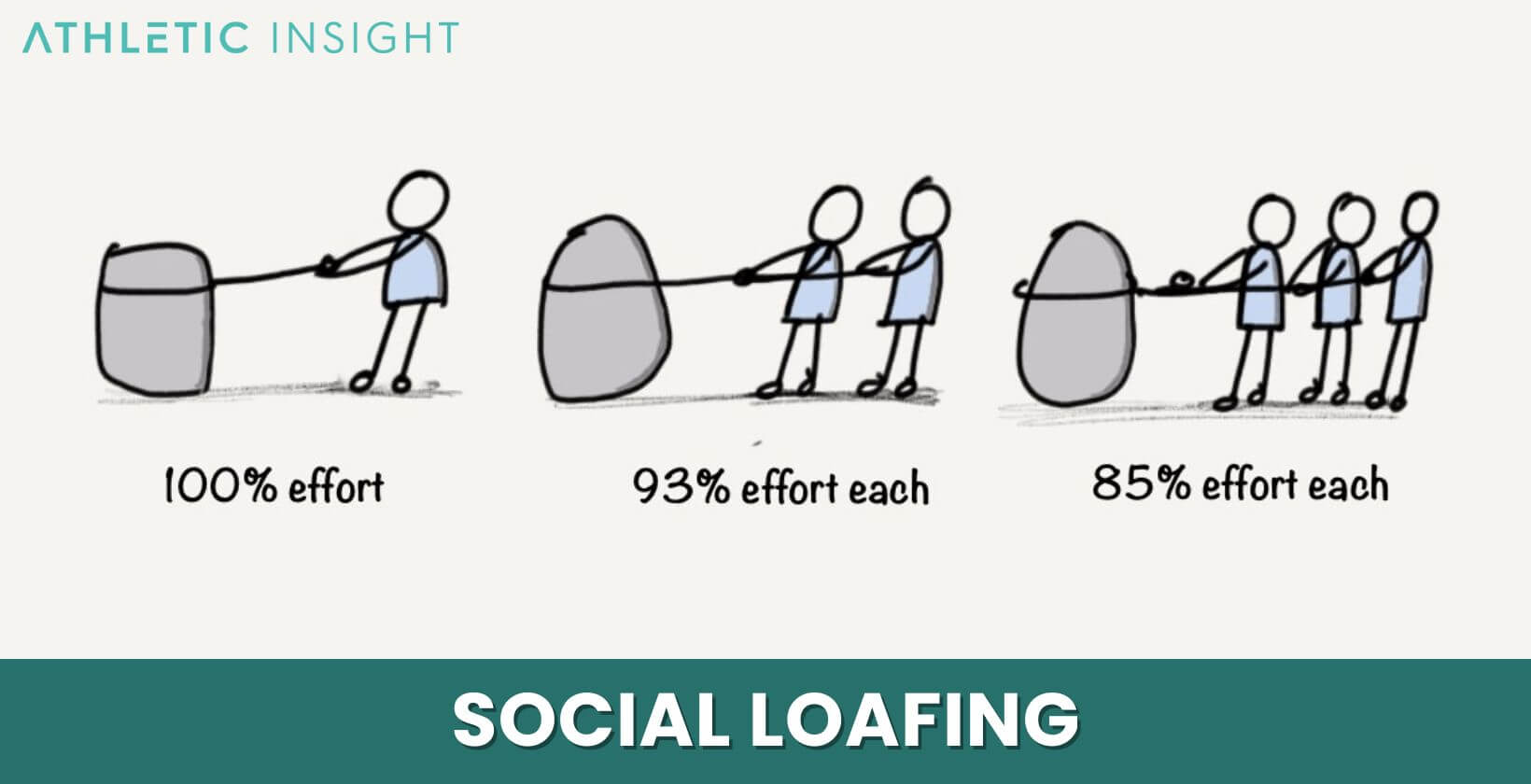

The question “what is it called when a group member does nothing?” usually points to human social dynamics: freeloading, social loafing, or shirking. Applied to technology, it takes on a different but equally practical meaning. In complex technical systems, especially those involving multi-agent interactions, distributed computing, or advanced AI, the equivalent of a “group member doing nothing” is a component that stops contributing, and it can degrade performance, waste resources, or even cause system failure. This article surveys how non-contributing elements show up in technology, what they are called, and how they are identified and mitigated.

Modern systems are increasingly autonomous, interconnected, and reliant on distributed intelligence, so it matters to detect when a component, an algorithm, or an individual unit fails to contribute or, worse, actively gets in the way. In drone swarms built for autonomous flight and remote sensing, in large sensor networks that map environments, and in AI models that analyze data at scale, an inert “group member” can quietly erode efficiency without triggering an obvious failure. Understanding the problem draws on diagnostics, system architecture, and management strategy.

Diagnosing “Inertia” in Autonomous Systems and Swarms

Autonomous systems, particularly those operating in coordinated groups like drone swarms, are designed to perform tasks collaboratively. When an individual unit or a critical component within such a system becomes inactive or contributes negligibly, it creates a significant operational gap. This “inertia” must be swiftly identified and addressed to maintain overall mission integrity and efficiency.

The Idle Drone or Robot

In a multi-drone operation for aerial mapping or remote sensing, for example, a single drone that fails to fly its assigned path, collect data, or maintain communication leaves a gap that can compromise the whole mission. Such a drone might be called an “idle unit,” a “non-responsive agent,” or a “failed node.” The causes vary: a sensor malfunction (e.g., a GPS failure that disables autonomous navigation), battery depletion, a lost link to the ground control system, a software fault that leaves the flight controller unresponsive, or physical damage. An idle drone not only fails to complete its segment of the task but can also disrupt coordination across the swarm, producing incomplete datasets, mission delays, or even collisions if it drifts unpredictably into the paths of other units. Swarm coordination and autonomous flight software are usually built with redundancy and error handling, but persistent non-contribution from a single agent can still cascade into systemic problems.
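
A common way to detect an idle or non-responsive unit is a heartbeat timeout: every drone reports in periodically (MAVLink-based autopilots, for instance, broadcast a HEARTBEAT message, typically about once per second), and a unit that stays silent for too many periods is flagged. The sketch below is a minimal illustration under assumed values; `HeartbeatMonitor`, `UnitState`, and the "three missed periods" rule are hypothetical choices, not part of any specific swarm framework.

```python
from dataclasses import dataclass, field
from enum import Enum


class UnitState(Enum):
    ACTIVE = "active"                  # heard from within one heartbeat interval
    LATE = "late"                      # missed a heartbeat but not yet declared failed
    NON_RESPONSIVE = "non_responsive"  # silent for longer than the failure window


@dataclass
class HeartbeatMonitor:
    """Tracks the most recent heartbeat from each unit (hypothetical helper)."""
    interval_s: float = 1.0        # assumed heartbeat period
    failure_multiple: float = 3.0  # missed periods before a unit counts as non-responsive
    last_seen: dict = field(default_factory=dict)

    def record(self, unit_id: str, timestamp: float) -> None:
        # Ignore out-of-order heartbeats older than one already received.
        previous = self.last_seen.get(unit_id)
        if previous is None or timestamp > previous:
            self.last_seen[unit_id] = timestamp

    def classify(self, now: float) -> dict:
        """Map every known unit to a state based on how long it has been silent."""
        states = {}
        for unit_id, seen in self.last_seen.items():
            silence = now - seen
            if silence <= self.interval_s:
                states[unit_id] = UnitState.ACTIVE
            elif silence <= self.interval_s * self.failure_multiple:
                states[unit_id] = UnitState.LATE
            else:
                states[unit_id] = UnitState.NON_RESPONSIVE
        return states


monitor = HeartbeatMonitor()
monitor.record("drone-1", 10.0)
monitor.record("drone-2", 8.5)
monitor.record("drone-3", 5.0)
states = monitor.classify(now=10.5)
# drone-1 is ACTIVE, drone-2 is LATE, drone-3 is NON_RESPONSIVE
```

The intermediate LATE state gives a unit a grace period before its tasks are reassigned, which avoids churn from a single dropped packet on a lossy radio link.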

Sensor Network Silences

Beyond individual autonomous vehicles, large-scale sensor networks underpin remote sensing, environmental monitoring, and smart city initiatives. Imagine hundreds of IoT sensors deployed across an agricultural field to monitor soil moisture, temperature, and nutrient levels for precision farming. If a significant number of them stop transmitting, becoming “silent nodes” or “data black holes,” the dataset loses both integrity and completeness: these “group members” are doing nothing for the network’s purpose. Causes include power failures, physical damage, connectivity problems (Wi-Fi, cellular, LoRaWAN), and internal hardware faults. The consequences are direct: gaps in the collected data, inaccurate environmental models, and poorly informed agricultural decisions. Detecting silent nodes quickly, through network health monitoring and data flow analysis, is essential to keeping the sensing infrastructure useful.
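
Because a silent node sends nothing, it cannot be found by scanning the data that arrives; detection has to start from the roster of sensors that are supposed to report. The minimal sketch below compares each sensor's received reports with the number it should have sent in a time window. The function names, the reporting interval, and the 50% "degraded" cutoff are illustrative assumptions.

```python
from collections import Counter


def classify_sensors(readings, roster, window_s, report_interval_s, degraded_ratio=0.5):
    """Label each sensor in the roster as "ok", "degraded", or "silent".

    readings: (sensor_id, timestamp) pairs received during the window.
    roster: every sensor ID that is expected to report.
    """
    expected = window_s / report_interval_s  # reports each sensor should have sent
    received = Counter(sensor_id for sensor_id, _ in readings)
    status = {}
    # Iterate over the roster, not the readings, so sensors with zero reports still appear.
    for sensor_id in roster:
        ratio = received.get(sensor_id, 0) / expected
        if ratio == 0:
            status[sensor_id] = "silent"
        elif ratio < degraded_ratio:
            status[sensor_id] = "degraded"
        else:
            status[sensor_id] = "ok"
    return status


def coverage(status):
    """Fraction of the roster that delivered at least some data."""
    return sum(s != "silent" for s in status.values()) / len(status)


# Ten-minute window, one report per minute expected from each sensor.
readings = [("s1", t) for t in range(0, 600, 60)] + [("s2", t) for t in (0, 60, 120)]
status = classify_sensors(readings, roster=["s1", "s2", "s3"], window_s=600, report_interval_s=60)
# s1 -> "ok" (10/10), s2 -> "degraded" (3/10), s3 -> "silent" (0/10)
```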

Algorithm Stagnation

The idea of “doing nothing” extends to algorithms and artificial intelligence. In machine learning or distributed computation, a sub-component, or even the whole algorithm, can run without producing anything useful. One form is “algorithm stagnation,” where an iterative optimizer settles into a local optimum or a flat plateau and stops improving even though better solutions exist. In a distributed computing setup, a specific node or “worker” might become unresponsive, wait indefinitely for data, or produce erroneous outputs that get discarded. In deep learning, some units can output zero for every input; with ReLU activations these are called “dead neurons,” and because no gradient flows through them they stop learning and contribute nothing to the model’s accuracy. In each case, an element that is technically “active” is functionally inert and limits what the system can achieve.
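
Both forms of inertia in this paragraph can be checked with simple bookkeeping. The sketch below shows two independent checks under stated assumptions: a patience-based stagnation detector for a minimization loop (it can tell that progress has stopped, though not whether the optimizer sits at a local or a global optimum), and a scan for ReLU units that output zero on every sample of a batch. The class and function names and the `patience`/`min_delta` defaults are hypothetical.

```python
import math


class StagnationDetector:
    """Flags a minimization loop whose best objective has stopped improving.

    It detects that progress has stalled; it cannot tell a local optimum from a global one.
    """

    def __init__(self, patience: int = 5, min_delta: float = 1e-4):
        self.patience = patience    # consecutive non-improving steps tolerated
        self.min_delta = min_delta  # smallest decrease that counts as progress
        self.best = math.inf
        self.stale_steps = 0

    def update(self, objective: float) -> bool:
        """Record one iteration's objective; return True once the run looks stagnant."""
        if objective < self.best - self.min_delta:
            self.best = objective   # meaningful improvement resets the counter
            self.stale_steps = 0
        else:
            self.stale_steps += 1
        return self.stale_steps >= self.patience


def dead_relu_units(activations):
    """Indices of units whose post-ReLU output is zero for every sample in the batch.

    activations: list of rows, one per sample, each holding one value per unit.
    A unit that is silent on one batch is only a candidate; confirm on more data.
    """
    n_units = len(activations[0])
    return [j for j in range(n_units) if all(row[j] == 0 for row in activations)]


detector = StagnationDetector(patience=3, min_delta=1e-3)
losses = [1.0, 0.5, 0.3, 0.29995, 0.2999, 0.29992]
flags = [detector.update(loss) for loss in losses]
# flags == [False, False, False, False, False, True]

batch = [[0.0, 1.2, 0.0], [0.0, 0.0, 0.5], [0.0, 3.4, 0.0]]  # rows: samples, cols: units
dead = dead_relu_units(batch)  # [0]
```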

Identifying the “Free Rider” in Distributed Tech Architectures

The human concept of a “free rider”—someone who benefits from a group’s efforts without contributing—has parallels in distributed technological systems where resources are shared and contributions are expected from various components or nodes.

Resource Underutilization in Cloud Computing

In cloud computing environments, resources are allocated dynamically based on demand. A virtual machine (VM) or server instance that is provisioned but consistently underutilized acts like a “free rider”: it holds reserved CPU, memory, and storage, and is billed for them, while doing little useful work. Such a “dormant instance” or “inefficient allocation” is not a failure, but it is an economic inefficiency that raises operating costs without delivering commensurate value. Cloud management platforms use monitoring tools to detect idle or underutilized resources, which are then scaled down, resized, or terminated to stop the waste.
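
A basic rightsizing check flags instances whose CPU utilization stays low across an observation period. The sketch below is a minimal version with hypothetical thresholds (10% average CPU, at least 12 samples); production tools usually combine several metrics, such as memory, network I/O, and disk activity, which are omitted here.

```python
from statistics import mean


def find_underutilized(cpu_samples, cpu_threshold=10.0, min_samples=12):
    """Return IDs of instances whose CPU utilization stays below a threshold.

    cpu_samples: dict of instance_id -> list of CPU utilization percentages (0-100),
    e.g. one sample per 5-minute period. Instances with too few samples are skipped
    so that newly launched machines are not flagged prematurely.
    """
    flagged = []
    for instance_id, samples in cpu_samples.items():
        if len(samples) < min_samples:
            continue
        # Require a low average AND no large peak, so bursty workloads are not flagged.
        if mean(samples) < cpu_threshold and max(samples) < 2 * cpu_threshold:
            flagged.append(instance_id)
    return sorted(flagged)


samples = {
    "vm-a": [3.0] * 12,           # steadily idle
    "vm-b": [2.0] * 11 + [80.0],  # low average, but a real burst
    "vm-c": [45.0] * 12,          # busy
    "vm-d": [1.0] * 5,            # too new to judge
}
idle = find_underutilized(samples)  # ["vm-a"]
```

The peak check is a design choice: an instance with a low average but occasional heavy bursts may still need its capacity, so it is left for review rather than flagged for termination.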

Inefficient Data Pipelines

Modern data processing relies on multi-stage pipelines, where raw data is ingested, transformed, and processed across distributed nodes or services before reaching its destination for analysis or storage. If a stage is poorly optimized, redundant, or frequently stalls, it becomes an “inefficient node” or a “bottleneck.” It may technically be running, yet its contribution to the data flow is negligible or even harmful: it consumes processing power and delays everything downstream without adding value. Finding these stages through performance monitoring and profiling is key to streamlining data flow, particularly for latency-sensitive applications such as real-time mapping or remote sensing data processing.
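
Profiling a pipeline comes down to measuring each stage separately. The minimal sketch below runs stages sequentially in one process (an assumption; a distributed pipeline would collect the same per-stage figures through its orchestrator or metrics system). It records time spent per stage, which points at the bottleneck, and how many records each stage actually changed, which hints at redundant stages. All names are hypothetical.

```python
import time
from typing import Callable, Iterable


def profile_pipeline(stages: list[tuple[str, Callable[[object], object]]], records: Iterable):
    """Push records through (name, function) stages in order and profile each stage.

    Returns (outputs, seconds, changed), where seconds[name] is the total wall-clock
    time spent in that stage and changed[name] counts the records it actually modified.
    """
    seconds = {name: 0.0 for name, _ in stages}
    changed = {name: 0 for name, _ in stages}
    outputs = []
    for record in records:
        value = record
        for name, fn in stages:
            start = time.perf_counter()
            new_value = fn(value)
            seconds[name] += time.perf_counter() - start
            if new_value != value:
                changed[name] += 1
            value = new_value
        outputs.append(value)
    return outputs, seconds, changed


def slowest_stage(seconds: dict) -> str:
    """Stage with the largest total processing time: the likely bottleneck."""
    return max(seconds, key=seconds.get)


def slow_enrich(x: int) -> int:
    time.sleep(0.01)  # stands in for an expensive lookup
    return x * 2


stages = [
    ("parse", int),
    ("passthrough", lambda x: x),  # a stage that never changes anything
    ("enrich", slow_enrich),
    ("format", str),
]
outputs, seconds, changed = profile_pipeline(stages, ["1", "2", "3"])
```

A zero in `changed` is a prompt for review, not proof of redundancy: a validation stage that rejects nothing on a given batch is legitimately a no-op for that batch.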

Non-Participatory Nodes in Decentralized Networks

In decentralized networks such as blockchains or peer-to-peer (P2P) systems, integrity and functionality depend on active participation from many nodes. A node that fails to validate transactions, participate in consensus, or share resources (as in a P2P file-sharing network) is a “non-contributing node” or an “inactive participant.” It may still be connected, but it is doing nothing to uphold the network’s operation. In a blockchain, inactive validators can slow transaction finality or weaken security. In P2P networks, a peer that downloads without uploading is the textbook “free rider,” and it reduces the resources available to everyone else. Consensus protocols and reputation systems are often designed to penalize or ignore such nodes so that actively contributing members drive the network forward.
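
The bookkeeping behind such penalties can be as simple as a sliding window of recent rounds per node. The sketch below is a hypothetical tracker, not any particular protocol's rule: it records which nodes voted in each round and flags those whose participation rate over the last `window` rounds falls below `min_rate`. Real protocols attach economic consequences, such as reduced rewards or inactivity penalties, to measurements like this.

```python
from collections import deque


class ParticipationTracker:
    """Sliding-window record of which nodes took part in recent consensus rounds."""

    def __init__(self, node_ids, window=10, min_rate=0.5):
        self.min_rate = min_rate  # participation below this rate counts as inactive
        # One bounded history per node; the oldest round drops out automatically.
        self.history = {node: deque(maxlen=window) for node in node_ids}

    def record_round(self, participants):
        """Log one round: True for nodes that voted or attested, False for the rest."""
        participants = set(participants)
        for node, rounds in self.history.items():
            rounds.append(node in participants)

    def rate(self, node):
        rounds = self.history[node]
        return sum(rounds) / len(rounds) if rounds else 0.0

    def inactive(self):
        return sorted(node for node in self.history if self.rate(node) < self.min_rate)


tracker = ParticipationTracker(["a", "b", "c"], window=4, min_rate=0.5)
for voters in [{"a", "b", "c"}, {"a", "b"}, {"a"}, {"a", "b"}]:
    tracker.record_round(voters)
# rates: a = 1.0, b = 0.75, c = 0.25, so only c is flagged
```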

Mitigation Strategies and Innovation for Systemic Participation

Preventing and addressing the issue of non-contributing elements is a cornerstone of robust system design in tech and innovation. Advanced strategies combine proactive monitoring, intelligent management, and architectural resilience.

Advanced Diagnostics and Health Monitoring

The first line of defense against “group members doing nothing” is comprehensive health monitoring: real-time telemetry, predictive analytics, and anomaly detection. In a drone swarm, each UAV continuously transmits diagnostic data (battery state, sensor readings, motor performance, GPS accuracy) to a ground station or swarm controller for analysis. Anomalies trigger alerts, so operators or autonomous logic can identify a “failing unit” before it stops functioning entirely. In sensor networks, network management systems track data flow, latency, and sensor uptime, and flag “silent nodes” as soon as expected reports stop arriving. Predictive maintenance driven by machine learning can go further and forecast component failures (e.g., in a drone’s gimbal camera or flight controller) before they happen, enabling proactive replacement or repair.
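
Anomaly detection on telemetry can start with something as plain as a rolling z-score: compare each new reading with the mean and standard deviation of recent readings, and alert when it lies too many standard deviations away. The sketch below implements one such detector for a single signal; the window size, warm-up length, and 3-sigma threshold are illustrative assumptions, and fleet-scale systems typically use more robust or learned models.

```python
from collections import deque
from statistics import fmean, pstdev


class RollingZScore:
    """Flags readings far from the rolling baseline of one telemetry signal."""

    def __init__(self, window: int = 30, threshold: float = 3.0, warmup: int = 10):
        self.samples = deque(maxlen=window)  # most recent readings only
        self.threshold = threshold           # alert above this many standard deviations
        self.warmup = warmup                 # readings needed before alerts are issued

    def update(self, x: float) -> bool:
        """Return True if x is anomalous relative to the readings that preceded it."""
        anomalous = False
        if len(self.samples) >= self.warmup:
            mean = fmean(self.samples)
            std = pstdev(self.samples, mu=mean)
            # A perfectly constant baseline has std == 0; skip rather than divide by zero.
            if std > 0 and abs(x - mean) / std > self.threshold:
                anomalous = True
        # Anomalies also enter the baseline, so a lasting level shift eventually stops alerting.
        self.samples.append(x)
        return anomalous


monitor = RollingZScore()
baseline = [12.0, 12.1, 11.9] * 4  # e.g. steady battery voltage readings
flags = [monitor.update(v) for v in baseline]
spike = monitor.update(9.5)  # sudden voltage sag
```

Letting anomalies enter the baseline is a trade-off: it keeps a genuine, permanent change from alerting forever, at the cost of slowly absorbing a drifting fault into "normal".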

Dynamic Task Allocation and Re-prioritization

Once a non-contributing element is identified, the system must adapt. Dynamic task allocation and re-prioritization, whether rule-based or AI-driven, handle this step. If a drone in a mapping mission becomes an “idle unit,” a swarm management system can redistribute its remaining task segments among the active drones so the mission finishes without significant delay. Similarly, when a server in a cloud environment becomes unresponsive, load balancers and orchestrators shift its workload to healthy servers. This adaptive capacity keeps the system as a whole productive even when individual “group members” fail or become inefficient.
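
A simple version of this redistribution is a greedy load-balancing rule: hand out the largest orphaned segments first, each to whichever active drone currently has the least work (the classic longest-processing-time-first heuristic). The sketch below assumes each segment has a single scalar cost, such as estimated flight minutes; `reassign_segments` is a hypothetical helper, and a real swarm planner would also weigh distance to the segment, remaining battery, and airspace constraints.

```python
import heapq


def reassign_segments(orphaned, loads):
    """Assign each orphaned (segment_id, cost) pair to the least-loaded active drone.

    loads maps active drone_id -> current workload, in the same units as cost.
    Segments are placed largest first (longest-processing-time-first heuristic).
    Returns a dict of segment_id -> drone_id.
    """
    if not loads:
        raise ValueError("no active drones left to take over the work")
    heap = [(load, drone_id) for drone_id, load in loads.items()]
    heapq.heapify(heap)  # min-heap keyed on current load; ties broken by drone_id
    assignment = {}
    for segment_id, cost in sorted(orphaned, key=lambda seg: seg[1], reverse=True):
        load, drone_id = heapq.heappop(heap)           # least-loaded drone right now
        assignment[segment_id] = drone_id
        heapq.heappush(heap, (load + cost, drone_id))  # its load grows by the new segment
    return assignment


# An idle drone left three segments behind (costs in estimated flight minutes).
loads = {"d1": 5.0, "d2": 3.0, "d3": 4.0}
orphaned = [("s1", 2.0), ("s2", 4.0), ("s3", 1.0)]
plan = reassign_segments(orphaned, loads)
# plan == {"s2": "d2", "s1": "d3", "s3": "d1"}
```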

Redundancy and Self-Healing Architectures

Designing systems with built-in redundancy is a fundamental strategy. This can take many forms: N+1 redundancy in server farms (one extra server beyond what’s needed for peak load), mirrored databases, or employing larger drone swarms than strictly necessary for a mission, allowing for a certain percentage of failures without compromising outcomes. “Self-healing architectures” take this a step further by not only detecting failures but also automatically initiating recovery procedures. This could involve automatically restarting a failed software service, provisioning a new virtual machine to replace a crashed one, or even re-flashing firmware on an unresponsive device. These strategies ensure that the system can autonomously identify and rectify instances of “doing nothing,” minimizing human intervention and maximizing uptime.
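
Automatically restarting a failed service is often done by a supervisor loop with bounded retries and exponential backoff, so a crash-looping service gets escalated instead of restarted forever. The sketch below is a minimal, hypothetical version: `start` stands in for whatever launches the service and runs its health check, and `sleep` is injectable so the backoff can be exercised without actually waiting.

```python
import time
from typing import Callable


def supervise(start: Callable[[], bool], max_restarts: int = 3,
              base_delay_s: float = 0.5,
              sleep: Callable[[float], None] = time.sleep) -> bool:
    """Launch a service and restart it with exponential backoff until it is healthy.

    start: hypothetical hook that (re)launches the service and returns True if its
    health check passes. Returns False once max_restarts is exhausted, which is the
    point to escalate (replace the node, fail over, or page an operator).
    """
    for attempt in range(max_restarts + 1):  # first launch plus up to max_restarts retries
        if start():
            return True
        if attempt < max_restarts:
            sleep(base_delay_s * 2 ** attempt)  # 0.5 s, 1 s, 2 s, ... with the defaults
    return False


# A service that fails its health check twice, then recovers.
outcomes = iter([False, False, True])
waits = []
recovered = supervise(lambda: next(outcomes), sleep=waits.append)
# recovered is True and waits == [0.5, 1.0]
```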

The Future of Collaborative Tech: Ensuring Every “Member” Contributes

The continuous evolution of tech and innovation pushes the boundaries of collaboration, autonomy, and efficiency. As systems become more complex, ensuring active contribution from every “member” is not just about efficiency but also about trust and reliability.

AI for Proactive Anomaly Detection

The future lies in moving from reactive to proactive identification of non-contributing elements. Advanced AI, particularly machine learning models trained on vast datasets of system performance, will be able to predict potential failures or inefficiencies before they manifest. By identifying subtle patterns that indicate an impending “idle drone” or “stagnant algorithm,” systems can take preventative action, such as scheduling maintenance, re-routing tasks, or adjusting parameters, ensuring continuous high performance. This proactive approach minimizes downtime and maximizes the contribution of every part of the system.
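
As a much simpler stand-in for the learned models described above, the sketch below fits a least-squares line to one degrading health signal and extrapolates when it will cross a maintenance threshold. It is an illustrative assumption, not a machine learning model: linear extrapolation only holds while degradation stays roughly linear, and the function name and threshold are hypothetical.

```python
def time_to_threshold(times, values, threshold):
    """Fit a least-squares line to (time, value) samples and extrapolate a threshold crossing.

    times must be in ascending order. Returns the predicted crossing time, or None if the
    fitted line does not cross the threshold after the most recent sample (flat trend,
    or trending away from the threshold).
    """
    n = len(times)
    if n < 2:
        raise ValueError("need at least two samples")
    t_mean = sum(times) / n
    v_mean = sum(values) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    if sxx == 0:
        raise ValueError("samples must span more than one time point")
    slope = sum((t - t_mean) * (v - v_mean) for t, v in zip(times, values)) / sxx
    intercept = v_mean - slope * t_mean
    if slope == 0:
        return None
    crossing = (threshold - intercept) / slope
    return crossing if crossing > times[-1] else None


hours = [0, 10, 20, 30]
health = [100.0, 98.0, 96.0, 94.0]  # e.g. a battery state-of-health estimate, in percent
eta = time_to_threshold(hours, health, threshold=80.0)  # about 100 hours
```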

Enhanced Human-System Interaction

As autonomous systems grow in scale and complexity, the interface between human operators and the technology becomes critical. Intuitive dashboards, augmented reality (AR) overlays, and natural language processing (NLP) can help human supervisors quickly grasp the status of every “group member” in a swarm, a network, or a distributed computation. Spotting a “silent node” in a remote sensing array or an “inefficient instance” in a cloud environment becomes faster, which supports more effective human oversight and intervention when autonomous recovery mechanisms fall short.

Ethical Considerations in Autonomous System Design

As we design increasingly autonomous and self-managing systems, ethical considerations also come into play regarding the “accountability” of individual “group members.” While not a human “free rider,” a failing component still impacts the overall system’s mission and reliability. Therefore, robust logging, traceable decision-making by AI agents, and clear fault isolation mechanisms become essential. This ensures that when a “group member does nothing,” the system can not only adapt but also provide a clear post-mortem, fostering continuous improvement and ethical responsibility in the development of cutting-edge technology.

In conclusion, the question “what is it called when a group member does nothing?” translates into a multifaceted challenge in technology and innovation. It covers failing individual components, stagnating algorithms, and underperforming networked agents. Addressing these problems, whether they are called idle units, silent nodes, algorithm stagnation, or inefficient allocations, is fundamental to realizing the full potential of autonomous systems, distributed computing, and artificial intelligence. Through advanced diagnostics, intelligent management, and resilient architecture, technology keeps moving toward a future in which every “group member” actively contributes to collective success.