In autonomous aerial systems, “transcription” can be borrowed from biology as a metaphor. For AI-powered drones, it refers to the process of converting raw, often disparate sensor data and high-level mission objectives into structured, actionable intelligence that guides autonomous flight and task execution. The “initiation” phase of this transcription is the critical first step, in which a drone begins to make sense of its environment and mission and sets the stage for all subsequent autonomous operations. Without a robust initiation, the drone’s ability to navigate, adapt, and perform tasks is severely compromised.

The Foundation of Autonomous Intelligence

Autonomous drones are sophisticated platforms equipped with a multitude of sensors, including high-resolution cameras, LiDAR, thermal imagers, GPS, inertial measurement units (IMUs), and ultrasonic detectors. These sensors continuously generate large volumes of raw data about the drone’s position, orientation, velocity, and the surrounding environment. However, this raw data is not directly usable on its own: it consists of unprocessed signals with no attached meaning. For a drone to operate autonomously, whether to follow a path, avoid obstacles, or identify targets, this data must be transformed into a coherent, semantic understanding of its world. This transformation process is what we can metaphorically term “transcription” in the context of drone AI.

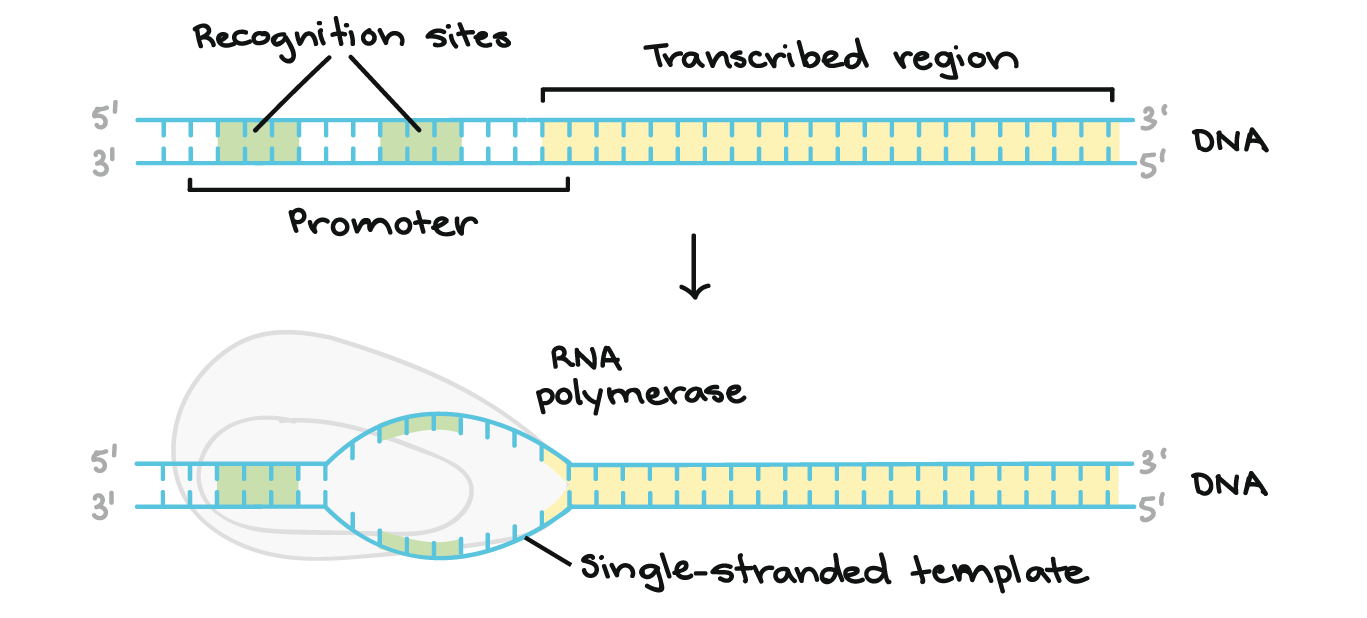

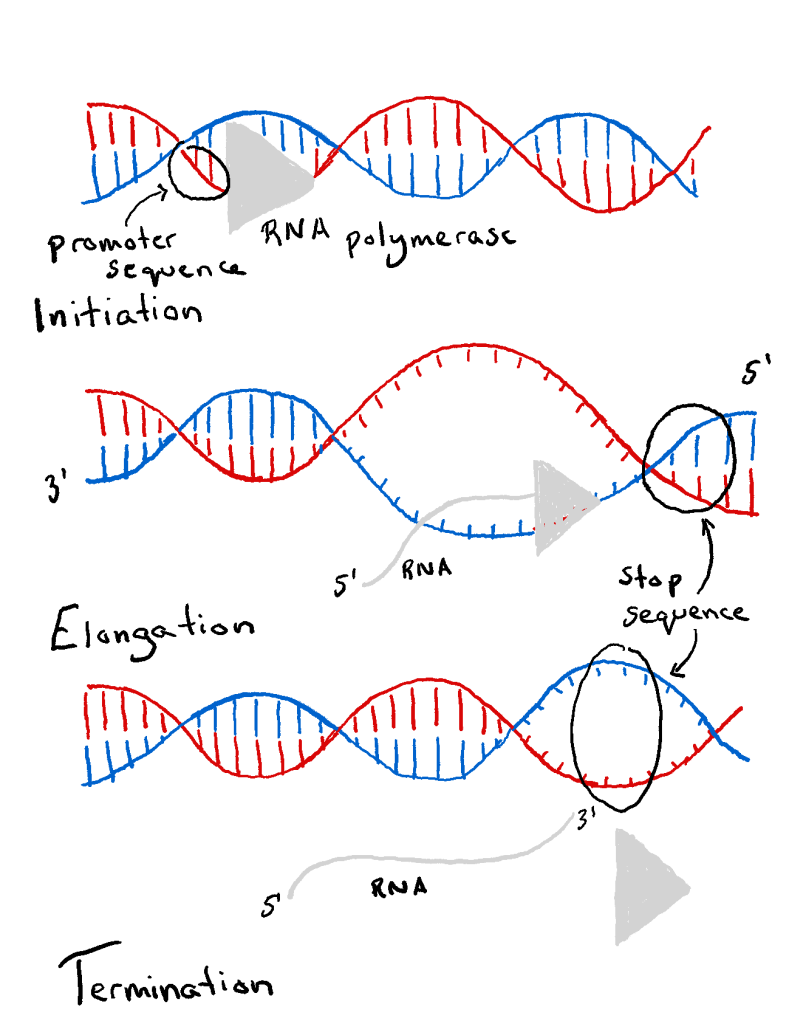

The foundation of autonomous intelligence lies in this ability to “read” the environment. Just as biological transcription converts genetic information into a functional RNA molecule, an autonomous system “transcribes” physical inputs into computational models, maps, and decision trees. This allows the drone’s onboard intelligence to interpret its surroundings, build internal representations, and predict future states. The fidelity and speed of this transcription directly influence the drone’s responsiveness, accuracy, and safety. Any delay or error in this foundational step can have cascading negative effects on mission success, particularly in dynamic and unpredictable operational scenarios.

Initiating the Data-to-Action Pipeline

The initiation of this data transcription pipeline is a multifaceted process that occurs almost instantaneously upon the activation of autonomous systems or the commencement of a mission. It’s where the drone transitions from a dormant state to an actively perceiving and processing entity. This phase is characterized by several critical initial steps:

Raw Data Acquisition and Pre-processing

The very first aspect of initiation involves the high-speed acquisition of data from all active sensors. This isn’t just about collecting bytes; it’s about preparing them for interpretation. This includes:

- Sensor Synchronization: Ensuring that data from different sensors (e.g., visual frames from a camera and distance readings from LiDAR) are timestamped and aligned correctly to provide a unified snapshot of the environment at any given moment.

- Noise Filtering and Calibration: Raw sensor data is often noisy and prone to inaccuracies. Initiation involves applying initial filters to remove spurious readings and calibrating sensors against known parameters to ensure accuracy. For instance, an IMU might undergo bias correction, or camera images might be corrected for lens distortion.

- Data Aggregation: Bringing together initial readings from various sources into a preliminary, common data structure that subsequent processing stages can access.
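
The sensor synchronization step in the list above can be illustrated with a minimal sketch. It assumes each sensor reports a sorted list of timestamps in seconds on a shared clock, and it pairs every camera frame with the nearest LiDAR scan. The function names and the `max_skew` tolerance are hypothetical choices for illustration, not part of any particular flight stack.

```python
from bisect import bisect_left

def nearest_index(timestamps, t):
    """Return the index of the entry in sorted `timestamps` closest to `t`."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Choose whichever neighbour is closer in time.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1

def align(camera_ts, lidar_ts, max_skew=0.02):
    """Pair each camera frame with the nearest LiDAR scan.

    Frames with no scan within `max_skew` seconds (an assumed tolerance)
    are dropped rather than paired with stale data.
    """
    pairs = []
    for cam_i, t in enumerate(camera_ts):
        lid_i = nearest_index(lidar_ts, t)
        if abs(lidar_ts[lid_i] - t) <= max_skew:
            pairs.append((cam_i, lid_i))
    return pairs
```

For example, `align([0.0, 0.033, 0.066, 0.1], [0.0, 0.05, 0.1])` returns `[(0, 0), (1, 1), (2, 1), (3, 2)]`. A real system would typically also interpolate between samples or compensate for per-sensor latency, which this sketch leaves out.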

Contextualization and Initial State Estimation

Beyond mere data collection, initiation also involves establishing the drone’s initial context within its operational environment. This includes:

- GPS Fix and Initial Geolocation: Obtaining a robust GPS fix provides the drone with its global position, which is crucial for outdoor navigation and mapping. This initial fix serves as a primary anchor point.

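- Attitude and Heading Reference System (AHRS) Initialization: IMUs provide raw angular rates and linear accelerations. During initiation, these inputs are processed to calculate the drone’s initial orientation (pitch, roll, yaw) relative to the Earth’s frame. Roll and pitch can be estimated from the measured direction of gravity, while an absolute yaw (heading) generally needs an additional reference such as a magnetometer. This establishes the orientation of the drone’s “body frame” relative to the world.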

- Initial Mission Parameter Loading: If the drone is executing a pre-programmed mission, initiation involves loading and understanding the initial waypoints, boundaries, and objectives. These parameters provide critical context for how the incoming sensor data should be interpreted and utilized.
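
As a rough illustration of the attitude initialization described in the list above, the sketch below estimates initial roll and pitch from accelerometer samples taken while the drone sits still. It assumes an axis convention in which a level, stationary sensor reads approximately (0, 0, +g). Real IMUs differ in sign and axis conventions, so the formulas would need adapting. Yaw is deliberately omitted because gravity alone does not constrain heading. The function name is hypothetical.

```python
import math

def initial_roll_pitch(samples):
    """Estimate (roll, pitch) in radians from stationary accelerometer samples.

    `samples` is a list of (ax, ay, az) tuples. Assumed convention: a level,
    motionless sensor reads roughly (0, 0, +g). Averaging reduces sample noise.
    """
    n = len(samples)
    ax = sum(s[0] for s in samples) / n
    ay = sum(s[1] for s in samples) / n
    az = sum(s[2] for s in samples) / n
    # Gravity direction in the body frame gives tilt about the x and y axes.
    roll = math.atan2(ay, az)
    pitch = math.atan2(-ax, math.hypot(ay, az))
    return roll, pitch
```

Averaging a short window of samples is a simple stand-in for the filtering a production AHRS would apply. It only works if the vehicle is not accelerating during the window.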

Feature Extraction and Early Interpretation

Even at the initiation stage, advanced algorithms begin to extract meaningful features from the pre-processed data. This is not yet a full semantic understanding but rather the identification of rudimentary patterns and structures:

- Basic Obstacle Detection: Using LiDAR or ultrasonic sensors, the system performs an initial sweep to detect any immediate, close-range obstacles in the drone’s direct path, allowing for immediate evasive action if necessary.

- Ground Plane Estimation: For navigation, it’s often critical to identify the ground plane. Vision algorithms or LiDAR point cloud processing initiate this by finding the largest flat surface below the drone.

- Visual Odometry Initialization: For environments where GPS may be unreliable (e.g., indoors or under heavy tree cover), visual odometry (VO) algorithms start tracking visual features in video streams to estimate the drone’s movement and position relative to its starting point. The initiation involves identifying robust feature points (e.g., corners, edges) and establishing their initial positions.
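
Ground plane estimation, mentioned in the list above, is often approached with a RANSAC-style plane fit. The sketch below is one minimal way to do that on a list of 3D points. The iteration count, inlier tolerance, and fixed random seed are assumptions chosen for illustration, and the function names are hypothetical.

```python
import random

def plane_from_points(p, q, r):
    """Return ((nx, ny, nz), d) for the plane n.x + d = 0 through three points,
    or None if the points are (nearly) collinear."""
    ux, uy, uz = q[0] - p[0], q[1] - p[1], q[2] - p[2]
    vx, vy, vz = r[0] - p[0], r[1] - p[1], r[2] - p[2]
    # The cross product of two edge vectors is normal to the plane.
    nx, ny, nz = uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx
    norm = (nx * nx + ny * ny + nz * nz) ** 0.5
    if norm < 1e-9:
        return None
    nx, ny, nz = nx / norm, ny / norm, nz / norm
    d = -(nx * p[0] + ny * p[1] + nz * p[2])
    return (nx, ny, nz), d

def ransac_ground_plane(points, iterations=200, tolerance=0.05, seed=0):
    """Find the plane supported by the most points (a proxy for the ground).

    `tolerance` is the maximum point-to-plane distance, in the same units as
    the points, for a point to count as an inlier.
    """
    rng = random.Random(seed)  # fixed seed keeps the sketch reproducible
    best_plane, best_inliers = None, []
    for _ in range(iterations):
        plane = plane_from_points(*rng.sample(points, 3))
        if plane is None:
            continue
        (nx, ny, nz), d = plane
        inliers = [pt for pt in points
                   if abs(nx * pt[0] + ny * pt[1] + nz * pt[2] + d) <= tolerance]
        if len(inliers) > len(best_inliers):
            best_plane, best_inliers = plane, inliers
    return best_plane, best_inliers
```

This picks the largest planar surface in the cloud, which matches the "largest flat surface" heuristic only when the ground dominates the scan. A practical system would usually also check that the plane lies below the drone and is roughly horizontal.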

The Role of AI and Machine Learning in Initiation

The rapid advancements in Artificial Intelligence and Machine Learning (AI/ML) have revolutionized how “transcription” initiation occurs in autonomous drones. AI/ML models are not merely reactive; they enable the drone to quickly establish a sophisticated understanding of its environment and mission.

Model Loading and Activation

At the core of AI-driven initiation is the loading and activation of specialized ML models. These pre-trained models, optimized for various perception tasks, are instantiated in the drone’s onboard computing hardware. For example:

- Object Detection Models: For tasks like surveillance or inspection, models trained to recognize specific objects (e.g., vehicles, infrastructure components, people) are activated. The initiation phase involves preparing these models to process incoming visual data in real-time.

- Semantic Segmentation Models: These models are loaded to classify every pixel in an image into categories (e.g., sky, building, tree, road). Their initiation allows for immediate, granular environmental understanding.

- Predictive Models: For trajectory planning or anomaly detection, models that forecast future states or identify unusual patterns are prepared to analyze the initial streams of data.

Initial State Estimation and Pattern Recognition

AI/ML accelerates the initial state estimation beyond traditional filtering techniques. Machine learning algorithms, particularly those leveraging deep learning, are adept at recognizing complex patterns in noisy, high-dimensional data, which is crucial for rapid initiation:

- Learned Localization: Instead of relying solely on GPS and IMUs, ML models can use visual cues (e.g., matching current camera frames against a pre-built map database) to localize the drone quickly. This is especially useful in GPS-denied environments, although the achievable precision depends on the quality and coverage of the map.

- Environmental Categorization: Upon initiation, AI models can swiftly categorize the operational environment (e.g., urban, rural, indoor) based on the first few seconds of sensor data. This categorization can then dynamically adjust subsequent processing pipelines and flight behaviors.

- Anomaly Detection: ML models can be initialized to immediately flag unusual sensor readings or environmental conditions that deviate significantly from expected norms, potentially indicating a fault or an unforeseen hazard.
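
To make the anomaly detection idea above concrete, here is a deliberately simple statistical stand-in for a learned model: it flags readings that lie more than a chosen number of standard deviations from a baseline. The threshold of 3.0 and the function name are assumptions for illustration. An ML-based detector would replace the z-score with a learned notion of "normal".

```python
from statistics import mean, stdev

def flag_anomalies(baseline, readings, threshold=3.0):
    """Return indices of `readings` that deviate strongly from `baseline`.

    `baseline` needs at least two values recorded under known-good
    conditions; `threshold` is measured in baseline standard deviations.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly constant baseline: any different value is suspicious.
        return [i for i, x in enumerate(readings) if x != mu]
    return [i for i, x in enumerate(readings) if abs(x - mu) / sigma > threshold]
```

Returning indices rather than values lets the caller trace a flagged reading back to its timestamp or sensor.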

The immediate outcome of this AI/ML-driven initiation is a preliminary, continuously updated model of the drone’s immediate surroundings, in effect a nascent digital twin. This initial understanding, though continuously refined, is intended to be robust enough to inform immediate flight decisions and to serve as the baseline for more complex cognitive processes.

Challenges and Future Directions in Transcription Initiation

Despite significant progress, the initiation of transcription in autonomous drone systems presents several ongoing challenges and exciting future directions.

Real-time Processing Demands and Computational Overhead

The initiation phase must occur almost instantaneously to ensure safe and responsive drone operation, especially in fast-paced or critical missions. This demands extremely efficient algorithms and powerful, yet energy-constrained, onboard computing hardware. The challenge lies in performing complex data fusion, initial state estimation, and AI model inference within milliseconds. Future advancements are focusing on highly optimized edge AI processors and novel neural network architectures designed for low-latency inference.

Ambiguity, Noise, and Unforeseen Conditions

The real world is messy. Initial sensor data can be ambiguous, corrupted by noise, or present completely novel scenarios not encountered during training. A robust initiation process must be resilient to these imperfections. Research into uncertainty quantification in AI models and adaptive filtering techniques is crucial. Furthermore, systems capable of “zero-shot” or “few-shot” learning could enable drones to initiate understanding of previously unseen objects or environments more effectively.

Robustness and Security

As drones become more integrated into critical infrastructure, the initiation process must be highly robust against sensor failures, cyber-attacks, or intentional interference. Secure boot processes, cryptographic verification of AI models, and redundant sensor systems are becoming essential to ensure that the initial transcription is trustworthy and uncompromised.
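
One small piece of this, checking a model file for tampering before it is loaded, can be sketched with a SHA-256 digest comparison. This is an integrity check only: it assumes the expected digest comes from a trusted source such as a signed manifest, and it is not a substitute for full signature verification. The function names are hypothetical.

```python
import hashlib
import hmac

def sha256_of_file(path, chunk_size=65536):
    """Compute the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def model_is_trusted(path, expected_hex):
    """Return True if the file's digest matches the expected hex digest.

    `expected_hex` is assumed to come from a trusted channel. The comparison
    uses hmac.compare_digest to avoid timing side channels.
    """
    return hmac.compare_digest(sha256_of_file(path), expected_hex.lower())
```

A loader would call `model_is_trusted` before deserializing the model and refuse to start the perception pipeline if the check fails.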

Looking ahead, the future of transcription initiation in autonomous drones is characterized by deeper integration of advanced AI, cognitive computing, and potentially even bio-inspired approaches. Research areas like neuro-symbolic AI could allow drones to combine the pattern recognition power of neural networks with the logical reasoning of symbolic AI, leading to more explainable and robust initiation processes. Federated learning could enable drones to learn from a collective experience while preserving data privacy, leading to more generalized and resilient initial understanding across diverse operational contexts. Ultimately, the goal is to create autonomous systems that can not only transcribe their environment rapidly and accurately but also intelligently adapt their initiation strategies to ensure optimal performance in any given scenario. This continuous evolution of transcription initiation is pivotal for unlocking the full potential of autonomous drone technology.