The Graphics Processing Unit (GPU) is one of the most important, and most often misunderstood, components of a modern computer. Far from being a mere accessory for gamers, it now drives work across artificial intelligence, scientific research, professional visualization and, yes, gaming. Understanding the GPU’s origins, architecture, and applications goes a long way toward understanding modern computing.

At its core, a GPU is a specialized electronic circuit designed to rapidly manipulate and alter memory to accelerate the creation of images in a frame buffer intended for output to a display device. While this technical definition highlights its primary function, it barely scratches the surface of its capabilities and the profound role it plays in today’s computational landscape. Originally conceived to render 3D graphics more efficiently than the central processing unit (CPU), the GPU’s unique parallel processing architecture has proven invaluable for a much broader spectrum of computational tasks, establishing it as a cornerstone of high-performance computing.

The Genesis and Evolution of Graphics Processing

The journey of the GPU is a fascinating narrative of specialized innovation responding to an evolving technological demand. In the early days of computing, all graphical output was handled directly by the CPU. As graphics became more complex – particularly with the advent of 3D gaming and graphical user interfaces – CPUs struggled to keep pace, leading to slow and cumbersome visual experiences. This bottleneck sparked the need for a dedicated processor.

From Fixed-Function to Programmable Shaders

Early graphics accelerators were largely fixed-function pipelines. They were designed to perform a specific set of operations for rendering graphics, such as drawing lines, triangles, and simple textures, without much flexibility. Companies like S3 Graphics, 3dfx, and ATI (now AMD) pioneered these early cards, offloading basic geometry and pixel rendering tasks from the CPU. The rise of 3D games in the 1990s significantly accelerated this demand: Doom (1993) popularized the first-person 3D look using software rendering on the CPU alone, and Quake (1996), with its fully polygonal worlds, became an early showcase for hardware acceleration.

The true revolution, however, came with the introduction of programmable shaders in the early 2000s. NVIDIA’s GeForce 3 and ATI’s Radeon 8500 were among the first to feature programmable pixel and vertex shaders. This paradigm shift meant that developers could write custom programs (shaders) to define how graphics were rendered, allowing for unprecedented levels of detail, realism, and visual effects previously unimaginable. This marked the birth of the modern GPU as we know it: a highly parallel, programmable processor. This evolution not only enhanced gaming graphics dramatically but also laid the groundwork for the GPU’s eventual expansion into non-graphics applications.
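To make the idea of a programmable shader concrete, here is a minimal sketch in Python. It is an illustration only: real shaders are written in languages such as GLSL or HLSL and run on the GPU, and the names `gradient_shader` and `render` are hypothetical. The point it shows is that a pixel shader is a small program run once per pixel, using only that pixel's inputs.

```python
# Hypothetical illustration of the shader idea; not how a real GPU API looks.

def gradient_shader(x, y, width, height):
    """Return an (r, g, b) colour for one pixel, using only that pixel's inputs."""
    r = int(255 * x / (width - 1))   # red ramps from left to right
    g = int(255 * y / (height - 1))  # green ramps from top to bottom
    b = 128                          # constant blue
    return (r, g, b)


def render(shader, width, height):
    """Run the shader once per pixel. No pixel depends on any other pixel,
    which is what lets a GPU evaluate many of them at the same time."""
    return [[shader(x, y, width, height) for x in range(width)]
            for y in range(height)]


image = render(gradient_shader, 4, 3)  # a tiny 4x3 "frame buffer"
```

Swapping in a different shader function changes the look of the whole image without touching `render`, which is roughly the flexibility that fixed-function pipelines lacked.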

The Rise of General-Purpose Computing on GPUs (GPGPU)

As GPUs became more programmable and their parallel processing capabilities grew, researchers began to recognize their potential beyond graphics. The GPU’s architecture, characterized by thousands of smaller, simpler cores working in parallel, was inherently suited for problems that could be broken down into many independent, simultaneous calculations – a stark contrast to the CPU’s fewer, more powerful cores designed for sequential tasks. This realization spurred the development of General-Purpose computing on Graphics Processing Units (GPGPU).

NVIDIA’s introduction of CUDA (Compute Unified Device Architecture) in 2007 was a watershed moment. CUDA provided developers with a software platform that allowed them to program GPUs using familiar languages like C and C++, abstracting away much of the complexity of graphics rendering. This made GPUs accessible for general-purpose parallel computing, unleashing their power for scientific simulations, data processing, and eventually, the monumental task of training artificial intelligence models. OpenCL followed shortly afterward: an open standard for parallel programming across various processors, initiated by Apple, maintained by the Khronos Group, and supported by AMD, NVIDIA, Intel, and others, it further solidified the GPGPU movement.
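The programming model behind this kind of general-purpose GPU code can be sketched in plain Python. This is an emulation under stated assumptions, not CUDA itself: `saxpy_kernel`, `launch`, and `saxpy` are hypothetical names, and the loop runs the "threads" one after another where a GPU would run them concurrently.

```python
# Emulates the "one thread per element" model; illustrative names, no real GPU API.

def saxpy_kernel(thread_id, a, x, y, out):
    """Work done by a single logical thread: one element of out = a*x + y."""
    if thread_id < len(x):  # guard: the grid may be larger than the data
        out[thread_id] = a * x[thread_id] + y[thread_id]


def launch(kernel, num_blocks, threads_per_block, *args):
    """Call the kernel once per thread index. The threads are independent,
    so running them in sequence gives the same result as running them in parallel."""
    for block in range(num_blocks):
        for thread in range(threads_per_block):
            kernel(block * threads_per_block + thread, *args)


def saxpy(a, x, y, threads_per_block=4):
    """Compute a*x + y element by element using the emulated launch."""
    out = [0.0] * len(x)
    # Ceiling division: enough blocks to cover every element.
    num_blocks = (len(x) + threads_per_block - 1) // threads_per_block
    launch(saxpy_kernel, num_blocks, threads_per_block, a, x, y, out)
    return out


result = saxpy(2.0, [1.0, 2.0, 3.0, 4.0, 5.0], [10.0] * 5)
```

The bounds check inside the kernel mirrors a common pattern in real GPU code, where the number of launched threads is rounded up and the extra threads must do nothing.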

Understanding the GPU’s Unique Architecture

To appreciate the GPU’s prowess, it’s crucial to understand how its internal architecture differs from that of a CPU. While both are complex integrated circuits that perform computations, they are fundamentally optimized for different types of workloads.

CPU: The Generalist Maestro

The Central Processing Unit (CPU) is often described as the “brain” of the computer. It’s designed to excel at a wide range of tasks, executing complex instructions sequentially and efficiently. CPUs typically have a small number of powerful cores (e.g., 4 to 16 for consumer desktop processors), each optimized for high clock speeds, large cache memory, and sophisticated branch prediction to quickly process single threads of execution. This makes CPUs ideal for tasks requiring strong single-thread performance, managing operating systems, running applications, and handling I/O operations.

GPU: The Parallel Processing Powerhouse

In contrast, a GPU is purpose-built for parallel processing. It features hundreds or even thousands of smaller, simpler arithmetic logic units (ALUs), often referred to as “cores” (e.g., CUDA cores for NVIDIA, Stream Processors for AMD). These cores are designed to handle many calculations simultaneously, but each individual core is less powerful and has less cache than a CPU core.

Imagine a CPU as a small team of highly skilled architects, each capable of designing an entire building from scratch, one project at a time. A GPU, on the other hand, is like an enormous workforce of construction workers, each laying bricks or pouring concrete, who together work on thousands of buildings at once. While a single construction worker cannot design a building, their collective effort can put up many structures far faster than the small team of architects could. The analogy captures the difference: CPUs excel at deep, complex sequential tasks, while GPUs shine at broad, simple, parallelizable tasks. This massive parallelism is what gives GPUs their speed advantage in domains like graphics rendering (where millions of pixels need to be processed simultaneously) and machine learning (where huge matrices of data need identical operations applied).
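The difference between parallelizable and sequential work can be sketched in a few lines of Python. The function names are hypothetical and chosen only for illustration. The first task can be split across any number of workers; the second cannot, because each step needs the result of the one before it.

```python
def scale_all(values, factor):
    """Each output depends on exactly one input, so the work can be
    divided among workers in any way and in any order."""
    return [v * factor for v in values]


def scale_in_chunks(values, factor, workers):
    """Split the same job into chunks, as if handing each chunk to a separate worker."""
    size = max(1, -(-len(values) // workers))  # ceiling division, at least 1
    chunks = [values[i:i + size] for i in range(0, len(values), size)]
    results = [scale_all(chunk, factor) for chunk in chunks]  # chunks are independent
    return [v for chunk in results for v in chunk]


def newton_sqrt(n, steps):
    """Newton's iteration for the square root of a positive number.
    Each estimate is computed from the previous one, so step i cannot
    start before step i-1 has finished: extra workers would not help."""
    estimate = n
    for _ in range(steps):
        estimate = 0.5 * (estimate + n / estimate)
    return estimate
```

`scale_in_chunks` returns the same list as `scale_all` for any number of workers, which is the property that GPU-friendly workloads share.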

Key Architectural Components

A modern GPU is not just a collection of cores. It’s a sophisticated system that includes:

- Processing Units (Cores/Stream Processors): The arithmetic heart, performing calculations.

- Video Memory (VRAM): High-bandwidth memory (e.g., GDDR6, HBM2e) directly attached to the GPU, essential for storing textures, frame buffers, and large datasets that the GPU needs fast access to. Unlike system RAM, VRAM is optimized for throughput under the GPU’s parallel access patterns rather than for the lowest possible latency.

- Memory Controllers: Manage the flow of data to and from VRAM.

- Texture Mapping Units (TMUs): Handle the application of textures to 3D objects.

- Render Output Units (ROPs): Responsible for final pixel operations like anti-aliasing and blending before the image is sent to the display.

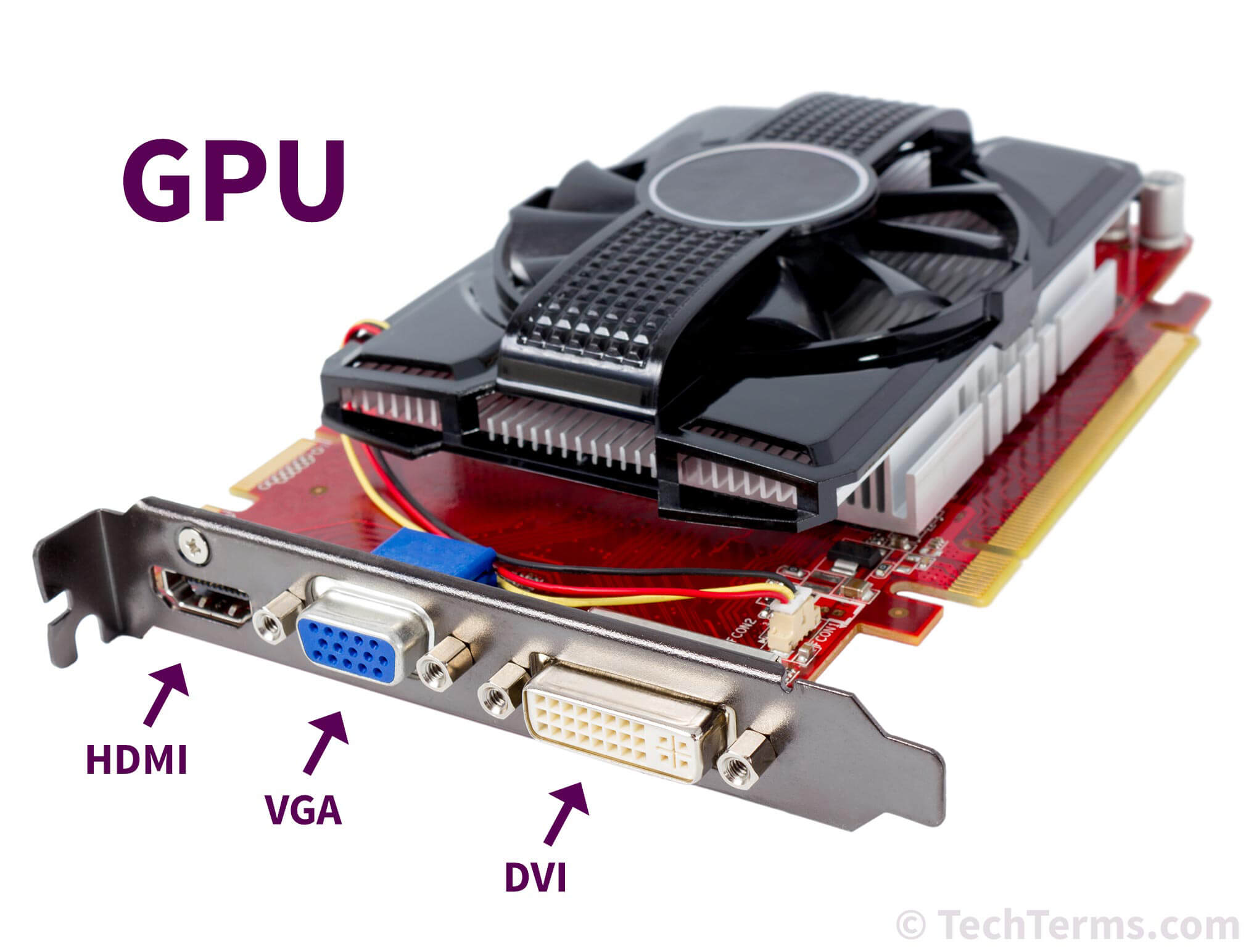

- Interface: Connects the GPU to the motherboard, typically via a PCIe (Peripheral Component Interconnect Express) slot, providing high-speed communication with the CPU and system memory.
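The gap between VRAM and the PCIe interface is easier to appreciate with some back-of-the-envelope arithmetic. The sketch below computes theoretical peak figures only; the function names are hypothetical, and the example numbers (a 256-bit GDDR6 bus at 14 Gbit/s per pin, and a PCIe 4.0 x16 link at 16 GT/s per lane with 128b/130b encoding) are illustrative configurations rather than figures from this article.

```python
def memory_bandwidth_gb_s(bus_width_bits, data_rate_gbps_per_pin):
    """Peak memory bandwidth in GB/s: each of the bus's pins moves
    data_rate_gbps_per_pin gigabits per second."""
    return bus_width_bits * data_rate_gbps_per_pin / 8  # 8 bits per byte


def pcie_bandwidth_gb_s(lanes, gigatransfers_per_s, encoding_efficiency):
    """Peak one-direction PCIe bandwidth in GB/s after line-encoding overhead."""
    return lanes * gigatransfers_per_s * encoding_efficiency / 8


vram = memory_bandwidth_gb_s(256, 14)            # 448.0 GB/s
pcie = pcie_bandwidth_gb_s(16, 16, 128 / 130)    # about 31.5 GB/s
```

With these example figures, on-card memory is more than ten times faster than the link to the rest of the system, which is one reason GPU programs try to keep data in VRAM and minimize transfers across PCIe.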

Beyond Graphics: The Versatile Applications of GPUs

While gaming and professional visualization remain core applications, the GPU’s parallel processing capabilities have unlocked revolutionary advancements in seemingly disparate fields, establishing it as a general-purpose accelerator for some of the most demanding computational challenges.

Artificial Intelligence and Machine Learning

Perhaps the most impactful application of GPUs outside of graphics is in the field of Artificial Intelligence (AI) and Machine Learning (ML). Training deep neural networks, which are the backbone of modern AI systems, involves performing vast numbers of matrix multiplications and other linear algebra operations on massive datasets. This is precisely the kind of highly parallelizable task that GPUs excel at. Without GPUs, the recent advances in AI, from natural language processing and computer vision to autonomous driving and drug discovery, would have come far more slowly, if at all. GPUs can reduce the training time for complex AI models from weeks or months to days or hours.
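A plain-Python matrix multiplication shows why this workload suits a GPU. This is a teaching sketch with hypothetical function names, not how any ML framework implements it: the point is that every output cell is an independent dot product, and that the amount of arithmetic grows quickly with matrix size.

```python
def matmul(a, b):
    """Multiply an m x k matrix by a k x n matrix (both given as lists of rows)."""
    m, k, n = len(a), len(b), len(b[0])
    assert all(len(row) == k for row in a), "inner dimensions must match"
    # Each c[i][j] reads one row of a and one column of b and writes only
    # its own cell, so all m*n cells could be computed at the same time.
    return [[sum(a[i][p] * b[p][j] for p in range(k)) for j in range(n)]
            for i in range(m)]


def matmul_flops(m, k, n):
    """Approximate operation count: k multiplies and about k adds per output cell."""
    return 2 * m * k * n


c = matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])  # [[19, 22], [43, 50]]
```

By this count, a single 1024 x 1024 by 1024 x 1024 product already takes about 2.1 billion operations, and training a network repeats products like this many times over.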

Scientific Research and Simulation

From astrophysics and climate modeling to molecular dynamics and fluid mechanics, scientific research frequently relies on complex simulations that demand immense computational power. GPUs are now indispensable tools for researchers, accelerating calculations for:

- Drug Discovery: Simulating molecular interactions to identify potential drug candidates.

- Weather Forecasting: Running complex atmospheric models to predict weather patterns with greater accuracy.

- Physics Simulations: Modeling particle interactions, galaxy formation, or material properties.

- Data Analysis: Processing vast datasets in fields like genomics and high-energy physics.

The ability to perform these simulations faster means scientists can iterate on hypotheses more quickly, leading to faster discoveries and deeper insights.
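Many of the simulations listed above share a pattern: a grid of values is advanced through time, and each cell's next value depends only on the current values of its neighbours. A minimal sketch of that pattern, using one-dimensional heat diffusion as an assumed example (the function name and the `alpha` parameter are illustrative):

```python
def diffuse_step(temps, alpha=0.25):
    """One explicit time step of 1D heat diffusion with fixed end points.

    Every new value is computed from the OLD list only, so all interior
    cells can be updated at the same time, one cell per GPU thread.
    """
    new = list(temps)  # end points are copied unchanged
    for i in range(1, len(temps) - 1):
        new[i] = temps[i] + alpha * (temps[i - 1] - 2 * temps[i] + temps[i + 1])
    return new


state = [0.0, 0.0, 100.0, 0.0, 0.0]
state = diffuse_step(state)  # [0.0, 25.0, 50.0, 25.0, 0.0]
```

Production codes work on two- or three-dimensional grids with millions of cells, but the structure is the same: a large number of identical, independent updates per time step.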

Cryptocurrency Mining and Blockchain

GPUs were central to cryptocurrency mining for much of the 2010s. Bitcoin was mined on GPUs in its early years, and Ethereum, launched in 2015, was mined largely on GPUs until the network moved from proof of work to proof of stake in 2022. The cryptographic hash functions used in proof-of-work networks are computationally intensive and highly parallelizable, making GPUs significantly more efficient than CPUs for these tasks. Specialized ASICs (Application-Specific Integrated Circuits) have long since taken over Bitcoin mining, though GPUs are still used for some other proof-of-work cryptocurrencies and blockchain-related computations.
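The parallelism in proof-of-work mining comes from the fact that every candidate nonce can be hashed independently. The sketch below is a toy version under stated assumptions: it uses double SHA-256, as Bitcoin does, but the header is a placeholder string and "difficulty" is simplified to a count of leading zero bytes rather than a real numeric target. The function names are hypothetical.

```python
import hashlib


def block_hash(header: bytes, nonce: int) -> bytes:
    """Double SHA-256 of the header followed by a 4-byte little-endian nonce."""
    data = header + nonce.to_bytes(4, "little")
    return hashlib.sha256(hashlib.sha256(data).digest()).digest()


def search_range(header, start, stop, zero_bytes):
    """Try nonces in [start, stop) and return the first one whose hash begins
    with zero_bytes zero bytes, or None if there is no such nonce in the range.

    Ranges do not interact, so different workers can search different
    ranges at the same time.
    """
    prefix = b"\x00" * zero_bytes
    for nonce in range(start, stop):
        if block_hash(header, nonce).startswith(prefix):
            return nonce
    return None


nonce = search_range(b"demo header", 0, 100_000, 1)  # toy difficulty: one zero byte
```

A GPU miner applies the same idea at scale, assigning each thread its own slice of the nonce space.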

Data Processing and Big Data Analytics

In an era defined by “big data,” organizations are increasingly turning to GPUs to accelerate data processing and analytics. Tasks such as database queries, data sorting, filtering, and complex statistical analysis can be significantly sped up by offloading them to GPUs, especially when the datasets are very large and the operations apply uniformly across many rows. This enables faster insights, more responsive business intelligence, and the ability to process real-time data streams effectively.

The Future of GPU Technology

The trajectory of GPU development shows no signs of slowing down. As demand for AI, high-fidelity graphics, and complex simulations continues to grow, so too will the innovation in GPU design and application.

Integrated vs. Discrete GPUs

The market currently features two primary types of GPUs:

- Integrated GPUs (iGPUs): Built directly into the CPU or motherboard chipset, sharing system RAM with the CPU. They are cost-effective, energy-efficient, and suitable for basic tasks, office productivity, and casual gaming. Their performance is generally limited compared to discrete cards.

- Discrete GPUs (dGPUs): Separate, dedicated graphics cards with their own processing unit and dedicated VRAM. These are designed for maximum performance in demanding applications like high-end gaming, professional video editing, 3D rendering, and AI model training.

The future will likely see further advancements in both, with iGPUs becoming increasingly capable for mainstream tasks, while dGPUs push the boundaries of extreme performance and specialized acceleration.

Cloud-Based GPU Services

The high cost and power consumption of top-tier GPUs have led to the proliferation of cloud-based GPU services. Platforms like AWS, Google Cloud, and Microsoft Azure offer access to powerful GPU clusters on demand, opening up high-performance computing to startups, researchers, and individuals who cannot afford to build their own GPU farms. This trend is likely to continue, making GPU power more of a utility than a luxury.

Specialized AI Accelerators

While general-purpose GPUs are excellent for AI, there’s a growing trend towards more specialized hardware accelerators designed specifically for AI workloads, such as Google’s Tensor Processing Units (TPUs) and the Neural Processing Units (NPUs) now built into many phone and laptop processors. These chips are optimized for specific AI operations, like matrix multiplication, and can offer greater energy efficiency and performance for inference (running trained AI models) or certain training tasks. While not replacing GPUs entirely, they represent a complementary path in the evolution of accelerated computing.

In conclusion, the GPU has outgrown its origins as a graphics rendering device to become a fundamental accelerator for a wide range of computational challenges. Its parallel processing architecture has not only redefined visual computing but has also driven major advances in artificial intelligence, scientific discovery, and data analytics. As technology continues to advance, the GPU is likely to remain at the forefront of innovation, expanding what computers can achieve.