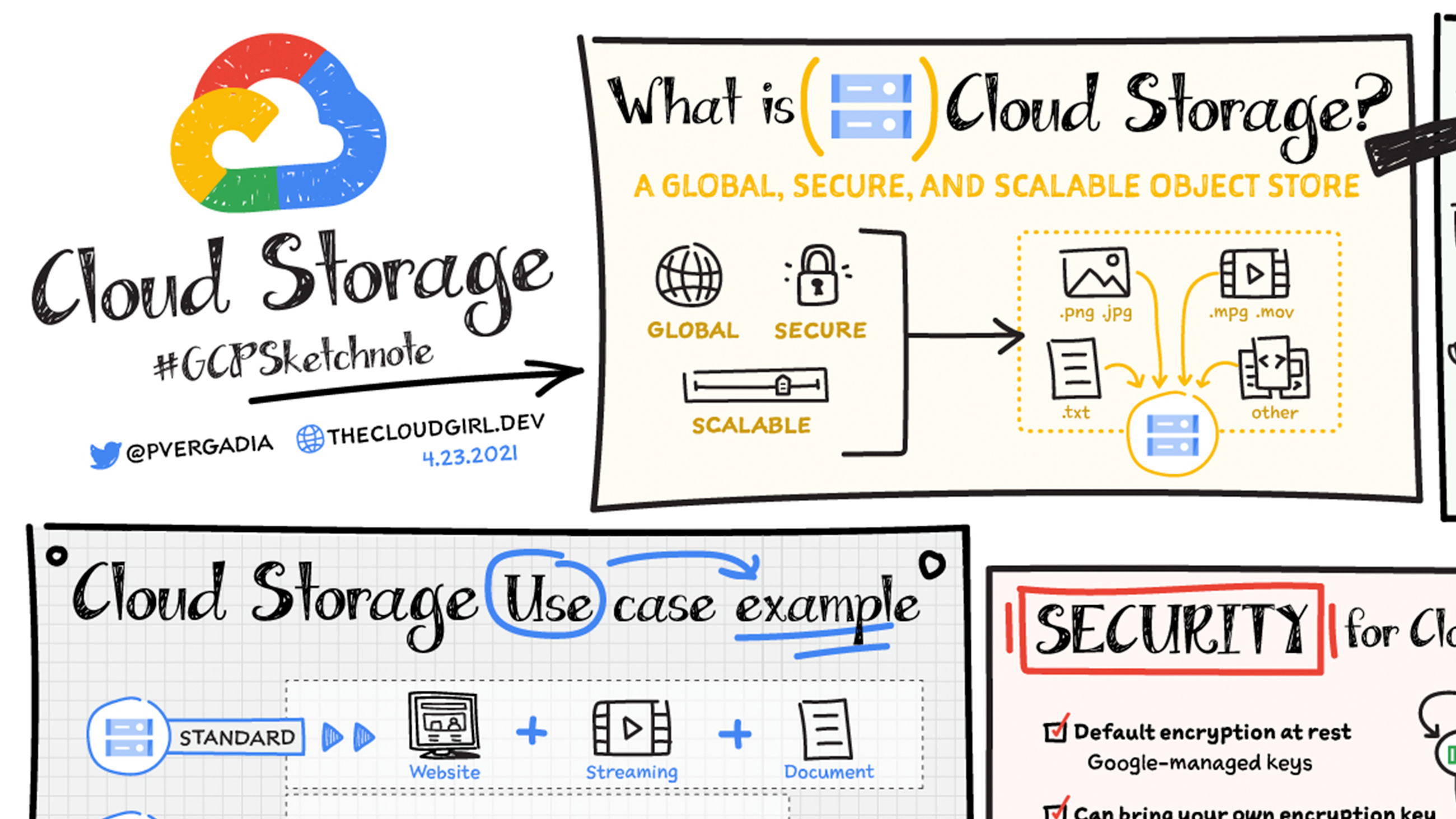

Google Cloud Storage (GCS) is a foundational service within the Google Cloud Platform, designed to provide highly scalable, durable, and available object storage for developers and businesses. It acts as a central repository for a vast array of data, from static website assets and media files to backups and archive data. Unlike traditional file systems or block storage, GCS is optimized for storing unstructured data in the form of “objects.” Each object comprises the data itself, metadata describing that data, and a unique identifier. This architecture makes it incredibly versatile for a wide range of applications, particularly those that benefit from cloud-native solutions, distributed access, and cost-effective data management.

At its core, GCS offers a robust infrastructure that can handle petabytes of data with ease, ensuring that information is accessible whenever and wherever it’s needed. Its seamless integration with other Google Cloud services further enhances its utility, enabling complex workflows involving data analytics, machine learning, and application deployment. This article will delve into the fundamental concepts of Google Cloud Storage, explore its key features and benefits, and outline common use cases that showcase its power and flexibility in the modern digital landscape.

Understanding the Fundamentals of Object Storage

Object storage is a data storage architecture that manages data as discrete units called “objects.” This paradigm differs significantly from hierarchical file systems (like those found on your computer) and from block storage (the raw volumes that back disks attached to a machine). In GCS, an object consists of the data payload, descriptive metadata, and a name that is unique within its bucket; because bucket names are themselves globally unique, the combination of bucket and object name identifies an object unambiguously. The metadata describes the payload (its content type and size, for example) and can carry custom key-value pairs that applications use to organize and process their data.

The Object Model: Data, Metadata, and Identifiers

Every piece of data uploaded to Google Cloud Storage is treated as an object. An object is not just the raw bytes of your file; it also includes associated metadata and a unique name. The data itself is the actual content, be it an image, a video, a document, or a backup file. Metadata, on the other hand, is information about the data. Some of it is editable, such as the content type, cache-control settings, and any custom key-value pairs you attach; the rest is maintained by the system, such as the object size, creation time, and checksums. The unique identifier, often referred to as the object name or key, is what you use to retrieve the object. Names may contain slashes so that they read like paths. For example, images/users/profile.jpg could be the name of a user’s profile picture. This naming convention provides a logical hierarchy, even though the underlying namespace within a bucket is flat: the slashes are simply part of the name, and tools present them as folders by listing objects that share a prefix.
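The relationship between a flat namespace and path-like names can be illustrated with a small toy model. This is a sketch in plain Python, not the GCS API: `StoredObject` and `ToyBucket` are hypothetical names invented for illustration, and the `list` method only imitates the general idea of a prefix and delimiter listing.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class StoredObject:
    """Toy stand-in for one object: payload plus metadata, addressed by name."""
    name: str                                  # full name, e.g. "images/users/profile.jpg"
    data: bytes                                # the payload itself
    content_type: str = "application/octet-stream"
    custom_metadata: Dict[str, str] = field(default_factory=dict)

    @property
    def size(self) -> int:
        """Size is derived from the payload, like system-maintained metadata."""
        return len(self.data)


class ToyBucket:
    """A flat mapping from object name to object; there are no real directories."""

    def __init__(self) -> None:
        self._objects: Dict[str, StoredObject] = {}

    def put(self, obj: StoredObject) -> None:
        self._objects[obj.name] = obj

    def list(self, prefix: str = "",
             delimiter: Optional[str] = None) -> Tuple[List[str], List[str]]:
        """Return (object names, sub-prefixes) found directly under `prefix`."""
        names, prefixes = [], set()
        for name in sorted(self._objects):
            if not name.startswith(prefix):
                continue
            rest = name[len(prefix):]
            if delimiter and delimiter in rest:
                # Deeper names are collapsed into one "folder-like" prefix.
                prefixes.add(prefix + rest.split(delimiter, 1)[0] + delimiter)
            else:
                names.append(name)
        return names, sorted(prefixes)


bucket = ToyBucket()
bucket.put(StoredObject("images/users/profile.jpg", b"...", "image/jpeg"))
bucket.put(StoredObject("images/logo.png", b"...", "image/png"))
bucket.put(StoredObject("readme.txt", b"hi", "text/plain"))
print(bucket.list(prefix="images/", delimiter="/"))
# -> (['images/logo.png'], ['images/users/'])
```

The "folder" images/users/ never exists as an entity of its own; it appears only because at least one object name starts with that prefix.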

Buckets: The Organizational Framework

The fundamental organizational unit in Google Cloud Storage is the “bucket.” Bucket names live in a single global namespace, so each name must be unique across all of Cloud Storage, not just within your project. Think of a bucket as a container for your objects. You create a bucket, give it a globally unique name, choose a location for it (a region, a dual-region, or a multi-region), and then populate it with objects. Buckets are also where you manage access permissions, set data lifecycle policies, and configure versioning. When you upload an object, you specify the bucket it should reside in. This two-level structure, with buckets containing objects, allows for logical organization and simplifies management of large datasets.
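As a rough sketch of what "specifying the bucket" looks like in code, the helper below uploads a string as an object. It assumes the `google-cloud-storage` Python client library, whose client object is passed in rather than created here; the function name `upload_text` and the bucket and object names in the usage note are hypothetical.

```python
from typing import Any, Dict, Optional


def upload_text(client: Any,
                bucket_name: str,
                object_name: str,
                text: str,
                content_type: str = "text/plain",
                custom_metadata: Optional[Dict[str, str]] = None) -> str:
    """Upload `text` as an object and return its gs:// URI.

    `client` is assumed to behave like google.cloud.storage.Client.
    """
    bucket = client.bucket(bucket_name)   # reference to the bucket
    blob = bucket.blob(object_name)       # reference to the object inside it
    if custom_metadata:
        blob.metadata = custom_metadata   # user-defined key-value metadata
    blob.upload_from_string(text, content_type=content_type)
    return f"gs://{bucket_name}/{object_name}"
```

With the library installed and credentials configured, a call might look like `upload_text(storage.Client(), "example-bucket", "notes/hello.txt", "hello")` after `from google.cloud import storage`. Passing the client in keeps the helper easy to exercise with a stub in tests.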

Key Features and Benefits of Google Cloud Storage

Google Cloud Storage offers a compelling set of features that address the diverse needs of modern data storage. Its inherent scalability, durability, and accessibility are complemented by advanced capabilities like granular access control, data lifecycle management, and integration with a broad ecosystem of cloud services. These features collectively contribute to its adoption by businesses of all sizes for a wide range of applications.

Scalability and Performance

One of the most significant advantages of GCS is its virtually limitless scalability. You don’t need to provision storage capacity in advance; GCS automatically scales to accommodate your data growth. This elasticity ensures that you can store as much data as you need without worrying about running out of space or over-provisioning. Performance is also a key consideration. GCS is designed for high throughput and low latency, making it suitable for applications that require rapid data access. Whether you are serving web content, processing large datasets for analytics, or performing real-time backups, GCS can deliver the performance your applications demand. The underlying infrastructure is globally distributed, allowing for fast access to data from different geographical locations.

Durability and Availability

Data durability and availability are paramount for any storage solution, and GCS excels in these areas. Data is stored redundantly: across availability zones within a region for regional buckets, and across separate regions for dual-region and multi-region buckets. This redundancy protects your data against hardware failures, and the geo-redundant locations additionally protect against the loss of an entire region, for example in a natural disaster. The service is designed for 99.999999999% (11 nines) of annual durability, meaning that the probability of losing an object in a given year is exceedingly low. Availability, which measures whether your data can be accessed at a given moment, is a separate guarantee. The availability SLA varies depending on the storage class and location type, but it is generally very high, supporting business continuity.
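To put the 11-nines figure in perspective, the arithmetic can be worked through directly. The sketch below assumes, as a simplification, that the design target can be read as an independent annual loss probability of 1e-11 per object; the function names are illustrative only.

```python
# Assumption: the 11-nines design target is treated as an independent
# annual loss probability per object. Real durability modelling is more involved.
ANNUAL_LOSS_PROBABILITY = 1e-11  # 1 - 0.99999999999


def expected_annual_losses(object_count: int,
                           loss_probability: float = ANNUAL_LOSS_PROBABILITY) -> float:
    """Expected number of objects lost per year under the assumption above."""
    return object_count * loss_probability


def years_per_expected_loss(object_count: int,
                            loss_probability: float = ANNUAL_LOSS_PROBABILITY) -> float:
    """Average number of years between single-object losses."""
    return 1.0 / expected_annual_losses(object_count, loss_probability)


if __name__ == "__main__":
    for count in (10_000_000, 1_000_000_000):
        print(f"{count:,} objects: "
              f"{expected_annual_losses(count):.0e} expected losses per year, "
              f"about one every {years_per_expected_loss(count):,.0f} years")
```

Under this reading, ten million stored objects correspond to an expected loss of roughly 0.0001 objects per year, or about one object every 10,000 years.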

Data Management and Security

GCS provides robust tools for managing and securing your data. Access control is handled primarily through Identity and Access Management (IAM), which lets you grant roles to users, groups, and service accounts at levels such as the project or the bucket. You can control who can read, write, or delete objects within a bucket. GCS encrypts your data at rest on the server side by default, with no configuration required. For greater control, you can use customer-managed encryption keys held in Cloud KMS or supply your own keys. Data lifecycle management is another critical feature. You can configure rules that automatically transition objects to colder storage classes (Nearline, Coldline, or Archive) to reduce costs, or that delete them once they reach a specified age. This automation is essential for managing large volumes of data and optimizing storage expenses. Versioning is also supported, allowing you to retain earlier versions of an object, which is invaluable for recovering from accidental deletions or overwrites.
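A lifecycle policy of the kind described above is usually expressed as a JSON document of rules, each pairing an action with a condition. The sketch below builds one such document in Python. The helper name `build_lifecycle_config` and the day thresholds are illustrative assumptions, and the outer `lifecycle` wrapper follows the bucket resource layout as I understand it, so check the format your tooling expects before applying it.

```python
import json


def build_lifecycle_config(nearline_after_days: int,
                           coldline_after_days: int,
                           delete_after_days: int) -> dict:
    """Build rules that move objects to colder classes, then delete them."""
    if not 0 <= nearline_after_days < coldline_after_days < delete_after_days:
        raise ValueError("ages must increase: nearline < coldline < delete")

    def set_class(storage_class: str, age_days: int) -> dict:
        # One rule: change the storage class once the object reaches age_days.
        return {
            "action": {"type": "SetStorageClass", "storageClass": storage_class},
            "condition": {"age": age_days},
        }

    return {
        "lifecycle": {
            "rule": [
                set_class("NEARLINE", nearline_after_days),
                set_class("COLDLINE", coldline_after_days),
                {"action": {"type": "Delete"},
                 "condition": {"age": delete_after_days}},
            ]
        }
    }


if __name__ == "__main__":
    # Hypothetical thresholds: 30 days, 90 days, then delete after a year.
    print(json.dumps(build_lifecycle_config(30, 90, 365), indent=2))
```

The resulting JSON can be saved to a file and applied to a bucket with the command-line tools or client libraries. Validating that the ages increase catches the common mistake of scheduling a deletion before a transition that would then never happen.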

Common Use Cases for Google Cloud Storage

The versatility of Google Cloud Storage makes it an ideal solution for a wide array of applications and workloads. Its ability to handle massive amounts of data, coupled with its integration capabilities, positions it as a cornerstone service for many cloud-native architectures. From serving static content for websites to powering machine learning pipelines, GCS plays a crucial role in modern data-driven operations.

Website Hosting and Content Delivery

Google Cloud Storage is an excellent choice for hosting static websites and delivering rich media content. You can store your HTML, CSS, JavaScript, images, and videos directly in GCS and serve them to users globally. By configuring a bucket for website hosting, GCS can automatically serve an index page and handle error pages. For faster delivery, GCS can be integrated with Google Cloud CDN (Content Delivery Network), which caches your content at edge locations closer to your users, significantly reducing latency and improving the user experience. This approach is cost-effective, highly scalable, and removes the need for traditional web servers for static assets.
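Configuring the index and error pages amounts to setting two properties on the bucket. The following is a minimal sketch that assumes the `google-cloud-storage` Python client library and a bucket object obtained from it; the helper name `enable_static_website` and the default page names are hypothetical.

```python
from typing import Any


def enable_static_website(bucket: Any,
                          main_page: str = "index.html",
                          not_found_page: str = "404.html") -> None:
    """Set the index page and the error page on a bucket.

    `bucket` is assumed to behave like google.cloud.storage.Bucket.
    """
    # Record the website settings on the local bucket object.
    bucket.configure_website(main_page_suffix=main_page,
                             not_found_page=not_found_page)
    # Send the changed properties to the service.
    bucket.patch()
```

A call might look like `enable_static_website(storage.Client().get_bucket("example-site-bucket"))`, where the bucket name is a placeholder. The objects themselves still need to be readable by your visitors, which is a separate access-control decision.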

Data Archiving and Backup

For long-term data archiving and disaster recovery, Google Cloud Storage offers cost-effective solutions. Objects can be moved to colder storage classes, the coldest being Archive, which has the lowest at-rest storage price but higher retrieval and operation costs and a 365-day minimum storage duration. Retrieval is not slower: Archive objects are available within milliseconds, like objects in the other classes, so the trade-off is in access cost rather than access time. This makes it ideal for compliance requirements, historical data retention, and infrequently accessed backups. Businesses can use GCS to back up databases, application data, or entire virtual machines, ensuring that their critical information is safely stored and recoverable in the event of an emergency. The durability and availability guarantees of GCS provide peace of mind for organizations entrusting their data to the cloud.

Big Data Analytics and Machine Learning Pipelines

In the realm of big data and machine learning, GCS serves as a vital data lake. It can store massive datasets from various sources, such as logs, sensor data, and user activity. Tools like Google Cloud Dataproc, BigQuery, and Vertex AI can then access this data directly from GCS for processing, analysis, and model training. The ability to scale storage independently of compute resources allows data scientists and engineers to experiment with large datasets without being constrained by storage limitations. GCS’s integration with these services streamlines the entire data science workflow, from data ingestion and storage to model development and deployment. The granular access control and versioning features are also crucial for managing the complex data pipelines involved in machine learning.

Conclusion

Google Cloud Storage is a powerful, flexible, and cost-effective object storage service that forms the backbone of many cloud-native applications and data strategies. Its inherent scalability, exceptional durability, and high availability make it a trusted choice for storing and managing vast amounts of data. The comprehensive set of features, including granular security controls, intelligent data lifecycle management, and seamless integration with the broader Google Cloud ecosystem, empower developers and businesses to build innovative solutions and optimize their data operations. Whether you are hosting a website, archiving critical business data, or fueling cutting-edge machine learning initiatives, Google Cloud Storage provides the robust foundation necessary to succeed in today’s data-intensive world. Understanding its capabilities and best practices is essential for anyone looking to leverage the full potential of cloud computing.