The rapid advancement of technology, particularly in artificial intelligence, autonomous systems, and sophisticated data analytics, has made ethical considerations a foundational element of responsible innovation rather than an afterthought. As we push the boundaries of what’s possible, understanding and implementing robust ethical decision-making frameworks becomes essential to ensuring that technological progress benefits humanity without inadvertently causing harm or deepening societal inequities. This article examines the core principles and practical applications of ethical decision-making in tech and innovation.

The Pillars of Ethical Decision-Making in Technology

At its heart, ethical decision-making in technology revolves around navigating the moral implications of design, development, deployment, and use. This isn’t a monolithic concept; rather, it’s built upon several interconnected pillars that guide individuals and organizations through complex dilemmas.

Fairness and Equity

One of the most critical ethical considerations is ensuring that technological innovations do not perpetuate or amplify existing biases and inequalities. This involves actively working to identify and mitigate biases embedded in data sets, algorithms, and design choices.

Algorithmic Bias and Its Mitigation

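Algorithms are trained on data, and if that data reflects historical societal biases, whether racial, gender-based, or socioeconomic, the algorithm is likely to learn and replicate them. This can lead to discriminatory outcomes in areas like hiring, loan applications, and even criminal justice. Ethical decision-making requires a proactive approach: auditing data sources, developing bias detection tools, and applying fairness-aware machine learning. Technical methods include reweighing training data, which weights examples so that group membership and outcome are statistically independent, and adversarial debiasing, which penalizes a model whenever a second, adversarial model can predict a protected attribute from its outputs. Organizational measures, such as ensuring diverse representation in development teams, complement these methods by surfacing blind spots that purely technical fixes miss.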
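Reweighing, one of the techniques named above, is simple enough to sketch directly. The Python below is a minimal illustration, assuming a single protected attribute and binary labels where 1 is the favorable outcome; the function names are hypothetical rather than taken from any fairness library. Each example receives the weight P(group) × P(label) / P(group, label), so that group membership and outcome become statistically independent in the weighted data, and `positive_rate` doubles as a basic bias check.

```python
from collections import Counter

def reweighing_weights(groups, labels):
    """Return one weight per example so that group and label are
    statistically independent in the weighted training set."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    weights = []
    for g, y in zip(groups, labels):
        # Frequency expected if group and label were independent,
        # divided by the frequency actually observed in the data.
        expected = (group_counts[g] / n) * (label_counts[y] / n)
        observed = joint_counts[(g, y)] / n
        weights.append(expected / observed)
    return weights

def positive_rate(groups, labels, group, weights=None):
    """(Weighted) share of favorable labels (1) within one group."""
    if weights is None:
        weights = [1.0] * len(labels)
    total = sum(w for g, w in zip(groups, weights) if g == group)
    positive = sum(w for g, y, w in zip(groups, labels, weights) if g == group and y == 1)
    return positive / total

# Hypothetical toy data: group "a" receives far more favorable labels than "b".
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]
weights = reweighing_weights(groups, labels)
print(positive_rate(groups, labels, "a"), positive_rate(groups, labels, "b"))  # 0.75 0.25
print(positive_rate(groups, labels, "a", weights), positive_rate(groups, labels, "b", weights))  # both approximately 0.5
```

Before reweighing, the favorable-outcome rates differ sharply between the two groups; with the weights applied, both match the overall rate. Many scikit-learn estimators accept such weights through the `sample_weight` argument of `fit`.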

Access and Digital Divide

The benefits of technological advancements should be accessible to all segments of society. Ethical innovation considers the digital divide and strives to create solutions that are inclusive, affordable, and usable by individuals with varying levels of technological literacy and access. This might involve designing user interfaces with accessibility in mind, developing low-cost solutions, or advocating for digital literacy programs.
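Accessibility can be made concrete and testable. For example, the W3C's Web Content Accessibility Guidelines (WCAG 2.x) define a contrast ratio between text and background colors and require at least 4.5:1 for normal-size text at conformance level AA. The following is a minimal Python check, assuming colors are given as sRGB tuples of 0-255 integers; the helper names are illustrative.

```python
def relative_luminance(rgb):
    """WCAG 2.x relative luminance of an sRGB color given as 0-255 integers."""
    def linearize(channel):
        c = channel / 255
        # Undo the sRGB gamma curve to get linear light.
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4
    r, g, b = (linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(foreground, background):
    """Ranges from 1:1 (identical colors) to 21:1 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)

def meets_aa_normal_text(foreground, background):
    """WCAG level AA asks for at least 4.5:1 for normal-size text."""
    return contrast_ratio(foreground, background) >= 4.5

print(round(contrast_ratio((0, 0, 0), (255, 255, 255)), 2))  # 21.0
print(meets_aa_normal_text((119, 119, 119), (255, 255, 255)))  # False
```

A check like this can run in a design-system test suite so that low-contrast color pairs are caught before release. The mid-gray #767676 on white just passes AA, while #777777 just fails.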

Transparency and Explainability

In an era of increasingly complex “black box” AI systems, transparency and explainability are vital for building trust and accountability. Understanding how a system arrives at its decisions is crucial for identifying potential errors, biases, and unintended consequences.

The Need for Explainable AI (XAI)

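When AI systems make decisions that significantly affect individuals’ lives, such as medical diagnoses or credit scoring, those decisions must be explainable. Explainable AI (XAI) develops methods that help humans understand, and appropriately trust, the outputs of machine learning models. Common techniques include feature importance analysis, which measures how much each input contributes to a model’s predictions; LIME (Local Interpretable Model-agnostic Explanations), which fits a simple, interpretable model around a single prediction to approximate the complex model’s behavior locally; and SHAP (SHapley Additive exPlanations), which assigns each feature a share of a prediction using Shapley values from cooperative game theory.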
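To make the idea behind SHAP concrete, the sketch below computes exact Shapley values for a single prediction by enumerating every coalition of features. It is a simplification under stated assumptions: `model` is any Python function of a feature list, and a feature "absent" from a coalition is replaced by a single baseline value. The SHAP library itself typically averages over a background dataset and relies on sampling or model-specific algorithms, because exact enumeration grows as 2^n.

```python
from itertools import combinations
from math import factorial

def shapley_values(model, x, baseline):
    """Exact Shapley attributions for model(x) relative to model(baseline).

    Features outside a coalition take their baseline value. The cost is
    exponential in the number of features, so this suits only small inputs.
    """
    n = len(x)

    def value(coalition):
        # Evaluate the model with non-coalition features set to the baseline.
        point = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(point)

    phis = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):  # coalitions of the other features, sizes 0..n-1
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = set(subset)
                # Marginal contribution of feature i to this coalition.
                phi += weight * (value(coalition | {i}) - value(coalition))
        phis.append(phi)
    return phis

def linear_model(p):
    return 2 * p[0] + 3 * p[1] - p[2]

print(shapley_values(linear_model, [1, 2, 3], [0, 0, 0]))  # approximately [2, 6, -3]
```

For a linear model the attributions reduce to weight × (value - baseline), and in every case they sum to the difference between the prediction and the baseline prediction, which is the "additive" property the SHAP name refers to.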

Openness in Data and Development

While proprietary information is a reality, a commitment to transparency in development processes and data usage, where feasible and appropriate, fosters greater trust. This can include open-sourcing certain components, publishing research methodologies, and clearly articulating the intended use and limitations of a technology.

Accountability and Responsibility

As technologies become more autonomous and influential, establishing clear lines of accountability when things go wrong is essential. This requires a thoughtful consideration of who is responsible – the developer, the deployer, the user, or the technology itself.

Defining Ownership and Liability in Autonomous Systems

The rise of autonomous vehicles, drones, and AI-powered decision systems raises novel questions about liability in the event of accidents or failures. Ethical frameworks must address how to assign responsibility, ensuring that victims are compensated and that lessons are learned to prevent future incidents. This often involves a complex interplay of legal, technical, and ethical considerations.

Corporate Social Responsibility (CSR) in Tech

Companies developing innovative technologies have a profound responsibility to consider the broader societal impact of their creations. This extends beyond legal compliance to proactively engaging with ethical implications, investing in responsible AI research, and fostering a culture of ethical awareness within the organization.

Privacy and Data Protection

The collection and use of vast amounts of personal data underpin many technological innovations. Upholding individuals’ right to privacy and ensuring the secure and ethical handling of their data is therefore a core ethical obligation.

Data Minimization and Purpose Limitation

Ethical data practices advocate for collecting only the data that is strictly necessary for a specific, clearly defined purpose, and retaining it only for as long as it is needed. This principle of data minimization and purpose limitation helps to reduce the risk of data breaches and misuse.
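Purpose limitation and retention can be enforced in code rather than left to policy documents alone. In this minimal sketch, the purposes, field names, and retention periods are hypothetical placeholders; the point is that each purpose declares the fields it needs, everything else is dropped before use, and records are flagged once their retention period has passed.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical policy: the fields each purpose may use and how long they may be kept.
PURPOSE_POLICY = {
    "order_fulfillment": {
        "fields": {"name", "shipping_address", "order_id"},
        "retention": timedelta(days=90),
    },
    "newsletter": {"fields": {"email"}, "retention": timedelta(days=365)},
}

def minimize(record, purpose):
    """Keep only the fields the declared purpose needs; reject undeclared purposes."""
    policy = PURPOSE_POLICY.get(purpose)
    if policy is None:
        raise ValueError(f"no declared purpose: {purpose!r}")
    return {key: value for key, value in record.items() if key in policy["fields"]}

def is_expired(collected_at, purpose, now=None):
    """True once data held for this purpose has outlived its retention period."""
    if now is None:
        now = datetime.now(timezone.utc)
    return now - collected_at > PURPOSE_POLICY[purpose]["retention"]

record = {
    "name": "Ada",
    "email": "ada@example.com",
    "shipping_address": "1 Main St",
    "order_id": 42,
    "birthdate": "1990-01-01",  # not needed by any declared purpose, so never passed on
}
print(minimize(record, "newsletter"))  # {'email': 'ada@example.com'}
```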

User Consent and Control

Informed consent is a cornerstone of ethical data handling. Users should have a clear understanding of what data is being collected, how it will be used, and who it will be shared with, and they should have meaningful control over their personal information. This includes the right to access, rectify, and erase their data.
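The consent and control requirements above can be sketched as a small in-memory store. The class and method names are hypothetical, and a real system would also need authentication, audit logging, and deletion from backups and downstream copies; the sketch only shows the shape of consent-gated processing and of the access, rectification, and erasure operations.

```python
class PersonalDataStore:
    """Toy store illustrating consent checks and the rights to access,
    rectify, and erase. Hypothetical names, not a real library API."""

    def __init__(self):
        self._data = {}      # user_id -> dict of personal fields
        self._consent = {}   # user_id -> set of purposes the user agreed to

    def collect(self, user_id, fields, purposes):
        # Store the data together with the purposes the user consented to.
        self._data.setdefault(user_id, {}).update(fields)
        self._consent.setdefault(user_id, set()).update(purposes)

    def use_for(self, user_id, purpose):
        # Refuse processing for any purpose the user has not agreed to.
        if purpose not in self._consent.get(user_id, set()):
            raise PermissionError(f"no consent from {user_id} for {purpose!r}")
        return dict(self._data[user_id])

    def withdraw_consent(self, user_id, purpose):
        self._consent.get(user_id, set()).discard(purpose)

    def access(self, user_id):
        # Right of access: a copy of everything held about the user.
        return {
            "data": dict(self._data.get(user_id, {})),
            "consented_purposes": sorted(self._consent.get(user_id, set())),
        }

    def rectify(self, user_id, field, value):
        # Right to rectification: correct a value that is already stored.
        if field not in self._data.get(user_id, {}):
            raise KeyError(f"{field!r} not held for {user_id}")
        self._data[user_id][field] = value

    def erase(self, user_id):
        # Right to erasure: remove both the data and the consent record.
        self._data.pop(user_id, None)
        self._consent.pop(user_id, None)
```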

Safety and Security

Ensuring that technological systems are robust, reliable, and secure against malicious attacks is not just a technical challenge but an ethical obligation. The failure to prioritize safety and security can have devastating consequences.

Cybersecurity as an Ethical Mandate

The increasing interconnectedness of systems makes them vulnerable to cyber threats. Developers and deployers have an ethical duty to implement strong cybersecurity measures to protect systems and user data from breaches, manipulation, and disruption. This includes regular vulnerability assessments, secure coding practices, and prompt patching of discovered flaws.
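Secure coding practices are often small, concrete habits. A classic example is using parameterized queries instead of building SQL from user input, which prevents SQL injection. The sketch below uses Python's built-in `sqlite3` module with an in-memory database; the table and function names are illustrative.

```python
import sqlite3

def find_user_unsafe(conn, username):
    # DON'T: string formatting lets the input rewrite the SQL statement itself.
    query = f"SELECT id, username FROM users WHERE username = '{username}'"
    return conn.execute(query).fetchall()

def find_user(conn, username):
    # DO: the "?" placeholder makes the driver treat the input strictly as data.
    return conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    ).fetchall()

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
conn.executemany("INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)])

malicious = "x' OR '1'='1"
print(len(find_user_unsafe(conn, malicious)))  # 2: every row leaks
print(len(find_user(conn, malicious)))         # 0: no user has that literal name
```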

Human Oversight and Intervention

For critical systems, especially those with the potential for significant harm, maintaining human oversight and the ability to intervene remains an important ethical safeguard. This is particularly relevant for AI systems that can make decisions faster than a person can review them one by one.
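One common way to build in human oversight is to route some automated decisions to a person before they take effect. The policy below is a hypothetical example, not a standard: adverse or high-impact outcomes always go to human review, as does any decision whose model confidence falls below an assumed threshold of 0.9.

```python
from dataclasses import dataclass

@dataclass
class Decision:
    outcome: str        # e.g. "approve" or "deny"
    confidence: float   # model's confidence in [0, 1]
    high_impact: bool   # whether the decision significantly affects a person

def route(decision, confidence_threshold=0.9):
    """Return who finalizes the decision: the system or a human reviewer.

    Hypothetical policy: adverse or high-impact outcomes, and anything the
    model is unsure about, go to a person who can override the system.
    """
    if decision.high_impact or decision.outcome == "deny":
        return "human_review"
    if decision.confidence < confidence_threshold:
        return "human_review"
    return "automated"

print(route(Decision("approve", 0.97, high_impact=False)))  # automated
print(route(Decision("deny", 0.99, high_impact=False)))     # human_review
```

Routing adverse outcomes to people regardless of confidence reflects the idea that the cost of a wrong denial falls on the affected individual; the exact rules and threshold would be set per system.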

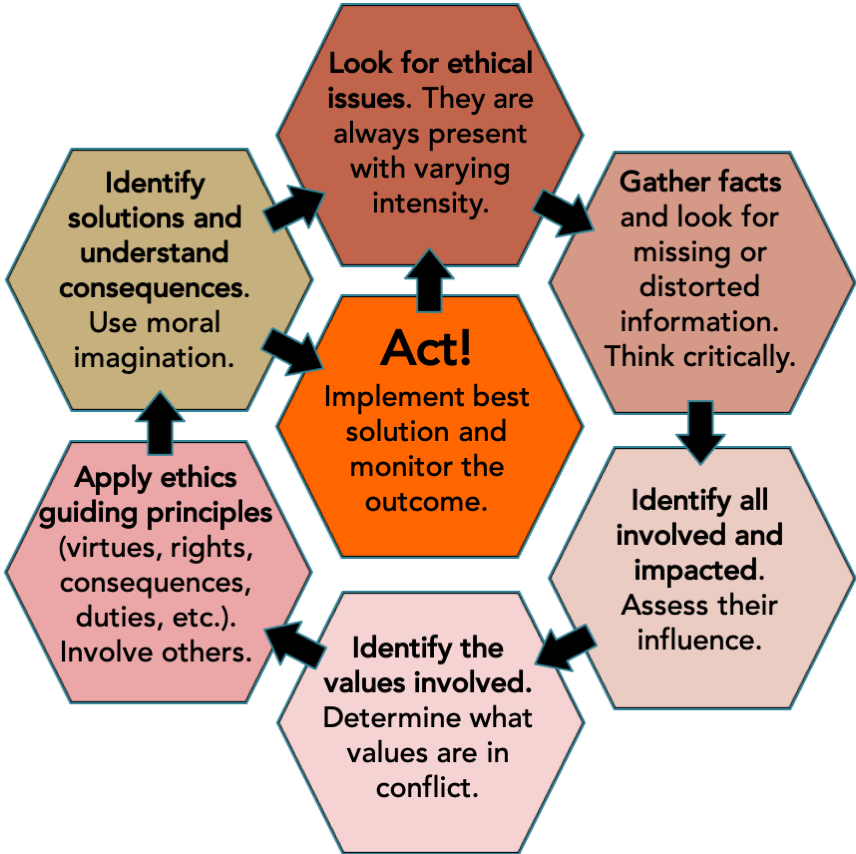

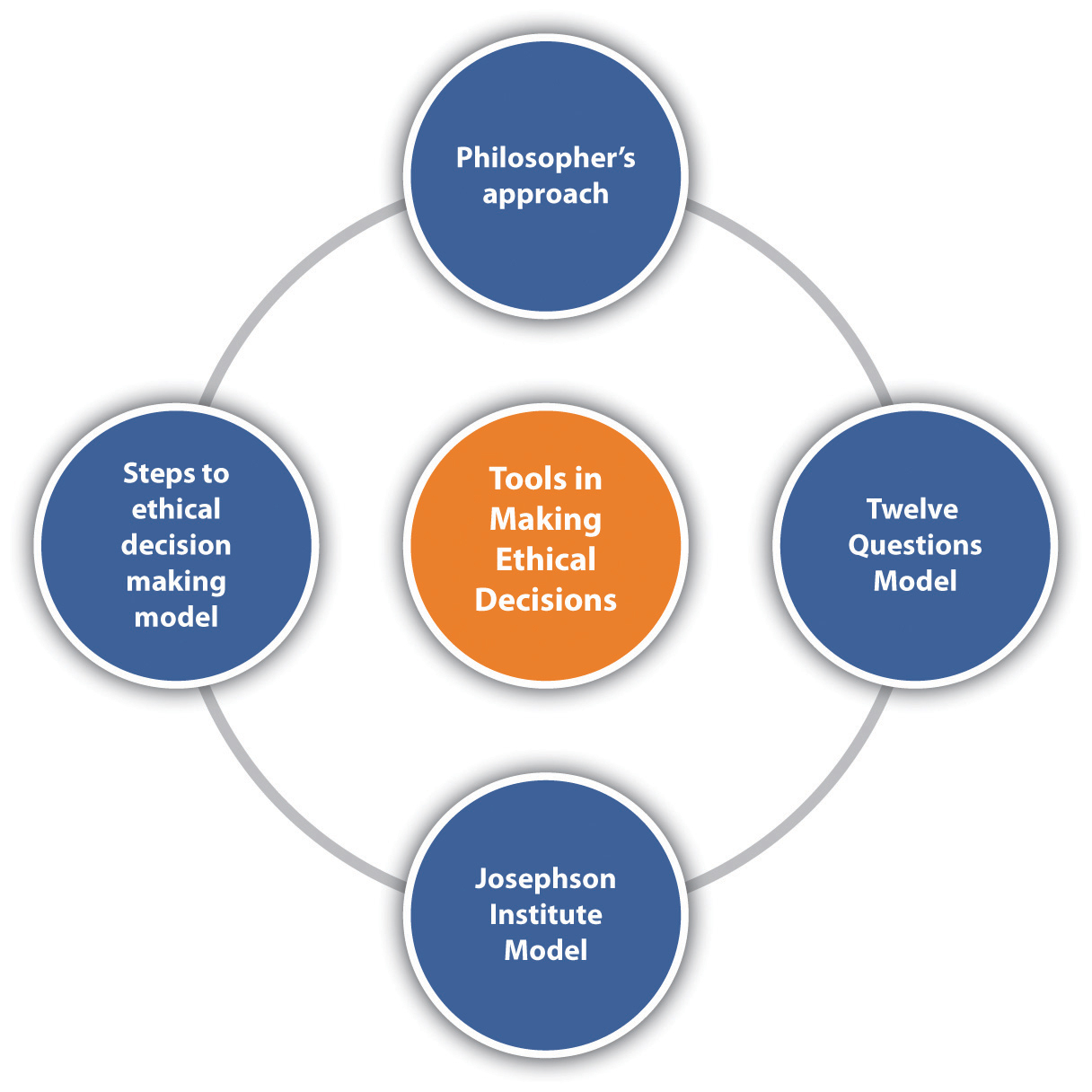

Implementing Ethical Decision-Making Frameworks

Beyond understanding the pillars, effective ethical decision-making requires practical frameworks and processes.

Proactive Ethical Design and Development

Ethical considerations should be integrated from the very inception of a project, not as an add-on. This “ethics by design” approach ensures that potential moral issues are identified and addressed early in the development lifecycle.

Ethical Impact Assessments

Similar to environmental impact assessments, ethical impact assessments can be conducted to systematically evaluate the potential ethical risks and benefits of a new technology or application before its widespread deployment. This involves stakeholder consultations, scenario planning, and risk analysis.
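The risk-analysis step of an ethical impact assessment is often recorded as a likelihood-by-severity matrix. The sketch below assumes 1-5 scales for both and illustrative cut-offs for "high" and "medium" ratings; the example risks are made up for demonstration, and a real assessment would define its own scales together with stakeholders.

```python
# Hypothetical scales: likelihood and severity are each rated 1 (lowest) to 5 (highest).
HIGH_CUTOFF = 15    # assumed: scores of 15-25 are rated "high"
MEDIUM_CUTOFF = 8   # assumed: scores of 8-14 are rated "medium"

def risk_score(likelihood, severity):
    """Combine likelihood and severity into a single 1-25 score."""
    for value in (likelihood, severity):
        if not 1 <= value <= 5:
            raise ValueError("likelihood and severity must be between 1 and 5")
    return likelihood * severity

def rating(score):
    if score >= HIGH_CUTOFF:
        return "high"
    if score >= MEDIUM_CUTOFF:
        return "medium"
    return "low"

def prioritize(risks):
    """Return (description, score, rating) tuples, highest score first."""
    scored = [(desc, risk_score(likelihood, severity)) for desc, likelihood, severity in risks]
    scored.sort(key=lambda item: item[1], reverse=True)
    return [(desc, score, rating(score)) for desc, score in scored]

# Made-up example entries: (description, likelihood, severity)
risks = [
    ("Users misread automated output as final", 3, 2),
    ("Model performs worse for non-native speakers", 4, 4),
    ("Released dataset allows re-identification", 2, 5),
]
for desc, score, level in prioritize(risks):
    print(f"{level:<6} {score:>2}  {desc}")
```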

Ethical Review Boards and Committees

Establishing internal or external ethical review boards can provide an independent layer of oversight. These committees, composed of diverse experts and stakeholders, can review projects, offer guidance, and help resolve ethical quandaries.

Cultivating an Ethical Culture

Technology companies and research institutions must foster a culture where ethical considerations are discussed openly, valued, and integrated into everyday decision-making.

Training and Education

Providing comprehensive ethical training for all employees, from engineers and designers to product managers and executives, is essential. This training should cover relevant ethical theories, practical dilemmas, and company policies.

Whistleblower Protections and Reporting Mechanisms

Creating safe and effective channels for employees to report ethical concerns without fear of retaliation is crucial for identifying and addressing issues before they escalate.

Continuous Monitoring and Adaptation

The ethical landscape is not static. Technologies evolve, societal norms shift, and new challenges emerge. Therefore, ethical decision-making must be an ongoing process of monitoring, evaluation, and adaptation.

Post-Deployment Audits and Feedback Loops

Regularly auditing deployed systems for unintended consequences, bias, and security vulnerabilities is vital. Establishing feedback loops from users and affected communities can provide invaluable insights for iterative improvement.
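A simple post-deployment fairness audit compares how often each group receives a favorable decision in production logs. The sketch below flags groups whose selection rate is below 80% of the best-off group's rate, a rule of thumb known as the four-fifths rule in US employment-selection guidance. It is a coarse screening signal rather than proof of discrimination or of fairness, and the log format (pairs of group and outcome) is an assumption.

```python
from collections import defaultdict

def selection_rates(decisions):
    """decisions: iterable of (group, was_selected) pairs from production logs."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}

def audit_disparate_impact(decisions, threshold=0.8):
    """Flag groups whose selection rate is below `threshold` times the
    highest group's rate (0.8 corresponds to the four-fifths rule)."""
    rates = selection_rates(decisions)
    best = max(rates.values(), default=0.0)
    if best == 0:
        return rates, []  # nobody was selected, so the ratios are undefined
    flagged = sorted(group for group, rate in rates.items() if rate / best < threshold)
    return rates, flagged

log = [("a", True)] * 6 + [("a", False)] * 4 + [("b", True)] * 3 + [("b", False)] * 7
rates, flagged = audit_disparate_impact(log)
print(rates, flagged)  # {'a': 0.6, 'b': 0.3} ['b']
```

Running such a check on a schedule, and routing flagged results to the review processes described earlier, turns a one-off audit into the kind of feedback loop this section calls for.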

Staying Abreast of Emerging Ethical Debates

The field of technology ethics is constantly evolving. Engaging with academic research, public discourse, and policy developments related to AI, data privacy, and other cutting-edge areas is necessary to make informed and forward-thinking decisions.

In conclusion, ethical decision-making in tech and innovation is a multifaceted and ongoing commitment. It requires a deep understanding of core ethical principles, the implementation of robust frameworks, and a dedication to fostering a culture of responsibility. By prioritizing ethics, we can harness the transformative power of technology to create a future that is not only innovative but also just, equitable, and beneficial for all.