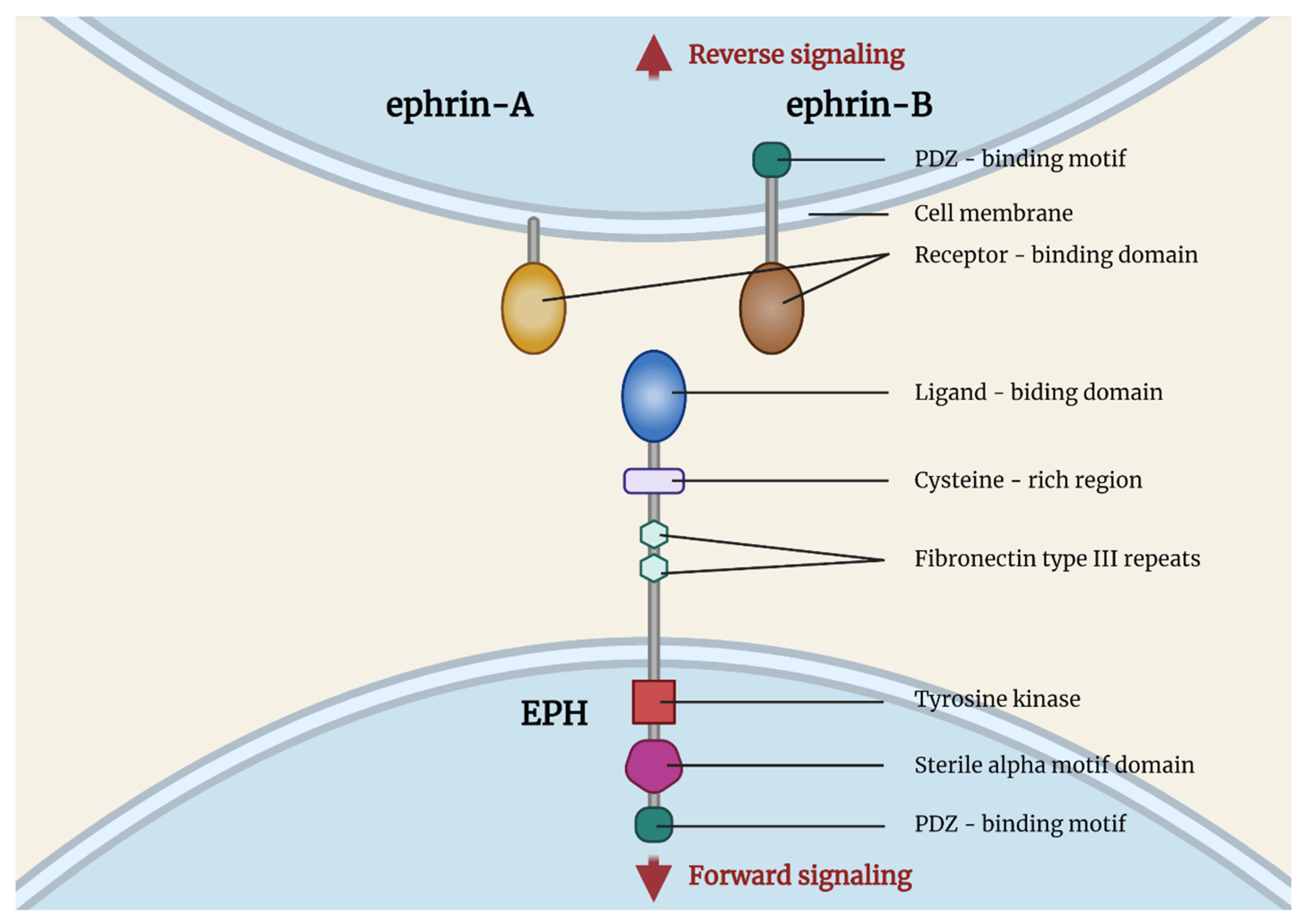

The realm of drone technology is in constant flux, evolving rapidly from simple remote-controlled toys to sophisticated autonomous systems capable of complex missions. At the forefront of this evolution lies a critical area of innovation that this article refers to by the acronym EPH: Environmental Perception & Haptics. The concept represents a shift in how drones interact with their surroundings and how human operators interface with these intelligent machines. EPH is not merely an incremental upgrade; it is a foundational technology that underpins the next generation of autonomous flight, advanced data acquisition, and intuitive human-drone collaboration.

At its core, EPH refers to the symbiotic relationship between a drone’s ability to comprehensively perceive and understand its environment, and its capacity to communicate this understanding, often through haptic (tactile) feedback, to either an onboard AI or a remote human operator. This goes far beyond basic obstacle detection; it encompasses a holistic, real-time spatial awareness coupled with a sophisticated feedback loop that profoundly impacts decision-making, control, and operational safety. In essence, EPH endows drones with a heightened sense of their world and provides a more natural, intuitive means for humans to engage with their complex operations, propelling the industry towards truly intelligent and collaborative aerial systems.

The Core Pillars of Environmental Perception

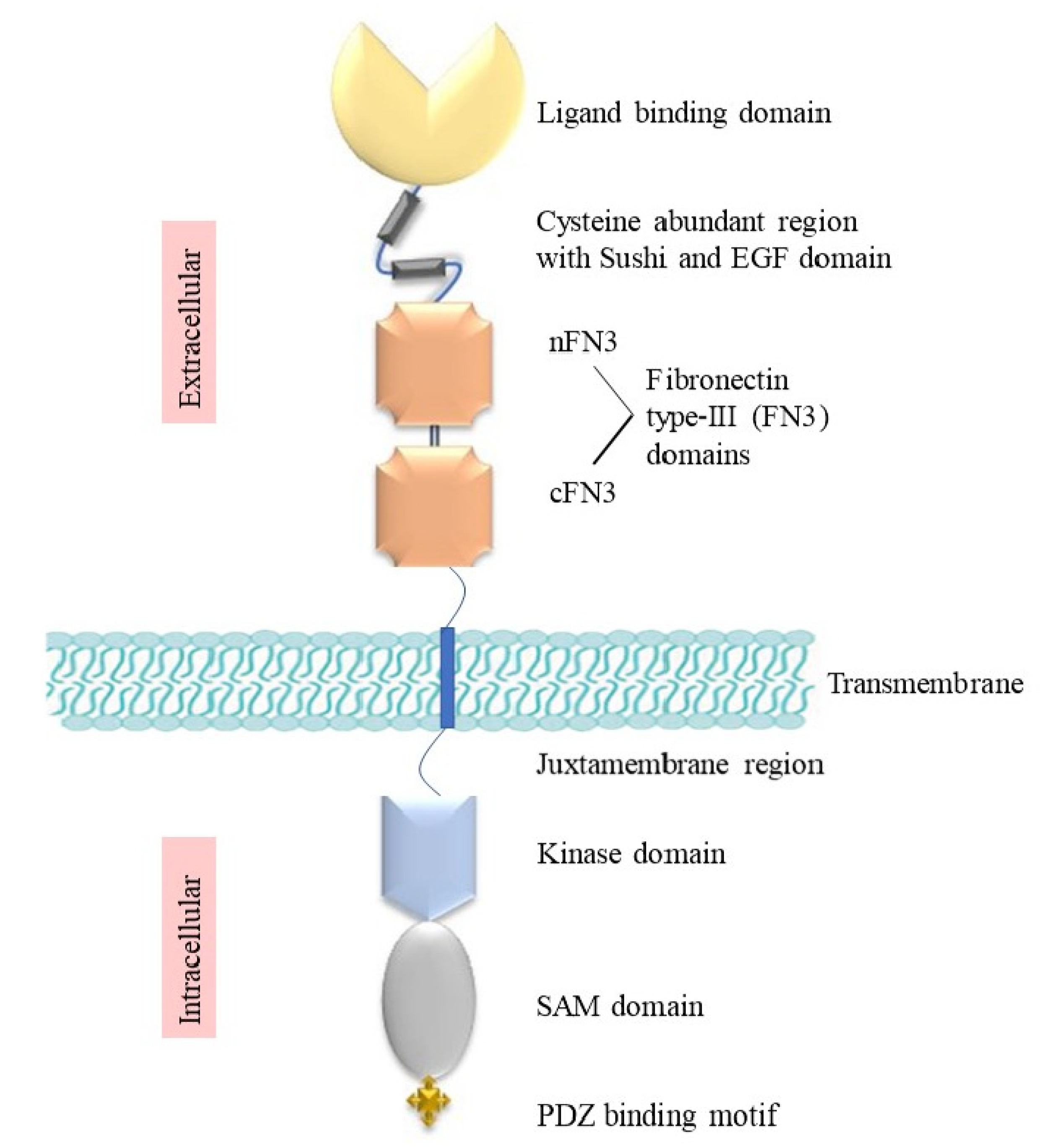

Environmental Perception, the “EP” in EPH, is the cornerstone of intelligent drone operation. It involves equipping drones with the sensory capabilities and computational intelligence required to build and maintain a dynamic, accurate understanding of their operational space. This sophisticated awareness is crucial for tasks ranging from autonomous navigation to precision mapping and complex industrial inspections.

Advanced Sensor Fusion: Building a Comprehensive Worldview

At the heart of environmental perception is the integration of diverse sensor technologies, known as sensor fusion. Any single sensor type provides only a limited perspective; reliable perception emerges from combining the complementary strengths of multiple modalities.

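- Lidar (Light Detection and Ranging): Provides highly accurate 3D point clouds, crucial for precise mapping, distance measurement, and creating detailed topographical models. Because it supplies its own laser illumination, it performs consistently across varying ambient light conditions, including darkness. A single pulse can also return multiple echoes, letting lidar register the ground and structures through gaps in light foliage, an advantage in complex outdoor environments.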

- Radar (Radio Detection and Ranging): Offers robust detection, particularly in adverse weather such as fog, rain, or dust, where optical sensors struggle. Radar excels at long-range object detection and, via the Doppler effect, direct measurement of relative velocity, providing critical data for collision avoidance.

- Vision-based Systems (RGB & Stereo Cameras): Essential for capturing high-resolution visual data, enabling tasks such as object recognition, visual SLAM (Simultaneous Localization and Mapping), and optical flow for precise positioning. Stereo cameras recover depth by measuring the disparity between two horizontally offset views, much as human binocular vision does.

- Thermal Cameras: Detect heat signatures, invaluable for search and rescue, wildlife monitoring, security applications, and identifying anomalies in industrial inspections, such as overheating components.

- Ultrasonic Sensors: Offer short-range, highly accurate proximity sensing, ideal for close-quarters maneuvering and precise landing in cluttered environments.
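To make the ranging principles behind two of these sensors concrete, here is a minimal Python sketch. It assumes a rectified stereo pair (depth from disparity, Z = f·B/d) and an ultrasonic sensor that reports round-trip echo time. The function names and example numbers are hypothetical, and the speed of sound is an approximate value for dry air at about 20 °C.

```python
# Hypothetical helper functions illustrating two ranging principles; not a real sensor API.

SPEED_OF_SOUND_M_S = 343.0  # approximate, dry air at about 20 °C; varies with temperature


def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point seen by a rectified stereo pair: Z = f * B / d.

    focal_px: focal length in pixels; baseline_m: distance between the two cameras;
    disparity_px: horizontal shift of the point between left and right images.
    """
    if disparity_px <= 0:
        # Zero disparity means the point is effectively at infinity.
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def ultrasonic_range_m(echo_time_s: float, speed_m_s: float = SPEED_OF_SOUND_M_S) -> float:
    """Range from round-trip echo time; halved because the pulse travels out and back."""
    return speed_m_s * echo_time_s / 2.0


if __name__ == "__main__":
    # Example values are illustrative only.
    print(stereo_depth_m(focal_px=700.0, baseline_m=0.12, disparity_px=21.0))
    print(ultrasonic_range_m(echo_time_s=0.01))
```

Because Z is inversely proportional to d, a one-pixel disparity error costs more and more depth accuracy as distance grows, which is one reason stereo is usually paired with lidar or radar at longer ranges.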

The data from these disparate sensors are continuously collected, timestamped, and then fused together by advanced algorithms. This fusion process resolves ambiguities, reduces noise, and generates a unified, more reliable representation of the environment than any single sensor could provide, creating a rich, multi-layered spatial model in real-time.
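One simple way to see how fusion "reduces noise" is inverse-variance weighting of time-aligned range estimates. The Python sketch below assumes each sensor's error is independent, unbiased, and roughly Gaussian with a known variance. The `RangeReading` type and the variances in the example are hypothetical. Production systems typically use Kalman-style filters that also track motion over time, but for a single static quantity the Kalman measurement update reduces to this same weighting.

```python
from dataclasses import dataclass


@dataclass
class RangeReading:
    """Hypothetical container for one time-aligned distance estimate."""
    sensor: str
    distance_m: float
    variance_m2: float  # assumed noise variance (sigma squared) for this sensor


def fuse(readings: list[RangeReading]) -> tuple[float, float]:
    """Inverse-variance weighted fusion of independent, unbiased estimates.

    Returns (fused_distance_m, fused_variance_m2). The fused variance is never
    larger than the smallest input variance.
    """
    if not readings:
        raise ValueError("need at least one reading")
    if any(r.variance_m2 <= 0 for r in readings):
        raise ValueError("variances must be positive")
    weights = [1.0 / r.variance_m2 for r in readings]
    total_weight = sum(weights)
    fused = sum(w * r.distance_m for w, r in zip(weights, readings)) / total_weight
    return fused, 1.0 / total_weight


if __name__ == "__main__":
    # Illustrative numbers: a precise lidar return and a noisier radar return.
    estimate, variance = fuse([
        RangeReading("lidar", 10.0, 0.01),
        RangeReading("radar", 11.0, 0.04),
    ])
    print(estimate, variance)  # approx. 10.2 and 0.008
```

The fused estimate leans toward the more trustworthy lidar reading, and its variance (0.008) is lower than either sensor's alone.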

AI-Driven Object Recognition & Classification: Understanding the Environment

Raw sensor data is just information; it becomes perception when processed and interpreted. This is where Artificial Intelligence and Machine Learning algorithms play a transformative role.

- Semantic Segmentation: AI models analyze visual data to categorize every pixel in an image, identifying roads, buildings, trees, sky, and even individual objects like vehicles or people. This allows the drone to understand the meaning of different parts of its environment.

- Object Detection & Tracking: Beyond simply knowing what is present, EPH systems use AI to locate specific objects within the sensor data stream, classify them (e.g., human, animal, vehicle, power line), and continuously track their movement. This is vital for dynamic obstacle avoidance, following targets, or monitoring specific activities.

- Environmental State Estimation: AI algorithms process a continuous stream of sensor data to estimate the overall state of the environment, including weather conditions (wind speed, precipitation), terrain types, and potential hazards. This intelligence allows the drone to adapt its flight parameters and mission strategy in real-time, optimizing performance and safety. The ability to distinguish between static obstacles and dynamic elements (like a moving car or a flock of birds) is paramount for truly intelligent navigation.
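The tracking step usually begins with data association: deciding which new detection belongs to which existing track. The sketch below uses greedy intersection-over-union (IoU) matching on 2D bounding boxes, a simplified stand-in for the Hungarian assignment plus motion prediction used by trackers such as SORT. The function names and the 0.3 IoU threshold are illustrative assumptions, and the detector that produces the boxes is out of scope.

```python
Box = tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes (0 = disjoint, 1 = identical)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def associate(tracks: dict[int, Box], detections: list[Box], min_iou: float = 0.3):
    """Greedily pair tracks with detections, highest IoU first.

    Returns (matches, unmatched): matches maps track_id -> detection index;
    unmatched lists detection indices that could start new tracks.
    """
    candidates = sorted(
        ((iou(box, det), tid, di)
         for tid, box in tracks.items()
         for di, det in enumerate(detections)),
        reverse=True,
    )
    matches: dict[int, int] = {}
    used: set[int] = set()
    for score, tid, di in candidates:
        if score < min_iou:
            break  # remaining candidates overlap too little
        if tid in matches or di in used:
            continue  # track or detection already assigned
        matches[tid] = di
        used.add(di)
    unmatched = [di for di in range(len(detections)) if di not in used]
    return matches, unmatched


if __name__ == "__main__":
    tracks = {1: (0, 0, 10, 10), 2: (50, 50, 60, 60)}
    detections = [(51, 51, 61, 61), (1, 1, 11, 11), (100, 100, 110, 110)]
    print(associate(tracks, detections))
```

Here each existing track picks up the slightly shifted box nearest to it, and the third detection is left unmatched as a candidate new object. Retiring tracks that go unmatched for several frames is omitted for brevity.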

Real-time Situational Awareness: Dynamic Environmental Modeling

The ultimate goal of environmental perception is to furnish the drone with real-time situational awareness. This involves not just processing current data but also building a dynamic, continuously updated 3D map of its surroundings.

- SLAM (Simultaneous Localization and Mapping): Advanced SLAM algorithms allow drones to simultaneously build a map of an unknown environment while tracking their own position within that map. This is crucial for GPS-denied environments (indoors, urban canyons) and for creating highly accurate spatial datasets.

- Predictive Modeling: EPH systems go a step further by using AI to predict the future states of dynamic elements in the environment. For instance, anticipating the trajectory of a moving vehicle or the drift of a cloud helps the drone make proactive rather than reactive decisions, enabling smoother and safer autonomous flight paths.

- Threat Assessment & Prioritization: Based on its comprehensive understanding, the EPH system can assess potential threats (e.g., an approaching bird, a rapidly changing wind pattern, an unexpected structural integrity issue detected by thermal imaging) and prioritize responses, ensuring the drone maintains a safe and efficient operational state. This involves distinguishing between critical immediate threats and minor environmental nuances.
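Predictive modeling and threat prioritization can be illustrated with the simplest possible motion model. The sketch below assumes each tracked object moves at constant velocity relative to the drone, computes its time and distance of closest approach, and ranks the objects whose predicted closest approach breaches a safety radius within a time horizon. The 5 m radius, 10 s horizon, and `Track` type are hypothetical; real systems would use richer motion models and explicit uncertainty bounds.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass
class Track:
    """Hypothetical tracked object, expressed relative to the drone."""
    name: str
    pos: Vec3  # metres
    vel: Vec3  # metres per second


def closest_approach(track: Track) -> tuple[float, float]:
    """Return (time_s, distance_m) of closest approach under constant velocity.

    Minimizes |p + v t| over t >= 0; the minimizer is t = -(p . v) / (v . v).
    If the object is receding or has no relative motion, t is clamped to 0.
    """
    p, v = track.pos, track.vel
    vv = sum(c * c for c in v)
    t = 0.0 if vv == 0 else max(0.0, -sum(a * b for a, b in zip(p, v)) / vv)
    closest = [a + b * t for a, b in zip(p, v)]
    return t, math.sqrt(sum(c * c for c in closest))


def prioritize(tracks: list[Track], safety_radius_m: float = 5.0,
               horizon_s: float = 10.0) -> list[tuple[float, float, str]]:
    """List objects whose predicted closest approach is nearer than safety_radius_m
    and occurs within horizon_s, soonest first."""
    threats = []
    for tr in tracks:
        t, d = closest_approach(tr)
        if d < safety_radius_m and t <= horizon_s:
            threats.append((t, d, tr.name))
    return sorted(threats)


if __name__ == "__main__":
    scene = [
        Track("vehicle", (30.0, 10.0, 0.0), (-3.0, 0.0, 0.0)),  # passes 10 m away
        Track("bird_1", (20.0, 0.0, 0.0), (-4.0, 0.0, 0.0)),    # head-on in 5 s
        Track("bird_2", (0.0, 8.0, 0.0), (0.0, -1.0, 0.0)),     # head-on in 8 s
    ]
    for t, d, name in prioritize(scene):
        print(f"{name}: closest approach {d:.1f} m in {t:.1f} s")
```

The vehicle is tracked but not flagged because it is predicted to pass well outside the safety radius, which is the "critical versus minor" distinction the text describes.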

Haptics: Bridging Human Intuition with Machine Precision

The “H” in EPH, Haptics, refers to the technology that creates an experience of touch by applying forces, vibrations, or motions to the user. In the context of drones, haptic feedback systems serve as a crucial interface, translating complex environmental data and system states into intuitive, tactile sensations for the human operator. This bridge enhances control, improves situational awareness, and fosters a more natural interaction with highly complex aerial systems.

Tactile Feedback Systems: Communicating Beyond Visuals

While visual displays provide a wealth of information, the human sense of touch offers an unparalleled channel for immediate, intuitive understanding. Haptic systems leverage this by incorporating various forms of tactile feedback into drone controllers or specialized operator interfaces.

- Vibratory Alerts: Simple yet effective, varied vibration patterns can signal different events. A gentle pulse might indicate optimal flight conditions, while an intense, rapid vibration could warn of an impending collision or a critical system error. This allows operators to receive critical information without constantly diverting their gaze to a screen.

- Force Feedback Joysticks: More advanced systems incorporate force feedback into the control sticks. If the drone detects strong headwinds, the joystick might resist movement in that direction, giving the operator a physical sensation of the environmental force. Conversely, it could gently nudge the stick to guide the operator around an obstacle or suggest an optimal flight path.

- Spatial Haptics: Future iterations might involve wearable haptic devices (e.g., vests, gloves) that provide directional cues. For instance, if an obstacle is detected to the drone’s left, the operator might feel a localized vibration on the left side of their vest, instinctively guiding their response. This creates an immersive experience that enhances spatial awareness in complex 3D environments.
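The vibratory and spatial cues described above come down to two mappings: from obstacle distance to vibration strength and pulse rate, and from obstacle bearing to a particular actuator. Below is a minimal Python sketch of both. The thresholds, the linear ramp, and the eight-actuator vest layout are hypothetical design choices, not values from any particular controller or wearable.

```python
def vibration_command(distance_m: float, warn_m: float = 10.0,
                      critical_m: float = 2.0) -> tuple[float, float]:
    """Map obstacle distance to (intensity in 0..1, pulses per second).

    No vibration at or beyond warn_m; urgency ramps linearly to 1.0 at critical_m
    and stays saturated closer than that.
    """
    if distance_m >= warn_m:
        return 0.0, 0.0
    urgency = min(1.0, (warn_m - distance_m) / (warn_m - critical_m))
    intensity = 0.2 + 0.8 * urgency  # 0.2 floor so the first warning is perceptible
    pulse_hz = 1.0 + 9.0 * urgency   # faster pulsing as the obstacle gets closer
    return intensity, pulse_hz


def vest_actuator(bearing_deg: float, n_actuators: int = 8) -> int:
    """Choose which of n evenly spaced vest actuators to fire.

    Bearing is measured clockwise from straight ahead (0 = front, 90 = right,
    270 = left); actuator 0 faces forward and indices increase clockwise.
    """
    sector = 360.0 / n_actuators
    return int(((bearing_deg % 360.0) + sector / 2) // sector) % n_actuators


if __name__ == "__main__":
    print(vibration_command(6.0))  # mid-range warning
    print(vest_actuator(270.0))    # obstacle on the left
```

Encoding urgency in both intensity and pulse rate gives the operator two redundant cues, which may help when one is masked by controller vibration or gloves.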

Intuitive Control & Emergency Intervention: Augmenting Human Skill

Haptics are not just about warnings; they are about enhancing the operator’s control and augmenting their natural piloting skills.

- Proactive Guidance: Instead of simply presenting data, haptic feedback can proactively guide the operator. When flying near a geofence boundary, the controls might subtly push back, indicating the limit. During complex maneuvers, the system could provide haptic cues to help maintain stability or achieve a precise trajectory. This creates a “feel” for the drone’s interaction with its environment, much like a pilot feels the controls of a manned aircraft.

- Enhanced Situational Awareness in Adverse Conditions: In high-stress situations or environments with low visibility (e.g., smoky areas, dense fog), visual cues can be unreliable. Haptic feedback can cut through this noise, providing immediate, unambiguous warnings about proximity to obstacles, sudden wind gusts, or changes in drone attitude. This allows operators to maintain control even when their visual sense is compromised.

- Emergency Overrides & Collaborative Control: In situations where autonomous systems detect an imminent threat that might be missed by the human operator, haptic feedback can initiate an urgent, undeniable warning, or even gently guide the controls to assist in an emergency maneuver. This creates a collaborative control paradigm where the AI and human work together, leveraging each other’s strengths to avert potential disasters.
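Geofence push-back is commonly modeled in haptics as a virtual spring: the restoring force grows with how far the drone has moved into a buffer zone near the boundary. The Python sketch below assumes a normalized stick force between 0 and 1 and uses a hypothetical 20 m buffer and stiffness. Practical force-feedback loops usually add a damping term (a virtual spring-damper) so the stick does not oscillate.

```python
def geofence_stick_force(distance_to_fence_m: float,
                         buffer_m: float = 20.0,
                         stiffness_per_m: float = 0.05,
                         max_force: float = 1.0) -> float:
    """Restoring stick force (normalized, 0..max_force) pushing away from a geofence.

    Zero while the drone is farther than buffer_m from the fence; inside the buffer
    it grows linearly with penetration depth (Hooke's law, F = k * x) and saturates
    at max_force. Negative distances mean the drone is already past the fence.
    """
    penetration_m = buffer_m - distance_to_fence_m
    if penetration_m <= 0:
        return 0.0
    return min(max_force, stiffness_per_m * penetration_m)


if __name__ == "__main__":
    for d in (30.0, 10.0, 0.0, -5.0):
        print(d, geofence_stick_force(d))
```

The controller would apply this magnitude along the stick axis that points toward the fence, so the operator feels increasing resistance rather than a hard stop.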

The Future of Human-Drone Interaction: Symbiotic Interfaces

The evolution of haptics in EPH points towards a future where human-drone interaction becomes increasingly seamless and intuitive, blurring the lines between operator and machine.

- Immersive Teleoperation: Combining advanced haptics with VR/AR interfaces could allow operators to “feel” the drone’s flight, sense environmental resistance, and even manipulate virtual objects with force feedback, creating an unparalleled sense of presence and control. This could revolutionize remote inspection, search and rescue, and hazardous environment operations.

- Multi-Modal Haptic Feedback for Swarm Control: Imagine controlling an entire swarm of drones not through individual commands, but through gestures and haptic feedback that conveys the collective state and emergent behavior of the swarm. This could enable complex coordinated missions with unprecedented ease.

- Personalized Haptic Profiles: Just as individuals have different learning styles, they may also respond differently to haptic feedback. Future EPH systems could incorporate personalized haptic profiles, adapting the intensity, frequency, and type of feedback to optimize each operator’s performance and comfort, leading to more efficient and less fatiguing operations.

Applications and Impact Across Industries

The synergistic capabilities of Environmental Perception & Haptics are not confined to theoretical discussions; they are actively revolutionizing a multitude of industries, enhancing safety, efficiency, and expanding the operational envelope of drone technology. EPH is a foundational enabler for advanced applications that were once the exclusive domain of science fiction.

Autonomous Operations & Smart Navigation: Unleashing True Independence

EPH is the linchpin for drones achieving true autonomy, allowing them to operate intelligently without constant human intervention.

- Dynamic Route Planning and Obstacle Avoidance: Unlike pre-programmed flight paths, EPH-enabled drones can dynamically adapt their routes in real-time based on perceived environmental changes. If a new obstacle appears (e.g., a crane moves into airspace, a sudden dust storm), the drone can autonomously detect it, assess the threat, and instantly recalculate a safe bypass trajectory. This capability is vital for complex urban environments, industrial sites, and disaster response.

- Precision Landing and Docking: EPH systems allow drones to identify suitable landing zones with high precision, even in unstructured environments, by analyzing terrain, identifying hazards, and accounting for wind conditions. This extends to autonomous docking with charging stations or delivery platforms, enabling near-continuous operations with minimal human intervention.

- Autonomous Inspection and Surveillance: Drones equipped with EPH can autonomously navigate complex industrial structures (bridges, wind turbines, pipelines) while maintaining a safe distance and ensuring comprehensive coverage. They can identify anomalies, track changes over time, and alert operators to critical issues, transforming maintenance and security protocols.
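The article does not prescribe a planner, but dynamic replanning can be illustrated with A* search on a 2D occupancy grid: when perception marks a new cell as blocked, the drone plans again from the updated map. The sketch below assumes a 4-connected grid with unit move costs; the grid, start, and goal are hypothetical. Real systems plan in 3D and often use incremental planners such as D* Lite to avoid recomputing the whole path from scratch.

```python
import heapq
from typing import Optional

Cell = tuple[int, int]


def astar(grid: list[list[int]], start: Cell, goal: Cell) -> Optional[list[Cell]]:
    """A* on a 4-connected occupancy grid (0 = free, 1 = blocked).

    Returns the list of cells from start to goal, or None if the goal is unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell: Cell) -> int:
        # Manhattan distance: admissible for 4-connected moves of cost 1.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries are (f = g + h, g, cell)
    came_from: dict[Cell, Optional[Cell]] = {start: None}
    g_cost = {start: 0}
    while open_heap:
        _, cost, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = []
            node: Optional[Cell] = cell
            while node is not None:  # walk parent links back to the start
                path.append(node)
                node = came_from[node]
            return path[::-1]
        if cost > g_cost[cell]:
            continue  # stale heap entry superseded by a cheaper route
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < g_cost.get(nxt, float("inf")):
                    g_cost[nxt] = new_cost
                    came_from[nxt] = cell
                    heapq.heappush(open_heap, (new_cost + h(nxt), new_cost, nxt))
    return None


if __name__ == "__main__":
    grid = [[0] * 5 for _ in range(5)]
    print(astar(grid, (0, 0), (0, 4)))  # straight line along row 0
    grid[0][2] = 1  # perception marks a new obstacle, e.g. a crane
    print(astar(grid, (0, 0), (0, 4)))  # replanned detour around (0, 2)
```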

Precision Mapping & Remote Sensing: Unveiling Detailed Insights

The advanced perception capabilities of EPH are particularly transformative for applications requiring highly accurate spatial data.

- High-Resolution 3D Modeling: By integrating data from Lidar, photogrammetry, and other sensors, EPH systems can generate incredibly detailed and accurate 3D models of landscapes, buildings, and infrastructure. This is invaluable for urban planning, construction progress monitoring, cultural heritage preservation, and virtual reality applications.

- Environmental Monitoring and Analysis: Drones with EPH can precisely map vegetation health, monitor water quality, track wildlife populations, and assess geological changes. Their ability to perceive subtle environmental shifts allows for early detection of problems like deforestation, pollution, or land degradation, providing critical data for conservation efforts and environmental management.

- Precision Agriculture and Forestry: In agriculture, EPH allows drones to map crop health with unprecedented detail, identify areas requiring specific treatment (water, fertilizer, pest control), and monitor growth patterns across vast fields. In forestry, it aids in inventory management, disease detection, and fire risk assessment, optimizing resource management and yield.
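Crop-health maps are commonly built from vegetation indices computed on multispectral imagery; the Normalized Difference Vegetation Index, NDVI = (NIR - Red) / (NIR + Red), is a widely used example. The Python sketch below computes NDVI per pixel and flags low values for follow-up. The 0.3 threshold and the function names are illustrative assumptions; suitable thresholds depend on crop, growth stage, and sensor calibration.

```python
def ndvi(nir: list[float], red: list[float]) -> list[float]:
    """Per-pixel NDVI = (NIR - Red) / (NIR + Red).

    Inputs are equal-length lists of reflectance values. For non-negative
    reflectances the result lies in [-1, 1]; dense healthy vegetation scores high
    because it reflects near-infrared strongly and absorbs red light.
    """
    if len(nir) != len(red):
        raise ValueError("band arrays must have the same length")
    out = []
    for n, r in zip(nir, red):
        total = n + r
        out.append((n - r) / total if total != 0 else 0.0)  # guard against 0/0
    return out


def flag_for_treatment(index_values: list[float], threshold: float = 0.3) -> list[int]:
    """Return pixel indices whose index falls below a hypothetical health threshold."""
    return [i for i, v in enumerate(index_values) if v < threshold]


if __name__ == "__main__":
    nir = [0.50, 0.30, 0.40]
    red = [0.08, 0.10, 0.40]
    values = ndvi(nir, red)
    print([round(v, 2) for v in values])
    print(flag_for_treatment(values))
```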

Enhancing Safety & Reliability: Mitigating Risks in the Skies

Perhaps the most significant impact of EPH is its profound contribution to operational safety and the overall reliability of drone systems.

- Reduced Accident Rates: By providing drones with superior situational awareness and predictive capabilities, EPH can substantially reduce the likelihood of collisions with terrain, structures, or other aircraft. Fewer collisions mean fewer accidents, protecting valuable assets and preventing potential harm to people.

- Operation in Challenging Environments: EPH enables drones to operate safely and effectively in conditions that would be hazardous or impossible for human-piloted or less intelligent systems. This includes flying in GPS-denied areas, navigating through dense fog or smoke, or operating in environments with significant electromagnetic interference, thereby expanding the utility of drones in critical scenarios.

- Regulatory Compliance and Public Trust: As drone operations become more widespread, robust safety systems like EPH are crucial for meeting stringent regulatory requirements and building public trust. The demonstrable ability of drones to perceive, understand, and safely interact with their environment is key to gaining acceptance for advanced urban air mobility and beyond-visual-line-of-sight (BVLOS) operations.

The Road Ahead: Challenges and Future Prospects

While Environmental Perception & Haptics represents a monumental leap forward in drone technology, its full potential is still being realized. The journey ahead involves overcoming significant technical hurdles and navigating complex regulatory landscapes, all while continuously pushing the boundaries of what is possible.

Data Processing & Miniaturization: Powering the Edge

The sophistication of EPH systems generates immense volumes of data from multiple sensors in real-time. Processing this data effectively and efficiently onboard the drone remains a primary challenge.

- Edge Computing Power: There is a continuous demand for more powerful, yet energy-efficient, onboard processors (GPUs, NPUs) capable of running complex AI algorithms for sensor fusion, object recognition, and predictive modeling at the drone’s “edge.” This minimizes latency and reduces reliance on cloud connectivity.

- Miniaturization and SWaP-C: All these advanced sensors and processing units must fit tight SWaP-C (Size, Weight, Power, and Cost) budgets: they must be compact, lightweight, power-efficient, and affordable. Reducing the footprint of EPH components while enhancing their capabilities is crucial for extending flight times, increasing payload capacity, and enabling smaller drone designs.

- Efficient Data Management: Developing intelligent algorithms to filter, compress, and prioritize sensor data effectively will be key to managing the data deluge without overwhelming onboard computational resources or communication links. This includes smart data logging and selective transmission of critical information.
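A common first step in filtering lidar data before further processing or transmission is voxel-grid downsampling: space is divided into small cubes, and all points in each cube are replaced by their centroid, trading fine detail for a large reduction in point count. The pure-Python sketch below shows the idea; the 0.5 m voxel size is an arbitrary illustrative choice, and libraries such as PCL and Open3D provide optimized implementations.

```python
from collections import defaultdict


def voxel_downsample(points, voxel_m: float = 0.5):
    """Replace all points that fall in the same cubic voxel with their centroid.

    points: iterable of (x, y, z) tuples in metres.
    Returns one (x, y, z) centroid per occupied voxel.
    """
    if voxel_m <= 0:
        raise ValueError("voxel size must be positive")
    # Running sums per voxel: [sum_x, sum_y, sum_z, count]
    sums = defaultdict(lambda: [0.0, 0.0, 0.0, 0])
    for x, y, z in points:
        # Floor division gives the integer voxel index, also for negative coordinates.
        key = (int(x // voxel_m), int(y // voxel_m), int(z // voxel_m))
        acc = sums[key]
        acc[0] += x
        acc[1] += y
        acc[2] += z
        acc[3] += 1
    return [(sx / n, sy / n, sz / n) for sx, sy, sz, n in sums.values()]


if __name__ == "__main__":
    cloud = [(0.1, 0.1, 0.1), (0.2, 0.3, 0.1), (0.4, 0.4, 0.4), (1.2, 0.1, 0.1)]
    reduced = voxel_downsample(cloud)
    print(len(cloud), "->", len(reduced))
```

Choosing the voxel size is the key trade-off: larger voxels save more bandwidth and compute but can erase thin obstacles such as wires.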

Standardization & Regulatory Frameworks: Paving the Way for Widespread Adoption

For EPH technology to achieve widespread adoption and seamless integration into airspace, robust standardization and clear regulatory guidelines are imperative.

- Common Protocols and Interfaces: Establishing industry-wide standards for sensor data formats, communication protocols between EPH modules, and performance metrics will foster interoperability and accelerate innovation. This ensures that EPH systems from different manufacturers can integrate seamlessly.

- Performance Benchmarking: Developing standardized testing methodologies and benchmarks for EPH system performance (e.g., accuracy of object detection, reliability of obstacle avoidance, responsiveness of haptic feedback) will be crucial for certification and regulatory approval. This allows regulators to objectively assess the safety and efficacy of new technologies.

- Airspace Integration Regulations: As EPH enables increasingly autonomous drone operations, regulatory bodies worldwide must develop clear frameworks for integrating these intelligent aircraft into existing airspace, particularly for BVLOS operations, urban air mobility, and package delivery. This includes defining responsibilities, failure modes, and secure communication channels.
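Benchmarks like those described above ultimately reduce to agreed-upon metrics. As an illustration, the Python sketch below computes the standard detection metrics (precision, recall, and F1) from true-positive, false-positive, and false-negative counts, plus a nearest-rank 95th-percentile latency for haptic feedback. The choice of these particular metrics and the example numbers are assumptions for illustration, not part of any existing EPH standard.

```python
import math


def detection_scores(tp: int, fp: int, fn: int) -> dict[str, float]:
    """Precision = TP / (TP + FP), recall = TP / (TP + FN), F1 = their harmonic mean."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"precision": precision, "recall": recall, "f1": f1}


def percentile_nearest_rank(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples <= it."""
    if not samples or not 0 < pct <= 100:
        raise ValueError("need samples and 0 < pct <= 100")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


if __name__ == "__main__":
    print(detection_scores(tp=90, fp=10, fn=30))
    latencies_ms = [12.0, 15.0, 11.0, 40.0, 14.0, 13.0, 12.5, 16.0, 13.5, 18.0]
    print(percentile_nearest_rank(latencies_ms, 95))
```

Reporting a high percentile rather than the mean matters for haptic responsiveness, since a single slow warning can be the one that counts.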

Beyond Current Capabilities: The Horizon of EPH

The trajectory of EPH development points towards exciting, even revolutionary, future capabilities.

- Predictive Analytics and Intent Recognition: Future EPH systems will move beyond simply reacting to the environment to proactively anticipating events. This includes predicting human intent (e.g., a person walking towards the drone, a car about to turn) and environmental changes (e.g., micro-weather phenomena), allowing for truly intelligent and adaptable decision-making.

- Multi-Modal Interaction and Bio-Integration: Human-drone interfaces will become even more intuitive, potentially incorporating neuro-interfaces, eye-tracking, and advanced haptic feedback that adapts to the operator’s physiological state. This would create a truly symbiotic relationship, where the drone understands and responds to the nuances of human thought and emotion.

- Symbiotic AI and Self-Healing Systems: The ultimate evolution of EPH might involve drones that are not just intelligent but possess a form of self-awareness regarding their operational health and environmental context. This could lead to self-diagnosing, self-repairing, and self-optimizing drone systems that can learn and evolve their perception and haptic capabilities over time, operating with unprecedented levels of resilience and autonomy in dynamic, unpredictable environments.

In conclusion, EPH, or Environmental Perception & Haptics, is more than just a technological enhancement; it is a foundational pillar that is redefining the capabilities and interaction models of modern drone systems. By enabling drones to understand their environment in depth and providing intuitive, tactile communication with human operators, EPH is driving the industry towards a future of safer, more autonomous, and far more intelligent aerial operations across a broad range of applications. The ongoing advancements in this field promise to unlock entirely new paradigms for aerial innovation, pushing the boundaries of what drones can achieve.